Audio inpainting of music by means of neural networks

We studied the ability of deep neural networks (DNNs) to restore missing audio content based on its context, a process usually referred to as audio inpainting. We focused on gaps in the range of tens of milliseconds. The proposed DNN structure was tr…

Authors: Andrés Marafioti, Nicki Holighaus, Piotr Majdak, Nathanaël Perraudin

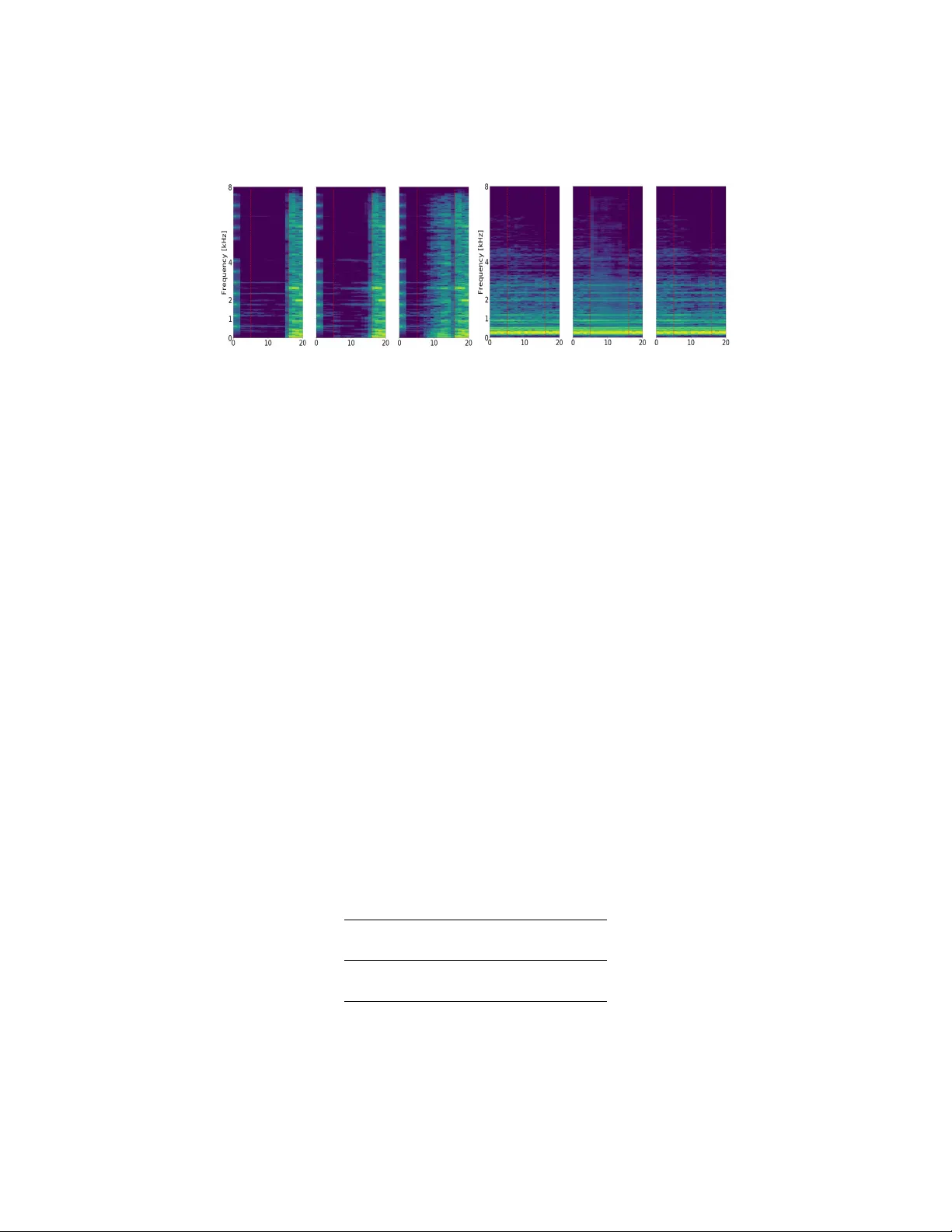

Andrés Marafioti†‡, Nicki Holighaus†, Piotr Majdak†, and Nathanaël Perraudin∗

Abstract. We studied the ability of deep neural networks (DNNs) to restore missing audio content based on its context, a process usually referred to as audio inpainting. We focused on gaps in the range of tens of milliseconds. The proposed DNN structure was trained on audio signals containing music and musical instruments, separately, with 64-ms long gaps. The input to the DNN was the context, i.e., the signal surrounding the gap, transformed into time-frequency (TF) coefficients. Our results were compared to those obtained from a reference method based on linear predictive coding (LPC). For music, our DNN significantly outperformed the reference method, demonstrating a generally good usability of the proposed DNN structure for inpainting complex audio signals like music.

Key words and phrases: audio inpainting, context encoder, deep neural networks, short-time Fourier transform.

This work has been supported by Austrian Science Fund (FWF) project MERLIN (Modern methods for the restoration of lost information in digital signals; I 3067-N30). We gratefully acknowledge the support of NVIDIA Corporation with the donation of the Titan X Pascal GPU used for this research.

This is the Author's Accepted Manuscript version of work presented at the 146th Convention of the Audio Engineering Society. It is licensed under the terms of the Creative Commons Attribution 4.0 International License, which permits unrestricted use, distribution, and reproduction in any medium, provided the original author and source are credited. The published version is available at: https://www.aes.org/e-lib/browse.cfm?elib=20303.

‡ Corresponding author

1. Introduction

Audio processing tasks often encounter locally degraded or even lost information. Some common examples are corrupted audio files, lost information in audio transmission, and audio signals locally contaminated by noise. Audio inpainting deals with the reconstruction of lost information in audio. Reconstruction is usually aimed at providing coherent and meaningful information while preventing audible artifacts, so that the listener remains unaware of any problem that occurred. Successful algorithms are typically limited to a specific class of audio signals, focus on a specific duration of the problematic signal parts, and exploit a priori information about the problem.

In this work, we explore a new machine-learning algorithm with respect to the reconstruction of audio signals. From all possible classes of audio signals, we limit the reconstruction to either music or individual musical instruments. We focus on durations of the problematic signal parts, i.e., gaps, in the range of tens of milliseconds. Further, we exploit the available audio information surrounding the gap, i.e., the context.

The proposed algorithm is based on an unsupervised feature-learning algorithm driven by context-based sample prediction. It relies on a neural network with convolutional and fully connected layers trained to generate sounds conditioned on their context. Such an approach was first introduced for images [1], where the term context encoder (CE) was coined as an analogy to autoencoders [2]. Hence, we treat our algorithm as an audio-inpainting context encoder. Applying a convolutional network directly to time-domain audio signals requires extremely large training datasets for good results [3]. In order to reduce the size of the required datasets, our CE works on chunks of time-frequency (TF) coefficients calculated from the time-domain audio signal.
Trained with the TF coefficients, our CE aims to recover the lost TF coefficients within the gap based on the provided TF coefficients of the gap's surroundings. The TF coefficients were obtained from an invertible representation, namely, a redundant short-time Fourier transform (STFT) [4, 5], in order to allow a robust synthesis of the reconstructed time-domain signal based on the network output. Our CE reconstructs the magnitude coefficients only, which are then passed to a phase-reconstruction algorithm in order to obtain the complex-valued TF coefficients required for synthesizing the time-domain signal. From accurate TF magnitude information, phaseless reconstruction methods such as [6, 7, 8] are known to provide perceptually close, often indiscernible, reconstructions despite the resulting time-domain waveforms usually being rather different.

1.1. Related deep-learning techniques.

Deep learning excels in classification, regression, and anomaly detection tasks [2] and has recently been successfully applied to audio [9]. Deep learning has also shown good results in generative modeling with techniques such as variational auto-encoders [10] and generative adversarial networks [11]. Unfortunately, for audio synthesis only the latter has been studied, and with limited success [12]. State-of-the-art audio signal synthesis requires sophisticated networks [13, 14] in order to obtain meaningful results.
While these approaches directly predict audio samples based on the preceding samples, in the field of text-to-speech, synthesizing audio in domains other than time, such as spectrograms [15] and mel-spectrograms [16], has been proposed. In the field of speech transmission, DNNs have been used to achieve packet loss concealment [17].

The synthesis of musical audio signals using deep learning, however, is even more challenging [18]. A music signal is comprised of complex sequences ranging from short-term structures (any periodicity in the waveform) to long-term structures (like figures, motifs, or sections). In order to simplify the problem brought by long-range dependencies, music synthesis in multiple steps has been proposed, including an intermediate symbolic representation like MIDI sequences [19] and features of a parametric vocoder [20].

While all of these contributions can provide insights on the design of a neural network for audio synthesis, none of them addresses the specific setting of audio inpainting, where some audio information has been lost but some of its context is known. In particular, in this contribution we explored the setting in which the audio information surrounding a missing gap is available.

1.2. Related audio-inpainting algorithms.

Audio inpainting techniques for time-domain data loss compensation can be roughly divided into two categories:

(a) Methods that attempt to recover precisely the lost data relying only on very local information in the direct vicinity of the corruptions. They are usually designed for reconstructions of gaps with a duration of less than 10 ms and also work well in the presence of randomly lost audio samples.

(b) Methods that aim at providing a perceptually pleasing occlusion of the corruption, i.e., the corruption should not be annoying, or in the best case should be undetectable, for a human listener. The proposed restorations may still differ from the lost content.
Such approaches are often based on self-similarity, require a more global analysis of the degraded audio signal, and rely heavily on repetitive structures in audio data. They often cope with data loss beyond hundreds or even thousands of milliseconds.

In either of these categories, successful methods often depend on analyzing TF features of the audio signal instead of the time-domain signal itself. In the first category, we highlight two approaches based on the assumption that audio data is expected to possess approximately sparse time-frequency representations: variations of orthogonal matching pursuit (OMP) with time-frequency dictionaries [21, 22, 23], as well as (structured) $\ell_1$-regularization [24, 25]. They have been successfully applied to reconstruct gaps of up to 10-ms duration; however, it is evident that neither of these methods is competitive when treating longer gaps. In the second category, a method for packet loss concealment based on MFCC feature similarity and explicitly targeting a perceptually plausible restoration was proposed [26]. Similarly, exemplar-based inpainting was performed on a scale of seconds based on a graph encoding spectro-temporal similarities within an audio signal [27]. In both studies, gap durations were beyond several hundreds of milliseconds and their reconstruction needed to be evaluated in psychoacoustic experiments.

In our contribution, we target gap durations of tens of milliseconds, a scale where the non-stationary characteristic of audio already becomes important, but a sample-by-sample extrapolation of the missing information from the context data still seems to be realistic. The methods from category (a) cannot be expected to provide good results for such long gaps, and the methods from category (b) do not aim at reconstructions on the sample-by-sample level.
Interestingly, the combination of these gap durations and this level of signal reconstruction does not seem to have received much attention yet.

For simple sounds like those of musical instruments, linear predictive coding (LPC) can be applied. Assuming a modeling of the sound as an acoustic source filtered by a pole filter, extrapolation based on linear prediction has been shown to work well for gaps in the range of 5 to 100 milliseconds, e.g., [28, 29]. Although the performance of linear prediction relies heavily on the underlying stationarity assumption being fulfilled, it seems to be the only competitive, established method in the considered scenario. Deep-learning techniques, on the other hand, some of which we study here, promise a more generalized signal representation and therefore better results whenever the lost data cannot be predicted by linear filtering. However, a link between deep-learning techniques and audio inpainting seems to be missing until now.

2. Context Encoder

We consider the audio signal $s \in \mathbb{R}^L$, containing $L$ samples of audio. The central $L_g$ samples of $s$ represent the gap $s_g$, while the remaining $L_c$ samples on each side of the gap form the context. We distinguish between $s_b$ and $s_a$, which are the context signals before and after the gap, respectively.

The architecture of our network is an encoder-decoder pipeline fed with the context information. Instead of passing the time-domain signals $s_b$ and $s_a$ directly to the network, the audio signal is processed to obtain TF coefficients, $S_b$ and $S_a$, which are the input to the encoder. The TF representation is propagated through the encoder and decoder, both trained to predict TF coefficients representing the gap, $S'_g$. The output of the decoder is then post-processed in order to synthesize a reconstruction in the time domain, $s'$.
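As a minimal sketch of the notation above, the following Python snippet splits a segment $s$ into $(s_b, s_g, s_a)$ around a centered gap. The function name `split_segment` is hypothetical and not taken from the authors' software; the example values $L = 5120$ and $L_g = 1024$ are the ones reported in Section 3.1.

```python
import numpy as np

def split_segment(s, gap_len):
    """Split a 1-D segment into (s_b, s_g, s_a) with a centered gap.

    Hypothetical helper for illustration; assumes equal context on both sides.
    """
    s = np.asarray(s)
    total = s.shape[0]
    if gap_len <= 0 or gap_len >= total or (total - gap_len) % 2 != 0:
        raise ValueError("gap must be centered with equal context on both sides")
    ctx_len = (total - gap_len) // 2        # L_c samples on each side
    s_b = s[:ctx_len]                       # context before the gap
    s_g = s[ctx_len:ctx_len + gap_len]      # the gap itself
    s_a = s[ctx_len + gap_len:]             # context after the gap
    return s_b, s_g, s_a

# Values from Section 3.1: L = 5120 samples, L_g = 1024 samples (64 ms at 16 kHz).
segment = np.arange(5120, dtype=np.float64)
s_b, s_g, s_a = split_segment(segment, gap_len=1024)
```

With these values, each context holds $L_c = 2048$ samples, i.e., 128 ms at 16 kHz.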
The network structure is comprised of standard, widely used building blocks, i.e., convolutional and fully connected layers, and rectified linear units (ReLUs). It is inspired by the context encoder for image restoration [1].¹ For the training, we do not use an adversarial discriminator, but optimize an adapted $\ell_2$-based loss.

¹ Before fixing the network structure described in the remainder of this section, we experimented with different architectures, depths, and kernel shapes, out of which the current structure showed the most promise. Additionally, we also considered dropout [30] and skip connections, discarding them after not achieving any notable improvements.

The network was implemented in TensorFlow [31]. For the training, we applied the stochastic gradient descent solver ADAM [32]. Our software, along with instructive examples, is available to the public.²

2.1. Pre-processing stage.

In the pre-processing stage, STFTs are applied to the context signals $s_b$ and $s_a$, yielding $S_b$ and $S_a$, respectively. They are then split into real and imaginary parts, resulting in four channels $S_b^{Re}$, $S_b^{Im}$, $S_a^{Re}$, $S_a^{Im}$. The STFT is determined by the window $g$, the hop size $a$, and the number of frequency channels $M$. In our study, $g$ was an appropriately normalized Hann window of length $M$ and $a$ was $M/4$, enabling perfect reconstruction by an inverse STFT with the same parameters and window. In order to obtain coefficients without artifacts even at the context borders, $s_b$ and $s_a$ were extended with zeros to the length of $L_c + 3a$ towards the gap.

2.2. Encoder.

The encoder is a convolutional neural network. The inputs $S_b^{Re}$, $S_b^{Im}$, $S_a^{Re}$, $S_a^{Im}$ of the context information are treated as separate channels; thus, the network is required to learn how the channels interact and how to mix them.
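The pre-processing stage of Section 2.1 can be sketched in NumPy as follows. This is an illustration under stated assumptions, not the authors' implementation: the functions `stft` and `encoder_input` are hypothetical, the STFT uses only frames that fit entirely inside the (zero-extended) signal, and the window normalization mentioned in the text is omitted. Under this framing convention, a context of $L_c = 2048$ samples extended by $3a = 384$ zeros gives $(2432 - 512)/128 + 1 = 16$ frames, which matches the $257 \times 16$ input size reported in Section 3.1.

```python
import numpy as np

def stft(x, win_len=512, hop=128):
    """Plain STFT without edge padding (assumed framing convention).

    Returns an array of shape (win_len // 2 + 1, n_frames).
    """
    window = np.hanning(win_len + 1)[:-1]          # periodic Hann, unnormalized
    n_frames = (len(x) - win_len) // hop + 1
    frames = np.stack(
        [x[i * hop:i * hop + win_len] * window for i in range(n_frames)],
        axis=1,
    )
    return np.fft.rfft(frames, axis=0)

def encoder_input(s_b, s_a, win_len=512, hop=128):
    """Build the four real-valued channels (Re/Im of S_b and S_a)."""
    pad = np.zeros(3 * hop)                        # 3a zeros towards the gap
    S_b = stft(np.concatenate([s_b, pad]), win_len, hop)
    S_a = stft(np.concatenate([pad, s_a]), win_len, hop)
    return np.stack([S_b.real, S_b.imag, S_a.real, S_a.imag], axis=-1)

rng = np.random.default_rng(0)
x = encoder_input(rng.standard_normal(2048), rng.standard_normal(2048))
```

Here `x` has shape `(257, 16, 4)`: 257 frequency bins, 16 frames, and the four channels that the encoder must learn to mix.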
Similar to [1], all layers are convolutional and sequentially connected via ReLUs [33], after which batch normalization [34] is applied. The resulting encoder architecture is shown in Figure 1, for $M = 512$. Note that because the encoder is comprised of only convolutional layers, the information cannot reliably propagate from one end of the feature map to another. This is a consequence of convolutional layers connecting all the feature maps together, but never directly connecting all locations within a specific feature map [1].

Figure 1. The encoder is a convolutional network with six layers followed by reshaping. The four-channel time-frequency input is transformed into an encoding of size 2048. Gray rectangles represent the convolution filters with size expressed as (height, width). White cubes represent the signal.

² www.github.com/andimarafioti/audioContextEncoder

2.3. Decoder.

The decoder begins with a fully connected layer (FCL) with a ReLU nonlinearity in order to spread the encoder information among the channels.³ Similar to [1], all the subsequent layers are (de-)convolutional and, as for the encoder, connected by ReLUs with batch normalization. The network architecture is shown in Figure 2, for $M = 512$ and a gap size of 1024 samples, i.e., every output channel is of size $257 \times 11$. The final layer depends on the network. For the complex network, the final layer has two outputs, corresponding to the real and imaginary parts of the complex-valued TF coefficients. For the magnitude network, the final layer has a single output for the magnitude TF coefficients. We denote the output TF coefficients as $S'_g$.

Figure 2. The decoder generates magnitude time-frequency coefficients from the encoding produced by the encoder, Fig. 1.
It consists of a fully connected layer (FCL) and five deconvolution layers, with reshaping after the fully connected layer, as well as after the third and fourth deconvolution layers. All other conventions as in Figure 1.

2.4. Post-processing stage.

The aim of the post-processing stage is to synthesize the reconstructed audio signal in the time domain. To this end, the reconstructed gap TF coefficients from the decoder, $S'_g$, are inserted between the TF coefficients of the context, $S_b$ and $S_a$. However, the gap between $S_b$ and $S_a$ is smaller than the size of $S'_g$ because the context coefficients were calculated from zero-padded time-domain signals. The coefficients at the context border represent the zero-padded information and are discarded for synthesis, forming $S'_b$ and $S'_a$. The sequence $S' = (S'_b, S'_g, S'_a)$ then has the same size as $S$. By performing the insertion directly in the time-frequency domain, we prevent transitional artifacts between the context and the gap, since synthesis by the inverse STFT introduces an inherent cross-fading.

³ Fully connected layers are computationally very expensive; in our case, the FCL contains 38% of all the parameters of the network. In [1], this issue was addressed by using a 'channel-wise fully connected layer'. We tested that approach but obtained consistently worse results.

The decoder output represents the magnitudes of the TF coefficients, and the missing phase information needs to be estimated separately. First, the phase gradient heap integration algorithm proposed in [35] was applied to the magnitude coefficients produced by the decoder in order to obtain an initial estimation of the TF phase. Then, this estimation was refined by applying 100 iterations of the fast Griffin-Lim algorithm [6, 7] implemented in the Phase Retrieval Toolbox Library [36].
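The insertion step at the start of Section 2.4 can be illustrated with a short sketch of the frame bookkeeping. The function `assemble` is hypothetical, and discarding exactly three frames per context is an assumption: it follows from the $3a$ zero extension if the STFT keeps only frames that fit entirely inside the signal, a convention the text does not state explicitly. Under that assumption, $13 + 11 + 13 = 37$ frames, which equals the frame count $(5120 - 512)/128 + 1$ of a full 320-ms segment.

```python
import numpy as np

def assemble(S_b, S_g_pred, S_a, n_pad_frames=3):
    """Form S' = (S'_b, S'_g, S'_a) along the time (frame) axis.

    n_pad_frames is the assumed number of context frames that overlap
    the zero padding and are therefore discarded.
    """
    S_b_kept = S_b[:, :-n_pad_frames]      # drop last frames of the left context
    S_a_kept = S_a[:, n_pad_frames:]       # drop first frames of the right context
    return np.concatenate([S_b_kept, S_g_pred, S_a_kept], axis=1)

# Placeholder arrays with the sizes reported in the paper (257 x 16 and 257 x 11).
S_b = np.ones((257, 16))
S_g_pred = np.zeros((257, 11))
S_a = np.ones((257, 16))
S_full = assemble(S_b, S_g_pred, S_a)

# Frame count of an unpadded 5120-sample segment with M = 512 and a = 128.
n_frames_segment = (5120 - 512) // 128 + 1
```

The sketch only covers the concatenation; the phase estimation described above would still be needed before an inverse STFT.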
The resulting complex-valued TF coefficients $S'_g$ were then transformed into a time-domain signal $s'$ by inverse STFT.⁴

⁴ The combination of the two phase-estimation algorithms (phase gradient heap integration followed by fast Griffin-Lim) provided consistently better results than separate application of either.

2.5. Loss Function.

The network training is based on the minimization of the total loss of the reconstruction. Generally, we computed the reconstruction loss by comparing the original gap TF coefficients $S_g$ with the reconstructed gap TF coefficients $S'_g$. The comparison is done on the basis of the squared $\ell_2$-norm of the difference between $S_g$ and $S'_g$, as is customary for this type of network [37], commonly known as mean squared error (MSE). The absolute MSE depends, however, on the total energy of $S_g$, clearly putting more weight on signals containing more energy. In contrast, the comparison can be done by using the normalized mean squared error (NMSE),

$$\mathrm{NMSE}(S_g, S'_g) = \frac{\|S_g - S'_g\|^2}{\|S_g\|^2}. \tag{1}$$

While theoretically invariant to amplitude changes, this measure strongly amplifies small errors when the energy of $S_g$ is small. In practice, however, very minor deviations from $S_g$ are insignificant regardless of the content of $S_g$, which is not reflected in NMSE. Therefore, we propose to use a weighted mix between MSE and NMSE for the calculation of the loss function. This leads to

$$F(S_g, S'_g) = \frac{\|S_g - S'_g\|^2}{c^{-1} + \|S_g\|^2}, \tag{2}$$

where the constant $c > 0$ controls the incorporated compensation for small amplitudes. In our experiments, $c = 5$ yielded good results. Finally, as proposed in [38], the total loss is the sum of the loss function and a regularization term controlling the trainable weights in terms of their $\ell_2$-norm:

$$T = F(S_g, S'_g) + \frac{\lambda}{2} \sum_i w_i^2, \tag{3}$$

with $w_i$ being the weights of the network and $\lambda$ being the regularization parameter, here set to 0.01.

3. Evaluation

The main objectives of the evaluation were to investigate the general ability of the networks to adapt to the considered class of audio signals, as well as how they compare to the reference method, i.e., LPC-based extrapolation as proposed in [29]. Additionally, we considered the effects of changing the gap duration.

To this end, we considered two classes of audio signals: instrument sounds and music. For instruments and music, the network was trained on the targeted signal class, with an assumed gap size of 64 ms. Reconstruction was evaluated on the trained signal class and pure tones for 64 ms gaps. Additionally, the network was evaluated for 48 ms gaps. Reconstruction quality was evaluated by means of signal-to-noise ratios (SNR) applied to the magnitude spectrograms, to accommodate perceptually irrelevant phase changes. Further, all results were compared to the reconstruction based on the reference method.

3.1. Parameters.

The sampling rate was 16 kHz. We considered audio segments with a duration of 320 ms, which corresponds to $L = 5120$ samples. Each segment was separated into a gap of 64 ms (or 48 ms, corresponding to $L_g = 1024$ or $L_g = 768$ samples) in the central part of the segment and the context of twice 128 ms (or 136 ms), corresponding to $L_c = 2048$ (or $L_c = 2176$) samples. Both the size of the window $g$ and the number of frequency channels $M$ were fixed to 512 samples. Consequently, $a$ was 128 samples, and the input to the encoder was $S_b, S_a \in \mathbb{C}^{257 \times 16}$.

3.2. Datasets.

The dataset representing musical instruments was derived from the NSynth dataset [39]. NSynth is an audio dataset containing 305,979 musical notes from 1,006 instruments, each with a unique pitch, timbre, and envelope. Each example is four seconds long, monophonic, and sampled at 16 kHz. The dataset representing music was derived from the free music archive (FMA) [40].
The FMA is an open and easily accessible dataset, usually used for evaluating tasks in musical information retrieval (MIR). We used the small version of the FMA, comprised of 8,000 30-s segments of songs with eight balanced genres sampled at 44.1 kHz. We resampled each segment to the sampling rate of 16 kHz.

The original segments in the two datasets were processed to fit the evaluation parameters. First, for each example the silence was removed. Second, from each example, pieces with a duration of 320 ms were copied, starting with the first segment at the beginning of the example and continuing with further segments with a shift of 32 ms. Thus, each example yielded multiple overlapping segments $s$. Note that for a gap of 64 ms, the segment can be considered as a 3-tuple by labeling the first 128 ms as the context before the gap $s_b$, the subsequent 64 ms as the gap $s_g$, and the last 128 ms as the context after the gap $s_a$. Finally, all segments with an RMS smaller than $10^{-4}$ in $s_g$ were discarded.

The datasets were split into training, validation, and testing sets. For the instruments, we used the splitting proposed by [39]. The music dataset was split into approximately 70%, 20%, and 10%, respectively. The statistics of the resulting sets are presented in Table 1.

| Dataset | Count | Percentage (%) |
|---|---|---|
| Instruments training | 19.4M | 94.1 |
| Instruments validation | 0.9M | 4.4 |
| Instruments testing | 0.3M | 1.5 |
| Music training | 5.2M | 70.0 |
| Music validation | 1.5M | 20.0 |
| Music testing | 0.7M | 10.0 |

Table 1. Datasets used in the evaluation.

3.3. Training.

The network was trained separately for the instrument and music dataset. This resulted in two trained networks. Each training started with the learning rate of $10^{-3}$. The reconstructed phase was not considered in the training. Every 2000 steps, the training progress was monitored.
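The objective minimized during training, i.e., the loss of Section 2.5, can be sketched in NumPy as follows. This is a minimal illustration, not the authors' TensorFlow code: the function names are hypothetical, the norms are taken over all TF coefficients of the gap, and the defaults $c = 5$ and $\lambda = 0.01$ are the values reported in the text.

```python
import numpy as np

def nmse(S_g, S_g_pred):
    """Normalized mean squared error, Eq. (1)."""
    return np.sum(np.abs(S_g - S_g_pred) ** 2) / np.sum(np.abs(S_g) ** 2)

def weighted_loss(S_g, S_g_pred, c=5.0):
    """Mix between MSE and NMSE, Eq. (2)."""
    err = np.sum(np.abs(S_g - S_g_pred) ** 2)
    return err / (1.0 / c + np.sum(np.abs(S_g) ** 2))

def total_loss(S_g, S_g_pred, weights, c=5.0, lam=0.01):
    """Loss plus l2 weight regularization, Eq. (3)."""
    reg = 0.5 * lam * sum(np.sum(w ** 2) for w in weights)
    return weighted_loss(S_g, S_g_pred, c) + reg

# Toy example: an all-zero prediction for a loud and for a very quiet target.
loud = np.ones((2, 2))
quiet = 1e-3 * np.ones((2, 2))
pred = np.zeros((2, 2))

f_loud = weighted_loss(loud, pred)      # 4 / (0.2 + 4)
f_quiet = weighted_loss(quiet, pred)    # tiny, although NMSE is still 1
t_loud = total_loss(loud, pred, weights=[np.array([1.0, 2.0])])
```

The toy example shows the design intent of Eq. (2): for the quiet target, NMSE still reports an error of 1, whereas the term $c^{-1}$ in the denominator keeps the loss close to zero.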
To monitor progress, the network's output was calculated for the music validation dataset and the NMSE was calculated between the predicted and the actual TF coefficients of the gap. When converging, which usually happened after approximately 600k steps, the learning rate was reduced to $10^{-4}$ and the training was continued for an additional 200k steps.⁵

⁵ We also considered training on the instrument training dataset (800k steps) followed by a refinement with the music training dataset (300k steps). While it did not show substantial differences to the training performed on music only, a pre-trained network on instruments with a subsequent refinement to music may be an option in applications addressing a specific music genre.

3.4. Evaluation metrics.

For the evaluation of our results, in general, we calculated the SNR (in dB)

$$\mathrm{SNR}(x, x') = 10 \log_{10} \frac{\|x\|^2}{\|x - x'\|^2} \tag{4}$$

for each segment of a testing dataset separately, and then averaged these SNRs across all segments of that testing dataset. For the evaluation in the TF domain, we calculated $\mathrm{SNR}(|S_g|, |S_{rg}|)$, where $S_{rg}$ represents the central 5 frames of the STFT computed from the restored signal $s'$ and thus represents the restoration of the gap. In other words, we compute the SNR between the spectrograms of the original signal and the restored signal, but only in the region of the gap. We refer to the average of this metric (across all segments of a testing dataset) as $\mathrm{SNR}_{MS}$, where MS refers to magnitude spectrogram. Note that $\mathrm{SNR}_{MS}$ is directly related to the logarithmic inverse of the spectral convergence proposed in [41].

3.5. Reference method.

We compared our results to those obtained with a reference method based on LPC [42]. LPC is particularly widely used for the processing of speech [43], but also frequently for extrapolation of audio signals [29, 44].
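The metric of Section 3.4 can be sketched as follows. The functions `snr_db` and `snr_ms` are hypothetical, and the default `first_gap_frame=16` is an assumption: it corresponds to the frames lying entirely inside a 64-ms gap when the STFT of the 5120-sample segment uses unpadded frames with $M = 512$ and $a = 128$ (frame starts at samples 2048 to 2560, i.e., the central 5 of 37 frames).

```python
import numpy as np

def snr_db(x, x_rec):
    """SNR in dB between a reference x and its reconstruction, Eq. (4)."""
    x = np.asarray(x, dtype=float)
    x_rec = np.asarray(x_rec, dtype=float)
    return 10.0 * np.log10(np.sum(x ** 2) / np.sum((x - x_rec) ** 2))

def snr_ms(S_orig, S_rest, first_gap_frame=16, n_gap_frames=5):
    """SNR on magnitude spectrograms, restricted to the gap frames."""
    frames = slice(first_gap_frame, first_gap_frame + n_gap_frames)
    return snr_db(np.abs(S_orig[:, frames]), np.abs(S_rest[:, frames]))

# A 10 % amplitude error gives 20 dB.
value_td = snr_db([1.0, 1.0, 1.0, 1.0], [0.9, 0.9, 0.9, 0.9])

# Halved magnitude with an arbitrary phase shift: only the magnitude counts.
S_orig = np.ones((257, 37), dtype=complex)
S_rest = 0.5 * np.exp(0.7j) * S_orig
value_ms = snr_ms(S_orig, S_rest)
```

Because only magnitudes enter `snr_ms`, the phase shift in the second example does not affect the result, which is the reason given in Section 3 for evaluating on magnitude spectrograms.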
For the implementation, we followed [29], especially [29, Section 5.3]. In detail, the context signals $s_b$ and $s_a$ were extrapolated onto the gap $s_g$ by computing their impulse responses and using them as prediction filters for a classical linear predictor. The impulse responses were obtained using Burg's method [45] and were fixed to have 1000 coefficients according to the suggestions from [44] and [46]. Their duration was the same as that for our context encoder in order to provide the same amount of context information. The two extrapolations were mixed with the squared-cosine weighting function. Our implementation of the LPC extrapolation is available online.⁶ Finally, we evaluated the results produced by the reference method in the same way as the results produced by the networks: predictions were calculated for each segment from both testing datasets, and the $\mathrm{SNR}_{TD}$ as well as the $\mathrm{SNR}_{MS}$ were calculated on the predictions.

⁶ www.github.com/andimarafioti/audioContextEncoder

4. Results and discussion

4.1. Comparison to the reference method.

Table 2 provides the $\mathrm{SNR}_{MS}$ for the magnitude network and the LPC-based reference reconstruction method. When tested on music, on average, the magnitude network outperformed the LPC-based method by 1.4 dB. When tested on instruments, the magnitude network underperformed by 8.6 dB.

| | Music, Mag | Music, LPC | Instruments, Mag | Instruments, LPC |
|---|---|---|---|---|
| Mean | 7.7 | 6.3 | 22.0 | 30.6 |
| Std | 4.3 | 5.1 | 10.4 | 18.9 |

Table 2. $\mathrm{SNR}_{MS}$ (in dB) of reconstructions of 64 ms gaps for the magnitude networks and the LPC-based method.

Looking at the reconstructions in more detail, the two methods showed different characteristics. In Figure 3 we show spectrograms of an instrument signal with frequency-modulated components. The LPC-based reconstruction shows a discontinuity in the middle of the gap instead of a steady transition. This is the consequence of the two extrapolations (forward and backward) being mixed in the middle of the gap. The magnitude network trained on the music learned how to represent frequency modulations and produces fewer artifacts in the reconstruction, which yielded a 5 dB larger $\mathrm{SNR}_{MS}$.

Other interesting examples are shown in Figure 4. The top row shows an example in which the magnitude network outperformed the LPC-based method. In this case, the signal is comprised of steady harmonic tones in the left-side context and a broadband sound in the right-side context. While the LPC-based method extrapolated the broadband noise into the gap, the magnitude network was able to foresee the transition from the steady sounds to the broadband burst, yielding a prediction much closer to the original gap, with a 13 dB larger $\mathrm{SNR}_{MS}$ than that from the LPC-based method. On the other hand, the magnitude network did not always outperform the LPC-based method. The bottom row of Figure 4 shows spectrograms of such an example. This signal had stable sounds in the gap, which were well suited for an extrapolation but too complex to be perfectly reconstructed by the magnitude network. Thus, the LPC-based method outperformed the magnitude network, yielding a 9 dB larger $\mathrm{SNR}_{MS}$.

Figure 3. Sections of magnitude spectrograms (in dB) of an exemplary signal reconstruction. Left: Original signal. Center: Reconstruction by the magnitude network. Right: Reconstruction by the LPC-based method. The magnitude network provided a 5 dB larger $\mathrm{SNR}_{MS}$.

The excellent performance of the LPC-based method in reconstructing instruments can be explained by the assumptions behind LPC fitting the single-note instrument sounds well. These sounds usually consist of harmonics that are stable on a short time scale. LPC extrapolates these harmonics, preserving the spectral envelope of the signal.
Nevertheless, the magnitude network yielded an $\mathrm{SNR}_{MS}$ of 22.0 dB, on average, demonstrating a good ability to reconstruct instrument sounds.

When applied to music, the performance of both methods was much poorer, with our network performing slightly but statistically significantly better than the LPC-based method. The better performance of our network can be explained by its ability to adapt to transient sounds and modulations in frequency, sound properties that the LPC-based method is not suited to handle.

Figure 4. Magnitude spectrograms (in dB) of exemplary signal reconstructions. Left: Original signal. Center: Reconstruction by the magnitude network. Right: Reconstruction by the LPC-based method. Top: Example with the magnitude network outperforming the reference by an $\mathrm{SNR}_{MS}$ of 13 dB. Bottom: Example with the magnitude network underperforming the reference by an $\mathrm{SNR}_{MS}$ of 9 dB.

4.2. Effect of the gap duration.

The proposed network structure can be trained with different contexts and gap durations. For problems of varying gap duration, a network trained for the particular gap duration might appear optimal. However, training takes time, and it might be simpler to train a network for a single gap duration and use it to reconstruct any shorter gap as well. In order to test this idea, we introduced gaps of 48 ms in our testing datasets. These gaps were then reconstructed by the network trained for 64 ms gaps. As this network outputs, at reconstruction time, a solution for a gap of 64 ms, the 48-ms gaps need to be extended. We tested three types of extension: 16 ms forwards, 16 ms backwards, and centered (8 ms forwards and 8 ms backwards). Table 3 shows the $\mathrm{SNR}_{MS}$ obtained from reconstructions with the three types of gap extension, as averages over these extension types.
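The three extension types can be sketched in terms of sample indices. The helper `extend_gap` is hypothetical, and two points are assumptions: "forwards" is read here as extending the gap towards later samples, and the 48-ms gap is placed at samples 2176 to 2944 of a 5120-sample segment, following the values $L_g = 768$ and $L_c = 2176$ from Section 3.1. At 16 kHz, the missing 16 ms correspond to 256 samples.

```python
def extend_gap(start, end, target_len, mode):
    """Return (start, end) of a gap enlarged to target_len samples.

    start is inclusive, end is exclusive. mode is one of
    "forward", "backward", or "centered" (assumed naming).
    """
    extra = target_len - (end - start)
    if extra < 0:
        raise ValueError("gap is already longer than target_len")
    if mode == "forward":                  # extend towards later samples
        return start, end + extra
    if mode == "backward":                 # extend towards earlier samples
        return start - extra, end
    if mode == "centered":                 # split the extension on both sides
        half = extra // 2
        return start - half, end + (extra - half)
    raise ValueError(f"unknown mode: {mode}")

# 48 ms gap (768 samples) extended to the 64 ms (1024 samples) the network expects.
forward = extend_gap(2176, 2944, 1024, "forward")
backward = extend_gap(2176, 2944, 1024, "backward")
centered = extend_gap(2176, 2944, 1024, "centered")
```

In each case, the samples added to the gap are known context that the network reconstructs anyway; only the original 48 ms are actually missing.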
Also, the corresponding $\mathrm{SNR}_{MS}$ for the LPC-based method are shown. The results are similar to those obtained for larger gaps: for the instruments, the LPC-based method outperformed our network; for the music, our network outperformed the LPC-based method.

| | Music, Ours | Music, LPC | Instruments, Ours | Instruments, LPC |
|---|---|---|---|---|
| Mean | 8.0 | 6.9 | 21.6 | 33.2 |
| Std | 4.6 | 5.5 | 11.5 | 20.1 |

Table 3. $\mathrm{SNR}_{MS}$ (in dB) of reconstructions of 48 ms gaps for the magnitude network and the LPC-based method.

5. Conclusions and Outlook

We proposed a convolutional neural network architecture working as a context encoder on TF coefficients. For the reconstruction of complex signals like music, that network was able to outperform the LPC-based reference method in terms of SNR calculated on magnitude spectrograms. However, LPC yielded better results when applied to simpler signals like instrument sounds. We have further shown that the proposed network was able to adapt to the particular pitches provided by the training material and that it can be applied to gaps shorter than the trained ones. In general, our results suggest that standard components and a moderately sized network can be applied to form audio-inpainting models, offering a number of angles for future improvement.

Training a generative method directly on audio data requires vast datasets and large networks. To construct more compact models from moderately sized datasets, it is imperative to use efficient input audio features and an invertible feature representation at the output. Here, the STFT features, meant as a reasonable first choice, provided decent performance. In the future, we expect more hearing-related features to provide even better reconstructions. In particular, an investigation of Audlet frames, i.e., invertible time-frequency systems adapted to perceptual frequency scales [47], as features for audio inpainting presents intriguing opportunities.
Generally, better results can be expected for increased depth of the network and the available context. Unfortunately, our preliminary tests of simply increasing the network’s depth led to minor improvements only. It seems that a careful consideration of the building blocks of the model is required instead. Here, preferred architectures are those not relying on a predetermined target and input feature length, e.g., a recurrent network. Recent advances in generative networks will provide other interesting alternatives for analyzing and processing audio data as well. These approaches are yet to be fully explored.

Finally, music data can be highly complex and it is unreasonable to expect a single trained model to accurately inpaint a large number of musical styles and instruments at once. Thus, instead of training on a very general dataset, we expect significantly improved performance for more specialized networks that could be trained by restricting the training data to specific genres or instrumentation. Applied to a complex mixture and potentially preceded by a source-separation algorithm, the resulting models could be used jointly in a mixture-of-experts approach [48].

References

[1] Pathak, D., Krahenbuhl, P., Donahue, J., Darrell, T., and Efros, A., “Context Encoders: Feature Learning by Inpainting,” 2016.
[2] Goodfellow, I., Bengio, Y., and Courville, A., Deep Learning, MIT Press, 2016, http://www.deeplearningbook.org.
[3] Pons, J., Nieto, O., Prockup, M., Schmidt, E. M., Ehmann, A. F., and Serra, X., “End-to-end learning for music audio tagging at scale,” CoRR, abs/1711.02520, 2017.
[4] Portnoff, M., “Implementation of the digital phase vocoder using the fast Fourier transform,” IEEE Trans. Acoust. Speech Signal Process., 24(3), pp. 243–248, 1976.
[5] Gröchenig, K., Foundations of Time-Frequency Analysis, Appl. Numer. Harmon. Anal., Birkhäuser, 2001.
[6] Griffin, D. and Lim, J., “Signal estimation from modified short-time Fourier transform,” IEEE Transactions on Acoustics, Speech and Signal Processing, 32(2), pp. 236–243, 1984.
[7] Perraudin, N., Balazs, P., and Søndergaard, P. L., “A fast Griffin-Lim algorithm,” in Applications of Signal Processing to Audio and Acoustics (WASPAA), 2013 IEEE Workshop on, pp. 1–4, IEEE, 2013.
[8] Průša, Z., Balazs, P., and Søndergaard, P., “A noniterative method for reconstruction of phase from STFT magnitude,” IEEE/ACM Transactions on Audio, Speech and Language Processing, 25(5), pp. 1154–1164, 2017.
[9] Schlüter, J., Deep Learning for Event Detection, Sequence Labelling and Similarity Estimation in Music Signals, Ph.D. thesis, Johannes Kepler University Linz, Austria, 2017.
[10] Kingma, D. and Welling, M., “Auto-Encoding Variational Bayes,” CoRR, abs/1312.6114, 2013.
[11] Goodfellow, I., Pouget-Abadie, J., Mirza, M., Xu, B., Warde-Farley, D., Ozair, S., Courville, A., and Bengio, Y., “Generative adversarial nets,” in Advances in neural information processing systems, pp. 2672–2680, 2014.
[12] Donahue, C., McAuley, J., and Puckette, M., “Synthesizing Audio with Generative Adversarial Networks,” ArXiv e-prints, 2018.
[13] Mehri, S., Kumar, K., Gulrajani, I., Kumar, R., Jain, S., Sotelo, J., Courville, A., and Bengio, Y., “SampleRNN: An Unconditional End-to-End Neural Audio Generation Model,” CoRR, abs/1612.07837, 2016.
[14] van den Oord, A., Dieleman, S., Zen, H., Simonyan, K., Vinyals, O., Graves, A., Kalchbrenner, N., Senior, A., and Kavukcuoglu, K., “WaveNet: A Generative Model for Raw Audio,” CoRR, abs/1609.03499, 2016.
[15] Wang, Y., Skerry-Ryan, R., Stanton, D., Wu, Y., Weiss, R., Jaitly, N., Yang, Z., Xiao, Y., Chen, Z., Bengio, S., Le, Q., Agiomyrgiannakis, Y., Clark, R., and Saurous, R., “Tacotron: A Fully End-to-End Text-To-Speech Synthesis Model,” CoRR, abs/1703.10135, 2017.
[16] Shen, J., Pang, R., Weiss, R., Schuster, M., Jaitly, N., Yang, Z., Chen, Z., Zhang, Y., Wang, Y., Skerry-Ryan, R., Saurous, R., Agiomyrgiannakis, Y., and Wu, Y., “Natural TTS Synthesis by Conditioning WaveNet on Mel Spectrogram Predictions,” CoRR, abs/1712.05884, 2017.
[17] Lee, B.-K. and Chang, J.-H., “Packet Loss Concealment Based on Deep Neural Networks for Digital Speech Transmission,” IEEE/ACM Trans. Audio, Speech and Lang. Proc., 24(2), pp. 378–387, 2016, ISSN 2329-9290, doi:10.1109/TASLP.2015.2509780.
[18] Dieleman, S., Oord, A. v. d., and Simonyan, K., “The challenge of realistic music generation: modelling raw audio at scale,” arXiv preprint arXiv:1806.10474, 2018.
[19] Boulanger-Lewandowski, N., Bengio, Y., and Vincent, P., “Modeling Temporal Dependencies in High-Dimensional Sequences: Application to Polyphonic Music Generation and Transcription,” in ICML, 2012.
[20] Blaauw, M. and Bonada, J., “A Neural Parametric Singing Synthesizer,” CoRR, abs/1704.03809, 2017.
[21] Adler, A., Emiya, V., Jafari, M., Elad, M., Gribonval, R., and Plumbley, M., “A constrained matching pursuit approach to audio declipping,” in IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), 2011, doi:10.1109/icassp.2011.5946407.
[22] Adler, A., Emiya, V., Jafari, M. G., Elad, M., Gribonval, R., and Plumbley, M. D., “Audio Inpainting,” IEEE Transactions on Audio, Speech and Language Processing, 20(3), pp. 922–932, 2012, doi:10.1109/TASL.2011.2168211.
[23] Toumi, I. and Emiya, V., “Sparse non-local similarity modeling for audio inpainting,” in ICASSP - IEEE International Conference on Acoustics, Speech and Signal Processing, Calgary, Canada, 2018.
[24] Siedenburg, K., Dörfler, M., and Kowalski, M., “Audio inpainting with social sparsity,” SPARS (Signal Processing with Adaptive Sparse Structured Representations), 2013.
[25] Lieb, F. and Stark, H.-G., “Audio inpainting: Evaluation of time-frequency representations and structured sparsity approaches,” Signal Processing, 153, pp. 291–299, 2018.
[26] Bahat, Y., Schechner, Y., and Elad, M., “Self-content-based audio inpainting,” Signal Processing, 111, pp. 61–72, 2015, doi:10.1016/j.sigpro.2014.11.023.
[27] Perraudin, N., Holighaus, N., Majdak, P., and Balazs, P., “Inpainting of long audio segments with similarity graphs,” IEEE/ACM Transactions on Audio, Speech and Language Processing, PP(99), pp. 1–1, 2018, ISSN 2329-9290, doi:10.1109/TASLP.2018.2809864.
[28] Etter, W., “Restoration of a discrete-time signal segment by interpolation based on the left-sided and right-sided autoregressive parameters,” IEEE Transactions on Signal Processing, 44(5), pp. 1124–1135, 1996, doi:10.1109/78.502326.
[29] Kauppinen, I. and Roth, K., “Audio signal extrapolation–theory and applications,” in Proc. DAFx, pp. 105–110, 2002.
[30] Srivastava, N., Hinton, G., Krizhevsky, A., Sutskever, I., and Salakhutdinov, R., “Dropout: a simple way to prevent neural networks from overfitting,” The Journal of Machine Learning Research, 15(1), pp. 1929–1958, 2014.
[31] Abadi, M., Agarwal, A., Barham, P., Brevdo, E., Chen, Z., Citro, C., Corrado, G., Davis, A., Dean, J., Devin, M., Ghemawat, S., Goodfellow, I., Harp, A., Irving, G., Isard, M., Jia, Y., Jozefowicz, R., Kaiser, L., Kudlur, M., Levenberg, J., Mané, D., Monga, R., Moore, S., Murray, D., Olah, C., Schuster, M., Shlens, J., Steiner, B., Sutskever, I., Talwar, K., Tucker, P., Vanhoucke, V., Vasudevan, V., Viégas, F., Vinyals, O., Warden, P., Wattenberg, M., Wicke, M., Yu, Y., and Zheng, X., “TensorFlow: Large-Scale Machine Learning on Heterogeneous Systems,” 2015, software available from tensorflow.org.
[32] Kingma, D. and Ba, J., “Adam: A Method for Stochastic Optimization,” online: arxiv.org/pdf/1412.6980v9.
[33] Ramachandran, P., Zoph, B., and Le, Q., “Searching for Activation Functions,” online: arxiv.org/pdf/1710.05941v2.
[34] Ioffe, S. and Szegedy, C., “Batch Normalization: Accelerating Deep Network Training by Reducing Internal Covariate Shift,” CoRR, abs/1502.03167, 2015.
[35] Průša, Z. and Søndergaard, P. L., “Real-Time Spectrogram Inversion Using Phase Gradient Heap Integration,” in Proc. Int. Conf. Digital Audio Effects (DAFx-16), pp. 17–21, 2016.
[36] Průša, Z., “The Phase Retrieval Toolbox,” in AES International Conference On Semantic Audio, Erlangen, Germany, 2017.
[37] Zhao, H., Gallo, O., Frosio, I., and Kautz, J., “Loss Functions for Image Restoration With Neural Networks,” IEEE Transactions on Computational Imaging, 3(1), pp. 47–57, 2017, ISSN 2333-9403, doi:10.1109/TCI.2016.2644865.
[38] Krogh, A. and Hertz, J., “A Simple Weight Decay Can Improve Generalization,” in Advances in neural information processing systems 4, pp. 950–957, Morgan Kaufmann, 1992.
[39] Engel, J., Resnick, C., Roberts, A., Dieleman, S., Eck, D., Simonyan, K., and Norouzi, M., “Neural Audio Synthesis of Musical Notes with WaveNet Autoencoders,” 2017.
[40] Defferrard, M., Benzi, K., Vandergheynst, P., and Bresson, X., “FMA: A Dataset for Music Analysis,” in 18th International Society for Music Information Retrieval Conference, 2017.
[41] Sturmel, N. and Daudet, L., “Signal reconstruction from STFT magnitude: A state of the art,” in International conference on digital audio effects (DAFx), pp. 375–386, 2011.
[42] Tremain, T. E., “The Government Standard Linear Predictive Coding Algorithm: LPC-10,” Speech Technology, pp. 40–49, 1982.
[43] Rajman, M. and Pallota, V., Speech and language engineering, EPFL Press, 2007.
[44] Kauppinen, I., Kauppinen, J., and Saarinen, P., “A method for long extrapolation of audio signals,” Journal of the Audio Engineering Society, 49(12), pp. 1167–1180, 2001.
[45] Burg, J. P., “Maximum entropy spectral analysis,” 37th Annual International Meeting, Soc. of Explor. Geophys., Oklahoma City, 1967.
[46] Kauppinen, I. and Kauppinen, J., “Reconstruction method for missing or damaged long portions in audio signal,” Journal of the Audio Engineering Society, 50(7/8), pp. 594–602, 2002.
[47] Necciari, T., Holighaus, N., Balazs, P., Průša, Z., Majdak, P., and Derrien, O., “Audlet Filter Banks: A Versatile Analysis/Synthesis Framework Using Auditory Frequency Scales,” Applied Sciences, 8(1:96), 2018.
[48] Yuksel, S. E., Wilson, J. N., and Gader, P. D., “Twenty years of mixture of experts,” IEEE transactions on neural networks and learning systems, 23(8), pp. 1177–1193, 2012.

† Acoustics Research Institute, Austrian Academy of Sciences, Wohllebengasse 12–14, A-1040 Vienna, Austria
Email address: amarafioti@kfs.oeaw.ac.at
Email address: nicki.holighaus@oeaw.ac.at
Email address: piotr.majdak@oeaw.ac.at

∗ Swiss Data Science Center, ETH Zürich, Universitätstrasse 25, 8006 Zürich
Email address: nathanael.perraudin@sdsc.ethz.ch
