Overcoming Some Drawbacks of Dynamic Movement Primitives

Dynamic Movement Primitives (DMPs) is a framework for learning a point-to-point trajectory from a demonstration. Despite being widely used, DMPs still present some shortcomings that may limit their usage in real robotic applications. Firstly, at the …

Authors: Michele Ginesi, Nicola Sansonetto, Paolo Fiorini

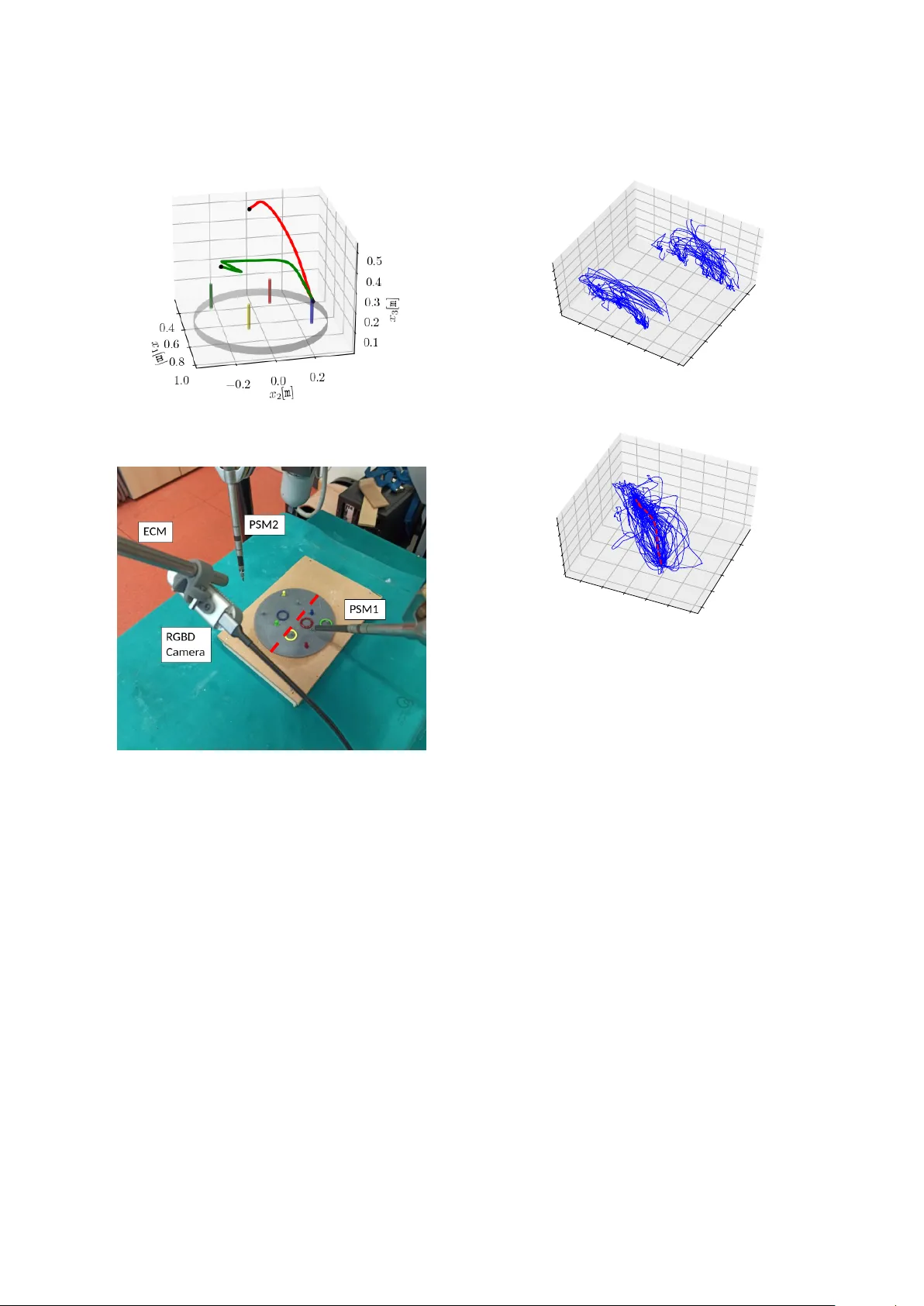

Overcoming Some Dra wbacks of Dynamic Mov ement Primiti ves Michele Ginesi a, ∗ , Nicola Sansonetto a , Paolo Fiorini a a Department of Computer Science, University of V er ona, Strada Le Grazie 15, V er ona, Italy Abstract Dynamic Mov ement Primitiv es (DMPs) is a framework for learning a point-to-point trajectory from a demonstra- tion. Despite being widely used, DMPs still present some shortcomings that may limit their usage in real robotic applications. Firstly , at the state of the art, mainly Gaussian basis functions ha ve been used to perform function approximation. Secondly , the adaptation of the trajectory generated by the DMP hea vily depends on the choice of hy- perparameters and the ne w desired goal position. Lastly , DMPs are a frame work for ‘one-shot learning’, meaning that they are constrained to learn from a unique demonstration. In this work, we present and motiv ate a new set of basis functions to be used in the learning process, showing their ability to accurately approximate functions while ha ving both analytical and numerical advantages w .r .t. Gaussian basis functions. Then, we show how to use the in variance of DMPs w .r .t. a ffi ne transformations to make the generalization of the trajectory robust against both the choice of hyperparameters and ne w goal position, performing both synthetic tests and experiments with real robots to sho w this increased robustness. Finally , we propose an algorithm to extract a common behavior from multiple observations, validating it both on a synthetic dataset and on a dataset obtained by performing a task on a real robot. K eywor ds: Learning from Demonstrations, Motion and Path Planning, Kinematics, Dynamic Movement Primiti ves. 1. Intr oduction The recent improv ements in robot dexterity have giv en rise to increasing attention to Learning from Demonstration (LfD) approaches to make robots faith- fully mimic human motions. Dynamic Movement Primitiv e (DMP) [1, 2, 3, 4] is one of the most used frame works for trajectory learn- ing from a single demonstration. They are based on a system of second-order Ordinary Di ff erential Equations (ODEs), in which a for cing term can be “learned” to en- code the desired trajectory . This approach has already been prov en e ff ectiv e in teaching a robot how to perform some human task as, for instance, (table) tennis swing [1, 5], to play drums [6], to write [7, 8], and to per- form surgical-related tasks [9, 10]. The framew ork of DMPs has been shown to be fle xible and robust enough to allow learning sensory experience [11, 12, 13], han- dling obstacle a voidance [14, 15, 16, 17], describing bi- manual tasks [18], learning orientations [19, 20], and working in scenarios with human-robot interaction [21]. Despite their wide use, DMPs approach has still some shortcomings that need to be fixed to obtain a more ro- bust frame work. ∗ Corresponding author In this work, we discuss three aspects of DMPs and propose modifications that guarantee a more robust im- plementation of the framework. In more detail, we dis- cuss di ff erent classes of basis functions to be used in- stead of the Gaussian radial basis functions. The n, we show how to exploit the in variance of DMPs with re- spect to particular transformations in order to make the generalization of the learned trajectory more robust. Fi- nally , we present an algorithm to learn a unique DMP from multiple observations without the need to rely on probabilistic approaches or additional parameters. W e remark that probabilistic approaches for trajectory learning can deal with generalization and learning from multiple demonstrations. These approaches include, for instance, Pr obabilistic Movement Primitives [22], Ker - nelized Movement Primitives [23], and Gaussian Mix- tur e Models [24]. Howe ver , these methods necessarily require multiple trajectories to extract a common behav- ior , and, di ff erently from DMPs, cannot be used as one- shot learning framew orks. Moreov er, generalization is heavily limited by the quality of the dataset. Indeed, if the dataset is not descripti ve enough of the task and all the di ff erent scenarios, the learned behavior may fail to generalize in certain situations. Finally , these methods hav e not only a probabilistic learning phase but also a Pr eprint submitted to Elsevier J uly 21, 2021 stochastic execution. This aspect makes them not suit- able in some critical scenarios, such as Robotic Mini- mally Inv asiv e Surgery . On the other hand, DMP is a completely deterministic approach. Thus, the improve- ments we present in this work allow to extend the DMP framew ork adding some of the strong points of proba- bilistic frame works while maintaining the strengths of a deterministic approach. The work is organized as follows. In Section 2 we revie w the two classical formulations of DMPs, empha- sizing their shortcomings. In Section 3 we revie w mul- tiple classes of basis functions and introduce a new one, which has both the desirable properties of being smooth and compactly supported. W e then test and compare various numerical aspects of all the sets of basis func- tions presented, showing that our proposed one gives rise to a numerically more stable and faster learning phase. In Section 4 we show ho w to generalize the DMPs to an y new starting and goal positions by e xploit- ing the in variance property of DMPs under a ffi ne trans- formations. In Section 5 we present and test a nov el algorithm to learn a unique DMP from a set of observ a- tions. Lastly , in Section 6 we present the conclusions. Our implementation of DMPs, written in Python 3.8, is av ailable at the link https://github.com/ mginesi/dmp_pp . T ogether with the implementation of DMPs and our e xtensions, the repository contains the scripts to run all the tests presented in Sections 3.3, 4.2, and 5.2, together with the tests that are mentioned but not shown. 2. Dynamic Mov ement Primitiv es: an Ov erview DMPs are used to model both periodic and discrete mov ements [1, 2, 3]. Ho we ver , in this work, we will focus on the latter . They consist of a system of second-order ODEs (one for each dimension of the ambient space) of mass-spring- damper type with a forcing term. DMPs aim to model the forcing term in such a way to be able to general- ize the trajectory to ne w start and goal positions while maintaining the shape of the learned trajectory . The one-dimensional formulation of DMPs is [1, 2, 3]: ( τ ˙ v = K ( g − x ) − Dv + ( g − x 0 ) f ( s ) (1a) τ ˙ x = v (1b) where x , v ∈ R are, respectiv ely , position and velocity of a prescribed point of the system. x 0 ∈ R is the initial position, and g ∈ R is the goal . Constants K , D ∈ R + are, respectiv ely , the spring and damping terms, chosen in such a way that the associated homogeneous system is critically damped: D = 2 √ K . P arameter τ ∈ R + is a temporal scaling factor , and f is a real-v alued, non- linear for cing (also called perturbation ) term . s ∈ (0 , 1] is a re-parametrization of time t ∈ [0 , T ], governed by the so-called canonical system : τ ˙ s = − α s . (2) α ∈ R + determines the exponential decay of canonical system (2). The initial v alue is set to s (0) = 1. The forcing term f in (1a) is written in terms of basis functions as f ( s ) = P N i = 0 ω i ψ i ( s ) P N i = 0 ψ i ( s ) s , (3) where ψ i ( s ) = exp − h i ( s − c i ) 2 (4) are Gaussian Basis Functions (GBFs) with centers c i and widths h i . Centers c i are defined as: c i = exp − α i T N , i = 0 , 1 , . . . , N , (5) so that they are equispaced in time interv al [0 , T ]. W idths h i [25] are defined as h i = ˜ h ( c i + 1 − c i ) 2 , i = 0 , 1 , . . . , N − 1 , h N = h N − 1 , (6) where ˜ h > 0 controls the overlapping between the basis functions. Usually , ˜ h is set to one, ˜ h = 1 [19, 26]. The learning pr ocess focuses on the computation of the weights ω i ∈ R that best approximate the desired forc- ing term, obtained by solving (1a) for f . Given a de- sired trajectory x ( t ) , t ∈ [0 , T ] with velocity v ( t ), we set τ = 1 , x 0 = x (0) , and g = x ( T ). The forcing term is then computed as f ( s ( t )) = 1 g − x 0 ˙ v ( t ) − K g − x ( t ) + Dv , (7) where s ( t ) = e xp( − α t ). Then, the vector ω = [ ω 0 , ω 1 , . . . , ω N ] T can be computed by solving the lin- ear system A ω = b , (8) where A = [ a hk ] is a ( N + 1) × ( N + 1) matrix and b = [ b h ] is a vector in R N + 1 with components, respectiv ely , a hk = Z s ( T ) s (0) ψ h ( s ) ψ k ( s ) P N i = 0 ψ i ( s ) 2 s 2 d s , (9a) b h = Z s ( T ) s (0) ψ h ( s ) P N i = 0 ψ i ( s ) f ( s ) s d s . (9b) 2 0 1 2 3 x 1 0 . 0 0 . 5 1 . 0 1 . 5 2 . 0 x 2 (a) Slight change in goal position. 0 1 2 3 x 1 − 1 . 0 − 0 . 5 0 . 0 0 . 5 1 . 0 x 2 (b) Change of sign in goal position. Figure 1: Comparison between DMPs’ formulation (10) and (11). In both plots, the blue solid line shows the learned trajectory . The dot and star mark, respectively , the new initial position x 0 (which, for simplicity , in this example is kept the same as the learned one) and new goal position g . The black dotted line shows the generalization to the new goal using DMP formulation (10), while the dashed green line shows the generalization using formulation (11). The grid is plotted to emphasize that in Figure 1b the vertical component of the new goal position is of opposite sign than the learned one. When dealing with d -dimensional trajectories, we make d decoupled copies of system (1), obtaining the following v ector formulation: ( τ ˙ v = K ( g − x ) − Dv + ( g − x 0 ) f ( s ) (10a) τ ˙ x = v (10b) where x , v , g , x 0 , f ( s ) ∈ R d and K , D ∈ R d × d are diago- nal matrices, so to maintain each component decoupled from the others. The operator denotes the component- wise multiplication: given v = [ v i ] , w = [ w i ] ∈ R d , we define v w = [ v i w i ] i . Thanks to the decoupling of the system, the forcing term can be learned component by component. Three main drawbacks characterize DMP formula- tion (10) (see [15]). Firstly , if in an y component the goal position coincides with the starting position, g = x 0 , the perturbation term f clearly does not contribute to the ev olution of the dynamical system. Secondly , if g − x 0 is “small” the scaling of the perturbation term f by the quantity g − x 0 may produce une xpected (and undesired) behaviors. Finally , if the scaling factor g − x 0 changes sign from the learned trajectory to the new one, the tra- jectory will result mirrored. In Figure 1 we present an example similar to that in Fig- ure 2 of [15] to sho w the aforementioned drawbacks. In both tests, the vertical component of the di ff erence g − x 0 is quite small: ( g − x 0 ) | 2 = 1 15 ≈ 0 . 0667. Fig- ure 1a sho ws that e ven a slight change in goal position results in a complete di ff erent trajectory when using for- mulation (1). Figure 1b, instead, shows the ‘mirror- ing’ problem. In this case, the vertical component of g − x 0 changes sign from the learned to the new exe- cution. Thus, the trajectory results mirrored w .r .t. the horizontal axis x 2 = 0. T o ov ercome these disadvantages, the following for- mulation was proposed in [14, 27, 15]: ( τ ˙ v = K ( g − x ) − Dv − K ( g − x 0 ) s + Kf ( s ) (11a) τ ˙ x = v (11b) where the ev olution of s is still described by the canon- ical system (2), and the forcing term is still written in terms of basis functions as in (3). By remo ving the scaling term g − x 0 from the forcing term f , this new formulation solves the aforementioned problems of (1), as it can be seen in Figure 1. Ho wev er , while solving the drawbacks characterizing formulation (10), DMPs’ formulation (11) still presents an important drawback: the generalization to di ff erent spatial scales is not always feasible since the forcing term does not hav e any dependence on the relati ve position between starting and ending points. W e will discuss the details of this aspect in Section 4 when presenting our proposed modifications to solve this dra wback. 3. New Set of Basis Functions A change of the set of basis functions is moti vated by the fact that a desirable property of basis functions is to be compactly supported [8] since compactly supported basis functions pro vide an easier update of the learned 3 trajectory . Indeed, if only a portion of the trajectory has to be modified, only a subset of the weights needs to be re-computed (we will discuss the details of this as- pect in Section 3.2). Moreo ver , while with GBFs the forcing term nev er vanishes, compactly supported ba- sis functions allow the forcing term to be zero outside the support, thus pro viding a faster conv ergence of the system to the goal position. In [8] the authors proposed to use T runcated Gaus- sian Basis Functions (TGBFs): ˜ ψ i ( s ) = exp − h i 2 ( s − c i ) 2 if s − c i ≤ θ i 0 otherwise , (12) where c i and h i are defined as in (5) and (6) respectively , and θ i = K / √ h i . Unfortunately , this approach has two main drawbacks. Firstly , a discontinuity is introduced at the truncation points θ i , which may produce une xpected behaviors. Secondly , in order to work properly , this ap- proach requires the introduction of biases terms in (3), which now reads f ( s ) = P N i = 0 ( ω i s + β i ) ˜ ψ i ( s ) P N i = 0 ˜ ψ i ( s ) , (13) thus doubling the number of parameters that need to be learned (a weight ω i and a bias β i for each basis func- tion), and increasing the ov erall computational cost. Finally , we remark that these basis functions are not compactly supported since their support is an interval ( −∞ , L ] with L ∈ R . Moreover , since the truncation point is at the “right” in s (i.e. ˜ ψ i ( s ) ≡ 0 when s is abov e a certain quantity), and s is a decreasing function in t , we remark that these basis functions ne ver v anish as t → ∞ . In this part, we propose a ne w set of basis functions that improves the TGBFs (12). Indeed, our proposed set contains compactly supported basis functions, which then v anish in both directions. Moreo ver , since no trun- cation (and, thus, discontinuities) are introduced, there is no need to double the number of parameters, and our formulation will require the learning of only the weights ω i in (3). W e will also show that our proposed set of basis func- tions results in a minimization problem that is both nu- merically more stable and faster to solve. 3.1. Mollifier-like and W endland Basis Functions In this work, we propose a new set of basis functions that are both smooth and compactly supported. Consider the mollifier ϕ : R → R defined as ϕ ( x ) = 1 I n exp − 1 1 −| x | 2 if | x | < 1 0 otherwise , (14) 0 . 0 0 . 2 0 . 4 0 . 6 0 . 8 1 . 0 s 0 . 0 0 . 2 ϕ ( s ) 0 . 0 0 . 2 0 . 4 0 . 6 0 . 8 1 . 0 t 0 . 0 0 . 2 ϕ ( s ( t )) Figure 2: Plot of mollifier-like basis functions as defined in (15). In this example N = 9, α = 4 and T = 1. The first plot shows the basis functions as a function of s , while the second plot shows them as functions of time t . where I n is set so that R R ϕ ( x ) d x = 1. Function ϕ is smooth and compactly supported (its support is the in- terval [ − 1 , 1]). It is then natural to define the set of mollifier -like basis functions { ϕ i ( s ) } i = 0 , 1 ,..., N giv en by ϕ i ( s ) = exp − 1 1 − r 2 if r < 1 0 otherwise , (15) where r is a function of s defined as r = | a i ( s − c i ) | , (16) where, gi ven the centers c i as in (5), we define the widths a i as a i = ˜ h | c i − c i − 1 | , i = 1 , 2 , . . . , N , a 0 = a 1 . (17) As in (6), scalar ˜ h > 0 determines the ov erlapping be- tween basis functions. In all our tests, we fix ˜ h to value one: ˜ h = 1. W e remark that the usual normalization term I n in (14) is omitted in (15) since it does not enter our approach. In Figure 2 we plot an example of mollifier-lik e basis functions both as function of s and of t . W e remark that the basis functions are equispaced in t . Moreover , since s is a strictly decreasing function of t , their order changes from s to t (the first basis function in s is the last in t and vice versa), as it was for the GBFs used so far in the literature. Other well known regular (see T able 1 for details on the regularity) and compactly supported basis functions are W endland functions [28, 29]. In Section 3.3 we test 4 T able 1: Summary of the properties (regularity and support compactness) of the di ff erent sets of basis functions presented in Section 3. Bold font is used to mark the more desirable properties (smoothness and compact support). Function Regularity Compactly Suppor ted Gaussian ψ i ( s )( · ) C ω ( R ) No T runcated Gaussian ˜ ψ i ( s )( · ) Discontinuous No Mollifier-like ϕ i ( s )( · ) C ∞ ( R ) Y es W endland φ (2) ( · ) C 0 ( R ) Y es φ (3) ( · ) C 0 ( R ) Y es φ (4) ( · ) C 2 ( R ) Y es φ (5) ( · ) C 2 ( R ) Y es φ (6) ( · ) C 4 ( R ) Y es φ (7) ( · ) C 4 ( R ) Y es φ (8) ( · ) C 6 ( R ) Y es the follo wing W endland basis function (where the op- erator ( · ) + denotes the positive part function : ( x ) + = max { 0 , x } ): φ (2) i ( r ) = (1 − r ) + 2 , (18a) φ (3) i ( r ) = (1 − r ) + 3 , (18b) φ (4) i ( r ) = (1 − r ) + 4 (4 r + 1) , (18c) φ (5) i ( r ) = (1 − r ) + 5 (5 r + 1) , (18d) φ (6) i ( r ) = (1 − r ) + 6 (35 r 2 + 18 r + 3) , (18e) φ (7) i ( r ) = (1 − r ) + 7 (16 r 2 + 7 r + 1) , (18f ) φ (8) i ( r ) = (1 − r ) + 8 (32 r 3 + 25 r 2 + 8 r + 1) . (18g) where c i are the centers as in (5) and a i are the widths as in (17), and r is defined as in (16). In T able 1 we summarize the properties of the ba- sis functions we presented in this section. As can be seen, mollifier -like basis functions are the only ones be- ing both smooth and compactly supported. 3.2. T rajectory Update In [8] was pointed out that if only a portion of the trajectory has to be re-learned, only a subset of weights has to be re-computed. T o be more precise, assume that a desired trajectory γ ( t ) has to be updated in the time in- terval [ t 0 , t 1 ] ⊂ [0 , T ]. Then, not all the weights ω i hav e to be re-computed, but only those whose basis functions ϕ i satisfy (where the support has to be intended on the values of t and not s ) supp( ϕ i ) ∩ [ t 0 , t 1 ] , ∅ . From this, it is easy to notice that for classical GBFs this means that all weights hav e to be re-computed, since their support is the whole real line R : supp( ψ i ) = R . For compactly supported basis functions, this intersection will be usually smaller than the whole set of index es ϕ ¯ ı c ¯ ı c ¯ ı − 1 a ¯ ı c ¯ ı + 1 a ¯ ı ( ) ˜ s 1 ˜ s 0 ( ) s 1 s 0 Figure 3: Depiction of compactly supported basis functions whose support intersects an interval ( s 1 , s 0 ). The basis functions drawn us- ing solid lines are those whose weights have to be updated. As one can observe, they satisfy condition (19). Interval ( ˜ s 1 , ˜ s 0 ) shows the domain in which the integrals in (9) ha ve to be computed. { 0 , 1 , . . . , N } , and it will depend only on the length t 1 − t 0 of the interval. The support, in s , of each basis function ϕ i is the interval ( c i − 1 / a i , c i + 1 / a i ) . Therefore, the set of weights { ω i } i ∈ I that has to be updated are those for which c i − 1 a i ≤ s 0 and c i + 1 a i ≥ s 1 , (19) where s 0 = exp( − α t 0 ) and s 1 = exp( − α t 1 ). Once the set of index es I has been identified using (19), one must solve a linear problem as in (8), where A is a | I | × | I | matrix, A ∈ R | I |×| I | , and b is a vector with | I | components, b ∈ R | I | . The components of matrix A and vector b are gi ven in (9). W e remark that the integrals in (9) hav e to be ev aluated on the interval ( ˜ s 1 , ˜ s 0 ) = [ i ∈ I supp( ϕ i ) , where the support has to be intended in s . By solving the linear problem, the weights ω i , i ∈ I are updated. Figure 3 gives an intuition on how to identify the set { ϕ i } i ∈ I of basis functions satisfying (19) and the interval ( ˜ s 1 , ˜ s 0 ). 5 10 1 10 2 n. of basis functions 10 − 3 10 − 2 10 − 1 10 0 rel. err ψ ˜ ψ U ˜ ψ B ϕ φ (2) φ (3) φ (4) φ (5) φ (6) φ (7) φ (8) (a) Error as function of the number of basis functions. 10 1 10 2 n. of parameters 10 − 3 10 − 2 10 − 1 10 0 rel. err ψ ˜ ψ U ˜ ψ B ϕ φ (2) φ (3) φ (4) φ (5) φ (6) φ (7) φ (8) (b) Error as function of the number of parameters. Figure 4: Plot of the L 2 error done by approximating the hat function (20). The first plot shows the error w .r .t. the number of basis functions, while the second plot shows the error w .r .t. the number of parameters that has to be learned. TGBFs are tested both using the (classical) unbiased formulation (3) and the biased one (13). These two di ff erent approaches are denoted respectively by ˜ ψ U and ˜ ψ B in the legend. Remark 1 . In the case of TGBFs, the set of weights to update will depend not only on the length of the interv al but also on its ‘position’. For instance, if the last part of the trajectory has to be updated, i.e. [ t 0 , t 1 ] = [ t 0 , T ], all the weights must be updated since T ∈ supp( ˜ ψ i ) for any i = 0 , 1 , . . . , N . Thus, in general, when a portion of the trajectory has to be modified, the number of weights to re-compute will be less when using compactly supported basis functions than when using TGBFs. 3.3. Results W e now in vestig ate the goodness of the approxima- tions obtained using the various examples of basis func- tions we introduced. In particular , we test both the clas- sical (4) and the truncated (12) Gaussian basis func- tions, the mollifier-like basis functions (15), and the W endland functions (18). W e test three numerical as- pects: in Section 3.3.1 the approximation error on par- ticular target functions, in Section 3.3.2 the condition number of matrix A in (8), and in Section 3.3.3 we test the computtional time needed to solve the minimization problem (8). Additionally , in Section 3.3.4 we present a test on the trajectory update property presented in Sec- tion 3.2. 3.3.1. Numerical Accuracy . W e test the accuracy of the approximation of the fol- lowing function: η ( t ) = t 2 cos( π t ) , t ∈ [0 , 1] . (20) Figure 4 shows the approximation error, measured w .r .t. the L 2 -norm, both w .r .t. the number of basis func- tions (Figure 4a), and w .r .t. the number of parameters that need to be learned (Figure 4b). W e recall that for TGBFs the number of parameters is double the num- ber of basis functions since ev ery basis function requires one weight and one bias term. On the other hand, for all other classes of basis functions, the number of parame- ters coincide with the number of basis functions, since for each basis function only one parameter (the weight) has to be computed. The plot shows that TGBFs (12) work properly only us- ing the biased formulation (denoted by ˜ ψ B in the leg- end) for the forcing term (13), which means that giv en N basis functions we are solving a 2 N -length linear sys- tem when computing the weights and the biases. On the other hand, when using the unbiased formulation (3) (denoted by ˜ ψ U in the legend) the approximant does not con ver ge to the desired forcing term. W e observe that when comparing the error w .r .t. the number of basis functions, the error obtained by us- ing TGBFs is comparable to the error done using clas- sical GBFs. On the other hand, when comparing the error w .r .t. the number of parameters that hav e to be computed, TGBFs approximate worse than mollifier ba- sis functions when the number of parameters is below 60. Usually , in the applications, no more than fifty ba- sis functions are used. For this particular value and target function, mollifier-like basis functions and trun- cated Gaussian have almost identical approximation er- rors. Ho wever , we remark that these results depend on the particular choice of the tar get function η in (20), 6 10 1 10 2 n. of parameters 10 − 4 10 − 3 10 − 2 10 − 1 10 0 rel. err ψ ˜ ψ U ˜ ψ B ϕ φ (2) φ (3) φ (4) φ (5) φ (6) φ (7) φ (8) (a) pushing_needle . 10 1 10 2 n. of parameters 10 − 4 10 − 3 10 − 2 10 − 1 10 0 rel. err ψ ˜ ψ U ˜ ψ B ϕ φ (2) φ (3) φ (4) φ (5) φ (6) φ (7) φ (8) (b) positioning_needle . Figure 5: Plot of the L 2 error done approximating two real gestures. The error is given as a function of the number of parameters that need to be learned. TGBFs are tested both using the (classical) unbiased formulation (3) and the biased one (13). These two di ff erent approaches are denoted respectiv ely by ˜ ψ U and ˜ ψ B in the legend. and that di ff erent target functions may gi ve di ff erent re- sults. T ests performed with di ff erent target functions show similar results: classical Gaussian functions re- main the best approximator , while truncated Gaussian and mollifier-like giv e similar approximation accuracy and usually work better than W endland functions. Additionally , we performed this test on trajectories obtained from real task ex ecutions. In particular , we re- lied on the JIGSA W dataset [30]. This dataset contains data on three elementary surgical tasks (suturing, knot- tying, and needle-passing). The demonstrations were collected from eight di ff erent subjects of di ff erent ex- perience in robotic surgery . In Figure 5 we show the con ver gence error for two gestures extracted from the dataset. In particular , Figure 5a shows the results for the pushing_needle gesture, while Figure 5b shows the results for the positioning_needle gesture. Both gestures were extracted from the same user (‘D’) per- forming the same task (suturing). As it can be seen, the results are similar to those obtained by approximating function η in (20): GBFs (4) are the best approximators, TGBFs (12) and mollifier -like basis functions (15) hav e similar con vergence result, and W endland basis func- tions (18) are the less accurate basis functions. In all tests, we tested classical Gaussians, W endland, and mollifier-lik e basis functions also using the biased formulation (13), without noticing any di ff erence in the goodness of the approximation. 3.3.2. Condition Number . W e no w discuss the numerical accuracy of the min- imization problem (8) used to compute the weights ω i 10 1 10 2 n. of basis functions 10 5 10 6 10 7 10 8 condition number ψ ϕ φ (2) φ (3) φ (4) φ (5) φ (6) φ (7) φ (8) Figure 6: Condition number of the matrix A with elements a hk defined in (32) w .r .t. the number of basis functions. in (3). T o do so, we in vestigate the condition number of matrix A , cond( A ) def = k A k k A − 1 k , in (8). The im- portance of this test is giv en from the fact that when one solves numerically a linear system, the approxima- tion error is directly proportional to the condition num- ber . Indeed, when numerically solving a linear system A ω = b , whose exact solution is ˜ ω , and numerical so- lution is ˆ ω , the following inequality holds: k ˜ ω − ˆ ω k k ˆ ω k ≤ cond( A ) k ς k k b k , (21) where ς def = b − A ˜ ω is the r esidual . Inequality (21) says that the relative error done when numerically solving a linear system is amplified by the condition number of the matrix A . 7 0 20 40 60 80 100 1 20 0 20 40 60 80 100 120 Figure 7: Sparsity pattern of matrix A with elements a hk defined in (9a) in the case of N = 2 7 = 128 using mollifier-like basis functions. In this test, we do not consider TGBFs since, in this case, the components A and b of the linear problem (8) hav e di ff erent formulations, ha ving to learn both the weights and the biases. Figure 6 shows that the condition number cond( A ) of matrix A increases faster for GBFs, while the com- pactly supported basis functions show a slo wer increase. Moreov er , ev en with only twenty basis functions, the condition number obtained with GBFs is significantly bigger than any compactly supported basis function. This means that solving the minimization problem (8) using GBFs results in a more severe numerical cancel- lation error than mollifier-lik e or W endland functions. The lower condition number can be explained by the fact that mollifier -like and W endland basis functions are compactly supported, and then the resulting matrix A will hav e many o ff -diagonal components equal to zero, as it can be seen in the sparsity pattern shown in Fig- ure 7. On the other hand, when one uses GBFs, all the components of A are non-zero, since ψ i ( s ) > 0 , ∀ s ∈ R . Thus, A is a full matrix when using GBFs, while it is a sparse n − diagonal matrix when using compactly sup- ported basis functions; and, in general, full matrices hav e a bigger condition number than sparse n − diagonal ones. W e remark that con ver gence analysis shown in Fig- ures 4 and 5 is done on particular choices of target func- tions. The conv ergence error may di ff er depending on the forcing term that needs to be approximated. How- ev er , we stress that the condition number shown in Fig- ure 6 does not depend on the target function but only on the choice of basis functions. 10 1 10 2 n. of basis fun ctions 10 − 4 10 − 3 time [ sec ] ψ ϕ φ (2) φ (3) φ (4) φ (5) φ (6) φ (7) φ (8) Figure 8: A verage computational time when solving the minimization problem (8) as function of the number of basis functions N . 3.3.3. Computational T ime An additional adv antage in using compactly sup- ported basis functions lies in the improvements in com- putational time when solving the linear problem (8). In- deed, when the number of basis functions N increases, the entries in matrix A increases quadratically: O ( N 2 ). When GBFs are used, all entries in A are non-zero. On the other hand, when compactly supported basis func- tions are adopted, the number of non-zero entries in A increases linearly , O ( N ), where is the number of non- zero diagonals in A , and is determined by the overlap- ping between basis functions, which depends on param- eter ˜ h in (17). Consequently , as the number of basis functions N in- creases, the number of operations that are needed to solve the linear problem (8) increases cubically , O ( N 3 ), when the matrix is full; and only quadratically , O ( N 2 ), when the matrix is sparse. Thus, one should expect the minimization problem (8) to be f aster to solve when compactly supported basis functions are used. T o test the improv ement in computational time, we perform the following test. For di ff erent values of the number of basis functions N , we compute the matrix A in (8). Then, for each value of N , we consider thirty di ff erent v alues for vector b and solve the linear problem (8) saving the time needed to solv e it. W e perform this test for the GBFs (4), mollifier -like basis functions (15), and W endland basis functions (18). Figure 8 shows the result of this test. In particular, the log-scale plot shows the av erage computational time for each v alue of N . As it can be seen, as the number of ba- sis functions increases, the computational time needed to solve the linear problem (8) has a greater gro wth when GBFs are used. On the other hand, when com- pactly supported basis functions are adopted, the incre- ment in computational time is reduced. 8 − 1 0 1 x 1 − 1 . 5 − 1 . 0 − 0 . 5 0 . 0 0 . 5 1 . 0 1 . 5 2 . 0 x 2 − 1 0 1 x 1 Figure 9: Result for the ‘trajectory update’ property of compactly sup- ported basis functions. On the left, the two trajectories are sho wn, showing that they di ff er only in the central portion. On the right, the execution of the obtained DMP is shown. The DMP is initially learned from the ‘big’ trajectory , and then updated using the middle portion of the small one. T ests were performed on a notebook with a quad-core Intel Core i7-7000 CPU with 16 GB of RAM. 3.3.4. T rajectory Update In this Section, we present a synthetic test to show the “trajectory update” property presented in Section 3.2. T o do so, we generate two trajectories, which can be seen on the left plot in Figure 9, using clamped splines (to hav e null initial and final velocities). The trajecto- ries are identical on the ‘tails’ but they di ff er in the cen- tral portion (one is larger than the other). T o show the update properties of compactly supported basis func- tions, we start by learning a DMP from the ‘largest’ trajectory (shown in red in Figure 9). DMPs parame- ters are K = K I d 2 and D = D I d 2 with K = 150 and D = 2 √ K ≈ 24 . 49, α = 4, and N = 100. Next, we identificate, using (19), the set of basis func- tions that have to be updated when the middle portion of the trajectory has to be updated. This result in the set of indexes I = { 24 , 25 , . . . , 63 } ⊂ { 0 , 1 , . . . , N } = { 0 , 1 , . . . , 100 } . Then, we re-compute the set of weights { ω i } i ∈ I . The plot on the right in Figure 9 shows the ex ecution of the resulting, updated DMP . As one can observe, by updating only a subset of the weights the resulting tra- jectory mimics the new desired beha vior . 3.4. General Considerations The tests performed in Section 3.3 sho wed the advan- tages of using compactly supported basis functions. Firstly , these sets of functions gi ve a sparse matrix in minimization problem (8). As a consequence, the learn- ing phase is both numerically faster and more stable than when using GBFs (4). Secondly , compactly supported basis functions allow to ‘update’ a DMP when only a portion of the trajectory has to be modified, instead of learning a ne w one from scratch. Finally , the usage of compactly supported basis func- tions ensures a faster con ver gence of the DMP system to the goal position since the resulting forcing term is null after a finite amount of time, f ( s ( t )) = 0 , ∀ t > T [8]. This is not true for (both classical and truncated) Gaussian basis functions (4), (12). Indeed, the support of the classical GBFs is the whole real line R ; while the support for TGBFs is an interval ( −∞ , L ], L ∈ R in s , which is an interval [ L 0 , + ∞ ), L 0 ∈ R in t . Among the families of compactly supported basis functions we presented in this work, our proposed mollifier-lik e basis functions (15) are both the most reg- ular and the best approximants. For these reasons, we argue that mollifier-like basis functions may become the new standard when using DMPs. Remark 2 . The numerical advantages (stability and time e ffi ciency) sho wed by compactly supported basis func- tions influence only the learning phase. Indeed, since the approximation errors are similar and, ov erall, small among the di ff erent classes of basis functions, the re- sulting DMP execution will have no meaningful di ff er- ence. As a final observation, we remark that other ap- proaches based upon basis functions approximation (such as ProMPs [22]) could take advantage of the com- putational stability and e ffi ciency of compactly sup- ported basis functions. 4. Inv ariance Under Inv ertible Linear T ransf orma- tions In variance under translations of DMPs formulations (1) and (11) is straightforward. Indeed, by changing the initial position from x 0 to x 0 0 , and by considering, in- stead of g , the new goal position g 0 = g + ( x 0 0 − x 0 ), the whole trajectory is translated by a quantity x 0 0 − x 0 . DMPs are able to adapt to small changes of the rel- ativ e position between goal and start, g − x 0 ; howe ver , the robustness of DMPs w .r .t. sensible changes of v ec- tor g − x 0 heavily depends on the choice of the hyper - parameters K , D in (11) and α in (2) (see, for instance, the tests performed in Figures 10, 11). Generalization to arbitrary changes of quantity g − x 0 can be achiev ed by exploiting the inv ariance property of equations (11) 9 w .r .t. (inv ertible) a ffi ne transformations, see [15]. At the best of the authors’ knowledge, this well-known prop- erty has nev er been exploited in order to make DMPs globally robust w .r .t. arbitrary changes of g − x 0 . The inv ariance of (11) can be easily prov en (see [15]). Consider the in vertible transformation matrix S ∈ R d × d . By substituting x 0 = Sx , v 0 = Sv , x 0 0 = Sx 0 , g 0 = Sg , K 0 = SKS − 1 , D 0 = SDS − 1 , (22) in (11) we obtain the transformation la w of the forcing term f 0 = Sf . (23) Thus, if we want to generate a ne w trajectory Sx , it is su ffi cient to apply the transformations in (22) and (23) to (11). The resulting DMP system, thus, reads τ ˙ v = K 0 ( g − x ) − D 0 v − K 0 ( g − x 0 ) s + K 0 f ( s ) (24a) τ ˙ x = v (24b) W e remark that if the elastic and damping terms are the same for each degree-of-freedom, then K and D are multiple of the identity matrix and thus K = K 0 and D = D 0 . 4.1. Invariance Under Roto-Dilatation A case of particular interest is when S in (22), (23) represents a r oto-dilatation . Consider a trajectory which is learned together with the relativ e position between the goal and the starting point, i.e. the vector g − x 0 ; and a new trajectory , with start- ing and ending points x 0 0 and g 0 respectiv ely has to be reproduced. W e first compute the rotation matrix R [ g 0 − x 0 0 [ g − x 0 , which maps the unit vector \ g − x 0 to \ g 0 − x 0 0 , where operator b · de- notes the normalization: b v def = v / k v k . Rotation matrix R [ g 0 − x 0 0 [ g − x 0 can be computed using the algorithm presented in [31]. After performing the rotation, we perform a dilatation of k g 0 − x 0 0 k / k g − x 0 k obtaining the transformation matrix S = g 0 − x 0 0 k g − x 0 k R [ g 0 − x 0 0 [ g − x 0 . (25) Therefore, when a learned DMP has to be used to gen- erate a ne w trajectory , characterized by the parameters x 0 0 and g 0 , we compute the matrix S as in (25), and then the quantities K 0 , D 0 , and f 0 as in (22) and (23). This approach can be used even if the goal position changes with time during the execution of the trajectory simply by updating the matrix S at each time. This, in particular , means that S depends on time. In Section 4.2 we will test this approach both for static and moving goal positions. W e remark that this method cannot be performed when starting and ending points coincide: x 0 = g , since the matrix S in (25) would result in a null matrix, S = 0 . Howe ver , in such cases, one should use rhythmic (or periodic ) DMPs instead of discrete ones, which are be- yond the scope of this paper . Remark 3 . The choice to use a roto-dilatation as the transformation matrix S is not a unique possibility . Indeed, any inv ertible matrix can be used. F or ex- ample, if we write S as the diagonal matrix S = diag( s 1 , s 2 , . . . , s d ) in which each component of the di- agonal is defined as (assuming that the learned goal and starting positions di ff er in each component) s i = g 0 i − x 0 0 i g i − x 0 i , i = 1 , 2 , . . . , d , we would obtain a formulation similar to (10), in which each component is scaled by g − x 0 . Remark 4 . Choosing roto-dilatation has the advantage that the ev aluation of the in verse transformation does not require numerically in verting the matrix. Indeed, since the matrix S of the transformation can be written as a non-zero scalar value multiplied by an orthogonal matrix S = a R , a ∈ R \ { 0 } , R ∈ R d × d , R − 1 = R T , where R is the rotation matrix, the in verse is simply S − 1 = 1 a R − 1 = 1 a R T . Thanks to this property , the computation of the inv erse matrix S − 1 in (22) can be performed e ffi ciently (since transposing a matrix is faster than in verting one). 4.2. Results In this Section, we present se veral synthetic tests and robotic experiments to sho w how DMP formulation (24) results in better behaviors when compared to formula- tion (11). In the following, we refer to classical DMPs when talking about the implementation without exploiting the in variance property . Similarly , we refer to extended DMPs when talking about the formulation (24) we in- troduced in Section 4.1, in which the inv ariance prop- erty is exploited by using, as linear in vertible transfor- mation, the roto-dilatation defined in (25). 10 − 2 − 1 0 1 2 3 x 1 0 1 2 3 x 2 (a) Rotation 0 2 4 6 x 1 − 1 0 1 2 3 x 2 (b) Dilatation 0 1 2 3 x 1 − 0 . 5 0 . 0 0 . 5 1 . 0 1 . 5 x 2 (c) Shrinking 0 1 2 3 x 1 0 . 0 0 . 5 1 . 0 1 . 5 2 . 0 2 . 5 3 . 0 x 2 (d) Moving goal Figure 10: Di ff erent behaviors obtained when changing the goal position. In Figures 10a, 10b, and 10c the goal is di ff erent from the learned one and still. In Figure 10d the goal starts in the learned position and moves continuously . The desired curve is given by (26). In all four plots, the desired curve is plotted using the blue dashed line. The execution of extended DMP is shown by the red full line for α = 4, and by the orange dotted line for α = 2 (in all these experiments, they overlap). The execution of classical DMPs are marked with the dash-dotted green line when α = 4, and with the purple one dash three dots line when α is set to 2. The dot and star mark, respectively , the desired starting and goal positions x 0 and g . The stars trial in Figure 10d shows the movement of the goal. 4.2.1. Synthetic T ests. W e in vestigate the rob ustness gain ensured by the in- variance property under rotation and dilatation of the reference system by considering two examples in which a trajectory is learned and then executed changing the relativ e position between goal and starting point g − x 0 . In these tests, we do not change x 0 since DMPs are na- tiv ely translational in variant. W e compare the di ff erent behaviors obtained with and without the scaling term S defined in (25). 1 For all the tests presented in this Sec- tion, we use mollifier-lik e basis functions (15). Ho w- ev er , we remark that tests done with other classes of ba- sis functions giv e similar results. In the first simulation, we generate the desired curve 1 W e will not compare the results with the original DMPs (10) since the drawbacks of such formulation ha ve already been discussed in the literature [14, 15, 27]. in the plane: x ( t ) = t , sin 2 ( t ) , t ∈ [0 , π ] . (26) Then we perform the learning step to compute the weights ω i , and we test the generalization properties of DMPs by changing in di ff erent manners the goal posi- tion. In particular , in Figures 10a, 10b, and 10c the goal is set to a di ff erent configuration and is still. Instead, in Figure 10d, the goal starts in the learned position and mov es to wards a final target configuration while the DMP is being executed. All tests are executed using both classical and extended DMPs. W e performed these tests with elastic term K = 150, and damping term D = 2 √ 150 ≈ 24 . 49 for both com- ponents x 1 and x 2 , that is K = K I 2 and D = D I 2 , being I 2 the 2 × 2 identity matrix. W e test the generalization by using two di ff erent values of α in the canonical sys- tem (2), α = 4 and α = 2. Both these values for α 11 − 60 − 40 − 20 0 20 40 x 1 − 20 0 20 40 x 2 (a) Rotation − 20 0 20 40 60 80 x 1 − 40 − 20 0 20 40 x 2 (b) Dilatation − 20 0 20 40 x 1 − 20 − 10 0 10 20 30 x 2 (c) Shrinking − 20 0 20 40 x 1 − 10 0 10 20 30 40 x 2 (d) Moving goal Figure 11: Di ff erent behaviors obtained when changing the goal position. In Figures 11a, 11b, and 11c the goal is di ff erent from the learned one and still. In Figure 11d the goal starts in the learned position and moves continuously . The desired curve is given by (27). In all four plots, the desired curve is plotted using the blue dashed line. The execution of extended DMP is shown by the red full line for K = 150, and by the orange dotted line for K = 15 (in Figures 11a–11c, they overlap). The execution of classical DMPs are marked with the dash-dotted green line when K = 150, and with the purple one dash three dots line when K is set to 15. The dot and star mark, respectively , the desired starting and goal positions x 0 and g . The stars trial in Figure 11d shows the movement of the goal. result in a canonical system that vanishes at the final time within a tolerance smaller than 1%. Indeed, since the final time is t 1 = π in (26), for α = 2 we ha ve exp( − α T ) = exp( − 2 π ) ≈ 1 . 9 e − 03; while for α = 4 we have exp( − α T ) = exp( − 4 π ) ≈ 3 . 5 e − 06 . Figure 10 shows the results of our tests. In the second simulation, the desired curve is: x ( t ) = t 2 cos( t ) , t sin( t ) , t ∈ [0 , 2 π ] . (27) Also in this case we test the generalization properties of both classical DMPs and extended DMPs when the relativ e position g − x 0 is changed. In Figures 11a, 11b, and 11c the goal is set to a di ff erent configuration before we start executing the DMP . Instead, in Figure 11d, the goal starts in the learned position and mov es towards a final target configuration while the DMP is being exe- cuted. The main di ff erence is that in this second test we set α = 4, and we use two di ff erent v alues for K (and, thus, for D ). Indeed, we set K = K I and set K to assume value 150 and 15. In both cases, the damping term is set to D = √ K I 2 . Both these tests show how extended DMPs are more robust than the classical DMP formulation since the tra- jectories generated by taking adv antage of the in vari- ance property have a shape that closely resembles the learned trajectory , while classical DMPs may generate trajectories that do not resemble the learned behavior . Moreov er , we notice that the goodness of the general- ization of classical DMPs heavily depends on the choice of the parameters. For instance, in the cases of dilatation and moving goal for (26), they generalize well when α = 4, but fails when α = 2 (see Figure 10b and 10d). Similarly , we observe that the generalization of classical DMPs heavily depends also on the choice of parameter K (and, consequently , D ). For instance, Fig- ure 11d shows that the trajectories are di ff erent, ev en if 12 (a) Panda setup. (b) daV inci setup. Figure 12: Setups of the Peg & Ring task. the change in goal position is the same. On the other hand, we see that the generalization of the trajectory is robust against the particular choice of hyperparameters when using extended DMPs. Indeed, in almost all our tests, the generalizations obtained by extended DMPs completely ov erlap when changing the goal position. The only case in which there is an actual di ff erence, ev en if hardly noticeable, is for the test in Figure 11d, i.e. when the goal is moving and we compare two DMPs with di ff erent v alues of K (and, thus, D ). This is due to two factors: the scaling matrix S depends explicitly on time (since g is moving), and di ff erent v alues for K in- fluence the un-perturbed e volution of dynamical system (11). On the other hand, we observe that, in the case presented in 10d, there is no di ff erence between the tw o trajectories, e ven if S depends on time because the un- perturbed ev olution of the system is not influenced by changes in α . 4.2.2. T ests on Real Robots. On a real setup, we test our ne w formulation by per- forming a task on a 7 Degrees-of-Freedom (DoF) in- dustrial manipulator , a Panda robot from Franka Emika shown in Figure 12a. W e will perform two tests. In the first test, the learned behavior will be used to ex ecute the task on the same robot, and we will sho w ho w extended DMPs (24) result in a better adaptation than classical DMPs (11). The second test, instead, will sho w how ex- tended DMPs (24) can be used to transfer the learned behavior to a di ff erent setup, in our case, the daV inci surgical robot from Intuiti ve. The P e g & Ring task consists of grabbing, one at a time, four colored rings and moving them to the same-colored peg. The task consists of two gestures, namely move , in which the end-e ff ector has to reach the ring, and carry , in which the ring is moved to ward the peg. Automa- tion of this task on surgical setups is a crucial aspect in Autonomous Robotic Surgery since it presents sev eral x 1 − 0 . 75 − 0 . 50 − 0 . 25 0 . 00 0 . 25 0 . 50 0 . 75 x 2 − 0 . 1 0 . 0 0 . 1 0 . 2 x 3 0 . 075 0 . 100 0 . 125 0 . 150 0 . 175 0 . 200 0 . 225 Figure 13: Generalization of extended DMPs (24). The blue dashed line shows the desired behavior , learned from the carry movement for the red ring. The solid red line sho ws the e xecuted extended DMP (24) for the starting and ending points for the carry movement for the blue ring. The green dash-dotted line shows the behavior obtained using classical DMPs (11) adapted to the critical points of the blue ring. challenges of real sur gery (e.g. grasping) [9, 10]. Dur- ing the learning phase, we learn a 3 − dimensional DMP for both gestures, on the Panda, via kinesthetic teach- ing. F or both tests, DMPs parameters are K = K I d 3 and D = D I d 3 , with K = 150 and D = 2 √ K ≈ 24 . 49, and α = 4. As it can be seen from Figure 12a, the four carry movements are qualitativ ely di ff erent for each color: the red and blue rings must be mov ed ‘in diago- nal’ along the base to be put in the corresponding pegs, while the green and yellow rings must be moved ‘hori- zontally’. T o prov e the adaptability of extended DMPs, we learn the desired behavior from the execution of the carry gesture for the red ring and use it to ex ecute the ges- ture for all the rings in the scene. Figure 13 shows the result of this test. In particular , one can observe that the shape of the executed trajectory (for the blue ring) maintains a trajectory of similar shape to the learned be- havior (from the execution for the red ring). F or com- pleteness, we plot the behavior obtained with classical DMPs (11). From this, it is clear that the behavior of ex- tended DMPs success in maintaining the same shape as the learned beha vior , while classical DMPs fail in doing so. As the second test, we sho w how extended DMPs al- low to ‘transfer’ the desired behavior between di ff er- ent robotic setups. T o do so, we use the DMPs ob- tained from the ex ecution performed on the Panda in- dustrial manipulator to execute the Peg & Ring task on 13 Figure 14: Generalization of DMPs between the learned trajectory on the P anda and the executed trajectory on the daV inci surgical robot. The blue dashed line shows the desired (and learned) trajectory , obtained on the Panda industrial manipulator via kinesthetic teaching. The red solid line shows the e xecuted extended DMPs (24) on the daV inci surgical robot. The green dash-dotted line shows the adaptation of classical DMPs (11) to the same starting and goal positions as the red line. the daV inci surgical robot (which setup can be seen in Figure 12b). Figure 14 shows the resulting trajectories for this test for an instance of the move gesture. Ad- ditionally , we plot the trajectory obtained by adapting classical DMPs (11) to the ne w starting and goal posi- tions. This experiment shows that extended DMPs (24) are able to generalize to a very di ff erent length-scale, while maintaining a shape similar to the learned behav- ior , e ff ectiv ely permitting to transfer mov ement from the industrial manipulator to the surgical robot. Moreover , it is possible to observe that the trajectory obtained us- ing classical DMPs fails in adapting to the new length- scale, generating a behavior that cannot be executed with the surgical robot. 5. Learning from Multiple T rajectories DMPs have been used to learn trajectories from a sin- gle demonstration, because a set of demonstrated trajec- tories, in general, does not hav e the same starting and ending points, making the learning phase highly non- trivial. So-called Stylistic DMPs [5, 32] were dev eloped to learn from multiple demonstrations by introducing an additional v ariable, called style parameter , and by mak- ing the execution dependent on this new variable. This approach is useful when the “style” is needed to de- scribe a trajectory (for instance, the trajectory may de- pend on the height of an obstacle). Howe ver , when the style is not an issue, this approach introduces additional and undesired variables that increase the complexity of the problem. 5.1. Linear Regr ession Over Aligned DMPs W e propose a technique that allows to extract a unique set of weights w i ∈ R d , i = 0 , 1 , . . . , N , from a set of multiple observ ations as a single linear regres- sion problem. W e consider a set of M demonstrated trajectories n x ( j ) ( t ) , t ∈ h t ( j ) 0 , t ( j ) 1 i o M j = 1 . Using the technique introduced in Section 4.1 we transform each trajectory in such a way that all the starting and ending points are the same. W e choose x 0 = 0 and g = 1 , but we emphasize that any choice satisfying x 0 , g would gi ve the same 14 results. W e compute the roto-dilatation matrices, for j = 1 , 2 , . . . , M , S ( j ) = k 1 − 0 k x ( j ) t ( j ) 1 − x ( j ) t ( j ) 0 R [ 1 − 0 \ x ( j ) t ( j ) 1 − x ( j ) t ( j ) 0 , (28) and use these matrices to create the ne w set of trans- formed trajectories n ˇ x ( j ) ( t ) = S ( j ) x ( j ) ( t ) , t ∈ h t ( j ) 0 , t ( j ) 1 i o j = 1 , 2 ,..., M . Next, we perform a time scaling , so that each curve has [0 , T ] as time domain, for a given T > 0. T o do so, we define ˜ x ( j ) ( t ) = ˇ x ( j ) t ( j ) 1 − t ( j ) 0 T t + t ( j ) 0 . (29) It’ s easy to see that ˜ x ( j ) (0) = ˇ x ( j ) t ( j ) 0 , ˜ x ( j ) ( T ) = ˇ x ( j ) t ( j ) 1 . From these two step, we obtain a new set of ‘trans- formed’ curves { ˜ x ( j ) ( t ) , t ∈ [0 , T ] } M j = 1 , each with time domain [0 , T ], and 0 and 1 as starting and ending points respectiv ely . Equation (11a) allows to compute the set of forcing terms { f ( j ) ( s ) } j = 1 , 2 ,..., M , and then we are able to com- pute the set of weights { w i } i = 0 , 1 ,..., N that minimizes the sum of the squared errors (w .r .t. the L 2 -norm) between the function generated using (3) and the forcing terms f ( j ) ( s ), j = 1 , 2 , . . . , M . For this purpose, we decompose the problem in each independent component. For each component p = 1 , 2 , . . . , d , we look for the weight vector ω = [ ω 0 , ω 1 , . . . , ω N ] T ∈ R N + 1 satisfying ω = arg min ω ∈ R N + 1 M X j = 1 P N i = 0 ω i ϕ i ( s ) P N i = 0 ϕ i ( s ) s − f ( j ) p ( s ) 2 2 | {z } F ( ω ) , where f ( j ) p ( s ) denotes the p -th component of f ( j ) ( s ) and is computed as f ( j ) p s ( t ) = ˙ v ( j ) p + D p v p K p − g ( j ) p − x p ( t ) + ( g p − x 0 , p ) s ( t ) , (30) with s ( t ) = exp( − α t ). The existence and uniqueness of a minimum for F is guaranteed by its continuity and strict con ve xity . More- ov er , since F is smooth, ω is the vector that nullifies its gradient: ∇ ω F ( · ) | ω = 0 . Let us denote with G the gradient of F : G ( · ) . = ∇ ω F ( · ) : R N + 1 → R N + 1 . Its h -th component, denoted by G h is (recalling that k ζ ( s ) k 2 2 = R s 1 s 0 ( ζ ( s )) 2 d s ) G h ( ω ) = ∂ F ∂ω h ( ω ) = M X j = 1 Z s 1 s 0 2 P N i = 0 ω i ϕ i ( s ) P N i = 0 ϕ i ( s ) s − f ( j ) p ( s ) ϕ h ( s ) P N i = 0 ϕ i ( s ) s d s . Function G h is linear in ω , thus the minimization prob- lem can be written as a linear system: A ω = b . (31) The component in row h and column k of A , a hk , is, for h , k ∈ { 0 , 1 , . . . , N } , a hk = ∂ G h ∂ω k ( ω ) = M X j = 1 Z s 1 s 0 2 ϕ h ( s ) ϕ k ( s ) P N i = 0 ϕ i ( s ) 2 s 2 d s = 2 M Z s 1 s 0 ϕ h ( s ) ϕ k ( s ) P N i = 0 ϕ i ( s ) 2 s 2 d s , (32) while the h -th component of the v ector b , b h , is, for h = 0 , 1 , . . . , N , b h = M X j = 1 Z s 1 s 0 2 ϕ h ( s ) P N i = 0 ϕ i ( s ) f ( j ) p ( s ) s d s . (33) Using any quadrature formula (e.g. Simpson’ s rule), the integrals in equations (32) and (33) can be computed for each h , k = 0 , 1 , . . . , N giving matrix A and vector b of (31). Thus, we can solve the linear problem and obtain ω . W e recall that this procedure has to be repeated for each component p = 1 , 2 , . . . , d . The algorithm to perform regression is summarized in Algorithm 1. Remark 5 . Although we presented the algorithm using mollifier-lik e basis functions { ϕ i } i = 0 , 1 ,..., N , this algorithm works for any choice of the set of basis functions. Remark 6 . This approach does not encode information about ‘styles’, di ff erently from [32]. Thus, the gener- alization of the DMP depends only on the starting and goal positions x 0 and g . 15 Algorithm 1 Regression Over Multiple Demonstrations Input: Set of desired trajectories { x ( j ) ( t ) } j = 1 , 2 ,..., M , set of basis functions { ϕ i ( s ) } i = 0 , 1 ,..., N , DMPs hyperparame- ter K , D and α , final time T > 0; Output: Set of weights { ω ( p ) } p for each direction p = 1 , 2 , . . . , d . Align the trajectories (both temporally and spa- tially) 1: for j = 1 , 2 . . . , M do 2: Compute S ( j ) using (28) 3: Compute ˇ x ( j ) ( t ) = S ( j ) x ( t ) 4: Compute ˜ x ( j ) ( t ) using (29) 5: end for 6: Evaluate the canonical system extrema s 0 = 1 , s 1 = e − α T Compute the matrix A = [ a hk ] h , k = 0 , 1 ,..., N 7: for h = 0 , 1 , . . . , N do 8: a hh = 2 M R s 1 s 0 ϕ h ( s ) 2 ( P N i = 0 ϕ i ( s ) ) 2 s 2 d s 9: f or k = h + 1 , h + 2 , . . . , N do 10: a hk = 2 M R s 1 s 0 ϕ h ( s ) ϕ k ( s ) ( P N i = 0 ϕ i ( s ) ) 2 s 2 d s 11: a kh = a hk 12: end for 13: end for For loop along the directions 14: for p = 1 , 2 , . . . , d do 15: F or each trajectory ˜ x ( j ) ( t ) compute the forcing term f ( j ) ( s ) for direction p using (30). Compute the vector b = [ b h ] h = 0 , 1 ,..., N 16: f or h = 0 , 1 , . . . , N do 17: b h = P M j = 1 R s 1 s 0 2 ϕ h ( s ) P N i = 0 ϕ i ( s ) f ( j ) ( s ) s d s 18: end for 19: Solv e the minimization problem: A ω ( p ) = b 20: end for Remark 7 . The presented method performs classical lin- ear regression ov er a set of observed trajectories, and it giv es a non-probabilistic result, di ff erently from other approaches, such as Probabilistic Mov ement Primitiv es [22] and Kernelized Movement Primiti ves [23], which are purely probabilistic approaches. 5.2. Results In this Section, we test the ability to learn from mul- tiple demonstrations by performing both synthetic tests and experiments with real robots. As first synthetic test, we create a set of trajectories x ( j ) ( t ) = x ( j ) 1 ( t ) , x ( j ) 2 ( t ) j by integrating a known dy- namical system. The second synthetic test is performed using a well-know dataset containing trajectories ex- tracted from surgical tasks. − 1 . 0 − 0 . 5 0 . 0 0 . 5 1 . 0 x 1 − 1 . 0 − 0 . 5 0 . 0 0 . 5 1 . 0 x 2 (a) Synthetic test before rescaling. 0 . 0 0 . 5 1 . 0 1 . 5 2 . 0 x 1 0 . 0 0 . 5 1 . 0 1 . 5 x 2 x 0 g (b) Synthetic test after rescaling. Figure 15: T ests for our proposed approach to perform DMPs learning from multiple trajectories. The trajectories are obtained by integrat- ing (34) with di ff erent initial conditions. In both plots the solid lines represent the demonstrated trajectories, while the dashed one is an execution of the learned DMP . The tests are plotted both before (Fig- ure 15a) and after (Figure 15b) the spatial scaling step. In Figure 15b, the dot represents the initial position x 0 = (0 , 0), while the star marks the goal g = (1 , 1). On the real setup, we use real ex ecutions to automate the P e g & Ring task on poth the Panda industrial ma- nipulator and the daV inci surgical robot. In the e xperi- ment on the daV inci, we make the task more challenging by integrating extended DMPs (24) with obstacle avoid- ance methods. 5.2.1. Synthetic T est. For the test with synthetic data, we generate the tra- jectories computing the solution of the dynamical sys- tem ˙ x 1 = x 3 1 + x 2 2 x 1 − x 1 − x 2 ˙ x 2 = x 3 2 + x 2 1 x 2 + x 1 − x 2 , (34) which is known to have a alpha-limit on the circum- ference of radius 1 and an attracti ve equilibrium at the origin. The set of (fifty) trajectories is generated by 16 0 . 5 1 . 0 1 . 5 2 . 0 x 1 0 . 0 0 . 2 0 . 4 0 . 6 0 . 8 1 . 0 1 . 2 x 2 x 0 g Figure 16: Comparison of two DMPs obtained by a noisy data-set. The solid red line shows the trajectory obtained using the proposed method for regression over multiple observations. The dashed green line shows the execution of a DMP learned from one sample of the dataset. choosing a random angle θ 0 ∈ [0 , 2 π ) and a random ra- dius ρ 0 ∈ (0 . 8 , 1), and then setting as initial position x 1 (0) = ρ 0 cos( θ 0 ), x 2 (0) = ρ 0 sin( θ 0 ). Then the dy- namical system is integrated using the classic fourth- or der Runge-K utta method up to a random final time T ∈ (5 , 10). Choosing a final time greater than 5 al- lows the system to reach the origin with an error smaller than 2%: k x − 0 k < 0 . 02. Moreover , having the fi- nal time change within executions mimics the time in- consistencies that may arise when learning trajectories from real executions. After generating the set of obser- vations, we use Algorithm 1 to generate a DMP . The DMP’ s hyperparameters are K = K I 2 , D = D I 2 , with K = 150 D = 2 √ K ≈ 24 . 49, and α = 4. Finally , we randomly select an initial point x 0 with k x 0 k ∈ (0 . 8 , 1) and ex ecute the obtained DMP by setting as goal the attractiv e equilibrium of the system: g = (0 , 0). Figure 15 shows the results of these tests. In particular Figure 15a shows the set of trajectories obtained by in- tegrating the ODE (34), together with an execution of the obtained DMP . Figure 15b shows the same trajecto- ries when spatially rescaled to hav e 0 and 1 as starting and ending points respectiv ely . T o highlight the usefulness of learning from a set of observations, we added a Gaussian noise ∼ N (0 , σ 2 ) of null mean and v ariance σ 2 = 5 · 10 − 5 to the set of tra- jectories obtained by solving the dynamical system (34). W e then use Algorithm 1 to extract a DMP (the hyper - parameters are the same as the previous test). Moreover , we learned a second DMP , with the same hyperparame- ters, using as desired trajectory a single trajectory of the dataset. In Figure 16 it can be seen that learning from a single Figure 17: Comparison between two DMPs obtained by the JIGSA W dataset. The solid red line (on the left) sho ws the ex ecution of the DMP obtained via regression. The dataset is shown on the right in blue. The green line marks a DMP obtained by a single element of the dataset. sample of the dataset results in a DMP with undesired oscillation. On the other hand, performing regression heavily reduces these oscillations. T o emphasize this aspect, we perform a similar com- parison on the JIGSA W [30] dataset. In particular, we extract all the occurrences of the gesture ‘G1’ ( r eaching needle with the right hand ) and use them to extract a unique DMP via Algorithm 1. DMPs’ parameters are K = 150 , D = 2 √ K ≈ 24 . 49, and α = 4. Since this gesture in v olves only the right arm, we e xtract only the Cartesian components for it and we ignore the left end-e ff ector . As it can be seen in Figure 17, in par - ticular from the second component x 2 , oscillations are reduced when a unique behavior is extracted from mul- tiple demonstrations. 5.2.2. T ests on Real Robots. In this Section, we present two experiments on real robotic setups to sho ws the ability of our proposed method for DMPs regression to extract a unique behav- ior from multiple observations. T est on the P anda Industrial Manipulator . For the ex- periments on a real setup, we executed the Pe g & Ring task with a Panda industrial manipulator . The main phases of the task are presented in Figure 18. The task can be decomposed into two gestures, namely move and carry . The first gesture is executed to reach the grasp- ing point of the ring with the robot end-e ff ector . The second gesture, instead, is used to carry the ring toward the same colored peg. The task uses four colored rings (and, thus, four same-colored pegs) and we execute the 17 (a) Initial setup. (b) Grasped ring. (c) Carrying the ring. (d) Released ring. Figure 18: The Peg&Ring task with the Panda robot. task a total of fifty times on the Panda robot, chang- ing the grasping and releasing positions (i.e., the starting and final positions of each gesture). Since we executed the task fifty times, we obtained a total of 200 samples for both the move and carry gestures. Figure 19a and 19b show the trajectories of, respec- tiv ely , move and carry obtained with the industrial ma- nipulator together with an ex ecution of the obtained DMP with parameters K = 150 , D = 2 √ K ≈ 24 . 49, and α = 4. These are plotted after the rescaling so that x 0 = 0 , and g = 1 for both gestures. W e then used these DMPs to automate the Pe g & Ring task. W e implemented a Finite State Machine which takes as input the order of colors and then automatically ex ecutes the task by alternating the move and carry gestures. The learned DMPs are used to model the Cartesian ev olution of the robot’ s end-e ff ector of the robot. Figure 18 shows the step of the task’ s e xecution for the green ring. Figure 20 shows the movement of the end-e ff ector . T est on the daV inci Sur gical Robot. As a second e xper- iment on real robots, we perform the Peg & Ring task also on the daV inci surgical manipulator (which setup is shown in Figure 21). In this case, the task is more complicated since it is executed with two end-e ff ectors, and an additional movement, called pass has to be learned. This gesture is used to transfer the ring from one end-e ff ector to the other . The workspace is split into two parts (see Figure 21), left and right and each x 1 [ m ] − 1 0 1 2 x 2 [ m ] − 2 − 1 0 1 x 3 [ m ] 0 . 0 0 . 5 1 . 0 1 . 5 2 . 0 (a) move x 1 [ m ] 0 . 0 0 . 5 1 . 0 1 . 5 x 2 [ m ] − 0 . 5 0 . 0 0 . 5 1 . 0 1 . 5 x 3 [ m ] 0 . 0 0 . 5 1 . 0 1 . 5 2 . 0 (b) carry Figure 19: Experiments on the real robot. For both gestures ( move and carry ), the trajectories of the dataset are plotted using solid lines, while the execution of the obtained DMP is plotted using a dashed line. All the trajectories are plotted after the rescaling, so that x 0 = 0 , and g = 1 for both gestures. end-e ff ector must mov e only in one of the portions. For this reason, if the ring initial position and the tar get-peg placement are in the same half, the end-e ff ector pick- ing the ring will be the same placing it, and the pass gesture will be unnecessary . On the other hand, when the ring is in one half of the workspace and the same- colored peg is in the other , the ring will be transferred between end-e ff ectors. In our test, we set the initial placement of the rings in such a way that the arm picking up the ring always dif- fers from the hand placing it. Thus, the ring has always to be passed from one end-e ff ector to the other . On this setup, the a vailable w orkspace is proportionally smaller w .r .t. the robot dimension. Moreover , the pe gs and rings are proportionally bigger . For this reason, we integrate 18 Figure 20: Plot of the move (in red) and carry (in green) gesture in an automatic execution of the Pe g & Ring task on the Panda robot. Figure 21: daV inci setup for the bimanual Peg & Ring task. The red line splits the workspace in left and right portion. the extended DMP system (24) with the obstacle a void- ance method presented in [16] in order to av oid colli- sions with the pegs. Thus, (24a) reads τ ˙ v = K 0 ( g − x ) − D 0 v − K 0 ( g − x 0 ) s + K 0 f ( s ) + p ( x ) , where, intuitiv ely , p ( x ) is a ‘repulsi ve term’ that pushes the trajectory away from the obstacle. Since the move and carry gestures require the move- ment of a single end-e ff ector at a time, we model both with a 3 − dimensional DMP . On the other hand, the pass gesture requires that the two end-e ff ectors move together to wards the center of the w orkspace. Thus, this third gesture is modeled using a single 6 − dimensional DMP . In this way , the two end-e ff ectors share the same canonical system so that their movement is synchro- nized. Figure 22 sho ws the dataset and obtained DMP x 1 − 0 . 10 − 0 . 05 0 . 00 0 . 05 0 . 10 x 2 − 0 . 03 − 0 . 02 − 0 . 01 0 . 00 0 . 01 0 . 02 0 . 03 x 3 − 0 . 14 − 0 . 13 − 0 . 12 − 0 . 11 − 0 . 10 − 0 . 09 (a) Original dataset. x 1 − 0 . 5 0 . 0 0 . 5 1 . 0 1 . 5 x 2 − 1 . 0 − 0 . 5 0 . 0 0 . 5 1 . 0 1 . 5 x 3 − 0 . 5 0 . 0 0 . 5 1 . 0 1 . 5 2 . 0 (b) Roto-dilatated dataset (in blu) and obtained DMP (in dashed red). Figure 22: T rajectory samples for the move gesture of the Peg & Ring task on the daV inci surgical robot. for the move gesture. The obtained DMPs allowed to successfully automate the task. 6. Conclusions In this work, we hav e highlighted some of the weak aspects of the DMP frame work, proposing three major modifications to ov ercome them. At first, we proposed a new formulation for the set of basis functions. W e prov ed the proposed mollifier-like basis functions to be good approximators for the forcing term, while providing a faster and more stable learning phase. Moreov er, being compactly supported, this ne w set of basis functions allows to update a DMP when only a portion of the trajectory has to be modified. Secondly , we proposed a strategy to exploit the in variance under a ffi ne transformations of the DMP formulation (11). In particular , we showed ho w to generalize the learned tra- jectory to any length scale and any rotation of the ref- erence frame maintaining the qualitativ e shape of the 19 learned trajectory , specifying that the same approach can be extended to any inv ertible transformation. Fi- nally , we solved one of the major issues in DMPs, which is the inability of the original frame work to learn a com- mon behavior from multiple observ ations. As future work, we aim to extend these improvements to the unit quaternion formulation of DMPs [20], in or- der to make more rob ust also the orientation formulation of DMPs. Moreov er , we aim to use the proposed formulation to improv e the ‘Surgical T ask Automation Frameworks’ presented in [9] and [10], in which DMPs are used to generate surgical gestures. Funding This project has recei ved funding from the Euro- pean Research Council (ERC) under the European Union’ s Horizon 2020 research and innovation pro- gramme, ARS (Autonomous Robotic Surgery) project, grant agreement No. 742671. References [1] A. J. Ijspeert, J. Nakanishi, S. Schaal, Movement imitation with nonlinear dynamical systems in humanoid robots, in: Robotics and Automation, 2002. Proceedings. ICRA ’02. IEEE Interna- tional Conference on, V ol. 2, IEEE, 2002, pp. 1398–1403. [2] A. J. Ijspeert, J. Nakanishi, S. Schaal, Learning attractor land- scapes for learning motor primitives, in: Advances in neural in- formation processing systems, 2003, pp. 1547–1554. [3] S. Schaal, Dynamic movement primitives-a framework for mo- tor control in humans and humanoid robotics, in: Adaptiv e mo- tion of animals and machines, Springer , 2006, pp. 261–280. [4] A. J. Ijspeert, J. Nakanishi, H. Ho ff mann, P . Pastor, S. Schaal, Dynamical movement primitives: learning attractor models for motor behaviors, Neural computation 25 (2) (2013) 328–373. [5] T . Matsubara, S.-H. Hyon, J. Morimoto, Learning stylistic dy- namic movement primitiv es from multiple demonstrations, in: Intelligent Robots and Systems (IROS), 2010 IEEE / RSJ Inter- national Conference on, 2010, pp. 1277–1283. [6] A. Ude, A. Gams, T . Asfour, J. Morimoto, T ask-specific gener- alization of discrete and periodic dynamic movement primitives, IEEE T ransactions on Robotics 26 (5) (2010) 800–815. [7] T . Kulvicius, K. Ning, M. T amosiunaite, F . W orgötter , Join- ing movement sequences: Modified dynamic movement primi- tiv es for robotics applications ex emplified on handwriting, IEEE T ransactions on Robotics 28 (1) (2012) 145–157. [8] R. W ang, Y . W u, W . L. Chan, K. P . T ee, Dynamic movement primitiv es plus: For enhanced reproduction quality and e ffi cient trajectory modification using truncated kernels and local biases, in: Intelligent Robots and Systems (IROS), 2016 IEEE / RSJ In- ternational Conference on, IEEE, 2016, pp. 3765–3771. [9] M. Ginesi, D. Meli, H. C. Nakawala, A. Roberti, P . Fiorini, A knowledge-based framework for task automation in surgery , in: 2019 19th International Conference on Advanced Robotics (ICAR), 2019, pp. 37–42. [10] M. Ginesi, D. Meli, A. Roberti, N. Sansonetto, P . Fiorini, Autonomous task planning and situation awareness in robotic surgery*, in: 2020 IEEE / RSJ International Conference on Intel- ligent Robots and Systems (IR OS), 2020, pp. 3144–3150. [11] P . Pastor , L. Righetti, M. Kalakrishnan, S. Schaal, Online mov e- ment adaptation based on pre vious sensor experiences, in: 2011 IEEE / RSJ International Conference on Intelligent Robots and Systems, 2011, pp. 365–371. [12] P . Pastor , M. Kalakrishnan, L. Righetti, S. Schaal, T o wards as- sociativ e skill memories, in: Humanoid Robots (Humanoids), 2012 12th IEEE-RAS International Conference on, IEEE, 2012, pp. 309–315. [13] D. Kappler , P . Pastor , M. Kalakrishnan, M. Wüthrich, S. Schaal, Data-driv en online decision making for autonomous manipula- tion., in: Robotics: Science and Systems, 2015. [14] D.-H. Park, H. Ho ff mann, P . P astor, S. Schaal, Movement re- production and obstacle av oidance with dynamic movement primitiv es and potential fields, in: Humanoid Robots, 2008. Humanoids 2008. 8th IEEE-RAS International Conference on, IEEE, 2008, pp. 91–98. [15] H. Ho ff mann, P . Pastor , D.-H. Park, S. Schaal, Biologically- inspired dynamical systems for movement generation: auto- matic real-time goal adaptation and obstacle av oidance, in: Robotics and Automation, 2009. ICRA ’09. IEEE International Conference on, IEEE, 2009, pp. 2587–2592. [16] M. Ginesi, D. Meli, A. Calanca, D. Dall’Alba, N. Sansonetto, P . Fiorini, Dynamic mov ement primitives: V olumetric obstacle av oidance, in: 2019 19th International Conference on Adv anced Robotics (ICAR), 2019, pp. 234–239. [17] M. Ginesi, D. Meli, A. Roberti, N. Sansonetto, P . Fiorini, Dynamic movement primitives: V olumetric obstacle av oid- ance using dynamic potential functions, Journal of Intelli- gent & Robotic Systems 101 (4) (2021) 79. doi:10.1007/ s10846- 021- 01344- y . URL https://doi.org/10.1007/s10846- 021- 01344- y [18] A. Gams, B. Nemec, A. J. Ijspeert, A. Ude, Coupling move- ment primiti ves: Interaction with the en vironment and bimanual tasks, IEEE T rans. Robotics 30 (4) (2014) 816–830. [19] A. Ude, B. Nemec, T . Petri ´ c, J. Morimoto, Orientation in carte- sian space dynamic mov ement primitives, in: Robotics and Automation (ICRA), 2014 IEEE International Conference on, IEEE, 2014, pp. 2997–3004. [20] M. Saveriano, F . Franzel, D. Lee, Mer ging position and orienta- tion motion primitives, in: International Conference on Robotics and Automation (ICRA), 2019, 2019. [21] H. B. Amor, G. Neumann, S. Kamthe, O. Kroemer , J. Pe- ters, Interaction primitives for human-robot cooperation tasks, in: Robotics and Automation (ICRA), 2014 IEEE International Conference on, IEEE, 2014, pp. 2831–2837. [22] A. Paraschos, C. Daniel, J. R. Peters, G. Neumann, Probabilistic movement primitiv es, in: Advances in neural information pro- cessing systems, 2013, pp. 2616–2624. [23] Y . Huang, L. Rozo, J. Silvério, D. G. Caldwell, Kernelized movement primitiv es, The International Journal of Robotics Re- search 38 (7) (2019) 833–852. [24] S. Calinon, A tutorial on task-parameterized mo vement learning and retriev al, Intelligent service robotics 9 (1) (2016) 1–29. [25] A. Sidiropoulos, Z. Doulgeri, A rev ersible dynamic movement primitiv e formulation, arXiv preprint arXi v:2010.07708 (2020). [26] F . J. Ab u-Dakka, V . Kyrki, Geometry-aware dynamic movement primitiv es, arXiv preprint arXi v:2003.06061 (2020). [27] P . Pastor , H. Ho ff mann, T . Asfour, S. Schaal, Learning and gen- eralization of motor skills by learning from demonstration, in: Robotics and Automation, 2009. ICRA ’09. IEEE International Conference on, IEEE, 2009, pp. 763–768. 20 [28] H. W endland, Piecewise polynomial, positiv e definite and com- pactly supported radial functions of minimal degree, Advances in computational Mathematics 4 (1) (1995) 389–396. [29] R. Schaback, A practical guide to radial basis functions, Elec- tronic Resource 11 (2007). [30] Y . Gao, S. S. V edula, C. E. Reiley , N. Ahmidi, B. V aradarajan, H. C. Lin, L. T ao, L. Zappella, B. Béjar, D. D. Y uh, et al., Jhu-isi gesture and skill assessment working set (JIGSA WS): A surgi- cal activity dataset for human motion modeling, in: MICCAI W orkshop: M2CAI, V ol. 3, 2014, p. 3. [31] O. I. Zhelezov , N-dimensional rotation matrix generation algo- rithm, American Journal of Computational and Applied Mathe- matics 7 (2) (2017) 51–57. [32] T . Matsubara, S.-H. Hyon, J. Morimoto, Learning parametric dynamic mov ement primitives from multiple demonstrations, Neural networks 24 (5) (2011) 493–500. 21

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment