Decentralized Heterogeneous Multi-Player Multi-Armed Bandits with Non-Zero Rewards on Collisions

We consider a fully decentralized multi-player stochastic multi-armed bandit setting where the players cannot communicate with each other and can observe only their own actions and rewards. The environment may appear differently to different players,…

Authors: Akshayaa Magesh, Venugopal V. Veeravalli

Akshayaa Magesh, Graduate Student Member, IEEE, and Venugopal V. Veeravalli, Fellow, IEEE

Abstract: We consider a fully decentralized multi-player stochastic multi-armed bandit setting where the players cannot communicate with each other and can observe only their own actions and rewards. The environment may appear differently to different players, i.e., the reward distributions for a given arm are heterogeneous across players. In the case of a collision (when more than one player plays the same arm), we allow for the colliding players to receive non-zero rewards. The time-horizon $T$ for which the arms are played is not known to the players. Within this setup, where the number of players is allowed to be greater than the number of arms, we present a policy that achieves near order-optimal expected regret of order $O(\log^{1+\delta} T)$ for $\delta > 0$ (however small) over a time-horizon of duration $T$.

Index Terms: Multi-player, Non-homogeneous rewards, Decentralized Bandits, Spectrum Access.

I. INTRODUCTION

The multi-armed bandit (MAB) is a well-studied model for sequential decision-making problems with an inherent exploration-exploitation trade-off. MABs have seen applications in recommendation systems, advertising, ranking results of search engines, and more. The classical stochastic MAB setup considers an agent/player who, at each time instant $t$, chooses an action from a finite set of actions (or arms). The agent receives a reward drawn from an unknown distribution associated with the arm chosen. The goal is to design a decision-making policy that maximizes the agent's average cumulative reward, or equivalently, minimizes the average accumulated regret. Policies that are designed to minimize regret in bandit settings aim to achieve sub-linear regret with respect to the time horizon $T$.
The MAB problem was first considered in the context of clinical trials by Thompson [1], who introduced a posterior sampling heuristic commonly known as Thompson sampling. In their seminal work, Lai and Robbins [2] formalized the stochastic MAB setting and provided a lower bound on the average regret of order $\Omega(\log T)$ for time horizon $T$. They also presented an asymptotically optimal decision policy using the idea of upper confidence bounds, which was further explored in [3] and [4]. Other variants of the MAB setup such as adversarial, contextual and Markovian have also been studied in the literature (see, e.g., [5], [6]).

A. Magesh and V. V. Veeravalli are with the Department of Electrical and Computer Engineering, University of Illinois at Urbana-Champaign, IL, 61820 USA (email: amagesh2@illinois.edu, vvv@illinois.edu). This research was supported by the US National Science Foundation SpecEES program, under grant number 1730882, through the University of Illinois at Urbana-Champaign.

A. Multi-player multi-armed bandits

Recently, there has been a growing interest in the study of multi-player MAB settings, where instead of a single agent, there are $K$ agents simultaneously pulling the arms at each time instant. The average system regret is defined with respect to the optimal assignment of arms that maximizes the sum of expected rewards of all players (which can be interpreted as the system performance). The event of multiple players pulling the same arm simultaneously is commonly referred to as a collision, and leads to the players receiving reduced or zero rewards. Thus, while designing policies for the multi-player MAB setting, in addition to balancing the exploration-exploitation trade-off, it is important to control the number of collisions that occur. Multi-player bandit models can be broadly classified into centralized and decentralized settings.
In the centralized setting, there exists a central controller that can coordinate the actions of all the players. In this case, the multi-player problem can be reduced to a single agent MAB problem, where the agent is the set of all players taken collectively, and this agent can choose multiple arms at a time as directed by the controller. However, the communication overhead placed by the central controller, and the communication bottleneck at the controller, might be prohibitive. Therefore, it is of interest to study a decentralized system without central control, which is the focus of our study. A tight (in the order sense) lower bound for the system regret for the centralized case is of course the same as that for a single agent multi-armed bandit setup, i.e., $\Omega(\log T)$, which also serves as a lower bound on the system regret for the decentralized case. It should be noted that no larger lower bounds have been proven for the decentralized case.

Multi-player MABs with cooperative agents in the decentralized setting (i.e., where the players communicate among themselves in order to achieve a common objective) have seen applications in geographically distributed ad servers [7], peer-to-peer networks [8], and recommendation systems [9]. Multi-player stochastic MABs in the decentralized setting are particularly relevant to cognitive radio and dynamic spectrum access systems [10]. In these systems, the finite number of channels representing different frequency bands are treated as arms, the users in the network are treated as players, and the data rates received from the channels can be interpreted as rewards. Since the maximum rate that can be received from a channel is limited, assuming bounded rewards for the arms is justified. In this setting, the players are competing for the same set of finite resources (the rewards received from the finite set of arms).

B.
Previous related work on decentralized multi-player MABs

Some of the prior work in the decentralized setting assumes that communication between the players is possible [11], [12]. However, cooperation through communication between the players imposes an additional cost and may suffer from latency issues due to delays. Other works assume that sensing occurs at the level of every individual player, such as smart devices in a network being able to sense if a channel is being used or not without transmitting on it. The work in [13] considers such a setting where an auction algorithm is used by players to come to a consensus on the optimal assignment of arms. However, in other systems, such as emerging architectures in the Internet of Things (IoT), the individual nodes may not be capable of such sensing. This motivates the study of a fully decentralized scenario, where there is no central control and the players cannot communicate with each other in any manner. The players can observe only their own actions and rewards.

In most of the prior work on the fully decentralized setting, the assumption is made that the reward distribution for any arm is the same (homogeneous) for all players. This setting was first considered in [14], where prior knowledge of the number of players is not assumed. The algorithm presented in [14], named Multi-user $\epsilon$-Greedy collision Avoiding algorithm (MEGA), combines a probabilistic $\epsilon$-greedy algorithm with a collision avoiding mechanism inspired by the ALOHA protocol, and provides guarantees of sub-linear regret. An algorithm named Musical Chairs is proposed in [15], which is composed of a learning phase for the players to learn an $\epsilon$-correct ranking of arms and the number of players, and a 'Musical Chairs' phase, in which the $K$ players fix on the top $K$ arms. High probability guarantees of constant regret are provided in [15] for the Musical Chairs algorithm.
The fully decentralized setting with homogeneous reward distributions across the players is also considered in [16]–[18]. All of the above mentioned works also assume that in the event of a collision, all the colliding players receive zero rewards.

In cognitive radio and uncoordinated dynamic spectrum access networks, the users are usually not colocated physically, and therefore the reward distributions for a given arm may be heterogeneous across users. There have been a few works that study such a heterogeneous setting. In [13], [19] the heterogeneous setting is studied under the assumption that the players are capable of sensing (i.e., players can observe whether an arm is being used or not without pulling it). A fully decentralized heterogeneous setting is studied in [20]–[23], where players can observe only their own actions and rewards. In [20], [21] it is assumed that in the event of a collision, the colliding players get zero rewards; the idea of forced collisions is used to enable the players to communicate with one another and settle on the optimal assignment of arms. The work in [22] takes a game-theoretic approach adapted from [24], where the assumption of zero rewards on collisions is made and guarantees of average sub-linear regret of order $O(\log^{2+\delta} T)$ over a time-horizon of $T$, for $0 < \delta < 1$, are provided. An extension of [22] with near-order optimal regret of $O(\log^{1+\delta} T)$ was presented recently in [23].

Another assumption in much of the prior work on multi-player MABs that needs to be closely examined is that of zero rewards on collisions. In the example of uncoordinated dynamic spectrum access, when more than one user (player) transmits on a channel, the colliding players may receive reduced, but not necessarily zero, rates or rewards. Thus, allowing for non-zero rewards on collisions results in a more realistic model.
Such a setting with homogeneous reward distributions across players and non-zero rewards on collisions is considered in [25]. The algorithm presented in [25], which allows for the number of players to be greater than the number of arms, is an extension of the Musical Chairs algorithm [15] and comes with high probability guarantees of constant regret.

C. Our Contributions

In this paper, we study a multi-player MAB with heterogeneous reward distributions and non-zero rewards on collisions. We also allow for the number of players to be greater than the number of arms. The analysis of our algorithm relies on [24], and in contrast to the work in [22], requires non-trivial modifications to the results in [24] to accommodate non-zero rewards on collisions. To the best of our knowledge, ours is the first work to consider a model that allows for both heterogeneous reward distributions and non-zero rewards on collisions. In this setting, we propose an algorithm that achieves near order optimal regret of order $O(\log^{1+\delta} T)$ over a time-horizon $T$, for $\delta > 0$ (however small).

II. SYSTEM MODEL

We consider a multi-player MAB problem, in which the set of players is $[K] = \{1, 2, \ldots, K\}$. The action space of each player $j \in [K]$ is the set of $M$ arms $A_j = [M]$. Let the time-horizon be denoted by $T$, and the action taken (or arm played) by player $j$ at time $t$ by $a_{t,j}$. The action profile $a_t$ is defined as the vector of the actions taken by the players, i.e., $a_t = [a_{t,1}, \ldots, a_{t,K}]$. At any given time, the players can observe only their own rewards and cannot observe the actions taken by the other players. We assume the reward distribution of each arm to have support $[0, 1]$. In the event that multiple players play the same arm $m$, they could get non-zero rewards. Let $k(a_{t,j})$ denote the number of players playing arm $a_{t,j}$ (including player $j$).
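As a small illustration of the occupancy count $k(a_{t,j})$, the following Python sketch computes it for every player from one action profile. The function name `occupancy` and the example profile are illustrative choices, not part of the paper.

```python
from collections import Counter

def occupancy(action_profile):
    """Return k(a_j) for each player j: how many players share player j's arm."""
    counts = Counter(action_profile)
    return [counts[arm] for arm in action_profile]

# Hypothetical profile for K = 5 players; arms are labelled 1..M.
profile = [1, 3, 1, 2, 1]
print(occupancy(profile))
```

No individual player can evaluate this function in the decentralized setting, since it needs the full profile; it is only a modelling device.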
Note that the number of players on arm $a_{t,j}$ is a function of the complete action profile $a_t$. The reward received by player $j$ playing arm $m$, which is played by a total of $k(m)$ players (including player $j$), is denoted by $r_j(m, k(m))$. The reward is drawn from a distribution with mean

$$\mu_j(m, k(m)) = \mathbb{E}[r_j(m, k(m))]. \tag{1}$$

We assume that $\mu_j(m, k(m)) = 0$ for all $k(m) \geq N + 1$, for some $N$ that depends on the system, i.e., when there are more than $N$ players playing the same arm, they all receive zero rewards. This constrains the maximum number of players allowed in the system to be $MN$ ($K \leq MN$).

The action space $A$ of the players is simply the product space of the individual action spaces, i.e., $A = \prod_{j=1}^{K} A_j$. We refer to an element $a \in A$ as a matching. Let $a^* \in A$ be such that

$$a^* \in \arg\max_{a \in A} \sum_{j=1}^{K} \mu_j(a_j, k(a_j)). \tag{2}$$

In this work, we restrict our attention to the case where there is a unique optimal matching $a^*$ (see footnote 1). Let $J_1 = \sum_{j=1}^{K} \mu_j(a^*_j, k(a^*_j))$ be the system reward for the optimal matching, and $J_2$ the system reward for the second optimal matching. Define

$$\Delta = \frac{J_1 - J_2}{2MN}. \tag{3}$$

Unlike previous works ([13], [21], [22]), we do not make the assumption that the players have knowledge of $\Delta$ (or a lower bound on $\Delta$). The expected regret during a time horizon $T$ is defined as:

$$R(T) = T \sum_{j=1}^{K} \mu_j(a^*_j, k(a^*_j)) - \mathbb{E}\left[\sum_{t=1}^{T} \sum_{j=1}^{K} \mu_j(a_{t,j}, k(a_{t,j}))\right] \tag{4}$$

where the expectation is over the actions of the players.

In order for the players to get estimates of the mean rewards of the arms, we assume that the players have unique IDs at the beginning of the algorithm. Note that this is required only in the event that $K > M$. If $K \leq M$, the players can obtain unique IDs at the beginning of the algorithm (see, e.g., [20], [21]).
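The definitions in (2) and (3) can be made concrete on a toy instance. The sketch below enumerates all $M^K$ matchings by brute force, which is only feasible for very small systems and is not how the decentralized players operate. The data layout `mu[j][m][k-1]` and the numerical values are assumptions made for illustration.

```python
import itertools
from collections import Counter

def system_reward(mu, profile):
    """Sum of mean rewards; mu[j][m][k-1] is player j's mean on arm m with k players."""
    counts = Counter(profile)
    total = 0.0
    for j, m in enumerate(profile):
        k = counts[m]
        if k <= len(mu[j][m]):          # more than N players on an arm: zero reward
            total += mu[j][m][k - 1]
    return total

def optimal_matching_and_gap(mu, M, N):
    """Return (a_star, J1, J2, Delta) as defined in (2) and (3)."""
    K = len(mu)
    scored = sorted(
        ((system_reward(mu, a), a) for a in itertools.product(range(M), repeat=K)),
        reverse=True,
    )
    (J1, a_star), (J2, _) = scored[0], scored[1]
    return a_star, J1, J2, (J1 - J2) / (2 * M * N)

# Hypothetical instance: K = 2 players, M = 2 arms (0-indexed), N = 2.
mu = [
    [[0.9, 0.5], [0.2, 0.1]],   # player 0
    [[0.8, 0.3], [0.6, 0.2]],   # player 1
]
print(optimal_matching_and_gap(mu, M=2, N=2))
```

In this instance both players prefer arm 0 when alone, but the system reward is maximized when player 1 moves to arm 1, which is the kind of heterogeneous trade-off the matching phase has to resolve.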
Since all previous related works (and this work) assume that the players are time synchronized, the assumption that the players have unique IDs is justified (see footnote 2). Note that such an assumption of unique IDs is common in applications such as multi-agent reinforcement learning [26].

Footnote 1: The assumption of a unique optimal matching has been made and justified in previous works, see, e.g., [22], [23]. This assumption is needed to establish the convergence of the proposed decentralized algorithm to the optimal action profile.

Footnote 2: In the dynamic spectrum access application, time synchronization and ID assignment can be implemented via a low bandwidth side channel, which is handled, for example, by a cellular network provider.

III. ALGORITHM

Our proposed policy for each player $j$ in the decentralized multi-player MAB setting with heterogeneous reward distributions and non-zero rewards on collisions is presented in Algorithm 1. The policy for a player depends only on the player's own actions and observed rewards. Our algorithm proceeds in epochs since we do not assume knowledge of the time horizon $T$. The parameters $\delta > 0$ and $\epsilon \in (0, 1)$ are inputs to the algorithm, and further details on these parameters are provided in Sections IV and VI respectively. Let $L_T$ denote the number of epochs in time horizon $T$. Each epoch $\ell$ has three phases: Exploration, Matching and Exploitation.

Since we assume that the players have been assigned unique IDs at the beginning of the algorithm, it may be reasonable to assume that the total number of players is also known to them. Nevertheless, for the sake of completeness, we provide Algorithm 2, which the players can use to calculate the number of players before the beginning of epoch 1. Since the players have unique IDs from 1 to $K$, players form groups of $N$, and use the fact that zero rewards are received when more than $N$ players occupy a channel, to calculate the number of complete groups of $N$, and if needed, the size of the last incomplete group.

Algorithm 1: Policy for each player $j$
Initialization: Set $\hat{\mu}_j(m, n) = 0$ for all $j \in [K]$, $m \in [M]$ and $n \in [N]$. Let $L_T$ be the last epoch with time horizon $T$. Parameters $\delta > 0$ and $\epsilon \in (0, 1)$ are provided as inputs.
Calculate $K$: All players run Algorithm 2.
for epoch $\ell = 1$ to $L_T$ do
  Exploration phase: Run Algorithm 3 with input $\ell$.
  Matching phase: Run Algorithm 4 with input $\ell$ for $\tau_\ell = \sqrt{c_2 \ell^{\delta}}$ plays. Count the number of plays where each action $m \in [M]$ was played that resulted in player $j$ being content:
    $W_\ell(j, m) = \sum_{h=1}^{\tau_\ell} \mathbb{1}\{a_{h,j} = m,\ S_{h,j} = C\}$
  Exploitation phase: For $c_3 2^{\ell}$ time units, play the action played most frequently from epochs $\lceil \ell/2 \rceil$ to $\ell$ that resulted in player $j$ being content:
    $a_j = \arg\max_{m} \sum_{i=\lceil \ell/2 \rceil}^{\ell} W_i(j, m)$
end for

The exploration phase is for player $j$ to obtain estimates of the mean rewards (denoted by $\hat{\mu}_j(m, n)$) of arms $m \in [M]$, for all $n \in [N]$. This phase proceeds for a fixed number of time units ($T_e$) in every epoch. Since every player has a unique ID, and the total number of players has been calculated, the exploration phase follows a protocol where the players sample each arm $m \in [M]$, for each $n \in [N]$, for $T_0 \ell^{\delta}$ time units. The exploration phase of epoch $\ell$ proceeds for $T_e \leq K M N T_0 \ell^{\delta}$ time units (the inequality arises as the number of players may not be an exact multiple of $N$). During epoch $\ell$, if the estimated mean rewards obtained at the end of the exploration phase do not deviate from the mean rewards by more than $\Delta$, the exploration phase of this epoch is successful. Since we do not assume that the players have knowledge of $\Delta$, having increasing lengths of the exploration phases by setting $\delta > 0$ ensures that this phase is successful with high probability eventually (for large enough $\ell$).
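The epoch structure of Algorithm 1 can be sketched numerically. The Python sketch below computes the phase lengths of an epoch (using the upper bound $K M N T_0 \ell^{\delta}$ for the exploration phase), counts how many epochs start within a horizon, and applies the exploitation rule to a table of content-play counts. All function names, the dictionary layout of `W`, and the parameter values are illustrative assumptions.

```python
import math

def phase_lengths(epoch, K, M, N, T0, c2, c3, delta):
    """Exploration upper bound, matching length and exploitation length of one epoch."""
    explore = K * M * N * T0 * epoch ** delta   # T_e <= K M N T0 l^delta
    match = c2 * epoch ** delta                 # tau_l plays of tilde-tau_l time units
    exploit = c3 * 2 ** epoch
    return explore, match, exploit

def epochs_started(T, **params):
    """Number of epochs that begin within a horizon of T time units."""
    elapsed, epoch = 0.0, 0
    while elapsed < T:
        epoch += 1
        elapsed += sum(phase_lengths(epoch, **params))
    return epoch

def exploitation_arm(W, epoch):
    """W[i][m]: content plays of arm m in the matching phase of epoch i (one player)."""
    totals = {}
    for i in range(math.ceil(epoch / 2), epoch + 1):
        for m, count in W[i].items():
            totals[m] = totals.get(m, 0) + count
    return max(totals, key=totals.get)

# Hypothetical counts for one player over epochs 1..3.
W = {1: {0: 5}, 2: {0: 1, 1: 3}, 3: {0: 3, 1: 2}}
print(exploitation_arm(W, epoch=3))
```

Only epochs $\lceil \ell/2 \rceil$ to $\ell$ enter the sum, so counts from early epochs, where the estimates are least reliable, are discarded as $\ell$ grows.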
Once the players have estimates of the mean rewards of the arms, they all need to find the action profile that maximizes the system reward. This is done in the matching phase of each epoch. Given an action profile $a$, we define the utility of player $j$ to be

$$u_j(a) = \hat{\mu}_j(a_j, k(a_j)). \tag{5}$$

Section VI explains in detail the matching phase and the choice of the above defined utility function. The matching phase is based on the work in [24], in which a strategic form game is studied, where players are aware of only their own payoffs (utilities). A decentralized strategy is presented in [24] that leads to an efficient configuration of the players' actions.

The work in [22], which considers essentially the same setting as in the current paper but with zero rewards on collisions, applies the decentralized strategy proposed in [24] directly without any modifications. This is because, with zero rewards on collisions, the utility of each player $j$ can take only two possible values: (i) 0, or (ii) the estimated mean reward of the arm chosen, which is known to player $j$ from the exploration phase. Therefore, the matching phase in [22] corresponds precisely to the problem studied in [24], where the players know their own utilities exactly.

However, in our setting with non-zero rewards on collisions, we face the difficulty that the utilities of the players take on more than two possible values and are not known exactly. This is because, in our setting, each player $j$ does not know the total number of players choosing the same arm $k(a_j)$, and therefore cannot determine the utility defined in (5) exactly. Each player $j$ needs to estimate $k(a_j)$ based on the actual instantaneous rewards seen in the matching phase, as described in Algorithm 4. Thus, we have to work with estimated utilities as opposed to exact utilities as in [24] and [22]. In order to provide regret guarantees, we need to prove that our algorithm leads to an action profile maximizing the sum of utilities of the players even with these estimated utilities. This is analyzed in Section VI.

This phase proceeds for $\tau_\ell \tilde{\tau}_\ell = c_2 \ell^{\delta}$ time units in epoch $\ell$, where $c_2$ is a constant. As in the exploration phase, we need increasing lengths of matching phases ($\delta > 0$) to guarantee that the players identify the optimal action profile with high probability.

The action profile identified at the end of the matching phase is played in the exploitation phase for $c_3 2^{\ell}$ time units, where $c_3$ is a constant. As $\ell$ increases, the players get better estimates of the mean rewards and the probability of identifying the optimal action profile increases. Therefore, the length of the exploitation phase is set to be exponential in $\ell$. The constants $c_2$ and $c_3$ are chosen to be of the order of $T_e$, the time taken by the exploration phase.

Algorithm 2: Calculate $K$
Starting from player number 2, players form groups of size $N$ in order of their IDs (from 2 to $N+1$, $N+2$ to $2N+1$, etc.), giving $\lceil (K-1)/N \rceil \leq M$ groups. The last group may have size $< N$.
Calculate number of groups ($M$ time units): Groups numbered 1 to $\lceil (K-1)/N \rceil$ occupy the arm corresponding to their group number. Player 1 plays arms 1 to $M$ in order. Player 1 then knows the number of complete groups of size $N$ (the number of arms that yield zero reward).
Calculate number of players in last group ($N$ time units): If a group received non-zero reward (an incomplete last group, if it exists) during the previous step, the players of that group and player 1 play arm 1 for $N$ time units. Players of group 1 (2 to $N+1$) pick arm 1 in order cumulatively, i.e., player 2 plays arm 1 during the first time unit of this step, players 2 and 3 during the second, and so on. The number of players in the last group, $N_1$, is equal to $N$ minus the number of additional players from group 1 needed for players on arm 1 to get zero reward. At the end of this step, player 1 knows $K$ and conveys it to the other players in the next stages.
Convey $K$ to complete groups ($(K+1)M$ time units): Groups numbered 1 to $\lceil (K-1)/N \rceil$ occupy the arm corresponding to their group number. Player 1 plays arms 1 to $M$ in order $K$ times ($KM$ time units), during which the players in the complete groups receive zero rewards. Player 1 then stays silent for the next $M$ time units, which conveys $K$ to the complete groups. The complete groups now know if there is an incomplete group or not, and release their arms.
Convey $K$ to incomplete group ($K+1$ time units): If there is no incomplete group, all players stay silent for this step. Otherwise, the first $N_1 + 1$ players of group 1 (who know $K$ now) play the incomplete group arm for $K$ time units, and stay silent for the next time unit.

Algorithm 3: Exploration Phase
for $n = 1$ to $N$ do
  Starting from player 1, players form groups of size $n$ in order of their IDs (from 1 to $n$, $n+1$ to $2n$, and so on). Let the number of complete groups of size $n$ be $G$.
  if $\lfloor G/M \rfloor \geq 1$ then
    for $g = 1$ to $\lfloor G/M \rfloor$ do
      Groups $(g-1)M + 1$ to $gM$ play arms 1 to $M$ in a round-robin fashion for $T_0 \ell^{\delta}$ time units, i.e., they play arms $1, 2, \ldots, M$ respectively for $T_0 \ell^{\delta}$ time units, then play arms $2, 3, \ldots, M, 1$ respectively for $T_0 \ell^{\delta}$ time units, and so on, until they play arms $M, 1, 2, \ldots, M-1$ respectively for $T_0 \ell^{\delta}$ time units.
    end for
  end if
  if $G/M \notin \mathbb{Z}$ then
    Groups $\lfloor G/M \rfloor M + 1$ to $G$ play arms 1 to $M$ in a round-robin fashion for $T_0 \ell^{\delta}$ time units, i.e., they play arms $1, \ldots, G - \lfloor G/M \rfloor M$ respectively for $T_0 \ell^{\delta}$ time units, then play arms $2, \ldots, G - \lfloor G/M \rfloor M + 1$ for $T_0 \ell^{\delta}$ time units, and so on, until they play arms $M, 1, \ldots, G - \lfloor G/M \rfloor M - 1$ for $T_0 \ell^{\delta}$ time units.
  end if
  If the final group is incomplete with $n_1 < n$ players, it is completed with $n - n_1$ players from group 1, and the completed group plays arms 1 to $M$ for $T_0 \ell^{\delta}$ time units each.
end for
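The grouping and rotation used in the exploration phase can be sketched in a few lines. The sketch below covers one batch of at most $M$ groups, which handles both the full batches and the remainder batch of Algorithm 3 with the same modular rotation; it does not implement the completion of a short final group with players from group 1. Function names and 0-indexed arms are illustrative assumptions.

```python
def group_players(K, n):
    """Split player IDs 1..K into consecutive groups of size n (the last may be short)."""
    ids = list(range(1, K + 1))
    return [ids[i:i + n] for i in range(0, K, n)]

def round_robin(num_groups, M):
    """For each of M rounds, the arm (0-indexed) played by each group of one batch.

    Assumes num_groups <= M, so that no two groups of the batch share an arm.
    Each round lasts T0 * l**delta time units in Algorithm 3.
    """
    return [[(g + r) % M for g in range(num_groups)] for r in range(M)]

print(group_players(5, 2))
print(round_robin(2, 3))
```

Because every group of size $n$ sits alone on its arm in each round, each member observes rewards with exactly $n$ players on that arm, which is what is needed to estimate $\mu_j(m, n)$.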
The pseudo-code of the protocol to calculate $K$ (Algorithm 2), of the exploration phase (Algorithm 3), and of the matching phase (Algorithm 4) accompanies this section.

Algorithm 4: Matching phase algorithm
Initialization: Let $\kappa > MN$. Denote by $\hat{Z}_{h,j}$ the observed (estimated) state of player $j$ at time $h$, and set $\hat{Z}_{1,j} = [\bar{a}_{1,j}, \bar{u}_{1,j}, S_{1,j}]$, where $\bar{a}_{1,j} \overset{\text{unif}}{\sim} [M]$, $\bar{u}_{1,j} = 0$ and $S_{1,j} = D$. Set $\tau_\ell = \tilde{\tau}_\ell = \sqrt{c_2 \ell^{\delta}}$. Input parameter $\epsilon \in (0, 1)$.
for play $h = 1$ to $\tau_\ell$ do
  Action dynamics: If $S_{h,j} = C$, set action $a_{h,j}$ as:
    $a_{h,j} = \begin{cases} \bar{a}_{h,j} & \text{with prob. } 1 - \epsilon^{\kappa} \\ a \in [M] \setminus \bar{a}_{h,j} & \text{with uniform prob. } \frac{\epsilon^{\kappa}}{M-1} \end{cases}$
  If $S_{h,j} = D$, action $a_{h,j}$ is chosen uniformly from $[M]$.
  Estimate utility: Upon choosing action $a_{h,j}$, play that arm for $\tilde{\tau}_\ell$ time units, and let the sample mean of the rewards observed during this duration be $\bar{r}(a_{h,j})$. If $\bar{r}(a_{h,j}) = 0$, the estimated utility of the player is $\hat{u}_{h,j} = 0$. Else let
    $\hat{k}(a_{h,j}) = \arg\min_{n \in [N],\ \hat{\mu}_j(a_{h,j}, n) \neq 0} |\bar{r}(a_{h,j}) - \hat{\mu}_j(a_{h,j}, n)|$
  and the estimated utility $\hat{u}_{h,j}$ is:
    $\hat{u}_{h,j} = \hat{\mu}_j(a_{h,j}, \hat{k}(a_{h,j}))$.
  State dynamics: If $S_{h,j} = C$ and $[a_{h,j}, \hat{u}_{h,j}] = [\bar{a}_{h,j}, \bar{u}_{h,j}]$, set:
    $\hat{Z}_{h+1,j} = \hat{Z}_{h,j}$
  If $S_{h,j} = C$ and $[a_{h,j}, \hat{u}_{h,j}] \neq [\bar{a}_{h,j}, \bar{u}_{h,j}]$, or $S_{h,j} = D$, set:
    $$\hat{Z}_{h+1,j} = \begin{cases} [a_{h,j}, \hat{u}_{h,j}, C] & \text{with prob. } \epsilon^{1 - \hat{u}_{h,j}} \\ [a_{h,j}, \hat{u}_{h,j}, D] & \text{with prob. } 1 - \epsilon^{1 - \hat{u}_{h,j}} \end{cases} \tag{6}$$
end for

IV. MAIN RESULT

Theorem 1. Given the system model specified in Section II, the expected regret of the proposed algorithm for a time-horizon $T$ and some $0 < \delta < 1$ is $R(T) = O(\log^{1+\delta} T)$.

Proof: Let $L_T$ be the last epoch within a time-horizon of $T$. The regret incurred during the $L_T$ epochs can be analyzed as the sum of the regret incurred during the three phases of the algorithm. The exploitation phase in epoch $\ell$ of the algorithm lasts for $c_3 2^{\ell}$ time units. Thus, it is easy to see that $L_T < \log T$.
Let $R_1$, $R_2$ and $R_3$ denote the regret incurred over $L_T$ epochs, during the exploration phase, the matching phase, and the exploitation phase, respectively. Let $\ell_0$ be the first epoch $\ell$ such that

$$\frac{T_0 \Delta^2}{2} \left(\frac{\ell}{4}\right)^{\delta} \geq 1, \tag{7}$$

$$C_0 e^{-C_\rho \ell^{\delta/2}} \leq e^{-1}, \tag{8}$$

$$\tilde{\tau}_\ell = \sqrt{c_2 \ell^{\delta}} \geq \left\lceil \frac{2 \ln\left(\frac{2}{\epsilon^{\kappa}}\right)}{(\Delta + 2\nu_{\min})^2} \right\rceil, \tag{9}$$

where $C_\rho$ is defined in (52) in Appendix B. Note that (7)-(9) hold for all $\ell \geq \ell_0$. We upper bound the regret incurred during the exploration and matching phases by $K$ (which is the maximum regret that can be accumulated in one time unit) times the total time taken by these phases. For the exploitation phase, we incur regret only when the action profile played during this phase is not optimal.

1) Exploration phase: Since the exploration phase in each epoch $\ell$ proceeds for at most $K M N T_0 \ell^{\delta}$ time units,

$$R_1 \leq \sum_{\ell=1}^{L_T} K^2 M N T_0 \ell^{\delta} \leq K^2 M N T_0 \log^{1+\delta} T. \tag{10}$$

2) Matching phase: In epoch $\ell$, the matching phase runs for $\tau_\ell \tilde{\tau}_\ell = c_2 \ell^{\delta}$ time units. Thus

$$R_2 \leq K \sum_{\ell=1}^{L_T} c_2 \ell^{\delta} \leq K c_2 L_T^{1+\delta} \leq K c_2 \log^{1+\delta} T. \tag{11}$$

3) Exploitation phase: In the exploitation phase, regret is incurred in the following two events:
a) Let $E_\ell$ denote the event that there exists some player $j \in [K]$, arm $m \in [M]$, and number of players on the arm $n \leq N$, such that there exists some epoch $i$, with $\lceil \ell/2 \rceil \leq i \leq \ell$, such that the estimate of the mean reward $\hat{\mu}_j(m, n)$ obtained after the exploration phase of epoch $i$ satisfies $|\hat{\mu}_j(m, n) - \mu_j(m, n)| \geq \Delta$.
b) Let $F_\ell$ denote the event that, given that all the players have $|\mu_j(m, n) - \hat{\mu}_j(m, n)| \leq \Delta$ for all $m \in [M]$ and all $n \in [N]$ for all epochs $\lceil \ell/2 \rceil$ to $\ell$, the action profile chosen in the matching phase of epoch $\ell$ is not optimal.
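The second inequalities in (10) and (11) rest on one elementary step, spelled out here for convenience. It uses only $\delta > 0$ and the bound $L_T < \log T$ stated at the start of the proof:

```latex
\sum_{\ell=1}^{L_T} \ell^{\delta}
  \;\le\; \sum_{\ell=1}^{L_T} L_T^{\delta}
  \;=\; L_T^{1+\delta}
  \;<\; \log^{1+\delta} T .
```

Multiplying through by $K^2 M N T_0$ gives (10), and multiplying by $K c_2$ gives (11).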
From Lemma 1, we have an upper bound on the probability of the event $E_\ell^{j,m,n}$ that for some fixed player $j$, arm $m$, and number of players on the arm $n$, there exists some epoch $i$, with $\lceil \ell/2 \rceil \leq i \leq \ell$, such that the estimate of the mean reward $\hat{\mu}_j(m, n)$ obtained after the exploration phase of epoch $i$ satisfies $|\hat{\mu}_j(m, n) - \mu_j(m, n)| \geq \Delta$. Thus, we have that

$$P(E_\ell) = P\left(\bigcup_{j \in [K],\, m \in [M],\, n \in [N]} E_\ell^{j,m,n}\right) \tag{12}$$

$$\leq K M N\, P(E_\ell^{j,m,n}) \tag{13}$$

$$\leq K M N\, \frac{e^{-\frac{T_0 \Delta^2}{2} \left(\frac{\ell}{4}\right)^{\delta} \ell}}{1 - e^{-T_0 \Delta^2 \left(\frac{\ell}{4}\right)^{\delta}}}. \tag{14}$$

We also have the following upper bound on the probability of event $F_\ell$ from Lemma 6:

$$P(F_\ell) \leq \left(C_0 \exp\left(-C_\rho \ell^{\delta/2}\right)\right)^{\ell}. \tag{15}$$

Using (7) and (8) and the upper bounds in (14) and (15), we have that for all epochs $\ell \geq \ell_0$:

$$P(E_\ell) \leq 2 K M N e^{-\ell}, \tag{16}$$

$$P(F_\ell) \leq e^{-\ell}. \tag{17}$$

Therefore,

$$R_3 = K \sum_{\ell=1}^{L_T} c_3 2^{\ell} \left(P(E_\ell) + P(F_\ell)\right) \tag{18}$$

$$\leq 2 K c_3 2^{\ell_0} + K c_3 \sum_{\ell=\ell_0}^{L_T} (2KMN + 1) \left(\frac{2}{e}\right)^{\ell} \tag{19}$$

$$\leq 2 K c_3 2^{\ell_0} + \frac{2 K c_3 (2KMN + 1)}{e - 2}. \tag{20}$$

Note that (18) follows from the fact that regret is incurred in the exploitation phase only if events $E_\ell$ or $F_\ell$ occur, (19) follows from upper bounding the regret incurred during the first $\ell_0$ epochs by the maximum possible regret, and using (16) and (17) to upper bound the regret for epochs greater than $\ell_0$. Thus

$$R(T) = R_1 + R_2 + R_3 \leq K^2 M N T_0 \log^{1+\delta} T + K c_2 \log^{1+\delta} T + 2 K c_3 2^{\ell_0} + \frac{2 K c_3 (2KMN + 1)}{e - 2} \sim O(\log^{1+\delta} T). \tag{21}$$

Remark 1. Since the system parameters such as $\Delta$ and the mixing times of the Markov chain are unknown, having increasing lengths of exploration and matching phases by setting $\delta > 0$ guarantees that there exists some epoch $\ell_0$, such that for all epochs $\ell \geq \ell_0$, the probabilities of events $E_\ell$ and $F_\ell$ decrease exponentially as $e^{-\ell}$.
However, we have observed empirically (see Section VII) that setting $\delta = 0$ results in incurring zero regret during the exploitation phase with high probability right from the earlier epochs.

Remark 2. If a lower bound on the parameter $\Delta$, say $\tilde{\Delta}$, is known, $T_0$ can be set to $2/\tilde{\Delta}^2$. Note that, since $c_2$ and $c_3$ are of order $T_e$, and $T_e$ is of the order $KMN/\Delta^2$, the regret bound in terms of all the key system parameters is then of order $O\left(\frac{K^2 M N}{\tilde{\Delta}^2} \log^{1+\delta} T\right)$.

In the following sections, we provide details on the key results that are used in the proof of Theorem 1.

V. EXPLORATION PHASE

The exploration phase is for player $j$ to obtain estimates of the mean rewards of arms $m \in [M]$ for all $n \in [N]$. During the exploitation phase of epoch $\ell$, each player plays the most frequently played action during the matching phases of epochs $\lceil \ell/2 \rceil$ to $\ell$ while being in a content state. Thus, we bound the probability that the estimated mean rewards deviate from the mean rewards by more than $\Delta$ in at least one of the epochs from $\lceil \ell/2 \rceil$ to $\ell$, by $O(e^{-\ell})$.

Lemma 1. Given $\Delta$ as defined in Section II, for a fixed player $j$, arm $m$, and number of players on the arm $n \leq N$, let $E_\ell^{j,m,n}$ denote the event that there exists at least one epoch $i$ with $\lceil \ell/2 \rceil \leq i \leq \ell$ such that the estimate of the mean reward $\hat{\mu}_j(m, n)$ obtained after the exploration phase of epoch $i$ satisfies $|\hat{\mu}_j(m, n) - \mu_j(m, n)| \geq \Delta$. Then

$$P(E_\ell^{j,m,n}) \leq \frac{e^{-\frac{T_0 \Delta^2}{2} \left(\frac{\ell}{4}\right)^{\delta} \ell}}{1 - e^{-T_0 \Delta^2 \left(\frac{\ell}{4}\right)^{\delta}}}. \tag{22}$$

The proof of this lemma is provided in Appendix A.

VI. MATCHING PHASE

Strategic form games in game theory are used to model situations where players choose actions simultaneously (rather than sequentially) and do not have knowledge of the actions of other players. In such games, each player has a utility function $u_j : A \to [0, 1]$ that assigns a real valued payoff (utility) to each action profile $a \in A$.
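Returning briefly to Section V, the bound (22) of Lemma 1 and the geometric tail behind the constant $2/(e-2)$ in the proof of Theorem 1 can be checked numerically. The Python sketch below evaluates the right-hand side of (22) and the closed form of $\sum_{\ell \geq \ell_s} r^{\ell}$. The function names and the choice $T_0 = 2/\Delta^2$ (Remark 2 with $\tilde{\Delta} = \Delta$, under which condition (7) holds from $\ell = 4$ onwards) are assumptions made for illustration.

```python
import math

def lemma1_bound(epoch, T0, Delta, delta):
    """Right-hand side of (22) for one fixed (player, arm, occupancy) triple."""
    c = T0 * Delta ** 2 * (epoch / 4) ** delta
    return math.exp(-c * epoch / 2) / (1 - math.exp(-c))

def geometric_tail(ratio, start):
    """Closed form of the sum of ratio**l over l >= start, for 0 < ratio < 1."""
    return ratio ** start / (1 - ratio)

Delta, delta = 0.05, 0.5
T0 = 2 / Delta ** 2                      # illustrative choice following Remark 2
for epoch in (4, 8, 16):
    # Under condition (7), the bound is at most 2 * exp(-epoch).
    print(epoch, lemma1_bound(epoch, T0, Delta, delta), 2 * math.exp(-epoch))
print(geometric_tail(2 / math.e, 1), 2 / (math.e - 2))
```

Once (7) holds, the numerator of (22) is at most $e^{-\ell}$ and the denominator is at least $1 - e^{-2} > 1/2$, which is the step from (14) to (16).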
An algorithm that works under the assumption that every agent can observe only their own action and utility received is called a payoff based method. The matching phase of our proposed algorithm builds on [24], where a payoff based decentralized algorithm that leads to maximizing the sum of the utilities of the players is presented.

In order to pose the multi-player MAB problem as a strategic form game, we need to design the utility functions of the players in a way such that the system regret is minimized, or equivalently, the system performance is maximized. Denote by $u_j(a)$ the utility of player $j$ associated with the action profile $a$. We define:

$$u_j(a) = \hat{\mu}_j(a_j, k(a_j)). \tag{23}$$

A similar utility function is used in [22], where it is assumed that collisions result in zero rewards for the colliding players. Note that when players receive zero rewards on collisions, $\hat{\mu}_j(a_j, k(a_j)) = 0$ whenever $k(a_j) \geq 2$. Therefore, in the work of [22], the utility for each player $j$ can be determined exactly based on whether the instantaneous reward is zero or non-zero. However, in the setting we consider with non-zero rewards on collision, since player $j$ does not know $k(a_j)$, the utility $u_j(a)$ is also not known exactly. Each player $j$ needs to estimate $k(a_j)$ based on the instantaneous rewards seen in the matching phase, and use this to estimate the utility, as described in Algorithm 4.

The action profile that maximizes the sum of the utilities is called an efficient action profile. The following lemma states that if for all $j \in [K]$, $m \in [M]$ and $n \in [N]$, $|\hat{\mu}_j(m, n) - \mu_j(m, n)| \leq \Delta$, then by our choice of the utility function, the efficient action profile maximizing the sum of the utilities is also the same as the optimal action profile maximizing the sum of expected rewards.

Lemma 2.
If for all $j \in [K]$, $m \in [M]$ and $n \in [N]$, the following condition is satisfied:

$$|\hat{\mu}_j(m,n) - \mu_j(m,n)| \le \Delta, \tag{24}$$

then

$$\arg\max_{a \in A} \sum_{j=1}^{K} \mu_j(a_j, k(a_j)) = \arg\max_{a \in A} \sum_{j=1}^{K} \hat{\mu}_j(a_j, k(a_j)). \tag{25}$$

The condition given by (24) is guaranteed with high probability by the exploration phase of the algorithm. The proof of the above lemma is similar to the proof of [22, Lemma 1]. Thus, the efficient action profile that maximizes the sum of utilities (estimated mean rewards) is the same as the optimal action profile that minimizes regret or, equivalently, maximizes system performance.

A. Description of the Matching Phase Algorithm

This phase consists of $\tau_\ell$ plays, where each play lasts $\tilde{\tau}_\ell$ time units and $\epsilon \in (0,1)$ is a parameter of the algorithm. Each player $j$ is associated with a state $Z_{h,j} = [\bar{a}_{h,j}, \bar{u}_{h,j}, S_{h,j}]$ during play $h$, where $\bar{a}_{h,j} \in [M]$ is the baseline action of the player, $\bar{u}_{h,j} \in [0,1]$ is the baseline utility of the player and $S_{h,j} \in \{C, D\}$ is the mood of the player ($C$ denotes "content" and $D$ denotes "discontent"). Note that $\{Z_{1,j}, Z_{2,j}, \ldots\}$ are the states resulting from running the matching phase algorithm (essentially the state update step) with the exact utilities $u_j$. Since in our setting the utility or payoff received by each player is estimated and the state is updated using this estimate, each player in our algorithm works instead with an estimated state $\hat{Z}_{h,j}$.

When the player is content, the baseline action is chosen with high probability $(1 - \kappa)$ and every other action is chosen with uniform probability. The parameter $\epsilon$ is provided as an input to the algorithm, and Appendix C discusses how to choose $\epsilon$. If the player is discontent, the action is chosen uniformly from all arms and there is a high probability that the player would choose an arm different from the baseline action. This part of the algorithm constitutes the action dynamics.
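The action dynamics described above can be sketched in a few lines of Python. This is only an illustrative sketch, not the authors' Algorithm 4: the function name `choose_action`, the 0-based arm indexing, and the argument `explore_prob` (a stand-in for the small probability with which a content player leaves its baseline action) are assumptions introduced here.

```python
import random


def choose_action(baseline_action, mood, num_arms, explore_prob, rng=random):
    """Pick an arm for one play of the matching phase (illustrative sketch).

    baseline_action: index in range(num_arms) of the player's baseline arm.
    mood: "C" (content) or "D" (discontent).
    explore_prob: hypothetical name for the small probability with which a
        content player deviates from its baseline action.
    """
    arms = list(range(num_arms))
    if mood == "D":
        # Discontent players sample uniformly from all arms.
        return rng.choice(arms)
    # Content players keep the baseline arm with probability 1 - explore_prob.
    if num_arms == 1 or rng.random() >= explore_prob:
        return baseline_action
    # Otherwise they pick uniformly among the remaining arms.
    others = [m for m in arms if m != baseline_action]
    return rng.choice(others)
```

Note that the utilities do not appear anywhere in this function: the choice depends only on the mood and the baseline action.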
The baseline action can be interpreted as the arm the agent eventually expects to play for a long time, and the baseline utility can be interpreted as the payoff the player expects to receive upon playing the baseline action. The player being content is an indication that the payoff received by the player while playing his baseline action is satisfactory and as expected. Thus, the goal in designing the matching phase algorithm is for all the players to align their baseline actions and baseline utilities to the efficient action profile and be content in this state. Note that the action dynamics do not depend on the utilities.

We have seen the justification for using the utility function $u_j(a) = \hat{\mu}_j(a_j, k(a_j))$ in the introduction of Section VI. However, the players observe only their own instantaneous rewards and do not know $k(a_j)$, which is needed to determine the utility, since it depends on the actions chosen by all the players during the action dynamics step. Thus, each player estimates $k(a_j)$ as $\hat{k}(a_j)$ and uses this to estimate $u_j(a)$ as $\hat{u}_j(a)$. This is done by the player pulling the arm chosen during the action dynamics step for $\tilde{\tau}_\ell$ time units and recording the sample mean of the rewards observed during this duration as $\bar{r}_j(a_j)$. The estimate $\hat{k}(a_j)$ is given by:

$$\hat{k}(a_j) = \arg\min_{n \in [N],\ \hat{\mu}_j(a_j, n) \neq 0} \left| \bar{r}_j(a_j) - \hat{\mu}_j(a_j, n) \right|$$

and $\hat{u}_j(a) = \hat{\mu}_j(a_j, \hat{k}(a_j))$.

Lemma 3. If $\tilde{\tau}_\ell \ge \left\lceil \frac{2 \ln(2/\kappa)}{(\Delta + 2\nu_{\min})^2} \right\rceil$, which holds for all $\ell \ge \ell_0$ (see (9)), we have that $p = P\{u_j(a) \neq \hat{u}_j(a)\} \le \kappa$, where

$$\nu_{\min} = \min_{j, m, n_1, n_2} \left| \mu_j(m, n_1) - \mu_j(m, n_2) \right|,$$

with $n_1, n_2 \in [N]$, $\mu_j(m, n_1), \mu_j(m, n_2) \neq 0$, $j \in [K]$ and $m \in [M]$.

The proof follows directly from Hoeffding's inequality. Thus, each player has an estimate of his utility that is correct with high probability.
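The nearest-mean rule for $\hat{k}(a_j)$ and $\hat{u}_j(a)$ can be sketched as follows. This is a minimal illustration under stated assumptions: the function name `estimate_occupancy` and the list layout `est_means[n - 1]` for $\hat{\mu}_j(a_j, n)$ are hypothetical, and ties are broken toward the smaller $n$, which the text does not specify.

```python
def estimate_occupancy(sample_mean, est_means):
    """Estimate the number of players on the chosen arm and the utility.

    sample_mean: sample mean of the rewards observed during one play.
    est_means: est_means[n - 1] is the estimated mean reward of the chosen
        arm when n players share it (hypothetical layout for mu_hat_j(a_j, n)).
    Returns (k_hat, u_hat).
    """
    # Only occupancy levels with a non-zero estimated mean are candidates,
    # mirroring the constraint in the arg min.
    candidates = [
        (abs(sample_mean - mu), n)
        for n, mu in enumerate(est_means, start=1)
        if mu != 0
    ]
    if not candidates:
        raise ValueError("no occupancy level with a non-zero estimated mean")
    # Pick the occupancy whose estimated mean is closest to the sample mean.
    _, k_hat = min(candidates)
    return k_hat, est_means[k_hat - 1]
```

For example, with estimated means `[0.6, 0.3]` for one and two players, a sample mean of `0.31` is attributed to two players on the arm, and the estimated utility is `0.3`.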
Note that in Algorithm 4, $\hat{u}_j(a)$ is referred to as just $\hat{u}_j$ for readability. The player updates his current estimated state by comparing the action played and the estimate of the utility received with the baseline action and baseline utility associated with the current estimated state. If the player is content and his baseline action and utility match the action played and the estimate of the utility, the estimated state remains the same. Otherwise, the estimated state for the next play is chosen probabilistically based on the estimate of the utility. The rationale behind the particular probabilities chosen is that when the utility received is high, the player is more likely to be content.

The utility each player receives is equivalent to feedback from the system on how the entire action profile affects the reward received by this player. If the player receives a lower payoff due to that arm not being good or due to collisions, there is a higher probability of the player becoming discontent and exploring other arms. On the other hand, if the payoff received is higher, there is a higher probability of the player staying content and exploiting the same arm again. Thus the agent dynamics and state dynamics balance the exploration-exploitation tradeoff in the multi-player MAB setting.

During the matching phase algorithm, each player keeps a count of the number of times each arm was played that resulted in the player being content:

$$W_\ell(j, m) = \sum_{h=1}^{\tau_\ell} \mathbb{1}\{a_{h,j} = m,\ S_{h,j} = C\}.$$

The action chosen by the player for the exploitation phase is the arm played most frequently from epochs $\lceil \ell/2 \rceil$ to $\ell$ that resulted in the player being content:

$$a_j = \arg\max_{m} \sum_{i = \lceil \ell/2 \rceil}^{\ell} W_i(j, m).$$

B. Analysis of the Matching Phase Algorithm

The matching phase algorithm is based on the work in [24], and the guarantees provided there state that the action profile maximizing the sum of the utilities of the players is played for a majority of the time.
The analysis of the algorithm in [24] relies on the theory of regular perturbed Markov decision processes [27]. The dynamics of the matching phase algorithm induce a Markov chain over the state space $Z = \prod_{j=1}^{K} \left([M] \times [0,1] \times \mathcal{M}\right)$ where $\mathcal{M} = \{C, D\}$, i.e., each state $z \in Z$ is a vector of the states of all players. Let $P^0$ denote the probability transition matrix of the process when $\epsilon = 0$ and $P^\epsilon$ denote the transition matrix when $\epsilon > 0$. The process $P^\epsilon$ is a regular perturbed Markov process if for any $z, z' \in Z$ (Equations (6), (7) and (8) of the Appendix of [27]):
1) $P^\epsilon$ is ergodic;
2) $\lim_{\epsilon \to 0} P^\epsilon_{zz'} = P^0_{zz'}$;
3) $P^\epsilon_{zz'} > 0$ for some $\epsilon$ implies that there exists $r \ge 0$ such that $0 < \lim_{\epsilon \to 0} \epsilon^{-r} P^\epsilon_{zz'} < \infty$.

The value of $r$ satisfying the third condition is called the resistance of the transition $z \to z'$, denoted by $r(z \to z')$.

Let $\mu^\epsilon$ be the unique stationary distribution of $P^\epsilon$, where $P^\epsilon$ is a regular perturbed Markov process. Then $\lim_{\epsilon \to 0} \mu^\epsilon$ exists and the limiting distribution $\mu^0$ is a stationary distribution of $P^0$. The stochastically stable states are the support of $\mu^0$.

The main result of [24, Theorem 3.2] states that the stochastically stable states of the Markov chain induced by their proposed payoff based decentralized learning rule maximize the sum of the utilities of the players. However, [24, Theorem 3.2] cannot be applied directly in our setting, because:
1) The utilities in our algorithm are estimated, and thus the resulting dynamics in our matching phase algorithm are different from those in [24].
2) Our game is not interdependent (the interdependence property implies that it is not possible to divide the agents into two distinct subsets, where the actions of agents in one subset do not affect the utilities of those in the other).

We prove that, despite these two differences, a result similar to [24, Theorem 3.2] holds, i.e., the stochastically stable states of our matching phase algorithm maximize the sum of the utilities of the players.
Following that, we prove that the stochastically stable state that maximizes the sum of utilities is played for the majority of the time in the matching phase with high probability.

Theorem 2. Under the dynamics defined in Algorithm 4, a state $z \in Z$ is a stochastically stable state if and only if the action profile given by the baseline actions of all the players in this state maximizes the sum of their utilities and all the players are content.

Below, we present a few key lemmas required for the proof of Theorem 2. The rest of the proof follows from the proof of [24, Theorem 3.2].

Lemma 4. The dynamics presented in Algorithm 4 form a regular perturbed Markov process.

Proof: The transitions where $P^\epsilon_{zz'} > 0$ and $\lim_{\epsilon \to 0} P^\epsilon_{zz'} = 0$ are called perturbations. The players in our algorithm do not directly observe their utilities. Instead they estimate their utilities, and there is a probability of error of $p$ in this step. However, this can be represented as an $\epsilon$ perturbation by rewriting the state update step as follows.

If $S_{h,j} = C$:

If $[a_{h,j}, u_{h,j}] = [\bar{a}_{h,j}, \bar{u}_{h,j}]$, the new state is

$$[\bar{a}_{h,j}, \bar{u}_{h,j}, C] \to \hat{Z}_{h+1,j} = \begin{cases} [\bar{a}_{h,j}, \bar{u}_{h,j}, C], & \text{w.p. } 1 - p \\ [\bar{a}_{h,j}, \hat{u}_{h,j}, C], & \text{w.p. } p\,\epsilon^{1 - \hat{u}_{h,j}} \\ [\bar{a}_{h,j}, \hat{u}_{h,j}, D], & \text{w.p. } p\,(1 - \epsilon^{1 - \hat{u}_{h,j}}) \end{cases} \tag{26}$$

If $[a_{h,j}, u_{h,j}] \neq [\bar{a}_{h,j}, \bar{u}_{h,j}]$, where $q < 1$,

$$[\bar{a}_{h,j}, \bar{u}_{h,j}, C] \to \hat{Z}_{h+1,j} = \begin{cases} [a_{h,j}, u_{h,j}, C], & \text{w.p. } (1 - p)\,\epsilon^{1 - u_{h,j}} \\ [a_{h,j}, u_{h,j}, D], & \text{w.p. } (1 - p)(1 - \epsilon^{1 - u_{h,j}}) \\ [\bar{a}_{h,j}, \bar{u}_{h,j}, C], & \text{w.p. } q\,p \\ [a_{h,j}, \hat{u}_{h,j}, C], & \text{w.p. } (1 - q)\,p\,\epsilon^{1 - \hat{u}_{h,j}} \\ [a_{h,j}, \hat{u}_{h,j}, D], & \text{w.p. } (1 - q)\,p\,(1 - \epsilon^{1 - \hat{u}_{h,j}}) \end{cases} \tag{27}$$

If $S_{h,j} = D$:

$$[\bar{a}_{h,j}, \bar{u}_{h,j}, D] \to \hat{Z}_{h+1,j} = \begin{cases} [a_{h,j}, u_{h,j}, C], & \text{w.p. } (1 - p)\,\epsilon^{1 - u_{h,j}} \\ [a_{h,j}, u_{h,j}, D], & \text{w.p. } (1 - p)(1 - \epsilon^{1 - u_{h,j}}) \\ [a_{h,j}, \hat{u}_{h,j}, C], & \text{w.p. } p\,\epsilon^{1 - \hat{u}_{h,j}} \\ [a_{h,j}, \hat{u}_{h,j}, D], & \text{w.p. } p\,(1 - \epsilon^{1 - \hat{u}_{h,j}}) \end{cases} \tag{28}$$

Note that $Z_{h+1,j}$ is the state obtained when updated with the true utility or payoff received at each time. Another way of looking at this transformed state dynamics is that

$$\hat{Z}_{h+1,j} = Z_{h+1,j} \quad \text{w.p. } 1 - p. \tag{29}$$

We have from Lemma 3 that $p \le \kappa$. Thus we can see that the unperturbed process of our matching algorithm (i.e., when $\epsilon = 0$) is the same as that in [24]. It can be easily seen from the rewritten state update step that our dynamics satisfy the three conditions (mentioned in the beginning of Section VI-B) for a regular perturbed Markov chain.

The second way in which our dynamics differ from those in [24] is that our strategic form game is not interdependent (see [24, Definition 1]). The interdependence property implies that it is not possible to divide the agents into two distinct subsets, where the actions of agents in one subset do not affect the utilities of those in the other. However, in our case, consider an action profile where $N + 1$ players play an arm $m$. These $N + 1$ players will receive zero utility, no matter what the other players play. Thus, our game is not interdependent. However, the only time the interdependence property is used in [24] is to find the recurrence classes of $P^0$, and we can prove a similar result about the recurrence classes of the Markov chain induced by our matching phase algorithm using the specific structure of our algorithm.

Lemma 5. Let $D^0$ represent the set of states in which everyone is discontent. Let $C^0$ represent the set of states in which all agents are content and their baseline actions and utilities are aligned. Then the recurrence classes of the unperturbed process are $D^0$ and all singletons $z \in C^0$.

The proof is provided in Appendix B.

Let $D$ be any state in $D^0$ and $z, z' \in C^0$. It can be seen that the resistances for the paths $z \to D$, $D \to z$ and $z \to z'$ in our algorithm are the same as those in [24, Section 4].
For instance, the transition from $z \to D$ occurs only when a player explores or the utility is miscalculated. Since we have $p \le \kappa$, the probability of this event is $O(\kappa)$ and hence the resistance of the transition is $c$. Similarly it can be seen that the resistance for the path $D \to z$ is $\left(K - \sum_{j \in [K]} \bar{u}_j\right)$ and that the resistance for $z \to z'$ is bounded in $[c, 2c)$. The rest of the proof of Theorem 2 follows from [24, Theorem 3.2].

Thus, the stochastically stable states of our matching phase algorithm maximize the sum of the utilities of the players. Since we assume a unique optimal action profile, the state with the baseline actions and utilities corresponding to the optimal action profile and all players being content is the stochastically stable state.

In the following lemma, we bound the probability of the optimal action profile not being played during the exploitation phase of epoch $\ell$ (identified from the matching phases of epochs $\lceil \ell/2 \rceil$ to $\ell$), given that event $E^\ell$ does not occur (i.e., the exploration phases of epochs $\lceil \ell/2 \rceil$ to $\ell$ were successful).

Lemma 6. In some epoch $\ell$, let

$$a^* = \arg\max_{a \in A} \sum_{j=1}^{K} u_j(a)$$

and let $a' = [a'_1, \ldots, a'_K]$ be the action profile played in the exploitation phase of epoch $\ell$, where

$$a'_j = \arg\max_{m \in [M]} \sum_{i = \lceil \ell/2 \rceil}^{\ell} W_i(j, m)$$

is the action played by player $j$. Assume that for all players $j \in [K]$, for all arms $m \in [M]$ and all $n \in [N]$, the estimated mean rewards obtained at the end of the exploration phase for epochs $\lceil \ell/2 \rceil \le i \le \ell$ satisfy $|\hat{\mu}_j(m,n) - \mu_j(m,n)| \le \Delta$. Then

$$P\{a^* \neq a'\} \le \left(C_0 \exp\left(-C_\rho\, \ell^{\delta/2}\right)\right)^{\ell}$$

for some $C_0, C_\rho > 0$.

The proof relies on using Chernoff-Hoeffding bounds for Markov chains ([28, Theorem 3]) and is provided in Appendix B.

VII. SIMULATION RESULTS

In this section, we present some illustrative simulation results. We consider two cases, one with $K = M$ and the other with $K > M$. In both cases, we set $N = 2$.
The mean rewards $\mu_j(m, 1)$ for player $j$, arm $m$ are generated uniformly at random from $[0.2, 0.95]$. The mean rewards $\mu_j(m, 2)$ are generated as $\mu_j(m, 2) = 0.5\,\mu_j(m, 1) + u$, where $u$ is a uniform random variable in $[-0.05, 0.05]$. The rewards are generated from a uniform distribution in the range $[-0.18, 0.18]$ around the mean. We set $\delta = 0$ (see Appendix C for details). Due to numerical considerations, we modify the probabilities in (6) to $\epsilon^{u^{\max}_j - \hat{u}_j}$ and $1 - \epsilon^{u^{\max}_j - \hat{u}_j}$ instead of $\epsilon^{1 - \hat{u}_j}$ and $1 - \epsilon^{1 - \hat{u}_j}$, where $u^{\max}_j$ is the maximum utility that can be received by the player. Further explanation of this is given in Appendix C.

To the best of our knowledge, the setting we have considered, which allows for heterogeneous reward distributions and non-zero rewards on collisions, has not been studied prior to this work. Therefore, it is not possible to compare our algorithm against existing algorithms. A naive uniform pull algorithm, where every player uniformly chooses an arm at each time instant, would give linear regret, which would be worse than our proposed algorithm.

For the case with $K = M$, we consider a system with $K = 6$ players and $M = 6$ arms. The optimal action profile is $a^* = [2\ 1\ 1\ 6\ 4\ 5]$. The considered system has $\Delta = 0.05$.

Fig. 1: Average accumulated regret as a function of time

Fig. 2: Average accumulated regret as a function of time

We set $T_0 = 800$, $c_2 = c_3 = 6 \times 10^3$. The value of $\epsilon$ is set to $10^{-5}$. Since it is possible for each player to play a distinct arm and receive non-zero rewards, $u^{\max}_j = \max_{m \in [M]} \hat{\mu}_j(m, 1)$ for all $j \in [K]$. The algorithm was run for 10 epochs, the experiment was repeated for 100 iterations, and the accumulated regret was averaged over the iterations.

For the case with $K > M$, we consider a system with $K = 6$ players and $M = 3$ arms. The optimal action profile is $a^* = [1\ 2\ 3\ 1\ 2\ 3]$. The considered system has $\Delta = 0.0305$.
We set $T_0 = 2 \times 10^3$, $c_2 = c_3 = 10^4$. The value of $\epsilon$ is set to $10^{-5}$. Since 2 players must choose each arm for all the players to receive non-zero rewards, $u^{\max}_j = \max_{m \in [M]} \hat{\mu}_j(m, 2)$. The algorithm was run for 10 epochs, the experiment was repeated for 100 iterations, and the accumulated regret was averaged over the iterations.

From Figure 1 and Figure 2, we see that the average accumulated regret grows sub-linearly with time. We can also observe that the average regret incurred during the exploitation phase of each epoch is small, as the matching phase converges to the optimal action profile with high probability.

VIII. CONCLUSION

The multi-player multi-armed bandit approach has been used extensively in recent literature to model uncoordinated dynamic spectrum access systems. The aim of this work was to pose the more realistic and challenging problem setup of multi-player multi-armed bandits with heterogeneous reward distributions and non-zero rewards on collisions, and to provide a completely decentralized algorithm achieving near order-optimal regret. We considered a setting where the players cannot communicate with each other and can observe only their own actions and rewards. While settings with non-zero rewards on collisions and heterogeneous reward distributions of arms across players have been considered separately in prior work, a model allowing for both has not been studied previously to the best of our knowledge. With this setup, we presented a policy that achieves near order-optimal expected regret of order $O(\log^{1+\delta} T)$ for some $0 < \delta < 1$ over a time-horizon of duration $T$.

Our results have applications to non-orthogonal multiple access (NOMA) systems (see, e.g., [29] for some recent results in this area). A common assumption in most works studying the multi-player multi-armed bandit setup, including ours, is time synchronization.
A possible direction of future study in this area is to examine this problem without the assumption that players are time synchronized.

APPENDIX A
EXPLORATION PHASE: PROOF OF LEMMA 1

We want to bound the probability of the event $E^{\ell}_{j,m,n}$ that there exists at least one epoch $i$ with $\lceil \ell/2 \rceil \le i \le \ell$ such that the estimated mean reward $\hat{\mu}_j(m,n)$ after the exploration phase of epoch $i$ satisfies $|\mu_j(m,n) - \hat{\mu}_j(m,n)| \ge \Delta$.

For a fixed player $j$, arm $m$ and number of players on the arm $n \le N$, the total number of reward samples obtained after the exploration phase of epoch $i$ from the corresponding reward distribution with mean reward $\mu_j(m,n)$ is $T_0 \sum_{j=1}^{i} j^{\delta} \ge T_0 \left(\frac{i}{2}\right)^{1+\delta}$. After the exploration phase of epoch $i$, the probability that the estimated mean reward $\hat{\mu}_j(m,n)$ deviates from $\mu_j(m,n)$ by more than $\Delta$ satisfies:

$$P\{\text{After epoch } i : |\hat{\mu}_j(m,n) - \mu_j(m,n)| \ge \Delta\} \le e^{-2 T_0 \Delta^2 \sum_{j=1}^{i} j^{\delta}} \le e^{-2 T_0 \Delta^2 \left(\frac{i}{2}\right)^{1+\delta}}.$$

It follows that

$$\begin{aligned} P\left(E^{\ell}_{j,m,n}\right) &= P\left(\bigcup_{i = \lceil \ell/2 \rceil}^{\ell} \{\text{After epoch } i : |\hat{\mu}_j(m,n) - \mu_j(m,n)| \ge \Delta\}\right) \\ &\le \sum_{i = \lceil \ell/2 \rceil}^{\ell} P\{\text{After epoch } i : |\hat{\mu}_j(m,n) - \mu_j(m,n)| \ge \Delta\} \\ &\le \sum_{i = \lceil \ell/2 \rceil}^{\ell} e^{-2 T_0 \Delta^2 \left(\frac{i}{2}\right)^{1+\delta}} \\ &\le \sum_{i = \lceil \ell/2 \rceil}^{\ell} e^{-T_0 \Delta^2 \left(\frac{\ell}{4}\right)^{\delta} i} \\ &\le \frac{e^{-\frac{T_0 \Delta^2}{2} \left(\frac{\ell}{4}\right)^{\delta} \ell}}{1 - e^{-T_0 \Delta^2 \left(\frac{\ell}{4}\right)^{\delta}}}. \end{aligned} \tag{30}$$

APPENDIX B
MATCHING PHASE

By definition of the stochastically stable state, this state is eventually played for a majority of the time. The matching phase algorithm presented in our paper differs from the dynamics presented in [24] in two aspects. Despite these two differences, we show that the proof technique of [24, Theorem 3.2] can be adapted to our dynamics as well.

A. Proof of Lemma 5

In the unperturbed process, if all the players are discontent, they remain discontent with probability 1. Thus we have that $D^0$ represents a single recurrence class.
In each state $z \in C^0$, each player chooses their baseline action, and since the utilities received would be the same as the baseline utilities, each player stays content with probability 1. Thus we have that $D^0$ and the singletons $z \in C^0$ are recurrence classes.

To see that the above are the only recurrence classes, consider any state that has at least one discontent player and at least one content player. We have that the baseline actions and utilities of the content players are aligned (since this is the unperturbed process). Consider one of the discontent players. This player chooses an action at random and there is a positive probability (bounded away from 0) of choosing the action of a content player. This would cause the utility of the content player to become misaligned with his baseline utility, thus leading to that player becoming discontent. This continues until all players become discontent. Thus any such state cannot be a recurrent state.

Now consider a state where all agents are content, but there is at least one player $j$ whose baseline action and utility are not aligned. For the unperturbed process, in the following step, the same action profile would be played, but this would cause player $j$ to become discontent, and it follows from the previous argument that this leads to all players becoming discontent. Thus we have that $D^0$ and all singletons in $C^0$ are the only recurrence classes of the unperturbed process.

B. Proof of Lemma 6

Since it is given that the exploration phases for all epochs $\lceil \ell/2 \rceil$ to $\ell$ are successful, the efficient action profiles maximizing the sum of utilities in the matching phase for all epochs $\lceil \ell/2 \rceil \le i \le \ell$ are the same, and are equal to the optimal action profile maximizing the sum of expected mean rewards:

$$\arg\max_{a \in A} \sum_{j=1}^{K} \mu_j(a_j, k(a_j)) = \arg\max_{a \in A} \sum_{j=1}^{K} u_j(a).$$

Let $\bar{u}_\ell$ denote the utilities of the players for the optimal action profile $a^*$ during epoch $\ell$.
The optimal state of the Markov chain is then $z^*_\ell = [a^*, \bar{u}_\ell, C^K]$ during epoch $\ell$. Note that the optimal state differs only in the baseline utilities across epochs $\lceil \ell/2 \rceil$ to $\ell$. In order to bound the probability of the event $\{a^* \neq a'\}$, we use the Chernoff-Hoeffding bounds for Markov chains from [28], which are also used in [22] and [23]. When the Markov chain is in state $z$, the estimated/observed state is $\hat{z}$, corresponding to the estimated states of all the players. The function $f(z)$ considered here in order to use the bound from [28, Theorem 3] for epoch $\ell$ is:

$$f(z) = \mathbb{1}\{\hat{z} = z^*_\ell\}, \tag{31}$$

i.e., the indicator that the estimated state is the optimal state. Recall that $\tau_i = \sqrt{c_2}\, i^{\delta/2}$ (replacing $\ell$ by $i$ in $\tau_\ell$). It follows that

$$P\{a' \neq a^*\} \le P\left(\sum_{i = \lceil \ell/2 \rceil}^{\ell} \sum_{h=1}^{\tau_i} f(z_h) \le \frac{1}{2} \sum_{i = \lceil \ell/2 \rceil}^{\ell} \tau_i\right). \tag{32}$$

Define

$$X_i = \sum_{h=1}^{\tau_i} \mathbb{1}\{\hat{z}_h = z^*_i\}, \tag{33}$$

$$L = \frac{1}{2} \sum_{i = \lceil \ell/2 \rceil}^{\ell} \tau_i. \tag{34}$$

Using the Chernoff bound, for some $s > 0$, it follows that

$$P\left(\sum_{i = \lceil \ell/2 \rceil}^{\ell} X_i \le \frac{1}{2} \sum_{i = \lceil \ell/2 \rceil}^{\ell} \tau_i\right) = P\left(e^{-s \sum_{i = \lceil \ell/2 \rceil}^{\ell} X_i} \ge e^{-sL}\right) \tag{35}$$

$$\le e^{sL} \prod_{i = \lceil \ell/2 \rceil}^{\ell} E\left[e^{-s X_i}\right]. \tag{36}$$

In order to use the bound from [28, Theorem 3], we need to calculate $\mu_\ell = E[f(z)] = P(\hat{z} = z^*_\ell)$. Observe that

$$E[f(z)] = P\{\hat{z} = z^*_\ell\} \tag{37}$$

$$\ge P\{z = z^*_\ell,\ \hat{z} = z\} \tag{38}$$

$$\ge P\{z = z^*_\ell\}\,(1 - K\kappa), \tag{39}$$

where the last step follows from Lemma 3. Define

$$\pi_z = \min_{\lceil \ell/2 \rceil \le i \le \ell} P\{z = z^*_i\}, \tag{40}$$

$$\mu = \min_{\lceil \ell/2 \rceil \le i \le \ell} \mu_i. \tag{41}$$

From the definition of a stochastically stable state, we can choose an $\epsilon$ small enough such that

$$\mu \ge \pi_z (1 - K\kappa) > \frac{1}{2(1 - \eta)} \tag{42}$$

for some $0 < \eta < 1/2$.
We can now use the bound from [28, Theorem 3] for epoch $\lceil \ell/2 \rceil \le i \le \ell$ to get

$$P\left\{X_i \le \frac{\tau_i}{2}\right\} \le P\left(\sum_{h=1}^{\tau_i} \mathbb{1}\{\hat{z}_h = z^*_i\} \le (1 - \eta)\,\mu_i \tau_i\right) \tag{43}$$

$$\le c_0 \|\phi_i\|_\pi \exp\left(-\frac{\eta^2 \mu_i \tau_i}{72 T}\right) \tag{44}$$

$$\le c_0 \|\phi_i\|_\pi \exp\left(-\frac{\eta^2 \mu \tau_i}{72 T}\right), \tag{45}$$

where $c_0 > 0$, $\phi_i$ is the initial distribution of the Markov chain in the $i$-th epoch and $T$ is the 1/8-th mixing time of the Markov chain. Using $s = \frac{\eta^2}{(1 - \eta)\,72 T}$, it follows that

$$E\left[e^{-s X_i}\right] \le (1 + c_0 \|\phi_i\|_\pi) \exp\left(-\frac{\eta^2 \mu \tau_i}{72 T}\right). \tag{46}$$

Using the above in (36),

$$P\left(\sum_{i = \lceil \ell/2 \rceil}^{\ell} X_i \le \frac{1}{2} \sum_{i = \lceil \ell/2 \rceil}^{\ell} \tau_i\right) \tag{47}$$

$$\le e^{\frac{\eta^2 L}{(1 - \eta)\,72 T}} \prod_{i = \lceil \ell/2 \rceil}^{\ell} (1 + c_0 \|\phi_i\|_\pi)\, e^{-\frac{\eta^2 \mu \tau_i}{72 T}} \tag{48}$$

$$\le C_0^{\ell} \exp\left(-\frac{\eta^2 \left(\mu - \frac{1}{2(1 - \eta)}\right) 2L}{72 T}\right) \tag{49}$$

$$\le C_0^{\ell} \exp\left(-\frac{\eta^2 \sqrt{c_2}\, 2^{-(1 + \delta/2)} \left(\mu - \frac{1}{2(1 - \eta)}\right) \ell^{1 + \delta/2}}{72 T}\right) \tag{50}$$

$$\le \left(C_0 \exp\left(-C_\rho\, \ell^{\delta/2}\right)\right)^{\ell}, \tag{51}$$

where $C_0 = \max_{\lceil \ell/2 \rceil \le i \le \ell} (1 + c_0 \|\phi_i\|_\pi)$, and

$$C_\rho = \frac{\eta^2 \sqrt{c_2}\, 2^{-(1 + \delta/2)} \left(\mu - \frac{1}{2(1 - \eta)}\right)}{72 T} > 0. \tag{52}$$

APPENDIX C
DETAILS ON SIMULATIONS

Due to numerical considerations, we modify the state update step in the matching phase of the algorithm, which is defined in (6). We modify the probabilities in (6) to $\epsilon^{u^{\max}_j - \hat{u}_j}$ and $1 - \epsilon^{u^{\max}_j - \hat{u}_j}$, instead of $\epsilon^{1 - \hat{u}_j}$ and $1 - \epsilon^{1 - \hat{u}_j}$ respectively. Here $u^{\max}_j$ denotes the maximum utility that can be received by player $j$ without reducing the utility of any other player to zero. For example, when there are $K = 6$ players and $M = 6$ arms, each player can occupy a separate arm and all the players could receive non-zero utilities. Thus the value of $u^{\max}_j$ of player $j$ would be $u^{\max}_j = \max_{m \in [M]} \hat{\mu}_j(m, 1)$. Consider the case when there are $K = 6$ players, $M = 3$ arms and $N = 2$. In this case, it is not possible for any player $j$ to occupy an arm by himself without reducing the utilities of some other players to zero. In this case, $u^{\max}_j = \max_{m \in [M]} \hat{\mu}_j(m, 2)$.

The algorithm presented here is motivated for small systems.
As the system size increases, $\Delta$ becomes smaller and hence it becomes difficult to implement the proposed algorithm. Larger systems could be accommodated by dividing them into subsystems and applying the protocol separately on each subsystem.

Note that $\delta > 0$ was required in the proof of Theorem 1 to bound the regret incurred during the exploration and matching phases. However, in practice we observe that setting $T_0$ and $c_2$ large enough guarantees that equations (7) and (8) are satisfied even with $\delta = 0$.

The order of magnitude of $\epsilon$ is important for the performance of the algorithm. However, the exact value of $\epsilon$ does not play much of a role. For example, in our simulations, we use $\epsilon = 10^{-5}$, and similar results are achieved for $\epsilon = 10^{-4}$ as well. The value of $\epsilon$ needs to be chosen small enough such that the state corresponding to the optimal action is visited a certain number of times during the matching phase. Empirically, we observe that as the value of $\Delta$ decreases, we need a smaller value of $\epsilon$ for convergence. We find that the value of $\epsilon$ depends on the optimality gap $J_1 - J_2$ and the size of the system. While [22] provides an upper bound on the value of $\epsilon$, this bound depends on the analysis of the resistance tree structure of the perturbed Markov chain and needs to be computed beforehand for each setting. Note that such an analysis involving the resistance tree structures of our algorithm would be tedious and the resulting bound would be expensive to calculate. Since we only need $\epsilon$ to be of the right order of magnitude, we could tune this parameter using simulations.

ACKNOWLEDGMENT

The authors of this paper would like to thank Aditya Deshmukh for valuable discussions.

REFERENCES

[1] W. R. Thompson, “On the likelihood that one unknown probability exceeds another in view of the evidence of two samples,” Biometrika, vol. 25, no. 3/4, pp. 285–294, 1933.
[2] T. L. Lai and H.
Robbins, “Asymptotically efficient adaptive allocation rules,” Advances in Applied Mathematics, vol. 6, no. 1, pp. 4–22, 1985.
[3] P. Auer, N. Cesa-Bianchi, and P. Fischer, “Finite-time analysis of the multiarmed bandit problem,” Machine Learning, vol. 47, no. 2-3, pp. 235–256, 2002.
[4] A. Garivier and O. Cappé, “The KL-UCB algorithm for bounded stochastic bandits and beyond,” in Proceedings of the 24th Annual Conference on Learning Theory, vol. 19, Budapest, Hungary, 2011, pp. 359–376.
[5] S. Bubeck, N. Cesa-Bianchi et al., “Regret analysis of stochastic and nonstochastic multi-armed bandit problems,” Foundations and Trends in Machine Learning, vol. 5, no. 1, pp. 1–122, 2012.
[6] T. Lattimore and C. Szepesvári, Bandit Algorithms. Cambridge University Press, 2020.
[7] N. Cesa-Bianchi, C. Gentile, Y. Mansour, and A. Minora, “Delay and cooperation in nonstochastic bandits,” in Conference on Learning Theory, vol. 49, Columbia University, New York, New York, USA, 2016, pp. 605–622.
[8] B. Szörényi, R. Busa-Fekete, I. Hegedűs, R. Ormándi, M. Jelasity, and B. Kégl, “Gossip-based distributed stochastic bandit algorithms,” in Journal of Machine Learning Research Workshop and Conference Proceedings, vol. 2. Atlanta, Georgia, USA: International Machine Learning Society, 2013, pp. 1056–1064.
[9] N. Korda, B. Szörényi, and L. Shuai, “Distributed clustering of linear bandits in peer to peer networks,” in Journal of Machine Learning Research Workshop and Conference Proceedings, vol. 48. New York, New York, USA: International Machine Learning Society, 2016, pp. 1301–1309.
[10] E. Biglieri, A. J. Goldsmith, L. J. Greenstein, H. V. Poor, and N. B. Mandayam, Principles of Cognitive Radio. Cambridge University Press, 2013.
[11] N. Evirgen and A.
Kose, “The effect of communication on noncooperative multiplayer multi-armed bandit problems,” in 2017 16th IEEE International Conference on Machine Learning and Applications (ICMLA), Cancun, 2017, pp. 331–336.
[12] O. Avner and S. Mannor, “Multi-user lax communications: a multi-armed bandit approach,” in IEEE INFOCOM 2016-The 35th Annual IEEE International Conference on Computer Communications, San Francisco, CA, 2016, pp. 1–9.
[13] D. Kalathil, N. Nayyar, and R. Jain, “Decentralized learning for multiplayer multiarmed bandits,” IEEE Transactions on Information Theory, vol. 60, no. 4, pp. 2331–2345, 2014.
[14] O. Avner and S. Mannor, “Concurrent bandits and cognitive radio networks,” in Machine Learning and Knowledge Discovery in Databases. Springer Berlin Heidelberg, 2014, pp. 66–81.
[15] J. Rosenski, O. Shamir, and L. Szlak, “Multi-player bandits–a Musical Chairs approach,” in International Conference on Machine Learning, vol. 48, New York, NY, USA, 2016, pp. 155–163.
[16] A. Anandkumar, N. Michael, A. K. Tang, and A. Swami, “Distributed algorithms for learning and cognitive medium access with logarithmic regret,” IEEE Journal on Selected Areas in Communications, vol. 29, no. 4, pp. 731–745, 2011.
[17] E. Boursier and V. Perchet, “SIC-MMAB: Synchronisation involves communication in multiplayer multi-armed bandits,” in Advances in Neural Information Processing Systems, vol. 32. Curran Associates, Inc., 2019, pp. 12071–12080.
[18] L. Besson and E. Kaufmann, “Multi-player bandits revisited,” arXiv preprint arXiv:1711.02317, 2017.
[19] H. Tibrewal, S. Patchala, M. K. Hanawal, and S. J. Darak, “Distributed learning and optimal assignment in multiplayer heterogeneous networks,” in IEEE INFOCOM 2019-IEEE Conference on Computer Communications, Paris, France, 2019, pp. 1693–1701.
[20] A. Mehrabian, E. Boursier, E. Kaufmann, and V.
Perchet, “A practical algorithm for multiplayer bandits when arm means vary among players,” in Proceedings of the Twenty Third International Conference on Artificial Intelligence and Statistics, vol. 108, 2020, pp. 1211–1221.
[21] A. Magesh and V. V. Veeravalli, “Multi-user MABs with user dependent rewards for uncoordinated spectrum access,” in IEEE Asilomar Conference on Signals, Systems, and Computers, Pacific Grove, CA, USA, 2019, pp. 969–972.
[22] I. Bistritz and A. Leshem, “Distributed multi-player bandits - A Game of Thrones approach,” in Advances in Neural Information Processing Systems, vol. 31. Curran Associates, Inc., 2018, pp. 7222–7232.
[23] I. Bistritz and A. Leshem, “Game of thrones: Fully distributed learning for multiplayer bandits,” Mathematics of Operations Research, Oct 2020. [Online]. Available: http://dx.doi.org/10.1287/moor.2020.1051
[24] J. R. Marden, H. P. Young, and L. Y. Pao, “Achieving pareto optimality through distributed learning,” SIAM Journal on Control and Optimization, vol. 52, no. 5, pp. 2753–2770, 2014.
[25] M. Bande, A. Magesh, and V. V. Veeravalli, “Dynamic spectrum access using stochastic multi-user bandits,” IEEE Wireless Communications Letters, vol. 10, no. 5, pp. 953–956, 2021.
[26] C. Boutilier, “Planning, learning and coordination in multiagent decision processes,” in Proceedings of the 6th Conference on Theoretical Aspects of Rationality and Knowledge, 1996, pp. 195–210.
[27] H. P. Young, “The evolution of conventions,” Econometrica: Journal of the Econometric Society, vol. 61, pp. 57–84, 1993.
[28] K. M. Chung, H. Lam, Z. Liu, and M. Mitzenmacher, “Chernoff-Hoeffding bounds for Markov chains: Generalized and simplified,” in 29th International Symposium on Theoretical Aspects of Computer Science (STACS 2012), vol. 14, Dagstuhl, Germany, 2012, pp. 124–135.
[29] M. J. Youssef, V. V. Veeravalli, J. Farah, and C. A.
Nour, “Stochastic multi-player multi-armed bandits with multiple plays for uncoordinated spectrum access, ” in 2020 IEEE 31st Annual International Symposium 13 on P ersonal, Indoor and Mobile Radio Communications , London, United Kingdom, 2020, pp. 1–7. Akshayaa Magesh (S ’20) received her B. T ech. degree in Electrical Engineering from the Indian Institute of T echnology Madras, Chennai, India in 2018, and her Masters degree from the Department of Electrical and Computer Engineering at the Univ ersity of Illinois at Urbana-Champaign in 2020. She is now a Ph.D. candidate at the Department of Electrical and Computer Engineering at the University of Illinois at Urbana-Champaign. Her research interests span the areas of statistical inference, theoretical and algorithmic aspects of robust machine learning and reinforcement learning. V enugopal V . V eerav alli (M’92, SM’98, F’06) receiv ed the B.T ech. de gree (Silver Medal Honors) from the Indian Institute of T echnology , Bombay , in 1985, the M.S. degree from Carnegie Mellon Uni versity , Pittsburgh, P A, in 1987, and the Ph.D. degree from the Univ ersity of Illinois at Urbana- Champaign, in 1992, all in electrical engineering. He joined the Uni versity of Illinois at Urbana-Champaign in 2000, where he is currently the Henry Magnuski Professor in the Department of Electrical and Computer Engineering, and where he is also affiliated with the Department of Statistics, and the Coordinated Science Laboratory . Prior to joining the Uni versity of Illinois, he was on faculty of the ECE Department at Cornell University . He served as a Program Director for communications research at the U.S. National Science Foundation from 2003 to 2005. His research interests span the theoretical areas of statistical inference, machine learning, and information theory , with applications to data science, wireless communications, and sensor networks. He was a Distinguished Lecturer for the IEEE Signal Processing Society during 2010–2011. 
He has been on the Board of Governors of the IEEE Information Theory Society. He has been an Associate Editor for Detection and Estimation for the IEEE Transactions on Information Theory and for the IEEE Transactions on Wireless Communications. Among the awards he has received for research and teaching are the IEEE Browder J. Thompson Best Paper Award, the Presidential Early Career Award for Scientists and Engineers (PECASE), and the Wald Prize in Sequential Analysis.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

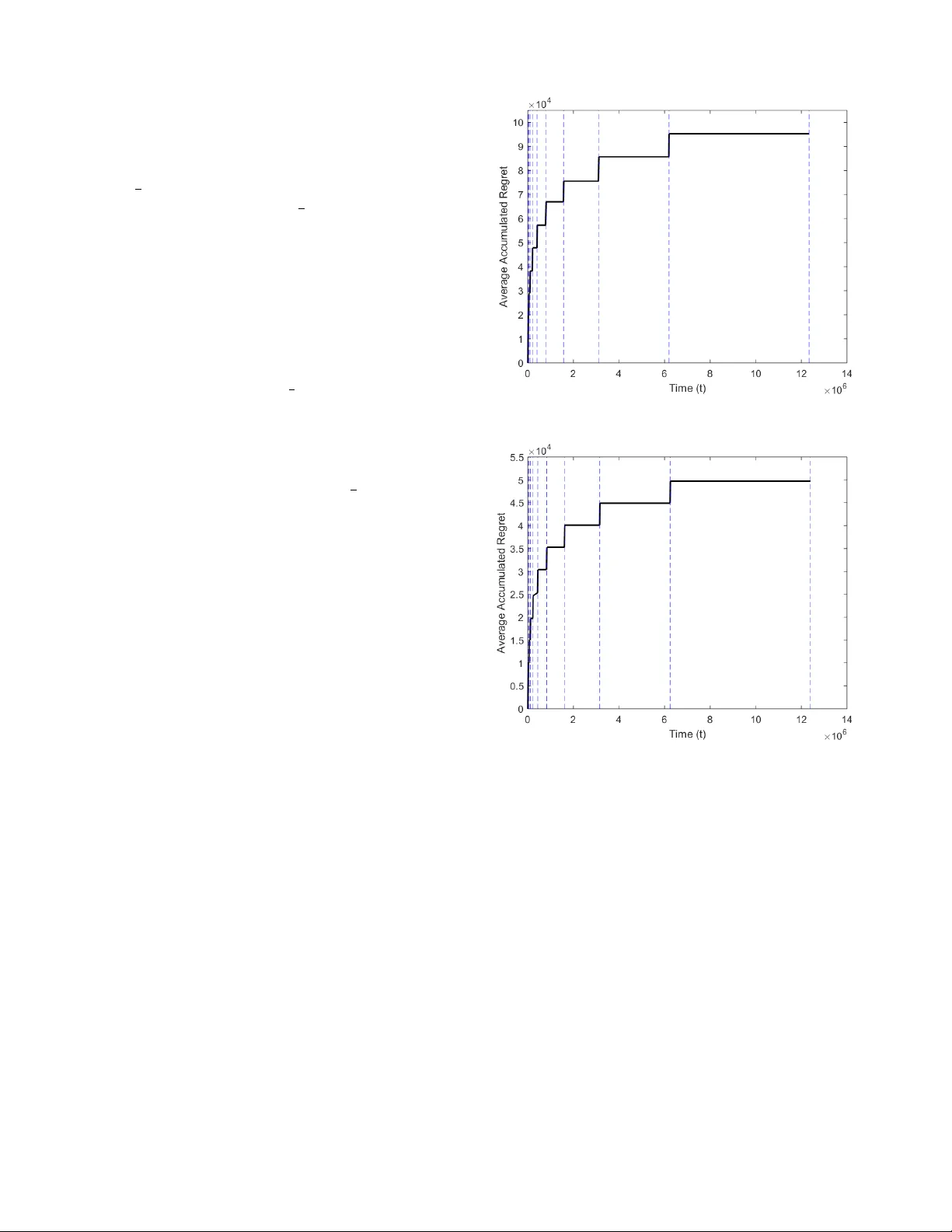

Leave a Comment