MW-GAN: Multi-Warping GAN for Caricature Generation with Multi-Style Geometric Exaggeration

Given an input face photo, the goal of caricature generation is to produce stylized, exaggerated caricatures that share the same identity as the photo. It requires simultaneous style transfer and shape exaggeration with rich diversity, and meanwhile …

Authors: Haodi Hou, Jing Huo, Jing Wu

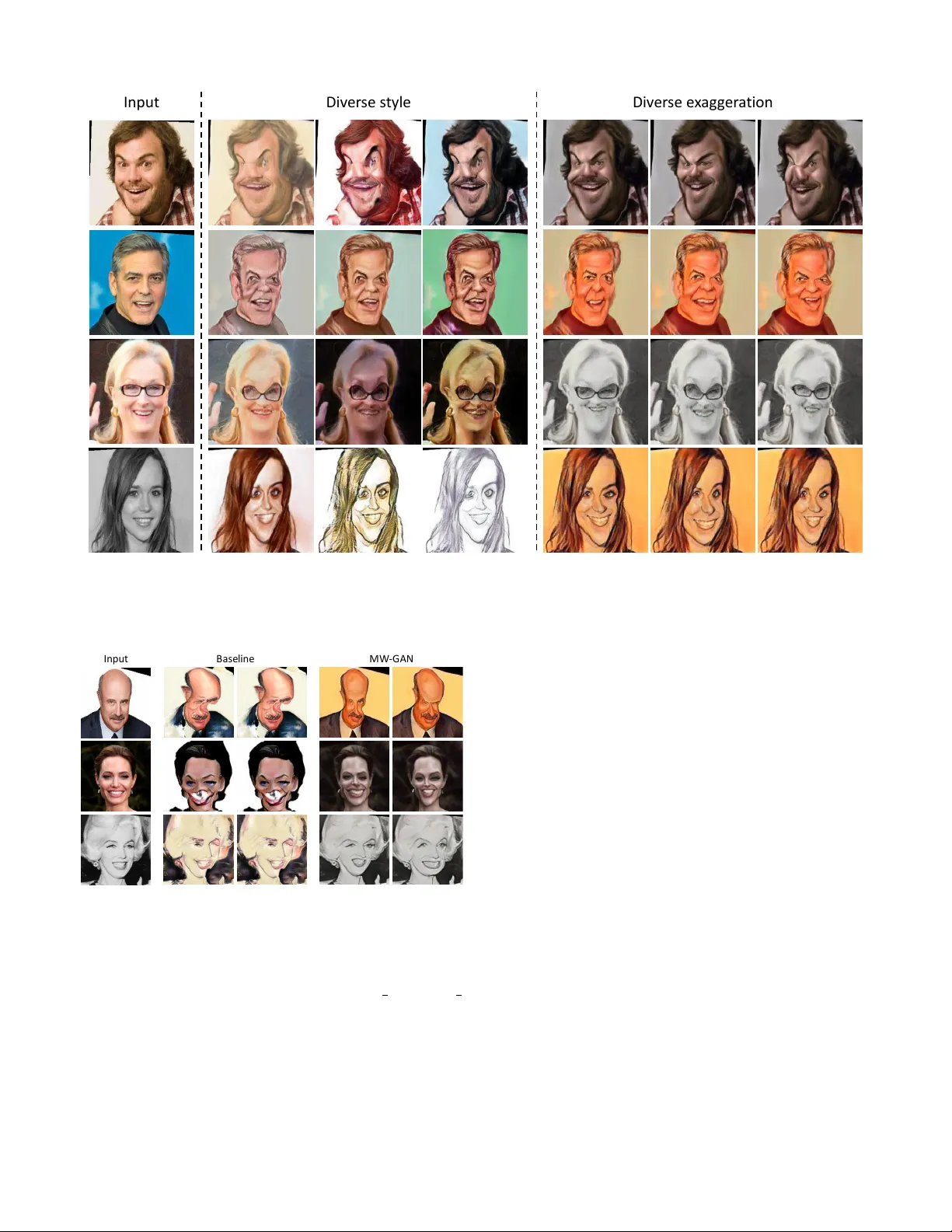

IEEE TRANSACTIONS ON IMAGE PROCESSING

Haodi Hou, Jing Huo, Jing Wu, Yu-Kun Lai, and Yang Gao, Member, IEEE

Abstract: Given an input face photo, the goal of caricature generation is to produce stylized, exaggerated caricatures that share the same identity as the photo. It requires simultaneous style transfer and shape exaggeration with rich diversity, and meanwhile preserving the identity of the input. To address this challenging problem, we propose a novel framework called Multi-Warping GAN (MW-GAN), including a style network and a geometric network that are designed to conduct style transfer and geometric exaggeration respectively. We bridge the gap between the style/landmark space and their corresponding latent code spaces by a dual way design, so as to generate caricatures with arbitrary styles and geometric exaggeration, which can be specified either through random sampling of latent code or from a given caricature sample. Besides, we apply identity preserving loss to both image space and landmark space, leading to a great improvement in quality of generated caricatures. Experiments show that caricatures generated by MW-GAN have better quality than existing methods.

Index Terms: Caricature Generation, Generative Adversarial Nets, Multiple Styles, Warping

I. INTRODUCTION

Caricatures are artistic drawings of faces with exaggeration of facial features to emphasize the impressions of or intentions towards the subject. As an art form, caricatures have various depiction styles, such as sketching, pencil strokes and oil painting, and various exaggeration styles to express different impressions and emphasize different aspects of the subject. Artists have their own subjectivity and different skills which also contribute to the diversity of caricatures.
As shown in Figure 1, caricatures drawn by artists can have various texture styles and different shape exaggerations even for the same subject. These varieties in caricature generation make caricatures a fascinating art form with long-lasting popularity.

However, such diversity has not been achieved in computerized generation of caricatures. Early works generate caricatures through amplifying the difference from the mean face [1], [2], [3] or automatically learning rules from paired photos and caricatures. However, these methods can only generate caricatures with a specific style. The recent style transfer methods [4], [5], [6], [7] and image translation methods [8], [9], [10], [11], [12], [13] based on Convolutional Neural Networks (CNNs) and Generative Adversarial Nets (GANs) [14] have achieved appealing results on image style translation in texture and color. However, these methods are not designed to deal with geometric shape exaggeration in caricatures.

This work is supported by Science and Technology Innovation 2030 New Generation Artificial Intelligence Major Project (2018AAA0100905), the National Natural Science Foundation of China (61806092), Natural Science Foundation of Jiangsu Province (BK20180326), the Fundamental Research Funds for the Central Universities (02021438008) and the Collaborative Innovation Center of Novel Software Technology and Industrialization. (Corresponding author: Jing Huo)

H. Hou, J. Huo and Y. Gao are with the State Key Laboratory for Novel Software Technology, Nanjing University, No. 163 Xianlin Avenue, Nanjing, 210023, Jiangsu, China. E-mail: hhd@smail.nju.edu.cn, huojing@nju.edu.cn, gaoy@nju.edu.cn.

J. Wu and Y.-K. Lai are with the School of Computer Science & Informatics, Cardiff University, UK. E-mail: {WuJ11,LaiY4}@cardiff.ac.uk.
The recent GAN-based caricature generation methods [15], [16], [17] can generate caricatures with reasonable exaggerations, but still lack variety in geometric exaggeration, leaving a gap between computer generated and real caricatures. CariGAN by Li et al. [15] translates both texture and shape in a single network, and treats the translation as a deterministic mapping function, which restricts the diversity of the generated caricatures. WarpGAN by Shi et al. [16] and CariGANs by Cao et al. [17] separately render the images' texture and exaggerate face shapes. Though they can generate caricatures with appealing texture styles and meaningful exaggerations, their exaggeration is fixed according to the input photo.

To tackle this issue, in this paper, we propose Multi-Warping GAN for generating caricatures from face photos with a focus on generating various geometric exaggerations. It is a GAN-based framework to generate caricatures with multiple exaggerations by applying Multiple Warping styles to face images, and is thus called Multi-Warping GAN (MW-GAN). To allow for the diversity of both texture and geometric exaggeration styles, MW-GAN is designed to have a style network and a geometric network. The style network is trained to render images with different texture and coloring styles, while the geometric network learns the exaggeration in the landmark space and warps images accordingly. In both networks, we propose to use latent codes to control the texture and exaggeration styles respectively. The diversity is achieved by random sampling of the latent codes or extracting them from sample caricatures. To correlate the latent codes with meaningful texture styles and shape exaggerations, we propose a dual way architecture, which simultaneously translates photos into caricatures and caricatures into photos, with the aim to provide more supervision on the latent code.
With the dual way design, cycle consistency loss on latent code can be introduced. This allows us to not only get more meaningful latent codes, but also obtain better generation results compared with using the single way design. Besides, compared with [16] and [17], our method supports multiple exaggeration styles for the same input photo.

Fig. 1. Caricature diversity. The first column shows input photos. The following three columns are caricatures drawn by artists. Caricatures in the last three columns are generated by our MW-GAN with photos in the first column as input. It shows that artists can draw caricatures with various texture styles and exaggerations, and our MW-GAN is designed to model these diversities. (Column groups: Photo, Hand-drawn, MW-GAN.)

In addition to diversity, another challenge is the identity preservation in generated caricatures. Previously, Shi et al. [16] proposed an identity preservation loss defined in the image space. Observing that caricaturization involves both style translation and shape deformation, we deploy identity recognition loss in both image space and landmark space when training the networks, so as to preserve the identity of the subject in the input photo. The loss in both spaces leads to remarkable quality improvement of the generated caricatures.

We conducted ablation studies to verify the effectiveness of the dual way architecture in comparison with the single way design, and of the landmark constraints introduced in the identity recognition loss. We compared our method with the state-of-the-art caricature generation methods in terms of the quality of the generated caricatures. We also demonstrated the diversity of both the texture and exaggeration styles in the caricatures generated by our method. Results showed both the effectiveness of our method and its superiority over state-of-the-art methods.
In summary, the contributions of our work are as follows:

1) Our method is the first to focus on the diversity of geometric exaggeration in caricature generation, and we propose a GAN-based framework that can generate caricatures with arbitrary texture and exaggeration styles.

2) Our framework proposes a dual way design to learn more meaningful relations between the image style/shape exaggeration spaces and their corresponding latent code spaces, and enables the specification of the styles and exaggerations of generated caricatures from caricature samples.

3) To preserve the identity of the subject in the photo, we also deploy identity recognition loss in both image space and landmark space when training the network, which leads to remarkable improvement in the quality of generated caricatures.

We compare our results with those from the state-of-the-art methods, and demonstrate the superiority of our method in terms of both quality and diversity of the generated caricatures.

II. RELATED WORK

A. Style Transfer

Since CNNs have achieved great success in understanding the semantics in images, applying CNNs to style transfer has been widely studied. The ground-breaking work of Gatys et al. [4] presented a general neural style transfer method that can transfer the texture style from a style image to a content image. Following this work, many improved methods have been proposed, either to speed up the transfer process by learning a specific style with a feed-forward network [5], [6], or to transfer an arbitrary style in real time through adaptive instance normalization [7]. Despite the achievements in transferring images with realistic artistic styles, these methods can only change the texture rendering of images, but are not designed to make the geometric exaggeration required in caricature generation.
In our MW-GAN, a style network together with a geometric network are used to simultaneously render the image's texture style and exaggerate its geometric shape, with the aim to generate caricatures with both realistic texture styles and meaningful shape exaggerations.

B. Generative Models for Image Translation

The success of Generative Adversarial Nets (GANs) [14] has inspired a series of works on cross-domain image translation [18], [10], [19]. The pix2pix network [18] is trained with a conditional GAN, and needs supervision from paired images which are hard to get. Triangle GAN [20] achieved semi-supervised image translation by combining a conditional GAN and a Bidirectional GAN [21] with a triangle framework. There have been efforts to achieve image translation in a totally unsupervised manner through shared weights and latent space [9], [8], cycle consistency [10], and making use of semantic features [12]. The above methods treat image translation as a one-to-one mapping. Recently more methods have been proposed to deal with image translation with multiple styles. Augmented CycleGAN [22] extends CycleGAN to multiple translations by adding a style code to model various styles. MUNIT [11] and CDAAE [13] disentangle an image into a content code and a style code, so that a single input image can be translated to various output images by sampling different style codes. These methods can successfully translate images between different domains, and can render with various texture styles in one translation. However, these translations mostly keep the image's geometric shapes unchanged, which is not suitable for caricature generation. By contrast, we separately model the two aspects, texture rendering and geometric exaggeration, and achieve both translations in a multiple style manner.
That is, our model can generate caricatures with various texture styles and diverse geometric exaggerations for a given input.

C. Caricature Generation

Caricature generation has been studied for a long time. Traditional methods translate photos to caricatures using computer graphics techniques. The first interactive caricature generator was presented by Brennan et al. [23]. The caricature generator allows users to manipulate photos interactively to create caricatures. Following their work, rule-based methods were proposed [1], [3], [2] to automatically amplify the difference from the mean face. Example-based methods [24], [25] can automatically learn rules from photo-caricature pairs. Although these methods can generate caricatures automatically or semi-automatically, they suffer from some limitations, such as the need for interactive human manipulation and paired data collection. Moreover, caricatures generated by these early methods are often unrealistic and lack diversity.

Since GANs have made great progress in image generation, many GAN-based methods for caricature generation were presented recently. Some of these methods translate photos to caricatures with a straightforward network [12], [15], while others translate the texture style and geometric shapes separately [16], [17]. For the straightforward methods, Domain Transfer Network (DTN) [12] uses a pretrained neural network to extract semantic features from input so that semantic content can be preserved during translation. CariGAN by Li et al. [15] adopts facial landmarks as an additional condition to enforce reasonable exaggeration and facial deformation. As these methods translate both texture and shape in a single network, it is hard for them to achieve meaningful deformation or to balance identity preservation and shape exaggeration. By contrast, WarpGAN [16] and CariGANs by Cao et al. [17] separately render the image's texture and exaggerate its shape.
Although they can generate caricatures with realistic texture styles and meaningful exaggerations, WarpGAN and CariGANs [17] still lack variety in exaggeration. Specifically, when the input is specified, they can only generate caricatures with a fixed exaggeration. However, in the real world, it is common that different artists draw caricatures with different exaggeration styles for the same photo. In this paper, we design a framework that is able to model the variety of both texture styles and geometric exaggerations and propose the first model that can generate caricatures with diverse styles in both texture and exaggeration for one input photo.

III. MULTI-WARPING GAN

In this section, we describe the network architecture of the proposed Multi-Warping GAN and the loss functions used for training.

A. Notations

Let $x_p \in X_p$ denote an image in the photo domain $X_p$, and $x_c \in X_c$ denote an image in the caricature domain $X_c$. Given an input face photo $x_p \in X_p$, the goal is to generate a caricature image in the space $X_c$ that shares the same identity as $x_p$. This process involves two types of transition, texture style transfer and geometric shape exaggeration. Previous works [17], [16] can only generate caricatures with a fixed geometric exaggeration style when an input is given. In this paper, we focus on the problem of caricature generation with multiple geometric exaggeration styles, and propose the first framework to deal with it.

The notations used in this paper are as follows. We use $x$, $z$, $l$, $y$ to denote image sample, latent code, landmark and identity label respectively. Subscripts $p$ and $c$ refer to photo and caricature respectively, while superscripts $s$ and $c$ represent style and content. Encoders, generators (a.k.a. decoders) and discriminators are represented by capital letters $E$, $G$ and $D$, respectively.

B. Multi-Warping GAN

The network architecture of Multi-Warping GAN is shown in Figure 2.
It consists of a style network and a geometric network. The style network is designed to render images with different texture and coloring styles, while the geometric network aims to exaggerate the face shapes in the input images. The style network works in the image space, while the geometric network is built on landmarks and exaggerates geometric shapes through warping. Both style and geometric networks are designed in a dual way, i.e., there is one way to translate photos to caricatures and also the other way to translate caricatures to photos.

In this paper, we are mainly interested in translating photos to caricatures. Although it can also be achieved with a single way network, we claim that the dual way design is essential for high-quality generation. For the style network, using a single way is also reasonable and may achieve competitive results. However, the dual way design is better at constraining the content of the generated caricature, as the dual way model can encode the content of the generated caricature backward and constrain it with the cycle loss. As for the geometric network, using an additional encoder to map the generated caricature back to the landmark latent code is necessary to force the network to learn a bidirectional mapping, while a single way model can easily ignore the landmark information. We experimentally verified that the dual way framework is more effective than the single way design.

Fig. 2. The network architecture of the proposed multi-warping GAN. The left part is the style network and the right part is the geometric network. The gray dashed arrows denote the flow of two auto-encoders, with the upper one being the auto-encoder of photos and the lower one for caricature reconstruction. The orange arrows denote the flow of photo-to-caricature generation and the blue arrows for caricature-to-photo generation. In our dual way framework, we assume the caricature and the photo share the same content feature space but have separate style spaces. $E^c_p$ and $E^c_c$ are two encoders to encode the content of photos and caricatures respectively. Similarly defined, $E^s_p$ and $E^s_c$ are two encoders to encode the style of photos and caricatures. Gaussian distribution is imposed on their outputs $\tilde{z}^s_p$ and $\tilde{z}^s_c$, so that we can sample style codes from Gaussian when translating photos to caricatures or vice versa. As for the geometric exaggeration, we assume that it depends on the content latent code ($z^c_c$ or $z^c_p$) and a landmark transformation latent code ($z^l_c$ or $z^l_p$), where the former captures the characteristics of the input face, while the latter represents the artistic style. That is to say, a generator network $G^l_c$ ($G^l_p$) is trained to output a landmark displacement map $\Delta l_c$ ($\Delta l_p$) with $z^c_p$ ($z^c_c$) and $z^l_c$ ($z^l_p$) as input. To get the final translated caricature $x_{p \to c}$ (translated photo $x_{c \to p}$), we conduct geometric exaggeration on the stylized image $x'_{p \to c}$ ($x'_{c \to p}$) through warping according to its original facial landmarks $l_p$ ($l_c$) and the learned landmark displacements $\Delta l_c$ ($\Delta l_p$). Here we only show the image translation flow of the geometric network, and more details are illustrated in Section III-B2.

In our dual way design, the style and the shape exaggeration are represented by latent codes $z^s$ and $z^l$ respectively. Both latent codes can be sampled from Gaussian distribution or extracted from sample caricature images to achieve the diversity in both style and exaggeration. To train this network, we design a set of loss functions to tighten the corresponding relations between the latent code space and the image space, and to keep identity consistency.
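The photo-to-caricature flow described in the caption of Fig. 2 can be sketched as follows. This is only a minimal illustration of the data flow, not the authors' implementation: the function name `photo_to_caricature` is hypothetical, and the callables `E_c`, `G_s`, `G_l` and `warp` are toy stand-ins for the content encoder $E^c_p$, the style decoder $G^s_c$, the landmark generator $G^l_c$ and the warping operation.

```python
import numpy as np

def photo_to_caricature(x_p, l_p, z_s, z_l, E_c, G_s, G_l, warp):
    """Photo-to-caricature flow: stylize, predict displacements, then warp."""
    z_c = E_c(x_p)                  # content code of the input photo
    x_styl = G_s(z_c, z_s)          # stylized image x'_{p->c}
    delta_l = G_l(z_c, z_l)         # landmark displacements (Delta l_c)
    l_c = l_p + delta_l             # target caricature landmarks
    x_out = warp(x_styl, l_p, l_c)  # final caricature x_{p->c}
    return x_out, l_c

# Toy stand-ins (hypothetical): they only make the data flow executable.
rng = np.random.default_rng(0)
x_p = rng.random((8, 8, 3))                      # toy photo
l_p = rng.random((5, 2))                         # 5 toy landmarks (x, y)
E_c = lambda x: x.mean(axis=(0, 1))              # toy content encoder
G_s = lambda z_c, z_s: np.ones((8, 8, 3)) * z_s.mean()
G_l = lambda z_c, z_l: 0.1 * z_l.reshape(5, 2)   # toy displacement generator
warp = lambda img, src, dst: img                 # placeholder: no real warping

# Same photo and style code, two different exaggeration codes.
z_s = rng.standard_normal(4)
x1, l1 = photo_to_caricature(x_p, l_p, z_s, rng.standard_normal(10), E_c, G_s, G_l, warp)
x2, l2 = photo_to_caricature(x_p, l_p, z_s, rng.standard_normal(10), E_c, G_s, G_l, warp)
```

With the same photo and style code, sampling two different landmark transformation codes yields two different sets of target landmarks (`l1` and `l2`), which is where the multiple exaggeration styles come from.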
In the following, we will explain the details of our style network and geometric network along with the corresponding loss functions.

1) Style Network: During the texture style transfer, the face shape in the image should be preserved. We thus assume that there is a joint shape space, referred to as "content" space, shared by both photos and caricatures, while their style spaces are independent. Following MUNIT [11], the style network is composed of two autoencoders, one for photos and one for caricatures, each with a content encoder and a style encoder, and is trained to satisfy the constraints in both the image reconstruction process and the style translation process.

The image reconstruction process is shown in Figure 2 with gray dashed arrows, and can be formulated as follows:

$$x'_p = G^s_p(E^c_p(x_p), E^s_p(x_p)), \quad x'_c = G^s_c(E^c_c(x_c), E^s_c(x_c)), \tag{1}$$

where $E^c_p$ and $E^s_p$ are content and style encoders for photos. Similarly, $E^c_c$ and $E^s_c$ are content and style encoders for caricatures. $G^s_p$ and $G^s_c$ are two decoders for photos and caricatures respectively. The image reconstruction loss is defined as the $\ell_1$ difference between the input and reconstructed images:

$$L_{rec\_x} = \|x'_p - x_p\|_1 + \|x'_c - x_c\|_1. \tag{2}$$

The style translation process is shown in Figure 2 with coloured arrows, and can be formulated as:

$$x'_{p \to c} = G^s_c(E^c_p(x_p), z^s_c), \quad x'_{c \to p} = G^s_p(E^c_c(x_c), z^s_p), \tag{3}$$

where $z^s_c$ and $z^s_p$ are style codes which can be sampled from Gaussian distributions for the two modalities. $x'_{p \to c}$ is a generated image with the content from the input photo and a caricature style, while $x'_{c \to p}$ is a generated image with the content from the input caricature and a photo style.

The constraints in the style transfer process are based on three aspects.
Firstly, after transfer, the style code of the transferred image should be consistent with the style code of input, i.e.,

$$L_{rec\_s} = \|z^s_p - E^s_p(x'_{c \to p})\|_1 + \|z^s_c - E^s_c(x'_{p \to c})\|_1, \tag{4}$$

where $x'_{c \to p}$ and $x'_{p \to c}$ are defined in Eq. (3). Secondly, the image content should remain unchanged during the transfer. A cycle consistency loss on the content codes of the input and the transferred images is used, as shown below:

$$L_{cyc\_c} = \|E^c_p(x_p) - E^c_c(x'_{p \to c})\|_1 + \|E^c_c(x_c) - E^c_p(x'_{c \to p})\|_1. \tag{5}$$

Thirdly, the transferred image should be able to convert back when passing through the same encoder-decoder and using the original style code. Again a cycle consistency loss on the input and transferred images is used for this constraint:

$$L_{cyc\_x} = \|x_p - G^s_p(E^c_c(x'_{p \to c}), E^s_p(x_p))\|_1 + \|x_c - G^s_c(E^c_p(x'_{c \to p}), E^s_c(x_c))\|_1. \tag{6}$$

Please note, the second terms in Eq. (5) and Eq. (6) constrain the style transfer from caricatures to photos. It is only possible to impose this cycle consistency in our dual way design. It is expected that the cycle consistency can help build the relation between the latent code space and the image space. A single way network from photos to caricatures only is also implemented as a baseline (see Section III-C), which is trained without these two terms. Experimental results, as in Section IV, demonstrate the superior generation results using the dual way design with the cycle consistency loss.

Eqs. (2), (4), (5) and (6) give all the loss functions to train the style network. With the above network architecture, we can generate caricatures with various texture styles by sampling different style codes.

2) Geometric Network: Geometric exaggeration is an essential feature of caricatures. There are two aspects to consider when modeling caricature exaggerations.
One is that exaggerations usually emphasize the subject's characteristics. The other is that they also reflect the skills and preference of the artists. Therefore, in caricature generation, it is natural to model geometric exaggeration based on both the input photo and an independent exaggeration style. By changing the exaggeration style, we can mimic different artists to generate caricatures that have different shape exaggerations for a given input photo.

It is straightforward to use a random latent code to model this independent exaggeration style. However, this idea suffers from some problems: 1) a random latent code may be ignored while training the model to generate realistic caricatures and 2) it is hard to ensure that a random latent code leads to meaningful exaggeration. Thus, we design our geometric network to learn a bidirectional mapping between an exaggeration latent code space and the face landmarks.

In our design, we build a latent code space on landmarks (landmark transformation latent code space) to represent the different exaggeration styles from artists, and use the content code to represent the input photo. The geometric network is designed and trained to learn mappings of two directions. The first one is the mapping from a combination of a code in the landmark transformation latent space and a content code to a landmark displacement map, which defines the geometric deformation between the input photo and the caricature to be generated. The landmark displacement map thus captures both the input subject's characteristics and a specific geometric exaggeration style. In the second mapping, a pair of landmarks of photo and caricature is mapped back to the landmark transformation latent code space, so that points in the landmark transformation latent code space are associated with meaningful exaggeration styles. Geometric exaggeration is finally achieved by warping [26] the input photo according to the learned landmark displacements.
More concretely, the stylized input image is warped by deforming the image according to its original landmarks and the learned landmark displacements. In our paper, landmarks from the WebCaricature dataset are used. In real-world applications, face landmarks can be detected with existing detectors.

Following the above assumption, the design of our geometric network is as shown in Figure 3. It consists of one generator ($G^l_c$ or $G^l_p$) in each way of translation and two encoders ($E^l_p$, $E^l_c$) whose functions will be explained later. Taking the translation from photos to caricatures as an example (shown in Figure 3a), the generator $G^l_c$ takes the content code $z^c_p$ and the landmark transformation latent code $z^l_c$ as input, and outputs the landmark displacements $\Delta l_c$ which are then added to the input landmarks $l_p$ to get the target caricature landmarks $\tilde{l}_c$.

The landmark transformation latent code $z^l_c$ encodes a shape exaggeration style. To make it correlate to meaningful shape exaggerations, we follow the idea of Augmented CycleGAN [22] and introduce two encoders $E^l_p$, $E^l_c$ into the geometric network. Again, taking the translation from photos to caricatures for example, the encoder $E^l_c$ takes the landmarks of the photo $l_p$ and the landmarks of the corresponding caricature $\tilde{l}_c$ as input, extracts the difference between them and reconstructs the landmark latent code ${z'}^l_c$. Introducing the encoder enables us 1) to enforce cycle consistency between the randomly sampled latent code $z^l_c$ and the encoded latent code ${z'}^l_c$ to correlate $z^l_c$ to meaningful exaggerations; and 2) to extract $z^l_c$ from example caricatures to perform sample guided shape exaggeration. The same applies for the encoder $E^l_p$ used in translation from caricatures to photos (Figure 3b).
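The cycle on the landmark transformation latent code described above can be sketched as follows. This is a toy illustration under stated assumptions rather than the authors' networks: `landmark_code_cycle` is a hypothetical name, the generator is a fixed random linear map `A`, and the encoder is its pseudo-inverse applied to the landmark difference. The point is only to show why the reconstruction constraint penalizes a generator that ignores the sampled code.

```python
import numpy as np

def landmark_code_cycle(z_l, z_c, l_p, G_l, E_l):
    """Sample code -> displacements -> target landmarks -> re-encoded code."""
    delta_l = G_l(z_c, z_l)            # landmark displacements
    l_tilde = l_p + delta_l            # generated caricature landmarks
    z_rec = E_l(l_tilde, l_p)          # code recovered from the landmark pair
    loss = np.abs(z_l - z_rec).sum()   # L1 distance between sampled and recovered code
    return loss, l_tilde

rng = np.random.default_rng(0)
A = rng.standard_normal((10, 4))       # toy linear generator weights (assumption)
l_p = rng.random((5, 2))               # 5 toy photo landmarks (x, y)
z_c = rng.standard_normal(3)           # content code (unused by the toy generators)
z_l = rng.standard_normal(4)           # sampled landmark transformation code

# A generator that uses the code, and a collapsed one that ignores it.
G_uses_code = lambda z_c, z_l: (A @ z_l).reshape(5, 2)
G_ignores_code = lambda z_c, z_l: np.zeros((5, 2))
# Toy encoder: invert the linear map on the landmark difference.
E_l = lambda l_tilde, l_src: np.linalg.pinv(A) @ (l_tilde - l_src).ravel()

loss_ok, _ = landmark_code_cycle(z_l, z_c, l_p, G_uses_code, E_l)
loss_ignored, _ = landmark_code_cycle(z_l, z_c, l_p, G_ignores_code, E_l)
```

In this toy setting `loss_ok` is numerically zero, while `loss_ignored` equals the $\ell_1$ norm of the sampled code, so a generator that discards $z^l_c$ cannot satisfy the constraint.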
Basically, to train the geometric network, we have the landmark transformation latent code reconstruction loss:

$$L_{rec\_z^l} = \|z^l_p - E^l_p(\tilde{l}_p, l_c)\|_1 + \|z^l_c - E^l_c(\tilde{l}_c, l_p)\|_1, \tag{7}$$

where the first term is the reconstruction loss of $z^l_p$, the landmark transformation latent code used in the translation from caricatures to photos. The second term is the reconstruction loss of $z^l_c$, used in the translation from photos to caricatures, and is defined in a similar way.

Besides the above loss, we use LSGAN [27] to match the generated landmarks with real ones:

$$L^G_{gan\_l} = \|1 - D^l_p(\tilde{l}_p)\|_2 + \|1 - D^l_c(\tilde{l}_c)\|_2, \tag{8}$$

$$L^D_{gan\_l} = \|1 - D^l_p(l_p)\|_2 + \|D^l_p(\tilde{l}_p)\|_2 + \|1 - D^l_c(l_c)\|_2 + \|D^l_c(\tilde{l}_c)\|_2, \tag{9}$$

where Eq. (8) is the loss for generators and Eq. (9) is the loss for discriminators. The objective of generators is to make the generated caricature landmarks $\tilde{l}_c$ or photo landmarks $\tilde{l}_p$ indistinguishable from real landmarks, i.e., the output of the discriminator with the generated landmarks as input becomes 1. On the other hand, the objective of discriminators is to discriminate between the real photo landmarks $l_p$ and the generated photo landmarks $\tilde{l}_p$, as well as to discriminate between the real caricature landmarks $l_c$ and the generated caricature landmarks $\tilde{l}_c$.

Fig. 3. Geometric Network. (a) Translation from photo to caricature. (b) Translation from caricature to photo. The left part is the network for learning a transformation from a photo's landmarks to a caricature's landmarks. The right part is the network for the reverse transformation. For the left network, a generator $G^l_c$ with the content code of a photo $z^c_p$ and a landmark transformation latent code $z^l_c$ (which can be randomly sampled from a Gaussian distribution) as input will output landmark displacement vectors $\Delta l_c$. By adding the displacement vectors to the photo's landmark positions, we get the transformed caricature landmarks $\tilde{l}_c$. To make the randomly sampled $z^l_c$ correlate to meaningful shape transformation styles, we introduce two encoders and force cycle consistency loss on the encoded latent code and sampled latent code. For example, ${z'}^l_c = E^l_c(\tilde{l}_c, l_p)$ is the encoded latent code, and we force ${z'}^l_c$ to be as close as possible to $z^l_c$.

Similar to the above loss for generated landmarks, we define the loss for generated images:

$$L^G_{gan\_x} = \|1 - D^x_p(x_{c \to p})\|_2 + \|1 - D^x_c(x_{p \to c})\|_2, \tag{10}$$

$$L^D_{gan\_x} = \|1 - D^x_p(x_p)\|_2 + \|D^x_p(x_{c \to p})\|_2 + \|1 - D^x_c(x_c)\|_2 + \|D^x_c(x_{p \to c})\|_2. \tag{11}$$

These two losses are defined in the same way as the losses in Eqs. (8) and (9), except that the generated landmarks are replaced by generated images.

We also use LSGAN [27] to match all the latent codes (including both the landmark transformation latent code and the style latent code, but not the content code) to Gaussian:

$$L^G_{gan\_z} = \|1 - D_z(\tilde{z})\|_2, \tag{12}$$

$$L^D_{gan\_z} = \|1 - D_z(z)\|_2 + \|D_z(\tilde{z})\|_2. \tag{13}$$

Here, $\tilde{z}$ denotes latent codes encoded by neural encoders, while $z$ denotes latent codes sampled from Gaussian distribution. Eq. (12) is the loss for the generator and Eq. (13) is the loss for the discriminator. The objective of the generator is to make the discriminator unable to tell whether the encoded latent code $\tilde{z}$ is sampled from Gaussian or not. The discriminator's objective is to discriminate between these two kinds of codes.

3) Identity Preservation: Identity preservation in the generated caricatures becomes more challenging with the explicit geometric deformation introduced. As a result, in addition to preserving identity in the image space as in [16], we add further constraints on identity in the landmark space. Two discriminators are added to classify the identity from both the image and the landmarks:
$$L_{id\_x} = -\log(D^{x}_{id}(y_{p}, x_{p})) - \log(D^{x}_{id}(y_{p}, x_{p \to c})) - \log(D^{x}_{id}(y_{c}, x_{c})) - \log(D^{x}_{id}(y_{c}, x_{c \to p})) \tag{14}$$

$$L_{id\_l} = -\log(D^{l}_{id}(y_{p}, l_{p})) - \log(D^{l}_{id}(y_{p}, \tilde{l}_{c})) - \log(D^{l}_{id}(y_{c}, l_{c})) - \log(D^{l}_{id}(y_{c}, \tilde{l}_{p})). \tag{15}$$

Here, the two discriminators $D^{x}_{id}$ and $D^{l}_{id}$ are both classifiers for face identity, except that $D^{x}_{id}$ takes images as input while $D^{l}_{id}$ takes landmarks as input. $y_{p}$ and $y_{c}$ are the identity labels for the corresponding photos and caricatures. A label represents only the face identity, regardless of style and of whether the sample is a photo or a caricature. For the translated images $x_{p \to c}$ and $x_{c \to p}$, $y_{p}$ and $y_{c}$ are the labels of the corresponding input images $x_{p}$ and $x_{c}$ respectively, so that the translated images have the same identity as the input images. The same holds for the translated landmarks $\tilde{l}_{c}$ and $\tilde{l}_{p}$. To be clear, these two discriminators are trained end-to-end with the whole framework, without pretraining.

4) Overall Loss: In summary, training the proposed MW-GAN minimizes the following types of loss functions: 1) the reconstruction loss of the image $L_{rec\_x}$, the style latent code $L_{rec\_s}$, and the landmark transformation latent code $L_{rec\_z_l}$, and the cycle consistency loss of the content code $L_{cyc\_c}$ and of the image $L_{cyc\_x}$; 2) the generative adversarial loss pairs on images $L^{G}_{gan\_x}$, $L^{D}_{gan\_x}$, landmarks $L^{G}_{gan\_l}$, $L^{D}_{gan\_l}$, and latent codes $L^{G}_{gan\_z}$, $L^{D}_{gan\_z}$; and 3) the identity loss on the image $L_{id\_x}$ and on the landmarks $L_{id\_l}$. Our framework is trained by optimizing the following overall objective functions on encoders, generators, and discriminators:

$$\min_{E,G} \; \lambda_{1} L_{rec\_x} + \lambda_{2} (L_{rec\_s} + L_{rec\_z_l} + L_{cyc\_c} + L_{cyc\_x}) + \lambda_{3} L_{id\_x} + \lambda_{4} L_{id\_l} + \lambda_{5} (L^{G}_{gan\_x} + L^{G}_{gan\_l} + L^{G}_{gan\_z}), \tag{16}$$

$$\min_{D} \; \lambda_{3} L_{id\_x} + \lambda_{4} L_{id\_l} + \lambda_{5} (L^{D}_{gan\_x} + L^{D}_{gan\_l} + L^{D}_{gan\_z}). \tag{17}$$

Here, $E$ and $G$ denote the encoder and generator networks, whereas $D$ denotes the discriminator networks. $\lambda_{1}$, $\lambda_{2}$, $\lambda_{3}$, $\lambda_{4}$ and $\lambda_{5}$ are weight parameters that balance the influence of the different loss terms in the overall objective function. The whole framework is trained in an end-to-end manner. With a mini-batch of training data (including images, landmarks and identity labels), we update the parameters of $E$, $G$ and $D$ alternately. That is, in one iteration, we first optimize all the discriminators with Eq. (17) and then optimize all the encoders and generators with Eq. (16).

Fig. 4. Framework of the baseline single way GAN. The upper two rows denote the style network and the lower two rows denote the geometric network.

C. Degradation to Single Way Baseline

For the caricature generation task, the above framework can also be degraded to a single way network, i.e. only from photos to caricatures, as shown in Figure 4. However, we found that without the cycle consistency loss, the single way network for caricature generation does not perform as well as the dual way design. Here, we give details of the single way network, which forms a baseline method in our experiments.

As shown in Figure 4, the degraded single way network also consists of a style network and a geometric network. The style network is used to render an input photo with a caricature style while preserving the geometric shapes. It consists of two encoders $E^{s}_{p}$, $E^{c}_{p}$ and one generator $G^{s}_{c}$. The content encoder $E^{c}_{p}$ extracts the feature map $z^{c}_{p}$ that contains the geometric information of the input photo, while the style encoder $E^{s}_{p}$ extracts the texture style $z^{s}_{p}$ from the input. The style code $z^{s}_{p}$ is adapted to a Gaussian distribution and affects the image's style through adaptive instance normalization [7].
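The adaptive instance normalization step can be illustrated with a minimal sketch. It normalizes each channel of a content feature map to zero mean and unit variance and then applies a per-channel scale and shift that carry the style. This is an illustration under stated assumptions, not the authors' implementation: the function name `adain`, the nested-list feature layout, and the parameters `gamma` and `beta` are hypothetical, and in MUNIT-style networks the scale and shift would typically be predicted from the style code by a small network rather than passed in directly.

```python
import math


def adain(content, gamma, beta, eps=1e-5):
    """Apply adaptive instance normalization channel by channel.

    content: list of channels, each a flat list of activations
             (hypothetical stand-in for a feature map).
    gamma, beta: per-channel scale and shift; assumed here to be
             derived from a style code by some upstream network.
    """
    out = []
    for chan, g, b in zip(content, gamma, beta):
        mean = sum(chan) / len(chan)
        var = sum((v - mean) ** 2 for v in chan) / len(chan)
        std = math.sqrt(var + eps)
        # Normalize the content statistics, then impose the style statistics.
        out.append([g * (v - mean) / std + b for v in chan])
    return out


# Toy example: two channels, four spatial positions each.
features = [[1.0, 2.0, 3.0, 4.0], [10.0, 10.5, 11.0, 11.5]]
stylized = adain(features, gamma=[2.0, 0.5], beta=[3.0, -1.0])
```

After the call, each output channel has approximately the mean given by `beta` and the standard deviation given by `gamma`, independent of the statistics of the input channel.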
The geometric network exaggerates the face in the rendered image by warping it according to the landmark displacements $\Delta l_{c}$. To achieve multi-style exaggerations, we assume that $\Delta l_{c}$ is controlled not only by the photo's content $z^{c}_{p}$ but also by a landmark transformation latent code $z^{l}_{c}$ that follows a Gaussian distribution.

This straightforward framework can increase the variety of exaggeration styles through various landmark transformation latent codes. However, it suffers from some limitations. Firstly, as it is a one-way framework without cycle consistency, there is no supervision relating the generated caricatures to the landmark transformation latent code. Consequently, when trained with the discriminator, the model may ignore the landmark transformation latent code, which in effect restricts the geometric deformation. Secondly, this one-way framework lacks supervision to bridge the gap between the latent space of $z^{l}_{c}$ and the landmark space. Thus the learned landmark latent code may have no reference to real landmarks. In the experiments, we compare the generation results of our dual way network with those of the single way network, and demonstrate the advantages of our dual-way design.

IV. EXPERIMENTS

We conducted experiments on the WebCaricature dataset [28], [29]. We first describe the details of the dataset and the training process, and then demonstrate the effectiveness of the dual way design and the landmark based identity loss through ablation studies. We then show the ability of our method to generate caricatures with a variety of both texture and exaggeration styles. Finally, we compare the caricatures generated using our method with those of the previous state-of-the-art methods and show the superiority of our method in terms of generation quality through qualitative and quantitative comparisons, as well as a perceptual study.

A. Experimental Details

Dataset preprocessing.
We trained and tested our network on a public dataset, WebCaricature [28], [29]. There are 6,042 caricatures and 5,974 photographs from 252 persons in this dataset. We first pre-processed all the images by rotating the faces to make the line between the eyes horizontal, and cropping the face using a bounding box which covers hair and ears. In detail, an initial box is first created by passing through the centers of the ears, the top of the head and the chin. The bounding box used is then the initial box enlarged by a factor of 1.5. All processed images are resized to 256 × 256. We randomly split the dataset into a training set of 202 identities (4,804 photos and 4,773 caricatures) and a test set of 50 identities (1,170 photos and 1,269 caricatures). The generated caricatures have the same resolution as the inputs, which is 256 × 256. All the images presented in this paper are from identities in the test set. The landmarks used in our experiments are the 17 landmarks provided in the WebCaricature dataset [28], [29].

Details of implementation. Our framework is implemented with TensorFlow. The style network is modified based on MUNIT [11]. We removed the discriminator on the stylized images and added a discriminator on the warped images at the end. We also added a discriminator on the style latent code generated by the style encoder to match it with a Gaussian distribution. In the geometric network, we take $G^{l}_{c}$ and $E^{l}_{p}$ as an example to explain the detailed structure; $G^{l}_{p}$ and $E^{l}_{c}$ are implemented in the same way. For $G^{l}_{c}$, the content code is first down-sampled by max pooling with kernel size 3 and stride 2, and is then fed into three blocks of 3 × 3 convolution with stride 1 followed by leaky ReLU with $\alpha = 0.01$ and 3 × 3 max pooling with stride 2. After that, there is a fully connected layer mapping this to a 32-dimensional vector. The landmark latent code is also mapped to a 32-dimensional vector by a fully connected layer.
Then the two vectors are concatenated and fed into a fully connected layer to output $\Delta l_{c}$. For $E^{l}_{p}$, the two sets of input landmarks (landmarks for the photo and landmarks for the caricature) are first concatenated and then fed into four fully connected layers to give the estimated landmark latent code. All the fully connected layers in $G^{l}_{c}$ and $E^{l}_{p}$ are activated with leaky ReLU, except the last layer. Discriminators for images are composed of 6 blocks of 4 × 4 convolution with stride 2 and a final fully connected layer, while discriminators for latent codes consist of six fully connected layers. Leaky ReLU is used as the activation for all discriminators. We empirically set $\lambda_{1} = 10$, $\lambda_{2} = \lambda_{5} = 1.0$, $\lambda_{3} = 0.05$, $\lambda_{4} = 0.01$. We used ADAM optimizers with $\beta_{1} = 0.5$ and $\beta_{2} = 0.999$ to train the whole network. The network is trained for 500,000 steps with a batch size of 1. The learning rate starts at 0.0001 and is halved every 100,000 steps. The model is trained on a computer with an NVIDIA GeForce RTX 2080 Ti GPU, and the training takes about three days.

Fig. 5. Ablation study. For MW-GAN variants, we generate one caricature for each input. For MW-GAN, we generate three caricatures for each input by sampling different style latent codes and landmark transformation latent codes.

TABLE I. Ablation study. Comparison of the three variants of MW-GAN, the single way baseline and MW-GAN. "ACC" is short for the rank-1 accuracy.

| Method | w/o $L_{id\_l}$ | w/o $L_{id\_x}$ | w/o $L_{gan\_l}$ | baseline | MW-GAN |
|---|---|---|---|---|---|
| FID | 47.53 | 56.44 | 41.09 | 57.38 | 36.29 |
| ACC | 73.68% | 37.95% | 59.49% | 43.59% | 74.87% |

B. Ablation Study

To analyze our dual way design, geometric network training and identity recognition loss, we conducted experiments using the baseline method and three MW-GAN variants obtained by respectively removing the GAN loss on generated landmarks ($L_{gan\_l}$), the identity recognition loss in the image space ($L_{id\_x}$) and the identity recognition loss in the landmark space ($L_{id\_l}$). The other losses are either basic (reconstruction losses) or have had their effectiveness demonstrated in other image translation or caricature generation methods (GAN losses, cycle-consistency loss) [30], [10], [11], [17], [16]. They are therefore not included in this ablation study to avoid repetitive evaluations. For all these experiments, we compared our MW-GAN with its three variants and the one-way baseline method both qualitatively and quantitatively. For quantitative evaluation, Fréchet Inception Distance (FID) [31] is used to evaluate the quality of the generated caricatures and rank-1 identification accuracy is used to evaluate the identity preservation ability. For the calculation of rank-1 accuracy, the ArcFace [32] model is adopted. In the identification experiment, photos were kept in the gallery and the generated caricatures were used as probes. The rank-1 identification accuracy is then calculated accordingly.

Study on different losses. Figure 5 shows the caricatures generated using MW-GAN and its three variants, each with a different loss removed. Overall, caricatures generated by MW-GAN have much better visual quality than those of the variants. On closer inspection, the caricatures generated using the variant omitting the loss on images (w/o $L_{id\_x}$) suffer from bad visual quality, as it lacks adversarial supervision from the image view.
As for the variant omitting the loss on landmarks (w/o $L_{id\_l}$), the generated caricatures have better visual quality, but their exaggerations are not in the direction that emphasizes the subjects' characteristics. As for the variant omitting the GAN loss on generated landmarks (w/o $L_{gan\_l}$), the exaggeration direction may break the facial components, since it lacks the supervision from adversarial learning, which helps with learning reasonable facial landmarks. By contrast, MW-GAN with both $L_{id\_x}$ and $L_{id\_l}$ can exaggerate facial shapes to enlarge the characteristics of the subjects, while rendering the caricatures with appealing texture styles. Additionally, as shown by the quantitative results in Table I, our GAN loss on generated facial landmarks and the identity recognition losses in both landmark space and image space greatly improve the quality of the generated caricatures (lower FID scores). These losses also contribute to identity preservation (higher rank-1 accuracy). These observations are consistent with the qualitative analysis above.

Fig. 6. Diversity in texture style and exaggeration of the proposed MW-GAN. The 1st column shows input images. The 2nd-4th columns are corresponding generated caricatures with different texture styles and a fixed exaggeration. The last three columns are generated caricatures with a fixed texture style but different exaggerations.

Fig. 7. Comparison with the single way baseline method.

Comparison with the single way baseline. Figure 7 shows the comparison of caricatures generated using our MW-GAN and using the baseline method described in Section III-C. For each input we randomly sample one style code and two landmark codes and generate two caricatures.
From the results, we can see that with different landmark transformation codes, MW-GAN can generate caricatures with different exaggeration styles, while the single-way baseline method generates caricatures with almost the same exaggeration for each input. Moreover, the exaggerations from the single-way method are sometimes out of control with unrealistic distortions, while our MW-GAN generates much more meaningful exaggerations with the added cycle consistency supervision. Besides, the FID and rank-1 recognition accuracy of the baseline and MW-GAN in Table I show that the quality and identity preservation ability of MW-GAN are much better than those of the baseline. This supports our claim in Section III-B that the dual way model can encode the content of the generated caricature backward and constrain it with the cycle loss, which makes MW-GAN better at constraining the content of the generated caricature.

Fig. 8. Interpolation experiment results. The top two rows are caricatures generated by interpolating the landmark transformation latent codes, while the bottom two rows are caricatures generated by interpolating the style latent codes.

Fig. 9. Sample-guided caricature generation of the proposed method.

C. Diversity in Texture Style and Exaggeration

In our MW-GAN network, the texture styles and shape exaggerations of the generated caricatures are controlled by the style latent code and the landmark transformation latent code respectively. To achieve diversity in the generated caricatures, we can sample different style codes and landmark transformation codes, and apply them to the input photo. Figure 6 shows generated caricatures with different texture and exaggeration styles.
For each input, we generate three caricatures with fixed exaggeration but different texture styles and another three caricatures with fixed texture style but different exaggerations. The results meet our expectation that different style codes lead to different texture and coloring, while different landmark codes lead to different shape exaggerations.

Our dual-way design of MW-GAN enables unsupervised learning of the bidirectional mapping between image style and the style latent space, and between geometric exaggeration and the landmark transformation latent space. Therefore, MW-GAN can also generate caricatures with a guide sample by applying its style and landmark transformation codes to the generators in the network. To do this, we first feed the guiding caricature into the style encoder and landmark encoder ($E^{s}_{c}$ and $E^{l}_{c}$) to get its style and landmark transformation codes. Then we use these codes as the style and landmark transformation codes in caricature generation. Figure 9 shows example caricatures generated with different guide samples. We can see that the generated caricatures not only have texture styles similar to those of the guide caricatures, but also try to mimic the exaggeration styles of the guide caricatures. From left to right, the generated caricatures have similar exaggeration styles of wide cheeks and narrow forehead, high cheekbones and squeezed facial features (eyes, nose and mouth), long face and pointed chin, and laughing with the mouth wide open.

TABLE II. Comparison of FID and rank-1 accuracy (ACC) with state-of-the-art methods.

| | CycleGAN | MUNIT | WarpGAN | CariGANs | MW-GAN |
|---|---|---|---|---|---|
| FID | 49.82 | 52.33 | 40.69 | 36.46 | 36.29 |
| ACC | - | - | 78.55% | 57.95% | 74.87% |

D. Interpolation Experiments

As the texture style and exaggeration style are respectively controlled by the style code and the landmark transformation code in MW-GAN, we can achieve a 'morphing' effect from one caricature to another by interpolating their codes (either color, exaggeration, or both). For exaggeration interpolation, we randomly sample two landmark transformation latent codes $z^{l}_{c1}$ and $z^{l}_{c2}$, and generate caricatures with their interpolation $w z^{l}_{c1} + (1 - w) z^{l}_{c2}$, where $w$ ranges from 0 to 1 with a step of 0.1. The texture style interpolation experiment is conducted similarly. Results are shown in Figure 8. We can see that the color and exaggeration style of the caricatures change smoothly with $w$. From left to right, the caricature face in the first row changes from a thinner face with a bigger nose to a wider face with a smaller nose. The face in the second row changes from a bigger mouth to a smaller one. In the third row, the caricature color changes from an orange face with red hair to a purple face with black hair. In the last row, the caricature changes from a more colorful style to a grayer style. The smooth changes further demonstrate that the style and landmark latent codes are meaningfully learned in MW-GAN, and well represent the color and exaggeration styles of caricatures.

Fig. 10. Comparison with state-of-the-art methods. CycleGAN and MUNIT can only generate images with style changed. Compared with WarpGAN and CariGANs, the proposed MW-GAN can generate caricatures with better visual quality and more flexible exaggeration. Besides, MW-GAN can generate caricatures with various styles and shape exaggerations.

E. Comparison with State-of-the-Art Methods

We qualitatively and quantitatively compare our MW-GAN with previous state-of-the-art methods in image translation, CycleGAN [10] and Multimodal UNsupervised Image-to-image Translation (MUNIT) [11], and in caricature generation, WarpGAN [16] and CariGANs [17]. Since CariGANs is not open source and the data used in that paper is not publicly available, we implemented the CariGeoGAN using 17 landmarks with 34 dimensions. The landmarks' dimension was reduced to 21, with 99.04% of the total variance preserved.

Figure 10 shows the caricatures generated using the different methods. When using MUNIT, WarpGAN and CariGANs, we randomly sample two style codes to generate two caricatures with different texture styles for each input. When using our MW-GAN, we randomly sample style codes and landmark transformation codes and generate three caricatures for each input. CycleGAN is a deterministic method, and can only generate a fixed caricature for each input.

As shown in Figure 10, CycleGAN can only generate caricatures with limited changes in texture. Some results even look almost the same as the input. Caricatures generated by MUNIT have some changes in shape, but some results show clear artifacts, such as the speckles around the nose (2nd row) and the dark and patchy appearance (3rd row). As MUNIT is not designed for shape deformation, we speculate that these artifacts arise from its attempt to achieve the appearance of shape deformation through texture disguise. WarpGAN and CariGANs generate caricatures with more reasonable texture changes and shape exaggerations. However, given the input photo, their exaggeration style is deterministic, which does not reflect the diverse skills and preferences among artists. In comparison, our MW-GAN is designed to achieve diversity in both texture styles and shape exaggerations.
As can be seen in Figure 10, different style codes and landmark codes lead to different texture styles and shape exaggerations in the generated caricatures.

We also calculated FID to quantitatively measure the quality of the generated caricatures (shown in Table II). Lower FID scores indicate that the generated caricatures are more similar to real ones. Since CycleGAN and MUNIT are designed only for texture transformation and not for the geometric exaggeration required in caricature generation, their FIDs are much higher than those of WarpGAN and MW-GAN, indicating lower quality. Among WarpGAN, CariGANs and MW-GAN, MW-GAN and CariGANs have a much lower FID than WarpGAN (36.29 and 36.46 versus 40.69). MW-GAN and CariGANs have very similar FID scores, with the FID of MW-GAN slightly lower. We believe this is due to three reasons. Firstly, MW-GAN specifically considers exaggeration diversity, which is a closer assumption to the real distribution of caricatures. Secondly, the identity recognition loss in both image space and landmark space enables the learning of more meaningful shape exaggerations. Finally, our dual-way design enables the learning of a bidirectional translation between caricatures and photos, and bridges the two with style latent codes and landmark latent codes. This design helps to train the model as a whole.

Fig. 11. Translation of caricatures back to photos. The inputs are caricatures drawn by artists. The outputs are photos generated by MW-GAN with randomly sampled style latent codes and landmark latent codes.

In order to quantify identity preservation accuracy for generated caricatures, we evaluated automatic face recognition performance for the three methods with geometric exaggeration (WarpGAN, CariGANs and our MW-GAN) using a state-of-the-art face recognition model, ArcFace [32].
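The rank-1 identification protocol used in this evaluation (photos in the gallery, generated caricatures as probes) can be sketched as follows. This is a minimal illustration under stated assumptions rather than the evaluation code of the paper: the embeddings are assumed to come from a face recognition model such as ArcFace, cosine similarity is assumed as the matching score, and the function names and toy vectors are hypothetical.

```python
import math


def cosine(a, b):
    """Cosine similarity between two non-zero embedding vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def rank1_accuracy(gallery, probes):
    """Fraction of probes whose most similar gallery item shares their identity.

    gallery, probes: lists of (identity_label, embedding) pairs. The
    embeddings are assumed to be produced by a face recognition model.
    """
    hits = 0
    for probe_id, probe_vec in probes:
        best_id, _ = max(gallery, key=lambda item: cosine(probe_vec, item[1]))
        hits += int(best_id == probe_id)
    return hits / len(probes)


# Toy data: three gallery photos and four generated caricatures (probes).
gallery = [("A", [1.0, 0.0]), ("B", [0.0, 1.0]), ("C", [-1.0, 0.0])]
probes = [
    ("A", [0.9, 0.1]),
    ("B", [0.2, 0.8]),
    ("C", [0.1, 0.9]),   # closest to B, so this probe is misidentified
    ("C", [-0.7, 0.3]),
]
accuracy = rank1_accuracy(gallery, probes)
```

In this toy setup three of the four probes are matched to the correct identity, so `accuracy` is 0.75.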
In the identification experiment, photos were kept in the gallery while the generated caricatures were used as probes. We calculated the rank-1 identification accuracy and the results are shown in Table II. From the results, we can see that CariGANs has the lowest identity preservation accuracy, while WarpGAN and MW-GAN both have accuracy over 70%. Though the identity preservation accuracy of MW-GAN is a little lower than that of WarpGAN, we consider that high identity preservation accuracy is easier to achieve with exaggerations that lack diversity, and the following perceptual study also confirms that the diverse exaggerations generated by MW-GAN are reasonable.

F. Perceptual Study

We conducted a perceptual study to evaluate the generated caricatures in terms of their 1) visual quality, i.e., whether the generated caricatures are visually appealing; and 2) exaggeration quality, i.e., whether the exaggerated face is recognizable. For each photo in the test set, 5 caricatures were generated using the five methods: CycleGAN, MUNIT, WarpGAN, CariGANs, and our MW-GAN. In each study of visual quality and exaggeration quality, volunteers were shown an input photo and the corresponding caricatures generated by the five methods and were asked to vote for the best and worst ones among the five caricatures. We randomly selected 100 test photos and their generated caricatures, and presented them to 13 volunteers for voting. That is 1300 votes in total. One best vote counts +1, while one worst vote counts -1. The final score for each method is the average of counts.

TABLE III. Perceptual study on exaggeration quality (EQ) and visual quality (VQ).

| Method | CycleGAN | MUNIT | WarpGAN | CariGANs | MW-GAN |
|---|---|---|---|---|---|
| EQ-best | 71 | 21 | 290 | 464 | 454 |
| EQ-worst | 625 | 407 | 91 | 91 | 86 |
| EQ-score | -0.426 | -0.297 | 0.153 | 0.287 | 0.283 |
| VQ-best | 176 | 9 | 305 | 396 | 414 |
| VQ-worst | 40 | 956 | 113 | 122 | 69 |
| VQ-score | 0.105 | -0.728 | 0.148 | 0.211 | 0.265 |
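The scoring rule described above (+1 per best vote, -1 per worst vote, averaged over the 1300 votes) can be checked against the vote counts reported in Table III with a short sketch. The function and variable names are illustrative only; the counts are copied from the table.

```python
def vote_score(best, worst, total_votes=1300):
    """Average of +1 per best vote and -1 per worst vote over all votes."""
    return (best - worst) / total_votes


# (best, worst) vote counts per method, taken from Table III.
eq_votes = {
    "CycleGAN": (71, 625),
    "MUNIT": (21, 407),
    "WarpGAN": (290, 91),
    "CariGANs": (464, 91),
    "MW-GAN": (454, 86),
}
vq_votes = {
    "CycleGAN": (176, 40),
    "MUNIT": (9, 956),
    "WarpGAN": (305, 113),
    "CariGANs": (396, 122),
    "MW-GAN": (414, 69),
}

eq_scores = {m: round(vote_score(b, w), 3) for m, (b, w) in eq_votes.items()}
vq_scores = {m: round(vote_score(b, w), 3) for m, (b, w) in vq_votes.items()}
```

For example, MW-GAN receives 454 best and 86 worst votes for exaggeration quality, giving (454 - 86) / 1300 ≈ 0.283, which matches the EQ-score row of the table.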
Results are shown in Table III. It is obvious that WarpGAN, CariGANs and MW-GAN have much better exaggeration quality than CycleGAN and MUNIT, as these caricature generation methods explicitly exaggerate geometric shapes through warping. Among the three, CariGANs and MW-GAN are the best with comparable exaggeration quality. However, MW-GAN can generate caricatures with various exaggeration styles from the same input photo, while CariGANs can only exaggerate the face in a certain way. When it comes to visual quality, we can see that MW-GAN has a higher score than the others. We reason that this is because the explicit encoding of the variety of color and exaggeration styles better captures the distribution of real caricatures. MUNIT has the worst visual quality, as the content code reconstruction in MUNIT is contradictory to the fact that caricatures have different shape structures from photos. In summary, the exaggerations generated by MW-GAN are both reasonable and diverse, and the caricatures generated by MW-GAN are the most visually appealing among the five methods, according to the perceptual study.

G. Translation of Caricatures Back to Photos

Although not our main goal, there is also a path in our network architecture to translate caricatures back to photos because of the dual way design. We conducted experiments by using caricatures and their landmarks as input, and randomly sampled the style latent code $z^{s}_{p}$ and landmark latent code $z^{l}_{p}$ for photos from the corresponding distributions. The generated photos are shown in Figure 11. The results show that MW-GAN can restore photos from caricatures by reversely deforming the exaggerated faces back to normal. As can be seen in the 2nd and 4th rows, the shapes of the cheek, chin and mouth in the generated photos look realistic. This shows the capability of MW-GAN to moderately deform the caricature shapes to photo shapes.
Howe ver , as deformations from caricatures to photos are more complicated, the 17 landmarks are often insufficient to represent such deformations. When the input caricature has extreme exaggeration, MW -GAN may generate the photo without sufficient shape deformation, resulting resid- ual deformations from the input caricature e.g., the ear and the chin in the 1st ro w of Figure 11, and the eyes and cheek in the 3rd ro w . of Figure 11. Further exploration is required for translations of caricatures back to photos. H. Limitations and Future W ork As the first framework to generate caricatures with diversi- ties in both texture styles and shape e xaggerations, MW -GAN still has some limitations to be improved in the future. Firstly , when translating caricatures back to photos, because of the more complicated deformation, the results are not desirable. T o address this problem, further explorations are required. One possible direction is to use more flexible deformation representation. Secondly , not all of the generated e xaggerations are plausible. For example, some generated caricatures have eyes with dif ferent sizes e.g., the 3rd row in Figure 10, which may be caused by the asymmetry of the hairs around the eyes. Therefore, further improv ements of the framew ork and loss design could be explored to generate caricatures with higher quality . MW -GAN is proposed as a base frame work to generate caricatures with diverse style and exaggerations. More explorati ons, such as using better GAN network and other improvement to this frame work, are also worth further study . Lastly , as ev aluation of the exaggeration quality is subjectiv e which varies from person to person, we consider another potential direction is to combine user interaction with the proposed method to allow further adjustment of the exaggeration results. V . 
C O N C L U S I O N In this paper, we propose the first frame work that can gener- ate caricatures with diversities in both texture styles and shape exaggerations. In our design, we use style latent code and landmark transformation latent code to capture the di versity in texture and exaggeration respectively . W e also design a dual way frame work to learn the bidirectional translation between photos and caricatures. This design helps the model to learn the bidirectional translation between the image style, face land- marks and their corresponding latent spaces, which enables the generation of caricatures with sample-guided texture and exaggeration styles. W e also introduced identity recognition loss in both image space and landmark space, which enables the model to learn more meaningful exaggeration and texture styles for the input photo. Qualitative and quantitati ve results demonstrate that MW -GAN outperforms the state-of-the-art methods in image translation and caricature generation. Future work includes improving the geometric exaggeration quality , incorporating user interactions and exploring better GAN network structures. The problem of translating caricatures to photos is also worth studying. R E F E R E N C E S [1] H. Koshimizu, M. T ominaga, T . Fujiwara, and K. Murakami, “On kansei facial image processing for computerized facial caricaturing system picasso, ” in IEEE International Conference on Systems, Man, and Cybernetics , v ol. 6, 1999, pp. 294–299. [2] N. K. H. Le, Y . P . Why , and G. Ashraf, “Shape stylized face caricatures, ” in Advances in Multimedia Modeling , 2011, pp. 536–547. [3] P .-Y . C. W .-H. Liao and T .-Y . Li, “ Automatic caricature generation by analyzing facial features, ” in Pr oceeding of Asia Confer ence on Computer V ision , vol. 2, 2004. [4] L. A. Gatys, A. S. Ecker, and M. Bethge, “Image style transfer using con volutional neural networks, ” in IEEE Conference on Computer V ision and P attern Recognition , 2016, pp. 
2414–2423.
[5] J. Johnson, A. Alahi, and L. Fei-Fei, “Perceptual losses for real-time style transfer and super-resolution,” in Proceedings of the European Conference on Computer Vision, 2016, pp. 694–711.
[6] D. Ulyanov, V. Lebedev, Andrea, and V. Lempitsky, “Texture networks: Feed-forward synthesis of textures and stylized images,” in Proceedings of the International Conference on Machine Learning, vol. 48, 2016, pp. 1349–1357.
[7] X. Huang and S. Belongie, “Arbitrary style transfer in real-time with adaptive instance normalization,” in IEEE International Conference on Computer Vision, 2017, pp. 1510–1519.
[8] M.-Y. Liu and O. Tuzel, “Coupled generative adversarial networks,” in Advances in Neural Information Processing Systems, 2016, pp. 469–477.
[9] M.-Y. Liu, T. Breuel, and J. Kautz, “Unsupervised image-to-image translation networks,” in Advances in Neural Information Processing Systems, 2017, pp. 700–708.
[10] J. Zhu, T. Park, P. Isola, and A. A. Efros, “Unpaired image-to-image translation using cycle-consistent adversarial networks,” in IEEE International Conference on Computer Vision, 2017, pp. 2242–2251.
[11] X. Huang, M.-Y. Liu, S. Belongie, and J. Kautz, “Multimodal unsupervised image-to-image translation,” in Proceedings of the European Conference on Computer Vision, 2018, pp. 179–196.
[12] Y. Taigman, A. Polyak, and L. Wolf, “Unsupervised cross-domain image generation,” in International Conference on Learning Representations, 2017.
[13] H. Hou, J. Huo, and Y. Gao, “Cross-domain adversarial autoencoder for fine grained category preserving image translation,” in International Joint Conference on Neural Networks, 2020, pp. 1–7.
[14] I. Goodfellow, J. Pouget-Abadie, M. Mirza, B. Xu, D. Warde-Farley, S. Ozair, A. Courville, and Y. Bengio, “Generative adversarial nets,” in Advances in Neural Information Processing Systems, 2014, pp. 2672–2680.
[15] W. Li, W. Xiong, H. Liao, J. Huo, Y. Gao, and J. Luo, “Carigan: Caricature generation through weakly paired adversarial learning,” Neural Networks, vol. 132, pp. 66–74, 2020.
[16] Y. Shi, D. Deb, and A. K. Jain, “Warpgan: Automatic caricature generation,” in IEEE Conference on Computer Vision and Pattern Recognition, 2019, pp. 10762–10771.
[17] K. Cao, J. Liao, and L. Yuan, “Carigans: unpaired photo-to-caricature translation,” ACM Transactions on Graphics (TOG), vol. 37, no. 6, pp. 1–14, 2018.
[18] P. Isola, J. Zhu, T. Zhou, and A. A. Efros, “Image-to-image translation with conditional adversarial networks,” in IEEE Conference on Computer Vision and Pattern Recognition, 2017, pp. 5967–5976.
[19] H. Tang, H. Liu, and N. Sebe, “Unified generative adversarial networks for controllable image-to-image translation,” IEEE Transactions on Image Processing, vol. 29, pp. 8916–8929, 2020.
[20] Z. Gan, L. Chen, W. Wang, Y. Pu, Y. Zhang, H. Liu, C. Li, and L. Carin, “Triangle generative adversarial networks,” in Advances in Neural Information Processing Systems, 2017, pp. 5247–5256.
[21] J. Donahue, P. Krähenbühl, and T. Darrell, “Adversarial feature learning,” arXiv preprint, 2016.
[22] A. Almahairi, S. Rajeshwar, A. Sordoni, P. Bachman, and A. Courville, “Augmented CycleGAN: Learning many-to-many mappings from unpaired data,” in Proceedings of the International Conference on Machine Learning, vol. 80, 2018, pp. 195–204.
[23] S. E. Brennan, “Caricature generator: The dynamic exaggeration of faces by computer,” Leonardo, vol. 18, no. 3, pp. 170–178, 1985.
[24] L. Liang, H. Chen, Y.-Q. Xu, and H.-Y. Shum, “Example-based caricature generation with exaggeration,” in Pacific Conference on Computer Graphics and Applications, 2002, pp. 386–393.
[25] R. N. Shet, K. H. Lai, E. A. Edirisinghe, and P. W. H. Chung, “Use of neural networks in automatic caricature generation: An approach based on drawing style capture,” in Pattern Recognition and Image Analysis, 2005, pp. 343–351.
[26] F. Cole, D. Belanger, D. Krishnan, A. Sarna, I. Mosseri, and W. T. Freeman, “Synthesizing normalized faces from facial identity features,” in IEEE Conference on Computer Vision and Pattern Recognition, 2017, pp. 3386–3395.
[27] X. Mao, Q. Li, H. Xie, R. Y. K. Lau, Z. Wang, and S. P. Smolley, “Least squares generative adversarial networks,” in IEEE International Conference on Computer Vision, 2017, pp. 2813–2821.
[28] J. Huo, Y. Gao, Y. Shi, and H. Yin, “Variation robust cross-modal metric learning for caricature recognition,” in Proceedings of the on Thematic Workshops of ACM Multimedia 2017, 2017, pp. 340–348.
[29] J. Huo, W. Li, Y. Shi, Y. Gao, and H. Yin, “Webcaricature: a benchmark for caricature recognition,” in British Machine Vision Conference, 2018.
[30] A. Makhzani, J. Shlens, N. Jaitly, I. Goodfellow, and B. Frey, “Adversarial autoencoders,” arXiv preprint arXiv:1511.05644.
[31] M. Heusel, H. Ramsauer, T. Unterthiner, B. Nessler, and S. Hochreiter, “Gans trained by a two time-scale update rule converge to a local nash equilibrium,” in Advances in Neural Information Processing Systems, 2017, pp. 6626–6637.
[32] J. Deng, J. Guo, N. Xue, and S. Zafeiriou, “Arcface: Additive angular margin loss for deep face recognition,” in IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2019, pp. 4685–4694.
Haodi Hou received the BSc from Nanjing University, China in 2017. Currently, he is a MSc candidate in the Department of Computer Science and Technology at Nanjing University and a member of RL Group, which is led by professor Yang Gao. His research interests are mainly on Neural Networks, Generative Adversarial Nets and their application in image translation and face recognition.
Jing Huo received the PhD degree from the Department of Computer Science and Technology, Nanjing University, Nanjing, China, in 2017. She is currently an Assistant Researcher with the Department of Computer Science and Technology, Nanjing University. Her current research interests include machine learning and computer vision, with a focus on subspace learning, adversarial learning and their applications to heterogeneous face recognition and cross-modal face generation.
Jing Wu received the BSc and MSc degrees from Nanjing University, China in 2002 and 2005 respectively. She received the PhD degree in computer science from the University of York, UK in 2009. She worked as a research associate in Cardiff University subsequently, and is currently a lecturer in computer science and informatics at Cardiff University. Her research interests are on image-based 3D reconstruction and its applications. She is a member of ACM and BMVA.
Yu-Kun Lai received the bachelor’s and PhD degrees in computer science from Tsinghua University, in 2003 and 2008, respectively. He is currently a Professor with the School of Computer Science & Informatics, Cardiff University. His research interests include computer graphics, geometry processing, image processing, and computer vision. He is on the editorial boards of Computer Graphics Forum and The Visual Computer.
Yang Gao (M’05) received the Ph.D. degree in computer software and theory from Nanjing University, Nanjing, China, in 2000. He is a Professor with the Department of Computer Science and Technology, Nanjing University. He has published over 100 papers in top conferences and journals. His current research interests include artificial intelligence and machine learning.
