Improved error rates for sparse (group) learning with Lipschitz loss functions

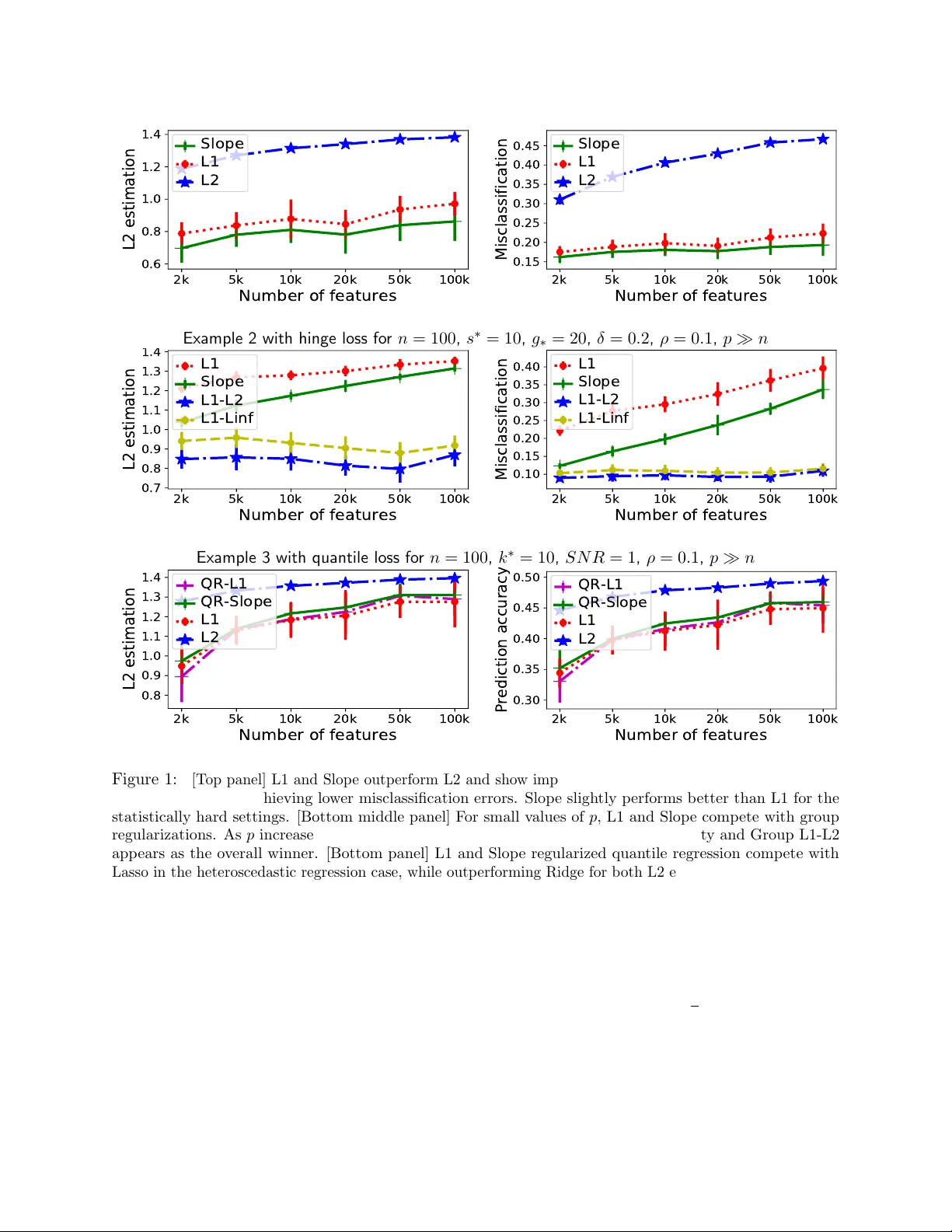

We study a family of sparse estimators defined as minimizers of an empirical Lipschitz loss function (examples include the hinge loss, the logistic loss, and the quantile regression loss) combined with a convex, sparse or group-sparse regularization. In par…
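
As a rough illustration of the estimator family described above, the following sketch writes out the three named losses and a regularized empirical objective. The function names, the `lam` weight, and the choice of an L1 penalty and a group penalty (sum of Euclidean norms over groups) are assumptions made for illustration only; the excerpt does not specify the exact regularizers or notation used in the paper.

```python
import math

def hinge_loss(y, t):
    """Hinge loss max(0, 1 - y*t) for a label y in {-1, +1} and a score t."""
    return max(0.0, 1.0 - y * t)

def logistic_loss(y, t):
    """Logistic loss log(1 + exp(-y*t)), evaluated in a numerically stable form."""
    z = -y * t
    return max(z, 0.0) + math.log1p(math.exp(-abs(z)))

def quantile_loss(y, t, tau=0.5):
    """Quantile (pinball) loss at level tau for a real-valued response y."""
    r = y - t
    return max(tau * r, (tau - 1.0) * r)

def l1_penalty(beta):
    """Assumed sparse regularizer: sum of absolute coefficients."""
    return sum(abs(b) for b in beta)

def group_l1_penalty(beta, groups):
    """Assumed group-sparse regularizer: sum of Euclidean norms over index groups."""
    return sum(math.sqrt(sum(beta[j] ** 2 for j in g)) for g in groups)

def objective(beta, X, y, loss, lam, penalty):
    """Empirical mean loss of the linear scores x_i . beta, plus lam * penalty(beta)."""
    n = len(y)
    fit = sum(
        loss(y[i], sum(x * b for x, b in zip(X[i], beta))) for i in range(n)
    ) / n
    return fit + lam * penalty(beta)

def max_slope(loss, y, grid):
    """Largest |loss(y, s) - loss(y, t)| / |s - t| over distinct grid points.

    A numerical sanity check of the Lipschitz property in the score argument,
    not a proof.
    """
    best = 0.0
    for s in grid:
        for t in grid:
            if s != t:
                best = max(best, abs(loss(y, s) - loss(y, t)) / abs(s - t))
    return best
```

All three losses are 1-Lipschitz in the score argument `t` (for the quantile loss the constant is `max(tau, 1 - tau)`), which `max_slope` can confirm numerically on a grid. A group penalty would be passed to `objective` as, for example, `lambda b: group_l1_penalty(b, groups)`.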

Authors: Antoine Dedieu