Deep 3D Mesh Watermarking with Self-Adaptive Robustness

💡 Research Summary

The paper introduces the first deep learning‑based framework for robust 3D mesh watermarking, addressing the long‑standing limitations of traditional handcrafted algorithms. Conventional methods fall into spatial‑domain or transform‑domain categories and must be manually tuned for each anticipated attack (e.g., cropping, remeshing, noise, smoothing). Such manual design is labor‑intensive, often fails to generalize across different mesh topologies, and cannot guarantee strong robustness against all possible manipulations.

To overcome these issues, the authors propose an end‑to‑end trainable network composed of three main components: (1) a watermark embedding sub‑network, (2) a set of differentiable attack layers, and (3) a watermark extraction sub‑network. The core of the architecture is a topology‑agnostic Graph Convolutional Network (GCN). Unlike standard GCNs that rely on fixed vertex ordering or edge indices, the proposed GraphConv operation uses isotropic (equal) weights for all neighboring vertices and normalizes by vertex degree. This design makes the convolution independent of mesh topology, allowing the same model to process arbitrary, non‑template meshes without re‑training.
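The isotropic, degree-normalized aggregation described above can be sketched in plain Python. This is a minimal illustration under stated assumptions, not the authors' implementation: the function names, the separate self weight `w_self`, and the bias term are all hypothetical choices made for the example.

```python
def build_neighbors(num_vertices, faces):
    """Collect the 1-ring neighbours of every vertex from triangle faces."""
    neighbors = [set() for _ in range(num_vertices)]
    for a, b, c in faces:
        neighbors[a].update((b, c))
        neighbors[b].update((a, c))
        neighbors[c].update((a, b))
    return neighbors


def matvec(weight, x):
    """Multiply an (out x in) weight matrix by a length-in feature vector."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in weight]


def graph_conv(features, neighbors, w_self, w_neigh, bias):
    """Isotropic graph convolution (illustrative form, assumed here).

    Every neighbour shares the single matrix w_neigh, and the neighbour
    sum is divided by the vertex degree, so the result does not depend
    on how neighbours are ordered or indexed.
    """
    out = []
    for i, x in enumerate(features):
        ring = neighbors[i]
        # Degree-normalised sum: each neighbour contributes with equal weight.
        mean = [
            sum(features[j][k] for j in ring) / len(ring) if ring else 0.0
            for k in range(len(x))
        ]
        h_self = matvec(w_self, x)       # hypothetical self term
        h_neigh = matvec(w_neigh, mean)  # shared neighbour term
        out.append([s + n + b for s, n, b in zip(h_self, h_neigh, bias)])
    return out
```

Because the layer only averages over each vertex's neighbours, relabeling the vertices of a mesh simply relabels the output rows, which is the property that lets one set of weights serve meshes with different connectivity.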

Embedding Sub‑Network

The original mesh (V_in, F_in) is fed into a feature learning module consisting of five cascaded Graph Residual blocks, which produces a 64‑dimensional feature vector for every vertex. In parallel, a watermark encoder compresses the binary watermark w_in into a latent vector z_w, which is expanded (copied once per vertex) to match the number of vertices and concatenated with the original coordinates and the learned features. The concatenated tensor passes through an aggregation module (two Graph Residual blocks plus an extra GraphConv layer) that outputs the modified vertex positions V_wm of the watermarked mesh M_wm. Because the “Expanding” operation gives every vertex the same copy of z_w, the embedding does not depend on vertex order, which matters because many attacks reorder vertices.
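The expanding and concatenation step can be sketched as follows. This is a minimal sketch that assumes "expanding" means repeating the latent vector for every vertex; the function name and the latent size used in the usage note are hypothetical.

```python
def expand_and_concat(vertices, features, z_w):
    """Build the per-vertex input of the aggregation module.

    vertices: list of (x, y, z) coordinates
    features: list of per-vertex feature vectors (64-dimensional in the paper)
    z_w:      watermark latent vector (its length is an assumption here)
    """
    if len(vertices) != len(features):
        raise ValueError("vertices and features must have the same length")
    # The same latent is appended to every row, so no vertex is treated
    # differently because of its index.
    return [list(v) + list(f) + list(z_w) for v, f in zip(vertices, features)]
```

With 64-dimensional features and, say, an 8-dimensional latent, each row would have 3 + 64 + 8 = 75 entries; shuffling the vertices only shuffles the rows.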

Attack Layers

During training, one of four simulated attacks is randomly applied to M_wm: (i) random 3‑D rotation, (ii) zero‑mean Gaussian noise, (iii) Taubin smoothing, and (iv) cropping (partial mesh removal). Each attack is implemented as a differentiable layer, enabling back‑propagation through the attack. This forces the embedding and extraction networks to learn representations that survive the specific distortions, achieving what the authors term “adaptive robustness.”
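The four attacks can be illustrated with a small plain-Python sketch. It only shows the geometry of each operation; it is not differentiable as written (an autograd framework would be needed for that), and the parameter values (`sigma`, `keep_ratio`, `lam`, `mu`), the cropping rule, and all function names are assumptions made for the example rather than the paper's settings.

```python
import math
import random


def build_neighbors(num_vertices, faces):
    """Collect the 1-ring neighbours of every vertex from triangle faces."""
    neighbors = [set() for _ in range(num_vertices)]
    for a, b, c in faces:
        neighbors[a].update((b, c))
        neighbors[b].update((a, c))
        neighbors[c].update((a, b))
    return neighbors


def rotate(vertices, axis, angle):
    """Rotate vertices about an axis through the origin (Rodrigues' formula)."""
    norm = math.sqrt(sum(a * a for a in axis))
    kx, ky, kz = (a / norm for a in axis)
    c, s = math.cos(angle), math.sin(angle)
    out = []
    for x, y, z in vertices:
        dot = kx * x + ky * y + kz * z
        cx, cy, cz = ky * z - kz * y, kz * x - kx * z, kx * y - ky * x  # k x v
        out.append((x * c + cx * s + kx * dot * (1 - c),
                    y * c + cy * s + ky * dot * (1 - c),
                    z * c + cz * s + kz * dot * (1 - c)))
    return out


def add_gaussian_noise(vertices, sigma, rng):
    """Add zero-mean Gaussian noise to every coordinate."""
    return [tuple(c + rng.gauss(0.0, sigma) for c in v) for v in vertices]


def _laplacian_step(vertices, neighbors, factor):
    """Move each vertex by factor times the offset to its neighbours' mean."""
    out = []
    for i, v in enumerate(vertices):
        ring = neighbors[i]
        if not ring:
            out.append(tuple(v))
            continue
        mean = [sum(vertices[j][k] for j in ring) / len(ring) for k in range(3)]
        out.append(tuple(v[k] + factor * (mean[k] - v[k]) for k in range(3)))
    return out


def taubin_smooth(vertices, faces, lam=0.5, mu=-0.53, iterations=1):
    """Taubin smoothing: a shrinking step (lam > 0) then an inflating step (mu < 0)."""
    neighbors = build_neighbors(len(vertices), faces)
    for _ in range(iterations):
        vertices = _laplacian_step(vertices, neighbors, lam)
        vertices = _laplacian_step(vertices, neighbors, mu)
    return vertices


def crop(vertices, faces, keep_ratio):
    """Keep the vertices with the smallest x (hypothetical rule) and reindex faces."""
    n_keep = max(1, int(round(len(vertices) * keep_ratio)))
    order = sorted(range(len(vertices)), key=lambda i: vertices[i][0])
    kept = sorted(order[:n_keep])
    remap = {old: new for new, old in enumerate(kept)}
    new_faces = [tuple(remap[i] for i in f)
                 for f in faces if all(i in remap for i in f)]
    return [vertices[i] for i in kept], new_faces


def random_attack(vertices, faces, rng):
    """Apply one of the four attacks, chosen at random."""
    name = rng.choice(["rotation", "noise", "smoothing", "cropping"])
    if name == "rotation":
        axis = [rng.gauss(0.0, 1.0) for _ in range(3)]
        return name, rotate(vertices, axis, rng.uniform(0.0, 2.0 * math.pi)), faces
    if name == "noise":
        return name, add_gaussian_noise(vertices, 0.01, rng), faces
    if name == "smoothing":
        return name, taubin_smooth(vertices, faces), faces
    new_vertices, new_faces = crop(vertices, faces, keep_ratio=0.8)
    return name, new_vertices, new_faces
```

A training loop would call something like `random_attack(V_wm, F_in, random.Random(seed))` once per sample, so the extractor sees a different distortion each time.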

Extraction Sub‑Network

The attacked mesh M_att (vertices V_att) is processed by the same feature learning module to obtain per‑vertex features F_no. Global average pooling aggregates these features into a single 64‑dimensional vector, which a two‑layer MLP then maps to the extracted watermark w_ext. Because averaging gives the same result for any vertex order, the extractor handles vertex‑reordering attacks naturally.
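The pooling and decoding stage can be sketched in a few lines. This is a rough illustration only: the ReLU hidden activation, the sigmoid output, and the function names are assumptions, since the summary only states that a two-layer MLP follows global average pooling.

```python
import math


def global_average_pool(features):
    """Average per-vertex features into one vector (order-independent)."""
    n = len(features)
    dim = len(features[0])
    return [sum(f[k] for f in features) / n for k in range(dim)]


def linear(weight, bias, x):
    """Affine layer: weight is (out x in), bias has length out."""
    return [sum(w * xi for w, xi in zip(row, x)) + b
            for row, b in zip(weight, bias)]


def sigmoid(z):
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def extract_watermark(features, w1, b1, w2, b2):
    """Pool the features, then decode bit probabilities with a two-layer MLP."""
    pooled = global_average_pool(features)
    hidden = [max(0.0, h) for h in linear(w1, b1, pooled)]  # ReLU (assumed)
    return [sigmoid(z) for z in linear(w2, b2, hidden)]     # sigmoid (assumed)
```

Since the only operation that touches all vertices is a mean, permuting the rows of `features` leaves the output unchanged up to floating-point rounding, and a cropped mesh with fewer vertices can be fed through the same weights.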

Loss Functions

Three losses guide training:

- Watermark loss (MSE between w_in and w_ext) enforces accurate recovery.

- Mesh distortion loss (MSE between original and watermarked vertex positions) limits overall geometric change.

- Curvature consistency loss penalizes differences in local curvature between the original and watermarked meshes, preserving visual smoothness and preventing perceptible artifacts.
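The three terms above can be combined as in the sketch below. It rests on several assumptions: the curvature term is approximated by the offset from each vertex to the mean of its neighbours (a simple stand-in, not necessarily the paper's curvature measure), the terms are combined as a weighted sum, and the default weights are placeholders.

```python
def mse(a, b):
    """Mean squared error between two equally sized flat sequences."""
    if len(a) != len(b) or not a:
        raise ValueError("inputs must be non-empty and of equal length")
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)


def flatten(rows):
    """Flatten a list of vectors into one list of numbers."""
    return [c for row in rows for c in row]


def laplacian_vectors(vertices, neighbors):
    """Offset from each vertex to its neighbours' mean (crude curvature proxy)."""
    out = []
    for v, ring in zip(vertices, neighbors):
        if ring:
            out.append([sum(vertices[j][k] for j in ring) / len(ring) - v[k]
                        for k in range(3)])
        else:
            out.append([0.0, 0.0, 0.0])
    return out


def total_loss(w_in, w_ext, v_in, v_wm, neighbors, weights=(1.0, 1.0, 1.0)):
    """Weighted sum of watermark, distortion, and curvature-proxy losses."""
    l_w = mse(w_in, w_ext)                      # watermark recovery
    l_d = mse(flatten(v_in), flatten(v_wm))     # vertex displacement
    l_c = mse(flatten(laplacian_vectors(v_in, neighbors)),
              flatten(laplacian_vectors(v_wm, neighbors)))  # local shape
    a, b, c = weights  # placeholder weights, not taken from the paper
    return a * l_w + b * l_d + c * l_c, (l_w, l_d, l_c)
```

The two geometric terms penalize different things: translating the whole mesh raises the distortion term but leaves the curvature proxy at zero, whereas a small bump on one vertex changes both.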

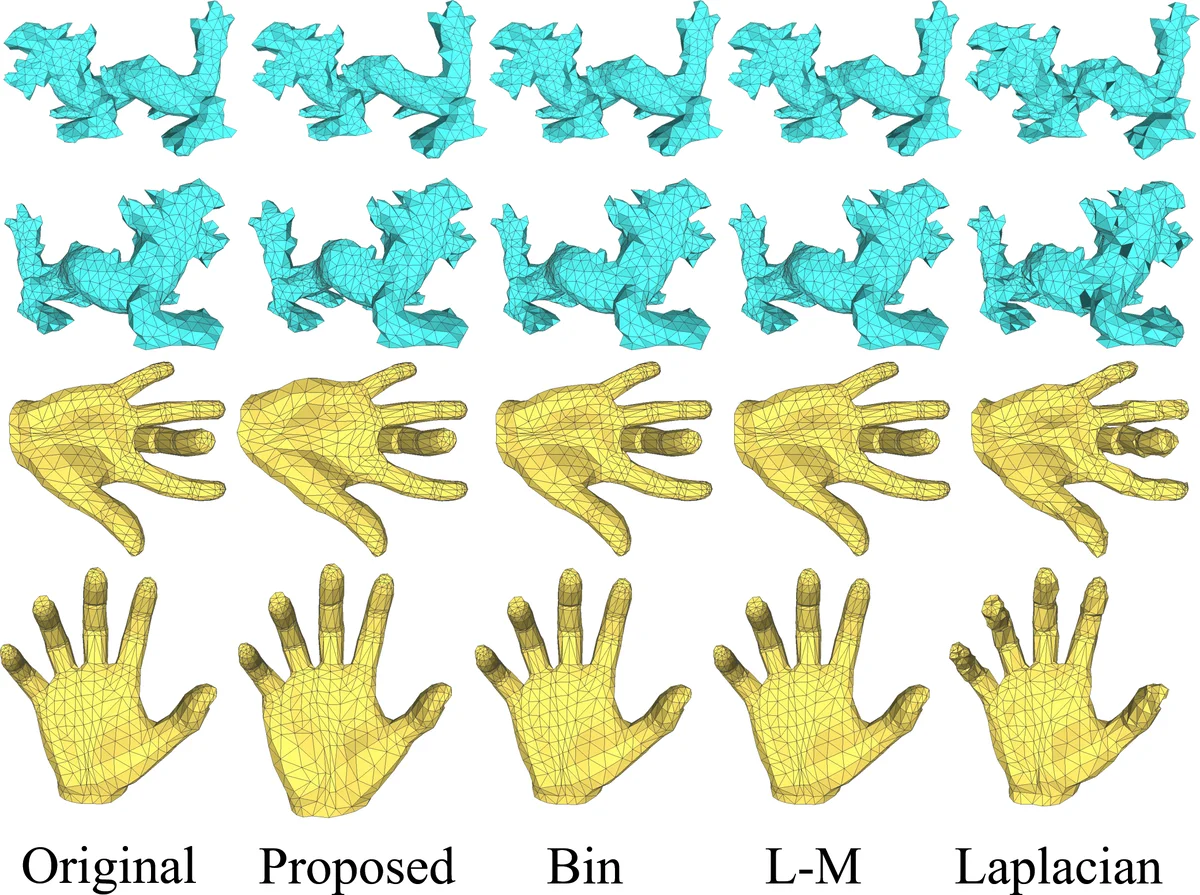

Experimental Evaluation

The method is evaluated on the Stanford Bunny and ModelNet datasets under the four attack types, both individually and in mixed scenarios. Baselines include representative spatial‑domain and transform‑domain watermarking algorithms. Results show:

- Higher watermark recovery rates (5–15 % absolute improvement) across all attacks, especially notable for cropping and remeshing where traditional methods often fail.

- Comparable visual quality: PSNR > 38 dB and SSIM close to 1 relative to the original, which the summary attributes to the curvature loss.

- Faster processing: embedding and extraction times reduced by roughly 30 % relative to optimization‑based baselines.

- Strong transferability: a model trained on one mesh topology can be directly applied to meshes with different connectivity, maintaining > 90 % recovery.
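The summary does not say how the recovery rate is computed. A common choice, assumed here purely for illustration, is bit accuracy: threshold each extracted value and count the fraction of bits that match the embedded watermark. The function name and the 0.5 threshold are hypothetical.

```python
def bit_accuracy(w_in, w_ext, threshold=0.5):
    """Fraction of watermark bits recovered after thresholding w_ext."""
    if len(w_in) != len(w_ext) or not w_in:
        raise ValueError("watermarks must be non-empty and of equal length")
    decoded = [1 if p >= threshold else 0 for p in w_ext]
    matches = sum(1 for bit, d in zip(w_in, decoded) if int(bit) == d)
    return matches / len(w_in)
```

Under this definition, a figure such as "> 90 % recovery" would mean that more than nine out of ten embedded bits are decoded correctly on average.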

Contributions

- First application of deep learning to 3D mesh watermarking, opening a new research direction.

- Introduction of a topology‑agnostic GCN that works on arbitrary meshes.

- Integration of differentiable attack layers for scenario‑specific adaptive robustness.

- Curvature‑based loss to promote imperceptibility.

- Comprehensive experiments reporting robustness across the tested attacks, efficiency, and visual fidelity that surpass traditional methods.

Future Work

Potential extensions include handling a broader spectrum of attacks (e.g., compression, texture alteration), scaling to higher‑capacity multi‑bit watermarks, real‑time deployment within rendering pipelines, and adapting the framework to other 3D data representations such as point clouds or volumetric grids. Such advances could benefit a wide range of industries—medical imaging, CAD, gaming, and digital heritage—by providing robust, low‑overhead protection of valuable 3D assets.
