What Do Compressed Deep Neural Networks Forget?

Deep neural network pruning and quantization techniques have demonstrated it is possible to achieve high levels of compression with surprisingly little degradation to test set accuracy. However, this measure of performance conceals significant differ…

Authors: Sara Hooker, Aaron Courville, Gregory Clark

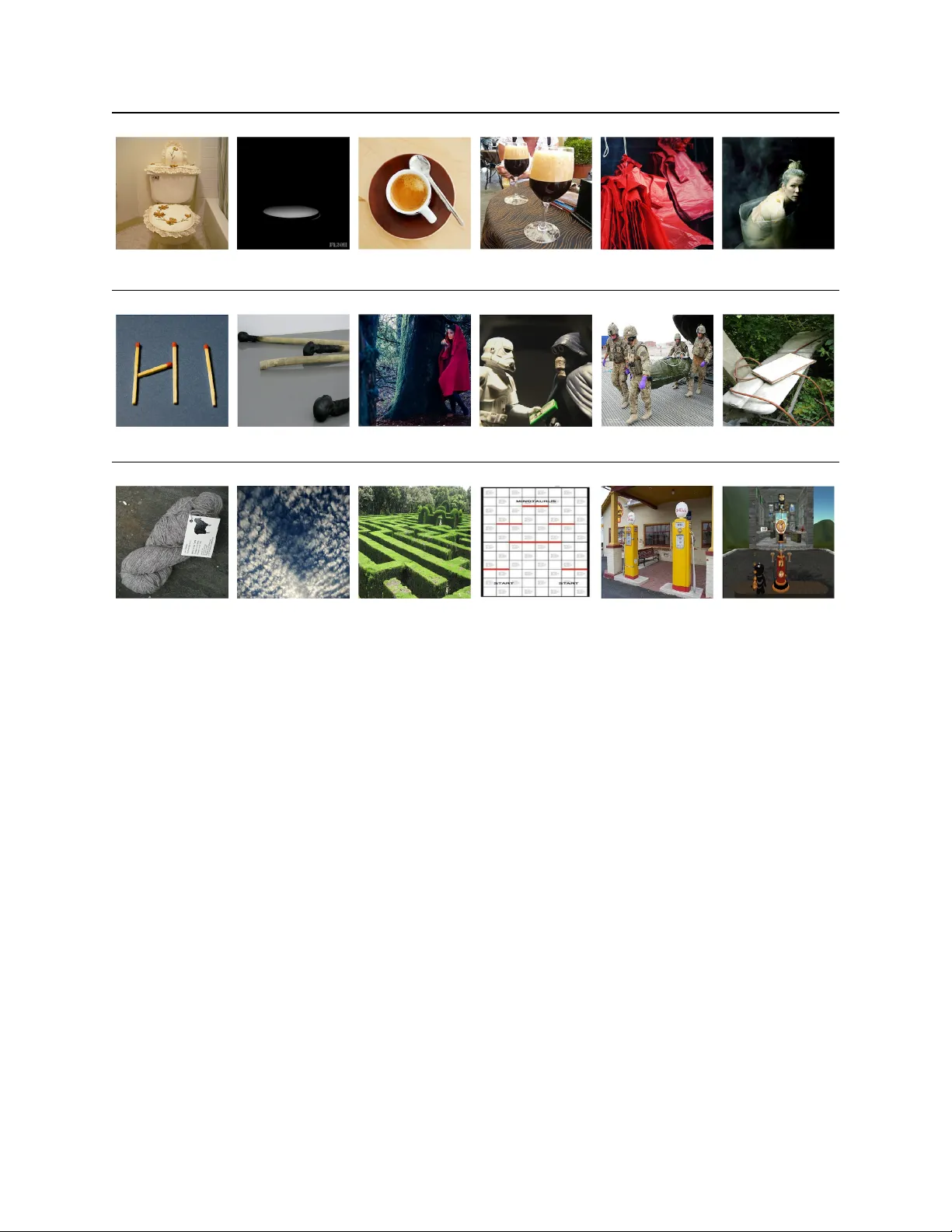

W H A T D O C O M P R E S S E D D E E P N E U R A L N E T W O R K S F O R G E T ? Sara Hooker ∗ Google Brain Aaron Cour ville MILA Gregory Clark Google Y ann Dauphin Google Brain Andrea Fr ome Google Brain A B S T R AC T Deep neural network pruning and quantization techniques ha ve demonstrated it is possible to achiev e high lev els of compression with surprisingly little degradation to test set accuracy . Ho wev er , this measure of performance conceals significant differences in ho w different classes and images are impacted by model compression techniques. W e find that models with radically dif ferent numbers of weights ha ve comparable top-line performance metrics but di verge considerably in beha vior on a narrow subset of the dataset. This small subset of data points, which we term Pruning Identified Exemplars (PIEs), are systematically more impacted by the introduction of sparsity . Our work is the first to provide a formal framework for auditing the disparate harm incurred by compression and a way to quantify the trade- of fs in v olved. An understanding of this disparate impact is critical giv en the widespread deployment of compressed models in the wild. 1 Introduction Between infanc y and adulthood, the number of synapses in our brain first multiply and then fall. Synaptic pruning improv es efficienc y by removing redundant neurons and strengthening synaptic connections that are most useful for the en vironment (Rakic et al., 1994). Despite losing 50% of all synapses between age two and ten, the brain continues to function (K olb & Whishaw, 2009; So well et al., 2004). The phrase "Use it or lose it" is frequently used to describe the en vironmental influence of the learning process on synaptic pruning, ho wev er there is little scientific consensus on what exactly is lost (Casey et al., 2000). In this work, we ask what is lost when we compress a deep neural network. W ork since the 1990s has shown that deep neural networks can be pruned of “e xcess capacity” in a similar fashion to synaptic pruning (Cun et al., 1990; Hassibi et al., 1993a; No wlan & Hinton, 1992; W eigend et al., 1991). At f ace v alue, compression appears to promise you can hav e it all. Deep neural networks are remarkably tolerant of high le vels of pruning and quantization with an almost negligible loss to top-1 accurac y (Han et al., 2015; Ullrich et al., 2017; Liu et al., 2017; Louizos et al., 2017; Collins & K ohli, 2014; Lee et al., 2018). These more compact networks are frequently f av ored in resource constrained settings; compressed models require less memory , ener gy consumption and hav e lower inference latency (Reagen et al., 2016; Chen et al., 2016; Theis et al., 2018; Kalchbrenner et al., 2018; V alin & Sk oglund, 2018; T essera et al., 2021). The ability to compress networks with seemingly so little degradation to generalization performance is puzzling. Ho w can networks with radically different representations and number of parameters ha ve compa- rable top-le vel metrics? One possibility is that test-set accurac y is simply not a precise enough measure to capture ho w compression impacts the generalization properties of the model. Despite the widespread use of compression techniques, articulating the trade-offs of compression has ov erwhelmingly focused on change to ov erall top-1 accuracy for a gi ven lev el of compression. The cost to top-1 accuracy appears minimal if it is spread uniformally across all classes, b ut what if the cost is concentrated in only a fe w classes? Ar e certain types of examples or classes dispr oportionately ∗ Correspondence should be directed to shooker@google.com W H A T D O C O MP R E S S E D D E E P N E U R A L N E TO W R K S F O R G E T ? toilet seat espresso plastic bag Non-PIE PIE Non-PIE PIE Non-PIE PIE matchstick cloak stretcher Non-PIE PIE Non-PIE PIE Non-PIE PIE wool maze gas pump Non-PIE PIE Non-PIE PIE Non-PIE PIE Figure 1: Pruning Identified Exemplars (PIEs) are images where there is a high le v el of disagreement between the predictions of pruned and non-pruned models. V isualized are a sample of ImageNet PIEs alongside a non-PIE image from the same class. Abo ve each image pair is the true label. impacted by compr ession? In this work, we propose a formal frame work to audit the impact of compression on generalization properties be yond top-line metrics. Our w ork is the first to our kno wledge that asks how dis-aggregated measures of model performance at a class and e xemplar le vel are impacted by compression. Contributions W e run thousands of large scale experiments and establish consistent results across multiple datasets— CIF AR-10 (Krizhe vsk y, 2012), CelebA (Liu et al., 2015) and ImageNet (Deng et al., 2009), widely used pruning and quantization techniques, and model architectures. W e find that: 1. T op-line metrics such as top-1 or top-5 test-set accuracy hide critical details in the ways that pruning impacts model generalization. Certain parts of the data distribution are far more sensiti ve to v arying the number of weights in a network, and bear the brunt of the cost of varying the weight representation. 2. The examples most impacted by pruning, which we term Pruning Identified Exemplar s (PIEs) , are more challenging for both models and humans to classify . W e conduct a human study and find that PIEs tend to be mislabelled, of lower quality , depict multiple objects, or require fine-grained classification. Compression impairs the model’ s ability to predict accurately on the long-tail of less frequent instances. 3. Pruned networks are more sensitiv e to natural adversarial images and corruptions. This sensitivity is amplified at higher le vels of compression. 4. While all compression techniques that we e v aluate ha ve a non-uniform impact, not all methods are created equal. High levels of pruning incur a far higher disparate impact than is observed for the quantization techniques that we e valuate. 2 W H A T D O C O M P R E S S E D D E E P N E U R A L N E T OW R K S F O R G E T ? Our w ork pro vides intuition into the role of capacity in deep neural networks and a mechanism to audit the trade-of fs incurred by compression. Our findings suggest that caution should be used before deplo ying compressed networks to sensiti ve domains. Our PIE methodology could concei v ably be explored as a mechanism to surface a tractable subset of atypical examples for further human inspection (Leibig et al., 2017; Zhang, 1992), to choose not to classify certain examples when the model is uncertain (Bartlett & W egkamp, 2008; Cortes et al., 2016), or to aid interpretability as a case based reasoning tool to e xplain model behavior (Kim et al., 2016; Caruana, 2000; Hook er et al., 2019). 2 Methodology and Experiment Framework 2.1 Preliminaries W e consider a supervised classification problem where a deep neural network is trained to approximate the function F that maps an input v ariable X to an output variable Y , formally F : X 7→ Y . The model is trained on a training set of N images D = { ( x i , y i ) } N i =1 , and at test time makes a prediction y ∗ i for each image in the test set. The true labels y i are each assumed to be one of C classes, such that y i = [1 , ...., C ] . A reasonable response to our desire for more compact representations is to simply train a netw ork with fe wer weights. Howe v er , as of yet, starting out with a compact dense model has not yielded competiti ve test-set performance (Li et al., 2020; Zhu & Gupta, 2017b). Instead, research has centered on a more tractable direction of in vestigation – the model begins training with "excess capacity" and the goal is to remov e the parts that are not strictly necessary for the task by/at the end of training. A pruning method P identifies the subset of weights to set to zero. A sparse model function, ˆ f p t , is one where a fraction t of all model weights are set to zero. Equating weight v alue to zero ef fecti vely remov es the contrib ution of a weight, as multiplication with inputs no longer contrib utes to the activ ation. A non-compressed model function is one where all weights are trainable ( t = 0 ). W e refer to the o verall model accurac y as β M t . In contrast, t = 0 . 9 indicates that 90% of model weights are removed o ver the course of training, lea ving a maximum of 10% non-zero weights. 2.2 Class lev el measure of impact If the impact of compression was completely uniform, the relativ e relationship between class lev el accuracy β c t and o v erall model performance will be unaltered. This forms our null hypothesis ( H 0 ). W e must decide for each class c whether to reject the null hypothesis and accept the alternate hypothesis ( H 1 ) - the relati ve change to class le vel recall dif fers from the change to o verall accurac y in either a positi ve or ne gati ve direction: H 0 : β c 0 β M 0 = β c t β M t (1) H 1 : β c 0 β M 0 6 = β c t β M t (2) W elch’ s t-test Ev aluating whether the dif ference between the samples of mean-shifted class accurac y from compressed and non-compressed models is “real” amounts to determining whether these two data samples are drawn from the same underlying distribution, which is the subject of a large body of goodness of fit literature (D’Agostino & Stephens, 1986; Anderson & Darling, 1954; Huber-Carol et al., 2002). W e independently train a population of K models for each compression method, dataset, and model that we consider . Thus, we hav e a sample S c t of accuracy metrics per class c at each lev el of compression t . For each class c , we we use a two-tailed, independent W elch’ s t-test (W elch, 1947) to determine whether the mean-shifted class accuracy S c t = { β c t,k − β M t,k } K k =1 of the samples S c t and S c 0 dif fer significantly . If the p -v alue < = 0 . 05 , we reject the null hypothesis and consider the class to be disparately impacted by t le vel of compression relati ve to the baseline. Controlling f or overall changes to top-line metrics Note that by comparing the relative dif ference in class accuracy S c t , we control for an y o verall dif ference in model test-set accuracy . This is important because while 3 W H A T D O C O M P R E S S E D D E E P N E U R A L N E T OW R K S F O R G E T ? small, the dif ference in top-line metrics is not zero (see T able. 2). Along with the p -v alue, for each class we report the av erage relati ve de viation in class-level accurac y , which we refer to as relative r ecall differ ence : 1 K K X k =1 β c t,k β c 0 ,k ! (3) T able 1: Distributions of top-1 accurac y for populations of independently quantized and pruned models for ImageNet, CIF AR-10 and CelebA. For ImageNet, we also include top-5. Note that the scale of the x-axis dif fers between plots. 2.3 Pruning Identified Exemplars In addition to measuring the class le v el impact of compression, we are interested in ho w model predictiv e behavior changes through the compression process. Giv en the limitations of un-calibrated probabilities in deep neural netw orks (Guo et al., 2017; K endall & Gal, 2017), we focus on the le vel of disagreement between the predictions of compressed and non-compressed networks on a giv en image. Using the populations of models K described in the prior section, we construct sets of predictions Y ∗ i,t = { y ∗ i,k,t } K k =1 for a gi ven image i . For set Y ∗ i,t we find the modal label , i.e. the class predicted most frequently by the t -pruned model population for image i , which we denote y M i,t . The ex emplar is classified as a pruning identified ex emplar PIE t if and only if the modal label is dif ferent between the set of t -pruned models and the non-pruned baseline models: PIE i,t = 1 if y M i, 0 6 = y M i,t 0 otherwise W e note that there is no constraint that the non-pruned predictions for PIEs match the true label. Thus the detection of PIEs is an unsupervised protocol that can be performed at test time. 2.4 Experimental framework T asks W e ev aluate the impact of compression across three classification tasks and models: a wide ResNet model (Zagoruyko & Komodakis, 2016) trained on CIF AR-10, a ResNet-50 (He et al., 2015) trained on ImageNet, and a ResNet-18 trained on CelebA. All networks are trained with batch normalization (Iof fe & Szegedy, 2015), weight decay , decreasing learning rate schedules, and augmented training data. W e train for 32 , 000 steps (approximately 90 epochs) on ImageNet with a batch size of 1024 images, for 80 , 000 steps on CIF AR-10 with a batch size of 128 , and 10 , 000 steps on CelebA with a batch size of 256 . For ImageNet, 4 W H A T D O C O M P R E S S E D D E E P N E U R A L N E T OW R K S F O R G E T ? CIF AR-10 and CelebA, the baseline non-compressed model obtains a mean top-1 accuracy of 76 . 68% , 94 . 35% and 94 . 73% respecti vely . Our goal is to mov e beyond anecdotal observations, and to measure statistical de viations between populations of models. Thus, we report metrics and statistical significance for each dataset, model and compression v ariant across 30 independent trainings. Figure 2: Compression disproportionately impacts a small subset of ImageNet classes. Plum bars indicate the subset of examples where the impact of compression is statistically significant. Green scatter points sho w normalized recall difference which normalizes by ov erall change in model accuracy , and the bars show absolute recall difference. Left: 50% pruning. Center: 70% pruning. Right: post-training int8 dynamic range quantization. The class labels are sampled for readability . Pruning and quantization techniques considered W e ev aluate magnitude pruning as proposed by Zhu & Gupta (2017a). For pruning, we vary the end sparsity precisely for t ∈ { 0 . 3 , 0 . 5 , 0 . 7 , 0 . 9 } . For example, t = 0 . 9 indicates that 90% of model weights are removed o ver the course of training, lea ving a maximum of 10% non-zero weights. F or each le vel of pruning t , we train 30 models from random initialization. W e ev aluate three different quantization techniques: float16 quantization float16 (Micike vicius et al., 2017), hybrid dynamic range quantization with int8 weights hybrid (Alv arez et al., 2016) and fix ed-point only quantization with int8 weights created with a small representati ve dataset fixed-point (V anhouck e et al., 2011; Jacob et al., 2018). All quantization methods we e v aluate are implemented post-training, in contrast to the pruning which is applied progressi vely o ver the course of training. W e use a limited grid search to tailor the pruning schedule and hyperparameters to each dataset to maximize top-1 accurac y . W e include additional details about training methodology and pruning techniques in the supplementary material. All the code for this paper is publicly av ailable here. 5 W H A T D O C O M P R E S S E D D E E P N E U R A L N E T OW R K S F O R G E T ? 3 Results 3.1 Disparate impact of compression W e find consistent results across all datasets and compression techniques considered; a small subset of classes are disproportionately impacted. This disparate impact is far from random, with statistically significant differ - ences in class le v el recall between a population of non-compressed and compressed models. Compression induces “selective for getting” with performance on certain classes evidencing far more sensiti vity to v arying the representation of the network. This sensitivity is amplified at higher le vels of sparsity with more classes e videncing a statistically significant relativ e change in recall. For example, as seen in T able 2 at 50% sparsity 170 ImageNet classes are statistically significant which increases to 372 classes at 70% sparsity . Cannibalizing a small subset of classes Out of the classes where there is a statistically significant deviation in performance, we observe a subset of classes that benefit relative to the av erage class as well as classes that are impacted adversely . Ho we ver , the av erage absolute class decrease in recall is far larger than the av erage increase, meaning that the losses in generalization caused by pruning is f ar more concentrated than the relati ve gains. Compression cannibalizes performance on a small subset of classes to preserve a similar ov erall top-line accuracy . Comparison of quantization and pruning techniques While all the techniques we benchmark evidence disparate class lev el impact, we note that quantization appears to introduce less disparate harm. For example, the most aggressi ve form of post-training quantization considered, fix ed-point only quantization with int8 weights fixed-point , impacts the relative r ecall dif fer ence of 119 ImageNet classes in a statistically significant way . In contrast, at 90% sparsity , r elative r ecall dif fer ence is statistically significant for 637 classes. These results suggest that the representation learnt by a netw ork is far more robust to changes in precision versus remo ving the weights entirely . F or sensiti ve tasks, quantization may be more viable for practitioners as there is less systematic disparate impact. Complexity of task The impact of compression depends upon the de gree of o verparameterization present in the network gi v en the complexity of the task in question. For example, the ratio of classes that are significantly impacted by pruning was lower for CIF AR-10 than for ImageNet. One class out of ten was significantly impacted at 30% and 50% , and two classes were impacted at 90% . W e suspect that we measured less disparate impact for CIF AR-10 because, while the model has less capacity , the number of weights is still suf ficient to model the limited number of classes and lower dimensional dataset. In the next section, we le verage PIEs to characterize and gain intuition into why certain parts of the distrib ution are systematically far more sensiti ve to compression. 3.2 Pruning Identified Exemplars T o better understand why a narro w part of the data distrib uton is far more sensiti ve to compression, we ( 1 ) e v aluate whether PIEs are more dif ficult for an algorithm to classify , ( 2 ) conduct a human study to codify the attributes of a sample of PIEs and Non-PIEs, and ( 3 ) e valuate whether PIEs o ver -index on underrepresented sensiti ve attrib utes in CelebA. At e very le vel of compression, we identify a subset of PIE images that are disproportionately sensitiv e to the remov al of weights (for each of CIF AR-10, CelebA and ImageNet). The number of images classified as PIE increases with the le vel of pruning. At 90% sparsity , we classify 10 . 27% of all ImageNet test-set images as PIEs, 2 . 16% of CIF AR-10, and 16 . 17% of CelebA. T est-error on PIEs In Fig. 3, we e v aluate a random sample of ( 1 ) PIE images, ( 2 ) non-PIE images and ( 3 ) entire test-set for each of the datasets considered. W e find that PIE images are far more challenging for a non-compressed model to classify . Evaluation on PIE images alone yields substantially lower top-1 accuracy . The results are consistent across CIF AR-10 (top-1 accuracy falls from 94 . 89% to 43 . 64% ), CelebA ( 94 . 10% to 50 . 41% ), and ImageNet datasets ( 76 . 75% to 39 . 81% ). Notably , on ImageNet, we find that removing PIEs greatly improv es generalization performance. T est-set accuracy on non-PIEs increased to 81 . 20% relati ve to baseline top-1 performance of 76 . 75% . 6 W H A T D O C O M P R E S S E D D E E P N E U R A L N E T OW R K S F O R G E T ? F R AC T I O N P R U N E D T O P 1 T O P 5 C O U N T S I G N I F C L A S S E S C O U N T P I E S 0 7 6 . 6 8 9 3 . 2 5 - - 3 0 7 6 . 4 6 9 3 . 1 7 6 8 1 , 8 1 9 5 0 7 5 . 8 7 9 2 . 8 6 1 7 0 2 , 1 9 3 7 0 7 5 . 0 2 9 2 . 4 3 3 7 2 3 , 0 7 3 9 0 7 2 . 6 0 9 1 . 1 0 6 3 7 5 , 1 3 6 Q UA N T I Z AT I O N FL O A T 1 6 7 6 . 6 5 9 3 . 2 5 5 8 2 0 1 9 DY N A M I C R A N G E I N T 8 7 6 . 1 0 9 2 . 9 4 1 4 4 2 1 9 3 FI X E D - P O I N T I N T 8 7 6 . 4 6 9 3 . 1 6 1 1 9 2 0 9 3 T able 2: ImageNet top-1 and top-5 accuracy at all lev els of pruning and quantization, averaged o ver all runs. Count PIEs is the count of images classified as a Pruning Identified Ex emplars at e very compression le vel. W e include comparable tables for CelebA and CIF AR-10 in the appendix. Human study W e conducted a human study ( 85 participants) to label a random sample of 1230 PIE and non-PIE ImageNet images. Humans in the study were shown a balanced sample of PIE and non-PIE images that were selected at random and shuf fled. The classification as PIE or non-PIE was not kno wn or a v ailable to the human. What mak es PIEs differ ent fr om non-PIEs? The participants were ask ed to codify a set of attributes for each image. W e report the relative distrib ution of PIE and non-PIE after each attribute, with the higher relati ve share in bold: 1. ground truth label incorr ect or inadequate – image contains insuf ficient information for a human to arri ve at the correct ground truth label. [ 8 . 90% of non-PIEs, 20.05% of PIEs] 2. multiple-object image – image depicts multiple objects where a human may consider se veral labels to be appropriate (e.g., an image which depicts both a paddle and canoe or a desktop computer consisting of a screen , mouse , and monitor ). [ 39 . 53% of non-PIE, 59.15 % of PIEs] 3. corrupted image – image exhibits common corruptions such as motion blur , contrast, pixelation. W e also include in this cate gory images with super -imposed text or an artificial frame as well as images that are black and white rather than the typical RGB color images in ImageNet. [ 14.37% of non-PIE, 13 . 72% of PIEs] 4. fine grained classification – image inv olv es classifying an object that is semantically close to v arious other class categories present in the dataset (e.g., rock crab and fiddler crab , bassinet and cradle , cuirass and breastplate ). [ 8 . 9% of non-PIEs, 43.55% of PIEs] 5. abstract repr esentations – image depicts a class object in an abstract form such a cartoon, painting, or sculptured incarnation of the object. [ 3 . 43% of non-PIE, 5.76% of PIE] PIEs heavily o ver -index relativ e to non-PIEs on certain properties, such as ha ving an incorr ect gr ound truth label , inv olving a fine-grained classification task or multiple objects . This suggests that the task itself is often incorrectly specified. For e xample, while ImageNet is a single image classification tasks, 59% of ImageNet PIEs codified by humans were identified as multi-object images where multiple labels could be considered reasonable (vs. 39% of non-PIEs). In ImageNet, the ov er-inde xing of incorrectly labelled data and multi-object images in PIE also raises questions about whether the explosion of growth in number of weights in deep neural networks is solving a problem that is better addressed in the data cleaning pipeline. 4 Sensitivity of compr essed models to distrib ution shift Non-compressed models hav e already been shown to be v ery brittle to small shifts in the distribution that humans are rob ust. This can cause une xpected changes in model beha vior in the wild that can compromise human welfare (Zech et al., 2018). Here, we ask does compr ession amplify this brittleness? Understanding relati ve dif ferences in rob ustness helps understand the implications for AI safety of the widespread use of compressed models. 7 W H AT D O C O M P R E S S E D D E E P N E U R A L N E T O W R K S F O R G E T ? T op-1 Accuracy on PIE, All T est-Set, Non-PIE CelebA CIF AR-10 ImageNet Figure 3: A comparison of model performance on 1 ) a sample of Pruning Identified Exemplars (PIE), 2 ) the entire test-set and 3 ) a sample excluding PIEs. Inference on the non-PIE sample improves test-set top-1 accuracy relati ve to the baseline for ImageNet. Ev aluation on PIE images alone yields substantially lo wer top-1 accuracy . ImageNet-A ImageNet-C Figure 4: High lev els of compression amplify sensitivity to distrib ution shift. Left: Change in top-1 and top-5 recall of a pruned model relati ve to a non-pruned model on ImageNet-A. Right: W e measure the top-1 test-set performance on a subset of ImageNet-C corruptions of a pruned model relative to the non-pruned model on the same corruption. An extended list of all corruptions considered and top-5 accuracy is included in the supplementary material. T o answer this question, we e v aluate the sensiti vity of pruned models r elative to non-pruned models gi ven two open-source benchmarks for rob ustness: 1. ImageNet-C (Hendrycks & Dietterich, 2019) – 16 algorithmically generated corruptions (blur , noise, fog) applied to the ImageNet test-set. 2. ImageNet-A (Hendrycks et al., 2019) – a curated test set of 7 , 500 naturally adversarial images designed to produce drastically lo wer test accuracy . For each ImageNet-C corruption q ∈ Q , we compare top-1 accuracy of the pruned model ev aluated on corruption q normalized by non-pruned model performance on the same corruption. W e a verage across intensities of corruptions as described by Hendrycks & Dietterich (2019). If the relative top-1 accurac y was 0 it would mean that there is no dif ference in sensiti vity to corruptions considered. As seen in Fig. 4, pruning greatly amplifies sensitivit y to both ImageNet-C and ImageNet-A relati ve to non-pruned performance on the same inputs. For ImageNet-C, it is worth noting that relati ve de gradation 8 W H AT D O C O M P R E S S E D D E E P N E U R A L N E T O W R K S F O R G E T ? in performance is remarkably v aried across corruptions, with certain corruptions such as gaussian , shot noise , and impulse noise consistently causing far higher relativ e de gradation. At t = 90 , the high- est degradation in relativ e top-1 is shot noise ( − 40 . 11% ) and the lo west relati v e drop is brightness ( − 7 . 73% ). Sensiti vity to small distribution shifts is amplified at higher le vels of sparsity . W e include results for all corruptions and the absolute top-1 and top-5 accuracy on each corruption, lev el of pruning considered in the supplementary material T able. 8. The amplified sensitivity of smaller models to distribution shifts and the ov er -indexing of PIEs on lo w frequency attrib utes suggests that much of a models excess capacity is helpful for learning features which aid generalization on atypical or out-of-distrib ution data points. This builds upon recent w ork which suggests memorization can benefit generalization properties (Feldman & Zhang, 2020). 5 Related work The set of model compression techniques is diverse and includes research directions such as reducing the precision or bit size per model weight (quantization) (Jacob et al., 2018; Courbariaux et al., 2014; Hubara et al., 2016; Gupta et al., 2015), ef forts to start with a netw ork that is more compact with fe wer parameters, layers or computations (architecture design) (Ho ward et al., 2017; Iandola et al., 2016; K umar et al., 2017), student networks with fe wer parameters that learn from a larger teacher model (model distillation) (Hinton et al., 2015) and finally pruning by setting a subset of weights or filters to zero (Louizos et al., 2017; W en et al., 2016; Cun et al., 1990; Hassibi et al., 1993b; Ström, 1997; Hassibi et al., 1993a; Zhu & Gupta, 2017; See et al., 2016; Narang et al., 2017). In this work, we e v aluate the dis-aggre gated impact of a subset of pruning and quantization methods. Despite the widespread use of compression techniques, articulating the trade-offs of compression has ov erwhelming centered on change to ov erall accurac y for a gi ven le vel of com pression (Ström, 1997; Cun et al., 1990; Evci et al., 2019; Narang et al., 2017; Gale et al., 2019). Our work is the first to our kno wledge that asks ho w dis-aggregated measures of model performance at a class and e xemplar le v el are impacted by compression. In section 4, we also measure sensiti vity to two types of distrib ution shift – ImageNet-A and ImageNet-C. Recent work by (Guo et al., 2018; Sehwag et al., 2019) has considered sensiti vity of pruned models to a a dif ferent notion of robustness: l − p norm adversarial attacks. In contrast to adversarial robustness which measures the worst-case performance on tar geted perturbation, our results pro vide some understanding of ho w compressed models perform on subsets of challenging or corrupted natural image examples. Zhou et al. (2019) conduct an experiment which sho ws that networks which are pruned subsequent to training are more sensiti ve to the corruption of labels at training time. 6 Discussion and Future W ork The quantization and pruning techniques we ev aluate in this paper are already widely used in production systems and integrated with popular deep learning libraries. The popularity and widespread use of these techniques is dri ven by the se vere resource constraints of deploying models to mobile phones or embedded de vices (Samala et al., 2018). Many of the algorithms on your phone are likely pruned or compressed in some way . Our results suggest that a reliance on top-line metrics such as top-1 or top-5 test-set accurac y hides critical details in the ways that compression impacts model generalization. Caution should be used before deploying compressed models to sensiti v e domains such as hiring, health care diagnostics, self-driving cars, facial recognition softw are. For these domains, the introduction of pruning may be at odds with the need to guarantee a certain le vel of recall or performance for certain subsets of the dataset. Role of Capacity in Deep Neural Networks A “bigger is better” race in the number of model parameters has gripped the field of machine learning (Canziani et al., 2016; Strubell et al., 2019). Howe v er , the role of additional weights is not well understood. The ov er -indexing of PIEs on lo w frequency attrib utes suggest that non-compressed networks use the majority of capacity to encode a useful representation for these examples. 9 W H AT D O C O M P R E S S E D D E E P N E U R A L N E T O W R K S F O R G E T ? This costly approach to learning an appropriate mapping for a small subset of examples may be better solved in the data pipeline. A uditing and improving compressed models Our methodology offers one w ay for humans to better un- derstand the trade-offs incurred by compression and surface challenging examples for human judgement. Identifying harm is the first step in proposing a remedy , and we anticipate our work may spur focus on de veloping ne w compression techniques that improve upon the disparate impact we identify and characterize in this work. Limitations There is substantial ground we were not able to address within the scope of this work. Open questions remain about the implications of these findings for other possible desirable objecti ves such as fairness.Underserv ed areas worthy of future consideration include e v aluating the impact of compression on additional domains such as language and audio, and le veraging these insights to explicitly optimize for compressed models that also minimize the disparate impact on underrepresented data attributes. Acknowledgements W e thank the generosity of our peers for valuable input on earlier versions of this work. In particular, we would like to ackno wledge the input of Jonas Kemp, Simon K ornblith, Julius Adebayo, Hugo Larochelle, Dumitru Erhan, Nicolas Papernot, Catherine Olsson, Cliff Y oung, Martin W attenberg, Utku Evci, James W exler , T rev or Gale, Melissa Fabros, Prajit Ramachandran, Pieter Kindermans, Erich Elsen and Moustapha Cisse. W e thank R6 from ICML 2021 for pointing out some impro vements to the formulation of the class le vel metrics. W e thank the institutional support and encouragement of Natacha Mainville and Ale xander Popper . References Alv arez, R., Prabhav alkar , R., and Bakhtin, A. On the ef ficient representation and execution of deep acoustic models. Interspeec h 2016 , Sep 2016. doi: 10.21437/interspeech.2016- 128. URL http://dx.doi.org/ 10.21437/Interspeech.2016- 128 . Anderson, T . W . and Darling, D. A. A test of goodness of fit. Journal of the American Statistical Association , 49(268):765–769, 1954. ISSN 01621459. URL http://www.jstor.org/stable/2281537 . Bartlett, P . L. and W egkamp, M. H. Classification with a reject option using a hinge loss. J. Mach. Learn. Res. , 9:1823–1840, June 2008. ISSN 1532-4435. URL http://dl.acm.org/citation.cfm?id=1390681. 1442792 . Canziani, A., Paszke, A., and Culurciello, E. An Analysis of Deep Neural Network Models for Practical Applications. arXiv e-prints , art. arXiv:1605.07678, May 2016. Caruana, R. Case-based explanation for artificial neural nets. In Malmgren, H., Borga, M., and Niklasson, L. (eds.), Artificial Neural Networks in Medicine and Biology , pp. 303–308, London, 2000. Springer London. ISBN 978-1-4471-0513-8. Casey , B., Giedd, J. N., and Thomas, K. M. Structural and functional brain development and its relation to cogniti ve dev elopment. Biological Psyc hology , 54(1):241 – 257, 2000. ISSN 0301-0511. doi: https://doi.org/10.1016/S0301- 0511(00)00058- 2. URL http://www.sciencedirect.com/science/ article/pii/S0301051100000582 . Chen, Y ., Emer, J., and Sze, V . Eyeriss: A spatial architecture for energy-ef ficient dataflo w for con v olutional neural networks. In 2016 A CM/IEEE 43rd Annual International Symposium on Computer Ar chitectur e (ISCA) , pp. 367–379, June 2016. doi: 10.1109/ISCA.2016.40. Collins, M. D. and K ohli, P . Memory Bounded Deep Con volutional Networks. ArXiv e-prints , December 2014. Collins, M. D. and K ohli, P . Memory bounded deep con volutional networks. CoRR , abs/1412.1442, 2014. URL . 10 W H AT D O C O M P R E S S E D D E E P N E U R A L N E T O W R K S F O R G E T ? Cortes, C., DeSalvo, G., and Mohri, M. Boosting with abstention. In Lee, D. D., Sugiyama, M., Luxbur g, U. V ., Guyon, I., and Garnett, R. (eds.), Advances in Neural Information Pr ocessing Sys- tems 29 , pp. 1660–1668. Curran Associates, Inc., 2016. URL http://papers.nips.cc/paper/ 6336- boosting- with- abstention.pdf . Courbariaux, M., Bengio, Y ., and David, J.-P . T raining deep neural networks with lo w precision multiplica- tions. arXiv e-prints , art. arXiv:1412.7024, Dec 2014. Cun, Y . L., Denker , J. S., and Solla, S. A. Optimal brain damage. In Advances in Neural Information Pr ocessing Systems , pp. 598–605. Morgan Kaufmann, 1990. D’Agostino, R. B. and Stephens, M. A. (eds.). Goodness-of-fit T echniques . Marcel Dekker , Inc., New Y ork, NY , USA, 1986. ISBN 0-824-77487-6. Deng, J., Dong, W ., Socher , R., Li, L.-J., Li, K., and Fei-Fei, L. ImageNet: A Lar ge-Scale Hierarchical Image Database. In CVPR09 , 2009. Evci, U., Gale, T ., Menick, J., Castro, P . S., and Elsen, E. Rigging the lottery: Making all tickets winners, 2019. Feldman, V . and Zhang, C. What Neural Networks Memorize and Why: Discovering the Long T ail via Influence Estimation. arXiv e-prints , art. arXiv:2008.03703, August 2020. Gale, T ., Elsen, E., and Hooker , S. The state of sparsity in deep neural networks. CoRR , abs/1902.09574, 2019. URL . Gordon, A., Eban, E., Nachum, O., Chen, B., W u, H., Y ang, T .-J., and Choi, E. Morphnet: F ast & simple resource-constrained structure learning of deep networks. 2018 IEEE/CVF Conference on Computer V ision and P attern Recognition , Jun 2018. doi: 10.1109/cvpr .2018.00171. URL http://dx.doi.org/ 10.1109/CVPR.2018.00171 . Guo, C., Pleiss, G., Sun, Y ., and W einberger, K. Q. On Calibration of Modern Neural Netw orks. arXiv e-prints , art. arXi v:1706.04599, Jun 2017. Guo, Y ., Y ao, A., and Chen, Y . Dynamic netw ork surgery for ef ficient dnns. CoRR , abs/1608.04493, 2016. URL . Guo, Y ., Zhang, C., Zhang, C., and Chen, Y . Sparse dnns with impro ved adv ersarial rob ustness. In Bengio, S., W allach, H., Larochelle, H., Grauman, K., Cesa-Bianchi, N., and Garnett, R. (eds.), Advances in Neural Information Pr ocessing Systems 31 , pp. 242–251. Curran Associates, Inc., 2018. URL http: //papers.nips.cc/paper/7308- sparse- dnns- with- improved- adversarial- robustness.pdf . Gupta, S., Agrawal, A., Gopalakrishnan, K., and Narayanan, P . Deep learning with limited numerical precision. CoRR , abs/1502.02551, 2015. URL . Han, S., Pool, J., Tran, J., and Dally , W . J. Learning both Weights and Connections for Ef ficient Neural Network. In NIPS , pp. 1135–1143, 2015. Hassibi, B., Stork, D. G., and Com, S. C. R. Second order deri v ati ves for network pruning: Optimal brain surgeon. In Advances in Neural Information Pr ocessing Systems 5 , pp. 164–171. Morgan Kaufmann, 1993a. Hassibi, B., Stork, D. G., and W olf f, G. J. Optimal brain surgeon and general network pruning. In IEEE International Confer ence on Neur al Networks , pp. 293–299 vol.1, March 1993b. doi: 10.1109/ICNN.1993. 298572. He, K., Zhang, X., Ren, S., and Sun, J. Deep Residual Learning for Image Recognition. ArXiv e-prints , December 2015. Hendrycks, D. and Dietterich, T . Benchmarking neural network robustness to common corruptions and perturbations. In International Conference on Learning Representations , 2019. URL https: //openreview.net/forum?id=HJz6tiCqYm . Hendrycks, D., Zhao, K., Basart, S., Steinhardt, J., and Song, D. Natural Adv ersarial Examples. arXiv e-prints , art. arXi v:1907.07174, Jul 2019. 11 W H AT D O C O M P R E S S E D D E E P N E U R A L N E T O W R K S F O R G E T ? Hinton, G., V in yals, O., and Dean, J. Distilling the Kno wledge in a Neural Network. arXiv e-prints , art. arXi v:1503.02531, Mar 2015. Hooker , S., Erhan, D., Kindermans, P .-J., and Kim, B. A benchmark for interpretability methods in deep neural networks. In NeurIPS 2019 , 2019. Ho ward, A. G., Zhu, M., Chen, B., Kalenichenko, D., W ang, W ., W eyand, T ., Andreetto, M., and Adam, H. MobileNets: Efficient Con volutional Neural Networks for Mobile V ision Applications. ArXiv e-prints , April 2017. Hubara, I., Courbariaux, M., Soudry , D., El-Y ani v , R., and Bengio, Y . Quantized neural networks: T raining neural networks with lo w precision weights and activ ations. CoRR , abs/1609.07061, 2016. URL http: //arxiv.org/abs/1609.07061 . Huber-Carol, C., Balakrishnan, N., Nikulin, M., and Mesbah, M. Goodness-of-F it T ests and Model V alidity . Goodness-of-fit T ests and Model V alidity . Birkhäuser Boston, 2002. ISBN 9780817642099. URL https://books.google.com/books?id=gUMcv2_NrhkC . Iandola, F . N., Han, S., Moskewicz, M. W ., Ashraf, K., Dally, W . J., and Keutzer, K. SqueezeNet: Ale xNet- le vel accurac y with 50x fe wer parameters and < 0.5MB model size. ArXiv e-prints , February 2016. Iof fe, S. and Sze gedy , C. Batch normalization: Accelerating deep netw ork training by reducing internal cov ariate shift. CoRR , abs/1502.03167, 2015. URL . Jacob, B., Kligys, S., Chen, B., Zhu, M., T ang, M., Howard, A., Adam, H., and Kalenichenko, D. Quantization and training of neural networks for ef ficient inte ger-arithmetic-only inference. 2018 IEEE/CVF Confer ence on Computer V ision and P attern Recognition , Jun 2018. doi: 10.1109/cvpr .2018.00286. URL http: //dx.doi.org/10.1109/CVPR.2018.00286 . Kalchbrenner , N., Elsen, E., Simonyan, K., Noury , S., Casagrande, N., Lockhart, E., Stimberg, F ., v an den Oord, A., Dieleman, S., and Kavukcuoglu, K. Efficient Neural Audio Synthesis. In Proceedings of the 35th International Confer ence on Machine Learning, ICML 2018, Stoc kholmsmässan, Stockholm, Sweden, J uly 10-15, 2018 , pp. 2415–2424, 2018. K endall, A. and Gal, Y . What uncertainties do we need in bayesian deep learning for computer vision? In Guyon, I., Luxb urg, U. V ., Bengio, S., W allach, H., Fergus, R., V ishwanathan, S., and Garnett, R. (eds.), Advances in Neural Information Pr ocessing Systems 30 , pp. 5574–5584. Curran Associates, Inc., 2017. Kim, B., Khanna, R., and K oyejo, O. O. Examples are not enough, learn to criticize! criticism for interpretability . In Lee, D. D., Sugiyama, M., Luxburg, U. V ., Guyon, I., and Garnett, R. (eds.), Advances in Neural Information Pr ocessing Systems 29 , pp. 2280–2288. Curran Associates, Inc., 2016. K olb, B. and Whisha w , I. Fundamentals of Human Neur opsychology . A series of books in psychology . W orth Publishers, 2009. ISBN 9780716795865. Krizhe vsky , A. Learning multiple layers of features from tiny images. University of T or onto , 05 2012. Kumar , A., Goyal, S., and V arma, M. Resource-efficient machine learning in 2 KB RAM for the internet of things. In Precup, D. and T eh, Y . W . (eds.), Pr oceedings of the 34th International Confer ence on Machine Learning , v olume 70 of Pr oceedings of Machine Learning Resear ch , pp. 1935–1944, International Con v ention Centre, Sydney , Australia, 06–11 Aug 2017. PMLR. URL http://proceedings.mlr. press/v70/kumar17a.html . Lattner , C., Amini, M., Bondhugula, U., Cohen, A., Davis, A., Pienaar , J., Riddle, R., Shpeisman, T ., V asilache, N., and Zinenko, O. Mlir: A compiler infrastructure for the end of moore’ s law , 2020. Lee, N., Ajanthan, T ., and T orr , P . H. S. SNIP: single-shot network pruning based on connection sensiti vity . CoRR , abs/1810.02340, 2018. URL . Leibig, C., Allken, V ., A yhan, M. S., Berens, P ., and W ahl, S. Le v eraging uncertainty information from deep neural networks for disease detection. Scientific Reports , 7, 12 2017. doi: 10.1038/s41598- 017- 17876- z. Li, Z., W allace, E., Shen, S., Lin, K., Keutzer , K., Klein, D., and Gonzalez, J. E. Train lar ge, then compress: Rethinking model size for ef ficient training and inference of transformers, 2020. Liu, Z., Luo, P ., W ang, X., and T ang, X. Deep learning face attributes in the wild. In Pr oceedings of International Confer ence on Computer V ision (ICCV) , December 2015. 12 W H AT D O C O M P R E S S E D D E E P N E U R A L N E T O W R K S F O R G E T ? Liu, Z., Li, J., Shen, Z., Huang, G., Y an, S., and Zhang, C. Learning Ef ficient Con volutional Networks through Network Slimming. ArXiv e-prints , August 2017. Louizos, C., W elling, M., and Kingma, D. P . Learning Sparse Neural Networks through L _ 0 Regularization. ArXiv e-prints , December 2017. Micike vicius, P ., Narang, S., Alben, J., Diamos, G., Elsen, E., Garcia, D., Ginsbur g, B., Houston, M., Kuchaie v, O., V enkatesh, G., and W u, H. Mix ed Precision Training. arXiv e-prints , art. October 2017. Narang, S., Elsen, E., Diamos, G., and Sengupta, S. Exploring Sparsity in Recurrent Neural Networks. arXiv e-prints , art. arXi v:1704.05119, Apr 2017. No wlan, S. J. and Hinton, G. E. Simplifying neural networks by soft weight-sharing. Neural Computation , 4 (4):473–493, 1992. doi: 10.1162/neco.1992.4.4.473. URL https://doi.org/10.1162/neco.1992.4. 4.473 . Rakic, P ., Bourgeois, J.-P ., and Goldman-Rakic, P . S. Synaptic dev elopment of the cerebral corte x: implica- tions for learning, memory , and mental illness. In Pelt, J. V ., Corner , M., Uylings, H., and Silv a, F . L. D. (eds.), The Self-Or ganizing Brain: F r om Gr owth Cones to Functional Networks , v olume 102 of Pr ogr ess in Brain Resear ch , pp. 227 – 243. Else vier, 1994. doi: https://doi.org/10.1016/S0079- 6123(08)60543- 9. URL http://www.sciencedirect.com/science/article/pii/S0079612308605439 . Reagen, B., Whatmough, P ., Adolf, R., Rama, S., Lee, H., Lee, S. K., Hernández-Lobato, J. M., W ei, G., and Brooks, D. Minerva: Enabling lo w-po wer , highly-accurate deep neural network accelerators. In 2016 A CM/IEEE 43r d Annual International Symposium on Computer Ar chitectur e (ISCA) , pp. 267–278, June 2016. doi: 10.1109/ISCA.2016.32. Samala, R. K., Chan, H.-P ., Hadjiiski, L. M., Helvie, M. A., Richter , C., and Cha, K. Ev olutionary pruning of transfer learned deep con volutional neural network for breast cancer diagnosis in digital breast tomosynthesis. Physics in Medicine & Biology , 63(9):095005, may 2018. doi: 10.1088/1361- 6560/aabb5b. See, A., Luong, M.-T ., and Manning, C. D. Compression of Neural Machine Translation Models via Pruning. arXiv e-prints , art. arXi v:1606.09274, Jun 2016. Sehwag, V ., W ang, S., Mittal, P ., and Jana, S. T o wards compact and robust deep neural networks. CoRR , abs/1906.06110, 2019. URL . So well, E. R., Thompson, P . M., Leonard, C. M., W elcome, S. E., Kan, E., and T oga, A. W . Longitudinal mapping of cortical thickness and brain growth in normal children. Journal of Neur oscience , 24(38): 8223–8231, 2004. doi: 10.1523/JNEUR OSCI.1798- 04.2004. URL https://www.jneurosci.org/ content/24/38/8223 . Strubell, E., Ganesh, A., and McCallum, A. Energy and Policy Considerations for Deep Learning in NLP. arXiv e-prints , art. arXi v:1906.02243, June 2019. Ström, N. Sparse connection and pruning in large dynamic artificial neural netw orks, 1997. T essera, K., Hooker , S., and Rosman, B. Keep the gradients flowing: Using gradient flo w to study sparse network optimization. CoRR , abs/2102.01670, 2021. URL . Theis, L., K orshuno va, I., T ejani, A., and Huszár , F . Faster gaze prediction with dense networks and Fisher pruning. CoRR , abs/1801.05787, 2018. URL . Ullrich, K., Meeds, E., and W elling, M. Soft Weight-Sharing for Neural Network Compression. CoRR , abs/1702.04008, 2017. V alin, J. and Skoglund, J. Lpcnet: Improving Neural Speech Synthesis Through Linear Prediction. CoRR , abs/1810.11846, 2018. URL . V anhouck e, V ., Senior , A., and Mao, M. Z. Improving the speed of neural networks on cpus. In Deep Learning and Unsupervised F eature Learning W orkshop, NIPS 2011 , 2011. W eigend, A. S., Rumelhart, D. E., and Huberman, B. A. Generalization by weight-elimination with application to forecasting. In Lippmann, R. P ., Moody , J. E., and T ouretzky , D. S. (eds.), Advances in Neural Information Pr ocessing Systems 3 , pp. 875–882. Morgan-Kaufmann, 1991. 13 W H AT D O C O M P R E S S E D D E E P N E U R A L N E T O W R K S F O R G E T ? W elch, B. L. The generalization of ‘Student’ s’ problem when sev eral different population v ariances are in v olved. Biometrika , 34:28–35, 1947. ISSN 0006-3444. doi: 10.2307/2332510. URL https://doi. org/10.2307/2332510 . W en, W ., W u, C., W ang, Y ., Chen, Y ., and Li, H. Learning Structured Sparsity in Deep Neural Networks. ArXiv e-prints , August 2016. Zagoruyko, S. and K omodakis, N. W ide residual networks. CoRR , abs/1605.07146, 2016. URL http: //arxiv.org/abs/1605.07146 . Zech, J. R., Badgeley , M. A., Liu, M., Costa, A. B., T itano, J. J., and Oermann, E. K. V ariable generalization performance of a deep learning model to detect pneumonia in chest radiographs: A cross-sectional study . PLOS Medicine , 15(11):1–17, 11 2018. doi: 10.1371/journal.pmed.1002683. URL https: //doi.org/10.1371/journal.pmed.1002683 . Zhang, J. Selecting typical instances in instance-based learning. In Sleeman, D. and Edw ards, P . (eds.), Machine Learning Pr oceedings 1992 , pp. 470 – 479. Morg an Kaufmann, San Francisco (CA), 1992. ISBN 978-1-55860-247-2. doi: https://doi.org/10.1016/B978- 1- 55860- 247- 2.50066- 8. URL http: //www.sciencedirect.com/science/article/pii/B9781558602472500668 . Zhou, W ., V eitch, V ., Austern, M., Adams, R. P ., and Orbanz, P . Non-v acuous generalization bounds at the imagenet scale: a pac-bayesian compression approach. In ICLR , 2019. Zhu, M. and Gupta, S. T o prune, or not to prune: e xploring the ef ficacy of pruning for model compression. ArXiv e-prints , October 2017. Zhu, M. and Gupta, S. T o prune, or not to prune: e xploring the ef ficacy of pruning for model compression. CoRR , abs/1710.01878, 2017a. URL . Zhu, M. and Gupta, S. T o prune, or not to prune: exploring the ef ficacy of pruning for model compression, 2017b. 14 W H AT D O C O M P R E S S E D D E E P N E U R A L N E T O W R K S F O R G E T ? A ppendix A Pruning and quantization techniques considered Magnitude pruning There are various pruning methodologies that use the absolute value of weights to rank their importance and remov e weights that are belo w a user -specified threshold (Collins & K ohli, 2014; Guo et al., 2016; Zhu & Gupta, 2017a). These works lar gely differ in whether the weights are remo ved permanently or can “recov er" by still receiving subsequent gradient updates. This would allow certain weights to become non-zero ag ain if pruned incorrectly . While magnitude pruning is often used as a criteria to remo ve indi vidual weights, it can be adapted to remov e entire neurons or filters by extending the ranking criteria to a set of weights and setting the threshold appropriately (Gordon et al., 2018). In this work, we use the magnitude pruning methodology as proposed by Zhu & Gupta (2017a). It has been sho wn to outperform more sophisticated Bayesian pruning methods and is considered state-of-the-art across both computer vision and language models (Gale et al., 2019). The choice of magnitude pruning also allo wed us to specify and precisely v ary the final model sparsity for purposes of our analysis, unlike regularizer approaches that allow the optimization process itself to determine the final le vel of sparsity (Liu et al., 2017; Louizos et al., 2017; Collins & K ohli, 2014; W en et al., 2016; W eigend et al., 1991; No wlan & Hinton, 1992). Quantization All networks were trained with 32-bit floating point weights and quantized post-training. This means there is no additional gradient updates to the weights post-quantization. In this work, we ev aluate three dif ferent quantization methods. The first type replaces the weights with 16-bit floating point weights (Micike vicius et al., 2017). The second type quantizes all weights to 8-bit integer values (Alvarez et al., 2016). The third type uses the first 100 training examples of each dataset as representati ve e xamples for the fixed-point only models. W e chose to benchmark these quantization methods in part because each has open source code av ailable. W e use T ensorFlow Lite with MLIR (Lattner et al., 2020). B Pruning Protocol W e prune ov er the course of training to obtain a target end pruning lev el t ∈ { 0 . 0 , 0 . 1 , 0 . 3 , 0 . 5 , 0 . 7 , 0 . 9 } . Remov ed weights continue to recei ve gradient updates after being pruned. These hyperparameter choices were based upon a limited grid search which suggested that these particular settings minimized degradation to test-set accuracy across all pruning levels. W e note that for CelebA we were able to still conv er ge to a comparable final performance at much higher le vels of pruning t ∈ { 0 . 95 , 0 . 99 } . W e include these results, and note that the tolerance for e xtremely high le vels of pruning may be related the relati ve difficulty of the task. Unlike CIF AR-10 and ImageNet which inv olv e more than 2 classes ( 10 and 1000 respecti vely), CelebA is a binary classification problem. Here, the task is predicting hair color Y = { blonde, dark haired } . Quantization techniques are applied post-training - the weights are not re-calibrated after quantizing. Figure 1 sho ws the distributions of model accuracy across model populations for the pruned and quantized models for ImageNet, CIF AR-10 and CelebA. T able. 4 and T able. 5 include top-line metrics for all compression methods considered. C Human study W e conducted a human study (in v olving 85 volunteers) to label a random sample of 1230 PIE and non-PIE ImageNet images. Humans in the study were shown a balanced sample of PIE and non-PIE images that were selected at random and shuffled. The classification as PIE or non-PIE was not kno wn or av ailable to the human. P articipants answered the following questions for each image that w as presented: • Does label 1 accurately label an object in the imag e? (0/1) • Does this image depict a single object? (0/1) • W ould you consider labels 1, 2 and 3 to be semantically very close to each other? (does this image r equir e fine grained classification) (0/1) 15 W H AT D O C O M P R E S S E D D E E P N E U R A L N E T O W R K S F O R G E T ? ImageNet Robustness to ImageNet-C Corruptions (By Le vel of Pruning) Pruning Fraction Corruption T ype T op-1 T op-5 T op-1 Norm T op-5 Norm 0.0 brightness 69.49 88.98 0.00 0.00 0.7 brightness 67.50 87.86 -2.87 -1.25 0.9 brightness 64.12 85.63 -7.74 -3.77 0.0 contrast 42.30 61.80 0.00 0.00 0.7 contrast 41.34 61.58 -2.26 -0.36 0.9 contrast 38.04 58.43 -10.06 -5.45 0.0 defocus_blur 49.77 72.45 0.00 0.00 0.7 defocus_blur 47.49 70.69 -4.58 -2.43 0.9 defocus_blur 44.69 68.26 -10.22 -5.79 0.0 elastic 57.09 76.71 0.00 0.00 0.7 elastic 55.09 75.29 -3.51 -1.85 0.9 elastic 52.81 73.62 -7.50 -4.02 0.0 fog 56.21 79.25 0.00 0.00 0.7 fog 54.46 78.25 -3.12 -1.25 0.9 fog 50.36 75.10 -10.41 -5.23 0.0 frosted_glass_blur 40.89 60.51 0.00 0.00 0.7 frosted_glass_blur 38.75 58.68 -5.23 -3.03 0.9 frosted_glass_blur 36.87 57.02 -9.83 -5.78 0.0 gaussian_noise 45.43 65.67 0.00 0.00 0.7 gaussian_noise 42.01 62.40 -7.53 -4.98 0.9 gaussian_noise 32.88 51.49 -27.64 -21.59 0.0 impulse_noise 42.23 63.16 0.00 0.00 0.7 impulse_noise 37.91 58.82 -10.24 -6.87 0.9 impulse_noise 25.29 43.13 -40.12 -31.70 0.0 jpeg_compression 65.75 86.25 0.00 0.00 0.7 jpeg_compression 63.47 84.81 -3.47 -1.68 0.9 jpeg_compression 60.57 82.77 -7.88 -4.04 0.0 pixelate 57.34 78.05 0.00 0.00 0.7 pixelate 54.93 76.17 -4.21 -2.41 0.9 pixelate 51.31 72.98 -10.51 -6.50 0.0 shot_noise 43.82 64.06 0.00 0.00 0.7 shot_noise 39.88 60.04 -8.99 -6.28 0.9 shot_noise 30.80 48.86 -29.71 -23.72 0.0 zoom_blur 37.16 58.90 0.00 0.00 0.7 zoom_blur 34.60 56.68 -6.89 -3.76 0.9 zoom_blur 31.78 53.97 -14.47 -8.37 T able 3: Pruned models are more sensitive to image corruptions that are meaningless to a human. W e measure the av erage top-1 and top-5 test set accurac y of models trained to v arying le vels of pruning on the ImageNet-C test-set (the models were trained on uncorrupted ImageNet). For each corruption type, we report the av erage accuracy of 50 trained models relativ e to the baseline models across all 5 levels of pruning. 16 W H AT D O C O M P R E S S E D D E E P N E U R A L N E T O W R K S F O R G E T ? CelebA Fraction Pruned T op 1 # PIEs 0 94.73 - 0.3 94.75 555 0.5 94.81 638 0.7 94.44 990 0.9 94.07 3229 0.95 93.39 5057 0.99 90.98 8754 Quantization T op 1 # PIEs hybrid int8 94.65 404 fixed-point int8 94.65 414 T able 4: CelebA top-1 accuracy at all le vels of pruning, av eraged over runs. The task we consider for CelebA is a binary classification method. W e consider exemplar le vel di ver gence and classify Pruning Identified Exemplars as the e xamples where the modal label differs between a population of 30 compressed and non-compressed models. Note that the CelebA task is a binary classification task to predict whether the celebrity is blond or non-blond. Thus, there are only two classes. Figure 5: A pie chart of the codified attributes of a sample of pruning identified examplars (PIEs) and non-PIE images. The human study shows that PIEs over -inde x on both noisy ex emplars with partial or corrupt information (corrupted images, incorrect labels, multi-object images) and/or atypical or challenging images (abstract representation, fine grained classification). • Do you consider the object in the image to be a typical e xemplar for the class indicated by label 1? (0/1) • Is the imag e quality corrupted (some common imag e corruptions – o verlaid text, brightness, contr ast, filter , defocus blur , fog, jpe g compr ession, pixelate, shot noise, zoom blur , black and white vs. rgb)? (0/1) • Is the object in the image an abstract r epr esentation of the class indicated by label 1? [[an abstract r epr esentation is an object in an abstract form, such as a painting, drawing or r endering using a differ ent material.]] (0/1) W e find that PIEs heavily ov er-inde x relati ve to non-PIEs on both noisy examples with corrupted information (incorrect ground truth label, multiple objects, image corruption) and atypical or challenging examples (fine-grained classification task, abstract representation). W e include the per attribute relati v e representation of PIE vs. Non-PIE for the study (in Figure. 7). 17 W H AT D O C O M P R E S S E D D E E P N E U R A L N E T O W R K S F O R G E T ? ImageNet Fraction Pruned T op 1 # Signif classes # PIEs 0 76.68 - - 30 76.46 68 1,819 50 75.87 170 2,193 70 75.02 372 3,073 90 72.60 637 5,136 Quantization float16 76.65 58 2019 dynamic range int8 76.10 144 2193 fixed-point int8 76.46 119 2093 CIF AR-10 Fraction Pruned T op 1 # Signif classes # PIEs 0 94.53 - - 30 94.47 1 114 50 94.39 1 144 70 94.30 0 137 90 94.14 2 216 T able 5: CIF AR-10 and ImageNet top-1 accurac y at all levels of pruning, a veraged ov er 30 runs. T op-5 accuracy for CIF AR-10 w as 99 . 8% for all levels of pruning. The third column is the number of classes significantly impacted by pruning. D Benchmarks to ev aluate rob ustness ImageNet-A Extended Results ImageNet-A is a curated test set of 7 , 500 natural adversarial images designed to produce drastically low test accuracy . W e find that the sensitivity of pruned models to ImageNet-A mirrors the patterns of degradation to ImageNet-C and sets of PIEs. As pruning increases, top-1 and top-5 accurac y further erode, suggesting that pruned models are more brittle to adv ersarial e xamples. T able 8 includes relati ve and absolute sensiti vity at all le vels of compression considered. For each rob ustness benchmark and le v el of pruning that we e v aluate, we a verage model rob ustness o ver 5 models independently trained from random initialization. Figure 6: V isualization of Pruning Identified Exemplars from the CIF AR-10 dataset. This subset of impacted images is identified by considering a set of 30 non-pruned wide ResNet models and 30 models trained to 30% pruning. Belo w each image are three labels: 1) true label, 2) the modal (most frequent) prediction from the set of non-pruned models, 3) the modal prediction from the set of pruned models. 18 W H AT D O C O M P R E S S E D D E E P N E U R A L N E T O W R K S F O R G E T ? T op-1 accuracy T op-5 accuracy ImageNet Fraction Pruned Non-PIEs PIEs All Non-PIEs PIEs All 10.0 79.34 26.14 76.75 94.89 68.52 93.35 30.0 79.23 26.21 76.75 95.04 69.30 93.35 50.0 79.54 28.74 76.75 94.89 71.47 93.35 70.0 80.16 32.06 76.75 94.99 74.74 93.35 90.0 81.20 39.81 76.75 95.11 78.90 93.35 CIF AR-10 Fraction Pruned Non-PIEs PIEs All Non-PIEs PIEs All 10.0 95.11 43.23 94.89 99.91 95.30 99.91 30.0 95.40 40.61 94.89 99.92 92.83 99.91 50.0 95.45 40.42 94.89 99.93 93.53 99.91 70.0 95.56 43.64 94.89 99.94 95.95 99.91 90.0 95.60 50.71 94.89 99.92 96.67 99.91 CelebA Fraction Pruned Non-PIEs PIEs All Non-PIEs PIEs All 30.0 94.76 49.82 94.76 - - - 50.0 94.78 50.55 94.78 - - - 70.0 94.54 52.61 94.54 - - - 90.0 94.10 50.41 94.10 - - - 95.0 93.40 45.57 93.40 - - - 99.0 90.97 39.84 90.97 - - - T able 6: A comparison of non-compressed model performance on Pruning Identified Exemplars (PIE) relati ve to a random sample dra wn independently from the test-set and a sample excluding PIEs (non-PIEs). Inference on the non-PIE sample improv es test-set top-1 accurac y relati ve to the baseline for ImageNet and Cif ar-10. Ev aluation on PIE images alone yields substantially lower top-1 accuracy . Note that CelebA top-5 is not included as it is a binary classification problem. Figure 7: High le vels of compression amplify sensitivity to distrib ution shift. Left: Change to top-1 normalized recall of a pruned model relati ve to a non-pruned model on ImageNet-C (all corruptions). Right: Change to top-5 normalized recall of a pruned model relati ve to a non-pruned model on ImageNet-C (all corruptions). W e measure the top-1 test-set performance on a subset of ImageNet-C corruptions of a pruned model relati ve to the non-pruned model on the same corruption. 19 W H AT D O C O M P R E S S E D D E E P N E U R A L N E T O W R K S F O R G E T ? T able 7: PIE vs non-PIE relati v e representation for different attributes. These attributes were codified in a human study in volving 85 indi viduals inspecting a balanced random sample of PIE and non-PIE. The classification as PIE or non-PIE was not kno wn or a v ailable to the human. ImageNet Robustness to ImageNet-A Corruptions (By Le vel of Pruning) Pruning Fraction T op-1 T op-5 T op-1 Norm T op-5 Norm 0.0 0.89 7.56 0.00 0.00 10.0 0.85 7.53 -4.04 -0.39 30.0 0.76 7.21 -14.33 -4.62 50.0 0.62 6.53 -30.54 -13.65 70.0 0.51 5.83 -42.63 -22.96 90.0 0.36 4.47 -59.80 -40.96 T able 8: Pruned models are more sensitive to natural adv ersarial images. ImageNet-A is a curated test set of 7 , 500 natural adversarial images designed to produce drastically low test accuracy . W e compute the absolute performance of models pruned to dif ferent lev els of sparsity on ImageNet-A (T op-1 and T op-5) as well as the normalized performance relati ve to a non-pruned model on ImageNet-A. ImageNet-C Extended Results ImageNet-C (Hendrycks & Dietterich, 2019) is an open source data set that consists of algorithmic generated corruptions (blur , noise) applied to the ImageNet test-set. W e compare top-1 accuracy given inputs with corruptions of different severity . As described by the methodology of Hendrycks & Dietterich (2019), we compute the corruption error for each type of corruption by measuring model performance rate across fi ve corruption se verity levels (in our implementation, we normalize the per-corruption error by the performance of the non-compressed model on the same corruption). ImageNet-C corruption substantially degrades mean top-1 accuracy of pruned models relati ve to non-pruned. As seen in Fig.7, this sensiti vity is amplified at high le vels of pruning, where there is a further steep decline in top-1 accuracy . Unlike the main body , in this figure we visualize all corruption types considered. Sensitivity to different corruptions is remarkably v aried, with certain corruptions such as Gaussian, shot an impulse noise consistently causing more de gradation. W e include a visualization for a lar ger sample of corruptions considered in T able 3. 20

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment