SpaRCe: Improved Learning of Reservoir Computing Systems through Sparse Representations

"Sparse" neural networks, in which relatively few neurons or connections are active, are common in both machine learning and neuroscience. Whereas in machine learning, "sparsity" is related to a penalty term that leads to some connecting weights beco…

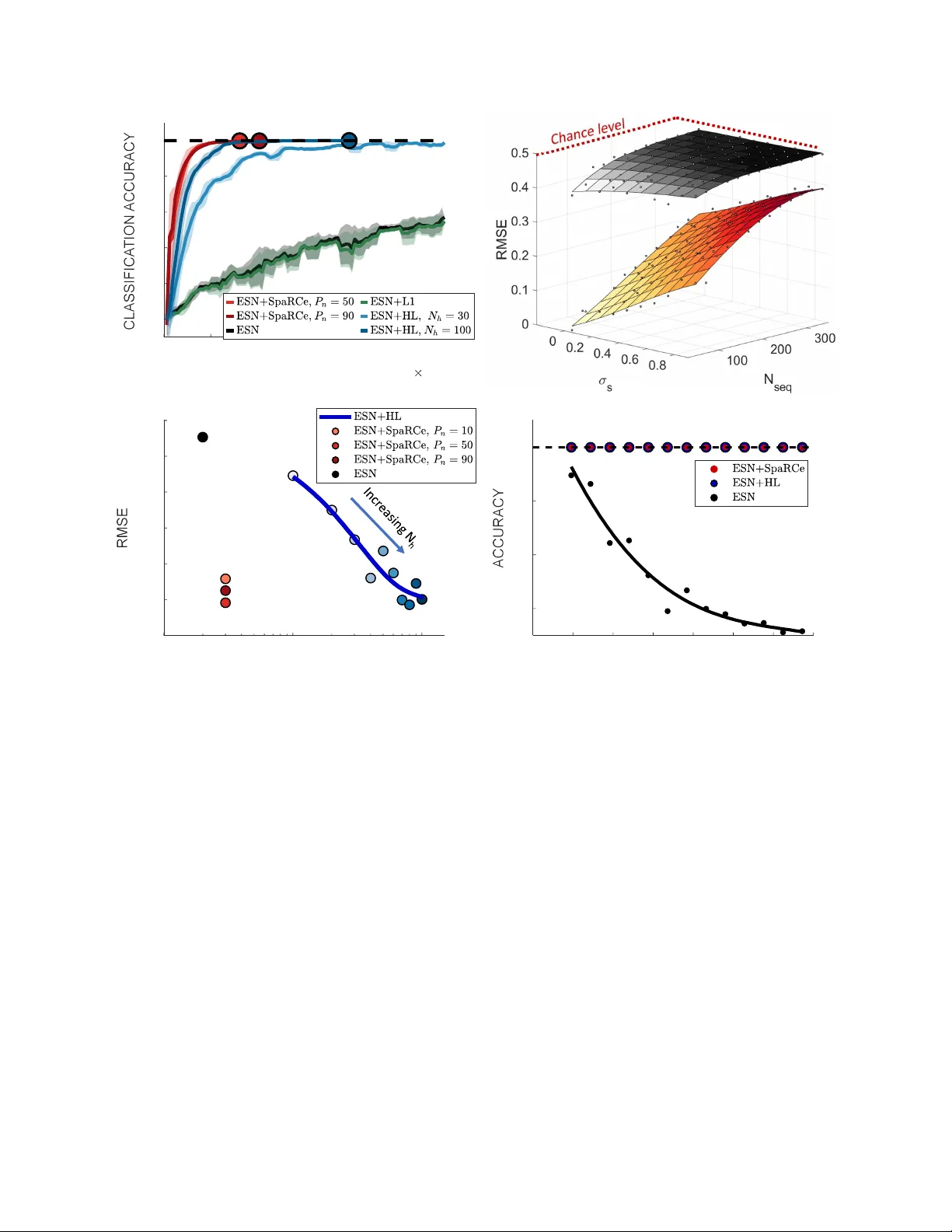

Authors: Luca Manneschi, Andrew C. Lin, Eleni Vasilaki