Unsupervised and Efficient Vocabulary Expansion for Recurrent Neural Network Language Models in ASR

In automatic speech recognition (ASR) systems, recurrent neural network language models (RNNLM) are used to rescore a word lattice or N-best hypotheses list. Due to the expensive training, the RNNLM's vocabulary set accommodates only small shortlist …

Authors: Yerbolat Khassanov, Eng Siong Chng

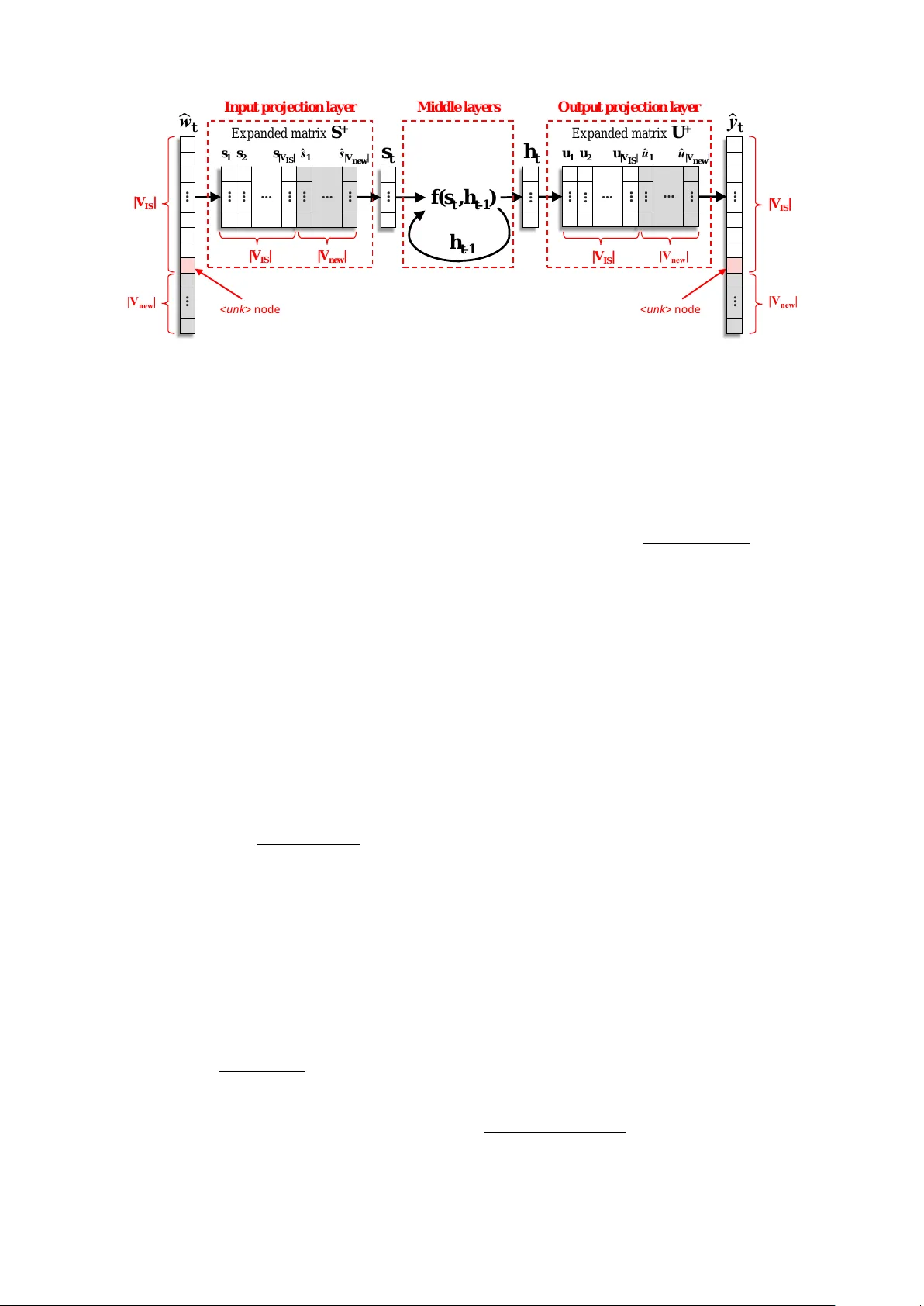

Rolls-Royce@NTU Corporate Lab, Nanyang Technological University, Singapore
yerbolat002@edu.ntu.sg, aseschng@ntu.edu.sg

Abstract

In automatic speech recognition (ASR) systems, recurrent neural network language models (RNNLMs) are used to rescore a word lattice or N-best hypotheses list. Because training is expensive, the RNNLM's vocabulary accommodates only a small shortlist of the most frequent words. This leads to suboptimal performance if the input speech contains many out-of-shortlist (OOS) words. An effective solution is to increase the shortlist size and retrain the entire network, which is highly inefficient. Therefore, we propose an efficient method to expand the shortlist of a pretrained RNNLM without incurring expensive retraining or using additional training data. Our method exploits the structure of the RNNLM, which can be decoupled into three parts: the input projection layer, the middle layers, and the output projection layer. Specifically, our method expands the word embedding matrices in the projection layers and keeps the middle layers unchanged. In this approach, the functionality of the pretrained RNNLM is maintained as long as the OOS words are properly modeled in the two embedding spaces. We propose to model the OOS words by borrowing linguistic knowledge from appropriate in-shortlist words. Additionally, we propose to generate the list of OOS words used to expand the vocabulary in an unsupervised manner by automatically extracting them from the ASR output.

Index Terms: vocabulary expansion, recurrent neural network, language model, speech recognition, word embedding

1. Introduction

The language model (LM) plays an important role in an automatic speech recognition (ASR) system. It ensures that the recognized output hypotheses obey the linguistic regularities of the target language.
LMs are employed at two different stages of a state-of-the-art ASR pipeline: decoding and rescoring. At the decoding stage, a simple model such as a count-based n-gram [1] is used as a background LM to produce an initial word lattice. At the rescoring stage, this word lattice, or an N-best hypotheses list extracted from it, is rescored by a more complex model such as a recurrent neural network language model (RNNLM) [2, 3, 4].

Owing to its simplicity and efficient implementation, the count-based n-gram LM is trained over a large vocabulary, typically on the order of hundreds of thousands of words. The computationally expensive RNNLM, on the other hand, is usually trained with a small subset of the most frequent words known as the in-shortlist (IS) set, typically on the order of tens of thousands of words, whereas the remaining words are deemed out-of-shortlist (OOS) and jointly modeled by a single `<unk>` node [5]. Since the probability mass of the `<unk>` node is shared by many words, it poorly represents the properties of individual words, leading to unreliable probability estimates for OOS words. Moreover, these estimates tend to be very small, which biases the RNNLM in favor of hypotheses composed mostly of IS words. Consequently, if input speech with many OOS words is supplied to the ASR system, the performance of the RNNLM will be suboptimal.

An effective solution is to increase the IS set size and retrain the entire network. However, this approach is highly inefficient, as training an RNNLM might take from several days up to several weeks depending on the scale of the application [6]. Moreover, additional textual data containing training instances of the OOS words would be required, which is difficult to find for rare domain-specific words. Therefore, effective and efficient methods for expanding the RNNLM's vocabulary coverage are of great interest [7].
In this work, we propose an efficient method to expand the vocabulary of a pretrained RNNLM without incurring expensive retraining or using additional training data. To achieve this, we exploit the structure of the RNNLM, which can be decoupled into three parts: 1) the input projection layer, 2) the middle layers and 3) the output projection layer, as shown in figure 1. The input and output projection layers are defined by input and output word embedding matrices that perform linear word transformations from high to low and from low to high dimensions, respectively. The middle layers are a non-linear function used to generate a high-level feature representation of contextual information. Our method expands the vocabulary coverage of the RNNLM by inserting new words into the input and output word embedding matrices while keeping the parameters of the middle layers unchanged. This keeps the functionality of the pretrained RNNLM intact as long as the new words are properly modeled in the input and output word embedding spaces. We propose to model the new words by borrowing linguistic knowledge from other "similar" words present in the word embedding matrices.

Furthermore, the list of OOS words used to expand the vocabulary can be generated in either a supervised or an unsupervised manner. For example, in a supervised manner, the words can be manually collected by a human expert, whereas in an unsupervised manner, a subset of the most frequent words from the OOS set can be selected. In this work, we propose to generate the list of OOS words in an unsupervised manner by automatically extracting them from the ASR output. The motivation is that the background LM usually covers a much larger vocabulary; hence, during the decoding stage it produces a word lattice that contains the most relevant OOS words that might be present in the test data.

We evaluate our method by rescoring the N-best list output of a state-of-the-art TED¹ talks ASR system.
The experimental results show that the vocabulary-expanded RNNLM achieves a 4% relative word error rate (WER) improvement over the conventional RNNLM. Moreover, a 7% relative WER improvement is achieved over the strong Kneser-Ney smoothed 5-gram model used to rescore the word lattice. Importantly, all these improvements are achieved without using additional training data and at very little computational cost.

¹ https://www.ted.com/

[Figure 1: RNNLM architecture after vocabulary expansion. The diagram shows the input projection layer with expanded matrix S⁺, the middle layers f(s_t, h_{t−1}), and the output projection layer with expanded matrix U⁺. Each expanded matrix holds the |V_IS| original columns, including the `<unk>` node, followed by |V_new| new columns.]

The rest of the paper is organized as follows. Related work on expanding the vocabulary coverage of RNNLMs is reviewed in section 2. Section 3 briefly describes the RNNLM architecture. Section 4 presents the proposed methodology for increasing the IS set of an RNNLM by expanding the word embedding matrices. In section 5, the experimental setup and the results obtained are discussed. Lastly, section 6 concludes the paper.

2. Related works

This section briefly describes popular approaches to expanding the vocabulary coverage of RNNLMs. These approaches mostly focus on intelligently redistributing the probability mass of the `<unk>` node among OOS words, optimizing the training speed for large-vocabulary models, or training sub-word level RNNLMs. These approaches can also be used in combination.

Redistributing probability mass of `<unk>`: Park et al. [5] proposed to expand the vocabulary coverage by gathering all OOS words under a special node `<unk>` and explicitly modeling it together with the IS words, see figure 1. This is a standard scheme commonly employed in state-of-the-art RNNLMs.
The probability mass of the `<unk>` node can then be redistributed among OOS words by using the statistics of simpler LMs, such as a count-based n-gram model, as follows:

$$\tilde{P}_R(w_{t+1} \mid h_t) = \begin{cases} P_R(w_{t+1} \mid h_t) & w_{t+1} \in V_{IS} \\ \beta(w_{t+1} \mid h_t)\, P_R(\langle\text{unk}\rangle \mid h_t) & \text{otherwise} \end{cases} \tag{1}$$

$$\beta(w_{t+1} \mid h_t) = \frac{P_N(w_{t+1} \mid h_t)}{\sum_{w \notin V_{IS}} P_N(w \mid h_t)} \tag{2}$$

where $P_R()$ and $P_N()$ are the conditional probability estimates according to the RNNLM and the n-gram LM, respectively, for some word $w_{t+1}$ given context $h_t$. The n-gram model is trained with the whole vocabulary set $V$, whereas the RNNLM is trained with the smaller in-shortlist subset $V_{IS} \subset V$. The term $\beta()$ is a normalization coefficient used to ensure the sum-to-one constraint of the resulting probability function $\tilde{P}_R()$.

Later, [3] proposed to uniformly redistribute the probability mass of the `<unk>` token among OOS words as follows:

$$\tilde{P}_R(w_{t+1} \mid h_t) = \begin{cases} P_R(w_{t+1} \mid h_t) & w_{t+1} \in V_{IS} \\ \dfrac{P_R(\langle\text{unk}\rangle \mid h_t)}{|V \setminus V_{IS}| + 1} & \text{otherwise} \end{cases} \tag{3}$$

where the symbol '$\setminus$' denotes the set difference operation. In this way, the vocabulary coverage of the RNNLM is expanded to the full vocabulary size $|V|$ without relying on the statistics of simpler LMs.

Training speed optimization: Rather than expanding the vocabulary of a pretrained model, this group of studies focuses on speeding up the training of large-vocabulary RNNLMs. One of the most effective ways to speed up the training of RNNLMs is to approximate the softmax function. The softmax function is used to normalize the word scores into a probability distribution; hence, it requires the scores $y_{w'}$ of every word in the vocabulary:

$$\mathrm{softmax}(y_w) = \frac{\exp(y_w)}{\sum_{w' \in V_{IS}} \exp(y_{w'})} \tag{4}$$

Consequently, its computational cost is proportional to the number of words in the vocabulary, and it dominates the training of the whole model, making it the network's main bottleneck [8]. Many techniques have been proposed to approximate the softmax computation.
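As an illustration of equations (1) to (3), the following minimal sketch redistributes the `<unk>` mass for a single context. It assumes the RNNLM and n-gram distributions are available as plain dictionaries; the function names and the toy probabilities are hypothetical and are not taken from the paper.

```python
UNK = "<unk>"


def redistribute_uniform(p_rnn, word, n_oos):
    """Eq. (3): IS words keep their RNNLM probability; every OOS word
    receives an equal share P_R(<unk>|h_t) / (|V \\ V_IS| + 1)."""
    if word != UNK and word in p_rnn:
        return p_rnn[word]
    return p_rnn[UNK] / (n_oos + 1)


def redistribute_ngram(p_rnn, p_ngram, word):
    """Eqs. (1)-(2): OOS words share P_R(<unk>|h_t) in proportion to
    their n-gram probabilities for the same context."""
    if word != UNK and word in p_rnn:
        return p_rnn[word]
    # Denominator of eq. (2): n-gram mass of all words outside the shortlist.
    oos_mass = sum(p for w, p in p_ngram.items() if w not in p_rnn)
    beta = p_ngram[word] / oos_mass
    return beta * p_rnn[UNK]


# Toy distributions for one context h_t (assumed values).
p_rnn = {"the": 0.5, "cat": 0.3, UNK: 0.2}
p_ngram = {"the": 0.4, "cat": 0.3, "astana": 0.2, "almaty": 0.1}

print(redistribute_uniform(p_rnn, "astana", n_oos=2))
print(redistribute_ngram(p_rnn, p_ngram, "astana"))
```

In the n-gram variant the redistributed probabilities sum to one over the full vocabulary, which is the role of the coefficient β in equation (2).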
The most popular ones include hierarchical softmax [9, 10, 11], importance sampling [12, 13] and noise contrastive estimation [8, 14]. A comparative study of these techniques can be found in [7, 15]. Other techniques for speeding up the training of large-vocabulary models, besides softmax function approximation, can be found in [16].

Sub-word level RNNLM: Another effective method to expand the vocabulary coverage is to train a sub-word level RNNLM. Unlike standard word-level RNNLMs, these models operate on finer linguistic units such as characters [17] or syllables [18]; hence, a larger range of words is covered. Furthermore, a character-level RNNLM does not suffer from the OOS problem, though it performs worse than word-level models² [18]. Recently, there has been a lot of research effort aimed at training hybrids of word and sub-word level models, with promising results [19, 6, 20].

² At least for English.

3. RNNLM architecture

The conventional RNNLM architecture can be decoupled into three parts: 1) the input projection layer, 2) the middle layers and 3) the output projection layer, as shown in figure 1. The input projection layer is defined by the input word embedding matrix $S \in \mathbb{R}^{d_s \times |V_{IS}|}$, which transforms the one-hot encoding of word $w_t \in \mathbb{R}^{|V_{IS}|}$ at time $t$ into a lower-dimensional continuous space vector $s_t \in \mathbb{R}^{d_s}$, where $d_s$ is the input word embedding vector dimension:

$$s_t = S w_t \tag{5}$$

This vector $s_t$ and the compressed context vector from the previous time step $h_{t-1} \in \mathbb{R}^{d_h}$ are then merged by the non-linear middle layer, which can be represented as a function $f()$, to produce a new compressed context vector $h_t \in \mathbb{R}^{d_h}$, where $d_h$ is the context vector dimension:

$$h_t = f(s_t, h_{t-1}) \tag{6}$$

The function $f()$ can be a simple activation unit such as sigmoid or hyperbolic tangent, or a more complex unit such as LSTM [3] or GRU [21]. The middle layer can also be formed by stacking several such functions.
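Equations (5) and (6) can be made concrete with a small sketch. It shows that multiplying S by a one-hot vector is simply a column lookup, which is why adding a word later amounts to adding a column. The tanh recurrence and the weight names `W_s` and `W_h` are assumptions chosen for illustration; the paper leaves f() generic.

```python
import math


def one_hot(index, size):
    """One-hot encoding w_t of a word index."""
    return [1.0 if i == index else 0.0 for i in range(size)]


def matvec(M, v):
    """Matrix-vector product for a matrix stored as a list of rows."""
    return [sum(m * x for m, x in zip(row, v)) for row in M]


def input_projection(S, word_index):
    """Eq. (5) as a lookup: S w_t selects column `word_index` of S."""
    return [row[word_index] for row in S]


def middle_layer(s_t, h_prev, W_s, W_h):
    """Eq. (6) with f chosen as a plain tanh recurrence (an assumption)."""
    a = matvec(W_s, s_t)
    b = matvec(W_h, h_prev)
    return [math.tanh(x + y) for x, y in zip(a, b)]


# Toy sizes: d_s = 2, |V_IS| = 3, d_h = 3 (all values are made up).
S = [[0.1, 0.4, 0.7],
     [0.2, 0.5, 0.8]]
W_s = [[0.5, -0.5], [0.3, 0.3], [1.0, 0.0]]
W_h = [[0.1, 0.0, 0.0], [0.0, 0.1, 0.0], [0.0, 0.0, 0.1]]

s_t = input_projection(S, 1)
h_t = middle_layer(s_t, [0.0, 0.0, 0.0], W_s, W_h)
print(s_t, h_t)
```

Note that the middle layer only ever sees the d_s-dimensional vector s_t, never the vocabulary size, so its parameters are unaffected when columns are appended to S.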
The compressed context vector $h_t$ is then supplied to the output projection layer, where it is transformed into a higher-dimensional vector $y_t \in \mathbb{R}^{|V_{IS}|}$ by the output word embedding matrix $U \in \mathbb{R}^{d_h \times |V_{IS}|}$:

$$y_t = U^T h_t \tag{7}$$

The entries of the output vector $y_t$ represent the scores of the words that may follow the context $h_t$. These scores are then normalized by the softmax function to form a probability distribution (eq. (4)).

4. Vocabulary expansion

This section describes our proposed method for expanding the vocabulary coverage of a pretrained RNNLM. Our method is based on the observation that the input and output projection layers learn the word embedding matrices, while the middle layers learn the mapping from the input word embedding vectors to the compressed context vectors. Thus, by modifying the input and output word embedding matrices to accommodate new words, we can expand the vocabulary coverage of the RNNLM. Meanwhile, the parameters of the middle layers are kept unchanged, which allows us to avoid expensive retraining. This approach preserves the linguistic regularities encapsulated within the pretrained RNNLM as long as the new words are properly modeled in the input and output embedding spaces. To model the new words, we use the word embedding vectors of "similar" words present in the $V_{IS}$ set.

The proposed method has three main challenges: 1) how to find relevant OOS words for vocabulary expansion, 2) which criteria to use to select "similar" candidate words to model a target OOS word and 3) how to expand the word embedding matrices. The details are discussed in sections 4.1, 4.2 and 4.3, respectively.

4.1. Finding relevant OOS words

The first step of vocabulary expansion is finding relevant OOS words. This step is important, as expanding the vocabulary with irrelevant words that are absent from the input test data is ineffective. The relevant OOS words can be found in either a supervised or an unsupervised manner. For example, in a supervised manner, they can be manually collected by a human expert.
In an unsupervised manner, a subset of the most frequent OOS words can be selected.

In this work, we employ an unsupervised method in which relevant OOS words are automatically extracted from the ASR output. The reason is that a background LM covering a very large vocabulary is commonly employed at the decoding stage. As a result, the generated word lattice will contain the most relevant OOS words that might be present in the input test data.

4.2. Selecting candidate words

Given a list of relevant OOS words, which we call the set $V_{new}$, the next step is to select the candidate words that will be used to model each of them. The selected candidates must be present in the $V_{IS}$ set and should be similar to the target OOS word in both semantic meaning and syntactic behavior. Selecting inadequate candidates might degrade the linguistic regularities incorporated within the pretrained RNNLM; thus, the candidates should be carefully inspected. In natural language processing, many effective techniques exist for finding appropriate candidate words that satisfy the conditions mentioned above [22, 23, 24].

4.3. Expanding word embedding matrices

This section describes our proposed approach to expanding the word embedding matrices $S$ and $U$.

The matrix S: This matrix holds the input word embedding vectors $S = [s_1 \ldots s_{|V_{IS}|}]$ and is used to transform words from their discrete form into a lower-dimensional continuous space. In this space, the vectors of "similar" words are clustered together [25]. Moreover, these vectors have been shown to capture meaningful semantic and syntactic features of language [26]. Hence, if two words have similar semantic and syntactic roles, their embedding vectors are expected to belong to the same cluster. As such, the words in a cluster can be used to approximate a new word that belongs to the same cluster.
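The first two steps (sections 4.1 and 4.2) can be sketched as follows. The sketch assumes 1-best hypotheses given as whitespace-separated strings and an auxiliary table of non-zero word vectors covering both IS and OOS words. Ranking by cosine similarity is one possible selection criterion, and all names and toy vectors are hypothetical.

```python
import math


def extract_oos_words(hypotheses, shortlist):
    """Section 4.1: collect words from ASR hypotheses that are not in
    the shortlist, in first-seen order and without duplicates."""
    found = []
    for hyp in hypotheses:
        for word in hyp.split():
            if word not in shortlist and word not in found:
                found.append(word)
    return found


def cosine(a, b):
    """Cosine similarity of two non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def select_candidates(target, vectors, shortlist, top_k):
    """Section 4.2: the top_k shortlist words closest to the target."""
    scored = [(cosine(vectors[target], vectors[w]), w)
              for w in shortlist if w in vectors and w != target]
    scored.sort(reverse=True)
    return [w for _, w in scored[:top_k]]


# Toy data (assumed): a tiny shortlist and 2-dimensional word vectors.
shortlist = {"i", "flew", "to", "is", "cold", "london", "paris", "run"}
vectors = {"london": [1.0, 0.1], "paris": [0.9, 0.2],
           "run": [0.0, 1.0], "astana": [1.0, 0.15]}
hypotheses = ["i flew to astana", "astana is cold"]

new_words = extract_oos_words(hypotheses, shortlist)
candidates = {w: select_candidates(w, vectors, shortlist, top_k=2)
              for w in new_words}
print(new_words, candidates)
```

Restricting the ranking to shortlist words matters because only those words have trained columns in the RNNLM's embedding matrices.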
For example, consider a scenario where we want to add a new word, Astana (the capital of Kazakhstan), to the vocabulary of an existing RNNLM. Here, we can select candidate words from $V_{IS}$ with similar semantic and syntactic roles, such as London and Paris. Specifically, we extract the input word embedding vectors of the selected candidates $C = \{s_{london}, s_{paris}\}$ and combine them to form a new input word embedding vector $\hat{s}_{astana} \in \mathbb{R}^{d_s}$ as follows:

$$\hat{s}_{astana} = \frac{\sum_{s \in C} m_s s}{|C|} \tag{8}$$

where $m_s$ is some normalized metric used to weigh the candidates. We repeat this procedure for all words in the set $V_{new}$. The resulting new input word embedding vectors are then used to form the matrix $\hat{S} = [\hat{s}_1 \ldots \hat{s}_{|V_{new}|}]$, where $\hat{S} \in \mathbb{R}^{d_s \times |V_{new}|}$, which is appended to the initial matrix $S \in \mathbb{R}^{d_s \times |V_{IS}|}$ to form the expanded matrix:

$$S^+ = [S \ \hat{S}] \tag{9}$$

where $S^+ \in \mathbb{R}^{d_s \times (|V_{IS}| + |V_{new}|)}$. The input word vector $w_t \in \mathbb{R}^{|V_{IS}|}$ must also be expanded to accommodate the new words from $V_{new}$, which results in the new input vector $\hat{w}_t \in \mathbb{R}^{|V_{IS}| + |V_{new}|}$. The input vector and the input word embedding matrix after expansion are depicted in figure 1.

The matrix U: This matrix holds the output word embedding vectors $U = [u_1 \ldots u_{|V_{IS}|}]$, where $u \in \mathbb{R}^{d_h}$. These vectors are compared against the context vector $h_t$ using the dot product to determine the score of the next possible word $w_{t+1}$ [8]. Intuitively, for a given context $h_t$, interchangeable words with similar semantic and syntactic roles should have similar scores to follow it. Therefore, in the output word embedding space, interchangeable words should belong to the same cluster. Hence, we can use the same procedure and the same candidates that were used to expand matrix $S$ to model the new words in the output word embedding space. However, this time we operate in the column space of matrix $U$.
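Equations (8) and (9) can be sketched in a few lines. The sketch assumes matrices stored as lists of rows with one column per word, and a hypothetical `vocab` dictionary mapping words to column indices. The weights default to 1, which corresponds to the unweighted case; this is an illustration rather than the authors' implementation.

```python
def get_column(M, j):
    """Return column j of a matrix stored as a list of rows."""
    return [row[j] for row in M]


def combine_candidates(columns, weights=None):
    """Eq. (8): weighted sum of the candidate vectors divided by |C|."""
    if weights is None:
        weights = [1.0] * len(columns)
    dim = len(columns[0])
    return [sum(m * col[i] for m, col in zip(weights, columns)) / len(columns)
            for i in range(dim)]


def expand_matrix(M, vocab, new_words, candidates):
    """Eq. (9): append one new column per OOS word, giving [M M_hat].
    Returns a new matrix and a new vocab; the inputs are not modified."""
    expanded = [list(row) for row in M]
    new_vocab = dict(vocab)
    for word in new_words:
        cols = [get_column(M, vocab[c]) for c in candidates[word]]
        vec = combine_candidates(cols)
        for row, value in zip(expanded, vec):
            row.append(value)
        new_vocab[word] = len(new_vocab)
    return expanded, new_vocab


# Toy input embedding matrix S with d_s = 2 and three shortlist entries.
vocab = {"london": 0, "paris": 1, "<unk>": 2}
S = [[1.0, 3.0, 0.0],
     [2.0, 4.0, 0.0]]

S_plus, vocab_plus = expand_matrix(
    S, vocab, ["astana"], {"astana": ["london", "paris"]})
print(S_plus, vocab_plus)
```

Because U is also stored with one column per word, the same `expand_matrix` call with the same candidates can be applied to it, which mirrors the procedure described for matrix U.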
The output vector and the output word embedding matrix after expansion are depicted in figure 1.

5. Experiment

This section describes the experiments conducted to evaluate the efficacy of the proposed vocabulary expansion method for pretrained RNNLMs on an ASR task. The ASR system is built with the Kaldi [27] speech recognition toolkit on the first release of the TED-LIUM [28] speech corpus. To highlight the importance of vocabulary expansion, we train the LMs on the generic-domain text corpus One Billion Word Benchmark (OBWB) [29].

Table 1: The characteristics of the TED-LIUM corpus

| Characteristics | Train | Dev | Test |
|---|---|---|---|
| No. of talks | 774 | 8 | 11 |
| No. of words | 1.3M | 17.7k | 27.5k |
| Total duration | 122hr | 1.5hr | 2.5hr |

As the baseline LMs, we trained two state-of-the-art models, namely the modified Kneser-Ney smoothed 5-gram (KN5) [30] and the recurrent LSTM network (LSTM) [3]. We call our system VE-LSTM; it is constructed by expanding the vocabulary of the baseline LSTM. The performance of these three models is evaluated using both perplexity and WER.

Experiment setup: The TED-LIUM corpus consists of monologue talks given by experts on specific topics; its characteristics are given in table 1. Its train set was used to build the acoustic model with the 'nnet3+chain' setup of Kaldi, including the latest developments. Its dev set was used to tune hyper-parameters such as the number of candidates used to model the new words, the word insertion penalty and the LM scale. The test set was used to compare the performance of the proposed VE-LSTM and the two baseline models. Additionally, the TED-LIUM corpus has a predesigned pronunciation lexicon of 150k words, which was also used as the vocabulary set for the baseline LMs.

The OBWB corpus consists of text collected from various domains, including news and parliamentary speeches. Its train set contains around 700M words and is used to train both baseline LMs. Its validation set of 141k words was used to stop the training of the LSTM model.
The baseline KN5 was trained using the SRILM [31] toolkit with the 150k vocabulary. It was used to rescore the word lattice and the 300-best list. Its pruned³ version, KN5 pruned, was used as the background LM during the decoding stage.

The baseline LSTM was trained as a four-layer network similar to [3], using our own implementation in PyTorch [32]. The LSTM explicitly models only the 10k most frequent words⁴ of the 150k vocabulary set. The remaining 140k words are modeled by uniformly distributing the probability mass of the `<unk>` node using equation (3). Thus, the input vector $w_t$ and the output vector $y_t$ both have size 10k + 1; we refer to this set of modeled entries as the $V_{IS}$ set. Hence, the baseline LSTM theoretically models the same 150k vocabulary set as KN5. The OOS rates with respect to the dev and test sets are 6.8% and 5.5%, respectively. The input $d_s$ and output $d_h$ word embedding vector dimensions were set to 300 and 1500, respectively. The parameters of the model are learned by the truncated backpropagation through time (BPTT) algorithm [33], unrolled for 10 steps. For regularization, we applied 50% dropout on the non-recurrent connections, as suggested by [34].

The VE-LSTM model is obtained by expanding the vocabulary of the baseline LSTM with OOS words extracted from the ASR output. For example, to construct the VE-LSTM model for the test set, we collect the list of OOS words $V_{new}$ from the recognized hypotheses of the test set. For each OOS word in $V_{new}$, we then select an appropriate set of candidate words $C$. The selection criteria are explained below. Next, the selected candidates are used to model the new input and output word embedding vectors of the target OOS words as in equation (8). For simplicity, we did not weigh the selected candidates. Lastly, the generated new vectors are appended to the input and output word embedding matrices of the baseline LSTM model, see figure 1. Consequently, the resulting VE-LSTM explicitly models $10k + 1 + |V_{new}|$ words, whereas the remaining $140k - |V_{new}|$ words are modeled by uniformly distributing the probability mass of the `<unk>` node using equation (3).

To select candidate words, we used the classical skip-gram model [23]. The skip-gram model is trained with default parameters on the OBWB corpus, covering all unique words. Typically, when presented with a target OOS word, the skip-gram model returns a list of "similar" words. From this list, we select only the top eight⁵ words that are present in the $V_{IS}$ set.

³ The pruning coefficient is $10^{-7}$.

⁴ Plus the beginning-of-sentence `<s>` and end-of-sentence `</s>` symbols.

Table 2: The perplexity and WER results on the dev and test sets of the TED-LIUM corpus

| LM | Perplexity (Dev) | Perplexity (Test) | Rescore | WER % (Dev) | WER % (Test) |
|---|---|---|---|---|---|
| KN5 pruned | 384 | 341 | - | 13.05 | 12.82 |
| KN5 | 237 | 218 | Lattice | 10.85 | 10.49 |
| KN5 | | | 300-best | 11.03 | 10.79 |
| LSTM | 211 | 185 | 300-best | 10.63 | 10.18 |
| VE-LSTM | 163 | 150 | 300-best | 10.44 | 9.77 |

Results: The experimental results are given in table 2. We evaluated the LMs on perplexity and WER. The perplexity was computed on the reference data, and the OOS words for vocabulary expansion were extracted from the reference data as well. The perplexity results computed on the test set show that VE-LSTM significantly outperforms the KN5 and LSTM models, by 31% and 18% relative, respectively.

For the WER experiment, KN5 is evaluated on both the lattice and the 300-best rescoring tasks. The LSTM and VE-LSTM are evaluated only on the 300-best rescoring task. We tried extracting the OOS words for vocabulary expansion from different N-best lists. Interestingly, the best result is achieved when they are extracted from the 1-best. The reason is that the 1-best hypothesis list contains words with high confidence scores; hence, the OOS words extracted from it are reliable, whereas using other N-best lists yields unreliable OOS words that confuse the VE-LSTM model. The VE-LSTM outperforms the baseline LSTM model by 4% relative WER.
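The relative improvements quoted in this section can be recomputed from the test-set columns of table 2. The short sketch below does so; the helper name is hypothetical, and the numbers are copied from the table.

```python
def relative_reduction(baseline, improved):
    """Relative reduction in percent: 100 * (baseline - improved) / baseline."""
    return 100.0 * (baseline - improved) / baseline


# Test-set values from table 2.
PPL_KN5, PPL_LSTM, PPL_VE = 218, 185, 150
WER_KN5_LATTICE, WER_LSTM, WER_VE = 10.49, 10.18, 9.77

ppl_vs_kn5 = relative_reduction(PPL_KN5, PPL_VE)
ppl_vs_lstm = relative_reduction(PPL_LSTM, PPL_VE)
wer_vs_lstm = relative_reduction(WER_LSTM, WER_VE)
wer_vs_kn5 = relative_reduction(WER_KN5_LATTICE, WER_VE)

print(f"perplexity vs KN5:  {ppl_vs_kn5:.1f}%")
print(f"perplexity vs LSTM: {ppl_vs_lstm:.1f}%")
print(f"WER vs LSTM:        {wer_vs_lstm:.1f}%")
print(f"WER vs KN5 lattice: {wer_vs_kn5:.1f}%")
```

The perplexity reduction over the LSTM comes out slightly below 19%, so the 18% quoted in the text appears to be truncated rather than rounded.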
Compared to the KN5 model used to rescore the word lattice, a 7% relative WER improvement is achieved. These improvements suggest that the proposed vocabulary expansion method is effective.

6. Conclusion

In this paper, we have proposed an efficient vocabulary expansion method for pretrained RNNLMs. Our method, which modifies the input and output projection layers while keeping the parameters of the middle layers unchanged, was shown to be feasible. We found that extracting OOS words for vocabulary expansion from the ASR output is effective when high-confidence words are selected. Our method achieved significant perplexity and WER improvements on a state-of-the-art ASR system over two strong baseline LMs. Importantly, expensive retraining was avoided and no additional training data was used. We believe that our approach of manipulating the input and output projection layers is general enough to be applied to other neural network models with similar architectures.

7. Acknowledgements

This work was conducted within the Rolls-Royce@NTU Corporate Lab with support from the National Research Foundation (NRF) Singapore under the Corp Lab@University Scheme.

⁵ This number was tuned on the dev set.

8. References

[1] J. T. Goodman, "A bit of progress in language modeling," Computer Speech & Language, vol. 15, no. 4, pp. 403–434, 2001.

[2] T. Mikolov, M. Karafiát, L. Burget, J. Černocký, and S. Khudanpur, "Recurrent neural network based language model," in Interspeech, 2010.

[3] M. Sundermeyer, R. Schlüter, and H. Ney, "LSTM neural networks for language modeling," in Interspeech, 2012.

[4] X. Liu, X. Chen, Y. Wang, M. J. Gales, and P. C. Woodland, "Two efficient lattice rescoring methods using recurrent neural network language models," IEEE/ACM Transactions on Audio, Speech, and Language Processing, vol. 24, no. 8, pp. 1438–1449, 2016.

[5] J. Park, X. Liu, M. J. Gales, and P. C. Woodland, "Improved neural network based language modelling and adaptation," in Interspeech, 2010.

[6] R. Jozefowicz, O. Vinyals, M. Schuster, N. Shazeer, and Y. Wu, "Exploring the limits of language modeling," arXiv preprint arXiv:1602.02410, 2016.

[7] W. Chen, D. Grangier, and M. Auli, "Strategies for training large vocabulary neural language models," in Proceedings of the 54th Annual Meeting of the Association for Computational Linguistics, 2016, pp. 1975–1985.

[8] A. Mnih and Y. W. Teh, "A fast and simple algorithm for training neural probabilistic language models," in Proceedings of the International Conference on Machine Learning, 2012.

[9] F. Morin and Y. Bengio, "Hierarchical probabilistic neural network language model," in AISTATS, 2005, pp. 246–252.

[10] A. Mnih and G. E. Hinton, "A scalable hierarchical distributed language model," in Advances in Neural Information Processing Systems, 2009, pp. 1081–1088.

[11] T. Mikolov, S. Kombrink, L. Burget, J. Černocký, and S. Khudanpur, "Extensions of recurrent neural network language model," in 2011 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), 2011, pp. 5528–5531.

[12] Y. Bengio and J.-S. Senécal, "Quick training of probabilistic neural nets by importance sampling," in AISTATS, 2003, pp. 1–9.

[13] Y. Bengio and J.-S. Senécal, "Adaptive importance sampling to accelerate training of a neural probabilistic language model," IEEE Transactions on Neural Networks, vol. 19, no. 4, pp. 713–722, 2008.

[14] X. Chen, X. Liu, M. J. F. Gales, and P. C. Woodland, "Recurrent neural network language model training with noise contrastive estimation for speech recognition," in 2015 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), 2015, pp. 5411–5415.

[15] E. Grave, A. Joulin, M. Cissé, D. Grangier, and H. Jégou, "Efficient softmax approximation for GPUs," in Proceedings of the International Conference on Machine Learning, 2017.

[16] T. Mikolov, A. Deoras, D. Povey, L. Burget, and J. Černocký, "Strategies for training large scale neural network language models," in 2011 IEEE Workshop on Automatic Speech Recognition & Understanding, 2011, pp. 196–201.

[17] I. Sutskever, J. Martens, and G. E. Hinton, "Generating text with recurrent neural networks," in Proceedings of the International Conference on Machine Learning, 2011, pp. 1017–1024.

[18] T. Mikolov, I. Sutskever, A. Deoras, H.-S. Le, S. Kombrink, and J. Černocký, "Subword language modeling with neural networks," 2011.

[19] Y. Kim, Y. Jernite, D. Sontag, and A. M. Rush, "Character-aware neural language models," in AAAI, 2016, pp. 2741–2749.

[20] H. Xu, K. Li, Y. Wang, J. Wang, S. Kang, X. Chen, D. Povey, and S. Khudanpur, "Neural network language modeling with letter-based features and importance sampling," in 2018 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), 2018.

[21] J. Chung, C. Gulcehre, K. Cho, and Y. Bengio, "Gated feedback recurrent neural networks," in Proceedings of the International Conference on Machine Learning, 2015, pp. 2067–2075.

[22] G. Miller and C. Fellbaum, "WordNet: An electronic lexical database," 1998.

[23] T. Mikolov, K. Chen, G. Corrado, and J. Dean, "Efficient estimation of word representations in vector space," arXiv preprint arXiv:1301.3781, 2013.

[24] J. Pennington, R. Socher, and C. Manning, "GloVe: Global vectors for word representation," in Proceedings of the 2014 Conference on Empirical Methods in Natural Language Processing (EMNLP), 2014, pp. 1532–1543.

[25] R. Collobert and J. Weston, "A unified architecture for natural language processing: Deep neural networks with multitask learning," in Proceedings of the International Conference on Machine Learning, 2008, pp. 160–167.

[26] T. Mikolov, W.-t. Yih, and G. Zweig, "Linguistic regularities in continuous space word representations," in HLT-NAACL, 2013, pp. 746–751.

[27] D. Povey, A. Ghoshal, G. Boulianne, L. Burget, O. Glembek, N. Goel, M. Hannemann, P. Motlicek, Y. Qian, P. Schwarz et al., "The Kaldi speech recognition toolkit," in IEEE 2011 Workshop on Automatic Speech Recognition and Understanding, 2011.

[28] A. Rousseau, P. Deléglise, and Y. Esteve, "TED-LIUM: an automatic speech recognition dedicated corpus," in LREC, 2012, pp. 125–129.

[29] C. Chelba, T. Mikolov, M. Schuster, Q. Ge, T. Brants, P. Koehn, and T. Robinson, "One billion word benchmark for measuring progress in statistical language modeling," in Interspeech, 2014.

[30] S. F. Chen and J. Goodman, "An empirical study of smoothing techniques for language modeling," Computer Speech & Language, vol. 13, no. 4, pp. 359–394, 1999.

[31] A. Stolcke, "SRILM - an extensible language modeling toolkit," in Interspeech, 2002.

[32] A. Paszke, S. Gross, S. Chintala, G. Chanan, E. Yang, Z. DeVito, Z. Lin, A. Desmaison, L. Antiga, and A. Lerer, "Automatic differentiation in PyTorch," 2017.

[33] R. J. Williams and J. Peng, "An efficient gradient-based algorithm for on-line training of recurrent network trajectories," Neural Computation, vol. 2, no. 4, pp. 490–501, 1990.

[34] W. Zaremba, I. Sutskever, and O. Vinyals, "Recurrent neural network regularization," CoRR, 2014.
