Monitoring Link Faults in Nonlinear Diffusively-coupled Networks

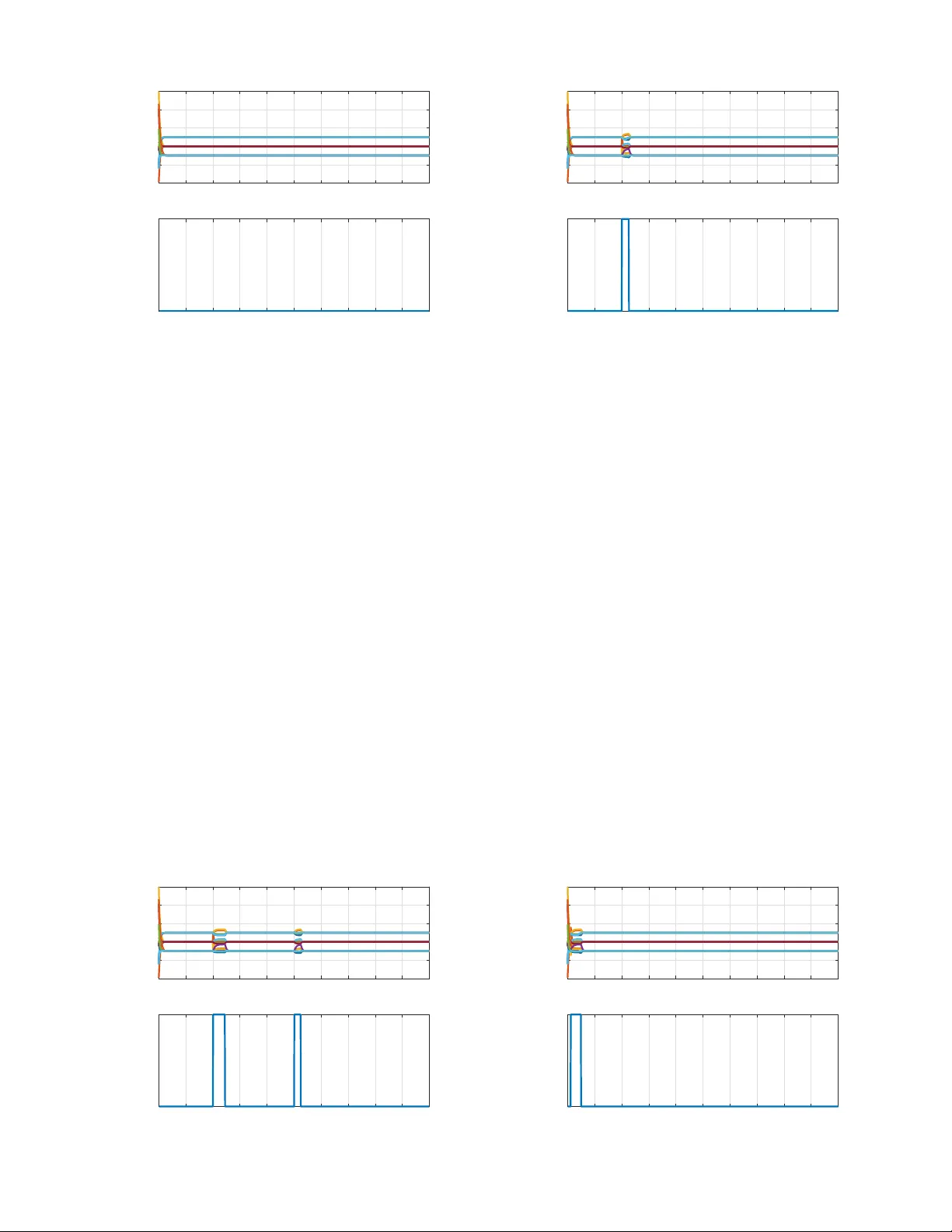

Fault detection and isolation is an area of engineering concerned with designing on-line protocols that allow one to identify the existence of faults in a system, pinpoint their exact location, and overcome them. We consider the case of multi-agent sys…

Authors: Miel Sharf, Daniel Zelazo