Unsupervised Audiovisual Synthesis via Exemplar Autoencoders

We present an unsupervised approach that converts the input speech of any individual into audiovisual streams of potentially-infinitely many output speakers. Our approach builds on simple autoencoders that project out-of-sample data onto the distribu…

Authors: Kangle Deng, Aayush Bansal, Deva Ramanan (Carnegie Mellon University)

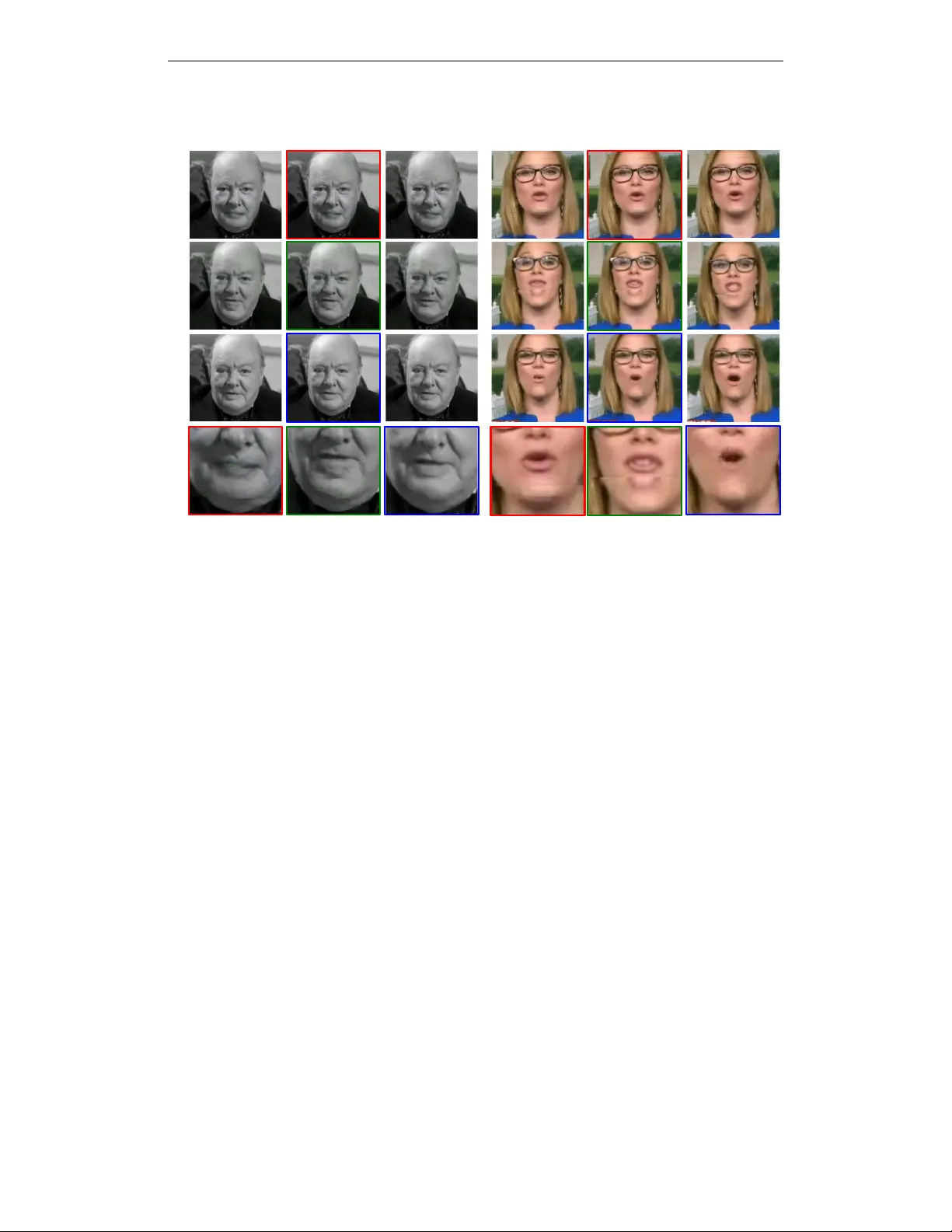

Published as a conference paper at ICLR 2021

Kangle Deng, Aayush Bansal, Deva Ramanan
Carnegie Mellon University, Pittsburgh, PA 15213, USA
{kangled,aayushb,deva}@cs.cmu.edu

ABSTRACT

We present an unsupervised approach that converts the input speech of any individual into audiovisual streams of potentially-infinitely many output speakers. Our approach builds on simple autoencoders that project out-of-sample data onto the distribution of the training set. We use Exemplar Autoencoders to learn the voice, stylistic prosody, and visual appearance of a specific target exemplar speech. In contrast to existing methods, the proposed approach can be easily extended to an arbitrarily large number of speakers and styles using only 3 minutes of target audio-video data, without requiring any training data for the input speaker. To do so, we learn audiovisual bottleneck representations that capture the structured linguistic content of speech. We outperform prior approaches on both audio and video synthesis, and provide extensive qualitative analysis on our project page: https://www.cs.cmu.edu/~exemplar-ae/.

1 INTRODUCTION

We present an unsupervised approach to retargeting the speech of any unknown speaker to an audiovisual stream of a known target speaker. Using our approach, one can retarget a celebrity video clip to say the words "Welcome to ICLR 2021" in different languages, including English, Hindi, and Mandarin (please see our associated video). Our approach enables a variety of novel applications because it eliminates the need for training on large datasets; instead, it is trained with unsupervised learning on only a few minutes of the target speech, and does not require any training examples of the input speaker.
By retargeting input speech generated by medical devices such as electrolarynxes and text-to-speech (TTS) systems, our approach enables personalized voice generation for voice-impaired individuals (Kain et al., 2007; Nakamura et al., 2012). Our work also enables applications in education and entertainment; one can create interactive documentaries about historical figures in their voice (Bansal et al., 2018), or generate the sound of actors who are no longer able to perform. We highlight such representative applications in Figure 1.

Prior work typically looks independently at the problems of audio conversion (Chou et al., 2019; Kaneko et al., 2019a;b; Mohammadi & Kim, 2019; Qian et al., 2019) and video generation from audio signals (Yehia et al., 2002; Chung et al., 2017; Suwajanakorn et al., 2017; Zhou et al., 2019; Zhu et al., 2018). Particularly relevant are zero-shot audio translation approaches (Chou et al., 2019; Mohammadi & Kim, 2019; Polyak & Wolf, 2019; Qian et al., 2019) that learn a generic low-dimensional embedding (from a training set) designed to be agnostic to speaker identity (Fig. 2-a). We will empirically show that such generic embeddings may struggle to capture stylistic details of in-the-wild speech that differs from the training set. Alternatively, one can directly learn an audio translation engine specialized to specific input and output speakers, which often requires data from the two speakers that is either aligned/paired (Chen et al., 2014; Nakashika et al., 2014; Sun et al., 2015; Toda et al., 2007) or unaligned/unpaired (Chou et al., 2018; Fang et al., 2018; Kameoka et al., 2018; Kaneko & Kameoka, 2018; Kaneko et al., 2019a;b; Serrà et al., 2019). This requirement restricts such methods to known input speakers at test time (Fig. 2-b).
In terms of video synthesis from audio input, zero-shot facial synthesis approaches (Chung et al., 2017; Zhou et al., 2019; Zhu et al., 2018) animate the lips but struggle to capture realistic facial characteristics of the entire person. Other approaches (Ginosar et al., 2019; Shlizerman et al., 2018; Suwajanakorn et al., 2017) restrict themselves to known input speakers at test time and require large amounts of data to train a model in a supervised manner.

[Figure 1 panels: (a) Assistive Tool for Speech Impaired: Zero-Shot Natural Voice Synthesis (Text-to-Speech system → natural voice like Takeo Kanade; Electrolarynx → natural voice like Michelle Obama); (b) Beyond Language Constraints: Zero-Shot Multi-Lingual Translation (native Chinese speaker → Chinese speech in John Oliver's voice; native Hindi speaker → Hindi speech in John Oliver's voice); (c) Educational/Entertainment Purposes: Zero-Shot Audio-Visual Content Creation ("this is not the end, this is not even the …" → Winston Churchill audio-visual speech). Banner: "We train Exemplar Autoencoders for infinitely many speakers using ~3 minutes of speech for an individual speaker without any additional information."]

Figure 1: We train audiovisual (AV) exemplar autoencoders that capture personalized in-the-wild web speech, as shown in the top row. We then show three representative applications of Exemplar Autoencoders: (a) our approach enables zero-shot natural voice synthesis from an Electrolarynx or a TTS used by a speech-impaired person; (b) without any knowledge of Chinese and Hindi, our approach can generate Chinese and Hindi speech for John Oliver, an English-speaking late-night show host; and (c) we can generate audio-visual content for historical documents that could not otherwise be captured.
Our work combines the zero-shot nature of generic embeddings with the stylistic detail of person-specific translation systems. Simply put, given a target speech with a particular style and ambient environment, we learn an autoencoder specific to that target speech (Fig. 2-c). We call our approach "Exemplar Autoencoders". At test time, we demonstrate that one can translate any input speech into the target simply by passing it through the target exemplar autoencoder. We show that this property is a consequence of two curious facts, illustrated in Fig. 3: (1) linguistic phonemes tend to cluster quite well in spectrogram space (Fig. 3-c); and (2) autoencoders with sufficiently small bottlenecks act as projection operators that project out-of-sample source data onto the target training distribution, allowing us to preserve the content (words) of the source and the style of the target (Fig. 3-b). Finally, we jointly synthesize audiovisual (AV) outputs by adding a visual stream to the audio autoencoder. Importantly, our approach is data-efficient: it can be trained using 3 minutes of audio-video data of the target speaker and no training data for the input speaker. The ability to train exemplar autoencoders on small amounts of data is crucial when learning specialized models tailored to particular target data. Table 1 contrasts our work with leading approaches in audio conversion (Kaneko et al., 2019b; Qian et al., 2019) and audio-to-video synthesis (Chung et al., 2017; Suwajanakorn et al., 2017).

Contributions: (1) We introduce Exemplar Autoencoders, which allow any input speech to be converted into an arbitrarily large number of target speakers ("any-to-many" AV synthesis). (2) We move beyond well-curated datasets and work with in-the-wild web audio-video data. We also provide a new CelebAudio dataset for evaluation.
(3) Our approach can be used as an off-the-shelf, plug-and-play tool for target-specific voice conversion. Finally, we discuss broader impacts of audio-visual content creation in the appendix, including forensic experiments that suggest fake content can be identified with high accuracy.

2 RELATED WORK

A tremendous interest in audio-video generation for health-care, quality-of-life improvement, educational, and entertainment purposes has influenced a wide variety of work in the audio, natural language processing, computer vision, and graphics literature. In this work, we seek to explore a standard representation for user-controllable "any-to-many" audiovisual synthesis.

Speech Synthesis & Voice Conversion: Earlier works in speech synthesis (Hunt & Black, 1996; Zen et al., 2009) use text inputs to create Text-to-Speech (TTS) systems. Sequence-to-sequence (Seq2seq) structures (Sutskever et al., 2014) have led to significant advancements in TTS systems (Oord et al., 2016; Shen et al., 2018; Wang et al., 2017). Recently, Jia et al. (2018) extended these models to incorporate multiple speakers. These approaches enable audio conversion by first generating text from the input with a speech-to-text (STT) system and then using a TTS system to produce the target audio. Despite enormous progress in building TTS systems, it is not trivial to embody perfect emotion and prosody due to the limited expressiveness of text (Pitrelli et al., 2006). In this work, we use the raw speech signal of a target speech to encode the stylistic nuance and subtlety that we wish to synthesize.

[Figure 2 panels: (a) zero-shot conversion: offline training with a fixed number of speakers {S1, S2, …, SN}, using a content embedding, a speaker embedding, and a decoder; test time: any unknown speaker (generic-user). (b) one-on-one conversion: offline training using a pair of speakers {S1, S2}; test time: {S1, S2} (person-specific). (c) ours, exemplar autoencoders: on-the-fly training with any number of speakers {{S1}, {S2}, …, {SN}, …}, learning a speaker-specific model; test time: any unknown speaker (generic-user & person-specific).]

Figure 2: Prior approaches can be classified into two groups: (a) Zero-shot conversion learns a generic low-dimensional embedding from a training set that is designed to be agnostic to speaker identity. We empirically observe that such generic embeddings may struggle to capture stylistic details of in-the-wild speech that differs from the training set. (b) Person-specific one-on-one conversion learns a translation engine specialized to specific input and output speakers, restricting it to known input speakers at test time. (c) In this work, we combine the zero-shot nature of generic embeddings with the stylistic detail of person-specific translation systems. Simply put, given a target speech with a particular style and ambient environment, we learn an autoencoder specific to that target speech. At test time, one can translate any input speech into the target simply by passing it through the target exemplar autoencoder.
Audio-to-Audio Conversion: The problem of audio-to-audio conversion has largely been confined to one-to-one translation, be it using a paired (Chen et al., 2014; Nakashika et al., 2014; Sun et al., 2015; Toda et al., 2007) or unpaired (Chen et al., 2019; Kaneko & Kameoka, 2018; Kaneko et al., 2019a;b; Serrà et al., 2019; Tobing et al., 2019; Zhao et al., 2019) data setup. Recent approaches (Chou et al., 2019; Mohammadi & Kim, 2019; Paul et al., 2019; Polyak & Wolf, 2019; Qian et al., 2019) have begun to explore any-to-any translation, where the goal is to generalize to any input and any target speaker. To do so, these approaches learn a speaker-agnostic embedding space that is meant to generalize to never-before-seen identities. Our work is closely inspired by such approaches, but is based on the observation that such generic embeddings tend to be low-dimensional, making it difficult to capture the full range of stylistic prosody and ambient environments that can exist in speech. We can, however, capture these subtle but important aspects via an exemplar autoencoder that is trained for a specific target speech exemplar. Moreover, it is not clear how to extend generic embeddings to audiovisual generation because visual appearance is highly multimodal (people can appear different due to clothing). Exemplar autoencoders, on the other hand, are straightforward to extend to video because they are inherently tuned to the particular visual mode (background, clothing, etc.) in the target speech.

Audio-Video Synthesis: There is growing interest (Chung et al., 2018; Nagrani et al., 2018; Oh et al., 2019) in jointly studying audio and video for better recognition (Kazakos et al., 2019), localizing sound (Gao et al., 2018; Senocak et al., 2018), or learning better visual representations (Owens & Efros, 2018; Owens et al., 2016).
There is a wide literature (Berthouzoz et al., 2012; Bregler et al., 1997; Fan et al., 2015; Fried et al., 2019; Greenwood et al., 2018; Taylor et al., 2017; Thies et al., 2020; Kumar et al., 2020) on synthesizing videos (talking heads) from an audio signal. Early work (Yehia et al., 2002) links speech acoustics with the coefficients necessary to animate natural face and head motion. Recent approaches (Chung et al., 2017; Zhou et al., 2019; Zhu et al., 2018) have looked at zero-shot facial synthesis from audio signals. These approaches register human faces using facial keypoints so that one can expect a certain facial part at a fixed location. At test time, an image of the target speaker and the audio are provided. While these approaches can animate the lips of the target speaker, it is still difficult to capture other realistic facial expressions.

| Method | Output (input: audio) | Unknown test speaker | Speaker-specific model |
|---|---|---|---|
| Auto-VC (Qian et al., 2019) | A | ✓ | ✗ |
| StarGAN-VC (Kaneko et al., 2019b) | A | ✗ | ✓ |
| Speech2Vid (Chung et al., 2017) | V | ✓ | ✗ |
| Synthesizing Obama (Suwajanakorn et al., 2017) | V | ✗ | ✓ |
| Ours (Exemplar Autoencoders) | AV | ✓ | ✓ |

Table 1: We contrast our work with leading approaches in audio conversion (Kaneko et al., 2019b; Qian et al., 2019) and audio-to-video generation (Chung et al., 2017; Suwajanakorn et al., 2017). Unlike past work, we generate both audio and video output from an audio input (left). Zero-shot methods are attractive because they can generalize to unknown target speakers at test time (middle). However, in practice, models specialized to particular pairs of known input and target speakers tend to produce more accurate results (right). Our method combines the best of both worlds by tuning an exemplar autoencoder on-the-fly to the target speaker of interest.
Other approaches (Ginosar et al., 2019; Shlizerman et al., 2018; Suwajanakorn et al., 2017) use large amounts of training data and supervision (in the form of keypoints) to learn person-specific synthesis models. These approaches generate realistic results; however, they are restricted to known input users at test time. In contrast, our goal is to synthesize audiovisual content (given any input audio) in a data-efficient manner (using 2-3 minutes of unsupervised data).

Autoencoders: Autoencoders are unsupervised neural networks that learn to reconstruct inputs via a bottleneck layer (Goodfellow et al., 2017). They have traditionally been used for dimensionality reduction or feature learning, though variational formulations have extended them to full generative models that can be used for probabilistic synthesis (Kingma & Welling, 2013). Most related to us are denoising autoencoders (Vincent et al., 2008), which learn to project noisy input data onto the underlying training data manifold. Such methods are typically trained on synthetically corrupted inputs with the goal of learning useful representations. Instead, we exploit the projection property of autoencoders itself, repurposing them as translation engines that "project" the input speech of an unknown speaker onto the target speaker manifold. It is well known that linear autoencoders, which can be learned with PCA (Baldi & Hornik, 1989), produce the best reconstruction of any out-of-sample input (Fig. 3-a), in terms of squared error from the subspace spanned by the training dataset (Bishop, 2006). We empirically verify that this property approximately holds for nonlinear autoencoders (Fig. 3). When trained on a target (exemplar) dataset consisting of a particular speech style, we demonstrate that reprojections of out-of-sample inputs tend to preserve the content of the input and the style of the target. Recently, Wang et al. (2021) demonstrated these properties for various video analytic and processing tasks.

3 EXEMPLAR AUTOENCODERS

There is an enormous space of audiovisual styles spanning visual appearance, prosody, pitch, emotions, and environment. It is challenging for a single large model to capture such diversity for accurate audiovisual synthesis. However, many small models may easily capture the various nuances. In this work, we learn a separate autoencoder for individual audiovisual clips that are limited to a particular style. We call our technique Exemplar Autoencoders. One of our remarkable findings is that Exemplar Autoencoders (when trained appropriately) can be driven with the speech input of a never-before-seen speaker, allowing the overall system to function as an audiovisual translation engine. We study in detail why audiovisual autoencoders preserve the content (words) of never-before-seen input speech but preserve the style of the target speech on which they were trained. The heart of our approach relies on the compressibility of audio speech, which we describe below.

Speech contains two types of information: (i) the content, or words being said, and (ii) style information that describes the scene context, person-specific characteristics, and prosody of the speech delivery. It is natural to assume that speech is generated by the following process. First, a style $s$ is drawn from the style space $S$. Then a content code $w$ is drawn from the content space $W$. Finally, $x = f(s, w)$ denotes the speech of the content $w$ spoken in style $s$, where $f$ is the generating function of speech.

[Figure 3 panels: (a) Linear Autoencoder (PCA): an out-of-sample input is projected onto the training data subspace; (b) Autoencoder: an out-of-sample input is projected onto the training data manifold; (c) t-SNE for acoustic features.]

Figure 3: Autoencoders with sufficiently small bottlenecks project out-of-sample data onto the manifold spanned by the training set (Goodfellow et al., 2017), which can be easily seen in the case of linear autoencoders learned via PCA (Bishop, 2006). We observe that acoustic features (mel spectrograms) of words spoken by different speakers (from the VCTK dataset (Veaux et al., 2016)) easily cluster together irrespective of who said them and with what style. We combine these observations to design a simple style transfer engine that works by training an autoencoder on a single target style with a mel-spectrogram reconstruction loss. Exemplar Autoencoders project out-of-sample input speech content onto the style-specific manifold of the target.

3.1 STRUCTURED SPEECH SPACE

In human speech production, speakers use different shapes of the vocal tract¹ to pronounce different words. Interestingly, different people use similar, or ideally the same, vocal-tract shapes to pronounce the same words. For this reason, speech has a built-in structure: the acoustic features of the same word in different styles are very close (also shown in Fig. 3-c). Given two styles $s_1$ and $s_2$, for example, $f(s_1, w_0)$ is one spoken word in style $s_1$. Then $f(s_2, w_0)$ should be closer to $f(s_1, w_0)$ than any other word in style $s_2$. We can formulate this property as follows:

$$\mathrm{Error}\big(f(s_1, w_0), f(s_2, w_0)\big) \le \mathrm{Error}\big(f(s_1, w_0), f(s_2, w)\big), \quad \forall w \in W, \text{ where } s_1, s_2 \in S.$$

This can be further presented in equation form:

$$f(s_2, w_0) = \arg\min_{x \in M} \mathrm{Error}\big(x, f(s_1, w_0)\big), \quad \text{where } M = \{ f(s_2, w) : w \in W \}. \tag{1}$$

3.2 AUTOENCODERS FOR STYLE TRANSFER

We now provide a statistical motivation for the ability of exemplar autoencoders to preserve content while transferring style.
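The property in Eq. 1, on which this motivation builds, can be illustrated with a toy generator. The Python sketch below assumes NumPy and a hypothetical additive generator $f(s, w) = c_w + d_s$, with well-separated content vectors $c_w$ and small style offsets $d_s$; it is a simplification chosen for illustration, not the paper's model of speech. It checks that the nearest point in the target-style set $M = \{f(s_2, w) : w \in W\}$ to $f(s_1, w_0)$ is the same word $w_0$. The helper names and all sizes are hypothetical.

```python
import numpy as np


def make_toy_speech(n_words=50, n_styles=5, dim=20, content_scale=10.0,
                    style_scale=0.5, seed=0):
    """Hypothetical additive generator f(s, w) = c_w + d_s (a toy, not the paper's f)."""
    rng = np.random.default_rng(seed)
    content = content_scale * rng.standard_normal((n_words, dim))  # c_w: well separated
    style = style_scale * rng.standard_normal((n_styles, dim))     # d_s: small offsets

    def f(s, w):
        return content[w] + style[s]

    return f, n_words, n_styles


def nearest_word_in_style(f, x, s_target, n_words):
    """Word w minimizing the squared error between x and f(s_target, w), as in Eq. 1."""
    errors = [np.sum((f(s_target, w) - x) ** 2) for w in range(n_words)]
    return int(np.argmin(errors))


f, n_words, n_styles = make_toy_speech()
# Check Eq. 1 for every (source style s1, target style s2, word w0) triple.
hits = [
    nearest_word_in_style(f, f(s1, w0), s2, n_words) == w0
    for s1 in range(n_styles)
    for s2 in range(n_styles)
    for w0 in range(n_words)
]
print(f"Eq. 1 holds for {np.mean(hits):.0%} of {len(hits)} triples")
```

Raising `style_scale` until the style offsets rival the spacing between content vectors eventually breaks the argmin; that is the regime in which Eq. 1, and hence the approach, would not be expected to hold.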
Given training examples $\{x_i\}$, one learns an encoder $E$ and decoder $D$ so as to minimize reconstruction error:

$$\min_{E, D} \sum_i \mathrm{Error}\big(x_i, D(E(x_i))\big).$$

In the linear case (where $E(x) = Ax$, $D(x) = Bx$, and Error is the squared L2 distance), the optimal weights are given by the eigenvectors that span the input subspace of the data (Baldi & Hornik, 1989). Given sufficiently small bottlenecks, linear autoencoders project out-of-sample points onto the input subspace so as to minimize the reconstruction error of the output (Fig. 3-a). The weights of the autoencoder (eigenvectors) capture dataset-specific style common to all samples from that dataset, while the bottleneck activations (projection coefficients) capture sample-specific content (properties that capture individual differences between samples). We empirically verify that a similar property holds for nonlinear autoencoders (Appendix E): given sufficiently small bottlenecks, they approximately project out-of-sample data onto the nonlinear manifold $M$ spanned by the training set:

$$D(E(\hat{x})) \approx \arg\min_{m \in M} \mathrm{Error}(m, \hat{x}), \quad \text{where } M = \{ D(E(x)) \mid x \in \mathbb{R}^d \}, \tag{2}$$

where the approximation is exact for the linear case. In the nonlinear case, let $M = \{ f(s_2, w) : w \in W \}$ be the manifold spanning a particular style $s_2$. Let $\hat{x} = f(s_1, w_0)$ be a particular word $w_0$ spoken in any other style $s_1$. The output $D(E(\hat{x}))$ will be a point on the target manifold $M$ (roughly) closest to $\hat{x}$. From Eq. 1 and Fig. 3, the closest point on the target manifold tends to be the same word spoken in the target style:

$$D(E(\hat{x})) \approx \arg\min_{t \in M} \mathrm{Error}(t, \hat{x}) = \arg\min_{t \in M} \mathrm{Error}\big(t, f(s_1, w_0)\big) \approx f(s_2, w_0). \tag{3}$$

Note that nothing in our analysis is language-specific. In principle, it holds for other languages such as Mandarin and Hindi. We posit that the "content" $w_i$ can be represented by phonemes that can be shared by different languages. We demonstrate the possibility of audiovisual translation across different languages, i.e., one can drive a native English speaker to speak in Chinese or Hindi.

¹ The vocal tract is the cavity in human beings where the sound produced at the sound source is filtered. The shape of the vocal tract is mainly determined by the positions and shapes of the tongue, throat, and mouth.

[Figure 4 diagram: (a) Voice-Conversion; (b) Audio-Video Synthesis. Components include an STFT front-end, a content encoder, an audio decoder, and a video decoder.]

Figure 4: Network Architecture: (a) The voice-conversion network consists of a content encoder and an audio decoder (denoted in green). This network serves as a person- and attributes-specific autoencoder at training time, but is able to convert speech from anyone into personalized audio for the target speaker at inference time. (b) The audio-video synthesis network incorporates a video decoder (denoted in yellow) into the voice-conversion system. The video decoder also uses the content encoder as its front-end, but takes the unsampled content code as input due to time alignment. The video architecture is mainly borrowed from StackGAN (Radford et al., 2016; Zhang et al., 2017), which synthesizes the video through 2 resolution-based stages.

3.3 STYLISTIC AUDIOVISUAL (AV) SYNTHESIS

We now operationalize our previous analysis into an approach for stylistic AV synthesis, i.e., we learn AV representations with autoencoders tailored to particular target speech.
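Returning briefly to the linear case of Eqs. 2 and 3 from Sec. 3.2, a minimal NumPy sketch makes the projection argument concrete. It is an illustration under stated assumptions, not the authors' implementation: each style $s$ is modeled as a hypothetical basis $B_s$ and each word as a coefficient vector $a_w$, so $f(s, w) = B_s a_w$; a rank-$k$ linear autoencoder is fit to target-style examples via SVD (the PCA solution, left uncentered because $E(x) = Ax$ has no bias term); and each word from a nearby source style is passed through it. The helper `fit_linear_autoencoder`, the data, and all sizes are hypothetical.

```python
import numpy as np


def fit_linear_autoencoder(X, k):
    """Rank-k linear autoencoder E(x) = A x, D(z) = B z fit to the rows of X.

    Under squared error, one optimal choice is A = V_k^T and B = V_k, where V_k
    holds the top-k right singular vectors of X (no centering: E has no bias).
    """
    _, _, vt = np.linalg.svd(X, full_matrices=False)
    V_k = vt[:k].T                       # (dim, k) weights shared by the whole dataset

    def encode(x):
        return V_k.T @ x                 # bottleneck activations (projection coefficients)

    def decode(z):
        return V_k @ z

    return encode, decode, V_k


# Hypothetical toy styles: f(s, w) = B_s a_w with a nearby source style.
rng = np.random.default_rng(0)
dim, k, n_words = 40, 5, 30
a = rng.standard_normal((n_words, k))                      # content codes a_w
B_target = rng.standard_normal((dim, k))                   # target style s2
B_source = B_target + 0.1 * rng.standard_normal((dim, k))  # source style s1

X_target = a @ B_target.T          # training set: every word spoken in the target style
encode, decode, V_k = fit_linear_autoencoder(X_target, k)

recovered = 0
for w0 in range(n_words):
    x_hat = B_source @ a[w0]       # out-of-sample input: word w0 in style s1
    out = decode(encode(x_hat))    # D(E(x_hat))
    # Eq. 2, linear case: the output equals the least-squares projection onto span(V_k).
    coef, *_ = np.linalg.lstsq(V_k, x_hat, rcond=None)
    assert np.allclose(out, V_k @ coef)
    # f(s2, w0) lies in the subspace, so projecting never moves x_hat farther from it.
    target = B_target @ a[w0]
    assert np.linalg.norm(out - target) <= np.linalg.norm(x_hat - target) + 1e-9
    # Eq. 3: the nearest target-style training example is usually the same word.
    nearest = int(np.argmin(np.linalg.norm(X_target - out, axis=1)))
    recovered += nearest == w0

print(f"recovered the input word for {recovered}/{n_words} inputs")
```

In this toy, the weights $V_k$ carry the target style shared by every training sample while the $k$ bottleneck coefficients carry the word, mirroring the style/content split described in Sec. 3.2. Word recovery is only approximate, just as Eq. 3 is.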
To enable these representations to be driven by the audio input of any user, we learn in a manner that ensures the bottleneck activations capture structured linguistic "word" content: we pre-train an audio-only autoencoder, and then learn a video decoder while fine-tuning the audio component. This adaptation allows us to train AV models for a target identity with as little as 3 minutes of data (in contrast to the 14 hours used in Suwajanakorn et al. (2017)).

Audio Autoencoder: Given the target audio stream of interest $x$, we first convert it to a mel spectrogram $m = F(x)$ using a short-time Fourier transform (Oppenheim et al., 1996). We train an encoder $E$ and decoder $D$ that reconstruct mel spectrograms $\tilde{m}$, and finally use a WaveNet vocoder $V$ (Oord et al., 2016) to convert $\tilde{m}$ back to speech $\tilde{x}$. All components (the encoder $E$, decoder $D$, and vocoder $V$) are trained with a joint reconstruction loss in both frequency and audio space:

$$\mathrm{Error}_{\mathrm{Audio}}(x, \tilde{x}) = \mathbb{E}\,\lVert m - \tilde{m} \rVert_1 + \mathcal{L}_{\mathrm{WaveNet}}(x, \tilde{x}), \tag{4}$$

where $\mathcal{L}_{\mathrm{WaveNet}}$ is the standard cross-entropy loss used to train WaveNet (Oord et al., 2016). Fig. 4 (top row) summarizes our network design for audio-only autoencoders. Additional training parameters are provided in Appendix C.

| Method (VCTK; Veaux et al., 2016) | Zero-Shot | Extra Data | SCA (%) ↑ | MCD ↓ |
|---|---|---|---|---|
| StarGAN-VC (Kaneko et al., 2019b) | ✗ | ✓ | 69.5 | 582.1 |
| VQ-VAE (van den Oord et al., 2017) | ✗ | ✓ | 69.9 | 663.4 |
| Chou et al. (2018) | ✗ | ✓ | 98.9 | 406.2 |
| Blow (Serrà et al., 2019) | ✗ | ✓ | 87.4 | 444.3 |
| Chou et al. (2019) | ✓ | ✓ | 57.0 | 491.1 |
| Auto-VC (Qian et al., 2019) | ✓ | ✓ | 98.5 | 408.8 |
| Ours | ✓ | ✗ | 99.6 | 420.3 |

Table 2: Objective Evaluation for Audio Translation: We evaluate on VCTK, which provides paired data. The speaker-classification accuracy (SCA) criterion enables us to study the naturalness of generated audio samples and their similarity to the target speaker; higher is better. The Mel-Cepstral distortion (MCD) enables us to study the preservation of the content; lower is better. Our approach achieves performance competitive with the prior state of the art without requiring additional data from other speakers, while still being zero-shot.

Video decoder: Given the trained audio autoencoder $E(x)$ and a target video $v$, we now train a video decoder that reconstructs the video $\tilde{v}$ from $E(x)$ (see Fig. 4). Specifically, we train using a joint loss:

$$\mathrm{Error}_{\mathrm{AV}}(x, v, \tilde{x}, \tilde{v}) = \mathrm{Error}_{\mathrm{Audio}}(x, \tilde{x}) + \mathbb{E}\,\lVert v - \tilde{v} \rVert_1 + \mathcal{L}_{\mathrm{Adv}}(v, \tilde{v}), \tag{5}$$

where $\mathcal{L}_{\mathrm{Adv}}$ is an adversarial loss (Goodfellow et al., 2014) used to improve video quality. We found it helpful to simultaneously fine-tune the audio autoencoder.

4 EXPERIMENTS

We now quantitatively evaluate the proposed method for audio conversion in Sec. 4.1 and audio-video synthesis in Sec. 4.2. We use existing datasets for a fair evaluation with prior art. We also introduce a new dataset (for evaluation purposes alone) that consists of recordings in non-studio environments. Many of our motivating applications, such as education and assistive technology, require processing unstructured real-world data collected outside a studio.

4.1 AUDIO TRANSLATION

Datasets: We use the publicly available VCTK dataset (Veaux et al., 2016), which contains 44 hours of utterances from 109 native speakers of English with various accents. Each speaker reads a different set of sentences, except for two paragraphs. While the conversion setting is unpaired, there exists a small amount of paired data that enables us to conduct objective evaluation. We also introduce the new in-the-wild CelebAudio dataset for audio translation to validate both the effectiveness and the robustness of various approaches.
This dataset consists of speeches (averaging 30 minutes, though some are as short as 3 minutes) by various public figures, collected from YouTube and including both native and non-native speakers. The content of these speeches is entirely different from one another, thereby forcing future methods to be unpaired and unsupervised. There are 20 different identities. We provide more details about the CelebAudio dataset in Appendix D.

Quantitative Evaluation: We rigorously evaluate our output with a variety of metrics. When ground-truth paired examples are available for testing (VCTK), we follow past work (Kaneko et al., 2019a;b) and compute Mel-Cepstral distortion (MCD), which measures the squared distance between the synthesized audio and the ground truth in frequency space. We also use speaker-classification accuracy (SCA), which is defined as the percentage of times a translation is correctly classified by a speaker classifier (trained with Serrà et al. (2019)).

| CelebAudio | Extra Data | Naturalness MOS ↑ | Naturalness Pref (%) ↑ | VS MOS ↑ | VS Pref (%) ↑ | CC MOS ↑ | CC Pref (%) ↑ | Geometric Mean of VS-CC ↑ |
|---|---|---|---|---|---|---|---|---|
| Auto-VC (Qian et al., 2019), off-the-shelf | - | 1.21 | 0 | 1.31 | 0 | 1.60 | 0 | 1.45 |
| Auto-VC, fine-tuned | ✓ | 2.35 | 13.3 | 1.97 | 2.0 | 4.28 | 48.0 | 2.90 |
| Auto-VC, scratch | ✗ | 2.28 | 6.7 | 1.90 | 2.0 | 4.05 | 18.0 | 2.77 |
| Ours | ✗ | 2.78 | 66.7 | 3.32 | 94.0 | 4.00 | 22.0 | 3.64 |

Table 3: Human Studies for Audio: We conduct extensive human studies on Amazon Mechanical Turk. We report Mean Opinion Score (MOS) and user preference (percentage of time that a method ranked best) for naturalness, target voice similarity (VS), source content consistency (CC), and the geometric mean of VS-CC (since either can be trivially maximized by reporting a target/input sample). Higher is better; MOS is on a scale of 1-5. The off-the-shelf Auto-VC model struggles to generalize to CelebAudio, indicating the difficulty of in-the-wild zero-shot conversion. Fine-tuning on the CelebAudio dataset significantly improves performance. When Auto-VC is restricted to the same training data as our model (scratch), performance drops by a small but noticeable amount. Our results strongly outperform Auto-VC on VS-CC, suggesting that exemplar autoencoders are able to generate speech that maintains source content consistency while being similar to the target voice style.

Human Study Evaluation: We conduct extensive human studies on Amazon Mechanical Turk (AMT) to analyze the generated audio samples from CelebAudio. The study assesses the naturalness of the generated data, the voice similarity (VS) to the target speaker, the content/word consistency (CC) to the input, and the geometric mean of VS-CC (since either can be trivially maximized by reporting a target/input speech sample). Each criterion is rated on a scale from 1 to 5. We select 10 CelebAudio speakers as targets and randomly choose 5 utterances from the other speakers as inputs. We then produce 5 × 10 = 50 translations, each of which is evaluated by 10 AMT users.

Baselines: We contrast our method with several existing voice conversion systems (Chou et al., 2018; Kaneko et al., 2019b; van den Oord et al., 2017; Qian et al., 2019; Serrà et al., 2019). Because StarGAN-VC (Kaneko et al., 2019b), VQ-VAE (van den Oord et al., 2017), Chou et al. (2018), and Blow (Serrà et al., 2019) do not claim zero-shot voice conversion, we train these models on 20 speakers from VCTK and evaluate voice conversion on those speakers. As shown in Table 2, we observe competitive performance without using any extra data.

Auto-VC (Qian et al., 2019): Auto-VC is a zero-shot translator. We observe competitive performance on the VCTK dataset in Table 2, and study it extensively with human studies in Table 3. First, we use an off-the-shelf model for evaluation.
We observe poor performance, both quantitatively and qualitatively. We then fine-tune the existing model on audio data from 20 CelebAudio speakers. We observe a significant performance improvement in Auto-VC when restricting it to the same set of examples as ours. We also trained the Auto-VC model from scratch, for an apples-to-apples comparison with our method. The performance on all three criteria dropped with less data. On the other hand, our approach generates significantly better audio, which sounds more like the target speaker while still preserving the content.

4.2 AUDIO-VIDEO SYNTHESIS

Dataset: In addition to augmenting CelebAudio with video clips, we also evaluate on VoxCeleb (Chung et al., 2018), an audio-visual dataset of short celebrity interview clips from YouTube.

Baselines: We compare to Speech2Vid (Chung et al., 2017), using their publicly available code. This approach requires the face region to be registered before feeding it to a pre-trained model for facial synthesis. We find various failure cases where the human face from in-the-wild videos of the VoxCeleb dataset cannot be correctly registered. Additionally, Speech2Vid generates the video of a cropped face region by default. We composite the output on a still background to make it more compelling and comparable to ours. Finally, we also compare to the recent work of LipGAN (KR et al., 2019). This approach synthesizes the region around the lips and pastes this generated face crop into the given video. We observe that the paste is not seamless and leads to artifacts, especially when working with in-the-wild web videos. We show comparisons with both Speech2Vid (Chung et al., 2017) and LipGAN (KR et al., 2019) in Figure 5. The artifacts from both approaches are visible on in-the-wild video examples from the CelebAudio and VoxCeleb datasets. However, our approach generates the complete face without these artifacts and captures articulated expressions.

Figure 5 (panels for the words "end" and "want"; rows: Speech2Vid, LipGAN, Ours; with zoom-in views): Visual Comparisons of Audio-to-Video Synthesis: We contrast our approach with Speech2Vid (Chung et al., 2017) (first row) and LipGAN (KR et al., 2019) (second row) using their publicly available code. While Speech2Vid synthesizes new sequences by morphing mouth shapes, LipGAN pastes a modified lip region onto the original videos. This leads to artifacts (see zoom-in views in the bottom row) when the morphed mouth shapes are very different from the original or in the case of dynamic facial movements. Our approach (third row), however, generates the full face and does not have these artifacts.

Human Studies: We conducted an A/B test on AMT: we show the user a pair consisting of our video output and the output of another method, and ask the user to select which looks better. Each pair is shown to 5 users. Our approach is preferred 87.2% of the time over Speech2Vid (Chung et al., 2017) and 59.3% of the time over LipGAN (KR et al., 2019).

5 DISCUSSION

In this paper, we propose exemplar autoencoders for unsupervised audiovisual synthesis from speech input. Jointly modeling audio and video enables us to learn a meaningful representation in a data-efficient manner. Our model is able to generalize to in-the-wild web data and diverse forms of speech input, enabling novel applications in entertainment, education, and assistive technology. Our work opens up a rethinking of autoencoders' modeling capacity. Under certain conditions, autoencoders serve as projective operators onto a specific distribution.

In the appendix, we provide a discussion of broader impacts, including potential abuses of such technology.
We explore mitigation strategies, including empirical evidence that forensic classifiers can be used to detect synthesized content from our method.

REFERENCES

Pierre Baldi and Kurt Hornik. Neural networks and principal component analysis: Learning from examples without local minima. Neural Networks, 1989.

Aayush Bansal, Shugao Ma, Deva Ramanan, and Yaser Sheikh. Recycle-GAN: Unsupervised video retargeting. In ECCV, 2018.

Floraine Berthouzoz, Wilmot Li, and Maneesh Agrawala. Tools for placing cuts and transitions in interview video. ACM Trans. Graph., 2012.

Christopher M. Bishop. Pattern Recognition and Machine Learning. Springer, 2006.

Christoph Bregler, Michele Covell, and Malcolm Slaney. Video Rewrite: Driving visual speech with audio. In SIGGRAPH, 1997.

Li-Wei Chen, Hung-Yi Lee, and Yu Tsao. Generative adversarial networks for unpaired voice transformation on impaired speech. In Interspeech, 2019.

Ling-Hui Chen, Zhen-Hua Ling, Li-Juan Liu, and Li-Rong Dai. Voice conversion using deep neural networks with layer-wise generative training. In TASLP, 2014.

Ju-Chieh Chou, Cheng-Chieh Yeh, Hung-Yi Lee, and Lin-Shan Lee. Multi-target Voice Conversion without Parallel Data by Adversarially Learning Disentangled Audio Representations. In Interspeech, 2018.

Ju-chieh Chou, Cheng-chieh Yeh, and Hung-yi Lee. One-shot voice conversion by separating speaker and content representations with instance normalization. In Interspeech, 2019.

Joon Son Chung, Amir Jamaludin, and Andrew Zisserman. You said that? In BMVC, 2017.

Joon Son Chung, Arsha Nagrani, and Andrew Zisserman. VoxCeleb2: Deep speaker recognition. In Interspeech, 2018.

Bo Fan, Lijuan Wang, Frank K Soong, and Lei Xie. Photo-real talking head with deep bidirectional LSTM. In ICASSP, 2015.

Fuming Fang, Junichi Yamagishi, Isao Echizen, and Jaime Lorenzo-Trueba. High-Quality Nonparallel Voice Conversion Based on Cycle-Consistent Adversarial Network. In ICASSP, 2018.

Ohad Fried, Ayush Tewari, Michael Zollhöfer, Adam Finkelstein, Eli Shechtman, Dan B Goldman, Kyle Genova, Zeyu Jin, Christian Theobalt, and Maneesh Agrawala. Text-based editing of talking-head video. ACM Trans. Graph., 2019.

Ruohan Gao, Rogerio Feris, and Kristen Grauman. Learning to separate object sounds by watching unlabeled video. In ECCV, 2018.

S. Ginosar, A. Bar, G. Kohavi, C. Chan, A. Owens, and J. Malik. Learning individual styles of conversational gesture. In CVPR, 2019.

Ian Goodfellow, Jean Pouget-Abadie, Mehdi Mirza, Bing Xu, David Warde-Farley, Sherjil Ozair, Aaron Courville, and Yoshua Bengio. Generative adversarial nets. In NeurIPS, 2014.

Ian Goodfellow, Aaron Courville, and Yoshua Bengio. Deep Learning. MIT Press Cambridge, 2017.

David Greenwood, Iain Matthews, and Stephen Laycock. Joint learning of facial expression and head pose from speech. In Interspeech, 2018.

Daniel Griffin and Jae Lim. Signal estimation from modified short-time Fourier transform. In ICASSP, 1984.

Sepp Hochreiter and Jürgen Schmidhuber. Long short-term memory. Neural Computation, 1997.

A. J. Hunt and A. W. Black. Unit selection in a concatenative speech synthesis system using a large speech database. In ICASSP, 1996.

Sergey Ioffe and Christian Szegedy. Batch normalization: Accelerating deep network training by reducing internal covariate shift. In ICML, 2015.

Ye Jia, Yu Zhang, Ron Weiss, Quan Wang, Jonathan Shen, Fei Ren, Patrick Nguyen, Ruoming Pang, Ignacio Lopez Moreno, Yonghui Wu, et al. Transfer learning from speaker verification to multispeaker text-to-speech synthesis. In NeurIPS, 2018.

Alexander B Kain, John-Paul Hosom, Xiaochuan Niu, Jan PH Van Santen, Melanie Fried-Oken, and Janice Staehely. Improving the intelligibility of dysarthric speech. Speech Communication, 2007.

Hirokazu Kameoka, Takuhiro Kaneko, Kou Tanaka, and Nobukatsu Hojo. ACVAE-VC: Non-parallel many-to-many voice conversion with auxiliary classifier variational autoencoder. arXiv preprint arXiv:1808.05092, 2018.

Takuhiro Kaneko and Hirokazu Kameoka. Parallel-data-free voice conversion using cycle-consistent adversarial networks. In EUSIPCO, 2018.

Takuhiro Kaneko, Hirokazu Kameoka, Kou Tanaka, and Nobukatsu Hojo. CycleGAN-VC2: Improved CycleGAN-based non-parallel voice conversion. In ICASSP, 2019a.

Takuhiro Kaneko, Hirokazu Kameoka, Kou Tanaka, and Nobukatsu Hojo. StarGAN-VC2: Rethinking conditional methods for StarGAN-based voice conversion. In Interspeech, 2019b.

Evangelos Kazakos, Arsha Nagrani, Andrew Zisserman, and Dima Damen. EPIC-Fusion: Audio-visual temporal binding for egocentric action recognition. In ICCV, 2019.

Hyeongwoo Kim, Pablo Garrido, Ayush Tewari, Weipeng Xu, Justus Thies, Matthias Niessner, Patrick Pérez, Christian Richardt, Michael Zollhöfer, and Christian Theobalt. Deep video portraits. ACM Trans. Graph., 2018.

Diederik P Kingma and Max Welling. Auto-encoding variational Bayes. arXiv preprint arXiv:1312.6114, 2013.

Prajwal KR, Rudrabha Mukhopadhyay, Jerin Philip, Abhishek Jha, Vinay Namboodiri, and CV Jawahar. Towards automatic face-to-face translation. In ACM MM, 2019.

Alex Krizhevsky, Ilya Sutskever, and Geoffrey E Hinton. ImageNet classification with deep convolutional neural networks. In NeurIPS, 2012.

Neeraj Kumar, Srishti Goel, Ankur Narang, and Mujtaba Hasan. Robust one shot audio to video generation. In CVPR Workshop, 2020.

Maxim Maximov, Ismail Elezi, and Laura Leal-Taixé. CIAGAN: Conditional identity anonymization generative adversarial networks. In CVPR, 2020.

Sachit Menon, Alexandru Damian, Shijia Hu, Nikhil Ravi, and Cynthia Rudin. PULSE: Self-supervised photo upsampling via latent space exploration of generative models. In CVPR, 2020.

Seyed Hamidreza Mohammadi and Taehwan Kim. One-shot voice conversion with disentangled representations by leveraging phonetic posteriorgrams. In Interspeech, 2019.

Arsha Nagrani, Samuel Albanie, and Andrew Zisserman. Seeing voices and hearing faces: Cross-modal biometric matching. In CVPR, 2018.

Keigo Nakamura, Tomoki Toda, Hiroshi Saruwatari, and Kiyohiro Shikano. Speaking-aid systems using GMM-based voice conversion for electrolaryngeal speech. Speech Commun., 2012.

Toru Nakashika, Tetsuya Takiguchi, and Yasuo Ariki. High-order sequence modeling using speaker-dependent recurrent temporal restricted Boltzmann machines for voice conversion. In Interspeech, 2014.

Tae-Hyun Oh, Tali Dekel, Changil Kim, Inbar Mosseri, William T. Freeman, Michael Rubinstein, and Wojciech Matusik. Speech2Face: Learning the face behind a voice. In CVPR, 2019.

Aaron van den Oord, Sander Dieleman, Heiga Zen, Karen Simonyan, Oriol Vinyals, Alex Graves, Nal Kalchbrenner, Andrew Senior, and Koray Kavukcuoglu. WaveNet: A generative model for raw audio. arXiv preprint arXiv:1609.03499, 2016.

Alan V. Oppenheim, Alan S. Willsky, and S. Hamid Nawab. Signals & Systems. 1996.

Andrew Owens and Alexei A Efros. Audio-visual scene analysis with self-supervised multisensory features. In ECCV, 2018.

Andrew Owens, Jiajun Wu, Josh H McDermott, William T Freeman, and Antonio Torralba. Ambient sound provides supervision for visual learning. In ECCV, 2016.

Dipjyoti Paul, Yannis Pantazis, and Yannis Stylianou. Non-parallel voice conversion using weighted generative adversarial networks. In Interspeech, 2019.

John F Pitrelli, Raimo Bakis, Ellen M Eide, Raul Fernandez, Wael Hamza, and Michael A Picheny. The IBM expressive text-to-speech synthesis system for American English. In ICASSP, 2006.

Adam Polyak and Lior Wolf. Attention-based WaveNet autoencoder for universal voice conversion. In ICASSP, 2019.

Kaizhi Qian, Yang Zhang, Shiyu Chang, Xuesong Yang, and Mark Hasegawa-Johnson. AutoVC: Zero-shot voice style transfer with only autoencoder loss. In ICML, 2019.

Alec Radford, Luke Metz, and Soumith Chintala. Unsupervised representation learning with deep convolutional generative adversarial networks. In ICLR, 2016.

Andreas Rössler, Davide Cozzolino, Luisa Verdoliva, Christian Riess, Justus Thies, and Matthias Nießner. FaceForensics++: Learning to detect manipulated facial images. In ICCV, 2019.

Arda Senocak, Tae-Hyun Oh, Junsik Kim, Ming-Hsuan Yang, and In So Kweon. Learning to localize sound source in visual scenes. In CVPR, 2018.

Joan Serrà, Santiago Pascual, and Carlos Segura. Blow: a single-scale hyperconditioned flow for non-parallel raw-audio voice conversion. In NeurIPS, 2019.

Jonathan Shen, Ruoming Pang, Ron J Weiss, Mike Schuster, Navdeep Jaitly, Zongheng Yang, Zhifeng Chen, Yu Zhang, Yuxuan Wang, RJ Skerry-Ryan, et al. Natural TTS synthesis by conditioning WaveNet on mel spectrogram predictions. In ICASSP, 2018.

Eli Shlizerman, Lucio Dery, Hayden Schoen, and Ira Kemelmacher-Shlizerman. Audio to body dynamics. In CVPR, 2018.

Gina Marie Stevens and Charles Doyle. Privacy: An overview of federal statutes governing wiretapping and electronic eavesdropping. 2011.

Lifa Sun, Shiyin Kang, Kun Li, and Helen Meng. Voice conversion using deep bidirectional long short-term memory based recurrent neural networks. In ICASSP, 2015.

Ilya Sutskever, Oriol Vinyals, and Quoc V Le. Sequence to sequence learning with neural networks. In NeurIPS, 2014.

Supasorn Suwajanakorn, Steven M. Seitz, and Ira Kemelmacher-Shlizerman. Synthesizing Obama: Learning lip sync from audio. ACM Trans. Graph., 2017.

Sarah Taylor, Taehwan Kim, Yisong Yue, Moshe Mahler, James Krahe, Anastasio Garcia Rodriguez, Jessica Hodgins, and Iain Matthews. A deep learning approach for generalized speech animation. ACM Trans. Graph., 2017.

Justus Thies, Mohamed Elgharib, Ayush Tewari, Christian Theobalt, and Matthias Nießner. Neural voice puppetry: Audio-driven facial reenactment. In ECCV, 2020.

Patrick Lumban Tobing, Yi-Chiao Wu, Tomoki Hayashi, Kazuhiro Kobayashi, and Tomoki Toda. Non-parallel voice conversion with cyclic variational autoencoder. In Interspeech, 2019.

Tomoki Toda, Alan W Black, and Keiichi Tokuda. Voice conversion based on maximum-likelihood estimation of spectral parameter trajectory. In ICASSP, 2007.

Aaron van den Oord, Oriol Vinyals, et al. Neural discrete representation learning. In NeurIPS, 2017.

Christophe Veaux, Junichi Yamagishi, and Kirsten Macdonald. Superseded - CSTR VCTK Corpus: English multi-speaker corpus for CSTR voice cloning toolkit. 2016.

Pascal Vincent, Hugo Larochelle, Yoshua Bengio, and Pierre-Antoine Manzagol. Extracting and composing robust features with denoising autoencoders. In ICML, 2008.

Kevin Wang, Deva Ramanan, and Aayush Bansal. Video exploration via video-specific autoencoders. 2021.

Sheng-Yu Wang, Oliver Wang, Richard Zhang, Andrew Owens, and Alexei A Efros. CNN-generated images are surprisingly easy to spot...for now. In CVPR, 2020.

Yuxuan Wang, RJ Skerry-Ryan, Daisy Stanton, Yonghui Wu, Ron J Weiss, Navdeep Jaitly, Zongheng Yang, Ying Xiao, Zhifeng Chen, Samy Bengio, et al. Tacotron: Towards end-to-end speech synthesis. In Interspeech, 2017.

Hani C Yehia, Takaaki Kuratate, and Eric Vatikiotis-Bateson. Linking facial animation, head motion and speech acoustics. Journal of Phonetics, 2002.

Heiga Zen, Keiichi Tokuda, and Alan W Black. Statistical parametric speech synthesis. Speech Communication, 2009.

Han Zhang, Tao Xu, and Hongsheng Li. StackGAN: Text to photo-realistic image synthesis with stacked generative adversarial networks. In ICCV, 2017.

Shengkui Zhao, Trung Hieu Nguyen, Hao Wang, and Bin Ma. Fast learning for non-parallel many-to-many voice conversion with residual star generative adversarial networks. In Interspeech, 2019.

Hang Zhou, Yu Liu, Ziwei Liu, Ping Luo, and Xiaogang Wang. Talking face generation by adversarially disentangled audio-visual representation. In AAAI, 2019.

Hao Zhu, Aihua Zheng, Huaibo Huang, and Ran He. High-resolution talking face generation via mutual information approximation. arXiv preprint, 2018.

Jun-Yan Zhu, Taesung Park, Phillip Isola, and Alexei A Efros. Unpaired image-to-image translation using cycle-consistent adversarial networks. In ICCV, 2017.

A BROADER IMPACTS

Our work falls in line with a body of work on content generation that retargets video content, often considered in the context of "facial puppeteering". While there exist many applications in entertainment, there is also serious potential for abuse. Past work in this space has included broader impact statements (Fried et al., 2019; Kim et al., 2018), which we build upon here.

Our Setup: Compared to prior work, unique aspects of our setup are (a) the choice of input and output modalities and (b) the requirements on training data. In terms of (a), our models take audio as input, and produce audiovisual (AV) output that captures the personalized style of the target individual but the linguistic content (words) of the source input. This factorization of style and content across the source and target audio is a key technical aspect of our work. While past work has discussed broader impacts of visual image/video generation, there is less discussion on responsible practices for audio editing. We begin this discussion below. In terms of (b), our approach is unique in that we require only a few minutes of training on target audio+video, and do not require training on large populations of individuals.
This has implications for generalizability and ease of use for non-experts, increasing the space of viable target applications. Our target applications include entertainment/education and assistive technologies, each of which is discussed below. We conclude with a discussion of potential abuses and strategies for mitigation.

Assistive Technology: An important application of voice synthesis is voice generation for the speaking impaired. Here, generating speech in an individual's personalized style can be profoundly important for maintaining a sense of identity². We demonstrate applications in this direction in our summary videos. We have also begun collaborations with clinicians to explore personalized and stylistic speech outputs from physical devices (such as an electrolarynx) that directly sense vocal utterances. Currently, there are considerable data and privacy considerations in acquiring such sensitive patient data. We believe that illustrating results on well-known individuals (such as celebrities) can be used to build trust with clinical collaborators and potential patient volunteers.

Entertainment/Education: Creating high-quality AV content is a labor-intensive task, often requiring days or weeks to create minutes of content for production houses. Our work has attracted the attention of production houses that are interested in creating documentaries or narrations of events by historical figures in their own voice (Winston Churchill, JF Kennedy, Nelson Mandela, Martin Luther King Jr). Because our approach can be trained on small amounts of target footage, it can be used for personalized storytelling (itself a potentially creative and therapeutic endeavour) as well as in educational settings that have access to fewer computational/artistic resources but more immediate classroom feedback/retraining.
Abuse: It is crucial to acknowledge that audiovisual retargeting can be used maliciously, including spreading false information for propaganda purposes or producing pornographic content. Addressing these abuses requires not only technical solutions, but also discussions with social scientists, policy makers, and ethicists to help delineate the boundaries of defamation, privacy, copyright, and fair use. We attempt to begin this important discussion here. First, inspired by Fried et al. (2019), we introduce a recommended policy for AV content creation using our method. In our setting, retargeting involves two pieces of media: the source individual providing an audio signal input, and the target individual whose audiovisual clip will be edited to match the words of the source. Potentials for abuse include plagiarism of the source (e.g., representing someone else's words as your own), and misrepresentation / copyright violations of the target. Hence, we advocate a policy of always citing the source content and always obtaining permission from the target individual. Possible exceptions to the policy, if any, may fall into the category of fair use³, which typically includes education, commentary, or parody (e.g., retargeting a zebra texture on Putin (Zhu et al., 2017)). In all cases, retargeted content should acknowledge itself as edited, either by clearly presenting itself as parody or via a watermark. Importantly, there may be violations of this policy. Hence, a major challenge will be identifying such violations and mitigating the harms they cause, as discussed further below.
² https://news.northeastern.edu/2019/11/18/personalized-text-to-speech-voices-help-people-with-speech-disabilities-maintain-identity-and-social-connection/

³ https://fairuse.stanford.edu/overview/fair-use/what-is-fair-use/

Audiovisual Forensics: We describe three strategies that attempt to identify misuse and the harms caused by such misuse. We stress that these are not exhaustive: (a) identifying "fake" content, (b) data anonymization, and (c) technology awareness. (a) As approaches for editing AV content mature, society needs analogous approaches for detecting such "fake" content. Contemporary forensic detectors tend to be data-driven, themselves trained to distinguish original versus synthesized media via a classification task. Such forensic real-vs-fake classifiers exist for both images (Maximov et al., 2020; Rössler et al., 2019; Wang et al., 2020) and audio⁴,⁵. Because access to code for generating "fake" training examples will be crucial for learning to identify fake content, we commit to making our code freely available. Appendix B provides an extensive analysis of audio detection of Exemplar Autoencoder fakes. (b) Another strategy for mitigating abuse is controlling access to private media data. Methods for image anonymization, via face detection and blurring, are widespread and a crucial part of contemporary data collection, even mandated via the EU's General Data Protection Regulation (GDPR). In the US, audio restrictions are even more severe because of federal wiretapping regulations that prevent recordings of oral communications without prior consent (Stevens & Doyle, 2011). Recent approaches for image anonymization make use of generative models that "de-identify" data without degradative blurring by retargeting each face to a generic identity (e.g., making everyone in a dataset look like PersonA) (Maximov et al., 2020).
Our audio retargeting approach can potentially be used for audio de-identification by making everyone in a recording sound like PersonA. (c) Finally, we point out that audio recordings are currently used in critical societal settings, including legal evidence and biometric voice recognition (e.g., accessing one's bank account via automated recognition of speech over the phone (Stevens & Doyle, 2011)). Our results suggest that such use cases need to be re-evaluated and thoroughly tested in the context of stylistic audio synthesis. In other words, it is crucial for society to understand what information can be reliably deduced from audio, and our approach can be used to empirically explore this question.

Training datasets: Finally, we point out a unique property of our technical approach that differs from prior approaches for audio and video content generation. Our exemplar approach reduces the reliance on population-scale training datasets. Facial synthesis models trained on existing large-scale datasets, which may be dominated by English-speaking celebrities, may produce higher accuracy on sub-populations whose skin tones, genders, and languages are over-represented in the training dataset (Menon et al., 2020). Exemplar-based learning may exhibit different properties because models are trained on the target exemplar individual. That said, we do find that pre-training on a single individual (but not a population) can speed up convergence of learning on the target individual. Because of the reduced dependency on population-scale training datasets (which may be dominated by English), exemplar models may generalize better across dialects and languages underrepresented in such datasets. We also present results for stylized multilingual translations (retargeting Oliver to speak in Mandarin and Hindi) without ever training on any Mandarin or Hindi speech.
B FORENSIC STUDY

In the previous section, we outlined both positive and negative outcomes of our research. Compared to prior art, most of the novel outcomes arise from using audio as an additional modality. As such, in this section we conduct a study which illustrates that Exemplar Autoencoder fakes can be detected with high accuracy by a forensic classifier, particularly when it is trained on such fakes. We hope our study spurs additional forensic research on the detection of manipulated audiovisual content.

Speaker-Agnostic Discriminator: We begin by training a real/fake audio classifier on 6 identities from CelebAudio. The model structure is the same as in Serrà et al. (2019). The training dataset contains (1) real speech of 6 identities: John F Kennedy, Alexei Efros, Nelson Mandela, Oprah Winfrey, Bill Clinton, and Takeo Kanade, each with 200 sentences; and (2) fake speech, generated by converting each sentence in (1) into the voice of a different speaker among those 6 identities, for a total of 1200 generated sentences. We test performance under 2 scenarios: (1) speakers within the training set: John F Kennedy, Alexei Efros, Nelson Mandela, Oprah Winfrey, Bill Clinton, and Takeo Kanade; and (2) speakers out of the training set: Barack Obama and Theresa May. Each identity in the test set contains 100 real sentences and 100 fake ones.
We ensure test speeches are disjoint from training speeches, even for the same speaker. Table 4 shows that the classifier performs very well at detecting fakes of within-set speakers, and is able to provide a reasonable reference for out-of-sample speakers.

Speaker-Specific Discriminator: We restrict our training set to one identity for specific fake detection. We train 4 exemplar autoencoders on 4 different speeches of Barack Obama to obtain different styles of the same person. The specific fake audio detector is trained on only 1 style of Obama, and is tested on all 4 styles. The training set contains 600 real and 600 fake sentences. Each style in the testing set contains 150 real and 150 fake sentences. Table 4 shows that our classifier provides reliable predictions on fake speeches even on out-of-set styles.

| Discriminator | Test set | All (%) | Real (%) | Fake (%) |
|---|---|---|---|---|
| Speaker-agnostic | Speaker within training set | 99.0 | 98.3 | 99.7 |
| Speaker-agnostic | Speaker out of training set | 86.8 | 99.7 | 74.0 |
| Speaker-specific | Style within training set | 99.7 | 99.3 | 100 |
| Speaker-specific | Style out of training set | 99.6 | 99.2 | 100 |

Table 4: Fake Audio Discriminator: (a) The speaker-agnostic discriminator detects fake audio while being agnostic of the speaker. We train a real/fake audio classifier on 6 identities from CelebAudio, and test it on both within-set and out-of-set speakers. The training dataset contains (1) real speech of 6 identities: John F Kennedy, Alexei Efros, Nelson Mandela, Oprah Winfrey, Bill Clinton, and Takeo Kanade, each with 200 sentences; and (2) fake speech, generated by converting each sentence in (1) into a different speaker among those 6 identities. The testing set contains (1) speakers within the training set and (2) speakers out of the training set: Barack Obama and Theresa May. Each identity in the testing set contains 100 real sentences and 100 fake ones. For within-set speakers, results show our model can predict with a very low error rate (about 1%). For out-of-set speakers, our model can still classify real speeches very well, and provides a reasonable prediction for fake detection. (b) The speaker-specific discriminator detects fake audio of a specific speaker. We train a real/fake audio classifier on a specific style of Barack Obama, and test it on 4 styles of Obama (taken from speeches spanning different ambient environments and stylistic deliveries, including presidential press conferences and university commencement speeches). The training set contains 1200 sentences evenly split between real and fake. Each style in the testing set contains 300 sentences, evenly split as well. Results show our classifier provides reliable predictions on fake speeches even on out-of-sample styles.

⁴ https://www.blog.google/outreach-initiatives/google-news-initiative/advancing-research-fake-audio-detection/

⁵ https://www.asvspoof.org/

C IMPLEMENTATION DETAILS

We provide the implementation details of the Exemplar Autoencoders used in this work.

C.1 AUDIO CONVERSION

STFT: The speech data is sampled at 16 kHz. We clip the training speech into clips of 1.6 s in length, which correspond to 25,600-dimensional vectors. We then perform an STFT (Oppenheim et al., 1996) on the raw audio signal with a window size of 800, a hop size of 200, and 80 mel channels. The output of the STFT is a complex matrix of size 80 × 128. We represent the complex matrix in polar form (magnitude and phase), and only keep the magnitude for the next steps.

Encoder: The input to the encoder is the magnitude of the 80 × 128 mel-spectrogram, which is represented as a 1D 80-channel signal (shown in Figure 4). This input is fed forward through three 1D convolutional layers with a kernel size of 5, each followed by batch normalization (Ioffe & Szegedy, 2015) and ReLU activation (Krizhevsky et al., 2012). These convolutions have 512 channels.
The stride is one, so there is no temporal down-sampling up to this step. The output is then fed into two bidirectional LSTM layers (Hochreiter & Schmidhuber, 1997) with both forward and backward cell dimensions of 32. We then down-sample the forward and backward paths differently, by a factor of 32, following Qian et al. (2019). The resulting content embedding is a matrix of size 64 × 4.

Audio Decoder: The content embedding is up-sampled to the original time resolution $T$. The up-sampled embedding is sequentially input to a 512-channel LSTM layer and three 512-channel 1D convolutional layers with a kernel size of 5. Each step is accompanied by batch normalization and ReLU activation. Finally, the output is fed into two 1024-channel LSTM layers and a fully connected layer that projects it into 80 channels. The projection output is regarded as the generated magnitude of the mel-spectrogram, $\tilde{m}$.

Vocoder: We use WaveNet (van den Oord et al., 2016) as the vocoder; it acts like an inverse Fourier transform, but uses only frequency magnitudes. It generates the speech signal $\tilde{x}$ from the reconstructed mel-spectrogram magnitude $\tilde{m}$.

Training Details: Our model is trained with a learning rate of 0.001 and a batch size of 8. Training a model from scratch requires about 30 minutes of the target speaker's speech data and around 10k iterations to converge. Although our main structure is straightforward, the vocoder is usually a large and complicated network, which needs another 50k iterations to train. However, transfer learning can reduce the number of iterations and the amount of data needed for training. When fine-tuning a new speaker's autoencoder from a pre-trained model, we only need about 3 minutes of speech from the new speaker. The entire model, including the vocoder, converges in around 10k iterations.

C.2 VIDEO SYNTHESIS

Network Architecture: We keep the voice-conversion framework unchanged and enhance it with an additional audio-to-video decoder. In the voice-conversion network, we have a content encoder that extracts a content embedding from speech, and an audio decoder that generates audio output from that embedding. To include video synthesis, we add a video decoder that also takes the content embedding as input, but generates video output instead. As shown in Figure 4 (bottom row), we then have an audio-to-audio-video pipeline.

Video Decoder: We borrow the architecture of the video decoder from Radford et al. (2016) and Zhang et al. (2017). We adapt this image synthesis network by generating the video frame by frame, as well as by replacing the 2D convolutions with 3D convolutions to enhance temporal coherence. The video decoder takes the up-sampled content codes as input. This step is used to align the time resolution with the 20-fps videos in our experiments. We down-sample it with a 1D convolutional layer. This step helps smooth adjacent video frames. The output is then fed into the synthesis network to get the video result $\tilde{v}$.

Training Details: We train an audiovisual model based on a pretrained audio model, with a learning rate of 0.001 and a batch size of 8. When fine-tuning from a pre-trained model, the overall model converges in around 3k iterations.

D CELEBAUDIO DATASET

We introduce a new in-the-wild CelebAudio dataset for audio translation. Unlike well-curated speech corpora such as VCTK, CelebAudio is collected from the Internet, and thus contains slight noise such as applause and cheers. This is helpful for validating the effectiveness and robustness of various voice-conversion methods. The distribution of speech length is shown in Figure 6. We select a subset of 20 identities for evaluation in our work.
The list of 20 identities is:
• Native English Speakers: Alan Kay, Carl Sagan, Claude Shannon, John Oliver, Oprah Winfrey, Richard Hamming, Robert Tiger, David Attenborough, Theresa May, Bill Clinton, John F Kennedy, Barack Obama, Michelle Obama, Neil deGrasse Tyson, Margaret Thatcher, Nelson Mandela, Richard Feynman
• Non-Native English Speakers: Alexei Efros, Takeo Kanade
• Computer-generated Voice: Stephen Hawking

Figure 6: Data Distribution of CelebAudio: CelebAudio contains 100 different speeches. The average speech length is 23 mins. Speech lengths range from 3 mins (Hillary Clinton) to 113 mins (Neil deGrasse Tyson).

E VERIFICATION OF REPROJECTION PROPERTY

In Section 3.2, we describe a reprojection property (Eq. 6) of our exemplar autoencoder: the output for out-of-sample data $\hat{x}$ is its projection onto the manifold $M$ spanned by the training set.

$$D(E(\hat{x})) \approx \arg\min_{m \in M} \mathrm{Error}(m, \hat{x}), \quad \text{where } M = \{D(E(x)) \mid x \in \mathbb{R}^d\}. \tag{6}$$

This is equivalent to two properties: (a) the output lies in the manifold $M$ (Eq. 7); (b) the output is closer to the input $\hat{x}$ than any other point on the manifold $M$ (Eq. 8).

$$D(E(\hat{x})) \in M, \quad \text{where } M = \{D(E(x)) \mid x \in \mathbb{R}^d\}. \tag{7}$$

$$\mathrm{Error}(D(E(\hat{x})), \hat{x}) \le \mathrm{Error}(x, \hat{x}), \quad \forall x \in M. \tag{8}$$

To verify these, we conduct an experiment (Table 5) on the parallel corpus of VCTK (Veaux et al., 2016). We randomly sample tongue-twister words spoken by 2 speakers (A and B) from the VCTK dataset. We train an exemplar autoencoder on A's speech and input B's words ($w_B$) to obtain $w_{B \to A}$. To verify Eq. 7, we train a speaker classifier (similar to Serrà et al. (2019)) on A's and B's speech, and report the likelihood that $w_{B \to A}$ is classified as A's speech in the last column of Table 5. To verify Eq. 8, we report $\mathrm{Error}(D(E(\hat{x})), \hat{x})$ in the second column and the minimum of $\mathrm{Error}(x, \hat{x})$ over A's sampled words in the third column.
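The distance columns of Table 5 amount to simple nearest-neighbor statistics over A's sampled words $s_A$, which serve as a finite stand-in for $M$. The sketch below is hypothetical: the appendix does not state the feature space, so we assume each word is already a fixed-length vector (for example, a time-normalized Mel-spectrogram flattened to one dimension), and the function and argument names are ours.

```python
import numpy as np

def reprojection_check(w_b, w_b_to_a, samples_a):
    """Distances used to probe Eq. 8 for one out-of-sample word.

    w_b       -- feature vector of B's word (the out-of-sample input x_hat)
    w_b_to_a  -- autoencoder output D(E(x_hat)) for that word
    samples_a -- (n, d) array of A's sampled words (finite stand-in for M)
    Assumes all words share one fixed-length feature representation.
    """
    dists = np.linalg.norm(samples_a - w_b, axis=1)  # ||w - w_B|| for w in s_A
    return {
        "output_dist": float(np.linalg.norm(w_b_to_a - w_b)),  # 2nd column
        "min_dist": float(dists.min()),                          # 3rd column
        "mean_dist": float(dists.mean()),                        # 4th column
        "argmin": int(dists.argmin()),                           # 5th column (index)
    }

# Toy 2-D example with made-up vectors, for illustration only.
samples_a = np.array([[0.0, 0.0], [3.0, 4.0], [6.0, 8.0]])
w_b = np.array([3.0, 3.0])
w_b_to_a = np.array([3.0, 3.5])
r = reprojection_check(w_b, w_b_to_a, samples_a)
print(r["output_dist"], r["min_dist"], r["argmin"])  # 0.5 1.0 1
```

Because $s_A$ only samples $M$, Eq. 8 can be checked only approximately: in Table 5 the output distance sits close to the sampled minimum, sometimes below it ("please") and sometimes slightly above it ("things"), while staying far below the mean.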
In the fourth column, we report the mean distance from $w_B$ to A's sampled words. The distance from $w_B$ to $w_{B \to A}$ is close to the minimum and much smaller than the mean. The fifth column shows the word that attains the minimum distance. These results support our conjecture about the reprojection property.

F ADDITIONAL DETAILS AND EXPERIMENTS

F.1 DETAILS OF HUMAN STUDIES

All AMT users were required to have the Master Qualification (HIT approval rate above 98% over more than 1,000 HITs). We also restricted users to those in the United States to ensure an English-speaking audience.

| $w_B$ | $\lVert w_{B \to A} - w_B \rVert$ | $\min_{w \in s_A} \lVert w - w_B \rVert$ | $\mathrm{mean}_{w \in s_A} \lVert w - w_B \rVert$ | $\arg\min_{w \in s_A} \lVert w - w_B \rVert$ | Likelihood (%) |
|---|---|---|---|---|---|
| "please" | 17.46 | 23.11 | 53.93 | "please" | 100 |
| "things" | 21.59 | 19.87 | 50.95 | "things" | 81.8 |
| "these" | 25.22 | 21.26 | 82.00 | "these" | 55.6 |
| "cheese" | 21.98 | 20.34 | 51.19 | "cheese" | 100 |
| "three" | 24.39 | 22.13 | 61.07 | "three" | 84.1 |
| "mix" | 16.19 | 16.61 | 55.77 | "mix" | 100 |
| "six" | 20.43 | 16.75 | 66.85 | "six" | 100 |
| "thick" | 21.21 | 18.38 | 50.16 | "thick" | 92.3 |
| "kids" | 16.98 | 16.65 | 48.78 | "kids" | 100 |
| "is" | 16.84 | 16.25 | 70.88 | "is" | 78.0 |
| Average | 20.23 | 19.14 | 59.16 | | |

Table 5: Verification of the reprojection property: We randomly sample tongue-twister words spoken by 2 speakers (A and B) in the VCTK dataset. For the autoencoder trained on A, all words spoken by B are out-of-sample data. For each word $w_B$, we generate $w_{B \to A}$, its projection onto A's subspace. We need to verify 2 properties: (i) the output $w_{B \to A}$ lies in A's subspace; (ii) the autoencoder minimizes the input-output error. To verify (i), we train a speaker classification network on speakers A and B and predict the speaker of $w_{B \to A}$. We report the softmax output to show how likely $w_{B \to A}$ is to be classified as A's speech (Likelihood in the table).
To verify (ii), we calculate (1) the distance from $w_B$ to $w_{B \to A}$; (2) the minimum distance from $w_B$ to any sampled word by A; and (3) the mean distance from $w_B$ to A's sampled words. The distance from $w_B$ to $w_{B \to A}$ is close to the minimum and much smaller than the mean. This empirically suggests that nonlinear autoencoders behave similarly to their linear counterparts (i.e., they approximately minimize the reconstruction error of the out-of-sample input).

F.2 ABLATION ANALYSIS OF EXEMPLAR TRAINING

We mentioned earlier that it is easier to disentangle style from content when the entire training dataset consists of a single style. To verify this, we train autoencoders with the following setups: (1) speeches from 2 people; (2) speeches from 3 people; (3) 4 speeches with different settings from 1 person. We compare each against an exemplar autoencoder trained on 1 speech from 1 person using A/B testing. Our results are preferred 71.9% of the time over (1), 83.3% over (2), and 90.6% over (3).

F.3 ABLATION ANALYSIS OF REMOVING SPEAKER EMBEDDING

We point out that generic speaker embeddings struggle to capture the stylistic details of in-the-wild speech. Here we provide an ablation analysis of removing such pre-trained embeddings. We perform new experiments that fine-tune a pre-trained Auto-VC for each specific speaker (exemplar training) but still keep the pre-trained embedding, and find that it performs similarly to the multi-speaker fine-tuning in Table 3. Our outputs are preferred over it 91.7% of the time.

F.4 ABLATION ANALYSIS OF WAVENET

We adopt a neural-net vocoder to convert Mel-spectrograms back to raw audio signals. We prefer WaveNet (Oord et al., 2016) to traditional vocoders such as Griffin-Lim (Griffin & Lim, 1984), since WaveNet is one of the state-of-the-art methods.
Including WaveNet in our structure makes our method end-to-end trainable, and WaveNet can safely be considered part of the exemplar autoencoder (no special adaptation is required). While Auto-VC (Qian et al., 2019) and VQ-VAE (van den Oord et al., 2017) also use WaveNet, we substantially outperform them on the CelebAudio dataset. Here we add an ablation analysis of WaveNet in Table 6. We remove the WaveNet vocoder from our approach and from AutoVC, and replace it with Griffin-Lim. We also compare with Chou et al. (2019), which does not use a neural-net vocoder. The results show that our model also generates reasonable outputs with a traditional vocoder, and still outperforms other zero-shot approaches that do not use WaveNet.

| VCTK (Veaux et al., 2016) | Voice Similarity (SCA / %) | Content Consistency (MCD) |
|---|---|---|
| Chou et al. (Chou et al., 2019) | 57.0 | 491.1 |
| Auto-VC (Qian et al., 2019) with WaveNet | 98.5 | 408.8 |
| Auto-VC (Qian et al., 2019) without WaveNet | 96.5 | 408.8 |
| Ours with WaveNet | 99.6 | 420.3 |
| Ours without WaveNet | 97.0 | 420.3 |

Table 6: Ablation analysis of WaveNet: We replace the WaveNet vocoder with the traditional Griffin-Lim vocoder and compare our method with two zero-shot methods: (1) AutoVC without WaveNet, and (2) Chou et al. (2019). We measure voice similarity by speaker-classification accuracy (SCA), where higher is better. The results show that our approach without WaveNet still outperforms other zero-shot approaches that do not use WaveNet. We report the same MCD values as in Table 2, since MCD depends only on frequency features. For reference, we also list the results of the "with WaveNet" experiments.

| VoxCeleb | Finetune | Audio Decoder | MSE ↓ | PSNR ↑ |
|---|---|---|---|---|
| Baseline-(a) | ✗ | ✗ | 77.844 ± 15.612 | 29.304 ± 0.856 |
| Baseline-(b) | ✗ | ✓ | 77.139 ± 12.577 | 29.315 ± 0.701 |
| Ours | ✓ | ✓ | 76.401 ± 12.590 | 29.616 ± 0.963 |

Table 7: Ablation analysis of video synthesis: To verify the effectiveness of a pre-trained audio model and the audio decoder, we construct two extra baselines: (a) train only the audio-to-video translator (1st baseline); (b) jointly train the audio decoder and video decoder from scratch (2nd baseline). We compare these 2 baselines with our method: first train an audio autoencoder, then train the video decoder while fine-tuning the audio part. Without fine-tuning and the audio decoder, MSE increases and PSNR decreases.

F.5 ABLATION ANALYSIS OF VIDEO SYNTHESIS

We have shown the effectiveness of jointly training an audio-to-audio-video pipeline based on a pre-trained audio model. To further verify the benefit of audio bottleneck features and of fine-tuning from an audio model for video synthesis, we conduct two ablation experiments on audio-to-video translation and compare them with ours:
(a) Train only the audio-to-video translator (first baseline in Table 7).
(b) Jointly train the audio decoder and video decoder from scratch (second baseline in Table 7).
(c) First train an audio autoencoder, then train the video decoder while fine-tuning the audio part (ours).
From the results in Table 7, ours outperforms (b), which indicates the effectiveness of fine-tuning from a pre-trained audio model, and (b) outperforms (a), which indicates the effectiveness of audio bottleneck features.
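Baseline (b) and our model both route the audio bottleneck features into the video decoder. The shape-level sketch below traces that path with the settings from Appendix C: 1.6 s clips at 80 mel frames per second, 32-dimensional forward and backward LSTM states, a down-sampling factor of 32, and 20-fps video. It is an illustration under assumptions, not the authors' implementation: the forward/backward down-sampling and the repeat-based up-sampling follow our reading of the public AutoVC code (Qian et al., 2019), the stride of 4 is inferred from 80 / 20, and a fixed average stands in for the learned 1D convolution described in Appendix C.2. All function names are hypothetical.

```python
import numpy as np

T_AUDIO = 128               # mel frames per 1.6 s clip
DIM = 32                    # forward and backward LSTM cell size
FREQ = 32                   # temporal down-sampling factor
AUDIO_FPS = 16_000 // 200   # 80 mel frames per second (hop 200 at 16 kHz)
VIDEO_FPS = 20

def downsample_codes(fwd, bwd, freq=FREQ):
    """AutoVC-style down-sampling: for each block of `freq` frames, keep the
    forward state at the block's last frame and the backward state at its first."""
    t = fwd.shape[0]
    codes = [np.concatenate([fwd[i + freq - 1], bwd[i]]) for i in range(0, t, freq)]
    return np.stack(codes)                    # (t // freq, 2 * DIM)

def upsample_codes(codes, freq=FREQ):
    """Repeat each code `freq` times to recover the mel frame rate."""
    return np.repeat(codes, freq, axis=0)     # (t, 2 * DIM)

def to_video_rate(upsampled, stride=AUDIO_FPS // VIDEO_FPS):
    """Stand-in for the learned strided 1D convolution: average
    non-overlapping windows of `stride` frames (80 fps -> 20 fps)."""
    t, c = upsampled.shape
    return upsampled.reshape(t // stride, stride, c).mean(axis=1)

rng = np.random.default_rng(0)
fwd = rng.standard_normal((T_AUDIO, DIM))     # forward BiLSTM outputs
bwd = rng.standard_normal((T_AUDIO, DIM))     # backward BiLSTM outputs
codes = downsample_codes(fwd, bwd)
print(codes.shape)                            # (4, 64): the 64 x 4 embedding, transposed
up = upsample_codes(codes)
print(up.shape)                               # (128, 64)
print(to_video_rate(up).shape)                # (32, 64): 1.6 s x 20 fps
```

Taking the forward state at the end of each block and the backward state at its start lets each 64-dimensional code summarize the whole block from both directions.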