Multivariate Uncertainty in Deep Learning

Deep learning has the potential to dramatically impact navigation and tracking state estimation problems critical to autonomous vehicles and robotics. Measurement uncertainties in state estimation systems based on Kalman and other Bayes filters are t…

Authors: Rebecca L. Russell, Christopher Reale

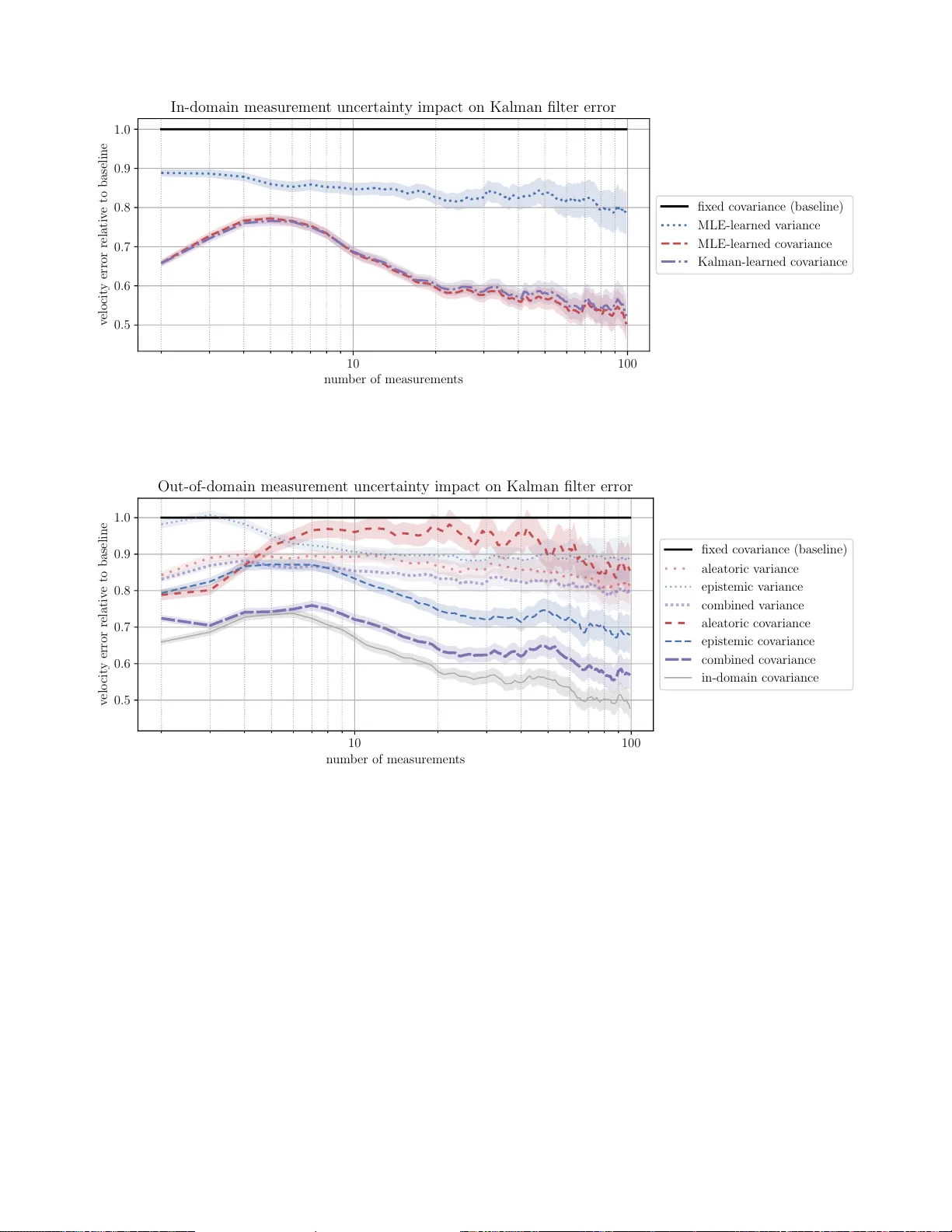

Abstract: Deep learning has the potential to dramatically impact navigation and tracking state estimation problems critical to autonomous vehicles and robotics. Measurement uncertainties in state estimation systems based on Kalman and other Bayes filters are typically assumed to be a fixed covariance matrix. This assumption is risky, particularly for "black box" deep learning models, in which uncertainty can vary dramatically and unexpectedly. Accurate quantification of multivariate uncertainty will allow the full potential of deep learning to be used more safely and reliably in these applications. We show how to model multivariate uncertainty for regression problems with neural networks, incorporating both aleatoric and epistemic sources of heteroscedastic uncertainty. We train a deep uncertainty covariance matrix model in two ways: directly using a multivariate Gaussian density loss function, and indirectly using end-to-end training through a Kalman filter. We experimentally show in a visual tracking problem the large impact that accurate multivariate uncertainty quantification can have on Kalman filter performance for both in-domain and out-of-domain evaluation data. We additionally show in a challenging visual odometry problem how end-to-end filter training can allow uncertainty predictions to compensate for filter weaknesses.

Index Terms: Deep learning, covariance matrices, Kalman filters, neural networks, uncertainty

I. INTRODUCTION

Despite making rapid breakthroughs in computer vision and other perception tasks, deep learning has had limited deployment in critical navigation and tracking systems. This lack of real-world usage is in large part due to challenges associated with integrating "black box" deep learning modules safely and effectively.
Navigation and tracking are important enabling technologies for autonomous vehicles and robotics, and have the potential to be dramatically improved by recent deep learning research in which physical measurements are directly regressed from raw sensor data, such as visual odometry [1], object localization [2], human pose estimation [3], object pose estimation [4], and camera pose estimation [5]. Proper uncertainty quantification is an important challenge for applications of deep learning within these systems, which typically rely on probabilistic filters, such as the Kalman filter [6], to recursively estimate a probability distribution over the system's state from uncertain measurements and a model of the system evolution. Accurate estimates of the uncertainty of a neural network "measurement" (i.e., prediction) would enable the integrated system to make better-informed decisions based on the fusion of measurements over time, measurements from other sensors, and prior knowledge of the underlying system. The conventional approach of using a fixed estimate of measurement uncertainty can lead to catastrophic system failures when prediction errors and correlations are dynamic, as is often the case with deep learning perception modules. By accurately quantifying uncertainty that can vary from sample to sample, termed heteroscedastic uncertainty, we can create systems that gracefully handle deep learning errors while fully leveraging its strengths.

This work was carried out with funding from DARPA/MTO (HR0011-16-S-0001). Any opinions, findings and conclusions or recommendations expressed in this material are those of the authors. The authors are with The Charles Stark Draper Laboratory, Inc., Cambridge, MA 02139 (e-mail: rrussell@draper.com; creale@draper.com).

Fig. 1. Data from a vector function with heteroscedastic covariance.
In this work, we study the quantification of heteroscedastic and correlated multivariate uncertainty (illustrated in Figure 1) for regression problems, with the goal of improving the overall performance and reliability of systems that rely on probabilistic filters. Heteroscedastic uncertainty in deep learning can be modeled from two sources: epistemic uncertainty and aleatoric uncertainty [7]. Epistemic uncertainty reflects uncertainty in the model parameters and has been addressed by recent work to develop fast approximate Bayesian inference for deep learning [8], [9], [10]. Accurate estimation of epistemic uncertainty enables systems to perform more reliably in out-of-domain situations where deep learning performance can dramatically degrade. Aleatoric uncertainty reflects the noise inherent to the data and is irreducible with additional training. Accurate estimation of aleatoric uncertainty enables systems to achieve maximum performance and most effectively fuse deep learning predictions. Finally, since the uncertainties of predictions of multiple values can be highly correlated, it is important to account for the full multivariate uncertainty from both aleatoric and epistemic sources. Existing methods for uncertainty quantification in deep learning generally neglect correlations in uncertainty, though these correlations are often critically important in autonomy applications.

We show how to model multivariate aleatoric uncertainty through direct training with a multivariate Gaussian likelihood loss function in Section III-A and through guided training via backpropagation through a Kalman filter in Section III-B. In Section III-C, we show how to incorporate multivariate epistemic uncertainty, building on the existing body of Bayesian deep learning literature.
Finally, in Section IV, we experiment with our techniques on a synthetic visual tracking problem that allows us to evaluate performance on in-domain and out-of-domain test data, and on a real-world visual odometry dataset with strong correlations between measurements.

II. RELATED WORK

Heteroscedastic noise is an important topic in the filtering literature. The adaptive Kalman filter [11] accounts for heteroscedastic noise by estimating the process and measurement noise covariance matrices online. This approach, however, cannot keep up with rapid changes in the noise profile. In contrast, multiple model adaptive estimation [12] uses a bank of filters with different noise properties and dynamically chooses between them, which can work well when there is a small number of regimes with different noise properties. Covariance estimation techniques for specific applications, such as the iterative closest point algorithm [13] and simultaneous localization and mapping [14], have been developed but do not generalize well. Parametric [15] and non-parametric [16], [17], [18], [19] machine learning methods, including neural networks [20], have been used to model aleatoric heteroscedastic noise from sensor data. Most of these approaches scale poorly and, since they ignore epistemic uncertainty, cannot be used with measurements inferred through deep learning. Kendall and Gal [7] showed how to predict the variance of neural network outputs including epistemic uncertainty, but neglected correlations between the uncertainty of different outputs and how these correlations might affect downstream system performance.

Several recent works have also investigated the direct learning of neural network models [21], [22], including measurement variance models [23], via backpropagation through Bayes filters.
These works demonstrated the practicality and power of filter-based training, but none attempted to account for the epistemic uncertainty or full multivariate aleatoric uncertainty of the neural networks. Additionally, the impact of improved uncertainty quantification, rather than simply improved measurement and process modeling, has not yet been studied for deep learning in probabilistic filters. We build upon this prior work by showing how to predict multivariate uncertainty from both epistemic and aleatoric sources without neglecting correlations. Furthermore, we show how to do this either by training through a Kalman filter or independently of one. Our work is the first to show how to comprehensively quantify deep learning uncertainty in the context of Bayes filtering systems that are dependent on the outputs of deep learning models.

III. MULTIVARIATE UNCERTAINTY PREDICTION

We present two methods for training a neural network to predict the multivariate uncertainty of either its own regressed outputs or those of another measurement system. The first is based on direct training using a Gaussian maximum likelihood loss function (Section III-A) and the second is indirect end-to-end training through a Kalman filter (Section III-B). These two methods can be used either alone or in conjunction (using direct training as a pre-training step before end-to-end training), depending on the exact application and availability of labeled data.

TABLE I
NOTATION

| Symbol | Meaning |
|---|---|
| $x \in \mathcal{X}$ | Neural network model input |
| $y \in \mathbb{R}^k$ | Regression label for neural network |
| $f: \mathcal{X} \to \mathbb{R}^k$ | Neural network model of expected $y$ mean |
| $\Sigma: \mathcal{X} \to \mathbb{R}^{k \times k}$ | Neural network model of expected $y$ covariance |
| $z \in \mathbb{R}^n$ | Filter system state |
| $\hat{z} \in \mathbb{R}^n$ | Estimate of system state $z$ |
| $P \in \mathbb{R}^{n \times n}$ | Estimate of system state $z$ covariance |
| $F: \mathbb{R}^n \to \mathbb{R}^n$ | State-transition model (fixed, linear) |
| $H: \mathbb{R}^n \to \mathbb{R}^k$ | Observation model (fixed, linear) |
| $Q \in \mathbb{R}^{n \times n}$ | Process noise covariance (fixed) |
For training a neural network to estimate its own uncertainty, we also present a method to approximately incorporate epistemic uncertainty at test time (Section III-C). Table I summarizes the important notation used in this section and throughout the rest of the paper.

A. Gaussian maximum likelihood training

In this first method, we directly learn to predict covariance matrix parameters that describe the distribution of training data labels relative to the model's corresponding predictions. We assume that the probability of a label $y \in \mathbb{R}^k$ given a model input $x \in \mathcal{X}$ can be approximated by a multivariate Gaussian distribution

$$p(y \mid x) = \frac{1}{\sqrt{(2\pi)^k \, |\Sigma(x)|}} \exp\!\left(-\frac{1}{2}\,(y - f(x))^\top \Sigma(x)^{-1} (y - f(x))\right), \tag{1}$$

where $f: \mathcal{X} \to \mathbb{R}^k$ is a model of the mean, $\mathbb{E}[y \mid x]$, and $\Sigma: \mathcal{X} \to \mathbb{R}^{k \times k}$ is a model of the covariance,

$$\mathbb{E}\left[(y - f(x))(y - f(x))^\top \mid x\right]. \tag{2}$$

To train $f$ and $\Sigma$, we find the parameters that minimize our loss $\mathcal{L}$, the negative logarithm of the Eq. 1 likelihood (dropping the constant $\tfrac{k}{2}\ln 2\pi$),

$$\mathcal{L} = \frac{1}{2}(y - f(x))^\top \Sigma(x)^{-1} (y - f(x)) + \frac{1}{2}\ln|\Sigma(x)|. \tag{3}$$

This loss function allows us to train $f$ and $\Sigma$ either simultaneously or separately. Typically, we use a single base neural network that outputs $f$ and $\Sigma$ simultaneously. The $\Sigma$ model should output $k$ values $s$, which we use to define the variances along the diagonal,

$$\Sigma_{ii} = \sigma_i^2 = g_v(s_i), \tag{4}$$

and $k(k-1)/2$ additional values $r$, which, along with $s$, define the off-diagonal covariances

$$\Sigma_{ij} = \rho_{ij}\,\sigma_i \sigma_j = g_\rho(r_{ij}) \sqrt{g_v(s_i)\, g_v(s_j)}, \tag{5}$$

where $\Sigma_{ij} = \Sigma_{ji}$ for $j < i$. We use the $g_v = \exp$ activation for the variances, $\sigma_i^2$, and the $g_\rho = \tanh$ activation for the Pearson correlation coefficients, $\rho_{ij}$, to stabilize training and help encourage prediction of valid positive-definite covariance matrices.

B. Kalman-filter training

Our second method of training a neural network to predict multivariate uncertainty uses indirect training through a Kalman filter, illustrated in Figure 2. The Kalman filter [6] is a state estimator for linear Gaussian systems that recursively fuses information from measurements and predicted states. The relative contribution of these two sources is determined by their covariance estimates. Similarly to Section III-A, we use a neural network to predict the heteroscedastic measurement covariance, but instead of training with the Eq. 3 loss function, we train using the error of the Kalman state estimate.

The standard Kalman filter assumes that we can specify a state-transition model $F$ and observation model $H$. The state-transition model describes the evolution of the system state $z$ by

$$z_t = F z_{t-1} + w_t, \tag{6}$$

where $w_t \sim \mathcal{N}(0, Q)$ and $Q$ is the covariance of the process noise. We model the relationship between our deep learning "measurement" $f(x_t)$ and the system state as

$$f(x_t) = H z_t + v_t, \tag{7}$$

where $v_t \sim \mathcal{N}(0, \Sigma(x_t))$ and $\Sigma(x_t)$ is the covariance of the measurement noise. The difference between the measurement and the predicted measurement is the innovation

$$i_t = f(x_t) - H F \hat{z}_{t-1}, \tag{8}$$

which has covariance

$$S_t = \Sigma(x_t) + H\left(F P_{t-1} F^\top + Q\right) H^\top, \tag{9}$$

the sum of the measurement covariance and the predicted measurement covariance propagated from the previous state estimate covariance, $P_{t-1}$. We can then update our state estimate based on the innovation by

$$\hat{z}_t = F \hat{z}_{t-1} + K_t i_t, \tag{10}$$

where $K_t$ is the Kalman gain, which determines how much the state estimate should reflect the current measurement compared to previous measurements. The optimal Kalman gain that maximizes the likelihood of the true state $z_t$ given our Gaussian assumptions is

$$K_t = \left(F P_{t-1} F^\top + Q\right) H^\top S_t^{-1}. \tag{11}$$

The covariance $P_t$ of the updated state estimate $\hat{z}_t$ is then given by

$$P_t = (I - K_t H)\left(F P_{t-1} F^\top + Q\right). \tag{12}$$

Our measurement covariance $\Sigma$ enters the Kalman filter in the calculation of the innovation covariance $S_t$ in Eq. 9 and thus directly, but inversely, affects the Kalman gain $K_t$ in Eq. 11. We can therefore backpropagate errors from the state estimate $\hat{z}_t$ through the Kalman filter to train the model for $\Sigma$.

[Figure 2 diagram: the neural network maps $x_{t-1}$ and $x_t$ to $f(\cdot)$ and $\Sigma(\cdot)$; the Kalman filter produces $\hat{z}_t$, $P_t$ from $\hat{z}_{t-1}$, $P_{t-1}$; per-step loss $L_t = \lVert \hat{z}_t - z_t \rVert$.]

Fig. 2. Simultaneously training a neural network via a Kalman filter to output a measurement $f$ and its measurement error covariance $\Sigma$ based on some input $x$. At each time step $t$, the Kalman filter calculates a state estimate $\hat{z}_t$ and error covariance matrix $P_t$ based on the previous state estimate and its covariance and the new measurement and its covariance. The loss contribution $L_t$ at time step $t$ from the Kalman filter state estimate thus depends on the current and all previous outputs of $f$ and $\Sigma$. Blue arrows show the forward propagation of information and red arrows show the backpropagation of gradients that train the neural network.

In fact, we can use any subset $\mathcal{S}$ of the state indices to train the measurement covariance model so long as

$$\sum_{b \in \mathcal{S}} H_{ab} \neq 0 \quad \forall a \in \{1, \ldots, k\}, \tag{13}$$

which is useful when full state labels are not available, as is often the case in more complex systems. Likewise, we can easily learn to predict the covariance of just a subset of the measurement space, for example, when the filter is fusing other well-characterized sensors with a neural network prediction. We rely on automatic differentiation to handle the calculation of the fairly complicated gradients through the filter. If all the assumptions for the Kalman filter hold, $\Sigma$ will converge to Eq. 2, maximizing the likelihood of $\{z_t\}$, which is theoretically equivalent to the method in Section III-A.
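As a rough illustration of Eqs. 3-5 in Section III-A, the following NumPy sketch assembles $\Sigma$ from $k$ variance outputs $s$ and $k(k-1)/2$ correlation outputs $r$ and evaluates the Gaussian negative log-likelihood for one sample. It is not the authors' code: the function names, the row-major ordering of $r$ over the upper triangle, and the use of a Cholesky factor to evaluate the quadratic form and log-determinant are our assumptions. In training, the same computation would be written in an automatic differentiation framework and averaged over a batch.

```python
import numpy as np


def build_covariance(s, r):
    """Assemble Sigma from Eqs. 4-5 (sketch; the ordering of r is an assumption).

    s: k unconstrained outputs -> variances sigma_i^2 = exp(s_i)      (g_v = exp)
    r: k(k-1)/2 outputs for the upper triangle, row-major -> rho_ij = tanh(r_ij)
    """
    s = np.asarray(s, dtype=float)
    r = np.asarray(r, dtype=float)
    k = s.shape[0]
    if r.shape != (k * (k - 1) // 2,):
        raise ValueError("expected k(k-1)/2 correlation outputs")
    sigma = np.sqrt(np.exp(s))              # standard deviations sigma_i
    rho = np.eye(k)                         # correlation matrix, unit diagonal
    rho[np.triu_indices(k, 1)] = np.tanh(r) # g_rho = tanh keeps |rho_ij| < 1
    rho = rho + np.triu(rho, 1).T           # enforce Sigma_ji = Sigma_ij
    return rho * np.outer(sigma, sigma)     # Sigma_ij = rho_ij sigma_i sigma_j


def gaussian_nll(y, f, cov):
    """Eq. 3 for one sample: 0.5 e^T Sigma^{-1} e + 0.5 ln|Sigma|, e = y - f(x)."""
    e = np.asarray(y, dtype=float) - np.asarray(f, dtype=float)
    L = np.linalg.cholesky(cov)             # cov = L L^T; raises LinAlgError if not PD
    w = np.linalg.solve(L, e)               # w^T w = e^T cov^{-1} e
    logdet = 2.0 * np.sum(np.log(np.diag(L)))
    return 0.5 * (w @ w) + 0.5 * logdet


# Example: k = 2, unit variances, zero correlation -> Sigma = I, loss = 0.5 |e|^2.
cov = build_covariance([0.0, 0.0], [0.0])
print(gaussian_nll([1.0, 0.0], [0.0, 0.0], cov))  # 0.5
```

Pairwise $|\rho_{ij}| < 1$ does not by itself guarantee positive definiteness when $k \ge 3$, which matches the text's wording that the activations only help encourage valid matrices; in this sketch `np.linalg.cholesky` surfaces such cases as an error instead of returning a meaningless loss.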
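The forward pass of the Kalman filter in Section III-B (Eqs. 8-12) can be sketched in a few lines of NumPy. This is a hedged illustration rather than the authors' implementation: the function name and argument order are ours, and the gain is computed from the predicted covariance $F P_{t-1} F^\top + Q$, the same quantity that appears in Eq. 9. Training $\Sigma$ end-to-end as described would require writing this step in an automatic differentiation framework and unrolling it over a sequence; NumPy only evaluates the forward pass.

```python
import numpy as np


def kalman_step(z_hat, P, meas, Sigma, F, H, Q):
    """One Kalman predict/update cycle following Eqs. 8-12.

    z_hat, P : previous estimate z_hat_{t-1} (n,) and covariance P_{t-1} (n, n)
    meas     : network measurement f(x_t), shape (k,)
    Sigma    : predicted measurement covariance Sigma(x_t), shape (k, k)
    """
    z_pred = F @ z_hat                        # F z_hat_{t-1}
    P_pred = F @ P @ F.T + Q                  # F P_{t-1} F^T + Q
    innov = meas - H @ z_pred                 # innovation i_t, Eq. 8
    S = Sigma + H @ P_pred @ H.T              # innovation covariance S_t, Eq. 9
    # Gain P_pred H^T S^{-1} (Eq. 11) via a solve; valid because S and P_pred
    # are symmetric, so (S^{-1} H P_pred)^T = P_pred H^T S^{-1}.
    K = np.linalg.solve(S, H @ P_pred).T
    z_new = z_pred + K @ innov                # Eq. 10
    P_new = (np.eye(z_hat.shape[0]) - K @ H) @ P_pred  # Eq. 12
    return z_new, P_new


# Scalar example: a larger Sigma(x_t) shrinks the gain, so the estimate moves less.
# Sigma = 1 gives z = [1.0], P = [[0.5]]; Sigma = 3 gives z = [0.5], P = [[0.75]].
one = np.eye(1)
for s in (1.0, 3.0):
    z, P_t = kalman_step(np.zeros(1), one, np.array([2.0]), s * one,
                         one, one, np.zeros((1, 1)))
```

The scalar example shows the inverse dependence noted in the text: because $\Sigma(x_t)$ only enters through $S_t$, raising the predicted measurement covariance lowers $K_t$ and pulls the update toward the prediction.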
When the filter assumptions hold exactly, the main benefit of Kalman filter training is therefore to allow labels other than $\{y\}$ to be used in training. In situations where the Kalman filter assumptions are violated and the Kalman filter is the desired end usage, it can be preferable to optimize for the overall end-to-end system performance. As demonstrated experimentally in Section IV, this end-to-end training allows the model of $\Sigma$ to compensate for other modeling weaknesses. On the other hand, training through a Kalman filter can be slow or prone to instability in many applications, so the more direct approach of Section III-A is preferable when the appropriate labels are available, at minimum as a pre-training stage. The approach shown here for the standard Kalman filter can be extended to other Bayes filters, including non-linear and sampling-based variants, allowing use in nearly any state estimation application.

C. Incorporation of epistemic uncertainty

Our two uncertainty prediction methods developed in Sections III-A and III-B quantify aleatoric uncertainty in the training data independently of epistemic uncertainty. Epistemic uncertainty, also known as "model uncertainty", represents uncertainty in the neural network model parameters themselves. Epistemic uncertainty for neural network models is reduced through the training process, allowing us to isolate the contributions of aleatoric uncertainty in the training data in both of our aleatoric uncertainty prediction methods. Like aleatoric uncertainty, epistemic uncertainty can vary dramatically from measurement to measurement. Epistemic uncertainty is a particular concern for neural networks given their many free parameters, and can be large for data that is significantly different from the training set. Thus, for any real-world application of neural network uncertainty estimation, it is critical that it be taken into account.
Numerous sampling-based approaches for Bayesian inference have been developed that allow for the estimation of epistemic uncertainty, all of which are compatible with our uncertainty quantification framework. The easiest and most practical approach is to use Monte Carlo dropout [9], which trades off accuracy for speed and convenience. Recent Bayesian ensembling approaches [10], driven by the empirical success of ensembling for estimating uncertainty [24], are also promising.

As in Ref. [7], we assume that aleatoric and epistemic uncertainty are independent. We train a neural network to output the aleatoric uncertainty covariance $\Sigma(x)$ of the prediction $f(x)$ through either method presented in Sections III-A and III-B. Then, using any sampling-based method for the estimation of epistemic uncertainty from $N$ samples of $f(x)$, the total predictive covariance estimate should be calculated by

$$\Sigma_{\text{pred}} = \Sigma_{\text{epistemic}} + \Sigma_{\text{aleatoric}} \approx \frac{1}{N}\sum_{n=1}^{N} f_n(x) f_n(x)^\top - \frac{1}{N^2}\left(\sum_{n=1}^{N} f_n(x)\right)\left(\sum_{n=1}^{N} f_n(x)\right)^\top + \frac{1}{N}\sum_{n=1}^{N} \Sigma_n(x). \tag{14}$$

As long as epistemic uncertainty for the training data is small relative to aleatoric uncertainty, this formulation only needs to be used at test time. However, if epistemic uncertainty is significant for data in the training set after training, the $\Sigma$ predicted directly by the neural network will incorporate this uncertainty, making the two sources challenging to separate. We found it possible to calculate the epistemic uncertainty covariances for the training data set and tune the model covariance prediction to predict the residual from that with Eq. 3 while holding the main model $f$ constant. However, this tuning is less stable, and our best results were generally achieved by training until the epistemic uncertainty had become small relative to aleatoric uncertainty for the training set.

IV. EXPERIMENTS

We evaluate our methods developed in Section III on two practical use cases.
First, we run experiments on a synthetic 3D visual tracking dataset that allows us to investigate the impact of aleatoric and epistemic uncertainty for in-domain and out-of-domain data. Second, we study our methods on a real-world visual odometry dataset with strong correlations between measurements and complex underlying dynamics.

To provide a fair comparison between different uncertainty prediction methods, for each experiment, we freeze the prediction results $f(x)$ from a trained model and then tune the individual uncertainty quantification methods on these prediction results from the training dataset. We compare four methods for representing the multivariate uncertainty $\Sigma$ from a neural network in a Kalman filter:

1) Fixed covariance: As a baseline, we calculate the measurement error covariance over the full dataset. This is the conventional approach to estimating $\Sigma$ for a Kalman filter.
2) MLE-learned variance: The covariance prediction is learned assuming no correlation between outputs, equivalent to the loss function given in Ref. [7].
3) MLE-learned covariance: The covariance prediction is learned using the Eq. 3 loss function, as described in Section III-A.
4) Kalman-learned covariance: The covariance prediction is learned through training via a Kalman filter, as described in Section III-B.

Finally, we compare these results to using a fully-supervised recurrent neural network (RNN) instead of a Kalman filter, to provide a point of reference for an end-to-end deep learning approach that does not explicitly incorporate uncertainty or prior knowledge.

A. 3D visual tracking problem

We first consider a task that is simple to simulate but is a reasonable proxy for many practical, complex applications: 3D object tracking from video data.
For this problem, we trained a neural network to regress the x-y-z position of a predetermined object in a single image, and used a Kalman filter to fuse the individual measurements over the video into a full track with estimated velocity. This application allows the neural network to handle the challenging computer vision component of the problem, while the Kalman filter builds in our knowledge of physics, geometry, and statistics. By using simulated data, we are able to carefully evaluate our methods of learning multivariate uncertainty on both in-domain and out-of-domain data.

We generated data using Blender [25] to render frames of objects moving through 3D space. Each track was generated with a random starting location, direction, and velocity (from 10 mm/s to 200 mm/s). The tracks had constant velocity, though a more complex kinematic model or process noise could easily be added without changing the experiments significantly. The object orientation was sampled uniformly and independently for each frame in order to add an additional source of visual noise.

[Figure 3 plot: 3D axes x (m), y (m), z (m); legend: true track, NN position estimate, NN position uncertainty, Kalman state estimate, Kalman state uncertainty.]

Fig. 3. Kalman filter state estimates (blue) from the neural network's position and position covariance predictions (red) for the 3D visual tracking problem. As additional observations are made (left to right), the Kalman filter state estimate approaches the true track (black).

Our experiments used a ResNet-18 [26] with the final linear layer replaced by a 512 × 512 linear layer with dropout and size-3 position and size-6 covariance outputs (for $k = 3$, Eqs. 4-5 require $k = 3$ variance outputs and $k(k-1)/2 = 3$ correlation outputs).
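Because the simulated tracks have constant velocity, their velocity can be recovered by filtering per-frame 3D position measurements with a constant-velocity model, the kind of filter used in this experiment (state = position and velocity, no process noise). The sketch below is illustrative only: the frame spacing `dt`, the vague prior, the track, and the per-frame noise levels are invented, and the diagonal per-frame covariances are stand-ins for network-predicted $\Sigma(x_t)$ rather than outputs of the ResNet-18 described above. The function names are hypothetical.

```python
import numpy as np


def constant_velocity_model(dt):
    """State z = [position (3), velocity (3)]; observation = position only."""
    I3, Z3 = np.eye(3), np.zeros((3, 3))
    F = np.block([[I3, dt * I3], [Z3, I3]])   # p_t = p_{t-1} + dt * v_{t-1}
    H = np.hstack([I3, Z3])                   # network measures position
    return F, H


def track_velocity(measurements, covariances, dt, prior_var=100.0):
    """Kalman-filter a track; returns the final velocity estimate and covariance."""
    F, H = constant_velocity_model(dt)
    Q = np.zeros((6, 6))                      # no process noise
    z, P = np.zeros(6), prior_var * np.eye(6) # vague prior (assumption)
    for meas, Sigma in zip(measurements, covariances):
        z_pred, P_pred = F @ z, F @ P @ F.T + Q
        S = Sigma + H @ P_pred @ H.T          # per-frame Sigma enters here
        K = P_pred @ H.T @ np.linalg.inv(S)
        z = z_pred + K @ (meas - H @ z_pred)
        P = (np.eye(6) - K @ H) @ P_pred
    return z[3:], P[3:, 3:]


# Hypothetical synthetic track: constant velocity, heteroscedastic noise.
rng = np.random.default_rng(0)
dt, n_frames = 0.1, 100                       # frame spacing (s) and count: assumed
v_true = np.array([0.10, -0.05, 0.02])        # m/s (about 114 mm/s)
p0 = np.array([0.0, -1.5, 4.2])               # starting position (m)
t = dt * np.arange(n_frames)
truth = p0 + t[:, None] * v_true
stds = rng.uniform(0.005, 0.03, size=n_frames)            # per-frame noise (m)
meas = truth + stds[:, None] * rng.standard_normal((n_frames, 3))
covs = [sd**2 * np.eye(3) for sd in stds]                 # Sigma(x_t) stand-ins
v_est, v_cov = track_velocity(meas, covs, dt)
print(np.round(v_est * 1000, 1), "mm/s")
```

Frames with a large predicted covariance receive a small gain and therefore contribute little to the velocity estimate, which is the mechanism by which heteroscedastic $\Sigma(x_t)$ can improve the track compared with a single fixed covariance.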
An example of a Kalman filter being used on our visual tracking problem is shown in Figure 3, with both the neural network measurement uncertainty $\Sigma$ and the Kalman state estimate uncertainty $P$ shown at each frame. We represent the state for our tracking problem as the 3D position and velocity of the object, using a constant-velocity state-transition model and no process noise. During evaluation, the full $\Sigma_{\text{pred}}$ given in Eq. 14 should be used to incorporate epistemic uncertainty in the Kalman filter's error handling.

Our four comparison methods of uncertainty quantification were evaluated using the track velocity estimation of the Kalman filter on a test set of in-domain track data. The results, shown in Table II, indicate that moving from the fixed covariance to heteroscedastic variance estimation improves the quality of the filter estimates, most visibly in the median (relative median error 0.88). Both learned covariance methods further dramatically improve the results, indicating that accounting for the correlations within the measurements can be very important. The MLE-based and Kalman-based covariance learning methods were generally consistent with each other. The improvement over the baseline fixed covariance method is plotted versus the number of tracked measurements in Figure 4.

To test the quality of the uncertainty estimation methods when epistemic uncertainty is significant, we simulated out-of-domain data by randomly jittering the input image color channels, a form of data augmentation not seen during training. The results for the MLE-trained variance and covariance, as well as their breakdown into aleatoric and epistemic uncertainty, are shown in Table III and Figure 5. The "in-domain covariance" results, obtained by training just the uncertainty estimation model on the out-of-domain position predictions, are included to provide a best-case point of comparison.
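The combined results in Table III rely on Eq. 14, which amounts to moment matching over the $N$ stochastic forward passes: the sample covariance of the predicted means (epistemic part) plus the average predicted covariance (aleatoric part). A minimal NumPy sketch follows, assuming the samples $f_n(x)$ and $\Sigma_n(x)$ have already been collected, for example with Monte Carlo dropout; how they are produced is outside the sketch, and the function name is ours.

```python
import numpy as np


def predictive_covariance(f_samples, sigma_samples):
    """Eq. 14: total predictive mean and covariance from N stochastic passes.

    f_samples     : (N, k) predicted means f_n(x)
    sigma_samples : (N, k, k) predicted aleatoric covariances Sigma_n(x)
    """
    f = np.asarray(f_samples, dtype=float)
    N = f.shape[0]
    mean = f.sum(axis=0) / N
    # Epistemic part: (1/N) sum f f^T - (1/N^2)(sum f)(sum f)^T,
    # i.e. the 1/N (biased) sample covariance of the predicted means.
    epistemic = f.T @ f / N - np.outer(mean, mean)
    # Aleatoric part: average of the per-pass predicted covariances.
    aleatoric = np.mean(np.asarray(sigma_samples, dtype=float), axis=0)
    return mean, epistemic + aleatoric


# Illustrative inputs: 50 hypothetical passes for a 3-dimensional output.
rng = np.random.default_rng(1)
fs = rng.normal(size=(50, 3))
sig = np.stack([0.1 * np.eye(3)] * 50)
m, C = predictive_covariance(fs, sig)
```

If every pass returns the same mean, the epistemic term vanishes and $\Sigma_{\text{pred}}$ reduces to the average aleatoric covariance; for inputs far from the training data, the spread of the $f_n(x)$ is expected to grow and inflate $\Sigma_{\text{pred}}$ accordingly.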
When evaluated on out-of-domain data, the performance of the aleatoric-only uncertainty estimates is greatly diminished, and incorporating correlation into the estimation no longer seems to help. However, when the epistemic and aleatoric uncertainties are combined, the results are close to the in-domain best-case scenario, and accounting for the correlation in uncertainty again gives a large improvement. These results illustrate how critical the incorporation of epistemic uncertainty is in real applications of neural networks, where data is not guaranteed to remain in-domain.

TABLE II
IN-DOMAIN TRACKING VELOCITY ESTIMATION

| Uncertainty method | Mean error (mm/s) | Median error (mm/s) | Mean relative error | Median relative error |
|---|---|---|---|---|
| fixed covariance (baseline) | 2.00 | 0.75 | 1 | 1 |
| MLE-learned variance | 1.87 | 0.61 | 1.00 | 0.88 |
| MLE-learned covariance | 1.36 | 0.32 | 0.70 | 0.51 |
| Kalman-learned covariance | 1.37 | 0.32 | 0.72 | 0.53 |

TABLE III
OUT-OF-DOMAIN TRACKING VELOCITY ESTIMATION

| Uncertainty method | Mean error (mm/s) | Median error (mm/s) | Mean relative error | Median relative error |
|---|---|---|---|---|
| fixed covariance (baseline) | 2.14 | 0.74 | 1 | 1 |
| aleatoric variance | 1.98 | 0.62 | 1.01 | 0.89 |
| epistemic variance | 1.94 | 0.61 | 0.93 | 0.89 |
| combined variance | 2.00 | 0.61 | 0.97 | 0.89 |
| aleatoric covariance | 1.87 | 0.48 | 1.10 | 0.73 |
| epistemic covariance | 1.65 | 0.43 | 0.76 | 0.67 |
| combined covariance | 1.56 | 0.37 | 0.75 | 0.60 |
| in-domain covariance | 1.46 | 0.32 | 0.71 | 0.53 |

To provide a final comparison, we replaced the Kalman filter with an RNN that was trained directly on the track velocity labels using the same pre-trained convolutional filters as before. On the in-domain tracking velocity estimation task, after careful tuning, the RNN was able to achieve a mean error of 1.37 mm/s, equivalent to our multivariate uncertainty approaches. This result indicates that the RNN was able to successfully learn the filtering process and implicitly incorporate aleatoric uncertainty in individual measurements.
However, on the out-of-domain velocity estimation task, the RNN's mean error rose to 2.05 mm/s, as it has no way to incorporate epistemic uncertainty in its estimates and is vulnerable to catastrophic prediction failures when input data is far out of domain.

[Figure 4 plot: velocity error relative to baseline versus number of measurements (10 to 100), titled "In-domain measurement uncertainty impact on Kalman filter error"; curves: fixed covariance (baseline), MLE-learned variance, MLE-learned covariance, Kalman-learned covariance.]

Fig. 4. Improvement in Kalman filter velocity estimation in the 3D visual tracking problem as a function of measurement count for three uncertainty estimation methods. Large improvement over the conventional fixed measurement covariance approach is seen from accounting for both heteroscedasticity and correlation.

[Figure 5 plot: velocity error relative to baseline versus number of measurements (10 to 100), titled "Out-of-domain measurement uncertainty impact on Kalman filter error"; curves: fixed covariance (baseline), aleatoric variance, epistemic variance, combined variance, aleatoric covariance, epistemic covariance, combined covariance, in-domain covariance.]

Fig. 5. Improvement in Kalman filter velocity estimation in the 3D visual tracking problem on out-of-domain data as a function of measurement count, comparing aleatoric, epistemic, and combined variance and covariance estimates with the fixed-covariance baseline and the in-domain covariance reference.

B. Real-world visual odometry problem

In order to evaluate our methods on real-world visual imagery in another important practical application, we study monocular visual odometry using the KITTI Vision Benchmark Suite dataset [27].
Monocular visual odometry is the estimation of camera motion from a single sequence of images, and is particularly challenging since stereo imagery or depth is typically needed to estimate the distance of features in the scene from the camera in a pair of images. While the KITTI dataset provides both stereo images and laser ranging data, deep learning has the potential to perform effective visual odometry with a much simpler and cheaper set of sensors, enabling better localization for inexpensive autonomous vehicles and robots.

We focus on the problem of estimating camera motion from a single pair of images using a neural network, as shown in Figure 6. The neural network directly predicts the change in orientation ($\Delta\theta$) and position ($\Delta x$) of the camera, as well as its multivariate uncertainty $\Sigma$. The KITTI dataset contains driving data from a standard passenger vehicle in relatively flat areas, so we focus on predicting the vehicle speed and change in heading. We filter these measurements with noisy IMU (inertial measurement unit) data, which reflect what would be available on an inexpensive platform, as well as with the previous measurements from both sensor sources. The filtered estimate of $\Delta\theta$ can then be integrated to find the heading of the vehicle, combined with $\Delta x$ to calculate the velocity components of the vehicle, and those components integrated to find the position of the vehicle, as also illustrated in Figure 6.

[Figure 6 diagram: frames $t-1$ and $t$ enter the neural network, which outputs $\Delta\theta_t$, $\Delta x_t$, $\Sigma_t$; the Kalman filter outputs $\hat{z}_t$, $P_t$; trajectory plot with a 100 m scale bar.]

Fig. 6. An illustration of the KITTI visual odometry problem, with data from validation run 06. Two adjacent video frames are input to the neural network, which predicts the change in angle ($\Delta\theta_t$) and position ($\Delta x_t$) between them. A Kalman filter fuses these predictions with previous predictions and other sensor measurements, resulting in an estimate (blue dashed line) of the true trajectory (black line).

For our neural network architecture and training, we follow the general approach of Wang et al. [28], using a pre-trained FlowNet [29] convolutional neural network to extract features from input image pairs. Instead of using a recurrent neural network to fuse these features over many images, we have two fully-connected layers that output $\Delta\theta_t$, $\Delta x_t$, and $\Sigma_t$. We train on KITTI sequences 00, 01, 02, 05, 08, and 09, and evaluate on sequences 04, 06, 07, and 10.

We evaluate results using two different filters: a standard Kalman filter and a Kalman filter that takes into account time-correlated measurement noise. Both filters use a simple constant velocity and rotation model of the dynamics, which therefore requires a high process noise $Q$, determined from the data. The standard Kalman filter assumes that all measurements have independent errors, a poor assumption for the neural network outputs, which are highly correlated between adjacent times. The "time-correlated" Kalman filter [30] accounts for this correlation by modeling the measurement error as part of the estimated state $\hat{z}_t$ and combining the uncorrelated part of $\Sigma_t$ into $Q$.

TABLE IV
VISUAL ODOMETRY EXPERIMENT RESULTS

| Uncertainty method | Standard Kalman $\Delta\theta$ err (deg) | Standard Kalman $\Delta x$ err (m) | Time-correlated $\Delta\theta$ err (deg) | Time-correlated $\Delta x$ err (m) |
|---|---|---|---|---|
| fixed covariance (baseline) | 0.239 | 0.147 | 0.233 | 0.136 |
| MLE-learned variance | 0.205 | 0.163 | 0.188 | 0.128 |
| MLE-learned covariance | 0.183 | 0.162 | 0.163 | 0.129 |
| Kalman-learned covariance | 0.171 | 0.138 | 0.155 | 0.128 |

The results from our visual odometry experiments are shown in Table IV, where $\Delta\theta$ err is the frame-pair angle error in degrees and $\Delta x$ err is the frame-pair position error in meters.
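The integration chain described for Figure 6 (heading from accumulated $\Delta\theta$, then position from $\Delta x$ along that heading) can be sketched as planar dead reckoning. The sketch assumes that each $\Delta x$ is the forward distance travelled between frames, that each heading change is applied before the corresponding step, and that heading is measured counter-clockwise from the first axis; these conventions and the function name are assumptions, not details taken from the experiments.

```python
import numpy as np


def integrate_odometry(d_theta_deg, d_x, heading0_deg=0.0, start=(0.0, 0.0)):
    """Planar dead reckoning from per-frame heading change and forward distance.

    d_theta_deg : filtered per-frame heading changes (degrees)
    d_x         : filtered per-frame forward displacements (meters)
    Returns per-frame headings (degrees) and 2D positions (meters).
    """
    d_x = np.asarray(d_x, dtype=float)
    heading = np.deg2rad(heading0_deg + np.cumsum(d_theta_deg))  # integrate d_theta
    # Per-frame displacement components along the current heading.
    steps = np.column_stack([d_x * np.cos(heading), d_x * np.sin(heading)])
    positions = np.asarray(start, dtype=float) + np.cumsum(steps, axis=0)
    return np.rad2deg(heading), positions


# Four 1 m steps, each after a 90 degree left turn, trace a closed square.
headings, pos = integrate_odometry([90.0] * 4, [1.0] * 4)
```

Because both sums are open-loop, small per-frame biases in $\Delta\theta$ accumulate into growing position drift, which is one reason heading accuracy matters so much in Table IV.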
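One common way to carry time-correlated measurement error in the state, in the spirit of the time-correlated filter described above, is to append the error $e_t$ to the state and model it as a first-order autoregressive process. The sketch below builds the augmented matrices under loudly stated assumptions: a single scalar correlation coefficient `psi`, and process noise $(1-\psi^2)\Sigma_t$ for the error so that its stationary covariance stays $\Sigma_t$. It is not necessarily the formulation of Ref. [30]; `psi` and the function name are hypothetical.

```python
import numpy as np


def augment_for_correlated_noise(F, H, Q, Sigma_t, psi):
    """Augmented model for state [z; e] with AR(1) measurement error (assumed).

    e_t = psi * e_{t-1} + xi_t,  Cov(xi_t) = (1 - psi**2) * Sigma_t,
    y_t = H z_t + e_t            (no separate white measurement noise).
    """
    n, k = F.shape[0], H.shape[0]
    Znk, Zkn = np.zeros((n, k)), np.zeros((k, n))
    F_a = np.block([[F, Znk], [Zkn, psi * np.eye(k)]])
    H_a = np.hstack([H, np.eye(k)])
    # Only the uncorrelated (innovation) part of Sigma_t enters the process noise.
    Q_a = np.block([[Q, Znk], [Zkn, (1.0 - psi**2) * Sigma_t]])
    return F_a, H_a, Q_a


# Example with a 2-state model and 1 measurement (values are illustrative only).
F = np.array([[1.0, 1.0], [0.0, 1.0]])
H = np.array([[1.0, 0.0]])
Q = 0.01 * np.eye(2)
F_a, H_a, Q_a = augment_for_correlated_noise(F, H, Q, np.array([[0.04]]), psi=0.9)
```

The augmented matrices can be passed to an ordinary Kalman step with a zero measurement covariance; with $|\psi| < 1$ and $\Sigma_t$ positive definite, the error block of `Q_a` keeps the innovation covariance $H_a P^- H_a^\top$ positive definite and therefore invertible.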
For the standard Kalman filter, which neglects the large measurement time-correlation, we find that the MLE-learned methods perform poorly (their Δx err is worse than that of the fixed-covariance baseline), but the Kalman-learned covariance is able to compensate by strategically increasing the predicted uncertainty for highly time-correlated measurements. With the time-correlated Kalman filter variant, all uncertainty-quantification methods achieve better performance, and the MLE-learned covariance results are more in line with the Kalman-learned covariance results. Again, the Kalman-learned covariance achieves the best performance of all methods (tying the MLE-learned variance on Δx err) by directly optimizing for the desired end state estimate.

Finally, we provide a comparison to an end-to-end deep learning RNN method, equivalent to the approach of Wang et al. [28] with the addition of IMU input data. The RNN is trained to fuse sequences of FlowNet features into odometry outputs, allowing it to incorporate learned knowledge about trajectory dynamics in addition to fusing correlated measurements in its "filtering" process. The RNN achieved a Δθ err of 0.155 degrees, equal to our Kalman-learned covariance approach with the time-correlated filter, but a Δx err of 0.176 meters, worse than any of our Kalman filter results. We believe that the RNN was able to learn a very good trajectory model for vehicle heading (drive straight with occasional large turns). The Kalman filter assumption of constant rotation was not a good fit for the data, which explains why the Kalman-learned covariance improved so much upon the MLE-learned covariance for Δθ err. On the other hand, the constant-velocity assumption was a relatively good one, and it remained robust for trajectories with high epistemic uncertainty, such as when test trajectories are significantly faster or slower than typical training data.
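The two learned-covariance rows of Table IV differ mainly in their training signal. The sketch below contrasts the two under stated assumptions; it is illustrative, not the authors' code. `gaussian_nll` is a multivariate Gaussian negative log-likelihood of the kind used for MLE training, with Σ = LLᵀ built by the hypothetical helper `cholesky_from_raw` (the log-diagonal Cholesky parameterization is an assumption that keeps Σ positive definite). `filter_loss` runs a scalar random-walk Kalman filter whose measurement variances are a hypothetical scale `s` times per-step predictions `r_hat`, and scores the filtered estimates against ground truth with squared error (an assumed choice of end-state loss). In practice the gradient of such a loss would be backpropagated through the filter into the network; here a central finite difference in log s stands in for it.

```python
import math
import numpy as np


def cholesky_from_raw(raw, k):
    """Map k*(k+1)/2 unconstrained network outputs to a lower-triangular L with
    a positive diagonal, so Sigma = L @ L.T is always positive definite.
    Assumed layout: k log-diagonal entries, then the strict lower triangle."""
    raw = np.asarray(raw, float)
    L = np.zeros((k, k))
    L[np.diag_indices(k)] = np.exp(raw[:k])
    L[np.tril_indices(k, -1)] = raw[k:]
    return L


def gaussian_nll(y, mu, L):
    """MLE objective: -log N(y; mu, L L^T)."""
    r = np.asarray(y, float) - np.asarray(mu, float)
    u = np.linalg.solve(L, r)               # u @ u == r^T Sigma^{-1} r
    log_det = 2.0 * np.sum(np.log(np.diag(L)))
    return 0.5 * (u @ u + log_det + r.size * math.log(2.0 * math.pi))


def filter_loss(s, measurements, r_hat, truth, q=0.1, x0=0.0, p0=1.0):
    """End-to-end objective: mean squared error of the filtered estimates of a
    scalar random-walk filter whose measurement variances are s * r_hat[t]."""
    x, p, err = x0, p0, 0.0
    for y, r, z in zip(measurements, r_hat, truth):
        p = p + q                        # predict (F = 1, process noise q)
        gain = p / (p + s * r)           # update with R_t = s * r_hat[t]
        x = x + gain * (y - x)
        p = (1.0 - gain) * p
        err += (x - z) ** 2
    return err / len(truth)


def d_filter_loss_d_log_s(s, *args, eps=1e-4):
    """Central finite difference in log s, standing in for backpropagation."""
    up = filter_loss(s * math.exp(eps), *args)
    down = filter_loss(s * math.exp(-eps), *args)
    return (up - down) / (2.0 * eps)


# Example: exact measurements of a steadily increasing quantity.
truth = [1.0, 2.0, 3.0, 4.0, 5.0]
args = (truth, [1.0] * 5, truth)         # (measurements, r_hat, truth)
print(filter_loss(0.01, *args) < filter_loss(100.0, *args))  # True
```

With exact measurements the filter loss grows with s, so the gradient pushes the predicted variances down; when the filter's own assumptions are violated, the same end-state signal can favor inflated variances instead, which is the compensation effect discussed above.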
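Epistemic uncertainty must ultimately reach the filter as part of a single multivariate Gaussian. A common recipe, assumed here and possibly differing in detail from the procedure used earlier in this paper, treats M stochastic forward passes (MC dropout [9] or ensemble members [24]) as an equally weighted mixture and applies the law of total covariance: the combined covariance is the average of the predicted covariances Σ_m plus the covariance of the predicted means μ_m. The function name `combine_passes` is hypothetical.

```python
import numpy as np


def combine_passes(mus, sigmas):
    """Moment-match M predictive Gaussians N(mu_m, Sigma_m), e.g. from MC
    dropout passes or ensemble members, to one Gaussian (law of total covariance)."""
    mus = np.asarray(mus, float)            # shape (M, k): predicted means
    sigmas = np.asarray(sigmas, float)      # shape (M, k, k): predicted covariances
    mu_bar = mus.mean(axis=0)
    aleatoric = sigmas.mean(axis=0)         # average predicted (aleatoric) covariance
    dev = mus - mu_bar
    epistemic = dev.T @ dev / len(mus)      # spread of the means across passes
    return mu_bar, aleatoric + epistemic


# Two passes that disagree along the diagonal direction.
mu, sigma = combine_passes([[0.0, 0.0], [2.0, 2.0]], [np.eye(2), np.eye(2)])
# mu = [1, 1]; sigma = [[2, 1], [1, 2]]
```

Disagreement between passes appears as added, and here fully correlated, covariance, which lets a downstream filter discount inputs the network has not learned to handle well.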
The Kalman filters' lower Δx err relative to the RNN illustrates a primary strength of our uncertainty quantification approach: it allows traditional filtering methods to robustly handle scenarios that are not well represented in the training data.

V. CONCLUSIONS & DISCUSSION

We have provided two methods for training a neural network to predict its own correlated multivariate uncertainty: direct training with a Gaussian maximum likelihood loss function, and indirect end-to-end training through a Kalman filter. In addition, we have shown how to incorporate multivariate epistemic uncertainty at test time. Our experiments show that these methods yield accurate uncertainty estimates and can dramatically improve the performance of a filter that uses them.

Significant improvement in filter state estimation came from accounting for both the heteroscedasticity of the model output uncertainty and the correlations between the errors of different outputs. For out-of-domain data, the incorporation of epistemic uncertainty was critical to the high performance of the combined filtering system. Finally, when the data violated the underlying filter assumptions, the uncertainty estimates trained end-to-end through the filter were able to partially compensate for the resulting errors. These methods of multivariate uncertainty estimation help enable the use of neural networks in critical applications such as navigation, tracking, and pose estimation.

Our methods have several limitations that should be addressed by future work. First, we have assumed that both aleatoric and epistemic uncertainty for neural network predictions follow multivariate Gaussian distributions.
While this is a reasonable assumption for many applications, it is problematic in two scenarios: (1) when error distributions are long-tailed, the likelihood of large errors may be severely underestimated; (2) when errors follow a multimodal distribution, which can occur in highly nonlinear systems, the precision of the estimation will be impaired. The former scenario may be addressed by replacing the Gaussian distribution with a Laplace distribution, which produces more stable training gradients for large errors. The latter scenario may be addressed by quantile regression extensions to our method. While both of these scenarios violate Kalman filter assumptions, they can be handled with more advanced Bayes filters such as particle filters.

Finally, our methods assume that measurement uncertainty is uncorrelated in time between different measurements. In most real-world applications, we expect uncertainty to be made up of both independent sources, such as sensor noise, and correlated sources, such as occluding scene content visible in multiple frames. The independence assumption was strongly violated in our visual odometry experiment in Section IV-B, which required a special Kalman filter variant to handle it. A valuable extension to our work would allow neural networks to quantify and predict these correlations in a way that is interpretable and easy to integrate into conventional filtering systems.

REFERENCES

[1] V. Mohanty, S. Agrawal, S. Datta, A. Ghosh, V. D. Sharma, and D. Chakravarty, “DeepVO: A deep learning approach for monocular visual odometry,” arXiv preprint, 2016.
[2] S. Ren, K. He, R. Girshick, and J. Sun, “Faster R-CNN: Towards real-time object detection with region proposal networks,” in Advances in Neural Information Processing Systems, pp. 91–99, 2015.
[3] A. Toshev and C. Szegedy, “DeepPose: Human pose estimation via deep neural networks,” in Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pp. 1653–1660, 2014.
[4] J. Wu, B. Zhou, R. Russell, V. Kee, S. Wagner, M. Hebert, A. Torralba, and D. M. Johnson, “Real-time object pose estimation with pose interpreter networks,” in 2018 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), pp. 6798–6805, IEEE, 2018.
[5] A. Kendall, M. Grimes, and R. Cipolla, “PoseNet: A convolutional network for real-time 6-DOF camera relocalization,” in Proceedings of the IEEE International Conference on Computer Vision, pp. 2938–2946, 2015.
[6] R. E. Kalman, “A new approach to linear filtering and prediction problems,” Journal of Basic Engineering, vol. 82, no. 1, pp. 35–45, 1960.
[7] A. Kendall and Y. Gal, “What uncertainties do we need in Bayesian deep learning for computer vision?,” in Advances in Neural Information Processing Systems, pp. 5574–5584, 2017.
[8] C. Blundell, J. Cornebise, K. Kavukcuoglu, and D. Wierstra, “Weight uncertainty in neural networks,” 2015.
[9] Y. Gal and Z. Ghahramani, “Dropout as a Bayesian approximation: Representing model uncertainty in deep learning,” in International Conference on Machine Learning, pp. 1050–1059, 2016.
[10] T. Pearce, M. Zaki, A. Brintrup, and A. Neel, “Uncertainty in neural networks: Bayesian ensembling,” arXiv preprint arXiv:1810.05546, 2018.
[11] R. Mehra, “On the identification of variances and adaptive Kalman filtering,” IEEE Transactions on Automatic Control, vol. 15, no. 2, pp. 175–184, 1970.
[12] D. Magill, “Optimal adaptive estimation of sampled stochastic processes,” IEEE Transactions on Automatic Control, vol. 10, no. 4, pp. 434–439, 1965.
[13] A. Censi, “An accurate closed-form estimate of ICP's covariance,” in Proceedings 2007 IEEE International Conference on Robotics and Automation, pp. 3167–3172, IEEE, 2007.
[14] M. Pupilli and A. Calway, “Real-time visual SLAM with resilience to erratic motion,” in 2006 IEEE Computer Society Conference on Computer Vision and Pattern Recognition (CVPR'06), vol. 1, pp. 1244–1249, IEEE, 2006.
[15] H. Hu and G. Kantor, “Parametric covariance prediction for heteroscedastic noise,” in 2015 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), pp. 3052–3057, IEEE, 2015.
[16] K. Kersting, C. Plagemann, P. Pfaff, and W. Burgard, “Most likely heteroscedastic Gaussian process regression,” in Proceedings of the 24th International Conference on Machine Learning, pp. 393–400, ACM, 2007.
[17] A. G. Wilson and Z. Ghahramani, “Generalised Wishart processes,” 2010.
[18] W. Vega-Brown, A. Bachrach, A. Bry, J. Kelly, and N. Roy, “CELLO: A fast algorithm for covariance estimation,” in 2013 IEEE International Conference on Robotics and Automation, pp. 3160–3167, IEEE, 2013.
[19] A. Tallavajhula, B. Póczos, and A. Kelly, “Nonparametric distribution regression applied to sensor modeling,” in 2016 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), pp. 619–625, IEEE, 2016.
[20] K. Liu, K. Ok, W. Vega-Brown, and N. Roy, “Deep inference for covariance estimation: Learning Gaussian noise models for state estimation,” in 2018 IEEE International Conference on Robotics and Automation (ICRA), pp. 1436–1443, IEEE, 2018.
[21] T. Haarnoja, A. Ajay, S. Levine, and P. Abbeel, “Backprop KF: Learning discriminative deterministic state estimators,” in Advances in Neural Information Processing Systems, pp. 4376–4384, 2016.
[22] R. Jonschkowski, D. Rastogi, and O. Brock, “Differentiable particle filters: End-to-end learning with algorithmic priors,” Robotics: Science and Systems, 2018.
[23] H. Coskun, F. Achilles, R. DiPietro, N. Navab, and F. Tombari, “Long short-term memory Kalman filters: Recurrent neural estimators for pose regularization,” in Proceedings of the IEEE International Conference on Computer Vision, pp. 5524–5532, 2017.
[24] B. Lakshminarayanan, A. Pritzel, and C. Blundell, “Simple and scalable predictive uncertainty estimation using deep ensembles,” in Advances in Neural Information Processing Systems, pp. 6402–6413, 2017.
[25] Blender Online Community, Blender – Free and Open 3D Creation Software. Blender Foundation, Blender Institute, Amsterdam, 2019.
[26] K. He, X. Zhang, S. Ren, and J. Sun, “Deep residual learning for image recognition,” in Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pp. 770–778, 2016.
[27] A. Geiger, P. Lenz, and R. Urtasun, “Are we ready for autonomous driving? The KITTI Vision Benchmark Suite,” in Conference on Computer Vision and Pattern Recognition (CVPR), 2012.
[28] S. Wang, R. Clark, H. Wen, and N. Trigoni, “DeepVO: Towards end-to-end visual odometry with deep recurrent convolutional neural networks,” in 2017 IEEE International Conference on Robotics and Automation (ICRA), pp. 2043–2050, IEEE, 2017.
[29] A. Dosovitskiy, P. Fischer, E. Ilg, P. Hausser, C. Hazirbas, V. Golkov, P. Van Der Smagt, D. Cremers, and T. Brox, “FlowNet: Learning optical flow with convolutional networks,” in Proceedings of the IEEE International Conference on Computer Vision, pp. 2758–2766, 2015.
[30] K. Wang, Y. Li, and C. Rizos, “Practical approaches to Kalman filtering with time-correlated measurement errors,” IEEE Transactions on Aerospace and Electronic Systems, vol. 48, no. 2, pp. 1669–1681, 2012.
