Equivalence of Dataflow Graphs via Rewrite Rules Using a Graph-to-Sequence Neural Model

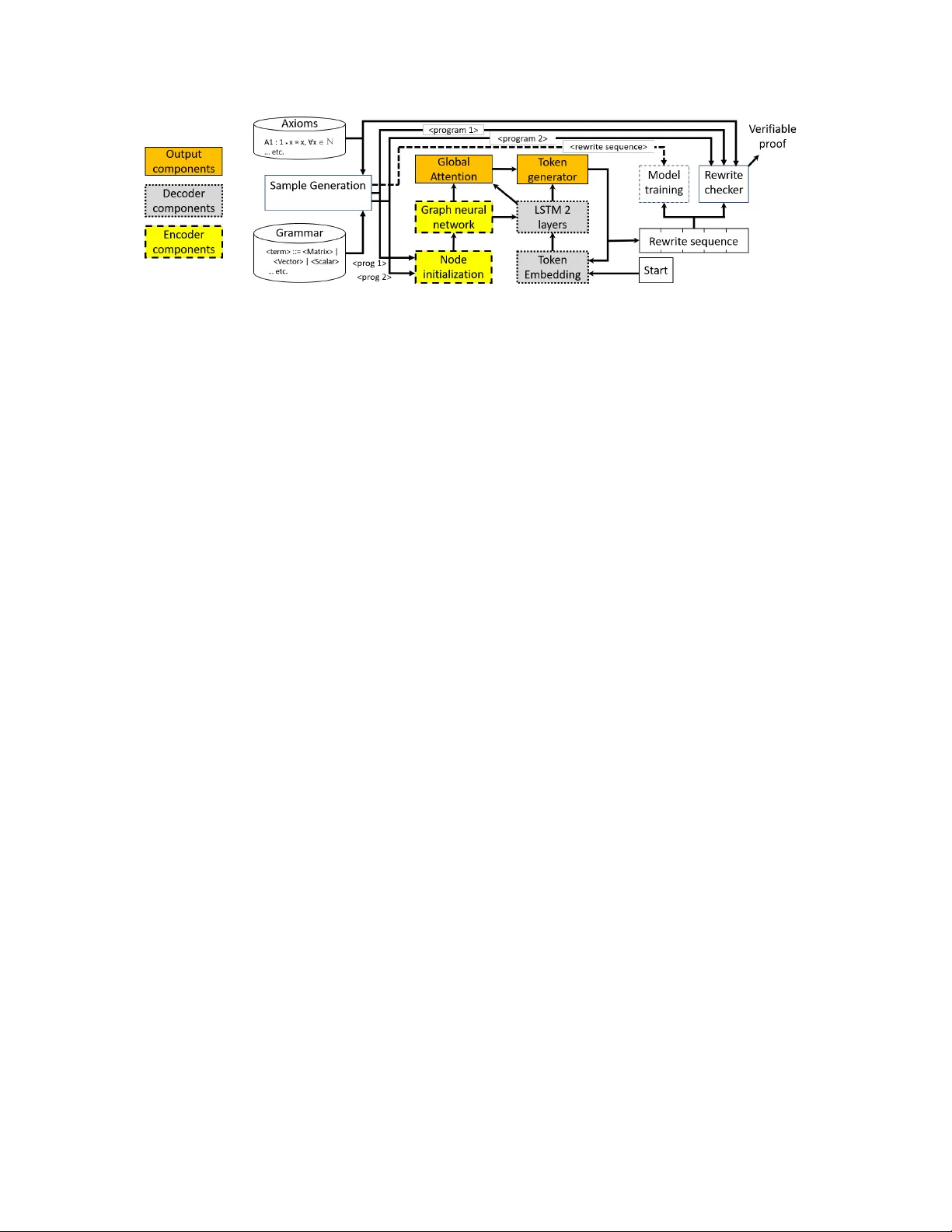

In this work we target the problem of provably computing the equivalence between two programs represented as dataflow graphs. To this end, we formalize the problem of equivalence between two programs as finding a set of semantics-preserving rewrite r…

Authors: Steve Kommrusch, Theo Barollet, Louis-Noël Pouchet