A Formal Approach to Physics-Based Attacks in Cyber-Physical Systems (Extended Version)

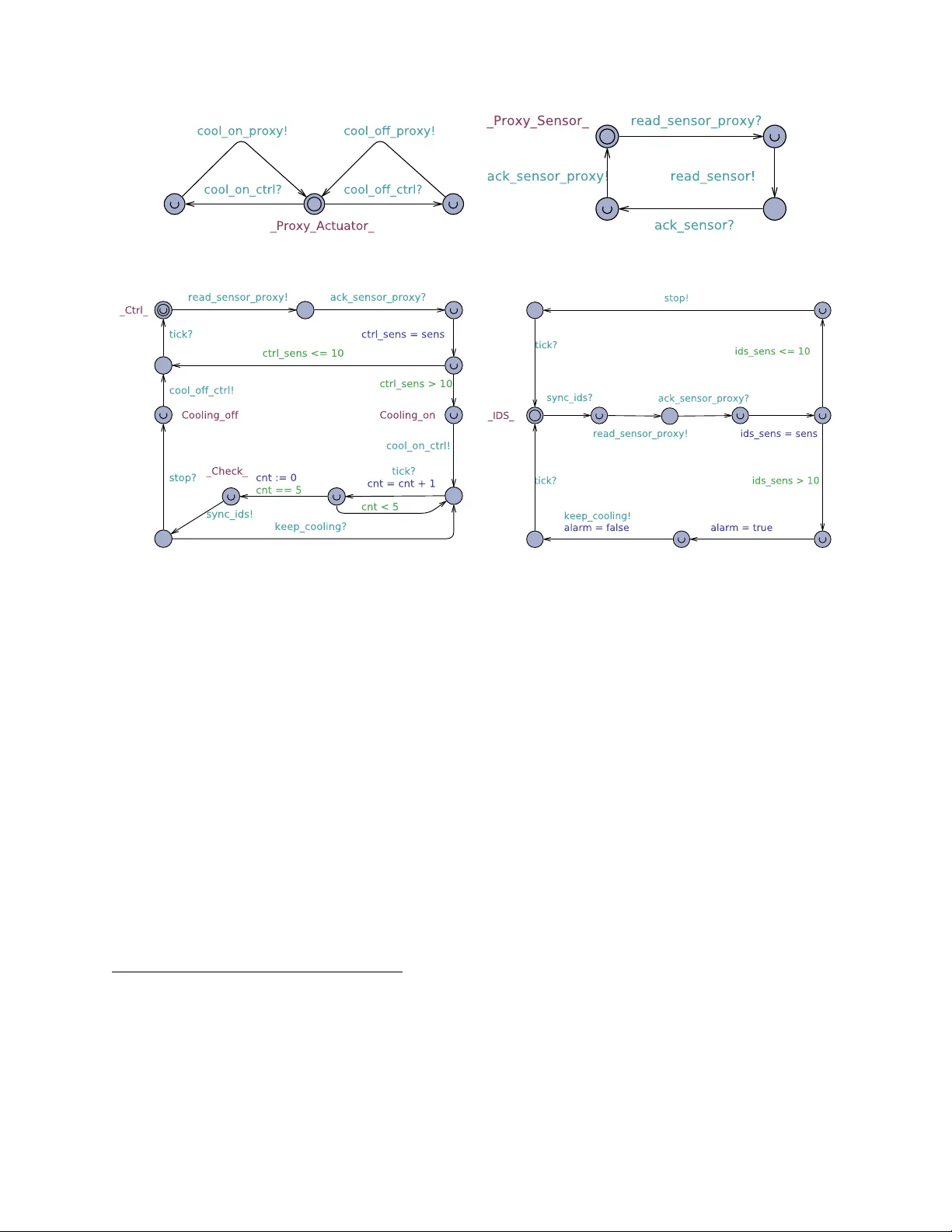

We apply formal methods to lay and streamline the theoretical foundations for reasoning about Cyber-Physical Systems (CPSs) and physics-based attacks, i.e., attacks targeting physical devices. We focus on a formal treatment of both integrity and denial of se…

Authors: Ruggero Lanotte, Massimo Merro, Andrei Munteanu