Game Theoretical Approach to Sequential Hypothesis Test with Byzantine Sensors

In this paper, we consider the problem of sequential binary hypothesis test in adversary environment based on observations from s sensors, with the caveat that a subset of c sensors is compromised by an adversary, whose observations can be manipulate…

Authors: Zishuo Li, Yilin Mo, Fei Hao

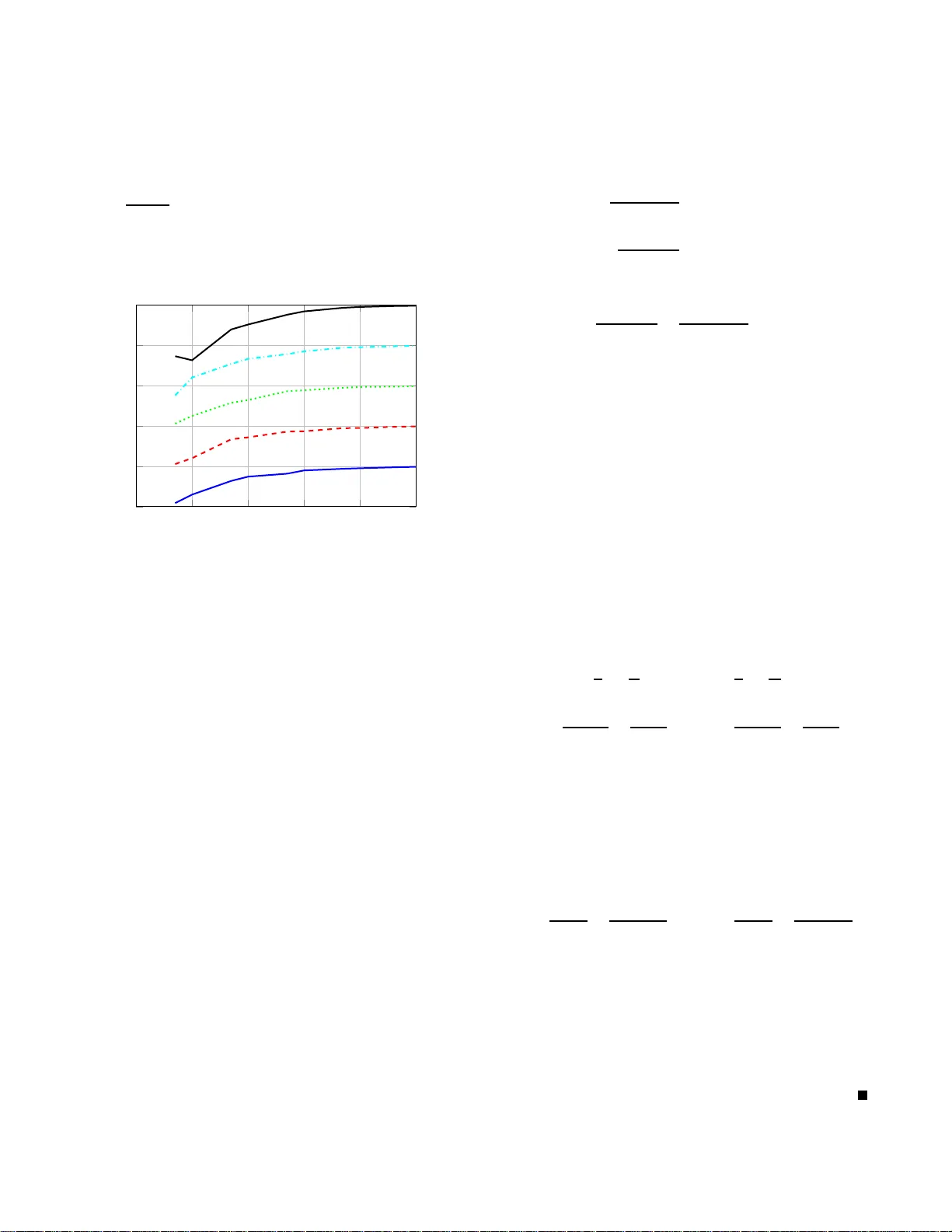

Abstract: In this paper, we consider the problem of sequential binary hypothesis testing in an adversarial environment based on observations from $s$ sensors, with the caveat that a subset of $c$ sensors is compromised by an adversary, whose observations can be manipulated arbitrarily. We choose the asymptotic Average Sample Number (ASN) required to reach a certain level of error probability as the performance metric of the system. The problem is cast as a game between the detector and the adversary, where the detector aims to optimize the system performance while the adversary tries to deteriorate it. We propose a pair consisting of a flip attack strategy and a voting hypothesis testing rule and prove that they form an equilibrium strategy pair for the game. We further investigate the performance of our proposed detection scheme with an unknown number of compromised sensors and corroborate our result with simulation.

I. INTRODUCTION

Recent advancements in communication technology and sensing elements have made networked sensor systems more readily available in control systems, performing the functions of observation, detection and monitoring. However, the reliance on communication and the sparse spatial distribution make sensor systems vulnerable to various cyber attacks such as measurement manipulation, communication blocking, false data injection, etc. Since malicious attacks such as Stuxnet [1] and the BlackEnergy malware [2] may incur substantial damage to the economy, the ecosystem and even public safety, designing resilient networked systems with secure detection, estimation and control algorithms has been recognized by both engineers and scholars as a significant research field.
In this paper we consider the problem of detecting a binary state $\theta$ with $s$ sensors in an adversarial environment. We assume that $c$ out of $s$ sensors are compromised and that their observations can be manipulated arbitrarily by the adversary. We introduce the Byzantine attack setting, where the system manager has no information about the exact set of corrupted sensors but only knows the cardinality of the set. The detection performance is evaluated by its Average Sample Number under a prescribed level of significance (probability of error). We adopt a formulation similar to [3], where the problem is considered as a game between the detector and the attacker, in which the detector attempts to optimize the performance while the adversary intends to deteriorate it. A pair of strategies (an attack strategy from the adversary and a hypothesis testing scheme from the detector) is proposed and proved to be a Nash equilibrium pair for the game. Furthermore, the scenario with an unknown number of compromised sensors is investigated and the choice of parameter in the sequential test algorithm is discussed.

Affiliations: Z. Li and F. Hao are with the School of Automation Science and Electrical Engineering, Beihang University, China. Email: lizishuo1523@buaa.edu.cn; fhao@buaa.edu.cn. Y. Mo is with the Department of Automation and BNRist, Tsinghua University, China. Email: ylmo@tsinghua.edu.cn.

Related Work: The study of sequential analysis (to the best of our knowledge) originated with Abraham Wald et al. [4][5], who proposed the Sequential Probability Ratio Test (SPRT) and proved its optimality in the 1940s. Due to its wide applicability and optimality in hypothesis testing, sequential analysis has been widely applied in sensor network security design [6][7], change detection [8][9], signal anomaly detection [10][11], etc.
As threats to control systems from cyber attacks are increasing rapidly, studies of the secure detection problem have drawn attention from researchers. The research efforts can be classified into two main directions: anomaly diagnosis and resilient algorithm design. In the former, anomaly diagnosis schemes are designed to reveal the existence of an attack and trigger an alarm and/or a recovery mechanism. For example, the problem of revealing the existence of attacks, or the vulnerable part of the system that requires protection, is considered in [12] and [13]. In research on resilient algorithm design, researchers pursue a secure system design whose performance degrades gracefully in the presence of attack. Since attacks may not be eliminated immediately even if we know of their existence, because attackers are concealed in cyberspace, resilient algorithm design is preferred in the sense of safety guarantees. We choose resilient testing algorithm design as our research goal in this paper.

The problem of resilient inference has recently been studied from various perspectives, including hypothesis testing [3][14], change detection [9][8], state estimation [15], etc. We focus on the hypothesis testing problem. A similar formulation of detecting a binary state with multiple sensors under Byzantine attack was recently studied by Ren et al. [16], where the problem of a security-efficiency trade-off is raised. Moreover, the model has been extended to multi-hypothesis testing and heterogeneous sensor scenarios, where a game-theoretic approach is adopted [17] and the sensor selection problem is investigated [18]. We consider the problem of detecting a binary state using sequential analysis, in the sense that the stopping time is determined by the observations, while some other studies use a prescribed number of observed samples, e.g. the one-shot scheme [19][20] and fixed-time analysis [16].
By making decisions adapted to the observations, the Average Sample Number is reduced (as can be seen in Remark 8) because sampling is stopped as soon as the existing observations show enough preference for a certain hypothesis. The efficiency of detection sampling in our paper is evaluated and optimized by integrating the ASN into the performance metric (see the definition of delay in equation (4)). A similar methodology can be seen in the study of change detection (e.g. [8][21]).

The rest of this paper is organized as follows. In Section II, we formulate the problem of binary hypothesis testing and define the admissible attack and binary state detection strategies as well as the performance metric. In Section III, we propose an attack strategy that flips the distribution of observations from the compromised sensors and a resilient detection strategy that votes among all sensors. This pair of strategies is then proved to form an equilibrium pair for the game between attacker and detector. In Section IV, the scenario where the actual number of compromised sensors is unknown is investigated and the corresponding performance is quantified. Simulation results are provided in Section V, and Section VI concludes the paper.

Notations: We denote by $\mathbb{Z}^+$ the set of positive integers and by $\mathbb{R}$ the set of real numbers. We write $x \sim y$ when $x/y \to 1$. The cardinality of a finite set $S$ is denoted by $|S|$. The transpose of a vector or matrix is denoted by the superscript $T$.

II. PROBLEM FORMULATION

A. Binary Hypothesis Testing

Suppose there is a binary state $\theta \in \{0, 1\}$ detected by a group of $s$ sensors. At each discrete time index $k$, the observation from each sensor $i \in S \triangleq \{1, 2, \ldots, s\}$ is collected by a fusion center. Let the row vector $\mathbf{x}_i = [x_i(1), x_i(2), x_i(3), \ldots]$ denote the sequence of observations from the $i$th sensor and the column vector $\mathbf{x}(k) = [x_1(k), x_2(k), x_3(k), \ldots, x_s(k)]^T$ denote the observations at time $k$ from all sensors. We assume that all observations from different sensors at different times are independent and identically distributed for each $\theta$. Similar to the notation in [16], the probability measure generated by $x_i(k)$ is denoted by $\nu$ when the hypothesis is false ($\theta = 0$) and by $\mu$ when the hypothesis is true ($\theta = 1$). In other words, for any Borel-measurable set $B \subseteq \mathbb{R}$, the probability that $x_i(k) \in B$ equals $\nu(B)$ when $\theta = 0$ and $\mu(B)$ when $\theta = 1$. We denote the probability space generated by all measurements $\mathbf{x}(1), \mathbf{x}(2), \ldots$ by $(\Omega, \mathcal{F}, P_\theta)$, where for any $l \geq 1$

$$P_\theta\big(x_{i_1}(k_1) \in B_1, \ldots, x_{i_l}(k_l) \in B_l\big) = \begin{cases} \nu(B_1)\,\nu(B_2) \cdots \nu(B_l) & \text{if } \theta = 0, \\ \mu(B_1)\,\mu(B_2) \cdots \mu(B_l) & \text{if } \theta = 1, \end{cases}$$

when $(i_j, k_j) \neq (i_{j'}, k_{j'})$ for all $j \neq j'$. The expectation taken with respect to $P_\theta$ is denoted by $E_\theta$. We further assume that the probability measures $\nu$ and $\mu$ are absolutely continuous with respect to each other. Therefore, the log-likelihood ratio $L_i(k)$ of $x_i(k)$ is well-defined as

$$L_i(k) \triangleq \log \frac{d\mu}{d\nu}\big(x_i(k)\big), \tag{1}$$

where $d\mu/d\nu$ is the Radon-Nikodym derivative.

B. Byzantine Attack

Let the (manipulated) observation received by the fusion center at time $k$ be

$$\mathbf{x}'(k) = \mathbf{x}(k) + \mathbf{x}^a(k), \tag{2}$$

where $\mathbf{x}^a(k) \in \mathbb{R}^s$ is the bias (deflective) vector injected by the attacker at time $k$. By adding values to the real observations $\mathbf{x}(k)$, the attacker can rewrite them to arbitrary values of its choice. We make the following assumptions on the attacker.
Assumption 1 (Sparse Attack): There exists an index set $C \subseteq S$ with $|C| = c$ such that $\bigcup_{k=1}^{\infty} \mathrm{supp}\{\mathbf{x}^a(k)\} = C$, where $\mathrm{supp}(\mathbf{x}) \triangleq \{i \in S : x_i \neq 0\}$ is the support of the vector $\mathbf{x}$. Furthermore, the system knows the cardinality $c$, but it does not know the set $C$.

Remark 1: It is conventional in the literature (e.g. [8][22][23]) to assume that the attacker possesses limited resources, i.e., the number (or percentage) of compromised sensors is fixed and is known by the system manager. The value of $c$ can also be seen as a design parameter representing the tolerance of sensor corruptions in the system.

We denote by $N \triangleq S \setminus C$ the set of honest sensors (those not affected by the attack). The information the attacker has access to is assumed to be as follows:

Assumption 2 (Attacker Knowledge): (1) The attacker knows the probability measures $\mu$ and $\nu$; (2) the attacker knows the real system state $\theta$; (3) the attacker knows the real observations from all compromised sensors from the beginning to the present time instant.

Remark 2: The only restriction on the attack strategy is that the set of compromised sensors is fixed (from Assumption 1). The attacker has adequate knowledge about the system and can carry out complex attack strategies such as time-varying or probabilistic ones. This assumption is conventional in the literature concerning worst-case attacks (e.g. [24]). In any case, assuming the adversary to be powerful when designing the system helps ensure its security and is in accordance with Kerckhoffs's principle.

An admissible attack strategy is a mapping from the attacker's information set to the bias vector that satisfies Assumption 1. Let the compromised sensor index set be $C = \{i_1, i_2, \cdots, i_c\}$.
Define $\mathbf{X}_C(k)$ as the matrix formed by the true measurements from time 1 to $k$ at the compromised sensors:

$$\mathbf{X}_C(k) \triangleq [\mathbf{x}_C(1), \mathbf{x}_C(2), \cdots, \mathbf{x}_C(k)] \in \mathbb{R}^{c \times k}$$

with $\mathbf{x}_C(k) \triangleq [x_{i_1}(k), x_{i_2}(k), \cdots, x_{i_c}(k)]^T \in \mathbb{R}^{c \times 1}$. Similarly, $\mathbf{X}^a(k) \in \mathbb{R}^{s \times k}$ is defined as the matrix formed by the bias vectors $\mathbf{x}^a(k) \in \mathbb{R}^{s \times 1}$ from time 1 to $k$. The injected bias vector is designed by the attacker based on its information set, i.e.

$$\mathbf{x}^a(k) = g\big(\mathbf{X}_C(k), \mathbf{X}^a(k-1), \theta, k\big), \tag{3}$$

where $g$ is a measurable function of the accessible observations $\mathbf{X}_C(k)$, the attack history $\mathbf{X}^a(k-1)$, the real state $\theta$ and the time $k$ such that $\mathbf{x}^a(k)$ satisfies Assumption 1. Denote the probability space generated by all manipulated observations $\mathbf{x}'(1), \mathbf{x}'(2), \ldots$ by $(\Omega, \mathcal{F}, P^g_\theta)$, where $\theta$ is the real state. The corresponding expectation is denoted by $E^g_\theta$.

C. Performance Metric

The detector at time $k$ is defined as a mapping from the manipulated observation matrix to the set of decisions:

$$f_k : \mathbf{X}'(k) \to \{\text{continue}, 0, 1\},$$

where "continue" denotes taking the next observation at time $k+1$ because the existing knowledge is not enough to make a decision. Decisions 0 and 1 denote stopping the observations and choosing hypothesis $H_0$ and $H_1$ respectively. The system's strategy $f \triangleq (f_1, f_2, \cdots)$ is defined as an infinite sequence of detectors from time 1 to $\infty$.

Based on the definition of detection strategies, the stopping time $T$, representing the time at which the test terminates, is an $\{\mathcal{F}'_k\}$-stopping time, where $\mathcal{F}'_k$ is the $\sigma$-field of all the (manipulated) observations from time 1 to $k$: $\mathcal{F}'_k = \sigma\{\mathbf{X}'(k)\}$. Define the worst-case Average Sample Number (detection delay) under attack $g$ as

$$D(T) \triangleq \max_{\theta = 0, 1} E^g_\theta[T]. \tag{4}$$

Denote the probabilities of Type-I and Type-II error of the binary hypothesis testing task by $\alpha$ and $\beta$ respectively, i.e. $\alpha \triangleq P_0[f_T = 1]$ and $\beta \triangleq P_1[f_T = 0]$. (In statistical hypothesis testing, a type-I error is the rejection of a true null hypothesis $H_0$, while a type-II error is the failure to reject a false null hypothesis.) As a detector needs to make decisions based on as few samples as possible under error probability constraints that vary between situations, we consider the asymptotic performance as the error probability tends to zero:

$$\gamma(f, g) \triangleq \lim_{\alpha = \beta \to 0^+} \frac{\log(1/\alpha)}{D(T)}. \tag{5}$$

Remark 3: By definition, $\gamma(f, g) \geq 0$ for any admissible $f$ and $g$ because $\alpha \leq 1$ and $D(T) > 0$. The performance metric combines the error probabilities $\alpha, \beta$ with the detection delay $D(T)$, all of which we want to be small. A larger $\gamma$ therefore indicates better detection performance.

Remark 4: The performance $\gamma$ is determined by both the detection rule $f$ and the attack strategy $g$, so it is denoted by $\gamma(f, g)$. The system manager intends to design a resilient detector $f$ to maximize $\gamma$, while the attacker seeks a malicious attack $g$ to minimize $\gamma$.

In this paper, we intend to propose a pair of strategies $(f^*, g^*)$ such that for any strategies $f$ and $g$, the following inequality holds:

$$\gamma(f, g^*) \leq \gamma(f^*, g^*) \leq \gamma(f^*, g). \tag{6}$$

As a result, the pair of strategies $(f^*, g^*)$ is a Nash equilibrium (which is not necessarily unique). In other words, given that one player's strategy is $f^*$ or $g^*$, the other player does not have a strictly better strategy. We present the strategy pair in the next section.

III. EQUILIBRIUM STRATEGY PAIR

In this section we present an attack strategy and a detection scheme and prove that they form a Nash equilibrium pair.

A. Preliminary Results

Before we go on, we first present some basic results on hypothesis testing without attack, which will be helpful for the later discussion. Denote the Kullback-Leibler (K-L) divergences between the two distributions we are trying to distinguish (i.e. $\mu$ and $\nu$) by

$$I_1 \triangleq \int_{x \in \mathbb{R}} \log \frac{d\mu(x)}{d\nu(x)} \, d\mu(x), \qquad I_0 \triangleq -\int_{x \in \mathbb{R}} \log \frac{d\mu(x)}{d\nu(x)} \, d\nu(x).$$

To avoid degenerate problems, we adopt the following assumption:

Assumption 3: The K-L divergences are well-defined, i.e., $0 < I_0, I_1 < \infty$.

We introduce a sequential test strategy for multiple sensors based on the Sequential Probability Ratio Test proposed by Wald [25]. We denote the cumulative log-likelihood ratio of sensor $i$ at time $n$ by $S_i(n)$ and the one summed over a set $M$ by $S_M(n)$:

$$S_i(n) \triangleq \sum_{k=1}^{n} L_i(k), \qquad S_M(n) \triangleq \sum_{i \in M} S_i(n), \tag{7}$$

where $M \subseteq S$. The decision is taken according to whether a prescribed threshold is crossed, i.e.

$$f_k = \begin{cases} 0, & S_M(k) \leq -a \\ \text{continue}, & -a < S_M(k) < b \\ 1, & S_M(k) \geq b, \end{cases} \tag{8}$$

where $a, b > 0$ are chosen to regulate the error probabilities $\alpha, \beta$. Denote the detection rule based on the summed log-likelihood ratio from the sensors in $M$ by $f_M$. The following lemma quantifies the performance of this test (called sum-SPRT) in the absence of attack. The proof is provided in the Appendix (Section VII-A).

Lemma 1: Define $I \triangleq \min\{I_0, I_1\}$. For every admissible test rule $f$ based on sensor information in $M$,

$$\gamma(f, g = \mathbf{0}) \leq \gamma(f_M, g = \mathbf{0}) = |M| \cdot I, \tag{9}$$

where $g = \mathbf{0}$ means the attacker is absent.

Remark 5: The performance of $f_M$ is proportional to the number of sensors $|M|$ and the constant $I$ defined by the K-L divergences. The constant $I$, which represents the "distance" between the two distributions, can be treated as a basic unit of performance.

Now we move on to consider the detection problem under attack.
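As a rough illustration of the sum-SPRT rule (8) in the attack-free setting, the following Python sketch accumulates $S_M(k)$ and stops at the first threshold crossing. It is only a sketch under stated assumptions: the Gaussian pair $\mathcal{N}(-1,1)$ versus $\mathcal{N}(1,1)$ is borrowed from the simulation section, and the names `gaussian_llr` and `sum_sprt` are hypothetical, not taken from the paper.

```python
from typing import Callable, Iterable, Optional, Sequence, Tuple


def gaussian_llr(x: float, mean0: float = -1.0, mean1: float = 1.0,
                 sigma: float = 1.0) -> float:
    """log(dmu/dnu)(x) for mu = N(mean1, sigma^2), nu = N(mean0, sigma^2).

    The Gaussian model is an assumption made for illustration only.
    """
    return ((x - mean0) ** 2 - (x - mean1) ** 2) / (2.0 * sigma ** 2)


def sum_sprt(
    observations: Iterable[Sequence[float]],
    sensors: Sequence[int],
    a: float,
    b: float,
    llr: Callable[[float], float] = gaussian_llr,
) -> Tuple[Optional[int], int]:
    """Apply rule (8) to S_M(k) and return (decision, samples used).

    decision is 0 or 1, or None if the data ends before a threshold is crossed.
    """
    s_m = 0.0  # running value of S_M(k)
    k = 0
    for k, x_k in enumerate(observations, start=1):
        s_m += sum(llr(x_k[i]) for i in sensors)
        if s_m <= -a:
            return 0, k
        if s_m >= b:
            return 1, k
    return None, k


# Two sensors that both report x = 1.0 add 2 + 2 = 4 to S_M at every step,
# so the threshold b = 6 is crossed at k = 2.
print(sum_sprt([[1.0, 1.0]] * 10, sensors=[0, 1], a=6.0, b=6.0))  # (1, 2)
```

With $|M|$ sensors the statistic drifts $|M|$ times faster than with one, which is the intuition behind the factor $|M|$ in Lemma 1.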
We assume $s > 2c$ in the rest of the paper, unless stated otherwise, to exclude trivial problems.

B. Attack Strategy

In this subsection we present an attack strategy in which the attacker flips the distribution of the compromised sensors' observations under the two states to confuse the detector. We denote it by $g^*$ (named the flip attack) and define it as follows. Denote the index set of the first $c$ sensors by $O_1 \triangleq \{1, 2, \ldots, c\}$ and the set of the last $c$ sensors by $O_2 \triangleq \{s-c+1, s-c+2, \ldots, s\}$. If $\theta = 0$, the attacker generates random observations $\tilde{x}_i(k)$ at time $k$ for every sensor $i \in O_1$ according to the opposite distribution $\mu$, i.e. for each Borel set $B$

$$P[\tilde{x}_i(k) \in B] = \mu(B), \quad \theta = 0, \ i \in O_1. \tag{10}$$

It then designs the bias data so that the final observations $x_i(k) + x^a_i(k)$ of the sensors in $O_1$ are the same as $\tilde{x}_i(k)$:

$$x^a_i(k) = \tilde{x}_i(k) - x_i(k), \quad \theta = 0, \ i \in O_1. \tag{11}$$

If $\theta = 1$, the observations in $O_2$ are manipulated in a similar way:

$$P[\tilde{x}_i(k) \in B] = \nu(B), \quad \theta = 1, \ i \in O_2, \tag{12}$$

$$x^a_i(k) = \tilde{x}_i(k) - x_i(k), \quad \theta = 1, \ i \in O_2. \tag{13}$$

For sensors not mentioned above, the bias value is $x^a_i(k) = 0$. Under this attack, the following performance bound holds.

Theorem 1: For any admissible detection strategy $f$ we have

$$\gamma(f, g^*) \leq (s - 2c) I. \tag{14}$$

Remark 6: The coefficient $(s - 2c)$ indicates that the detector has positive performance when fewer than half of the sensors are compromised. It also implies that every additional compromised sensor incurs a decrease of two units of performance. The result follows Theorem 3 (2) in [16].

Proof: Under attack $g^*$, for either $\theta = 0$ or $\theta = 1$, the sensors in $O_1$ follow distribution $\mu$ and the sensors in $O_2$ follow distribution $\nu$. In other words, only the sensors in $S \setminus (O_1 \cup O_2)$ have different distributions under different $\theta$. Since we assume $s > 2c$, $S \setminus (O_1 \cup O_2) \neq \emptyset$.
If we define $M = S \setminus (O_1 \cup O_2)$, then according to Lemma 1,

$$\gamma(f, g^*) \leq \gamma(f_M, g = \mathbf{0}) = |S \setminus (O_1 \cup O_2)| \, I = (s - 2c) I.$$

Thus, inequality (14) is obtained.

C. Detection Strategy

In this section we present a detection strategy that forms a Nash equilibrium pair with the flip attack $g^*$. Before we present the detection rule, we first define some notation. First we define the stopping times of the single-threshold tests for each sensor $i$ in (15) and (16). As in the basic SPRT, the two thresholds are denoted by $-a < 0 < b$:

$$\tau^+_i(b) \triangleq \inf\{k \in \mathbb{Z}^+ : S_i(k) \geq b\}, \tag{15}$$

$$\tau^-_i(a) \triangleq \inf\{k \in \mathbb{Z}^+ : S_i(k) \leq -a\}. \tag{16}$$

Then sort the stopping times for the same threshold in ascending order and denote them by $\tau^-_{(i)}(a)$, $\tau^+_{(i)}(b)$:

$$\tau^-_{(1)}(a) \leq \tau^-_{(2)}(a) \leq \cdots \leq \tau^-_{(s)}(a), \qquad \tau^+_{(1)}(b) \leq \tau^+_{(2)}(b) \leq \cdots \leq \tau^+_{(s)}(b).$$

Define $r$ as the parameter of the decision rule, with $s/2 < r \leq s$. The voting rule $f^{(r)}$ takes the corresponding hypothesis the first time there have been $r$ crossings of the same threshold. Formally, for each time $k$,

$$f^{(r)}_k = \begin{cases} \text{continue}, & k < \min\{\tau^-_{(r)}(a), \tau^+_{(r)}(b)\} \\ 0, & k = \tau^-_{(r)}(a) < \tau^+_{(r)}(b) \\ 1, & k = \tau^+_{(r)}(b) < \tau^-_{(r)}(a) \\ 0 \text{ or } 1, & k = \tau^+_{(r)}(b) = \tau^-_{(r)}(a). \end{cases} \tag{17}$$

The decision "0 or 1" means to stop sampling and choose $H_0$ or $H_1$ with equal probability 0.5. Denote the detection strategy defined above by $f^{(r)} \triangleq \{f^{(r)}_1, f^{(r)}_2, \ldots\}$. We denote the stopping time of the detection rule $f^{(r)}$ by $T^{(r)}$.
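The voting rule (17) can be sketched in a few lines of Python. This is a minimal illustration rather than the authors' implementation: the function name `voting_rule`, the latched crossing flags and the example data are all assumptions introduced here, and the log-likelihood ratio $L = 2x$ used in the example corresponds to the Gaussian pair $\mathcal{N}(1,1)$ versus $\mathcal{N}(-1,1)$ from the simulation section.

```python
import random
from typing import Callable, Optional, Sequence, Tuple


def voting_rule(
    observations: Sequence[Sequence[float]],
    r: int,
    a: float,
    b: float,
    llr: Callable[[float], float],
    rng: Optional[random.Random] = None,
) -> Tuple[Optional[int], int]:
    """Sketch of rule (17): stop once r sensors have crossed the same threshold.

    Returns (decision, stopping time); decision is None if the data runs out.
    """
    if not observations:
        return None, 0
    rng = rng or random.Random()
    s = len(observations[0])
    cum = [0.0] * s            # S_i(k) for every sensor
    hit_low = [False] * s      # True once tau^-_i(a) <= k
    hit_high = [False] * s     # True once tau^+_i(b) <= k
    k = 0
    for k, x_k in enumerate(observations, start=1):
        for i in range(s):
            cum[i] += llr(x_k[i])
            hit_low[i] = hit_low[i] or cum[i] <= -a
            hit_high[i] = hit_high[i] or cum[i] >= b
        low = sum(hit_low) >= r     # tau^-_(r)(a) <= k
        high = sum(hit_high) >= r   # tau^+_(r)(b) <= k
        if low and high:            # tie: pick H0 or H1 with probability 0.5
            return rng.choice([0, 1]), k
        if low:
            return 0, k
        if high:
            return 1, k
    return None, k


# Hypothetical data: s = 5 sensors, sensor 0 always reports against the others.
llr = lambda x: 2.0 * x
data = [[-1.0, 1.0, 1.0, 1.0, 1.0]] * 10
print(voting_rule(data, r=4, a=5.0, b=5.0, llr=llr))  # (1, 3)
print(voting_rule(data, r=5, a=5.0, b=5.0, llr=llr))  # (None, 10)
```

In this toy run, a single dissenting sensor blocks the unanimous rule $r = s$ indefinitely, while $r = s - 1$ still terminates, which hints at why the choice of $r$ matters once sensors can be compromised.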
Before we show the performance of the detection strategy, we provide some preliminary results on the stopping times and error probabilities in the absence of attack, whose proof is provided in the Appendix (Section VII-B).

Theorem 2:

(1)
$$\lim_{a = b \to \infty} E_0 \left| \frac{\tau^-_{(r)}(a)}{a} - \frac{1}{I_0} \right| = 0, \qquad \lim_{a = b \to \infty} E_1 \left| \frac{\tau^+_{(r)}(b)}{b} - \frac{1}{I_1} \right| = 0. \tag{18}$$

(2)
$$\lim_{a = b \to \infty} \frac{E_0[T^{(r)}]}{a} \leq \frac{1}{I_0}, \qquad \lim_{a = b \to \infty} \frac{E_1[T^{(r)}]}{b} \leq \frac{1}{I_1}. \tag{19}$$

(3)
$$\lim_{a = b \to \infty} \frac{1}{b} \log P_0\big[\tau^+_{(r)}(b) \leq \tau^-_{(r)}(a)\big] \leq -r, \tag{20}$$

$$\lim_{a = b \to \infty} \frac{1}{a} \log P_1\big[\tau^-_{(r)}(a) \leq \tau^+_{(r)}(b)\big] \leq -r. \tag{21}$$

Based on Theorem 2 we are ready to show the performance of our detection rule with a carefully designed $r$.

Theorem 3: Fix $r = s - c$ and denote $f^* \triangleq f^{(s-c)}$. For any admissible attack strategy $g$, we have

$$\gamma(f^*, g) \geq (s - 2c) I.$$

Proof: We show the following inequalities for an arbitrary attack $g$ (notice that $P^g_\theta$ and $E^g_\theta$ denote probability and expectation under attack $g$):

$$E^g_1[\tau^+_{(r)}(b)] \leq E_1[\tau^+_{(r+c)}(b)], \tag{22}$$

$$E^g_0[\tau^-_{(r)}(a)] \leq E_0[\tau^-_{(r+c)}(a)], \tag{23}$$

$$P^g_1[\tau^-_{(r)}(a) \leq \tau^+_{(r)}(b)] \leq P_1[\tau^-_{(r-c)}(a) \leq \tau^+_{(r-c)}(b)], \tag{24}$$

$$P^g_0[\tau^-_{(r)}(a) \geq \tau^+_{(r)}(b)] \leq P_0[\tau^-_{(r-c)}(a) \geq \tau^+_{(r-c)}(b)]. \tag{25}$$

For readability, the proof of these four inequalities is given in the Appendix (Section VII-C). Their left-hand sides are expected stopping times and error probabilities under attack, while the right-hand sides are the corresponding quantities without attack. They are obtained by considering the cumulative log-likelihood ratios and threshold-crossing times in the worst case over all admissible attacks. We are ready to quantify the performance under attack with the help of inequalities (22) to (25).
On one hand, the detection delay can be upper bounded based on (18) and (22):

$$E^g_1[T^{(r)}] \leq E^g_1[\tau^+_{(r)}(b)] \leq E_1[\tau^+_{(r+c)}(b)] \sim \frac{b}{I_1},$$

in which the first inequality comes from the definition of the voting rule (17). On the other hand, the error probability can be bounded based on (21) and (24):

$$\beta \leq P^g_1[\tau^-_{(r)}(a) \leq \tau^+_{(r)}(b)] \leq P_1[\tau^-_{(r-c)}(a) \leq \tau^+_{(r-c)}(b)] \leq C e^{-(r-c)a},$$

where $C$ is a constant. These two inequalities imply

$$\lim_{\alpha = \beta \to 0^+} \frac{\log(1/\beta)}{E^g_1[T^{(r)}]} = \lim_{a = b \to \infty} \frac{\log(1/\beta)}{E^g_1[T^{(r)}]} \geq \lim_{a = b \to \infty} \frac{(r - c) \cdot a}{b / I_1} = (r - c) I_1.$$

When $\theta = 0$, similar results can be derived from (23) and (25). Thus, replacing $r$ with $s - c$, the final result is obtained:

$$\gamma(f^*, g) \geq (s - c - c) \min\{I_0, I_1\} = (s - 2c) I.$$

The proof is completed.

Combining Theorems 1 and 3, we are ready to show the Nash equilibrium pair of strategies.

Theorem 4: The detection strategy $f^*$ defined in (17) with $r = s - c$ and the attack strategy $g^*$ defined in (10) to (13) form a Nash equilibrium, i.e. for any admissible detection rule $f$ and attack $g$,

$$\gamma(f, g^*) \leq \gamma(f^*, g^*) = (s - 2c) I \leq \gamma(f^*, g).$$

Proof: Setting the detector in Theorem 1 to $f^*$ and the attack in Theorem 3 to $g^*$, we obtain $\gamma(f^*, g^*) \geq (s - 2c) I$ and $\gamma(f^*, g^*) \leq (s - 2c) I$ at the same time. Substituting $\gamma(f^*, g^*)$ for $(s - 2c) I$ in Theorems 1 and 3 leads to the result.

Remark 7: The payoffs for the players of the game are $\gamma(f, g)$ (for the detector $f$) and $-\gamma(f, g)$ (for the attacker $g$). Notice that, since the strategy set for this game is non-compact, a Nash equilibrium does not necessarily exist. Our result thus proves the existence of a Nash equilibrium in addition to providing a specific pair of strategies.
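For concreteness, the flip attack $g^*$ of (10) to (13) and the equilibrium value of Theorem 4 can be sketched as follows. This is an illustrative sketch only: the Gaussian model $\mu = \mathcal{N}(1,1)$, $\nu = \mathcal{N}(-1,1)$ is the one used later in the simulation section, indices are 0-based (so $O_1$ is the first $c$ positions), and the function names `flip_attack_bias` and `equilibrium_rate` as well as the example numbers are hypothetical.

```python
import random
from typing import List, Sequence


def flip_attack_bias(
    x: Sequence[float],
    theta: int,
    c: int,
    rng: random.Random,
    mean0: float = -1.0,
    mean1: float = 1.0,
    sigma: float = 1.0,
) -> List[float]:
    """Bias vector x^a(k) of the flip attack for one time step (assumed Gaussian model)."""
    s = len(x)
    if theta == 0:
        targets, fake_mean = range(c), mean1          # O_1: fake samples drawn from mu
    else:
        targets, fake_mean = range(s - c, s), mean0   # O_2: fake samples drawn from nu
    bias = [0.0] * s                                  # untouched sensors get zero bias
    for i in targets:
        fake = rng.gauss(fake_mean, sigma)            # x~_i(k) in (10) / (12)
        bias[i] = fake - x[i]                         # (11) / (13)
    return bias


def equilibrium_rate(s: int, c: int, kl: float) -> float:
    """Equilibrium performance (s - 2c) I from Theorem 4, valid for s > 2c."""
    if s <= 2 * c:
        raise ValueError("Theorem 4 assumes s > 2c")
    return (s - 2 * c) * kl


rng = random.Random(0)
x_true = [-1.0] * 6                                   # hypothetical true readings, theta = 0
bias = flip_attack_bias(x_true, theta=0, c=2, rng=rng)
x_seen = [xi + bi for xi, bi in zip(x_true, bias)]    # x'(k) = x(k) + x^a(k), as in (2)
print(bias[2:])                                       # [0.0, 0.0, 0.0, 0.0]
print(equilibrium_rate(s=10, c=2, kl=2.0))            # 12.0
```

Only $c$ entries of the bias vector are ever non-zero for a given $\theta$, while the attack uses $O_1$ or $O_2$ depending on the state, which is why $2c$ sensors in total become uninformative to the detector.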
Remark 8: Since the definition of $\gamma(f, g)$ can also be used to evaluate non-sequential detection schemes, we are able to compare their performance with ours. We define

$$\tilde{I} \triangleq -\log \inf_{w \in \mathbb{R}} \int_{x \in \mathbb{R}} \left( \frac{d\mu(x)}{d\nu(x)} \right)^{w} d\nu(x).$$

It has been shown in [16, Theorem 2] that $0 < \tilde{I} < I$. The detector performance defined in [16] is the same as ours for fixed-sample detection schemes. However, the value of the detector performance in that paper is $(s - 2c)\tilde{I}$, which is smaller than ours. In this sense, our scheme is more sample-efficient because sampling is terminated as soon as there is enough statistical information indicating the real hypothesis.

Remark 9: The single-time-step computational complexity of our detection scheme is $O(s)$, as computing $S_i(k)$ and voting among sensors both have complexity $O(s)$. Therefore, the computational complexity is lower than that in [16], where the sorting algorithm causes a computational complexity of $O(s \log s)$. Moreover, the voting detection algorithm is more easily applied to distributed computing because the sensors do not need to send the actual observations to the control center but only need to report whether a threshold is crossed. A system based on our detection algorithm has less information transmission pressure and is more likely to achieve better efficiency and resilience.

IV. EXTENSIONS

In the previous section, we assumed that the number of compromised sensors $c$ is known to the system manager. However, in practice the real value may be unknown and what we have is an estimate of its upper bound. It can be seen as a design parameter denoting how many sensor corruptions the system can tolerate. In this section, we study the condition where we have an upper bound $\bar{c}$ and the actual number of compromised sensors $c$ can take values in $\{0, 1, 2, \ldots, \bar{c}\}$.
We denote the voting detection rule with $r = s - \bar{c}$ by $\tilde{f} \triangleq f^{(s - \bar{c})}$. The following theorem gives a lower bound on its performance.

Theorem 5: Given the detector $\tilde{f}$, assume $c$ is the actual number of compromised sensors and $c \leq \bar{c} < s/2$. Under any admissible attack $g$, we have

$$\gamma(\tilde{f}, g) \geq (s - \bar{c} - c) I.$$

Proof: In this setting, Theorem 3 still holds and the only difference is the choice of $r$. Thus, the result is obtained by substituting $r = s - \bar{c}$ for $r = s - c$.

Remark 10: The performance loss is proportional to the sum of the estimated number of corruptions $\bar{c}$ and the actual number of corruptions $c$. If $c$ is fixed, an excessive $\bar{c} > c$ causes unnecessary performance loss.

The result in Theorem 5 implies that the performance lower bound is $(s - \bar{c}) I$ when all sensors are benign. We state it formally in the following corollary.

Corollary 1: When there is no attack, i.e. $c = 0$, the performance is lower bounded:

$$\gamma(\tilde{f}, g = \mathbf{0}) \geq (s - \bar{c}) I.$$

Remark 11: $\gamma(\tilde{f}, g = \mathbf{0})$ can be seen as the detection efficiency of the voting rule in normal operation (the attacker is absent). Increasing $\bar{c}$ sacrifices detection performance in the absence of attack while gaining better system resilience. Thus, sufficient knowledge about the attacker (e.g. how many sensors will be compromised) is helpful for the system's efficiency-security trade-off. Since the equilibrium strategy pair is not unique, searching for a detection rule that achieves maximum performance both when the attack is present and when it is absent is meaningful and could be our future work.

V. SIMULATION

In this section, we provide some numerical examples to verify the results established in the previous sections. We assume the observations of the sensors are i.i.d. with distribution $\mathcal{N}(-1, 1)$ (see footnote 2) when $\theta = 0$ and $\mathcal{N}(1, 1)$ when $\theta = 1$. In this case $I = I_0 = I_1 = 2$.
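The value $I = I_0 = I_1 = 2$ can be checked numerically. For unit-variance Gaussians with means $-1$ and $1$, the log-likelihood ratio reduces to $L(x) = 2x$, so $I_1 = E_1[L] = 2$ and $I_0 = -E_0[L] = 2$. The short Python sketch below confirms this with a plain trapezoidal rule; the helper names and the integration range $[-12, 12]$ are choices made here for illustration, not part of the paper.

```python
import math


def normal_pdf(x: float, mean: float, sigma: float = 1.0) -> float:
    """Density of N(mean, sigma^2) at x."""
    z = (x - mean) / sigma
    return math.exp(-0.5 * z * z) / (sigma * math.sqrt(2.0 * math.pi))


def llr(x: float, mean0: float = -1.0, mean1: float = 1.0, sigma: float = 1.0) -> float:
    """log(dmu/dnu)(x) with mu = N(mean1, sigma^2) and nu = N(mean0, sigma^2)."""
    return ((x - mean0) ** 2 - (x - mean1) ** 2) / (2.0 * sigma ** 2)


def expected_llr(mean_true: float, lo: float = -12.0, hi: float = 12.0,
                 n: int = 24000) -> float:
    """Trapezoidal approximation of E[L(x)] for x ~ N(mean_true, 1)."""
    h = (hi - lo) / n
    total = 0.0
    for j in range(n + 1):
        x = lo + j * h
        weight = 0.5 if j in (0, n) else 1.0
        total += weight * llr(x) * normal_pdf(x, mean_true)
    return total * h


I1 = expected_llr(1.0)     # I_1: expectation of L under mu (theta = 1)
I0 = -expected_llr(-1.0)   # I_0: minus the expectation of L under nu (theta = 0)
print(round(I0, 6), round(I1, 6))  # 2.0 2.0
```

The same result follows from the closed form $(m_1 - m_0)^2 / (2\sigma^2) = 4/2$ for the K-L divergence between two Gaussians with equal variance.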
We set $s = 10$ and let $c$ vary from 0 to 4. In Fig. 1, the detection and attack strategies are $f^*$ and $g^*$ respectively. We calculate the detection delay $D(T)$ and the error probability $\alpha$ with the threshold $a = b$ varying from $5 \times 10^0$ to $1 \times 10^5$ for each fixed $c$. The result $\log(1/\alpha) / D(T)$ is normalized by $I$ and should tend to $s - 2c$ according to Theorem 4. To simulate the error probability with higher accuracy, we adopt the importance sampling approach [26].

[Figure 1 plot. Horizontal axis: threshold $a, b$ ($a = b$), logarithmic scale from $10^0$ to $10^5$. Vertical axis: $\gamma(f^*, g^*)/I$, from 0 to 10.]

Fig. 1. Normalized performance of the equilibrium strategy pair $(f^*, g^*)$ when $s = 10$ for $c = 0$ (black solid line), $c = 1$ (cyan dash-dot line), $c = 2$ (green dotted line), $c = 3$ (red dashed line) and $c = 4$ (blue solid line).

VI. CONCLUSION

In this paper, we formulate the problem of binary sequential detection in an adversarial environment as a game between the detector and the attacker. Detection performance is defined asymptotically by both the error probability and the Average Sample Number as the error probability tends to zero, and this value is integrated in the game as the payoff, which the detector intends to maximize while the attacker intends to minimize. We propose a pair consisting of a detection rule and an attack strategy and prove them to be an equilibrium pair of the game. Furthermore, the performance is quantified both when the number of compromised sensors is unknown and when all sensors are benign. The choice of the detection rule parameter $r$ is discussed and the result is corroborated by numerical simulation. Future work includes the trade-off between the system's security and efficiency as well as a discussion of the achievability of optimal security and efficiency.

VII. APPENDIX

A. Proof of Lemma 1

First we prove the inequality in (9) by contradiction with the optimality of the SPRT in [5]. Denote $|M| I$ by $I_M$.
Assum e th ere exists a detectio n rule f ′ that satisfy γ ( f ′ , g = 0 0 0 ) = I ′ > I M . W e consider the co ndition 2 N ( p , q 2 ) represent Normal distributio n with mean p and varianc e q 2 . where pr o bability of type-I er r or equa ls to th at of type-I I error . Those err o r p robabilities is den oted as p M , α ( f M ) = β ( f M ) and p ′ , α ( f ′ ) = β ( f ′ ) . The stopping time is deno ted as T M and T ′ respectively . By definition ∀ ε > 0 th ere exists P > 0 such that ∀ 0 < p M , p ′ < P log ( 1 / p M ) D ( T M ) − I M < ε , log ( 1 / p ′ ) D ( T ′ ) − I ′ < ε . Let p ′ = p M and cho ose ε < ( I ′ − I M ) / 2 th en on e ob tains D ( T ′ ) < log ( 1 / p ′ ) I ′ − ε < log ( 1 / p M ) I M + ε < D ( T M ) , which im plies min θ { E θ ( T ′ ) } < min θ { E θ ( T M ) } . Tha t con- tradicts to { E θ ( T ′ ) ≥ E θ ( T M ) } , θ = 0 , 1 (com ing from opti- mality o f W ald’ s T est). T hus co mpletes th e pro of. Remark 1 2: The optimality of SPR T is o riginally proved for sin g le sen sor scenar io. As the obser vation fro m sensor s are i.i.d. distributed, they could be form ulated as a s dimen- sion observation vector and each hyp othesis rep r esents a jo int distribution. For example, when θ = 0, ν ( X 1 = x 1 , . . . , X s = x s ) , ∏ s i = 1 ν ( X i = x i ) . And the o ptimality (minimal A verage Sample Nu mber with same er ror pr o bability) still hold s. In the f ollowing we show the asym ptotic p erform a n ce of sum-SPR T . Som e of useful asymptotic proper ties of α , β and E θ [ T M ] has been pr ovided by Berk [27] an d we show some equiv alent statements in the following Lemma. Lemma 2 : Assume a , b > 0, M 6 = / 0 and we ha ve the following results hold with p robability one : lim α = β → 0 + 1 a log 1 β = 1 , lim α = β → 0 + 1 b log 1 α = 1 , lim α = β → 0 + E 0 [ T M ] a = 1 | M | I 0 , li m α = β → 0 + E 1 [ T M ] b = 1 | M | I 1 . Pr oof: Tho se r esults o f single sensor (i.e. 
$|M| = 1$) follow straightforwardly from [27], Theorems 2.1 and 2.2. We focus on the generalization to the multi-sensor scenario. Firstly, the asymptotic characteristics of the error probabilities do not depend on the number of sensors, for the same reason as in Remark 12, and therefore the first two equalities are true. We concentrate on the Average Sample Number. First we have the following from [27]. For every $i \in S$,

$$\lim_{\alpha = \beta \to 0^+} \frac{E_0[T_i]}{a} = \frac{1}{E_0[L_i(k)]}, \qquad \lim_{\alpha = \beta \to 0^+} \frac{E_1[T_i]}{b} = \frac{1}{E_1[L_i(k)]},$$

where $T_i$ is the stopping time $T_M$ when $M$ is the singleton set $\{i\}$. According to the definition in (7), the summed log-likelihood ratio over the set $M$ at time $k$ is $\sum_{i \in M} L_i(k)$. Therefore,

$$E_0\left[ \sum_{i \in M} L_i(k) \right] = \sum_{i \in M} E_0[L_i(k)] = |M| I_0.$$

A similar result can be obtained when $\theta = 1$. The proof of Lemma 2 is complete.

We are ready to verify the equality in (9). For readability, limits without subscripts in the following equation denote limits as $\alpha = \beta$ tends to $0^+$. With the results above, noticing $a \sim b$, one obtains

$$\gamma(f_M, g = \mathbf{0}) = \frac{\lim \log(1/\alpha)}{\max\{\lim E_0[T_M], \lim E_1[T_M]\}} = \min\left\{ \lim \frac{b}{a} \cdot \lim \frac{a}{E_0[T_M]},\ \lim \frac{b}{E_1[T_M]} \right\} = \min\{|M| I_0, |M| I_1\} = |M| \cdot I.$$

The proof of Lemma 1 is finished.

B. Proof of Theorem 2

Before we prove the results in Theorem 2, we need to present two lemmas. The following paragraph states the assumption shared by those two lemmas.

Assume $\mathbf{x}(k) \triangleq [x_1(k), \ldots, x_m(k)]$ is a sequence of independently and identically distributed random vectors of $m$ dimensions. Denote the probability measure and the expectation with respect to it as $P$ and $E$, and denote the expectation of every element as $\eta \triangleq E[x_1(k)]$. We assume $0 < \eta < \infty$. Define the random walk $S_i(n) \triangleq \sum_{k=1}^{n} x_i(k)$.
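The random walks $S_i(n)$ defined above are easy to simulate, which gives a rough numerical feel for the first-passage behaviour studied in this part of the appendix. The following is a minimal sketch, not the simulation code of the paper. It assumes independent Gaussian increments with mean `eta` and standard deviation `sigma` (an illustrative choice; the shared assumption only requires i.i.d. vectors with $0 < \eta < \infty$), and the function names are hypothetical. It estimates the expected first time at which the largest walk reaches a level $b$ and the expected first time at which the smallest walk reaches $b$, both divided by $b$, so that they can be compared with $1/\eta$.

```python
import random


def first_passage_times(b, m, eta, sigma, rng):
    """Simulate m independent Gaussian random walks with drift eta > 0.

    Returns (t_any, t_all):
      t_any: first step at which the largest walk is >= b,
      t_all: first step at which the smallest walk is >= b.
    Gaussian, coordinate-wise independent increments are an assumption
    made for this sketch only.
    """
    walks = [0.0] * m
    t_any = None
    n = 0
    while True:
        n += 1
        for i in range(m):
            walks[i] += rng.gauss(eta, sigma)
        if t_any is None and max(walks) >= b:
            t_any = n
        if min(walks) >= b:  # implies max(walks) >= b, so t_any is set
            return t_any, n


def normalized_mean_times(b, m=5, eta=1.0, sigma=1.0, trials=200, seed=0):
    """Monte Carlo estimates of E[t_any]/b and E[t_all]/b."""
    rng = random.Random(seed)
    sum_any = 0
    sum_all = 0
    for _ in range(trials):
        t_any, t_all = first_passage_times(b, m, eta, sigma, rng)
        sum_any += t_any
        sum_all += t_all
    return sum_any / (trials * b), sum_all / (trials * b)


if __name__ == "__main__":
    eta = 1.0
    for b in (25, 100, 400):
        r_any, r_all = normalized_mean_times(b, eta=eta)
        print(f"b={b:4d}  E[t_any]/b={r_any:.3f}  "
              f"E[t_all]/b={r_all:.3f}  1/eta={1 / eta:.3f}")
```

Under these assumptions both normalized times should bracket $1/\eta$ and move closer to it as $b$ grows. The gap at finite $b$ comes from fluctuations of order $\sqrt{b}$, which vanish after division by $b$.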
Lemma 3: Define two stopping times as follows ($b > 0$):

$$\underline{T}(b) \triangleq \inf\{ n \in \mathbb{Z}^+ : \max_{1 \le i \le m} S_i(n) \ge b \}, \qquad \overline{T}(b) \triangleq \inf\{ n \in \mathbb{Z}^+ : \min_{1 \le i \le m} S_i(n) \ge b \}.$$

Then we have

$$\lim_{b \to \infty} E\left| \frac{\underline{T}(b)}{b} - \frac{1}{\eta} \right| = 0, \qquad \lim_{b \to \infty} E\left| \frac{\overline{T}(b)}{b} - \frac{1}{\eta} \right| = 0.$$

Proof: It is a special case of [28], Theorem 1.

Lemma 4: Assume that there exists $h < 0$ such that $E[e^{h x_i}] = 1$ and, in addition, $E[x_i e^{h x_i}] < \infty$. The stopping time $\tau_i^-(a)$ is defined as in equation (16). We have the following result for every $1 \le i \le m$:

$$\lim_{a = b \to \infty} \frac{1}{a} \log P[\tau_i^-(a) < \infty] = -|h|.$$

Proof: The symmetric result ($\mu < 0$, $h > 0$) is provided in [29], Section 1. As all $x_i$ are identically distributed, the result holds for all $1 \le i \le m$.

Now we can proceed to prove Theorem 2.

Part (1):

$$\lim_{a = b \to \infty} E_0\left| \frac{\tau^-_{(r)}(a)}{a} - \frac{1}{I_0} \right| = 0, \qquad \lim_{a = b \to \infty} E_1\left| \frac{\tau^+_{(r)}(b)}{b} - \frac{1}{I_1} \right| = 0.$$

Proof: We first prove the latter; the former can be dealt with similarly. Define the first time when there are $r$ statistics $S_i(k)$ above the threshold $b$, or below the threshold $-a$, at the same time:

$$T^+_{(r)}(b) \triangleq \inf_{k \in \mathbb{Z}^+} \{ S_{(s-r+1)}(k) \ge b \}, \qquad T^-_{(r)}(a) \triangleq \inf_{k \in \mathbb{Z}^+} \{ S_{(r)}(k) \le -a \},$$

where $S_{(i)}(k)$ is the ascending ordered cumulative log-likelihood ratio $S_i(k)$, i.e., $S_{(1)}(k) \le S_{(2)}(k) \le \ldots \le S_{(s)}(k)$. In the absence of attack, for the same $a$ or $b$ we have the following inequalities:

$$\tau^-_{(1)}(a) = T^-_{(1)}(a) \le \tau^-_{(r)}(a) \le \tau^-_{(s)}(a) \le T^-_{(s)}(a),$$

$$\tau^+_{(1)}(b) = T^+_{(1)}(b) \le \tau^+_{(r)}(b) \le \tau^+_{(s)}(b) \le T^+_{(s)}(b).$$

It suffices to prove

$$\lim_{a = b \to \infty} E_1\left| \frac{T^+_{(1)}(b)}{b} - \frac{1}{I_1} \right| = 0, \qquad \lim_{a = b \to \infty} E_1\left| \frac{T^+_{(s)}(b)}{b} - \frac{1}{I_1} \right| = 0.$$

According to Lemma 3 (the notations $\overline{T}$, $\underline{T}$ are also from Lemma 3), $T^+_{(s)}(b) = \overline{T}(b)$ and $T^+_{(1)}(b) = \underline{T}(b)$.
If we set $m = s$ and take the elements $x_i(k)$ of the vector $\mathbf{x}(k)$ to be the log-likelihood ratios $L_i(k)$ from the $s$ sensors, the statement above is true because the expectation of the log-likelihood ratio by definition equals the K-L divergence. Thus, the proof is finished.

Part (2):

$$\lim_{a = b \to \infty} \frac{E_0[T_{(r)}]}{a} \le \frac{1}{I_0}, \qquad \lim_{a = b \to \infty} \frac{E_1[T_{(r)}]}{b} \le \frac{1}{I_1}.$$

Proof: We prove the second one; the proof of the first one is similar. According to the definition in (17), the stopping time of the detection rule satisfies

$$T_{(r)} = \min\{ \tau^-_{(r)}(a), \tau^+_{(r)}(b) \}.$$

Therefore,

$$\lim_{a = b \to \infty} \frac{E_1[T_{(r)}]}{b} \le \lim_{a = b \to \infty} \frac{E_1[\tau^+_{(r)}(b)]}{b} = \frac{1}{I_1},$$

where the equality comes from Part (1). The proof is completed.

Part (3):

$$\lim_{a = b \to \infty} \frac{1}{b} \log P_0[\tau^+_{(r)}(b) \le \tau^-_{(r)}(a)] \le -r, \qquad \lim_{a = b \to \infty} \frac{1}{a} \log P_1[\tau^-_{(r)}(a) \le \tau^+_{(r)}(b)] \le -r.$$

Proof: We prove the second one; the first one can be proved similarly. As in Part (1), we take the elements $x_i(k)$ of the vector $\mathbf{x}(k)$ in Lemma 4 to be the log-likelihood ratios $L_i(k)$ from the $s$ sensors. We obtain the following asymptotic result (26) from Lemma 4 by showing that the conditions in the lemma are satisfied by $h = -1$:

$$P_1[\tau_i^-(a) < \infty] \sim C e^{-a} \quad \forall i \in S, \quad a = b \to \infty, \qquad (26)$$

where $C$ is a constant term. In order to prove (26), it suffices to show that $E_1[e^{-L_i(k)}] = 1$ and $E_1[L_i(k) e^{-L_i(k)}] < \infty$ for every $i \in S$, $k \in \mathbb{Z}^+$. First we have

$$E_1[e^{-L_i(k)}] = \int_{\mathbb{R}} \left( \frac{d\mu(x)}{d\nu(x)} \right)^{-1} d\mu(x) = \int_{\mathbb{R}} d\nu(x) = 1.$$

For the second one,

$$E_1[L_i(k) e^{-L_i(k)}] = \int_{\mathbb{R}} \log \frac{d\mu(x)}{d\nu(x)} \, d\nu(x) = I_1 < \infty.$$

Considering the i.i.d. setting, (26) is proved. The event $\{\tau^-_{(r)}(a) \le \tau^+_{(r)}(b)\}$ implies that there exists an index set $R \triangleq \{i_1, i_2, \ldots, i_r\} \subseteq S$ such that, for every $i$ in the set, the event $\{\tau_i^-(a) < \infty\}$ occurs.
Considering the independence of the cumulative log-likelihood ratios and applying the union bound over all such index sets, we obtain

$$P_1[\tau^-_{(r)}(a) \le \tau^+_{(r)}(b)] \le \sum_{|R| = r,\ R \subseteq S} P_1\left[ \max_{i \in R} \tau_i^-(a) < \infty \right] \le \sum_{|R| = r,\ R \subseteq S} \prod_{i \in R} P_1[\tau_i^-(a) < \infty] \sim \binom{s}{r} C e^{-ra}.$$

This directly leads to the result of Part (3), as the logarithm of the constant term converges to zero when divided by $a$.

C. Proof of inequalities (22) to (25)

Proof: We prove (22) and (24) in this section; (23) and (25) can be proved in the same way. In order to analyze the stopping time $\tau^+_{(r)}(b)$, without loss of generality we assume that the $S_i(k)$ have been ordered by subscript for some fixed $k$, i.e., $S_1(k) \le S_2(k) \le \cdots \le S_s(k)$. The worst case for the stopping time $\tau^+_{(r)}(b)$ under attack is that the largest $c$ cumulative log-likelihood ratios $S_i(k)$ are assigned to be smaller than all the other ones. If we denote the cumulative log-likelihood ratios of the compromised sensors as $S'_i(k)$, then in the worst case the largest $c$ sensors (indices $s - c + 1$ to $s$) are manipulated and assigned to be small enough that

$$\max_{s - c + 1 \le i \le s} S'_i(k) < S_1(k).$$

In this condition, the stopping time is reached if $r$ other honest $S_i(k)$ are no smaller than the threshold $b$, i.e.,

$$\tau^+_{(r)}(b) \le \inf_k \{ S_{s-r-c+1}(k) \ge b \},$$

which implies (22). For the error probability $P_1^g[\tau^-_{(r)}(a) \le \tau^+_{(r)}(b)]$, the worst case is that the cumulative log-likelihood ratios from the compromised sensors satisfy $S_i(n) \le -a$, $\forall i \in C$, $\forall n \in \mathbb{Z}^+$, which means that the wrong decision is made as long as there are $r - c$ mistaken votes, because there are already $c$ manipulated votes. This can be expressed as the event $\{\tau^-_{(r-c)}(a) \le \tau^+_{(r-c)}(b)\}$ occurring in the absence of attack, and (24) is thus obtained.

REFERENCES

[1] R. Langner, “Stuxnet: Dissecting a cyberwarfare weapon,” IEEE Security Privacy, vol. 9, no. 3, pp. 49–51, May 2011.
[2] G. Salles-Loustau, L.
Garcia, P. Sun, M. Dehnavi, and S. Zonouz, “Power grid safety control via fine-grained multi-persona programmable logic controllers,” in 2017 IEEE International Conference on Smart Grid Communications (SmartGridComm), Oct 2017, pp. 283–288.
[3] J. Yan, X. Ren, and Y. Mo, “Sequential detection in adversarial environment,” in 2017 IEEE 56th Annual Conference on Decision and Control (CDC), Dec 2017, pp. 170–175.
[4] A. Wald, “Sequential tests of statistical hypotheses,” The Annals of Mathematical Statistics, vol. 16, no. 2, pp. 117–186, 1945.
[5] A. Wald and J. Wolfowitz, “Optimum character of the sequential probability ratio test,” Annals of Mathematical Statistics, vol. 19, 11 1947.
[6] J. Ho, “Distributed detection of replica cluster attacks in sensor networks using sequential analysis,” in 2008 IEEE International Performance, Computing and Communications Conference, Dec 2008, pp. 482–485.
[7] J. Ho, M. Wright, and S. K. Das, “Fast detection of mobile replica node attacks in wireless sensor networks using sequential hypothesis testing,” IEEE Transactions on Mobile Computing, vol. 10, no. 6, pp. 767–782, June 2011.
[8] G. Fellouris, E. Bayraktar, and L. Lai, “Efficient byzantine sequential change detection,” IEEE Transactions on Information Theory, vol. 64, no. 5, pp. 3346–3360, May 2018.
[9] H. Wang, D. Zhang, and K. G. Shin, “Change-point monitoring for the detection of dos attacks,” IEEE Transactions on Dependable and Secure Computing, vol. 1, no. 4, pp. 193–208, Oct 2004.
[10] D. Li, M. Zhang, Z. Lai, and Y. Shen, “Sequential probability ratio test based fault detection method for actuators in gnc system,” in Proceedings of the 32nd Chinese Control Conference, July 2013, pp. 6324–6327.
[11] D. Yuan, H. Li, and M.
Lu, “A method for gnss spoofing detection based on sequential probability ratio test,” in 2014 IEEE/ION Position, Location and Navigation Symposium - PLANS 2014, May 2014, pp. 351–358.
[12] A. Teixeira, I. Shames, H. Sandberg, and K. H. Johansson, “Revealing stealthy attacks in control systems,” in 2012 50th Annual Allerton Conference on Communication, Control, and Computing (Allerton), Oct 2012, pp. 1806–1813.
[13] L. Hu, Z. Wang, Q.-L. Han, and X. Liu, “State estimation under false data injection attacks: Security analysis and system protection,” Automatica, vol. 87, pp. 176–183, 2018.
[14] B. Kailkhura, Y. S. Han, S. Brahma, and P. K. Varshney, “Asymptotic analysis of distributed bayesian detection with byzantine data,” IEEE Signal Processing Letters, vol. 22, no. 5, pp. 608–612, May 2015.
[15] H. Fawzi, P. Tabuada, and S. Diggavi, “Secure estimation and control for cyber-physical systems under adversarial attacks,” IEEE Transactions on Automatic Control, vol. 59, no. 6, pp. 1454–1467, June 2014.
[16] X. Ren, J. Yan, and Y. Mo, “Binary hypothesis testing with byzantine sensors: Fundamental tradeoff between security and efficiency,” IEEE Transactions on Signal Processing, vol. 66, no. 6, pp. 1454–1468, March 2018.
[17] X. Ren and Y. Mo, “Multiple hypothesis testing in adversarial environments: A game-theoretic approach,” in 2018 Annual American Control Conference (ACC), June 2018, pp. 967–972.
[18] X. Ren and Y. Mo, “Secure detection: Performance metric and sensor deployment strategy,” in IEEE Transactions on Signal Processing, vol. 66, no. 17, Sep. 2018, pp. 4450–4460.
[19] K. G. Vamvoudakis, J. P. Hespanha, B. Sinopoli, and Y. Mo, “Detection in adversarial environments,” IEEE Transactions on Automatic Control, vol. 59, no. 12, pp. 3209–3223, Dec 2014.
[20] Y. Mo, J. P. Hespanha, and B.
Sinopoli, “Resilient detection in the presence of integrity attacks,” IEEE Transactions on Signal Processing, vol. 62, no. 1, pp. 31–43, Jan 2014.
[21] V. V. Veeravalli and T. Banerjee, “Quickest change detection,” Mathematics, vol. 33, no. 14, pp. 4434–4457, 2012.
[22] E. Soltanmohammadi, M. Orooji, and M. Naraghi-Pour, “Decentralized hypothesis testing in wireless sensor networks in the presence of misbehaving nodes,” IEEE Transactions on Information Forensics and Security, vol. 8, no. 1, pp. 205–215, Jan 2013.
[23] B. Kailkhura, Y. S. Han, S. Brahma, and P. K. Varshney, “Asymptotic analysis of distributed bayesian detection with byzantine data,” IEEE Signal Processing Letters, vol. 22, no. 5, pp. 608–612, May 2015.
[24] S. Marano, V. Matta, and L. Tong, “Distributed detection in the presence of byzantine attacks,” IEEE Transactions on Signal Processing, vol. 57, no. 1, pp. 16–29, Jan 2009.
[25] A. Wald, Sequential Analysis. New York: Wiley, 1947.
[26] R. Y. Rubinstein and D. P. Kroese, Simulation and the Monte Carlo method. John Wiley & Sons, 2016.
[27] R. H. Berk, “Some asymptotic aspects of sequential analysis,” The Annals of Statistics, vol. 1, no. 6, pp. 1126–1138, 11 1973.
[28] R. H. Farrell, “Limit theorems for stopped random walks,” The Annals of Mathematical Statistics, vol. 35, no. 3, pp. 1332–1343, 1964.
[29] J. Kugler and V. Wachtel, “Upper bounds for the maximum of a random walk with negative drift,” Journal of Applied Probability, vol. 50, no. 4, pp. 1131–1146, 2013.
