Greed is Good: Exploration and Exploitation Trade-offs in Bayesian Optimisation

The performance of acquisition functions used in Bayesian optimisation to locate the global optimum of continuous functions is investigated in terms of the Pareto front between exploration and exploitation. We show that Expected Improvement (EI) and the …
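The abstract frames acquisition functions in terms of a Pareto front between exploitation (a low predicted mean, when minimising) and exploration (a high predictive standard deviation). Below is a minimal sketch, assuming minimisation, a Gaussian posterior prediction at each candidate, and made-up (mean, standard deviation) values. It computes the standard closed-form EI and the set of non-dominated (mean, std) pairs. The helper names `expected_improvement` and `pareto_front` and the candidate values are hypothetical, chosen for illustration only, and are not taken from the paper.

```python
import math


def norm_pdf(z: float) -> float:
    """Standard normal density."""
    return math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)


def norm_cdf(z: float) -> float:
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def expected_improvement(mu: float, sigma: float, best: float) -> float:
    """EI for minimisation: E[max(best - f, 0)] with f ~ N(mu, sigma^2)."""
    if sigma <= 0.0:
        # With no uncertainty, the improvement is deterministic.
        return max(best - mu, 0.0)
    z = (best - mu) / sigma
    return (best - mu) * norm_cdf(z) + sigma * norm_pdf(z)


def pareto_front(points):
    """Indices of non-dominated (mu, sigma) pairs: minimise mu, maximise sigma."""
    front = []
    for i, (mu_i, s_i) in enumerate(points):
        dominated = any(
            mu_j <= mu_i and s_j >= s_i and (mu_j < mu_i or s_j > s_i)
            for j, (mu_j, s_j) in enumerate(points)
            if j != i
        )
        if not dominated:
            front.append(i)
    return front


# Hypothetical posterior (mean, std) predictions at five candidate locations.
candidates = [(0.2, 0.05), (0.5, 0.4), (0.9, 1.0), (0.6, 0.3), (1.2, 0.5)]
best_so_far = 0.3  # hypothetical best observed value

ei = [expected_improvement(m, s, best_so_far) for m, s in candidates]
chosen = max(range(len(candidates)), key=lambda i: ei[i])
front = pareto_front(candidates)

print("Pareto front indices:", front)  # → [0, 1, 2]
print("EI picks index:", chosen)       # → 2
```

For sigma > 0, EI strictly decreases as the mean rises and strictly increases as the standard deviation rises, so any candidate that dominates another also has strictly higher EI. The EI maximiser therefore always lies on this front. In the example it picks the high-uncertainty candidate at index 2 rather than the low-mean candidate at index 0.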

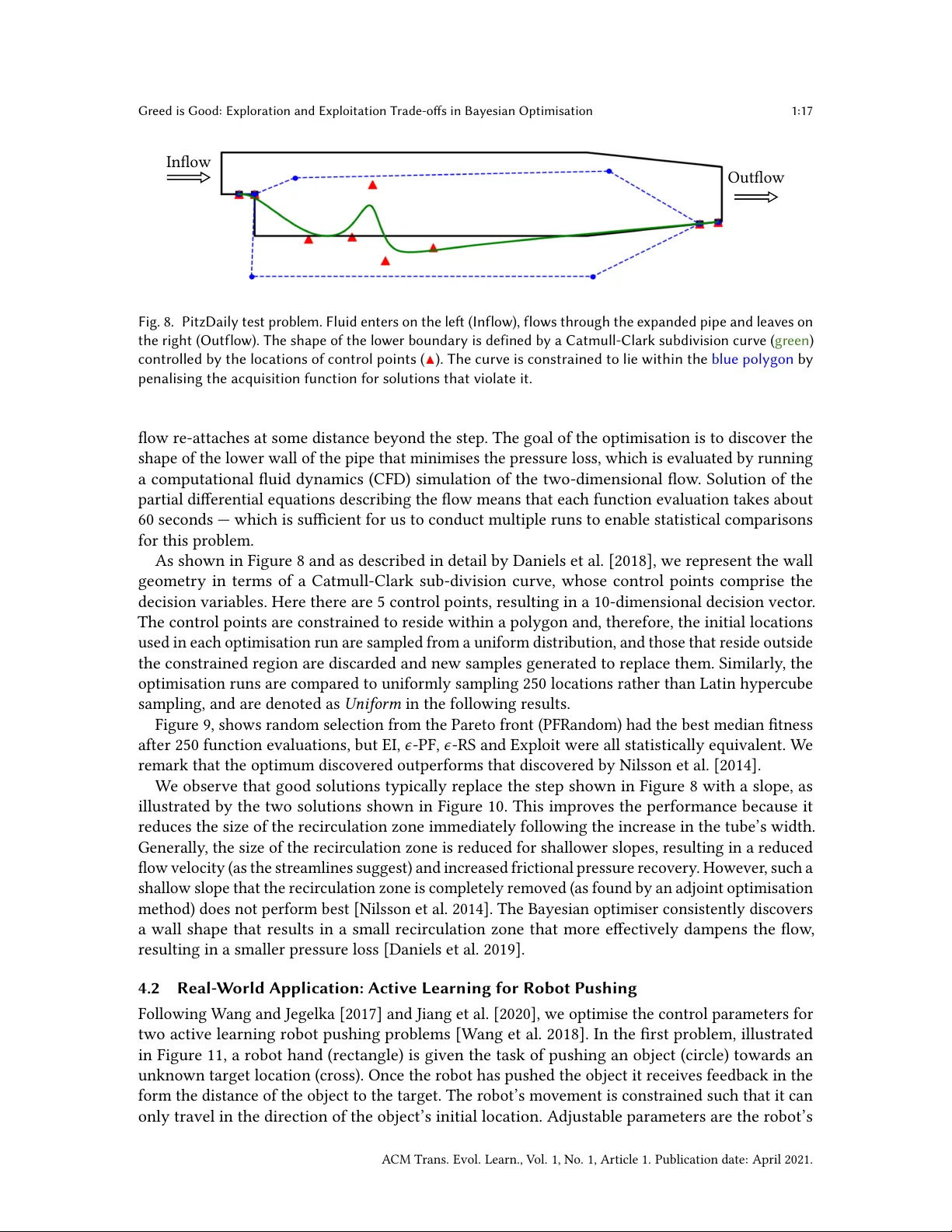

Authors: George De Ath, Richard M. Everson, Alma A. M. Rahat