Developing an Unsupervised Real-time Anomaly Detection Scheme for Time Series with Multi-seasonality

On-line detection of anomalies in time series is a key technique used in various event-sensitive scenarios such as robotic system monitoring, smart sensor networks and data center security. However, the increasing diversity of data sources and the va…

Authors: Wentai Wu, Ligang He, Weiwei Lin

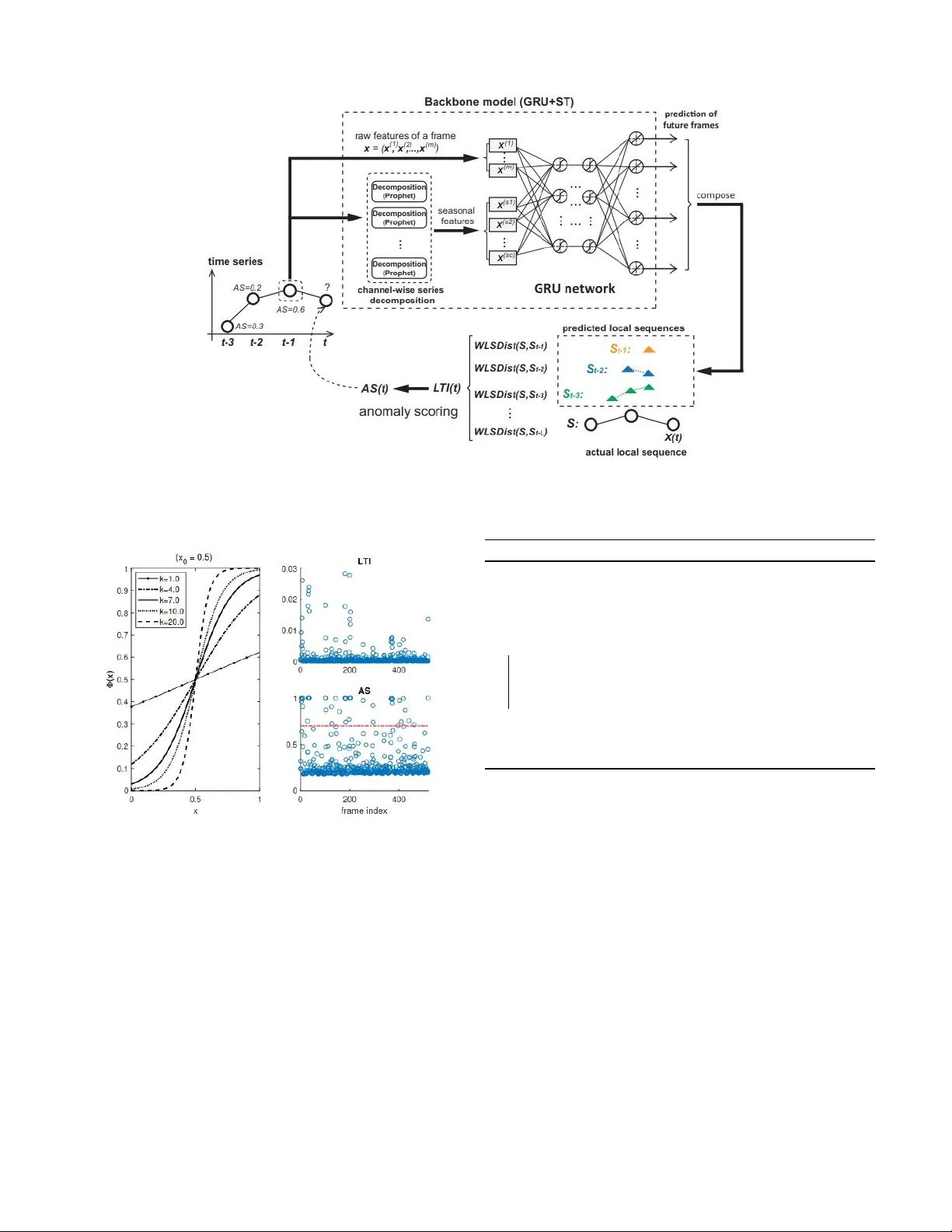

THIS IS A PREPRINT VERSION OF THE WORK 10.1109/TKDE.2020.3035685 PUBLISHED IN THE IEEE TKDE BY ©IEEE 1 De v eloping an Unsupervised Real-time Anomaly Detection Scheme for T ime Series with Multi-seasonality W entai W u, Student Member , IEEE , Ligang He, Member , IEEE , W eiwei Lin, Y i Su, Y uhua Cui, Carsten Maple, and Stephen Jarvis, Member , IEEE Abstract —On-line detection of anomalies in time series is a key technique used in various e vent-sensitiv e scenarios such as robotic system monitoring, smart sensor networks and data center security . Howev er , the increasing diversity of data sour ces and the variety of demands make this task more challenging than e ver . Firstly , the rapid increase in unlabeled data means supervised learning is becoming less suitable in many cases. Secondly , a large portion of time series data ha ve complex seasonality features. Thirdly , on-line anomaly detection needs to be fast and reliable. In light of this, we hav e developed a prediction- driven, unsupervised anomaly detection scheme, which adopts a backbone model combining the decomposition and the inference of time series data. Further , we propose a novel metric, Local T rend Inconsistency (L TI), and an efficient detection algorithm that computes L TI in a real-time manner and scores each data point robustly in terms of its probability of being anomalous. W e hav e conducted extensive experimentation to evaluate our algorithm with several datasets from both public repositories and production en vironments. The experimental results show that our scheme outperf orms existing repr esentative anomaly detection algorithms in terms of the commonly used metric, Area Under Curve (A UC), while achieving the desired efficiency . Index T erms —time series, seasonality , anomaly detection, un- supervised learning I . I N T R O D U C T I O N T ime series data sources have been of interest in a v ast variety of areas for many years – the nature of time series data was examined in a seminal study by Y ule [1] and the techniques were applied to areas such as econometric [2] and oceanographic data [3] since the 1930s. Howe ver , in an era of hyperconnecti vity , big data and machine intelligence, ne w technical scenarios are emerging such as autonomous driving, edge computing and Internet of Things (IoT). Analysis of such systems poses new challenges to the detection of anomalies in time series data. Further , for a wide range of systems which require 24/7 monitoring services, it has become crucial to hav e the detection techniques that can provide early , reliable reports of anomalies. In cloud data centers, for e xample, a distributed monitoring system usually collects a variety of log data from the virtual machine level to the cluster level on a Corresponding author: Ligang He. W . Wu, L. He and Y . Su are with the Department of Computer Science, University of W arwick. W . Lin is with the School of Computer Science and Engineering at the South China University of T echnology . Y Cui is with the Research Institute of W orldwide Byte Information Security . C. Maple is with the W arwick Manufacturer Group, Univ ersity of W arwick. S. Jarvis is with the College of Engineering and Physical Sciences, Uni versity of Birmingham. regular basis and sends them to a central detection module, which then analyzes the aggreg ated time series to detect any anomalous ev ents including hardware failures, unav ailability of services and c yber attacks. This requires a reliable on- line detector with strong sensitivity and specificity . Otherwise, the inefficient detection may cause unnecessary maintenance costs. Sev eral classes of schemes hav e been applied to the problem of anomaly detection for time series data. In certain cases de- cent results can be achieved by these traditional methods such as outlier detection [4][8][9][10], pattern (segment) extraction [12][13][14][15] and sequence mapping [18][20][21]. Ho w- ev er, we are facing a growing number of new scenarios and applications which produce large volumes of time series data with unprecedented complexity , posing challenges that tradi- tional anomaly detection methods cannot address effecti vely . First, more and more time series data are being produced without labels since data labeling/annotation is usually very time-consuming and costly . Sometimes it is also unrealistic or impossible to acquire reliable labels when their correctness has to be guaranteed. Second, some applications may produce multi-channel series with complex features such as multi- period seasonality (i.e., multiple seasonal, such as yearly or monthly , patterns within one channel), long periodicity , fairly unpredictable channels and different seasonality between chan- nels. As a result, learning these patterns requires effecti ve sea- sonality discovery and strong ability of generalization. Third, the process is commonly required to be fast enough to support instant reporting or alarming once unexpected situation occurs. The capability of on-line detection is especially important in a wide range of event-sensiti ve scenarios such as medical and industrial process control systems. In this paper , we propose a predictive solution to detecting anomalies effecti vely in time series with complex seasonality . The fundamental idea is to inspect the data samples as they ar- riv e and match the data samples with an ensemble of forecasts made chronologically . Specifically , our solution comprises an augmented forecasting model and a novel detection algorithm that exploits the predictions of local sequences made by the underlying forecasting model. W e built a frame-to-sequence Gated Recurrent Unit (GR U) netw ork while extending its input with seasonal terms extracted by decomposing the time series of each sample channel. The integration of the seasonal features can alle viate negati ve impact from anomalous samples in the training data since the anomalous samples have minor THIS IS A PREPRINT VERSION OF THE WORK 10.1109/TKDE.2020.3035685 PUBLISHED IN THE IEEE TKDE BY ©IEEE 2 impact on the long-term periodic patterns. Because of the abov e reasons, our prediction framework does not require the labels (specifying which data are normal or abnormal) or uncontaminated training data (i.e., our solution tolerates polluted/abnormal training samples). After predicting local sequences (i.e., the output of the forecasting model), we use a novel method to weight the ensemble of different forecasts based on the reliability of their forecast sources and make it a chronological process to fit the on-line detection. The weight of each forecast is determined dynamically during the process of detection by scoring each forecast source (i.e., the forecast made based on this data source), which reflects ho w likely the predictions made by a forecast source is trustworthy . Based on the above ensemble, we propose a new metric, termed Local Trend Inconsistenc y (L TI), for measuring the deviation of an actual sequence from the predictions in real-time, and assigns an anomaly score to each of the ne wly arriv ed data points (which we also call frames) in order to quantify the probability that a frame is anomalous. W e also propose a method to map the L TI value of a frame to its Anomaly Score (AS) by a logistic-shaped function. The mapping further dif ferentiates anomalies and normal data. In order to determine the logistic mapping function, we propose a method to automatically determine the optimal values of the fitting parameters in the logistic mapping function. The AS value of a frame in turn becomes the weight of its impact on the detection of future frames. This makes our L TI metric robust to the anomalous frames in the course of detection and significantly mitigates the potential impact of anomalous samples on the detection results of the future frames. This feature also enables our algorithm to work chronologically without maintaining a large reference database or caching too many historical data frames. T o the best of our knowledge, the existing prediction-driv en detection schemes do not take into account the reliability of the forecast sources. The main contributions of our work are as follows: • W e designed a frame-to-sequence forecasting model in- tegrating a GR U network with time series decomposition (using Prophet, an additi ve time series model de veloped by Facebook [29]) to enable the contamination-tolerant training on multi-seasonal time series data without any labels. • W e propose a new metric termed Local Trend Incon- sistency (L TI), and based on this metric we further propose an unsupervised detection algorithm to score the probability of data anomaly . An practical method is also proposed for fitting the scoring function. • W e mathematically present the computation of L TI in the form of matrix operations and prov e the possibility of parallelization for further speeding up the detection procedure. • W e conducted extensi ve experiments to e valuate the proposed scheme on two public datasets from the UCI data repository and a more complex dataset from a pro- duction en vironment. The result shows that our solution outperforms the e xisting algorithms significantly with lo w detection overhead. The rest of this paper is organized as follows: Section II discusses a number of studies related to anomaly detection. In Section III, we introduce Local Trend Inconsistency as the key metric in our unsupervised anomaly detection scheme. W e then systematically present our unsupervised anomaly detection solution in Section IV , including the backbone model for prediction and a scoring algorithm for anomaly detection. W e present and analyze the experimental results in Section V , and finally conclude this paper in Section VI. I I . R E L A T E D W O R K The term anomaly refers to a data point that significantly deviates from the rest of the data which are assumed to follow some distribution or pattern. There are two main categories of approaches for anomaly detection: novelty detection and outlier detection. While nov elty detection (e.g. classification methods [39][40][41][42]) requires the training data to be clas- sified, outlier detection (e.g., clustering, principal component analysis [20] and feature mapping methods [43][44]) does not need a prior knowledge of classes (i.e., labels) and thus is also known as unsupervised anomaly detection. The precise terminology and definitions of these terminology may v ary in different sources. W e use the same taxonomy as Ahmed et al. did in reference [45] whilst in the survey presented by Hodge and Austin [38] unsupervised detection is classified as a subtype of outlier detection. The focus of our work is on unsupervised anomaly detection since we aim to design a more generic scheme and thus do not need to assume the labels are unav ailable. In the detection of time series anomalies, we are interested in discovering abnormal, unusual or unexpected records. In a time series, an anomaly can be detected within the scope of a single record or as a subsequence/pattern. Many classical algorithms can be applied to detect single-record anomaly as an outlier , such as the One Class Support V ector Machine (OCSVM) [4], a variant of SVM that exploits a hyperplane to separate normal and anomalous data points. Zhang et al. [5] implemented a network performance anomaly detector using OCSVM with Radial Basic Function (RBF), which is a commonly used kernel for SVM. Maglaras and Jiang [6] developed an intrusion detection module based on K- OCSVM, the core of which is an algorithm that performs K- means clustering iterativ ely on detected anomalies. Shang et al. [7] applies Particle Swarm Optimization (PSO) to find the optimal parameters for OCSVM, which they applied to detect the abnormalities in TCP traffic. In addition, Radov anovi ´ c et al. [9] in vestigated the correlation between hub points and outliers, providing a useful guidance on using rev erse nearest- neighbor counts to detect anomalies. Liu et al. [8] found that anomalies are susceptible to the property of ”isolation” and thus proposed Isolation Forest (iForest), an anomaly detection algorithm based on the structure of random forest. T aking advantage of iForest’ s flexibility , Calheiros et al. [10] adapted it to dynamic failures detection in lar ge-scale data centers. For anomalous sequence or pattern detection, there are a number of classical methods av ailable such as box modeling [11], symbolic sequence matching [18] and pattern e xtraction THIS IS A PREPRINT VERSION OF THE WORK 10.1109/TKDE.2020.3035685 PUBLISHED IN THE IEEE TKDE BY ©IEEE 3 [14][15]). F or example, Huang et al. [19] proposed a scheme to identify the anomalies in VM liv e migrations by combining the extended Local Outlier Factor (LOF) and Symbolic Aggregate ApproXimation (SAX). Recent advance in machine learning techniques inspires prediction-driv en solutions for intelligent surveillance and de- tection systems (e.g., [48][49]). A prediction-dri ven anomaly detection scheme is often a sliding window-based scheme, in which future data values are predicted and then the predictions are compared against the actual v alues when the data arriv e. This type of anomaly detection schemes has been attracting much attention recently thanks to the remarkable performance of recurrent neural networks (RNNs) in prediction/forecasting tasks. Filonov et al. [33] proposed a fault detection framework that relies on a Long Short T erm Memory (LSTM) network to make predictions. The set of predictions along with the measured v alues of data are then used to compute error distribution, based on which anomalies are detected. Similar methodologies are used by [34] and [24]. LSTM-AD [34] is also a prediction scheme based on multiple forecasts. In LSTM-AD the abnormality of data samples is ev aluated by analyzing the prediction error and the corresponding probabil- ity in the context of an estimated Gaussian error distribution obtained from the training data. Ho wev er, the dra wback of LSTM-AD is that it is prone to the contamination of training data. Therefore, when the training data contains both normal and anomalous data, the accuracy of the prediction model is likely to be affected, which consequently make the anomaly detection less reliable. Malhotra et al. [23] adopt a dif ferent architecture named encoder-decoder , which is based on the notion that only normal sequences can be reconstructed by a well-trained encoder- decoder network. A major limitation of their model is that an unpolluted training set must be provided. As revealed by Pas- canu et al. [25], RNNs may struggle in learning complex sea- sonal patterns in time series particularly when some channels of the series have long periodicity (e.g., monthly and yearly). A possible solution to that is decomposing the series before feeding into the network. Shi et al. [35] proposed a wav elet- BP (Back Propagation) neural network model for predicting the wind power . They decompose the input time series into the frequency components using the wa velet transform and b uild a prediction network for each of them. T o forecast time series with complex seasonality , De Liv era et al. [37] adopt a novel state space modeling frame work that incorporates the seasonal decomposition methods such as the Fourier representation. A similar model was implemented by Gould et al. [36] to fit hourly and daily patterns in utility loads and traffic flo ws data. Ensuring low ov erhead is essential for real-time anomaly detection. For example, Gu et al. [16] proposed an efficient motif (frequently repeated patterns) discovery frame work in- corporating an improved SAX indexing method as well as a trivial match skipping algorithm. Their experimental results on the CPU host load series show excellent time efficienc y . Zhu et al. [17] propose a new method for locating similar sub- sequences as well as a parallel approach using GPUs to accel- erate Dynamic Time W arping (DTW) for time series pattern discov ery . Similarly , parallel algorithms (e.g., [50][51][52]) hav e been applied to sev eral forms of machine learning models for efficienc y boost. I I I . L O C A L T R E N D I N C O N S I S T E N C Y In this section, we first introduce a series of basic notions and frequently-used symbols, then define a couple of distance metrics, and finally present the core concept in our anomaly detection scheme - Local T r end Inconsistency ( LTI ). In some systems, more than one data collection de vice is deployed to gather information from multiple variables relat- ing to a common entity simultaneously , which consequently generates multi-variate time series. In this paper we call them multi-channel time series. Definition 1: A channel is the full-length sequence of a single v ariable that comprises the feature space of a time series. For the sake of conv enience, we define a frame as follo ws. This concept of a frame is inspired by , but is more general than, a frame in video processing (since a video clip can be reckoned as a time series of images.) Definition 2: A frame is the data record at a particular point of time in a series. A frame is a vector in a multi-channel time series, or a scalar value in a single-channel time series. Most of previous schemes detect anomalies by analyzing the data items in a time series as separate frames. Howe ver , in our approach we attempt to conduct the analysis from the perspectiv e of local sequences. Definition 3: A local sequence is a fragment of the target time series; a local sequence at frame x is defined as a fragment of the series spanning from a pre vious frame to frame x . For clarity , we list all the symbols frequently used in this paper in T able I. T ABLE I L I S T O F S Y M B O L S Symbol Description X A time series X X ( t ) The t -th frame of time series X X ( c ) The c -th channel of time series X X ( c ) ( t ) The c -th component of the t -th frame of time series X x ( i ) The i -th feature of frame x ˆ x k The forecast of the frame x predicted by frame k S An actual local sequence from the target time series S k A local sequence predicted by frame k S ( i ) The i -th frame in local sequence S S ( i, j ) An actual local sequence spanning from frame i to j S k ( i, j ) A local sequence predicted by k spanning from frame i to j Euclidean Distance and Dynamic T ime W arping (DTW) Distance are commonly used to measure the distance between two vectors. Howe ver , the scale of Euclidean Distance largely depends on the dimensionality , i.e., vector length. DTW dis- tance can measure the sequence similarity , but cannot produce the length-independent results. With the relativ ely high time complexity ( O ( n 2 m ) for m -dimensional sequences of length n ), DTW is often applied to the sequence-lev el analysis, in which the target is a sequence of frames or a pattern of varying length. Howe ver , our work aims to perform the frame-wise, THIS IS A PREPRINT VERSION OF THE WORK 10.1109/TKDE.2020.3035685 PUBLISHED IN THE IEEE TKDE BY ©IEEE 4 on-line detection, i.e., detect whether a frame is anomalous as the frame arriv es. Therefore, in this paper we use a modified form of Eu- clidean distance, called Dimension-independent Frame Dis- tance ( D F Dist ) as formulated in Eq. (1), to measure the distance between two frames x and y : D F Dist ( x, y ) = 1 m m X i =1 ( x ( i ) − y ( i ) ) 2 (1) where m is the number of dimensions (i.e., number of chan- nels) and x ( i ) and y ( i ) are the i -th component of frame x and frame y , respectiv ely . W e do not square root the result. This does not impact the effecti veness of our approach, but makes it easier to handle when we transform all computations into matrix operations at the later stage of the processing. Also, the desired scale (i.e., D F Dist ∈ [0 , 1] ) of the distance still holds for normalized data. W ith D F Dist , we can further measure the distance between two local sequences of the same length. The desired metric for sequence distance should be independent on the length of the sequences as we want to have a unified scale for any pair of sequences. W e formulate the Length-independent Sequence Distance ( LS D ist ) between two sequences S X and S Y of the same length in Eq. (2), where L is the length of the two local sequences. LS D ist ( S X , S Y ) = 1 L L X i =1 D F Dist ( S X ( i ) , S Y ( i )) (2) Although the definition of LS Dist already provides a unified scale of distance, the temporal information of the time series data is neglected. Assuming we are detecting the anomaly of the ev ent at time t , we need to compare the local sequence at frame t with a ground truth sequence (assume there is one) to see if anything goes wrong in the latest time window . If we use LS D ist as the metric, then ev ery time point is regarded as being equally important. Howe ver , this does not practically comply with the rule of time decay , namely , the most recent data point typically has the greatest reference value and also the greatest impact on what will happen in the next time point. Therefore, we refine LS D ist by weighting each term and adding a normalization factor . The W eighted Length-independent Sequence Distance ( W LS D ist ) is defined in Eq. (3), where d i is the weight of time decay for frame i and D L is the normalization factor (so that W LS D ist remains in the same scale as LS D ist ). W LS D ist ( S X , S Y ) = P L i =1 d i · D F Dist ( S X ( i ) , S Y ( i )) D L (3) T ime decay is applied on the basis that the two sequences are chronologically aligned. In this paper , we use the expo- nentially decaying weights, which is similar to the exponential moving a verage method [46]: d i = e − ( L − i ) , i = 1 , 2 , ..., L (4) where i denotes the frame index and L − i is the temporal distance (with i = L being the current frame). Hence, the corresponding normalization factor D L in Eq. (3) is the summation of a geometric series of length L : D L = L X i =1 e − ( L − i ) = 1 − e − L 1 − e − 1 (5) where L is the sequence length. Ideally it is easy to identify the anomalies by calculating W LS D ist between the target (such as local sequence or frame) and the ground truth. Howe ver , this approach is not feasible if the labels are una vailable (i.e., there is no ground truth). A possible solution is to replace the ground truth with expectation, which is obtained typically by using time series forecasting methods [22][34], which is the basic idea of the so- called prediction-driven anomaly detection schemes. Ho wev er , a critical problem with such a prediction-dri ven scheme is the reliability of forecast. On the one hand, the prediction error is inevitable. On the other hand, the predictions made based on the historical frames, which may include anomalous frames, can be unreliable. This poses a great challenge for prediction- driv en anomaly detection schemes. En visaging the abo ve problems, we propose a nov el, re- liable prediction scheme, which mak es use of multi-source forecasting. Unlike previous studies that use frame-to-frame predictors, our scheme makes a series of forecast at dif ferent time points (i.e, from dif ferent sources) by b uilding a frame-to- sequence predictor . The resulting collection of forecasts form a common expectation from multiple sources for the target. When the target arri ves and if it deviates from the common expectation, it is deemed that the target is lik ely to be an anomaly . This is the underlying principle of our unsupervised anomaly detection. In order to quantitativ ely measure ho w far the target de viates from the collection of expectations obtained from multiple sources, we propose a metric we term the Local T rend Incon- sistency (L TI). L TI takes into account the second challenging issue discussed above (i.e, there may exist anomalous frames in history) by weighting the prediction made based on a source (i.e., a frame at a previous time point) with the probability of the source being normal. For a frame t (i.e., by which we refer to the frame arri ving at time point t ), LT I ( t ) is formally defined in Eq. (6), where S ( i + 1 , t ) is the actual sequence from frame i + 1 to frame t , and S i ( i + 1 , t ) is the sequence of the same span predicted by frame i (i.e., prediction made when frame i arri ves). L is the length of the prediction windo w , which is a hyper -parameter determining the maximum length of the predicted sequence and also the number of sources that make the predictions (i.e., the number of predictions/expectations) of the same target. P ( i ) denotes the probability of frame i being normal. Z t is the normalization factor for frame t defined as the sum of all the probabilistic weights shown in Eq. (7). Z t is used to normalize the value of LT I ( t ) to the range of [0 , 1] . THIS IS A PREPRINT VERSION OF THE WORK 10.1109/TKDE.2020.3035685 PUBLISHED IN THE IEEE TKDE BY ©IEEE 5 LT I ( t ) = 1 Z t t − 1 X i = t − L P ( i ) · W LS D ist S ( i + 1 , t ) , S i ( i + 1 , t ) (6) Z t = t − 1 X i = t − L P ( i ) (7) Fig. 1. An example demonstrating the calculation of Local Trend Inconsis- tency with the max probe length L equal to 3. Fig. 1 illustrates ho w L TI is calculated in a case where L = 3 (i.e., the length of the prediction window is 3). Based on the actual data arriving at t 0 (the actual data are represented by circles), our scheme predicts the frames at three future time points, i.e., t 1 , t 2 and t 3 , which are depicted as green triangles in the left part of Fig. 1. When the time elapses to t 1 , the data at t 1 arriv es and our scheme predicts the data at the time points of t 2 , t 3 and t 4 (in the figure we only plot the predictions up to the time point t 3 ), which are colored blue. Similarly , when the time elapses to t 2 , the data at t 2 arriv es and our scheme forecasts the data at the time points of t 3 , t 4 and t 5 (colored orange). Now assume we want to calculate LT I ( t 3 ) to gauge the abnormality of the data arri ving at time t 3 . As shown in Fig. 1, at time t 3 , we know the actual local sequence from t 0 to t 3 , i.e., S ( t 0 , t 3 ) (corresponding to the term S ( i + 1 , t ) in Eq. 6), and also we have made the following three predictions, which are the forecasts at three dif ferent time points: • S 0 ( t 1 , t 3 ) : the predicted local sequence from t 1 to t 3 , which is predicted at time t 0 ; • S 1 ( t 2 , t 3 ) : the predicted local sequence from t 2 to t 3 , which is predicted at time t 1 ; • S 2 ( t 3 ) : the prediction of frame t 3 made at time t 2 . LT I ( t 3 ) is then obtained by i) calculating the weighted distances (i.e., W S LD ist in Eq. 3) between the predicted sequences and the corresponding actual sequence up to time t 3 , i.e., the distances between S 0 ( t 1 , t 3 ) and S ( t 1 , t 3 ) (sho wn at the bottom right of Fig. 1), between S 1 ( t 2 , t 3 ) and S ( t 2 , t 3 ) (middle right of Fig. 1), and between S 2 ( t 3 ) and S ( t 3 ) (top right of Fig. 1); ii) calculating the weighted sum (the weight is P ( i ) ) of the distances obtained in last step, and iii) normalizing the weighted sum (i.e. divided by Z t in Eq. (7)). This multi-source prediction establishes the common expec- tation for the data v alues. Ho w far the actual data de viates from the predicted data, which is measured by the distance between them, is used to quantify the abnormality of the giv en data. The whole process can be formulated using matrix opera- tions. Assume we are detecting anomaly at frame t and the size of the prediction window is L . F or brevity let d f k ( t ) denote the distance between frame t and a forecast of the frame made at time k (i.e., D F D ist ( t, ˆ t k ) ). W e first define the frame-distance matrix D F : D F = D F ( t − L ) D F ( t − L +1) . . . D F ( t − 1) where D F ( u ) = d f u ( u + 1) d f u ( u + 2) . . . d f u ( t ) T Then we define two diagonal normalization matrices N 1 and N 2 as follows: N 1 = 1 D L 0 1 D L − 1 . . . 0 1 D 1 N 2 = 1 Z t 0 1 Z t . . . 0 1 Z t where D L and Z t are defined in (5) and (7), respectiv ely . For con venience let ds k ( t ) denote W LS D ist S ( k + 1 , t ) , S k ( k + 1 , t ) . Hence we can derive the matrix of weighted local sequence distances denoted as D S : D S = ds t − L ( t ) ds t − L +1 ( t ) . . . ds t − 1 ( t ) = N 1 D F T where T is the time decay vector defined as: T = d 1 d 2 . . . d L where d i is computed via Eq. (4). Now we assume the probability of being normal is already known for each of frame t ’ s predecessors (i.e., P ( t − 1) , P ( t − 2) , ... ), and we put them together into a 1 × L matrix P : P = P ( t − L ) P ( t − L + 1) · · · P ( t − 1) Then we can reformulate LT I ( t ) as belo w: LT I ( t ) = PN 2 D S = PN 2 N 1 D F T (8) THIS IS A PREPRINT VERSION OF THE WORK 10.1109/TKDE.2020.3035685 PUBLISHED IN THE IEEE TKDE BY ©IEEE 6 Through the use of matrices to formulate the calculation of LT I , we can know that the calculation can be performed efficiently in parallel. The Degree of Parallelism (DoP) of its calculation can be higher than L . This is because the DoP for calculating the L terms in Eq. (6) can be L apparently (the calculation of every term is independent on each other). The calculation of each term can be further accelerated (including the calculations of W LS D ist and D F D ist ) by parallelizing the matrix multiplication. F or example, with a number of L × L processes (i.e., a grid of processes) and exploiting the Scalable Univ ersal Matrix Multiplication Algorithm (SUMMA) [47], we can achie ve a roughly L 2 speedup in the multiplication of any two matrices with the dimension size of L , which helps reduce the time complexity of computing N 1 D F from O ( L 3 ) to O ( L ) . Further, with the resulting N 1 D F the computation of N 1 D F T and PN 2 can be performed in parallel as both of them are vector -matrix multiplication requiring only L pro- cesses and hav e time complexity of O ( L 2 /L ) = O ( L ) . Finally multiplying the resulting matrices of PN 2 (dimension= 1 × L ) and N 1 D F T (dimension= L × 1 ) consumes O ( L ) . Note that the matrix D F contains L × L entries of frame distance, each of which is calculated using Eq. (1). Therefore, updating D F (upon a new frame arriv es) is an operation with the complexity of O ( L 2 m/L 2 ) = O ( m ) , where m is the frame dimension. Consequently , the time complexity of computing LT I ( t ) in parallel is O ( m + L ) in theory . I V . A N O M A LY D E T E C T I O N W I T H L T I Our anomaly detection scheme is based on L TI (Local T rend Inconsistency) as L TI can ef fectiv ely indicate ho w significantly the series deviates locally from the common expectation established by multi-source prediction. As can be seen from Eq. (6), there are still two problems to be solved in calculating LT I . First, a mechanism is required to make reliable predictions of local sequences. Second, we need an algorithm to quantify the probabilistic factors (in matrix P ) as they are not known apriori. In this section, we first introduce the backbone model we build for achieving accurate frame-to-sequence forecasting. The model is designed to learn the comple x patterns in multi- seasonal time series with tolerance to pollution in the training data. Then we illustrate how to make use of the predictions (from multiple source frames) made to compute L TI. Finally , we propose an anomaly scoring algorithm that uses a scoring function to chronologically calculate anomaly probability for each frame based on L TI. A. Pr ediction Model T o ef fectively learn and accurately predict local sequences in multi-seasonal time series, we adopt a combinatorial backbone model composed of a decomposition module and an inference module. Recurrent Neural Network (RNN) is an ideal netw ork to implement the inference module of our prediction model. RNNs (including mutations such as Long Short T erm Memory (LSTM) and Gated Recurrent Unit (GR U)) are usually applied as end-to-end models (e.g., [26] [27]). Howe ver , a major limitation of them is the dif ficulty in learning complex sea- sonal patterns in multi-seasonal time series. Even though the accuracy may be impro ved by stacking more hidden layers and increasing back propagation distance (through time) during training, it could cause prohibitive training cost. In view of this, we propose to include the seasonal features of the input data explicitly as the input of the neural network. This is achieved by conducting time series decomposition before running the prediction model, which is the purpose of the decomposition module. The resulting seasonal features can be regarded as the outcome of feature engineering. T echnically speaking, seasonal features are essentially the ”seasonal terms” decomposed from each channel of the tar get time series. W e use Pr ophet [29], a frame work based on the decomposable time series model [28], to extract the channel-wise seasonal terms. Let X ( c ) denote the c -th channel of time series X , and X ( c ) ( t ) the t -th record of the channel. The outcome of time series decomposition for channel c is formulated as below: X ( c ) ( t ) = g c ( t ) + s c ( t ) + h c ( t ) + (9) where g c ( t ) is the trend term that models non-periodic changes, s c ( t ) represents the seasonal term that quantifies the seasonal effects. h c ( t ) reflects the effects of special oc- casions such as holidays, and is the error term that is not accommodated by the model. For simplicity , we in this paper only consider daily and weekly seasonal terms as additional features for the inference module of our model. Pr ophet relies on Fourier series to model multi-period seasonality , which enables the fle xible approximation of an y periodic patterns with arbitrary length. The underlying details can be referred to [29]. Separating seasonal terms from original frame v alues and using them as additional features ef fectively improve RNN from the following perspecti ves. First, explicit input of sea- sonal terms helps reduce the dif ficulty of learning comple x seasonal terms in RNN. The extracted seasonal terms quantify seasonal ef fects. Second, time cost of training is expected to decrease as we can apply the Truncated Back Propagation Through Time (TBPTT) with a distance much shorter than the length of periodicity . Besides, the series decomposition process is very efficient, which will be demonstrated later by experiments. The top part of Fig. 2 shows the architecture of our backbone prediction model. In the prediction model, a stacked GR U network is implemented as the inference module, which takes as input the raw features of a frame concatenated with its seasonality features. W e demonstrate the effecti veness of this backbone model in Section V -A. B. Computing LTI based on Pr edictions When we calculate Local T rend Inconsistency (L TI) in Eq. (6), we are actually measuring the distance between a local sequence and an ensemble of its predictions by a well trained backbone prediction model. The workflo w of our on- line anomaly detection method includes three main steps: i) feed e very arriving frame into the prediction model and continuously gather its output of predicting future frames, ii) organize the frame predictions by their sources (i.e., the frames THIS IS A PREPRINT VERSION OF THE WORK 10.1109/TKDE.2020.3035685 PUBLISHED IN THE IEEE TKDE BY ©IEEE 7 which made the forecast) and concatenate them into local sequences, and iii) compute L TI of the newly arrived frame according to Eq. (6). Fig. 2 demonstrates the entire process, in which L TI of a frame is con verted to a score of abnormality using the algorithms to be introduced later . C. Anomaly Scoring In theory , the v alues of LT I ( t ) can be directly used to score frame t in terms of its abnormality . Ho wev er , the range of this metric is application-specific. So we further dev elop a measure that can represent the probability of data anomaly . Specifically , we define a logistic mapping function to con vert the value of LT I ( t ) to a probabilistic value: Φ( x ) = 1 1 + e − k ( x − x 0 ) (10) where k is the logistic growth rate and x 0 the x-value of the function’ s midpoint. The left part of Fig. 3 sho ws the shapes of Φ( · ) with different values of k when x 0 is set to 0.5. The shape of Φ( · ) becomes steeper as k increases. W e will introduce how to determine the optimal values of k and x 0 later . Now we define the probabilistic anomaly score of frame t as below: AS ( t ) = Φ( LT I ( t )) (11) The reason why we use Eq. (10) to map LT I ( t ) to AS ( t ) are three folds. First, we find that the LT I ( t ) v alues are clustered together closely (top right of Fig. 3), which means that the difference in LT I ( t ) values between normal and abnormal frames are not significant. This makes it difficult to differentiate them in practice although we can do so in theory . The right part of Fig. 3 illustrates the situation where we map raw LT I ( t ) v alues to AS ( t ) . It can be seen from the figure that the value of anomaly scores are better dispersed leaving a clearer divide between normal data and (potential) anomalies. For example, the red line we draw separates out roughly 10 percent of potential anomalies with high scores. Second, as discussed in the pre vious section, our scheme makes a series of forecast from different sources for the tar get, which establishes a common e xpectation for the target. The challenge is that there may exist anomalous sources, from which the forecast made is unreliable. Thus we hav e to differentiate the quality of the predictions by specifying lar ge weights (i.e., the P ( i ) in Eq. 6) for normal sources and small weights for the sources that are likely to be abnormal. W ith the function Φ( · ) to disperse the LT I ( t ) v alues (by mapping them into AS ( t ) , the impact difference between normal and abnormal frames is magnified. Last but not the least, we find that the actual values of LT I ( t ) depend on particular applications that our detection scheme is applied to. After mapping, the AS ( t ) values becomes less application-dependent, making it possible to set a uni versal anomaly threshold. This is similar to the scenario of determining the unusual e vents if the samples follow the normal distribution: the values lying beyond two standard de viations from the mean are often regarded as unusual. Considering the second reason discussed abov e, we replace P ( i ) in Eq. (6) with 1 − AS ( i ) where i = t − L, t − L + 1 , ..., t − 1 . Consequently , LT I ( t ) is reformulated as: LT I ( t ) = 1 Z t t − 1 X i = t − L (1 − AS ( i )) · W LS D ist S ( i + 1 , t ) , S i ( i + 1 , t ) (12) where Z t is the normalization factor reformulated as P t − 1 i = t − L (1 − AS ( i )) and 1 − AS ( i ) represents the probability that frame i is normal. The function Φ( · ) contains two parameters, k and x 0 . The values of these two parameters need to be set before the func- tion can be used to calculate the anomaly . Since x 0 is supposed to the midpoint of x , we set x 0 to be mean( LT I ) . W e set k to c/ stdev ( LT I ) ( stdev( LT I ) is the standard de viation of LT I , and c is a constant multiplier). The purpose of the mapping function is to disperse the L TI values that are densely clustered. On the one hand, the standard deviation stdev( LT I ) can be used to represent how densely the L TI values reside around the mean. The lower the v alue of stdev ( LT I ) , the more closely the L TI values are clustered. On the other hand, k represents how steep the middle slope of the logistic mapping function is. The greater k is, the steeper the logistic mapping function is. The more densely clustered the L TI values are, the steeper the logistic function needs to be in order to disperse those values. Therefore, for a set of L TI values with lower deviation, a bigger v alue should be set for k . Instead of setting the values of k and x 0 manually , we propose an automated approach in this work to determine their values. More specifically , we design an iterativ e algorithm. The algorithm runs on a reference time series which is a portion of the training data. The algorithm is outlined in Algorithm 1. Algorithm 1: Iterativ e procedure for unparameterizing Φ( · ) Input : prediction span L , reference series length r , predicted local sequences S i ( i + 1 , i + L ) for i ∈ [0 , r − 1] Output: k , x 0 k ← 1 . 0 , x 0 ← 0 . 5 AS ( i ) ← 0 for all i ∈ [0 , r − 1] while con ver gence criterion is not satisfied do for t ← L to r − 1 do compute LT I ( t ) via Eq. (12) compute AS ( t ) via Eq. (11) end k ← c stdev( LT I ) , x 0 ← mean( LT I ) end In Algorithm 1, parameters k and x 0 are set to 1 . 0 and 0 . 5 initially , respectiv ely . Note that it does not matter much what the initial values of k and x 0 are. When Algorithm 1 is run on the reference time series, L TI for each frame of the reference series is calculated. The values of k and x 0 will con verge to c/ stdev( LT I ) and mean( LT I ) ev entually . In the THIS IS A PREPRINT VERSION OF THE WORK 10.1109/TKDE.2020.3035685 PUBLISHED IN THE IEEE TKDE BY ©IEEE 8 Fig. 2. An overview of the proposed prediction-driven anomaly detection framework for the time series, which uses a seasonality augmented GRU network as the backbone model to support the abnormality scoring based on Local Trend Inconsistency (L TI). Fig. 3. The mapping function Φ( · ) we use for anomaly scoring (left), and the dispersion effect by mapping LT I values (top right) to anomaly scores (bottom right) with Φ( · ) . algorithm, we set a con vergence criterion, in which both k and x 0 change by less than 0.1% since last update. In each loop, the algorithm computes LT I ( t ) and AS ( t ) along the reference series for each frame t . After each loop, we update k and x 0 and check if the criterion is met. W ith the anomaly scoring function AS ( · ) and the trained backbone model for the target series, we no w present our Anomaly Detection based on Local T rend Inconsistency (AD- L TI). Assume we are detecting the anomaly for frame t , the pseudo-code of our on-line detection procedure is described in Algorithm 2. The information required for detection at frame t includes frame t itself, anomaly scores of previous frames, and the Algorithm 2: Anomaly Detection based on L TI Input : current frame t , prediction span L , previous frames from t − L to t − 1 , AS ( i ) for i ∈ [ t − L, t − 1] Output: AS ( t ) for i ← t − L to t − 1 do use the proposed prediction model to forecast S i ( i + 1 , t ) compute W LS D ist S ( i + 1 , t ) , S i ( i + 1 , t ) end compute LT I ( t ) according to Eq. (12) compute AS ( t ) according to Eq. (11) predicted local sequences ending at t , which is the out- put of our backbone prediction model. T o analyze the time complexity of Algorithm 2, let m denote the number of dimensions of a frame (i.e., channels of the time series) and L the prediction span, which is a hyper -parameter shared by the backbone prediction model and the detection algorithm. W ithout parallelization, it takes O ( m ) to calculate D F D ist between each pair of frames, so the time cost for obtaining W LS D ist between two local sequences is O ( Lm ) . Therefore, the time complexity of detection at a single frame t is O ( L 2 m ) since L sources of forecast are used (see Eq. 12). As analyzed in Section III, the complexity can be reduced to O ( m + L ) with the proper parallelization. V . E X P E R I M E N T S In this section, we first e valuate the effecti veness of our backbone prediction model. Then we compare AD-L TI with the existing anomaly detection algorithms in sensitivity and specificity (using the A UC metric). THIS IS A PREPRINT VERSION OF THE WORK 10.1109/TKDE.2020.3035685 PUBLISHED IN THE IEEE TKDE BY ©IEEE 9 W e set up our experiments on a machine equipped with a dual-core CPU (model: Intel Core i5-8500, 3.00 GHz), a GPU (model: GTX 1050Ti) and 32GB memory . The inference module of our backbone model is implemented on Pytorch (version: 1.0.1) platform and the decomposition module is implemented using Prophet (version: 0.4) released by Face- book. W e select three datasets for ev aluation. CalIt2 and Dodgers Loop Sensor are two public datasets published by the Uni versity of California Irving (UCI) and av ailable in the UCI machine learning repository . Another dataset we use is from the priv ate production en vironment of a cyber-security company , which is the collaborator of this project. This dataset collects the server logs from a number of clusters (owned by other third-party enterprises) on a regular basis. The dataset is referred to as the Server Log dataset in this paper . CalIt2 Dataset CalIt2 is a multiv ariate time series dataset containing 10080 observations of two data streams corresponding to the counts of in-flow and out-flow of a building on UCI campus. The pur- pose is to detect the presence of an ev ent such as a conference and seminar held in the building. The timestamps are contained in the dataset. The original data span across 15 weeks (2520 hours) and is half-hourly aggreg ated. W e truncated the last 120 hours and conducted a simple processing on the remaining 2400 hours of data by making it hourly-aggregated. The CalIt2 dataset is provided with annotations that label the date, start time and end time of ev ents ov er the entire period. There are 115 anomalous frames (4.56% contamination ratio) in total. In our experiment, labels are omitted during training (because our prediction model forecasts local sequences of frames) and will only be used for e valuating detecting results. Server Log Dataset The Server Log dataset is a multi-channel time series with a fixed interv al between two consecutiv e frames. The dataset spans from June 29th to September 4th, 2018 (1620 hours in total). The raw data is provided to us in form of separate log files, each of which stores the counts of a Linux server ev ent on an hourly basis. The log files record the in vocations of fiv e different processes, which include CR OND, RSYSLOGD, SESSION, SSHD and SU. Each process represents a channel of observing the server . W e pre-processed the data by aggre- gating all the files to form a fi ve-channel time series. Fig. 4 shows the time series of all fiv e channels. Currently , the company relies on security technicians to observe the time series and spot the potential anomalies, which might be caused by the security attacks. The aim of this project is to develop the automated method to spot the potential anomalies and quantify them at real time as the process in vocations are being logged in the server . Anomalous ev ents such as external cyber attacks exist in the Server Log dataset, but the labels are not av ailable. W e acquired the manual annotations for the test set from the technicians in the company . T otally 76 frames are labeled as anomalies in the test set, equiv alent to a contamination ratio of 14.6%. Dodgers Loop Sensor Dataset Dodgers Loop Sensor is also a public dataset a vailable in the UCI data repository . The data were collected at the Glendale on-ramp for the 101 North free way in Los Angeles. The sensor is close enough to the stadium for detecting unusual traffic after a Dodgers game, but not so close and heavily used by the game traf fic. T raffic observations were taken over 25 weeks (from Apr . 10 to Oct. 01, 2005) with date and timestamps provided for both data records and ev ents (i.e., the start and end time of games). The raw dataset contains 50400 records in total. W e pro-processed the data to make it an hourly time series dataset. Fig. 4. The Server Log time series dataset A. Evaluating Backbone Model W e trained our prediction model on the datasets separately to ev aluate its accuracy as well as the impact of seasonal terms extracted by the decomposition module. W e split the datasets into training, validation and test sets. On CalIt2, the first 1900 frames were used for training and the follo wing 500 for testing. On the Serv er Log dataset, 1100 frames for training and 520 for test. On Dodgers Loop, 3000 records for training and 1000 for test. 300, 300, and 500 frames were used for validation on CalIt2, Server Log and Dodgers Loop, respectively . The proposed model uses Pr ophet to implement the decom- position module and a stacked GR U network to implement the prediction module. W e extracted daily and weekly terms for each channel. More specifically , for each channel we generated two mapping lists after fitting the data by Prophet . One list contains the readings at each of 24 hours in a day , while the other list includes the readings at each of 7 days in a week. Fig. 5 shows an example of the mapping lists. The v alues of seasonal terms are different for CalIt2, Server Log and Dodgers datasets, but the resulting mapping lists share the same format as the e xample shown in Fig. 5. Based on the mapping lists and the timestamp field provided in the data we build our prediction network with seasonal features as additional input. T able II sho ws the network structures adopted for each of the datasets, where L is the maximum length of local sequences as a hyper -parameter . tanh is used as the acti vation function and Mean Square Error (MSE) loss as the loss function. Dropout is not enabled and THIS IS A PREPRINT VERSION OF THE WORK 10.1109/TKDE.2020.3035685 PUBLISHED IN THE IEEE TKDE BY ©IEEE 10 Fig. 5. An example of seasonal terms mapping in which the numerical values quantify seasonal impacts we set a weight decay of 6 e − 6 during the training to prev ent ov er-fitting. W e use Adam [30] as the optimizer with the initial learning rate set to 0.001. T ABLE II N E T W O R K S T RU C T U R E S O F T H E I N F E R E N C E PA RT F O R P R E D I C T I N G L O C A L S E Q U E N C E O F L E N G T H L Dataset type # of features (raw+seasonal) topology CalIt2 GR U 2+4 [6 , 20 × 2 , 2 L ] Server log GRU 5+10 [15 , 20 × 3 , 5 L ] Dodgers GR U 1+2 [3 , 20 × 2 , L ] In order to ev aluate the impact of the concatenated seasonal features, we also implemented a baseline GRU network with the same structure and hyper-parameters as our inference mod- ule except that the seasonal features are not included. W e also consider the impact of a critical hyper -parameter , time steps , in training the inference networks. The larger the time steps , the longer the gradients back-propagate through time and the more time-consuming the training process becomes. W e set different values of time steps for the training of both our inference network and the baseline network to in vestigate the impact of seasonal features. The prediction span L is fixed to 5 (hours). The results are summarized in T able III. In T able III, the decomposition time and training time refer to the fitting/training time spent by the decomposition module and the inference module, respectiv ely . W e ev aluated three cases where time steps takes different values of 24 (daily seasonality length), 72 or 168 (weekly seasonality length). Mean squared error (MSE) is calculated on the normalized test data to reflect the model quality . From the results we can first observe that it only takes the decomposition module of our model a few seconds to extract the seasonal terms from all the channels. More importantly , we find that augmenting the GR U model with seasonal terms (ST) makes the backbone model (GR U+ST) more complicated in structure, but it does not increase the training cost while resulting in much better accuracy – it outperforms the baseline GR U network (without Seasonal T erms) significantly in accuracy (i.e., lo wer error). The accuracy increases by more than 20 percent on CalIt2 and by from 35 to nearly 50 percent on the Server Log dataset. B. Evaluating AD-LTI In this section we ev aluate our unsupervised anomaly detection algorithm AD-L TI. W e also implement a number of representative related algorithms for comparison. These baseline algorithms include One Class Support V ector Ma- chine (OCSVM) [4], Isolation Forest (iForest) [8], Piece wise Median Anomaly Detection [32], LSTM-based Fault Detec- tion (LSTM-FD) [33] and LSTM-AD, which is LSTM-based anomaly detection scheme using multiple forecasts [34]. OCSVM is a mutation of SVM for unsupervised outlier detection. OCSVM shares the same theoretical basis as SVM while using an additional argument ν as an anomaly ratio- related parameter . Isolation forest is an outlier detection ap- proach based on random forest in which isolation trees are built instead of decision trees. An a priori parameter cr is required to indicate the contamination ratio. Both OCSVM and Isolation Forest are embedded in the Scikit-learn package [31]. Piece wise Median Anomaly Detection is a windo w-based algorithm that splits the series into fixed-size windo ws within which anomalies are detected based on a decomposable series model. LSTM-FD is a typical prediction-driv en approach that detects anomalies in time series by simply analyzing (predic- tion) error distribution. They adopt a frame-to-frame LSTM network as their backbone model. Similar to our approach, LSTM-AD also uses a multi-source prediction scheme (we discussed its working in Section II). W e use the A UC metric to measure the effecti veness. Area Under the Curve, abbreviated as A UC, is a commonly used metric for comprehensively assessing the performance of binary classifiers. ”The curve” refers to the Receiv er Operator Characteristic (R OC) Curv e, which is generated by plotting the true positiv e rate (y-axis) against the false positiv e rate (x- axis) based on the dynamics of decisions made by the target classifier (the anomaly detector in our case). The concept of R OC and A UC can reveal the ef fectiv eness of a detection algorithm from the perspectiv es of both specificity and sen- sitivity . Another reason why we choose A UC is because it is a threshold-independent metric. AD-L TI does not perform classification but presents the detection results in the form of probability . Hence metrics such as precision and recall cannot be calculated unless we consider the threshold as an extra parameter , which violates our aim of designing a generic scheme. W e ev aluate AD-L TI and the baseline algorithms on these three datasets. Parameters for baseline algorithms are set to the default or the same as in the original papers if they were suggested. For LSTM-FD, LSTM-AD and AD-L TI, time steps is set to 72 (hours). As shown in Fig. 6, Fig. 7 and Fig. 8, we draw three groups of 1-D heatmaps to compare the detection decisions made by each algorithm (labelled on the y-axis) with the ground truth on each test dataset. Normal and anomalous frames are marked by green and red, respectively , on the map of ground truth. Frames are also marked by each anomaly detection algorithm with scores, which are reflected using a range of colors from green to red. Anomaly e vents are sparse in Calit2 dataset (Fig. 6) while comparatively more anomalous data point exist in the Server Log (Fig. 7). From the figures, we can see that most of the “hotspots” are captured by our scheme and its false alarm rate is comparatively low . Notably we also observe that OCSVM produces a large number of false alarms on Calit2 THIS IS A PREPRINT VERSION OF THE WORK 10.1109/TKDE.2020.3035685 PUBLISHED IN THE IEEE TKDE BY ©IEEE 11 T ABLE III C O M PA R I N G G RU + S T ( T H E P RO P O S E D B AC K B O N E M O D E L AU G M E N T E D W I T H S E A S O NA L F E ATU R E S ) W I T H T H E V A N I L L A G RU I N A C C U R AC Y , W H I C H I S I N D I C T E D B Y T H E L O W E S T T E S T M S E ( M E A N S Q U A R E E R RO R ) AC H I E V E D U N D E R D I FF E R E N T T R A I N I N G S E T T I N G S O F time steps ( ts ) . I N E AC H G R O U P O F C O M PA R I S O N , B OT H M O D E L S H A V E C O N V E R G E D A N D T R A I N E D F O R T H E S A M E N U M B E R O F E P O C H S . Calit2 Dataset Server Log Dataset Dodgers Loop Dataset GR U+ST GR U GR U+ST GR U GR U+ST GR U seasonal term decomp. time 2.7s - 6.6s - 2.9s - ts =24 T est MSE 0.0068 0.0092 0.0020 0.0039 0.0098 0.0113 T raining time to con verge 173.7s 176.7s 460.4s 464.9s 550.2s 552.3s ts =72 T est MSE 0.0066 0.0089 0.0013 0.0020 0.0066 0.0085 T raining time to con verge 180.2s 185.6s 468.3s 436.9s 628.6s 632.9s ts =168 T est MSE 0.0067 0.0085 0.0018 0.0033 0.0072 0.0086 T raining time to con verge 169.8s 174.5s 421.0s 446.5s 709.9s 752.9s but fails to spot most of the anomaly frames on the Server Log dataset. The Piecewise method misses a lot of anomalies, while the iForest method tends to mistakenly label a large portion of normal data as anomalies. LSTM-AD produced the results close to our method on the Dodgers dataset, but rendered a large portion of false alarms on other two datasets. T o giv e a more intuitive view , we plot the ROC curves of AD-L TI and the baseline algorithms on the test data in Fig. 9, Fig. 10 and Fig. 11. Fig. 6. Heatmaps of detection decisions made by AD-L TI and baseline algorithms compared with the ground truth on CalIt2 dataset Fig. 7. Heatmaps of detection decisions made by AD-L TI and baseline algorithms compared with the ground truth on Server Log dataset Fig. 8. Heatmaps of decision results by AD-L TI and baseline algorithms compared with the ground truth on Dodgers Loop dataset From the ROC curves we can observ e that AD-L TI produced the most reliable decisions as its curve is the closest to the Fig. 9. ROC curves of anomaly detection algorithms on Calit2 dataset Fig. 10. R OC curves of anomaly detection algorithms on Server Log dataset top-left corner for all of the three datasets, especially on the Server Log Dataset (see Fig. 10), which features the complex seasonality in each channel. The detection dif ficulty on the Server Log dataset appears to be harder for other existing algorithms (the reason is explained later) - none of other algorithms achie ve high true positi ve rate at a lo w false positive rate. W e further calculate the corresponding A UC for each algorithm on both datasets. The resulting A UC v alues are shown in T able IV. As sho wn in T able IV, AD-L TI achiev es the highest A UC values of 0.93, 0.977 and 0.923 on CalIt2, Server Log dataset and the Dodgers Loop datasets, respectiv ely . On CalIt2, the A UC v alues of the baseline algorithms are between 0.8 and 0.9 with the only exception of OCSVM when nu is set to 0.05 - the approximately actual anomaly rate (0.046, precisely) for CalIt2. This to some degree indicates that OCSVM is sensitiv e THIS IS A PREPRINT VERSION OF THE WORK 10.1109/TKDE.2020.3035685 PUBLISHED IN THE IEEE TKDE BY ©IEEE 12 Fig. 11. R OC curves of anomaly detection algorithms on Dodgers Loop dataset T ABLE IV C O M PA R I N G T H E AU C V A L U E S O F A N O M A L Y D E T E C T I O N A L G O R I T H M S O N C A L I T 2 , S E RV E R L O G A N D D O D G E R S L O O P DAT A S E T S W H E R E I N AC T U A L C O N TA M I N A T I O N R A T I O S ( C R ) A R E A P P R OX I M A T E LY 0 . 0 5 , 0 . 1 5 A N D 0 . 1 0 , R E S P E C T I V E L Y . CalIt2 Server Log Dodgers Loop OCSVM [4](default) 0.876 0.677 0.591 OCSVM ( nu = CR) 0.708 0.672 0.525 iForest [8](default) 0.891 0.756 0.535 iForest ( cr = CR) 0.877 0.761 0.518 Piecewise AD [32] 0.833 0.721 0.751 LSTM-FD [33] 0.847 0.755 0.829 LSTM-AD [34]( L = L ∗ ) 0.900 0.793 0.859 AD-L TI ( L = L ∗ ) 0.935 0.977 0.923 to parameters. Anomaly detection is much more challenging on the Server Log dataset due to the increase in the number of channels, and the complexity in seasonality and uncertainty (e.g., channel SU is fairly unpredictable). As the result shows, the A UC v alues for all existing algorithms drop below 0.8 with the best of them, Isolation Forest, reaching 0.761 (with the contamination ratio cr set to 0.15), which could float as it is a randomized algorithm. Howe ver , the actual contamination ratio is hardly a priori knowledge in practical scenarios. W e also observed that prediction-driv en approaches (LSTM-FD, LSTM-AD and AD-L TI) significantly outperformed others on the Dodgers Loop dataset – this is mainly because of the presence of strong noise in the traffic data. The proposed AD-L TI algorithm makes the most reliable decisions in all of the tested scenarios. The main reasons are two-fold: from one perspectiv e, the underlying backbone model for AD-L TI is very accurate with the complement of seasonal features that ef fecti vely captures complex seasonality and mitigates the noise in raw data. From another perspective, AD-L TI is robust in scoring each frame because we lev erage multi-source forecasting and weight each prediction based on the confidence of the prediction source. AD-L TI has an important hyper-parameter L , which deter- mines both the prediction length for the backbone model and the maximum probe length for computing L TI. W e ev aluated our algorithm against LSTM-AD (which is also based on multiple forecasts) with different L v alues to inv estigate the impact of L on detection reliability and time ef ficiency . The result is summarized in T able V. From T able V we can see our method outperformed LSTM- AD and also observe dif ferent impacts of the probe windo w length L on dif ferent datasets. On CalIt2 and Dodgers, the impact of L on the detection reliability (rev ealed by A UC) is subtle, while on the Server Log dataset very lar ge L values sho w obvious ne gativ e ef fect on our scheme. The reasons behind these results are partly because as L becomes bigger ( L is set to 20 or above), the prediction made by the backbone model becomes less accurate, and partly because of the dilution of local information. In comparison, LSTM-AD is much more susceptible to the hyper-parameter L . Besides, as expected a longer probe length leads to the increased ov erhead in detection, which can be mitigated by running the scheme in parallel. Empirically , we recommend setting L to a value between 5 and 20 considering both detection reliability and efficienc y . V I . C O N C L U S I O N On-line detection of anomalies in time series has been cru- cial in a broad range of information and control systems that are sensiti ve to une xpected events. In this paper , we propose an unsupervised, prediction-driv en approach to reliably detecting anomalies in time series with complex seasonality . W e first present our backbone prediction model, which is composed of a time series decomposition module for seasonal feature extraction, and an inference module implemented using a GR U network. Then we define Local T rend Inconsistency , a nov el metric that measures abnormality by weighting local expectations from previous records. W e then use a scoring function along with a detection algorithm to con vert the LT I value into the probability that indicates a record’ s likelihood of being anomalous. The whole process can leverage the matrix operations for parallelization. W e e v aluated the proposed de- tection algorithm on three different datasets. The result shows that our scheme outperformed se veral representati ve anomaly detection schemes commonly used in practice. In the future we plan to focus on extending our work to address new challenges in large-scale, information-intensi ve distributed systems such as edge computing and IoT . W e aim to refine our method with scenario-oriented designs, for in- stance, detection in asynchronized streams sent by distributed sensors, and build a robust monitoring mechanism in order to support intelligent decisioning in these types of systems. A C K N O W L E D G E M E N T This work is partially supported by W orldwide Byte Se- curity Co. L TD, and is supported by National Natural Sci- ence Foundation of China (Grant Nos. 61772205, 61872084), Guangdong Science and T echnology Department (Grant No. 2017B010126002), Guangzhou Science and T echnology Pro- gram key projects (Grant Nos. 201802010010, 201807010052, 201902010040 and 201907010001), and the Fundamental Re- search Funds for the Central Uni versities, SCUT (Grant No. 2019ZD26). R E F E R E N C E S [1] Y ule, G. U. (1926). Why do we sometimes get nonsense-correlations between Time-Series?–a study in sampling and the nature of time-series. Journal of the royal statistical society , 89(1), 1-63. THIS IS A PREPRINT VERSION OF THE WORK 10.1109/TKDE.2020.3035685 PUBLISHED IN THE IEEE TKDE BY ©IEEE 13 T ABLE V AU C V A L U E S A N D D E T E C T I O N OV E R H E A D S ( I N M S P E R F R A M E ) U S I N G L S T M - A D A N D A D - L T I U N D E R D I FF E R E N T S E T T I N G S O F P RO B E L E N G T H L . B O T H M E T H O D S U S E M U LT I P L E F O R E C A S T S W I T H E A C H F R A M E B E I N G P R E D I C T E D F O R L T I M E S . Algorithm L Calit2 Dataset Server Log Dataset Dodgers Loop Dataset A UC overhead(ms/frame) A UC ov erhead(ms/frame) A UC overhead(ms/frame) LSTM-AD [34] L = 5 0.900 0.126 0.793 0.129 0.859 0.123 L = 10 0.883 0.142 0.753 0.148 0.778 0.142 L = 20 0.847 0.174 0.596 0.183 0.813 0.174 L = 30 0.813 0.206 0.505 0.215 0.815 0.205 AD-L TI L = 5 0.911 0.189 0.977 0.282 0.912 0.196 L = 10 0.912 0.353 0.925 0.399 0.923 0.316 L = 20 0.935 0.706 0.845 0.784 0.906 0.707 L = 30 0.912 1.125 0.784 1.461 0.915 1.110 [2] Frisch, R., & W augh, F . V . (1933). Partial time regressions as com- pared with individual trends. Econometrica: Journal of the Econometric Society , 387-401. [3] Seiwell, H. R. (1949). The principles of time series analyses applied to ocean wav e data. Proceedings of the National Academy of Sciences of the United States of America, 35(9), 518. [4] Sch ¨ olkopf, B., W illiamson, R. C., Smola, A. J., Sha we-T aylor , J., & Platt, J. C. (2000). Support vector method for novelty detection. Proceedings of the 12th International Conference on Neural Information Processing Systems (NIPS’99), pp. 582-588. [5] Zhang, R., Zhang, S., Lan, Y ., & Jiang, J. (2008). Network anomaly detection using one class support vector machine. In Proceedings of the International MultiConference of Engineers and Computer Scientists (V ol. 1). [6] Maglaras, L. A., & Jiang, J. (2014, August). Ocsvm model combined with k-means recursive clustering for intrusion detection in scada systems. In 10th International conference on heterogeneous networking for quality , reliability , security and robustness (pp. 133-134). IEEE. [7] Shang, W ., Zeng, P ., W an, M., Li, L., & An, P . (2016). Intrusion detection algorithm based on OCSVM in industrial control system. Security and Communication Networks, 9(10), 1040-1049. [8] Liu, F . T ., T ing, K. M., & Zhou, Z. H. (2012). Isolation-based anomaly detection. ACM T ransactions on Knowledge Discovery from Data (TKDD), 6(1). [9] Radov anovi ´ c, M., Nanopoulos, A., & Ivanovi ´ c, M. (2014). Rev erse nearest neighbors in unsupervised distance-based outlier detection. IEEE transactions on knowledge and data engineering, 27(5), 1369-1382. [10] Calheiros, R. N., Ramamohanarao, K., Buyya, R., Leckie, C., & V ersteeg, S. (2017). On the effecti veness of isolation-based anomaly detection in cloud data centers. Concurrency and Computation: Practice and Experience, 29(2017)e4169. doi: 10.1002/cpe.4169 [11] Chan, P . K., & Mahoney , M. V . (2005, November). Modeling multiple time series for anomaly detection. In Fifth IEEE International Confer- ence on Data Mining (ICDM’05) (pp. 8-pp). IEEE. [12] Y e, L., & Keogh, E. (2009, June). Time series shapelets: a new primitiv e for data mining. In Proceedings of the 15th ACM SIGKDD international conference on Knowledge discovery and data mining (pp. 947-956). A CM. [13] Zakaria, J., Mueen, A., & Keogh, E. (2012, December). Clustering time series using unsupervised-shapelets. In 2012 IEEE 12th International Conference on Data Mining (pp. 785-794). IEEE. [14] Y eh, C. C. M., Zhu, Y ., Ulanova, L., Begum, N., Ding, Y ., Dau, H. A., ... & Keogh, E. (2018). T ime series joins, motifs, discords and shapelets: a unifying vie w that exploits the matrix profile. Data Mining and Knowledge Discovery , 32(1), 83-123. [15] Hou, L., Kwok, J. T ., & Zurada, J. M. (2016, February). Efficient learning of time series shapelets. In 13th AAAI Conference on Artificial Intelligence. [16] Gu, Z., He, L., Chang, C., Sun, J., Chen, H., & Huang, C. (2017). Dev eloping an ef ficient pattern discov ery method for CPU utilizations of computers. International Journal of Parallel Programming, 45(4), 853- 878. [17] Zhu, H., Gu, Z., Zhao, H., Chen, K., Li, C. T ., & He, L. (2018). Dev eloping a pattern discovery method in time series data and its GPU acceleration. Big Data Mining and Analytics, 1(4), 266-283. [18] W ei, L., Kumar , N., Lolla, V . N., K eogh, E. J., Lonardi, S., & Chotirat (Ann) Ratanamahatana. (2005, June). Assumption-Free Anomaly Detec- tion in T ime Series. In SSDBM (V ol. 5, pp. 237-242). [19] Huang, T ., Zhu, Y ., Wu, Y ., Bressan, S., & Dobbie, G. (2016). Anomaly detection and identification scheme for VM liv e migration in cloud infrastructure. Future Generation Computer Systems, 56, 736-745. [20] Hyndman, R. J., W ang, E., & Laptev , N. (2015, November). Large-scale unusual time series detection. In 2015 IEEE international conference on data mining workshop (ICDMW) (pp. 1616-1619). IEEE. [21] Li, J., Pedrycz, W ., & Jamal, I. (2017). Multivariate time series anomaly detection: A framework of Hidden Markov Models. Applied Soft Com- puting, 60, 229-240. [22] Chauhan, Sucheta and V ig, Lovekesh. Anomaly detection in ECG time signals via deep long short-term memory networks. In Data Science and Advanced Analytics (DSAA), 2015. 36678 2015. IEEE International Conference on, pp. 1–7. IEEE, 2015. [23] Malhotra, P ., Ramakrishnan, A., Anand, G., V ig, L., Agarwal, P ., & Shroff, G. (2016). LSTM-based encoder-decoder for multi-sensor anomaly detection. arXiv preprint [24] Ahmad, S., Lavin, A., Purdy , S., & Agha, Z. (2017). Unsupervised real- time anomaly detection for streaming data. Neurocomputing, 262, 134- 147. [25] Pascanu, R., Mikolov , T ., & Bengio, Y . (2013, February). On the diffi- culty of training recurrent neural networks. In International conference on machine learning (pp. 1310-1318). [26] T ang, X. (2019). Large-Scale Computing Systems W orkload Prediction Using P arallel Impro ved LSTM Neural Netw ork. IEEE Access, 7, 40525-40533. [27] Chen, S., Li, B., Cao, J., & Mao, B. (2018). Research on Agricultural En vironment Prediction Based on Deep Learning. Procedia computer science, 139, 33-40. [28] Harvey , A. & Peters, S. (1990), Estimation procedures for structural time series models, Journal of Forecasting, V ol. 9, 89-108. [29] T aylor, S. J., & Letham, B. (2018). Forecasting at scale. The American Statistician, 72(1), 37-45. [30] Kingma, D. and Ba, J. (2015) Adam: A Method for Stochastic Opti- mization. Proceedings of the 3rd International Conference on Learning Representations (ICLR 2015). [31] Pedregosa, F ., V aroquaux, G., Gramfort, A., Michel, V ., Thirion, B., Grisel, O., ... & V anderplas, J. (2011). Scikit-learn: Machine learning in Python. Journal of machine learning research, 12(Oct), 2825-2830. [32] V allis, O., Hochenbaum, J., & Kejariwal, A. (2014). A novel technique for long-term anomaly detection in the cloud. In 6th USENIX W orkshop on Hot T opics in Cloud Computing (HotCloud 14). [33] P . Filonov , A. Lavrentyev , A. V orontsov , Multivariate Industrial T ime Series with Cyber-Attack Simulation: Fault Detection Using an LSTM- based Predictive Data Model, NIPS Time Series W orkshop 2016, Barcelona, Spain, 2016. [34] Malhotra, P ., V ig, L., Shroff, G., & Agarwal, P . (2015, April). Long short term memory networks for anomaly detection in time series. In Proceedings of European Symposium on Artificial Neural Networks, Computational Intelligence and Machine Learning (ESANN 15’), pp. 89-94. [35] Shi, H., Y ang, J., Ding, M., & W ang, J. (2011). A short-term wind power prediction method based on wa velet decomposition and BP neural network. Automation of Electric Power Systems, 35(16), 44-48. [36] Gould, P . G., Koehler , A. B., Ord, J. K., Snyder, R. D., Hyndman, R. J., & V ahid-Araghi, F . (2008). Forecasting time series with multiple seasonal patterns. European Journal of Operational Research, 191(1), 207-222. [37] De Livera, A. M., Hyndman, R. J., & Snyder , R. D. (2011). Forecasting time series with complex seasonal patterns using exponential smoothing. Journal of the American Statistical Association, 106(496), 1513-1527. THIS IS A PREPRINT VERSION OF THE WORK 10.1109/TKDE.2020.3035685 PUBLISHED IN THE IEEE TKDE BY ©IEEE 14 [38] Hodge, V ., & Austin, J. (2004). A survey of outlier detection method- ologies. Artificial intelligence revie w , 22(2), 85-126. [39] Janssens, O., Slavko vikj, V ., V ervisch, B., Stockman, K., Loccufier, M., V erstockt, S., ... & V an Hoecke, S. (2016). Convolutional neural network based fault detection for rotating machinery . Journal of Sound and V ibration, 377, 331-345. [40] Ince, T ., Kiranyaz, S., Eren, L., Askar, M., & Gabbouj, M. (2016). Real- time motor fault detection by 1-D con volutional neural networks. IEEE T ransactions on Industrial Electronics, 63(11), 7067-7075. [41] Sabokrou, M., Fayyaz, M., Fathy , M., Moayed, Z., & Klette, R. (2018). Deep-anomaly: Fully conv olutional neural network for fast anomaly de- tection in crowded scenes. Computer V ision and Image Understanding, 172, 88-97. [42] Zheng, Y ., Liu, Q., Chen, E., Ge, Y ., & Zhao, J. L. (2014, June). T ime series classification using multi-channels deep conv olutional neural networks. In International Conference on W eb-Age Information Man- agement (pp. 298-310). Springer , Cham. [43] Rajan, J. J., & Rayner , P . J. (1995). Unsupervised time series classifi- cation. Signal processing, 46(1), 57-74. [44] L ¨ angkvist, M., Karlsson, L., & Loutfi, A. (2014). A re view of unsu- pervised feature learning and deep learning for time-series modeling. Pattern Recognition Letters, 42, 11-24. [45] Ahmed, M., Mahmood, A. N., & Hu, J. (2016). A surve y of network anomaly detection techniques. Journal of Network and Computer Ap- plications, 60, 19-31. [46] Holt, C. C. (2004). Forecasting seasonals and trends by exponentially weighted moving av erages. International journal of forecasting, 20(1), 5-10. [47] V an De Geijn, R. A., & W atts, J. (1997). SUMMA: Scalable univ ersal matrix multiplication algorithm. Concurrency: Practice and Experience, 9(4), 255-274. [48] Chen, J., Li, K., Deng, Q., Li, K., & Philip, S. Y . (2019). Distributed Deep Learning Model for Intelligent V ideo Surveillance Systems with Edge Computing. IEEE T ransactions on Industrial Informatics. [49] Chen, J., Li, K., Bilal, K., Metwally , A. A., Li, K., & Y u, P . (2018). Parallel protein community detection in large-scale PPI networks based on multi-source learning. IEEE/A CM transactions on computational biology and bioinformatics. [50] Chen, J., Li, K., Bilal, K., Li, K., & Philip, S. Y . (2018). A bi- layered parallel training architecture for large-scale conv olutional neural networks. IEEE transactions on parallel and distributed systems, 30(5), 965-976. [51] Duan, M., Li, K., Liao, X., & Li, K. (2017). A parallel multiclassifi- cation algorithm for big data using an extreme learning machine. IEEE transactions on neural networks and learning systems, 29(6), 2337-2351. [52] Chen, C., Li, K., Ouyang, A., T ang, Z., & Li, K. (2017). Gpu-accelerated parallel hierarchical extreme learning machine on flink for big data. IEEE Transactions on Systems, Man, and Cybernetics: Systems, 47(10), 2740-2753.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment