Pseudo-Zernike Moments Based Sparse Representations for SAR Image Classification

We propose radar image classification via pseudo-Zernike moments based sparse representations. We exploit invariance properties of pseudo-Zernike moments to augment redundancy in the sparsity representative dictionary by introducing auxiliary atoms. …

Authors: Shahzad Gishkori, Bernard Mulgrew

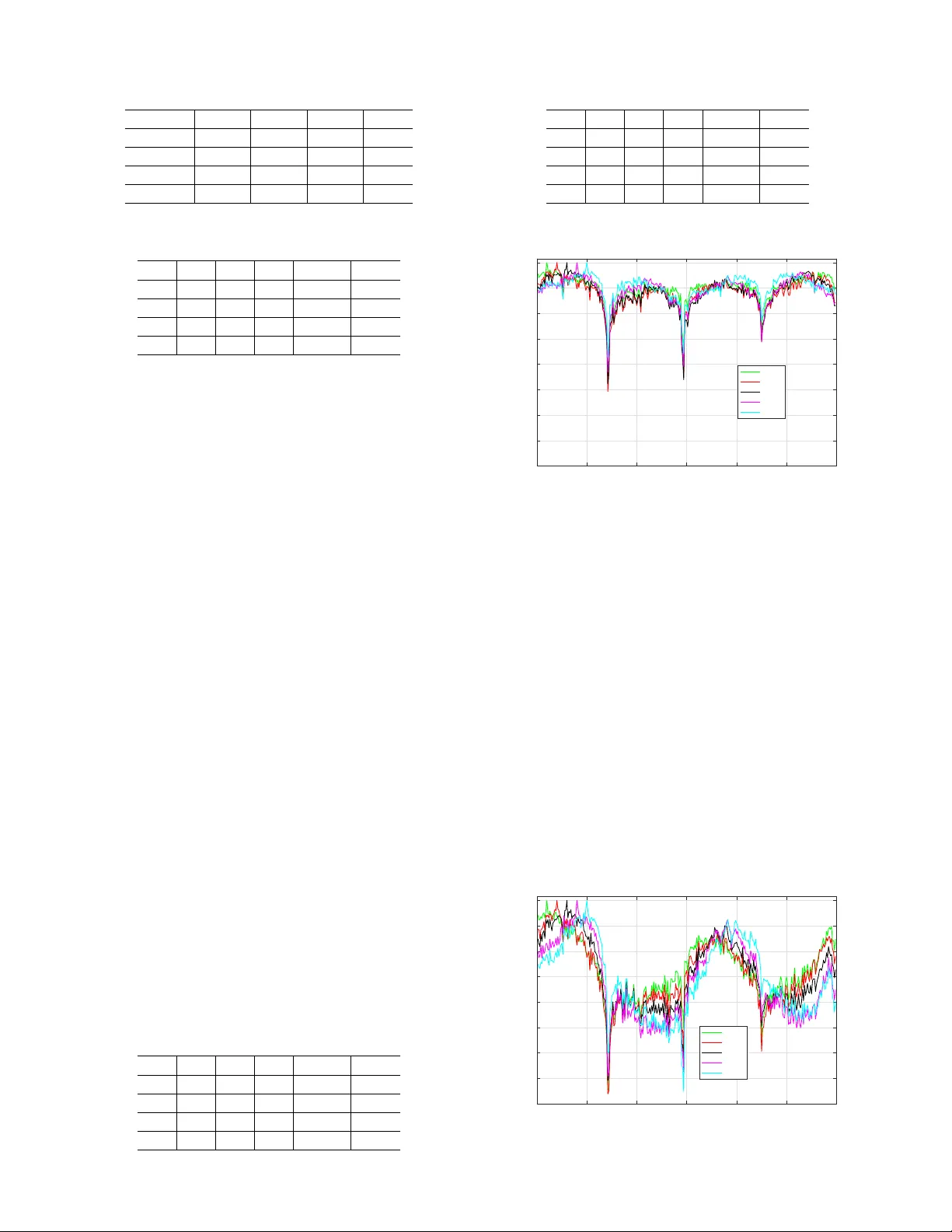

Abstract: We propose radar image classification via pseudo-Zernike moments based sparse representations. We exploit invariance properties of pseudo-Zernike moments to augment redundancy in the sparsity representative dictionary by introducing auxiliary atoms. We employ complex radar signatures. We prove the validity of our proposed methods on the publicly available MSTAR dataset.

Index terms: Sparse representations, pseudo-Zernike moments, SAR image classification, complex signatures

S. Gishkori and B. Mulgrew are with the Institute for Digital Communications (IDCOM), The School of Engineering, The University of Edinburgh, UK. Emails: {s.gishkori, b.mulgrew}@ed.ac.uk. This work was supported by Jaguar Land Rover and the UK-EPSRC grant EP/N012240/1 as part of the jointly funded Towards Autonomy: Smart and Connected Control (TASCC) Programme.

I. INTRODUCTION

Synthetic aperture radar (SAR) can provide all-weather imagery with a very high resolution [1]. This has naturally led to using SAR for the purpose of automatic target recognition or classification. Initial usage was military related. However, SAR imaging with the aim of classification is making very quick strides for automotive usage as well [2].

Traditionally, a number of techniques are used for SAR image classification. Here, we briefly mention a couple of them. Template based classification [3] requires generation of a large number of templates for each target and then matching the test image with those templates in an exhaustive search manner. It is an effective linear approach. However, it is computationally quite expensive. Among the nonlinear approaches, the support vector machine classifier (SVC) has been quite popular [4]. It is a large margin classifier and it can outperform the template based classifier. However, this approach is dependent upon accurate estimation of the pose angle, which involves an extra preprocessing stage.

Recent trends in classification are based on sparse representations, also known as sparse coding [5], [6]. Initially, efforts were made to find or use a unified dictionary for all the classes, see, e.g., [7], [8] and references therein. Instead of using a single dictionary for all the classes, [9] proposed to use unit normalised measurements of the objects as the columns of an overcomplete dictionary. Coding is done through an $\ell_1$-norm minimisation problem and the classification is based on a least-squares metric w.r.t. the group of columns specific to a particular class object. This is known as the sparse representation based classifier (SRC). The ease of formulating a dictionary by using the measurements of the class objects directly made SRC a favourable choice for classification in a wide range of fields. In SAR image classification, SRC was used in [10] from the perspective of class manifolds [11]. A class manifold is defined over the set of measurements for a particular class object, and the SAR image is claimed to lie in that manifold by using the fact that a linear representation can be provided to a nonlinear manifold if a local region of the manifold is considered [12]. This local region of the manifold gives the basis for sparse representation of a test image over that manifold. Therefore, it obviates the need for rigorous preprocessing as well as pose angle estimation. Dimensionality reduction can be achieved via random projections. However, it can result in a performance loss. It was shown in [9], [10] that SRC can outperform linear SVC (LSVC), i.e., when a linear kernel is used. However, SRC is primarily based upon sparse reconstruction or coding, and it does not involve the classification aspect during the coding process.
A number of papers have been written to incorporate this aspect in sparse representations. Some discriminative dictionary learning techniques have been proposed in [13], [14]. Similarly, joint dictionary learning and encoding has been proposed in [15]. Although these methods provide good performance, dictionary learning, whether discriminative or not, is a computationally intensive process.

Moments based image representations have been successfully used over many decades [16], [17]. The basic idea is to derive image features which are scale-, shift- and rotation-invariant by using nonlinear combinations of the regular moments (also called geometric moments). However, the gains have been limited, primarily due to the non-orthogonality of regular moments. Orthogonal moments, e.g., Legendre moments, Zernike moments and pseudo-Zernike (PZ) moments [18], [19], have been a popular substitute in pattern recognition. Among these, PZ-moments stand apart both in terms of generating the maximum number of invariant moments as well as in terms of performance regarding noise rejection. PZ-moments have been used for radar automatic target recognition in [20] with a nearest neighbour classifier. Similarly, in [21], PZ-moments have been used for radar classification based on micro-Doppler signatures, with an SVC. However, in both these cases, the emphasis has been on feature extraction w.r.t. PZ-moments and not on the choice of an optimal classifier.

Contributions. In this paper, we propose using PZ-moments in combination with the SRC framework (PZ-SRC), in order to gain from both optimal feature extraction as well as optimal classification. By using a finite number of PZ-moments, we reduce the dimensionality of the problem. Due to invariance properties of the PZ-moments, we obtain good performance, albeit in the low dimensional setting.
We also introduce auxiliary atoms in the dictionary to increase the redundancy of information, which further exploits the invariance properties of the PZ-moments. Thus, information is better localised in individual class manifolds. This results in a further improvement in classification performance. Note, this also forms a unique contribution to the SRC framework in general. In order to utilise both the magnitude as well as the phase information of the complex radar signatures, we fuse the two parameters by a simple averaging mechanism (see [22] for details on the fusion mechanisms). This results in even more informative radar signatures, with a direct positive impact on the classification performance. Note, a similar approach has been used in [23]. However, the feature extraction there is based on regular moments and the authors do not use auxiliary atoms. Now, in order to encode the test image in our proposed framework of PZ-SRC, we use the state-of-the-art iterative hard thresholding (IHT) algorithm [24]. IHT provides very fast convergence as well as accuracy (in comparison to the approach of [9]), both of which are crucial in real-time radar image classification applications. We test our proposed methods on the publicly available MSTAR dataset.

Organisation. Section II gives the basics of PZ-moments, Section III briefly describes the SRC method, Section IV details our proposed method of PZ-SRC, Section V provides simulation results and conclusions are given in Section VI.

Notations. Matrices are in upper case bold while column vectors are in lower case bold, $(\cdot)^T$ denotes transpose, $[\mathbf{x}]_i$ is the $i$th element of $\mathbf{x}$, $\hat{\mathbf{x}}$ is the estimate of $\mathbf{x}$, $\triangleq$ defines an entity, and the $\ell_p$-norm is denoted as $\|\mathbf{x}\|_p = \left(\sum_{i=0}^{N-1} |[\mathbf{x}]_i|^p\right)^{1/p}$.

II. PSEUDO-ZERNIKE MOMENTS

Let a piecewise continuous function $s(x, y)$ (with bounded support) be the intensity function of a 2-D real image in Cartesian coordinates. The regular moments of $s(x, y)$ can be defined as

$$\mu_{p,q} = \int_x \int_y x^p y^q \, s(x, y) \, dx \, dy \tag{1}$$

where $\{p, q\} \in \mathbb{Z}_+$ and $p + q$ is the degree of the moments. Note, (1) represents the projection of $s(x, y)$ on the monomial $x^p y^q$. Since $\{x^p y^q\}$ is not an orthogonal set, $\mu_{p,q}$ are not independent moments. In contrast, the PZ-moments are generated from a set of orthogonal polynomials. We refer to these polynomials as PZ-polynomials. The PZ-polynomials are a set of complex polynomials described as

$$z_n^m(r, \theta) = \rho_n^m(r) \exp(j m \theta) \tag{2}$$

where $r \triangleq \sqrt{x^2 + y^2}$ and $\theta \triangleq \tan^{-1}(y/x)$ are the length and angle of the position vector of a point $(x, y)$ w.r.t. the centre of the image, respectively, $n \in \mathbb{Z}_+$ is the degree of the polynomial with frequency $m$, i.e., $m \in [-n, +n]$, and

$$\rho_n^m(r) \triangleq \sum_{\kappa=0}^{n-|m|} \frac{(-1)^\kappa \, (2n + 1 - \kappa)! \; r^{n-\kappa}}{\kappa! \, (n + |m| + 1 - \kappa)! \, (n - |m| - \kappa)!} \tag{3}$$

is the radial polynomial. When defined over a unit circle, i.e., $r \le 1$, the PZ-polynomials exhibit orthogonality, i.e.,

$$\int_0^{2\pi} \int_0^1 [z_n^m(r, \theta)]^* \, z_{n'}^{m'}(r, \theta) \, r \, dr \, d\theta = \frac{\pi}{n + 1} \, \delta_{nn'} \delta_{mm'} \tag{4}$$

where $\delta_{ii'}$ is the Kronecker delta function. Note, it can be seen via simple enumeration that the cardinality of the set of PZ-polynomials with degree $\le n$ is $P = (n + 1)^2$. Now, the PZ-moments can be obtained by projecting the image onto the PZ-polynomials as$^1$

$$a_n^m = \frac{n + 1}{\pi} \int_0^{2\pi} \int_0^1 [z_n^m(r, \theta)]^* \, s(r, \theta) \, r \, dr \, d\theta \tag{5}$$

where $s(r, \theta) = s(x, y)|_{x = r\cos\theta,\, y = r\sin\theta}$. Due to (4), it can be shown that (5) generates a set of independent moments.

The invariance properties of the PZ-moments can be established via mathematical manipulations. For scale and translation invariance, one way is to use the regular moments of the image.
The transformed image can be written as

$$g(x, y) = s\left(\frac{x}{\upsilon} + m_x, \; \frac{y}{\upsilon} + m_y\right) \tag{6}$$

where $m_x \triangleq \mu_{1,0}/\mu_{0,0}$ and $m_y \triangleq \mu_{0,1}/\mu_{0,0}$ are the centroid adjustment parameters of the image $s(x, y)$, and $\upsilon \triangleq \sqrt{\xi/\mu_{0,0}}$ is the scale adjustment parameter of the image $s(x, y)$ with a predetermined value $\xi$. Now, the scale- and translation-invariant PZ-moments can be generated by replacing $s(r, \theta)$ with $g(r, \theta)$ in (5), where $g(r, \theta) = g(x, y)|_{x = r\cos\theta,\, y = r\sin\theta}$. Since PZ-polynomials are a set of complex polynomials, the PZ-moments generated via (5) are also complex. The rotation invariance of the PZ-moments refers to the magnitude part only, i.e., $|a_n^m|$, and not the phase.

III. SPARSE REPRESENTATION BASED CLASSIFIER

Let a generic $\sqrt{N} \times \sqrt{N}$ image with intensity function $g(x, y)$ (or $g(r, \theta)$) be represented as an $N \times 1$ vector $\mathbf{g}$ via a lexicographic ordering (column or row ordered). Let $\mathbf{g}_j^k$ be the $j$th image measurement of the $k$th object class, for $j = 1, \cdots, J_k$ and $k = 1, \cdots, K$. Now, given a set of training image measurements $\{\mathbf{g}_j^k\}$, with $\|\mathbf{g}_j^k\|_2^2 = 1$, the SRC method defines the dictionary as

$$\mathbf{G} \triangleq [\mathbf{G}_1, \mathbf{G}_2, \cdots, \mathbf{G}_K] \tag{7}$$

where $\mathbf{G}$ is an $N \times J$ matrix with $J = \sum_{k=1}^{K} J_k$ and $\mathbf{G}_k \triangleq [\mathbf{g}_1^k, \mathbf{g}_2^k, \cdots, \mathbf{g}_{J_k}^k]$ is an $N \times J_k$ matrix acting as a sub-dictionary for class $k$, for $k = 1, \cdots, K$.

Any test image measurement, represented as an $N \times 1$ vector $\tilde{\mathbf{y}}$, can then be decomposed or encoded according to the linear model

$$\tilde{\mathbf{y}} = \mathbf{G}\tilde{\mathbf{x}} + \tilde{\mathbf{n}} \tag{8}$$

where $\tilde{\mathbf{x}}$ is a $J \times 1$ vector of coefficients defined as $\tilde{\mathbf{x}} \triangleq [\tilde{\mathbf{x}}_1^T, \tilde{\mathbf{x}}_2^T, \cdots, \tilde{\mathbf{x}}_K^T]^T$, where $\tilde{\mathbf{x}}_k$ are the coefficients w.r.t. the sub-matrix $\mathbf{G}_k$, and the $N \times 1$ vector $\tilde{\mathbf{n}}$ accounts for model errors with a bounded energy, i.e., $\|\tilde{\mathbf{n}}\|_2 < \tilde{\epsilon}$. It is clear from (8) that, given $\tilde{\mathbf{y}}$ belongs to the $k$th class, $\tilde{\mathbf{x}}$ would be a sparse vector.
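
The dictionary in (7) is simply a column-wise stack of unit-norm, vectorised training images, together with a record of which class each column belongs to. The following is a minimal sketch of that construction, not the authors' code; the function name `build_dictionary` and the list-of-arrays input layout are assumptions made for illustration.

```python
import numpy as np

def build_dictionary(class_images):
    """Stack unit-norm vectorised images into G = [G_1, ..., G_K], as in (7).

    class_images: a sequence of K array-likes (hypothetical layout), where
    entry k holds the J_k training images of class k.
    Returns the N x J dictionary and a length-J vector of class labels.
    """
    atoms, labels = [], []
    for k, images in enumerate(class_images):
        for img in images:
            g = np.asarray(img, dtype=float).ravel()  # lexicographic ordering
            atoms.append(g / np.linalg.norm(g))       # enforce ||g||_2 = 1
            labels.append(k)
    return np.column_stack(atoms), np.array(labels)
```

The label vector is what later allows the sub-dictionary $\mathbf{G}_k$ and the matching coefficients $\tilde{\mathbf{x}}_k$ to be selected with a boolean mask.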
Now, an estimate of $\tilde{\mathbf{x}}$ can be obtained by solving the following $\ell_1$-norm optimisation problem (OP):

$$\hat{\tilde{\mathbf{x}}} = \arg\min_{\tilde{\mathbf{x}}} \|\tilde{\mathbf{y}} - \mathbf{G}\tilde{\mathbf{x}}\|_2^2 + \lambda \|\tilde{\mathbf{x}}\|_1^1 \tag{9}$$

where $\lambda > 0$. The classification result is then obtained by finding the $k$ for which $\|\tilde{\mathbf{y}} - \mathbf{G}_k \hat{\tilde{\mathbf{x}}}_k\|_2^2$ is minimum, for $k = 1, \cdots, K$. In case of feature based representation, the SRC model takes the form $\mathbf{R}\tilde{\mathbf{y}} = \mathbf{R}\mathbf{G}\tilde{\mathbf{x}} + \mathbf{R}\tilde{\mathbf{n}}$, where $\mathbf{R}$ is an $R \times N$ linear transformation matrix. Generally, $\mathbf{R}$ is a random matrix with $R \ll N$.

$^1$ Note, in case of a digital image, the integrals in the projection operations are replaced by summations.

IV. PZ-MOMENTS BASED SPARSE REPRESENTATIONS

In this paper we consider feature based sparse representations. In our case, PZ-moments form the feature set of the radar image. Since PZ-moments are generated by projecting the image onto PZ-polynomials, we can easily generate the features by converting the PZ-polynomials into a basis matrix and then projecting the image vector onto this basis matrix. Let a $P \times 1$ vector $\mathbf{z}_i$ of PZ-polynomials, with degree $\le n$, w.r.t. image point $(r_i, \theta_i)$, where $(r_i, \theta_i)$ are the polar coordinates equivalent of the image point $(x_i, y_i)$ in Cartesian coordinates, for $i = 1, \cdots, N$, be defined as

$$\mathbf{z}_i \triangleq [\gamma_0 z_0^0(r_i, \theta_i), \; \gamma_1 z_1^{-1}(r_i, \theta_i), \; \gamma_1 z_1^{0}(r_i, \theta_i), \; \gamma_1 z_1^{+1}(r_i, \theta_i), \; \cdots, \; \gamma_n z_n^{-n}(r_i, \theta_i), \; \gamma_n z_n^{-n+1}(r_i, \theta_i), \; \cdots, \; \gamma_n z_n^{+n}(r_i, \theta_i)]^T \tag{10}$$

where $\gamma_n \triangleq (n + 1)/(\pi N)$ accounts for subsequent constants as well as integration to summation approximations in (5). Note, we assume that $r_i \le 1$, $\forall i \in [1, N]$, which ensures that all image points are within the unit circle. The PZ-polynomials based basis matrix can then be defined as a $P \times N$ matrix $\mathbf{Z}$, i.e.,

$$\mathbf{Z} \triangleq [\mathbf{z}_1, \mathbf{z}_2, \cdots, \mathbf{z}_N] \tag{11}$$

which is still a matrix of complex polynomials.
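
To make (3), (10) and (11) concrete, the sketch below builds the basis matrix $\mathbf{Z}$ for a square image and projects an image onto it. It is an illustration under stated assumptions rather than the authors' implementation: the function names are hypothetical, and the pixel grid is scaled by $1/\sqrt{2}$ (our choice) so that every pixel satisfies $r_i \le 1$. The rows follow (10) and use $z_n^m$ without the conjugate of (5); for a real-valued image this only swaps the moments of $+m$ and $-m$, whose magnitudes are equal.

```python
import numpy as np
from math import factorial

def radial_poly(n, m, r):
    """Pseudo-Zernike radial polynomial rho_n^m(r) of (3); r is an ndarray."""
    m = abs(m)
    out = np.zeros_like(r, dtype=float)
    for k in range(n - m + 1):
        coeff = ((-1) ** k * factorial(2 * n + 1 - k)
                 / (factorial(k) * factorial(n + m + 1 - k) * factorial(n - m - k)))
        out += coeff * r ** (n - k)
    return out

def pz_basis(side, n_max):
    """P x N basis matrix Z of (11) for a side x side image, P = (n_max + 1)^2."""
    # Assumption: scale the grid so the whole square fits inside the unit circle.
    c = np.linspace(-1.0, 1.0, side) / np.sqrt(2.0)
    x, y = np.meshgrid(c, c)
    r = np.hypot(x, y).ravel()
    theta = np.arctan2(y, x).ravel()
    n_pix = side * side
    rows = []
    for n in range(n_max + 1):
        gamma = (n + 1) / (np.pi * n_pix)      # gamma_n of (10)
        for m in range(-n, n + 1):             # ordering m = -n, ..., +n as in (10)
            rows.append(gamma * radial_poly(n, m, r) * np.exp(1j * m * theta))
    return np.vstack(rows)

def pz_features(Z, image):
    """Magnitudes of the PZ-moments of one image, i.e. abs(Z g)."""
    return np.abs(Z @ np.asarray(image, dtype=float).ravel())
```

On this symmetric grid a 90-degree rotation of the image only changes the phase of each moment, so `pz_features(Z, img)` and `pz_features(Z, np.rot90(img))` agree up to floating-point error, which is a quick sanity check of the rotational invariance of the magnitudes.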
Now, given a set of training image measurements $\{\mathbf{g}_j^k\}$, for $j = 1, \cdots, J_k$ and $k = 1, \cdots, K$, the dictionary based on PZ-moments features, with the property of rotational invariance, can be defined as a column normalised (i.e., normalised to unity) $P \times J$ matrix $\mathbf{A}$, i.e.,

$$\mathbf{A} \triangleq \mathrm{abs}(\mathbf{Z}\mathbf{G}) = [\mathbf{A}_1, \mathbf{A}_2, \cdots, \mathbf{A}_K] \tag{12}$$

where $\mathrm{abs}(\cdot)$ is a function which generates element-wise absolute values, and $\mathbf{A}_k \triangleq \mathrm{abs}(\mathbf{Z}\mathbf{G}_k)$ is a $P \times J_k$ matrix of PZ-moments w.r.t. $\mathbf{G}_k$, for $k = 1, \cdots, K$. In order to capitalise on the invariance structure provided by PZ-moments, we introduce auxiliary atoms in the dictionary (see Section IV-B for details). Thus, the dictionary can be defined as

$$\boldsymbol{\Phi} \triangleq \big[ [\mathbf{A}_1, f(\mathbf{A}_1)], \; [\mathbf{A}_2, f(\mathbf{A}_2)], \; \cdots, \; [\mathbf{A}_K, f(\mathbf{A}_K)] \big] = [\boldsymbol{\Phi}_1, \boldsymbol{\Phi}_2, \cdots, \boldsymbol{\Phi}_K] \tag{13}$$

where $f(\mathbf{A}_k)$ is a $P \times L_k$ auxiliary matrix (with columns normalised to unity) and it is a function of the columns of $\mathbf{A}_k$, $\boldsymbol{\Phi}_k \triangleq [\mathbf{A}_k, f(\mathbf{A}_k)]$ is a $P \times Q_k$ matrix with $Q_k = J_k + L_k$, for $k = 1, \cdots, K$, and the over-complete dictionary $\boldsymbol{\Phi}$ is a $P \times Q$ matrix with $Q = \sum_{k=1}^{K} Q_k$. Now, the test image $\tilde{\mathbf{y}}$ can be encoded according to the following linear model:

$$\mathbf{y} = \boldsymbol{\Phi}\mathbf{x} + \mathbf{n} \tag{14}$$

where $\mathbf{y} \triangleq \mathrm{abs}(\mathbf{Z}\tilde{\mathbf{y}})$ is the $P \times 1$ vector of PZ-moments of the test image, $\mathbf{x}$ is the $Q \times 1$ encoded vector defined as $\mathbf{x} \triangleq [\mathbf{x}_1^T, \mathbf{x}_2^T, \cdots, \mathbf{x}_K^T]^T$, where $\mathbf{x}_k$ is the $Q_k \times 1$ encoding vector w.r.t. $\boldsymbol{\Phi}_k$, for $k = 1, \cdots, K$, and $\mathbf{n}$ is the $P \times 1$ model error vector with bounded energy, i.e., $\|\mathbf{n}\|_2 < \epsilon$. It is clear from (14) that, given that the test image belongs to a particular class, $\mathbf{x}$ would be a sparse vector with nonzero elements ideally corresponding to the sub-dictionary of only a particular class.

A. Sparse Reconstruction and Classification

Since $P \ll Q$, (14) is an under-determined system of linear equations. In order to recover $\mathbf{x}$ in (14), we use IHT as the sparse recovery algorithm. An estimate of $\mathbf{x}$ can be obtained by processing the following iterations.
$$\hat{\mathbf{x}}^{[t+1]} = H_\Gamma\left( \hat{\mathbf{x}}^{[t]} + \boldsymbol{\Phi}^T \left( \mathbf{y} - \boldsymbol{\Phi}\hat{\mathbf{x}}^{[t]} \right) \right) \tag{15}$$

where $t$ is the iteration index (starting with $t = 0$) and $H_\Gamma$ is the hard thresholding operator defined as

$$H_\Gamma(\mathbf{q}) \triangleq \mathbf{q}_{\mathcal{I}\{\, i \,|\, [\mathbf{q}]_i \ge [\mathrm{Ascend}(\mathbf{q})]_\Gamma, \; \forall i \,\}} \tag{16}$$

where $\mathcal{I}\{\cdot\}$ is an indicator operator which discards those elements of vector $\mathbf{q}$ that are not in the indicator set (the set given in its subscript), and $\mathrm{Ascend}(\mathbf{q})$ is a sorting function which sorts the elements of $\mathbf{q}$ in an ascending order. Essentially, $H_\Gamma(\mathbf{q})$ preserves only the $\Gamma$ largest element magnitudes of $\mathbf{q}$ in each iteration $t$. Thus, (15) approximates the $\ell_0$-norm estimate of $\mathbf{x}$, i.e.,

$$\hat{\mathbf{x}} = \arg\min_{\mathbf{x}} \|\mathbf{y} - \boldsymbol{\Phi}\mathbf{x}\|_2^2 \quad \text{subject to} \quad \|\mathbf{x}\|_0^0 \le \Gamma \tag{17}$$

where $\Gamma$ is the order of sparsity. Note, the stopping criterion of iterations in (15) can either be the maximum number of allowable iterations or the minimum residual error, i.e., $\|\mathbf{y} - \boldsymbol{\Phi}\hat{\mathbf{x}}^{[t]}\|_2^2 / \|\mathbf{y}\|_2^2$. After sparse encoding of $\mathbf{y}$, the classification of the target image can be done by solving the following OP:

$$\hat{k} = \arg\min_{k} \|\mathbf{y} - \boldsymbol{\Phi}_k \hat{\mathbf{x}}_k\|_2^2, \quad \text{for } k = 1, \cdots, K \tag{18}$$

where $\hat{\mathbf{x}}_k$ is the estimate obtained in the PZ-SRC framework, when the stopping criterion for the iterations in (15) has been achieved.

B. Auxiliary Atoms

The auxiliary atoms can have a substantial impact on the performance of the classification. Ideally, variations in the image measurements w.r.t. different aspect angles should not produce any variations in their respective PZ-moments. However, radar reflectivities at different aspect angles might not be uniform. Therefore, the image at one aspect angle might be entirely different from the image obtained at another aspect angle. Also, noise in the form of clutter or other artefacts can play a disruptive role. Auxiliary atoms try to recover the information lost due to these irregularities. In this section, we present a number of techniques to generate the auxiliary atoms. Note, here our focus is primarily on rotational invariance of the moments.
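
Before the individual constructions are detailed, it may help to see how any such dictionary is consumed by the recovery and classification steps (15), (16) and (18) of Section IV-A. The sketch below is a minimal illustration, not the authors' code. The names `iht`, `hard_threshold`, `classify` and `atom_class` are hypothetical, and the step size `mu` is our assumption: (15) uses a unit step, whereas the sketch scales the gradient step by the inverse squared spectral norm of the dictionary, a common safeguard when that norm exceeds one.

```python
import numpy as np

def hard_threshold(q, gamma):
    """Keep the gamma largest-magnitude entries of q and zero the rest, cf. (16)."""
    out = np.zeros_like(q)
    keep = np.argpartition(np.abs(q), -gamma)[-gamma:]
    out[keep] = q[keep]
    return out

def iht(Phi, y, gamma, n_iter=100, tol=1e-6):
    """Iterative hard thresholding for (17), following the update in (15)."""
    mu = 1.0 / np.linalg.norm(Phi, 2) ** 2  # assumption: step size, not part of (15)
    x = np.zeros(Phi.shape[1])
    for _ in range(n_iter):
        x = hard_threshold(x + mu * Phi.T @ (y - Phi @ x), gamma)
        # Relative residual stopping rule, as described after (17).
        if np.sum((y - Phi @ x) ** 2) <= tol * np.sum(y ** 2):
            break
    return x

def classify(Phi, y, x_hat, atom_class):
    """Pick the class whose atoms give the smallest residual, as in (18)."""
    labels = np.unique(atom_class)
    residuals = [np.sum((y - Phi[:, atom_class == k] @ x_hat[atom_class == k]) ** 2)
                 for k in labels]
    return labels[int(np.argmin(residuals))]
```

Here `atom_class` is a length-$Q$ integer vector recording the class of each column of $\boldsymbol{\Phi}$, auxiliary atoms included, so that $\boldsymbol{\Phi}_k$ and $\hat{\mathbf{x}}_k$ can be selected with a mask.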
1) Fixed Auxiliary Atoms (AuxFix): In case the measurements are obtained at random aspect angles, we propose to constitute the auxiliary atoms as an overall average of the PZ-moments based measurements of each class, i.e.,

$$f(\mathbf{A}_k) = \sum_{j=1}^{J_k} \mathbf{a}_j^k \tag{19}$$

where $\mathbf{a}_j^k \triangleq \mathrm{abs}(\mathbf{Z}\mathbf{g}_j^k)$, for $k = 1, \cdots, K$. AuxFix causes the effect of irregular reflectivities to be averaged out. Here, $L_k = 1$, for $k = 1, \cdots, K$.

2) Moving Average Based Auxiliary Atoms (AuxMov): In case the measurements are arranged in the order of increasing aspect angles around the object, a moving average of atoms over each class can constitute the auxiliary atoms, i.e.,

$$f_j(\mathbf{A}_k) = \sum_{w=-W_k/2}^{+W_k/2} \mathbf{a}_{w+j}^k \tag{20}$$

where $W_k$ is the window size for the $k$th class, for $k = 1, \cdots, K$, and $j = 1, \cdots, J_k$. We can see that the window is centred over the $j$th column of $\mathbf{A}_k$. Note, in case $(w + j) < 1$ or $(w + j) > J_k$, $\mathbf{a}_{w+j}^k$ can be considered as zero vectors. Here, $L_k = J_k$. The auxiliary matrix can be formed as

$$f(\mathbf{A}_k) = [f_1(\mathbf{A}_k), f_2(\mathbf{A}_k), \cdots, f_{J_k}(\mathbf{A}_k)]. \tag{21}$$

3) Correlation Based Auxiliary Atoms (AuxCorr): An optimal method is to find correlated atoms w.r.t. every training measurement for each class, i.e., the columns of $\mathbf{A}_k$. The auxiliary atoms can then be generated based on a minimum correlation value, i.e.,

$$f_j(\mathbf{A}_k) = \sum_{l=1}^{J_k} \left[ \mathbf{a}_j^{kT} \mathbf{a}_l^k > \Upsilon \right] \mathbf{a}_l^k \tag{22}$$

where $\mathbf{a}_j^{kT} \mathbf{a}_l^k$ performs the inner product, $\Upsilon$ is the correlation threshold, the bracketed comparison retains only those atoms whose correlation with $\mathbf{a}_j^k$ exceeds $\Upsilon$, and $j = 1, \cdots, J_k$. Here, $L_k = J_k$. The auxiliary matrix can be formed according to (21). This procedure ensures that all informative measurements, i.e., measurements with high mutual correlation, are accounted for.

C. Complex Signatures

We can see from the previous sections that most of the classification strategies use only the intensities or magnitudes of the images.
However, a radar signature contains information both in the magnitude as well as in the phase. To this end, we combine the magnitude and the phase of the radar signatures via an averaging fusion metric, and use the fused image to create the PZ-moments. Thus, the fused image has the form $0.5[\mathrm{abs}(\{\mathbf{g}_j^k\}) + \mathrm{phase}(\{\mathbf{g}_j^k\})]$, where $\mathrm{phase}(\cdot)$ is an element-wise phase generating function.

V. SIMULATIONS

In this section we present simulation results of our proposed methods. We use the publicly available MSTAR dataset. We consider three targets, i.e., 2S1 tank, D-7 land clearing vehicle and T62 tank (so $K = 3$). Figure 1 shows the optical and SAR (magnitude only) images, for one aspect angle of these targets.

Fig. 1: MSTAR Targets. (a) 2S1 (b) D-7 (c) T62

For the purpose of training, a total of $J_k = 299$ measurements are considered, for each target, at a radar elevation angle of $17^\circ$. The measurements have been taken at sequentially increasing aspect angles of approximately $1.2^\circ$, i.e., covering the complete angular range of $360^\circ$. Note, the measurements are in the form of $96 \times 96$ SAR images. These images are vectorised for the sake of processing. Thus, $N = 9216$. For the purpose of testing, a total of 273 image measurements (for each class) are considered, which have been taken at different aspect angles over the complete angular range of $360^\circ$, with a radar elevation angle of $15^\circ$. Aspect angles of the testing measurements are different from the training measurements. Thus, pose angle estimation is a valid issue. We define the classification/recognition accuracy/performance for the $k$th class as

$$\Omega_k \triangleq 100 \, \frac{\mathrm{TP}_k}{273} \tag{23}$$

where $\mathrm{TP}_k$ are the true positives of the target class $k$, for $k = 1, \cdots, K$, and the overall performance is defined as

$$\Omega \triangleq \frac{1}{K} \sum_{k=1}^{K} \Omega_k. \tag{24}$$

Note, both $\Omega$ and $\Omega_k$ quantify performance in percentages.
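
As a worked instance of (23) and (24), the sketch below evaluates both metrics on the magnitude-only PZ-SRC confusion matrix reported in Table II. The function name is hypothetical, and dividing by the row sum rather than the constant 273 is our generalisation; the two coincide here because every class has 273 test images.

```python
import numpy as np

def class_accuracies(confusion):
    """Per-class accuracy (23) and overall accuracy (24), both in percent.

    confusion[i][j] counts test images of true class i assigned to class j.
    """
    confusion = np.asarray(confusion, dtype=float)
    true_positives = np.diag(confusion)
    per_class = 100.0 * true_positives / confusion.sum(axis=1)  # rows sum to 273 here
    return per_class, per_class.mean()

# Confusion matrix of Table II (rows and columns ordered 2S1, D-7, T62).
table_ii = [[264, 0, 9], [0, 271, 2], [2, 8, 263]]
omega_k, omega = class_accuracies(table_ii)
# omega_k is about (96.703, 99.267, 96.337) and omega is about 97.436; the
# tabulated 96.70, 99.26, 96.33 and 97.43 are consistent with truncation to
# two decimals rather than rounding.
```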
For PZ-moments, we consider $n = 10$ as the degree of the polynomials, which generates $P = 121$ PZ-moments. In comparison to $N$, this is a dimensionality reduction of over 98%. Note, the value of $n$ can impact the performance of classification. Generally, higher values of $n$ can represent an image better. However, very large values can cause numerical instabilities. Therefore, we select a moderate value of $n$. A few tests on the training data can also give a good idea of the choice of $n$. For sparse reconstruction, we use IHT for PZ-SRC (as well as for SRC, for a fair comparison) and consider the order of sparsity $\Gamma = 5$. Note, the parameter $\Gamma$ is a tuning parameter and can be selected based on different cross validation approaches.

In terms of simulation results, we first consider the magnitude only radar signatures. Table I shows the classification performance results of different classifiers. Note, we also consider PZ-moments based LSVC (PZ-LSVC) for the sake of comparison. We can see that SRC outperforms LSVC for all target classes. PZ-LSVC is slightly better than SRC in the overall performance. However, PZ-SRC matches or exceeds the classification performance of all the other classifiers in every category, and gives the best overall performance. In general, PZ-moments based methods have an edge over other methods, despite the dimensionality reduction.

TABLE I: Performance Comparison of Different Classifiers

| Classifier | $\Omega_1$ (%) | $\Omega_2$ (%) | $\Omega_3$ (%) | $\Omega$ (%) |
|---|---|---|---|---|
| LSVC | 91.94 | 98.90 | 92.67 | 94.50 |
| SRC | 95.97 | 99.26 | 93.04 | 96.09 |
| PZ-LSVC | 93.40 | 99.26 | 96.33 | 96.33 |
| PZ-SRC | 96.70 | 99.26 | 96.33 | 97.43 |

TABLE II: Confusion Matrix for PZ-SRC (Magnitude Only)

| | 2S1 | D-7 | T62 | $\Omega_k$ (%) | $\Omega$ (%) |
|---|---|---|---|---|---|
| 2S1 | 264 | 0 | 9 | 96.70 | - |
| D-7 | 0 | 271 | 2 | 99.26 | - |
| T62 | 2 | 8 | 263 | 96.33 | - |
| | - | - | - | - | 97.43 |

Table II provides the confusion matrix for PZ-SRC (magnitude only). Performance of each target is given in their respective rows. Each column shows the number of test images classified as the title target.
Last two columns show the classification performance of individual targets and the overall, respectively. Table III provides the confusion matrix for complex radar signatures, where we use the fusion technique of Section IV-C. We see an improved overall performance of 98.41% in comparison to 97.43% of the magnitude only in Table II.

TABLE III: Confusion Matrix for PZ-SRC (Complex)

| | 2S1 | D-7 | T62 | $\Omega_k$ (%) | $\Omega$ (%) |
|---|---|---|---|---|---|
| 2S1 | 263 | 0 | 10 | 96.33 | - |
| D-7 | 0 | 271 | 2 | 99.26 | - |
| T62 | 0 | 1 | 272 | 99.63 | - |
| | - | - | - | - | 98.41 |

For the rest of the simulations, we use fused complex signatures. We first obtain classification results by considering AuxFix of Section IV-B1 as auxiliary atoms. Table IV shows the confusion matrix in this regard. The performance improvement has been encouraging, with $\Omega = 98.53\%$.

TABLE IV: Confusion Matrix for PZ-SRC (AuxFix)

| | 2S1 | D-7 | T62 | $\Omega_k$ (%) | $\Omega$ (%) |
|---|---|---|---|---|---|
| 2S1 | 264 | 0 | 9 | 96.70 | - |
| D-7 | 0 | 271 | 2 | 99.26 | - |
| T62 | 0 | 1 | 272 | 99.63 | - |
| | - | - | - | - | 98.53 |

Next, we simulate the classification problem by considering AuxMov of Section IV-B2 as auxiliary atoms. Table V shows the performance of PZ-SRC for varying sizes of $W_k$ (same $\forall k$). We can see that the classification performance is affected by changing size of $W_k$. The best performance is achieved when $W_k/J_k$ is a multiple of 0.5. If all the test measurements are divided into four quadrants, with each quadrant corresponding to a range of aspect angles of approximately $90^\circ$, then $W_k/J_k = 0.5$ essentially corresponds to the numerical size of one quadrant, when the best performance is achieved. This can be explained as follows. Due to the rotational invariance properties of the PZ-moments, measurements at consecutive aspect angles are correlated to each other, in general, with some variations mostly because of radar reflectivity irregularities. However, measurements at the boundary of two quadrants correspond to the fine corners of the considered rectangular-shaped targets, and these measurements are highly uncorrelated with all the measurements in the preceding or the succeeding quadrant. This phenomenon can be seen in Figure 2, which shows the correlations of a few training measurements (PZ-moments) with the rest of the measurements in a $k$th target class. We can see that mutual correlations are minimum at quadrants, i.e., when $W_k/2 = 75, 150, 225$. Thus, it is better to exploit only the correlated measurements for generating auxiliary atoms and that happens when the size of $W_k$ is such that it contains most of the correlated measurements of a quadrant or its multiple.

TABLE V: Performance with Varying $W_k$ in (20)

| $W_k$ | $W_k/J_k$ | $\Omega$ (%) |
|---|---|---|
| 29 | 0.1 | 98.16 |
| 59 | 0.2 | 98.41 |
| 89 | 0.3 | 98.65 |
| 119 | 0.4 | 98.77 |
| 149 | 0.5 | 98.90 |
| 179 | 0.6 | 98.65 |
| 209 | 0.7 | 98.65 |
| 239 | 0.8 | 98.77 |
| 269 | 0.9 | 98.90 |
| 299 | 1.0 | 98.90 |
| 328 | 1.1 | 98.90 |
| 358 | 1.2 | 98.77 |
| 388 | 1.3 | 98.65 |
| 418 | 1.4 | 98.77 |
| 448 | 1.5 | 98.90 |

Fig. 2: Correlations among PZ-moments based Measurements. (Horizontal axis: Training Measurements, 0 to 300; vertical axis: $\Upsilon$, 0.84 to 1; curves for $j = 10, 20, 30, 40, 50$.)

Fig. 3: Correlations among Test Measurements. (Horizontal axis: Training Measurements, 0 to 300; vertical axis: $\Upsilon$, 0.84 to 1; curves for $j = 10, 20, 30, 40, 50$.)

TABLE VI: Performance with Varying $\Upsilon$ in (22)

| $\Upsilon$ | 0.98 | 0.97 | 0.96 | 0.95 | 0.94 | 0.93 | 0.92 |
|---|---|---|---|---|---|---|---|
| $\Omega$ (%) | 98.29 | 98.53 | 98.65 | 98.77 | 98.90 | 98.53 | 98.53 |

TABLE VII: Confusion Matrix for PZ-SRC (AuxMov)

| | 2S1 | D-7 | T62 | $\Omega_k$ (%) | $\Omega$ (%) |
|---|---|---|---|---|---|
| 2S1 | 266 | 0 | 7 | 97.43 | - |
| D-7 | 0 | 271 | 2 | 99.26 | - |
| T62 | 0 | 0 | 273 | 100 | - |
| | - | - | - | - | 98.90 |

Since correlation is an important parameter for generating auxiliary atoms, we next assess the classification performance by considering AuxCorr of Section IV-B3. Table VI shows the classification performance with varying $\Upsilon$. We can see that best performance is achieved for $\Upsilon = 0.94$. This is quite understandable.
A higher value of $\Upsilon$ does not collect enough informative measurements and a lower value of $\Upsilon$ involves noisy or non-informative measurements. This can be seen from Figure 2 as well. We also provide a confusion matrix regarding the performance of PZ-SRC with fused complex signatures and using $W_k/J_k = 0.5$, in Table VII. An overall performance of 98.90% is achieved. Note, in order to better appreciate the invariance properties of the PZ-moments, we also plot the correlations among original test measurements, i.e., without PZ-moments, in Figure 3. We can see that the correlation structure is quite inconsistent in comparison to the PZ-moments as in Figure 2.

VI. CONCLUSIONS

In this paper, we presented sparse representations for radar image classification by using pseudo-Zernike moments. We obtained a reduction in the dimensionality of the problem without compromising the performance. We exploited the invariance properties of the pseudo-Zernike moments to generate auxiliary atoms to complement the dictionary, which resulted in an enhanced classification performance. We used a fusion strategy to gain both from the magnitude as well as the phase of the radar signatures. We proved the validity of our proposed methods via simulations on the MSTAR dataset.

ACKNOWLEDGEMENTS

This work has been approved for submission by TASSC-PATHCAD Sponsor, Chris Holmes, Senior Manager Research, Research Department, Jaguar Land Rover, Coventry, UK.

REFERENCES

[1] W. Carrara, R. Goodman, and R. Majewski, Spotlight Synthetic Aperture Radar. Boston: Artech House, 1995.
[2] S. Palm, R. Sommer, M. Caris, N. Pohl, A. Tessmann, and U. Stilla, "Ultra-high resolution SAR in lower terahertz domain for applications in mobile mapping," in 2016 GeMiC, March 2016, pp. 205–208.
[3] G. J. Owirka, S. M. Verbout, and L. M. Novak, "Template-based SAR ATR performance using different image enhancement techniques," in Proc. SPIE, 1999, pp. 302–319.
[4] Q. Zhao and J. C. Principe, "Support vector machines for SAR automatic target recognition," IEEE Transactions on Aerospace and Electronic Systems, vol. 37, no. 2, pp. 643–654, Apr 2001.
[5] B. Olshausen and D. Field, "Emergence of simple-cell receptive field properties by learning a sparse code for natural images," Nature, vol. 381, pp. 607–609, 1996.
[6] S. S. Chen, D. L. Donoho, and M. A. Saunders, "Atomic decomposition by basis pursuit," SIAM Journal on Scientific Computing, vol. 20, pp. 33–61, 1998.
[7] M. Aharon, M. Elad, and A. Bruckstein, "K-SVD: An algorithm for designing overcomplete dictionaries for sparse representation," IEEE Trans. on Signal Processing, vol. 54, no. 11, pp. 4311–4322, Nov 2006.
[8] K. Huang and S. Aviyente, "Sparse representation for signal classification," in Proceedings of the 19th International Conference on NIPS. Cambridge, MA, USA: MIT Press, 2006, pp. 609–616.
[9] J. Wright, A. Y. Yang, A. Ganesh, S. S. Sastry, and Y. Ma, "Robust face recognition via sparse representation," IEEE Trans. on Pattern Analysis and Machine Intelligence, vol. 31, no. 2, pp. 210–227, Feb 2009.
[10] J. J. Thiagarajan, K. N. Ramamurthy, P. Knee, A. Spanias, and V. Berisha, "Sparse representations for automatic target classification in SAR images," in 2010 4th ISCCSP, March 2010, pp. 1–4.
[11] M. B. Wakin, D. L. Donoho, H. Choi, and R. G. Baraniuk, "The multiscale structure of non-differentiable image manifolds," in SPIE Conference Series, 2005, pp. 413–429.
[12] S. T. Roweis and L. K. Saul, "Nonlinear dimensionality reduction by locally linear embedding," Science, vol. 290, pp. 2323–2326, 2000.
[13] M. Yang, L. Zhang, J. Yang, and D. Zhang, "Metaface learning for sparse representation based face recognition," in 2010 IEEE International Conference on Image Processing, Sept 2010, pp. 1601–1604.
[14] I. Ramirez, P. Sprechmann, and G. Sapiro, "Classification and clustering via dictionary learning with structured incoherence and shared features," in 2010 IEEE Computer Society Conference on Computer Vision and Pattern Recognition, June 2010, pp. 3501–3508.
[15] M. Yang, L. Zhang, X. Feng, and D. Zhang, "Fisher discrimination dictionary learning for sparse representation," in 2011 International Conference on Computer Vision, Nov 2011, pp. 543–550.
[16] M.-K. Hu, "Visual pattern recognition by moment invariants," IRE Trans. on Info. Theory, vol. 8, no. 2, pp. 179–187, February 1962.
[17] F. A. Sadjadi and E. L. Hall, "Three-dimensional moment invariants," IEEE Trans. Pattern Anal. Mach. Intell., vol. 2, no. 2, pp. 127–136, Feb. 1980.
[18] C.-H. Teh and R. T. Chin, "On image analysis by the methods of moments," IEEE Trans. Pattern Anal. Mach. Intell., vol. 10, no. 4, pp. 496–513, Jul. 1988.
[19] A. Khotanzad and Y. H. Hong, "Invariant image recognition by Zernike moments," IEEE Trans. Pattern Anal. Mach. Intell., vol. 12, no. 5, pp. 489–497, May 1990.
[20] C. Clemente, L. Pallotta, I. Proudler, A. D. Maio, J. J. Soraghan, and A. Farina, "Pseudo-Zernike-based multi-pass automatic target recognition from multi-channel synthetic aperture radar," IET Radar, Sonar & Navigation, vol. 9, no. 4, pp. 457–466, 2015.
[21] C. Clemente, L. Pallotta, A. D. Maio, J. J. Soraghan, and A. Farina, "A novel algorithm for radar classification based on Doppler characteristics exploiting orthogonal pseudo-Zernike polynomials," IEEE Trans. Aerosp. Electron. Syst., vol. 51, no. 1, pp. 417–430, Jan. 2015.
[22] F. Sadjadi, "Comparative image fusion analysis," in 2005 IEEE Computer Society Conference on Computer Vision and Pattern Recognition (CVPR'05) - Workshops, June 2005, pp. 8–8.
[23] F. Sadjadi, "Adaptive object classification using complex SAR signatures," in 2016 IEEE Conference on Computer Vision and Pattern Recognition Workshops (CVPRW), June 2016, pp. 299–303.
[24] T. Blumensath and M. E. Davies, "Iterative hard thresholding for compressed sensing," Applied and Computational Harmonic Analysis, vol. 27, no. 3, pp. 265–274, 2009.
