Incremental Semiparametric Inverse Dynamics Learning

This paper presents a novel approach for incremental semiparametric inverse dynamics learning. In particular, we consider the mixture of two approaches: Parametric modeling based on rigid body dynamics equations and nonparametric modeling based on in…

Authors: Raffaello Camoriano, Silvio Traversaro, Lorenzo Rosasco, Giorgio Metta, Francesco Nori

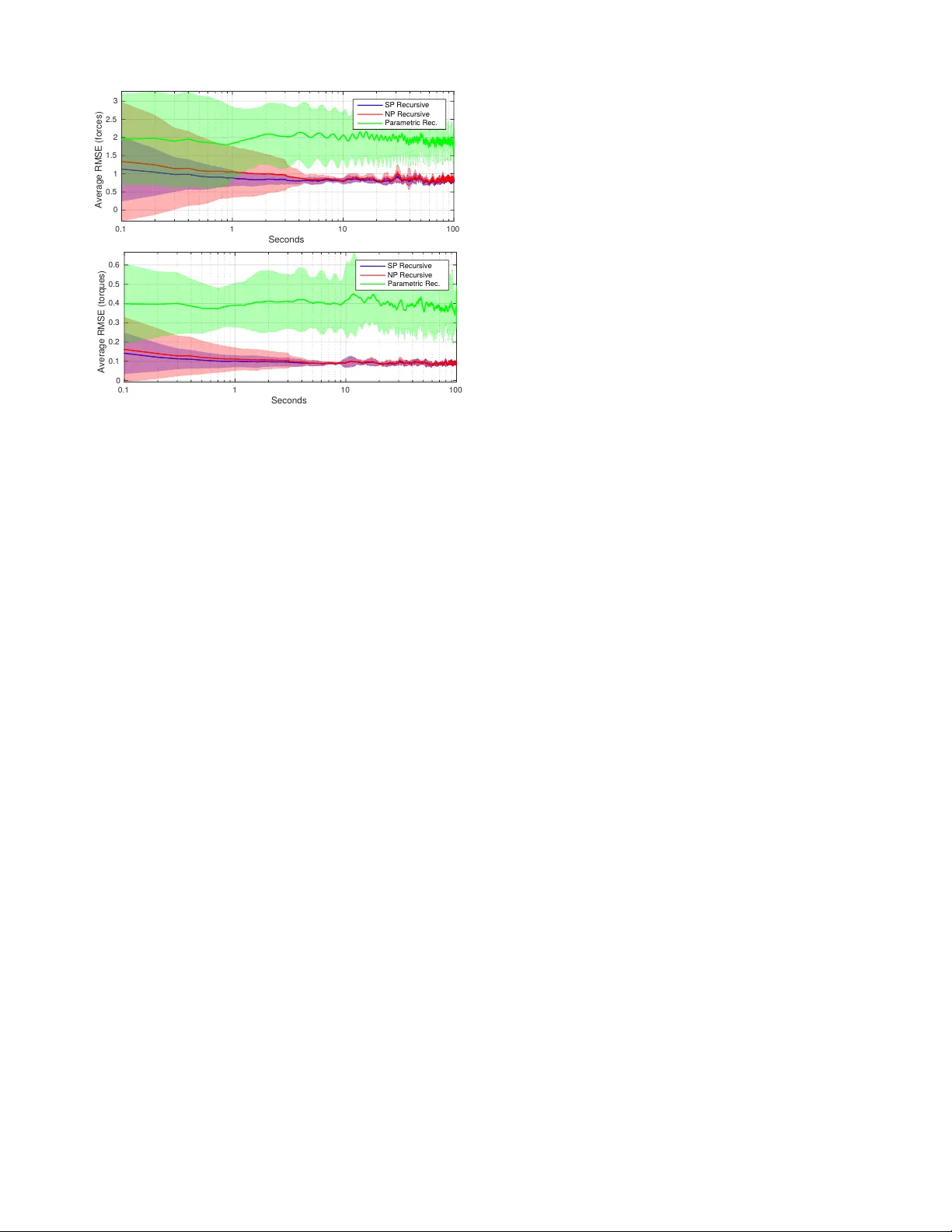

Raffaello Camoriano∗†, Silvio Traversaro‡, Lorenzo Rosasco, Giorgio Metta, and Francesco Nori‡

Abstract: This paper presents a novel approach for incremental semiparametric inverse dynamics learning. In particular, we consider the mixture of two approaches: parametric modeling based on rigid body dynamics equations and nonparametric modeling based on incremental kernel methods, with no prior information on the mechanical properties of the system. This yields an incremental semiparametric approach, leveraging the advantages of both the parametric and nonparametric models. We validate the proposed technique by learning the dynamics of one arm of the iCub humanoid robot.

I. INTRODUCTION

In order to control a robot, a model describing the relation between the actuator inputs, the interactions with the world and the accelerations of the bodies is required. This model is called the dynamics model of the robot. A dynamics model can be obtained from first principles in mechanics, using the techniques of rigid body dynamics (RBD) [1], resulting in a parametric model in which the values of physically meaningful parameters must be provided to complete the fixed structure of the model. Alternatively, the dynamical model can be obtained from experimental data using Machine Learning techniques, resulting in a nonparametric model.

Traditional parametric dynamics methods are based on several assumptions, such as rigidity of links or that friction has a simple analytical form, which may not be accurate in real systems. On the other hand, nonparametric methods based on algorithms such as Kernel Ridge Regression (KRR) [2], [3], [4], Kernel Regularized Least Squares (KRLS) [5] or Gaussian Processes [6] can model dynamics by extrapolating the input-output relationship directly from the available data.¹
If a suitable kernel function is chosen, then the nonparametric model is a universal approximator which can account for the dynamics effects which are not considered by the parametric model. Still, nonparametric models have no prior knowledge about the target function to be approximated. Therefore, they need a sufficient amount of training examples in order to produce accurate predictions on the entire input space.

TABLE I: Summary of related works on semiparametric or incremental robot dynamics learning.

| Author, Year | Parametric | Nonparametric |
| --- | --- | --- |
| Nguyen-Tuong, 2010 [7] | Batch | Batch |
| Gijsberts, 2011 [8] | - | Incremental |
| Tingfan Wu, 2012 [9] | Batch | Batch |
| De La Cruz, 2012 [10] | CAD∗ | Incremental |
| Camoriano, 2015 | Incremental | Incremental |

∗ In [10] the parametric part is used only for initializing the nonparametric model.

∗ Corresponding author.

† Raffaello Camoriano is with iCub Facility, Istituto Italiano di Tecnologia, Via Morego 30, Genoa 16163, Italy, and DIBRIS, Università degli Studi di Genova, Via All'Opera Pia, 13, Genoa 16145, Italy. Email: raffaello.camoriano@iit.it

‡ Silvio Traversaro and Francesco Nori are with RBCS Department, Istituto Italiano di Tecnologia, Via Morego 30, Genoa 16163, Italy. Email: name.surname@iit.it

Lorenzo Rosasco is with LCSL, Istituto Italiano di Tecnologia and Massachusetts Institute of Technology, Cambridge, MA 02139, USA, and DIBRIS, Università degli Studi di Genova, Via All'Opera Pia, 13, Genoa 16145, Italy. Email: lrosasco@mit.edu

Giorgio Metta is with iCub Facility, Istituto Italiano di Tecnologia, Via Morego 30, Genoa 16163, Italy. Email: giorgio.metta@iit.it

¹ Note that KRR and KRLS have a very similar formulation, and that these are also equivalent to the techniques derived from Gaussian Processes, as explained for instance in Chapter 6 of [4].
If the learning phase has been performed offline, both approaches are sensitive to the variation of the mechanical properties over long time spans, which is mainly caused by temperature shifts and wear. Even the inertial parameters can change over time. For example, if the robot grasps a heavy object, the resulting change in dynamics can be described by a change of the inertial parameters of the hand. A solution to this problem is to address the variations of the identified system properties by learning incrementally, continuously updating the model as new data become available. In this paper we propose a novel technique that joins parametric and nonparametric model learning in an incremental fashion.

Classical methods for physics-based dynamics modeling can be found in [1]. These methods require identifying the mechanical parameters of the rigid bodies composing the robot [11], [12], [13], [14], which can then be employed in model-based control and state estimation schemes.

In [7] the authors present a learning technique which combines prior knowledge about the physical structure of the mechanical system and learning from available data with Gaussian Process Regression (GPR) [6]. A similar approach is presented in [9]. Both techniques require an offline training phase and are not incremental, limiting them to scenarios in which the properties of the system do not change significantly over time.

In [10] an incremental semiparametric robot dynamics learning scheme based on Locally Weighted Projection Regression (LWPR) [15] is presented, which is initialized using a linearized parametric model. However, this approach uses a fixed parametric model that is not updated as new data become available. Moreover, LWPR has been shown to underperform with respect to other methods (e.g. [8]).
In [8], a fully nonparametric incremental approach for inverse dynamics learning with constant update complexity is presented, based on kernel methods [16] (in particular KRR) and random features [17]. The incremental nature of this approach allows for adaptation to changing conditions in time. The authors also show that the proposed algorithm outperforms other methods such as LWPR, GPR and Local Gaussian Processes (LGP) [18], both in terms of accuracy and prediction time. Nevertheless, the fully nonparametric nature of this approach undermines the interpretability of the inverse dynamics model.

In this work we propose a method that is incremental with fixed update complexity (as [8]) and semiparametric (as [7] and [9]). The fixed update complexity and prediction time are key properties of our method, enabling real-time performance. Both the parametric and nonparametric parts can be updated, as opposed to [10], in which only the nonparametric part is. A comparison between the existing literature and our incremental method is reported in Table I. We validate the proposed method with experiments performed on an arm of the iCub humanoid robot [19].

Fig. 1: iCub learning its right arm dynamics.

The article is organized as follows. Section II introduces the existing techniques for parametric and nonparametric robot dynamics learning. In Section III, a complete description of the proposed semiparametric incremental learning technique is given. Section IV presents the validation of our approach on the iCub humanoid robotic platform. Finally, Section V summarizes the content of our work.

II. BACKGROUND

A. Notation

The following notation is used throughout the paper.

• The set of real numbers is denoted by $\mathbb{R}$. Let $u$ and $v$ be two $n$-dimensional column vectors of real numbers (unless specified otherwise), i.e. $u, v \in \mathbb{R}^n$; their inner product is denoted as $u^\top v$, with "$\top$" the transpose operator.
• The Frobenius norm of either a vector or a matrix of real numbers is denoted by $\|\cdot\|$.

• $I_n \in \mathbb{R}^{n \times n}$ denotes the identity matrix of dimension $n$; $0_n \in \mathbb{R}^n$ denotes the zero column vector of dimension $n$; $0_{n \times m} \in \mathbb{R}^{n \times m}$ denotes the zero matrix of dimension $n \times m$.

B. Parametric Models of Robot Dynamics

Robot dynamics parametric models are used to represent the relation connecting the geometric and inertial parameters with some dynamic quantities that depend uniquely on the robot model. A typical example is obtained by writing the robot inverse dynamics equation in linear form with respect to the robot inertial parameters $\boldsymbol{\pi}$:

$$\tau = M(q)\ddot{q} + C(q, \dot{q})\dot{q} + g(q) = \Phi(x)\boldsymbol{\pi}, \tag{1}$$

where $q \in \mathbb{R}^{n_{dof}}$ is the vector of joint positions, $\tau \in \mathbb{R}^{n_{dof}}$ is the vector of joint torques, $\boldsymbol{\pi} \in \mathbb{R}^{n_p}$ is the vector of the identifiable (base) inertial parameters [1], and $\Phi(x) \in \mathbb{R}^{n_{dof} \times n_p}$ is the "regressor", i.e. a matrix that depends only on the robot kinematic parameters. In the rest of the paper we will indicate with $x$ the triple given by $(q, \dot{q}, \ddot{q})$. Other parametric models write different measurable quantities as a product of a regressor and a vector of parameters, for example the total energy of the robot [20], the instantaneous power provided to the robot [21], the sum of all external forces acting on the robot [22] or the center of pressure of the ground reaction forces [23]. Regardless of the choice of the measured variable $y$, the structure of the regressor is similar:

$$y = \Phi(q, \dot{q}, \ddot{q})\boldsymbol{\pi} = \Phi(x)\boldsymbol{\pi}, \tag{2}$$

where $y \in \mathbb{R}^t$ is the measured quantity.

The vector $\boldsymbol{\pi}$ is composed of certain linear combinations of the inertial parameters of the links, the base inertial parameters [24]. In particular, the inertial parameters of a single body are the mass $m$, the first moment of mass $mc \in \mathbb{R}^3$ expressed in a body-fixed frame, and the inertia matrix $I \in \mathbb{R}^{3 \times 3}$ expressed in the orientation of the body-fixed frame and with respect to its origin.
In parametric modeling of robot dynamics, the regressor structure depends on the kinematic parameters of the robot, which are obtained from CAD models of the robot through kinematic calibration techniques. Similarly, the inertial parameters $\boldsymbol{\pi}$ can also be obtained from CAD models of the robot; however, these models may be unavailable, for example because the manufacturer of the robot does not provide them. In this case the usual approach is to estimate $\boldsymbol{\pi}$ from experimental data [14]. To do that, given $n$ measurements $y_i$ of the measured quantity (with $i = 1 \dots n$), stacking (2) for the $n$ samples it is possible to write:

$$\begin{bmatrix} y_1 \\ y_2 \\ \vdots \\ y_n \end{bmatrix} = \begin{bmatrix} \Phi(x_1) \\ \Phi(x_2) \\ \vdots \\ \Phi(x_n) \end{bmatrix} \boldsymbol{\pi}. \tag{3}$$

This equation can then be solved in the least squares (LS) sense to find an estimate $\hat{\boldsymbol{\pi}}$ of the base inertial parameters. Depending on the training trajectories, it is possible that not all parameters in $\boldsymbol{\pi}$ can be estimated well, as the problem in (3) can be ill-posed; hence this equation is usually solved as a Regularized Least Squares (RLS) problem. Defining the stacked quantities

$$\mathbf{y}_n = \begin{bmatrix} y_1 \\ y_2 \\ \vdots \\ y_n \end{bmatrix}, \qquad \boldsymbol{\Phi}_n = \begin{bmatrix} \Phi(x_1) \\ \Phi(x_2) \\ \vdots \\ \Phi(x_n) \end{bmatrix},$$

the RLS problem that is solved for the parametric identification is:

$$\hat{\boldsymbol{\pi}} = \operatorname*{arg\,min}_{\boldsymbol{\pi} \in \mathbb{R}^{n_p}} \|\boldsymbol{\Phi}_n \boldsymbol{\pi} - \mathbf{y}_n\|^2 + \lambda \|\boldsymbol{\pi}\|^2, \quad \lambda > 0. \tag{4}$$

C. Nonparametric Modeling with Kernel Methods

Consider a probability distribution $\rho$ over the probability space $\mathcal{X} \times \mathcal{Y}$, where $\mathcal{X} \subseteq \mathbb{R}^d$ is the input space (the space of the $d$ measured attributes) and $\mathcal{Y} \subseteq \mathbb{R}^t$ is the output space (the space of the $t$ outputs to be predicted). In a nonparametric modeling setting, the goal is to find a function $f^* : \mathcal{X} \to \mathcal{Y}$ belonging to a set of measurable functions $\mathcal{H}$, called hypothesis space, such that

$$f^* = \operatorname*{arg\,min}_{f \in \mathcal{H}} \underbrace{\int_{\mathcal{X} \times \mathcal{Y}} \ell(f(x), y)\, d\rho(x, y)}_{\mathcal{E}(f)}, \tag{5}$$

where $x \in \mathcal{X}$ are row vectors, $y \in \mathcal{Y}$, $\mathcal{E}(f)$ is called expected risk and $\ell(f(x), y)$ is the loss function. In the rest of this work, we will consider the squared loss $\ell(f(x), y) = \|f(x) - y\|^2$.
Note that the distribution $\rho$ is unknown, and that we assume to have access to a discrete and finite set of measured data points $S = \{x_i, y_i\}_{i=1}^{n}$ of cardinality $n$, in which the points are independently and identically distributed (i.i.d.) according to $\rho$.

In the context of kernel methods [16], $\mathcal{H}$ is a reproducing kernel Hilbert space (RKHS). An RKHS is a Hilbert space of functions such that $\exists\, k : \mathcal{X} \times \mathcal{X} \to \mathbb{R}$ for which the following properties hold:

1) $\forall x \in \mathcal{X}$, $k_x(\cdot) = k(x, \cdot) \in \mathcal{H}$

2) $g(x) = \langle g, k_x \rangle_{\mathcal{H}}$ $\forall g \in \mathcal{H}, x \in \mathcal{X}$,

where $\langle \cdot, \cdot \rangle_{\mathcal{H}}$ indicates the inner product in $\mathcal{H}$. The function $k$ is a reproducing kernel, and it can be shown to be symmetric positive definite (SPD). We also define the kernel matrix $K \in \mathbb{R}^{n \times n}$ s.t. $K_{i,j} = k(x_i, x_j)$, which is symmetric and positive semidefinite (SPSD) $\forall x_i, x_j \in \mathcal{X}$, with $i, j \in \{1, \dots, n\}$, $n \in \mathbb{N}^+$.

The optimization problem outlined in (5) can be approached empirically by means of many different algorithms, among which one of the most widely used is Kernel Regularized Least Squares (KRLS) [3], [5]. In KRLS, a regularized solution $\hat{f}_\lambda : \mathcal{X} \to \mathcal{Y}$ is found by solving

$$\hat{f}_\lambda = \operatorname*{arg\,min}_{f \in \mathcal{H}} \left( \sum_{i=1}^{n} \|f(x_i) - y_i\|^2 + \lambda \|f\|_{\mathcal{H}}^2 \right), \quad \lambda > 0, \tag{6}$$

where $\lambda$ is called regularization parameter. The solution to (6) exists and is unique. Following the representer theorem [16], the solution can be conveniently expressed as

$$\hat{f}_\lambda(x) = \sum_{i=1}^{n} \alpha_i k(x_i, x) \tag{7}$$

with $\alpha = (K + \lambda I_n)^{-1} Y \in \mathbb{R}^{n \times t}$, $\alpha_i$ the $i$-th row of $\alpha$ and $Y = \left[ y_1^\top, \dots, y_n^\top \right]^\top$. It is therefore necessary to invert and store the kernel matrix $K \in \mathbb{R}^{n \times n}$, which implies $O(n^3)$ and $O(n^2)$ time and memory complexities, respectively. Such complexities render the above-mentioned KRLS approach prohibitive in settings where $n$ is large, including the one treated in this work. This limitation can be dealt with by resorting to approximated methods such as random features, which will now be described.
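Before moving on, the exact KRLS solution (6)-(7) can be made concrete with a minimal sketch. It assumes a scalar output ($t = 1$) and the Gaussian kernel; the function names (`gaussian_kernel`, `solve_spd`, `krls_fit`, `krls_predict`) are illustrative and not taken from any library used in the paper. The Cholesky-based solve is only one possible way of applying $(K + \lambda I_n)^{-1}$.

```python
import math

def gaussian_kernel(x, z, sigma):
    """Gaussian kernel exp(-||x - z||^2 / (2 sigma^2)) between two input vectors."""
    sq = sum((a - b) ** 2 for a, b in zip(x, z))
    return math.exp(-sq / (2.0 * sigma ** 2))

def solve_spd(A, b):
    """Solve A v = b for a symmetric positive definite A via Cholesky (A = L L^T)."""
    n = len(A)
    L = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = A[i][j] - sum(L[i][k] * L[j][k] for k in range(j))
            L[i][j] = math.sqrt(s) if i == j else s / L[j][j]
    w = [0.0] * n
    for i in range(n):  # forward substitution: L w = b
        w[i] = (b[i] - sum(L[i][k] * w[k] for k in range(i))) / L[i][i]
    v = [0.0] * n
    for i in reversed(range(n)):  # back substitution: L^T v = w
        v[i] = (w[i] - sum(L[k][i] * v[k] for k in range(i + 1, n))) / L[i][i]
    return v

def krls_fit(X, y, sigma, lam):
    """Coefficients alpha = (K + lam I)^{-1} y of (7); O(n^3) time, O(n^2) memory."""
    n = len(X)
    K = [[gaussian_kernel(X[i], X[j], sigma) for j in range(n)] for i in range(n)]
    for i in range(n):
        K[i][i] += lam
    return solve_spd(K, y)

def krls_predict(X, alpha, sigma, x):
    """Evaluate (7): sum_i alpha_i k(x_i, x)."""
    return sum(a * gaussian_kernel(xi, x, sigma) for a, xi in zip(alpha, X))
```

Note that both training and prediction need all $n$ training inputs, which is the scaling issue that motivates the random features approximation.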
1) Random Feature Maps for Kernel Approximation: The random features approach was first introduced in [17], and since then it has been widely applied in the field of large-scale Machine Learning. This approach leverages the fact that the kernel function can be expressed as

$$k(x, x') = \langle \phi(x), \phi(x') \rangle_{\mathcal{H}}, \tag{8}$$

where $x, x' \in \mathcal{X}$ are row vectors and $\phi : \mathbb{R}^d \to \mathbb{R}^p$ is a feature map associated with the kernel, which maps the input points from the input space $\mathcal{X}$ to a feature space of dimensionality $p \leq +\infty$, depending on the chosen kernel. When $p$ is very large, directly computing the inner product through the kernel function as in (8) enables the computation of the solution, as we have seen for KRLS. However, $K$ can become too cumbersome to invert and store as $n$ grows. A random feature map $\tilde{\phi} : \mathbb{R}^d \to \mathbb{R}^D$, typically with $D \ll p$, directly approximates the feature map $\phi$, so that

$$k(x, x') = \langle \phi(x), \phi(x') \rangle_{\mathcal{H}} \approx \tilde{\phi}(x) \tilde{\phi}(x')^\top. \tag{9}$$

$D$ can be chosen according to the desired approximation accuracy, as guaranteed by the convergence bounds reported in [17], [25]. In particular, we will use random Fourier features for approximating the Gaussian kernel

$$k(x, x') = e^{-\frac{\|x - x'\|^2}{2\sigma^2}}. \tag{10}$$

The approximated feature map in this case is

$$\tilde{\phi}(x) = \left[ e^{i x \omega_1}, \dots, e^{i x \omega_D} \right], \quad \text{where } \omega \sim p(\omega) = (2\pi)^{-\frac{D}{2}} e^{-\frac{\|\omega\|^2}{2\sigma^2}}, \tag{11}$$

with $\omega \in \mathbb{R}^d$ column vector. The fundamental theoretical result on which random Fourier features approximation relies is Bochner's Theorem [26]. The latter states that if $k(x, x')$ is a shift-invariant kernel on $\mathbb{R}^d$, then $k$ is positive definite if and only if its Fourier transform $p(\omega) \geq 0$. If this holds, by the definition of Fourier transform we can write

$$k(x, x') = k(x - x') = \int_{\mathbb{R}^d} p(\omega)\, e^{i (x - x') \omega}\, d\omega, \tag{12}$$

which can be approximated by performing an empirical average as follows:

$$k(x - x') = \mathbb{E}_{\omega \sim p}\left[ e^{i (x - x') \omega} \right] \approx \frac{1}{D} \sum_{k=1}^{D} e^{i (x - x') \omega_k} = \tilde{\phi}(x) \tilde{\phi}(x')^\top. \tag{13}$$

Therefore, it is possible to map the input data as $\tilde{x} = \tilde{\phi}(x) \in \mathbb{R}^D$, with $\tilde{x}$ row vector, to obtain a nonlinear and nonparametric model of the form

$$\tilde{f}(x) = \tilde{x} \tilde{W} \approx \hat{f}_\lambda(x) = \sum_{i=1}^{n} \alpha_i k(x_i, x) \tag{14}$$

approximating the exact kernelized solution $\hat{f}_\lambda(x)$, with $\tilde{W} \in \mathbb{R}^{D \times t}$. Note that the approximated model is nonlinear in the input space, but linear in the random features space. We can therefore introduce the regularized linear regression problem in the random features space as follows:

$$\tilde{W}_\lambda = \operatorname*{arg\,min}_{\tilde{W} \in \mathbb{R}^{D \times t}} \|\tilde{X} \tilde{W} - Y\|^2 + \lambda \|\tilde{W}\|^2, \quad \lambda > 0, \tag{15}$$

where $\tilde{X} \in \mathbb{R}^{n \times D}$ is the matrix of the training inputs where each row has been mapped by $\tilde{\phi}$. The main advantage of performing a random feature mapping is that it allows us to obtain a nonlinear model by applying linear regression methods. For instance, Regularized Least Squares (RLS) can compute the solution $\tilde{W}_\lambda$ of (15) with $O(nD^2)$ time and $O(D^2)$ memory complexities. Once $\tilde{W}_\lambda$ is known, the prediction $\hat{y} \in \mathbb{R}^{1 \times t}$ for a mapped sample $\tilde{x}$ can be computed as $\hat{y} = \tilde{x} \tilde{W}_\lambda$.

D. Regularized Least Squares

Let $Z \in \mathbb{R}^{a \times b}$ and $U \in \mathbb{R}^{a \times c}$ be two matrices of real numbers, with $a, b, c \in \mathbb{N}^+$. The Regularized Least Squares (RLS) algorithm computes a regularized solution $W_\lambda \in \mathbb{R}^{b \times c}$ of the potentially ill-posed problem $ZW = U$, enforcing its numerical stability. Considering the widely used Tikhonov regularization scheme, $W_\lambda \in \mathbb{R}^{b \times c}$ is the solution to the following problem:

$$W_\lambda = \operatorname*{arg\,min}_{W \in \mathbb{R}^{b \times c}} \underbrace{\|ZW - U\|^2 + \lambda \|W\|^2}_{J(W, \lambda)}, \quad \lambda > 0, \tag{16}$$

where $\lambda$ is the regularization parameter. By taking the gradient of $J(W, \lambda)$ with respect to $W$ and equating it to zero, the minimizing solution can be written as

$$W_\lambda = (Z^\top Z + \lambda I_b)^{-1} Z^\top U. \tag{17}$$

Both the parametric identification problem (4) and the nonparametric random features problem (15) are specific instances of the general problem (16).
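As an illustration of how the random features problem (15) reduces to the closed form (17), the sketch below maps inputs through a random Fourier feature map and solves the regularized normal equations. It rests on assumptions that are not stated in the text: it uses the common real-valued variant of the map, $\sqrt{2/D}\cos(x\omega_k + b_k)$ with $b_k$ uniform on $[0, 2\pi]$, instead of the complex exponentials in (11); it samples $\omega_k$ from a zero-mean Gaussian with standard deviation $1/\sigma$, which is the standard choice for the kernel (10); and it handles a single output column. All function names are hypothetical.

```python
import math
import random

def make_rff(d, D, sigma, rng):
    """Sample a real-valued random Fourier feature map for the Gaussian kernel.
    Assumption: omega ~ N(0, sigma^-2 I_d) and phases b ~ U[0, 2 pi]."""
    omegas = [[rng.gauss(0.0, 1.0 / sigma) for _ in range(d)] for _ in range(D)]
    phases = [rng.uniform(0.0, 2.0 * math.pi) for _ in range(D)]
    scale = math.sqrt(2.0 / D)

    def phi(x):
        return [scale * math.cos(sum(wj * xj for wj, xj in zip(w, x)) + b)
                for w, b in zip(omegas, phases)]
    return phi

def solve_spd(A, b):
    """Solve A v = b for symmetric positive definite A via Cholesky (A = L L^T)."""
    n = len(A)
    L = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = A[i][j] - sum(L[i][k] * L[j][k] for k in range(j))
            L[i][j] = math.sqrt(s) if i == j else s / L[j][j]
    w = [0.0] * n
    for i in range(n):  # forward substitution
        w[i] = (b[i] - sum(L[i][k] * w[k] for k in range(i))) / L[i][i]
    v = [0.0] * n
    for i in reversed(range(n)):  # back substitution
        v[i] = (w[i] - sum(L[k][i] * v[k] for k in range(i + 1, n))) / L[i][i]
    return v

def rls(Z, U, lam):
    """Closed form (17) for one output column: (Z^T Z + lam I) W = Z^T U."""
    b = len(Z[0])
    A = [[sum(row[i] * row[j] for row in Z) + (lam if i == j else 0.0)
          for j in range(b)] for i in range(b)]
    B = [sum(row[i] * u for row, u in zip(Z, U)) for i in range(b)]
    return solve_spd(A, B)
```

A typical use would be `phi = make_rff(d, D, sigma, random.Random(0))`, then `W = rls([phi(x) for x in X], y, lam)` and `sum(a * w for a, w in zip(phi(x_new), W))` for prediction. The matrix being factorized is $D \times D$ regardless of the number of samples, in line with the $O(D^2)$ memory figure quoted above.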
Specifically, the parametric problem (4) is equivalent to (16) with

$$W_\lambda = \hat{\boldsymbol{\pi}}, \quad Z = \boldsymbol{\Phi}_n, \quad U = \mathbf{y}_n,$$

while the random features learning problem (15) is equivalent to (16) with

$$W_\lambda = \tilde{W}_\lambda, \quad Z = \tilde{X}, \quad U = Y.$$

Hence, both problems for a given set of $n$ samples can be solved by applying (17).

E. Recursive Regularized Least Squares (RRLS) with Cholesky Update

In scenarios in which supervised samples become available sequentially, a very useful extension of the RLS algorithm consists in the definition of an update rule for the model which allows it to be incrementally trained, increasing adaptivity to changes of the system properties through time. This algorithm is called Recursive Regularized Least Squares (RRLS). We will consider RRLS with the Cholesky update rule [27], which is numerically more stable than others (e.g. the Sherman-Morrison-Woodbury update rule). In adaptive filtering, this update rule is known as the QR algorithm [28].

Let us define $A = Z^\top Z + \lambda I_b$ with $\lambda > 0$ and $B = Z^\top U$. Our goal is to update the model (fully described by $A$ and $B$) with a new supervised sample $(z_{k+1}, u_{k+1})$, with $z_{k+1} \in \mathbb{R}^b$, $u_{k+1} \in \mathbb{R}^c$ row vectors. Consider the Cholesky decomposition $A = R^\top R$. It can always be obtained, since $A$ is positive definite for $\lambda > 0$. Thus, we can express the update problem at step $k + 1$ as:

$$A_{k+1} = R_{k+1}^\top R_{k+1} = A_k + z_{k+1}^\top z_{k+1} = R_k^\top R_k + z_{k+1}^\top z_{k+1}, \tag{18}$$

where $R$ is full rank and unique, and $R_0 = \sqrt{\lambda} I_b$. By defining

$$\tilde{R}_k = \begin{bmatrix} R_k \\ z_{k+1} \end{bmatrix} \in \mathbb{R}^{(b+1) \times b}, \tag{19}$$

we can write $A_{k+1} = \tilde{R}_k^\top \tilde{R}_k$. However, in order to compute $R_{k+1}$ from the obtained $A_{k+1}$ it would be necessary to recompute its Cholesky decomposition, requiring $O(b^3)$ computational time. There exists a procedure, based on Givens rotations, which can be used to compute $R_{k+1}$ from $\tilde{R}_k$ with $O(b^2)$ time complexity.
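The Givens-based procedure mentioned above can be sketched as follows. This is a generic textbook rank-one Cholesky update, written under the assumption that $R_k$ is stored as a dense upper-triangular list of lists; it is not the implementation of [27] or of the library used later in the paper, and the name `chol_update` is illustrative. Each rotation zeroes one entry of the appended row $z_{k+1}$ against the corresponding diagonal entry of $R_k$.

```python
import math

def chol_update(R, z):
    """Return upper-triangular R1 such that R1^T R1 = R^T R + z^T z.

    R: b x b upper-triangular factor (list of lists), z: length-b row vector.
    Uses b Givens rotations, each touching O(b) entries, hence O(b^2) overall.
    """
    b = len(R)
    R = [row[:] for row in R]  # work on copies, leave the inputs untouched
    z = list(z)
    for j in range(b):
        r = math.hypot(R[j][j], z[j])
        if r == 0.0:
            continue  # nothing to rotate in this column
        c, s = R[j][j] / r, z[j] / r
        for k in range(j, b):
            rjk, zk = R[j][k], z[k]
            R[j][k] = c * rjk + s * zk   # rotated row j of R
            z[k] = -s * rjk + c * zk     # rotated appended row; z[j] becomes 0
    return R
```

Starting from $R_0 = \sqrt{\lambda} I_b$, repeated calls keep the factor of $A_k$ up to date without ever forming or refactorizing $A_k$ itself.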
A recursive expression can be obtained also for $B_{k+1}$ as follows:

$$B_{k+1} = Z_{k+1}^\top U_{k+1} = Z_k^\top U_k + z_{k+1}^\top u_{k+1}. \tag{20}$$

Once $R_{k+1}$ and $B_{k+1}$ are known, the updated weights matrix $W_{k+1}$ can be obtained via back and forward substitution as

$$W_{k+1} = R_{k+1} \backslash (R_{k+1}^\top \backslash B_{k+1}). \tag{21}$$

The time complexity for updating $W$ is $O(b^2)$.

As for RLS, the RRLS incremental solution can be applied to both the parametric (4) and nonparametric with random features (15) problems, assuming $\lambda > 0$. In particular, RRLS can be applied to the parametric case by noting that the arrival of a new sample $(\Phi_r, y_r)$ adds $t$ rows to $Z_k = \boldsymbol{\Phi}_{r-1}$ and $U_k = \mathbf{y}_{r-1}$. Consequently, the update of $A$ must be decomposed into $t$ update steps using (18), with $B$ updated accordingly via (20). For each one of these $t$ steps we consider only one row of $\Phi_r$ and $y_r^\top$, namely:

$$z_{k+i} = (\Phi_r)_i, \quad u_{k+i} = (y_r^\top)_i, \quad i = 1 \dots t,$$

where $(V)_i$ is the $i$-th row of the matrix $V$.

For the nonparametric random features case, RRLS can be simply applied with:

$$z_{k+1} = \tilde{x}_r, \quad u_{k+1} = y_r,$$

where $(\tilde{x}_r, y_r)$ is the supervised sample which becomes available at step $r$.

III. SEMIPARAMETRIC INCREMENTAL DYNAMICS LEARNING

We propose a semiparametric incremental inverse dynamics estimator, designed to have better generalization properties with respect to fully parametric and nonparametric ones, both in terms of accuracy and convergence rates. The estimator, whose functioning is illustrated by the block diagram in Figure 2, is composed of two main parts. The first one is an incremental parametric estimator taking as input the rigid body dynamics regressors $\Phi(x)$ and computing two quantities at each step:

• An estimate $\hat{y}$ of the output quantities of interest

• An estimate $\hat{\boldsymbol{\pi}}$ of the base inertial parameters of the links composing the rigid body structure

The employed learning algorithm is RRLS.
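For reference, the two remaining RRLS steps of Section II-E, namely the recursive update (20) of $B$ and the pair of triangular solves (21), can be sketched as below. The sketch assumes that the upper-triangular factor $R$ is already available and stored as a dense list of lists; the helper names `update_B` and `solve_weights` are hypothetical.

```python
def update_B(B, z, u):
    """Recursive update (20): B_{k+1} = B_k + z^T u.

    B: b x c matrix, z: length-b row vector, u: length-c row vector.
    """
    return [[B[i][j] + z[i] * u[j] for j in range(len(u))] for i in range(len(z))]

def solve_weights(R, B):
    """Solve (R^T R) W = B by two triangular solves, as in (21).

    R: b x b upper-triangular factor, B: b x c matrix. Cost O(b^2) per output column.
    """
    b, c = len(R), len(B[0])
    V = [[0.0] * c for _ in range(b)]
    for i in range(b):  # forward substitution with the lower-triangular R^T
        for j in range(c):
            acc = sum(R[k][i] * V[k][j] for k in range(i))
            V[i][j] = (B[i][j] - acc) / R[i][i]
    W = [[0.0] * c for _ in range(b)]
    for i in reversed(range(b)):  # back substitution with the upper-triangular R
        for j in range(c):
            acc = sum(R[i][k] * W[k][j] for k in range(i + 1, b))
            W[i][j] = (V[i][j] - acc) / R[i][i]
    return W
```

For the parametric estimator, one arriving sample would trigger $t$ such row-wise updates followed by a single solve; for the random features estimator, one update and one solve.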
Since RRLS is supervised, during the model update step the measured output $y$ is used by the learning algorithm as ground truth. The parametric estimation is performed first, and it is independent of the nonparametric part. This property is desirable in order to give priority to the identification of the inertial parameters $\boldsymbol{\pi}$. Moreover, since the estimator is incremental, the estimated inertial parameters $\hat{\boldsymbol{\pi}}$ adapt to changes in the inertial properties of the links, which can occur if the end-effector is holding a heavy object. Still, this adaptation cannot address changes in nonlinear effects which do not respect the rigid body assumptions.

The second estimator is also RRLS-based, fully nonparametric and incremental. It leverages the approximation of the kernel function via random Fourier features, as outlined in Section II-C.1, to obtain a nonlinear model which can be updated incrementally with constant update complexity $O(D^2)$, where $D$ is the dimensionality of the random feature space (see Section II-E). This estimator receives as inputs the current vectorized $x$ and $\hat{y}$, normalized and mapped to the random features space approximating the infinite-dimensional feature space induced by the Gaussian kernel. The supervised output is the residual $\Delta y = y - \hat{y}$. The nonparametric estimator provides as output the estimate $\Delta\tilde{y}$ of the residual, which is then added to $\hat{y}$ to obtain the semiparametric estimate $\tilde{y}$. Similarly to the parametric part, in the nonparametric one the estimator's internal nonlinear model can be updated during operation, which constitutes an advantage when the robot has to explore a previously unseen area of the state space, or when the mechanical conditions change (e.g. due to wear and tear or temperature shifts).

IV. EXPERIMENTAL RESULTS

A. Software

For implementing the proposed algorithm we used two existing open source libraries.
For the RRLS learning part we used GURLS [29], a regression and classification library based on the Regularized Least Squares (RLS) algorithm, available for Matlab and C++. For the computation of the regressors $\Phi(q, \dot{q}, \ddot{q})$ we used iDynTree², a C++ dynamics library designed for free-floating robots. Using SWIG [30], iDynTree supports calling its algorithms in several programming languages, such as Python, Lua and Matlab. For producing the presented results, we used the Matlab interfaces of iDynTree and GURLS.

B. Robotic Platform

Fig. 3: CAD drawing of the iCub arm used in the experiments. The six-axis F/T sensor used for validation is visible in the middle of the upper arm link.

iCub is a full-body humanoid with 53 degrees of freedom [19]. For validating the presented approach, we learned the dynamics of the right arm of the iCub as measured from the proximal six-axis force/torque (F/T) sensor embedded in the arm. The considered output $y$ is the reading of the F/T sensor, and the inertial parameters $\boldsymbol{\pi}$ are the base parameters of the arm [31]. As $y$ is not an input variable for the system, the output of the dynamic model is not directly usable for control, but it is still a proper benchmark for the dynamics learning problem, as also shown in [8]. Nevertheless, the joint torques could be computed seamlessly from the F/T sensor readings if needed for control purposes, by applying the method presented in [32].

C. Validation

The aim of this section is to present the results of the experimental validation of the proposed semiparametric model. The model includes a parametric part which is based on physical modeling.
This part is expected to provide acceptable prediction accuracy for the force components in the whole workspace of the robot, since it is based on prior knowledge about the structure of the robot itself, which does not abruptly change as the trajectory changes. On the other hand, the nonparametric part can provide higher prediction accuracy in specific areas of the input space for a given trajectory, since it also models nonrigid body dynamics effects by learning directly from data.

Fig. 2: Block diagram displaying the functioning of the proposed prioritized semiparametric inverse dynamics estimator. $f$ and $\tau$ indicate measured force and torque components, concatenated in the measured output vector $y$. The parametric part is composed of the RBD regressor generator and of the parametric estimator based on RRLS. Its outputs are the estimated parameters $\hat{\boldsymbol{\pi}}$ and the predicted output $\hat{y}$. The nonparametric part maps the input to the random features space with the Random Features Mapper block, and the RFRRLS estimator predicts the residual output $\Delta\tilde{y}$, which is then added to the parametric prediction $\hat{y}$ to obtain the semiparametric prediction $\tilde{y}$.

In order to provide empirical foundations to the above insights, a validation experiment has been set up using the right arm of the iCub humanoid robot, considering as input the positions, velocities and accelerations of the 3 shoulder joints and of the elbow joint, and as outputs the 3 force and 3 torque components measured by the six-axis F/T sensor built into the upper arm. We employ two datasets for this experiment, collected at 10 Hz as the end-effector tracks (using the Cartesian controller presented in [33]) circumferences with 10 cm radius on the transverse ($XY$) and sagittal ($XZ$) planes³ at approximately 0.6 m/s.

² https://github.com/robotology/idyntree
The total number of points for each dataset is 10000, corresponding to approximately 17 minutes of continuous operation.

³ For more information on the iCub reference frames, see http://eris.liralab.it/wiki/ICubForwardKinematics

The steps of the validation experiment for the three models are the following:

1) Initialize the recursive parametric, nonparametric and semiparametric models to zero. The inertial parameters are also initialized to zero

2) Train the models on the whole $XY$ dataset (10000 points)

3) Split the $XZ$ dataset into 10 sequential parts of 1000 samples each. Each part corresponds to 100 seconds of continuous operation

4) Test and update the models independently on the 10 split datasets, one sample at a time.

In Figure 4 we present the means and standard deviations of the average root mean squared error (RMSE) of the predicted force and torque components on the 10 different test sets for the three models, averaged over a 3-second sliding window. The x axis is reported in log-scale to facilitate the comparison of predictive performance for the different approaches in the initial transient phase. We observe similar behaviors for the force and torque RMSEs. After a few seconds, the nonparametric (NP) and semiparametric (SP) models provide more accurate predictions than the parametric (P) model with statistical significance. At steady state, their force prediction error is approximately 1 N, while that of the P model is approximately two times larger. Similarly, the torque prediction error is 0.1 Nm for SP and NP, which is considerably better than the 0.4 Nm average RMSE of the P model. It should also be noted that the mean average RMSE of the SP model is lower than the NP one, both for forces and torques. However, this slight difference is not very significant, since it is relatively small with respect to the standard deviation.
Giv en these experimental results, we can conclude that in terms of predictive accuracy the proposed incremental semiparametric method outperforms the incremental para- metric one and matches the fully nonparametric one. The SP method also sho ws a smaller standard de viation of the error with respect to the competing methods. Considering the previous results and observations, the proposed method has been sho wn to be able to combine the main adv antages of parametric modeling (i.e. interpretability) with the ones of nonparametric modeling (i.e. capacity of modeling nonrigid body dynamics phenomena). The incremental nature of the algorithm, in both its P and NP parts, allows for adaptation to changing conditions of the robot itself and of the surrounding en vironment. V . C O N C L U S I O N S W e presented a novel incremental semiparametric model- ing approach for inv erse dynamics learning, joining together the advantages of parametric modeling derived from rigid body dynamics equations and of nonparametric Machine Learning methods. A distincti ve trait of the proposed ap- proach lies in its incremental nature, encompassing both the parametric and nonparametric parts and allowing for the prioritized update of both the identified base inertial parameters and the nonparametric weights. Such feature Seconds 0.1 1 10 100 Average RMSE (forces) 0 0.5 1 1.5 2 2.5 3 SP Recursive NP Recursive Parametric Rec. Seconds 0.1 1 10 100 Average RMSE (torques) 0 0.1 0.2 0.3 0.4 0.5 0.6 SP Recursive NP Recursive Parametric Rec. Fig. 4: Predicted forces (top) and torques (bottom) compo- nents av erage RMSE, averaged ov er a 30-samples window for the recursive semiparametric (blue), nonparametric (red) and parametric (green) estimators. The solid lines indicate the mean values over 10 repetitions, and the transparent areas correspond to the standard deviations. 
On the x axis, time (in seconds) is reported in logarithmic scale, in order to clearly show the behavior of the estimators during the initial transient phase. On the y axis, the average RMSE is reported. is ke y to enabling robotic systems to adapt to mutable conditions of the en vironment and of their o wn mechanical properties throughout extended periods. W e validated our approach on the iCub humanoid robot, by analyzing the performances of a semiparametric in verse dynamics model of its right arm, comparing them with the ones obtained by state of the art fully nonparametric and parametric approaches. AC K N OW L E D G M E N T This paper was supported by the FP7 EU projects CoDyCo (No. 600716 ICT -2011.2.1 - Cogniti ve Systems and Robotics), Koroibot (No. 611909 ICT -2013.2.1 - Cogni- tiv e Systems and Robotics), WYSIWYD (No. 612139 ICT - 2013.2.1 - Robotics, Cogniti ve Systems & Smart Spaces, Symbiotic Interaction), and Xperience (No. 270273 ICT - 2009.2.1 - Cogniti ve Systems and Robotics). R E F E R E N C E S [1] R. Featherstone and D. E. Orin, “Dynamics.” in Springer Handbook of Robotics , B. Siciliano and O. Khatib, Eds. Springer , 2008, pp. 35–65. [2] A. E. Hoerl and R. W . Kennard, “Ridge Regression: Biased Estimation for Nonorthogonal Problems, ” T echnometrics , vol. 12, no. 1, pp. pp. 55–67, 1970. [3] C. Saunders, A. Gammerman, and V . V ovk, “Ridge Regression Learning Algorithm in Dual V ariables.” in ICML , J. W . Shavlik, Ed. Morgan Kaufmann, 1998, pp. 515–521. [4] N. Cristianini and J. Shawe-T aylor, An Introduction to Support V ector Machines and Other Kernel-based Learning Methods . Cambridge Univ ersity Press, 2000. [Online]. A vailable: https: //books.google.it/books?id=B- Y88GdO1yYC [5] R. Rifkin, G. Y eo, and T . Poggio, “Regularized least-squares classi- fication, ” Nato Science Series Sub Series III Computer and Systems Sciences , no. 190, pp. 131–154, 2003. [6] C. E. Rasmussen and C. K. I. 
Williams, Gaussian Processes for Machine Learning. MIT Press, 2006. [Online]. Available: http://www.gaussianprocess.org/gpml; http://www.bibsonomy.org/bibtex/257ca77b8164cba5c6a0ac94918219119/3mta3
[7] D. Nguyen-Tuong and J. Peters, “Using model knowledge for learning inverse dynamics,” in ICRA. IEEE, 2010, pp. 2677–2682.
[8] A. Gijsberts and G. Metta, “Incremental learning of robot dynamics using random features,” in ICRA. IEEE, 2011, pp. 951–956.
[9] T. Wu and J. Movellan, “Semi-parametric Gaussian process for robot system identification,” in Intelligent Robots and Systems (IROS), 2012 IEEE/RSJ International Conference on, Oct 2012, pp. 725–731.
[10] J. Sun de la Cruz, D. Kulic, W. Owen, E. Calisgan, and E. Croft, “On-Line Dynamic Model Learning for Manipulator Control,” in IFAC Symposium on Robot Control, vol. 10, no. 1, 2012, pp. 869–874.
[11] K. Yamane, “Practical kinematic and dynamic calibration methods for force-controlled humanoid robots,” in Humanoids. IEEE, 2011, pp. 269–275.
[12] S. Traversaro, A. D. Prete, R. Muradore, L. Natale, and F. Nori, “Inertial parameter identification including friction and motor dynamics,” in Humanoids. IEEE, 2013, pp. 68–73.
[13] Y. Ogawa, G. Venture, and C. Ott, “Dynamic parameters identification of a humanoid robot using joint torque sensors and/or contact forces,” in Humanoids. IEEE, 2014, pp. 457–462.
[14] J. Hollerbach, W. Khalil, and M. Gautier, “Model identification,” in Springer Handbook of Robotics. Springer, 2008, pp. 321–344.
[15] S. Vijayakumar and S. Schaal, “Locally Weighted Projection Regression: Incremental Real Time Learning in High Dimensional Space,” in ICML, P. Langley, Ed. Morgan Kaufmann, 2000, pp. 1079–1086.
[16] B. Schölkopf and A. J. Smola, Learning with Kernels: Support Vector Machines, Regularization, Optimization, and Beyond (Adaptive Computation and Machine Learning). MIT Press, 2002.
[17] A. Rahimi and B. Recht, “Random Features for Large-Scale Kernel Machines,” in NIPS. Curran Associates, Inc., 2007, pp. 1177–1184.
[18] D. Nguyen-Tuong, M. Seeger, and J. Peters, “Model Learning with Local Gaussian Process Regression,” Advanced Robotics, vol. 23, no. 15, pp. 2015–2034, 2009. [Online]. Available: http://dx.doi.org/10.1163/016918609X12529286896877
[19] G. Metta, L. Natale, F. Nori, G. Sandini, D. Vernon, L. Fadiga, C. von Hofsten, K. Rosander, M. Lopes, J. Santos-Victor, A. Bernardino, and L. Montesano, “The iCub Humanoid Robot: An Open-systems Platform for Research in Cognitive Development,” Neural Netw., vol. 23, no. 8-9, pp. 1125–1134, Oct. 2010.
[20] M. Gautier and W. Khalil, “On the identification of the inertial parameters of robots,” in Decision and Control, 1988., Proceedings of the 27th IEEE Conference on. IEEE, 1988, pp. 2264–2269.
[21] M. Gautier, “Dynamic identification of robots with power model,” in Robotics and Automation, 1997. Proceedings., 1997 IEEE International Conference on, vol. 3. IEEE, 1997, pp. 1922–1927.
[22] K. Ayusawa, G. Venture, and Y. Nakamura, “Identifiability and identification of inertial parameters using the underactuated base-link dynamics for legged multibody systems,” The International Journal of Robotics Research, vol. 33, no. 3, pp. 446–468, 2014.
[23] J. Baelemans, P. van Zutven, and H. Nijmeijer, “Model parameter estimation of humanoid robots using static contact force measurements,” in Safety, Security, and Rescue Robotics (SSRR), 2013 IEEE International Symposium on, Oct 2013, pp. 1–6.
[24] W. Khalil and E. Dombre, Modeling, identification and control of robots. Butterworth-Heinemann, 2004.
[25] A. Rahimi and B. Recht, “Uniform approximation of functions with random bases,” in Communication, Control, and Computing, 2008 46th Annual Allerton Conference on, Sept 2008, pp. 555–561.
[26] W. Rudin, Fourier Analysis on Groups, ser. A Wiley-interscience publication. Wiley, 1990.
[27] Å. Björck, Numerical Methods for Least Squares Problems. SIAM, Philadelphia, 1996.
[28] A. H. Sayed, Adaptive Filters. Wiley-IEEE Press, 2008.
[29] A. Tacchetti, P. K. Mallapragada, M. Santoro, and L. Rosasco, “GURLS: a least squares library for supervised learning,” The Journal of Machine Learning Research, vol. 14, no. 1, pp. 3201–3205, 2013.
[30] D. M. Beazley et al., “SWIG: An easy to use tool for integrating scripting languages with C and C++,” in Proceedings of the 4th USENIX Tcl/Tk workshop, 1996, pp. 129–139.
[31] S. Traversaro, A. Del Prete, S. Ivaldi, and F. Nori, “Inertial parameters identification and joint torques estimation with proximal force/torque sensing,” in 2015 IEEE International Conference on Robotics and Automation (ICRA 2015).
[32] S. Ivaldi, M. Fumagalli, M. Randazzo, F. Nori, G. Metta, and G. Sandini, “Computing robot internal/external wrenches by means of inertial, tactile and F/T sensors: Theory and implementation on the iCub,” in Humanoid Robots (Humanoids), 2011 11th IEEE-RAS International Conference on, Oct 2011, pp. 521–528.
[33] U. Pattacini, F. Nori, L. Natale, G. Metta, and G. Sandini, “An experimental evaluation of a novel minimum-jerk cartesian controller for humanoid robots,” in Intelligent Robots and Systems (IROS), 2010 IEEE/RSJ International Conference on, Oct 2010, pp. 1668–1674.
