Convergence and sample complexity of gradient methods for the model-free linear quadratic regulator problem

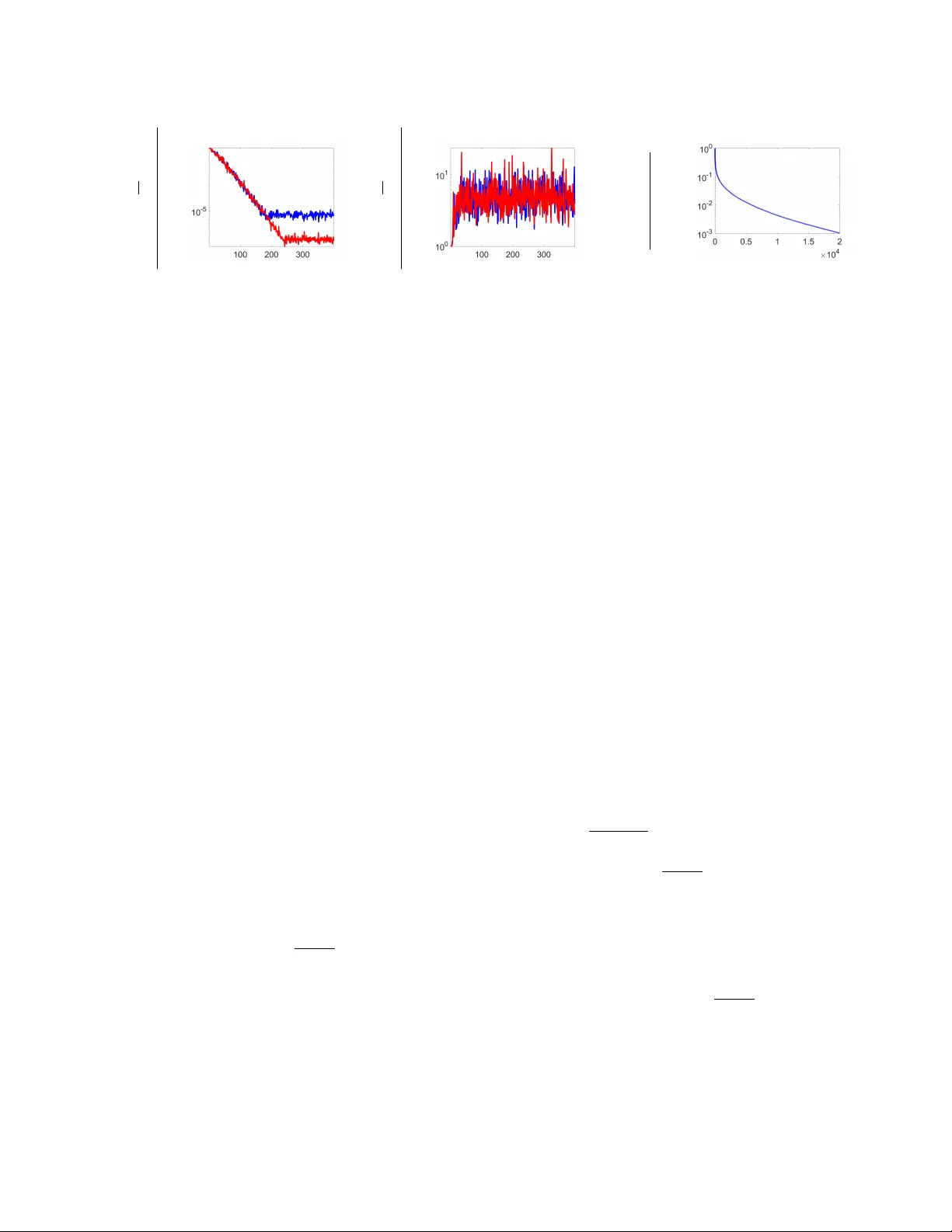

Model-free reinforcement learning attempts to find an optimal control action for an unknown dynamical system by directly searching over the parameter space of controllers. The convergence behavior and statistical properties of these approaches are of…

Authors: Hesameddin Mohammadi, Armin Zare, Mahdi Soltanolkotabi