Multi-scale fully convolutional neural networks for histopathology image segmentation: from nuclear aberrations to the global tissue architecture

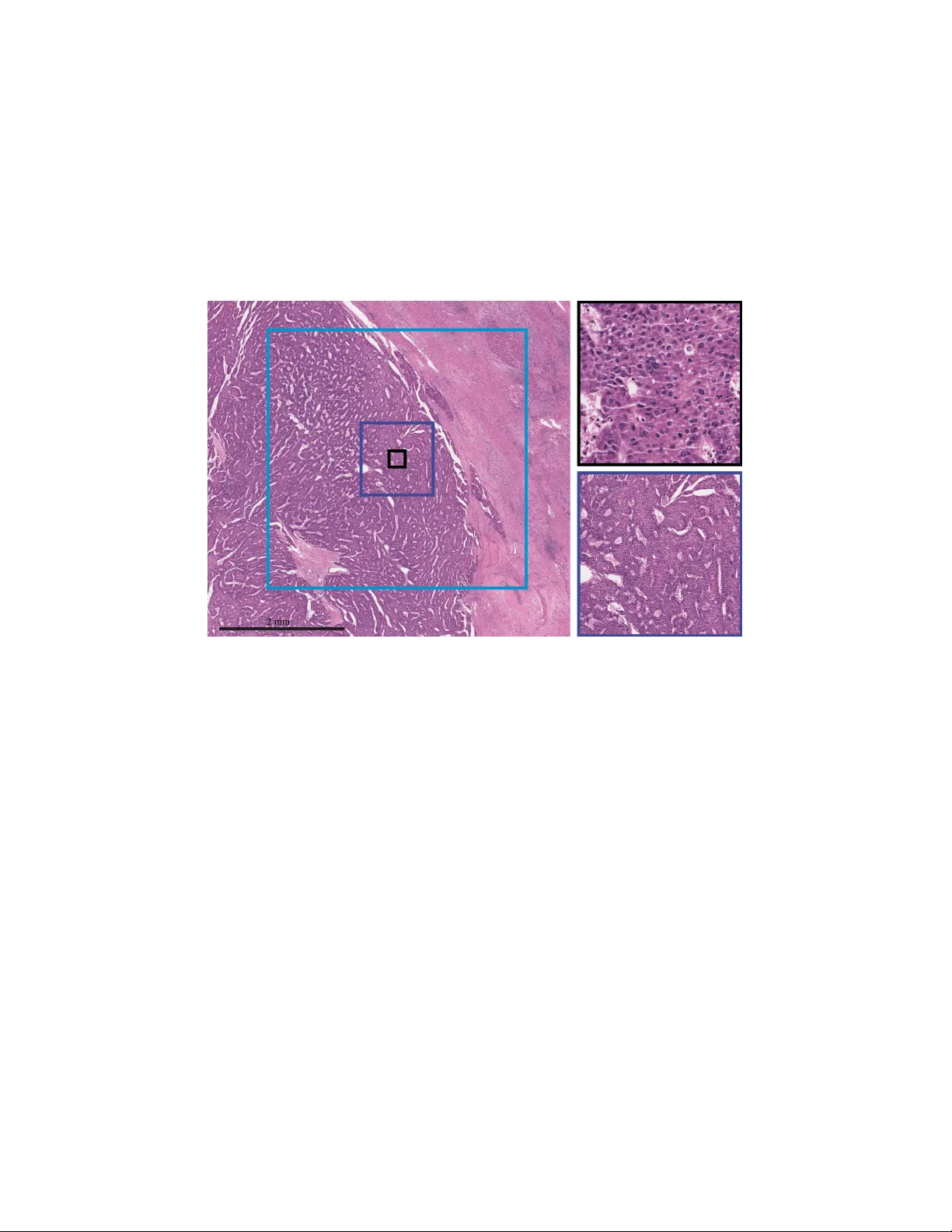

Histopathologic diagnosis relies on simultaneous integration of information from a broad range of scales, ranging from nuclear aberrations ($\approx \mathcal{O}(0.1{\mu m})$) through cellular structures ($\approx \mathcal{O}(10{\mu m})$) to the globa…

Authors: R"udiger Schmitz, Frederic Madesta, Maximilian Nielsen