Handheld Multi-Frame Super-Resolution

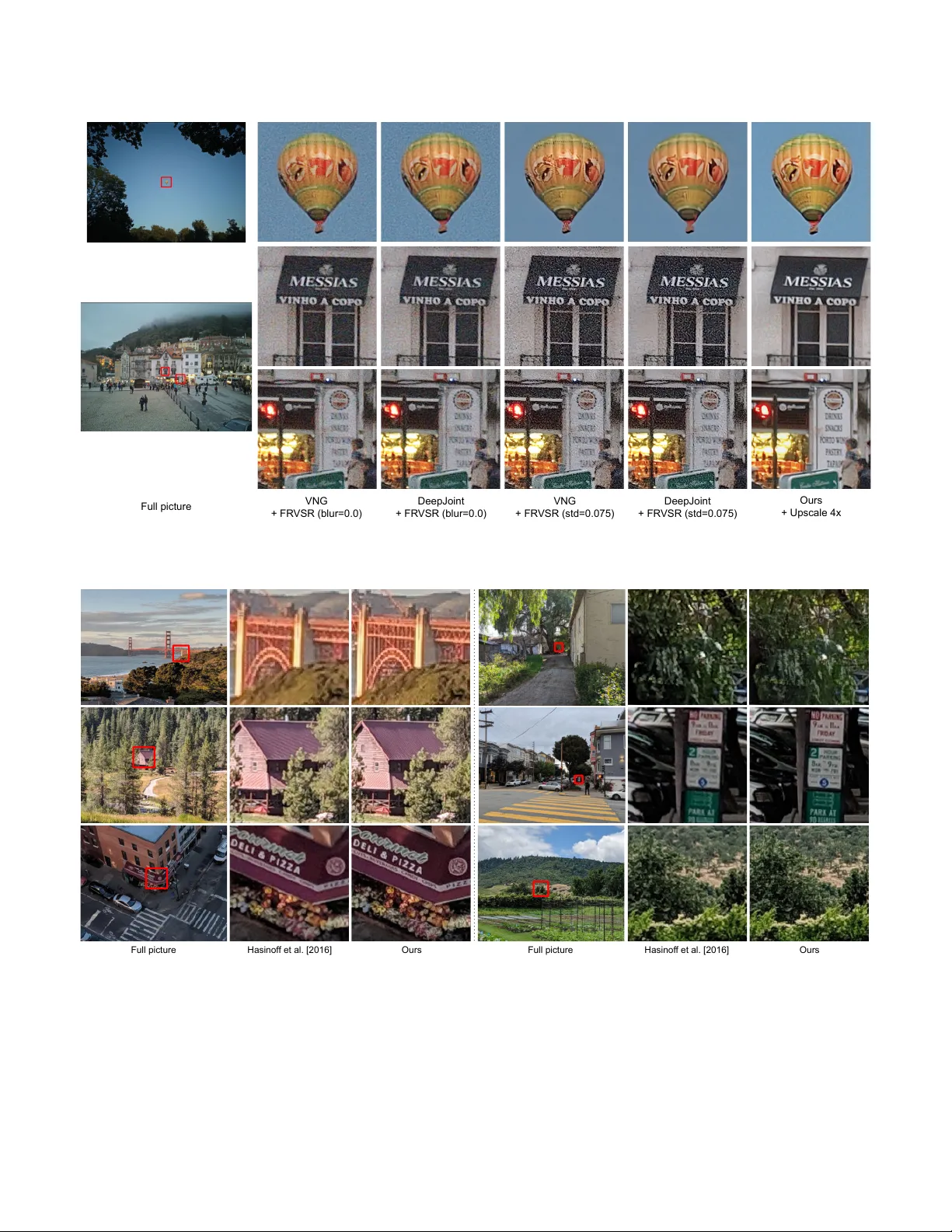

Compared to DSLR cameras, smartphone cameras have smaller sensors, which limits their spatial resolution; smaller apertures, which limits their light gathering ability; and smaller pixels, which reduces their signal-to noise ratio. The use of color f…

Authors: Bartlomiej Wronski, Ignacio Garcia-Dorado, Manfred Ernst