From Audio to Semantics: Approaches to end-to-end spoken language understanding

Conventional spoken language understanding systems consist of two main components: an automatic speech recognition module that converts audio to a transcript, and a natural language understanding module that transforms the resulting text (or top N hy…

Authors: Parisa Haghani, Arun Narayanan, Michiel Bacchiani

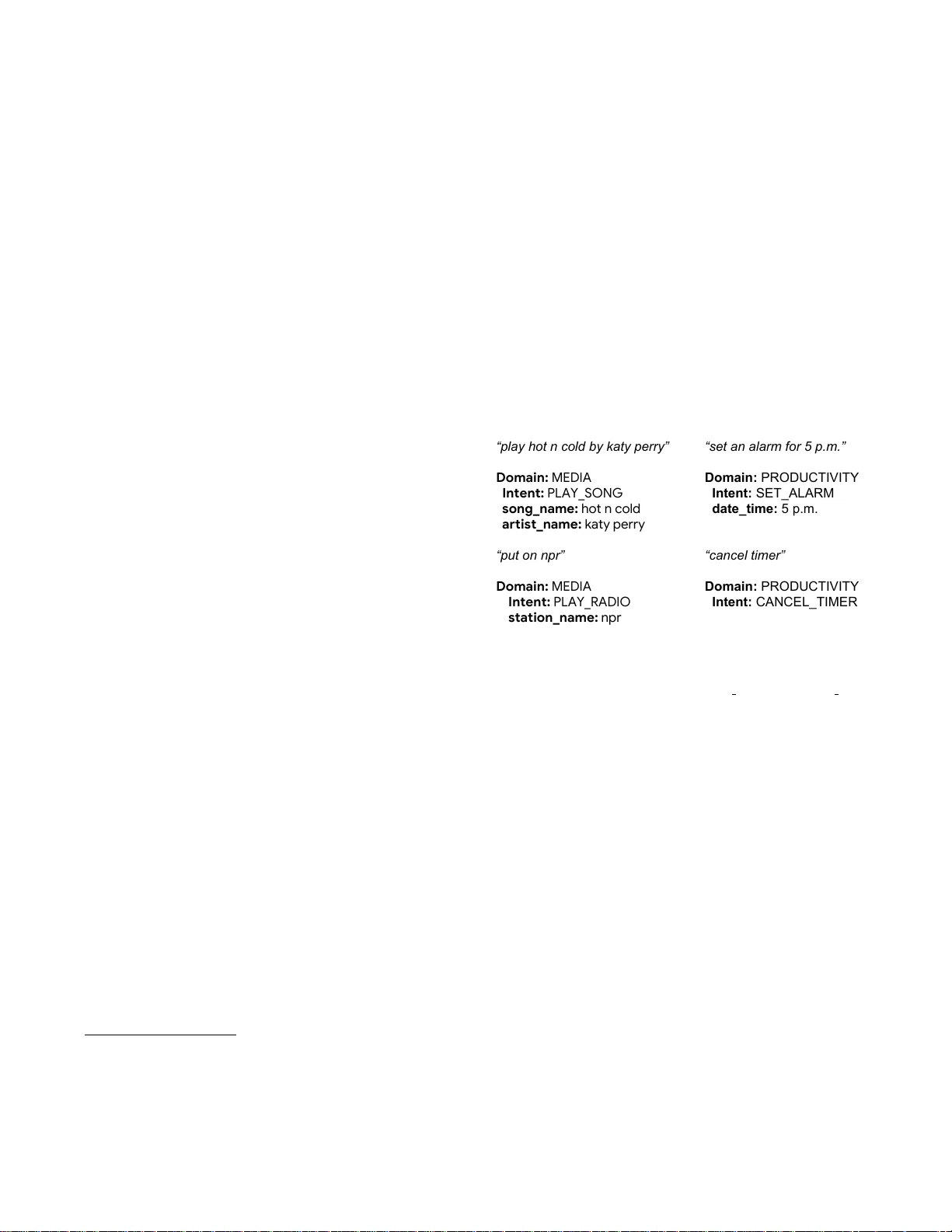

FR OM A UDIO T O SEMANTICS: APPR O A CHES TO END-T O-END SPOKEN LANGU A GE UNDERST ANDING P arisa Ha ghani * , Arun Narayanan ∗ , Michiel Bacc hiani, Galen Chuang Neeraj Gaur , P edr o Mor eno, Rohit Prabhavalkar , Zhongdi Qu, A ustin W ater s Google Inc., USA ABSTRA CT Con ventional spoken language understanding systems consist of tw o main components: an automatic speech recognition module that con- verts audio to a transcript, and a natural language understanding module that transforms the resulting text (or top N hypotheses) into a set of domains, intents, and arguments. These modules are typ- ically optimized independently . In this paper , we formulate audio to semantic understanding as a sequence-to-sequence problem [1]. W e propose and compare various encoder-decoder based approaches that optimize both modules jointly , in an end-to-end manner . Evalu- ations on a real-world task show that 1) having an intermediate text representation is crucial for the quality of the predicted semantics, especially the intent arguments and 2) jointly optimizing the full system improves overall accuracy of prediction. Compared to in- dependently trained models, our best jointly trained model achiev es similar domain and intent prediction F 1 scores, but improves argu- ment word error rate by 18% relati ve. Index T erms — spoken language understanding, sequence-to- sequence, end-to-end training, multi-task learning, speech recogni- tion 1. INTRODUCTION Understanding semantics from a user input or a query is central to any human computer interface (HCI) that aims to interact naturally with users. Spoken dialogue systems that aim to solv e this for spe- cific tasks hav e been a focus of research for more than two decades [2]. W ith the widespread adoption of smart devices like Google- Home [3], Amazon Alexa, Apple Siri and Microsoft Cortana, spoken language understanding (SLU) is moving to the forefront of HCI. T ypically , SLU in volv es multiple modules. An automatic speech recognition system (ASR) first transcribes the user query into a tran- script. This is then fed to a module that does natural language under - standing (NLU) 1 . NLU itself in v olves domain classification, intent detection, and slot filling [2]. In traditional NLU systems, first the high level domain of a transcript is identified. Subsequently , intent detection and slot filling are performed according to the predicted domain’ s semantic template. Intent detection identifies the finer- grained intent class a gi ven transcript belongs to. Slot filling, or argu- ment prediction, 2 is the task of extracting semantic components, like the argument values corresponding to the domain. Figure 1 sho ws ∗ The first tw o authors ha ve equal contribution, the rest of the list is in alphabetic order . 1 W e use NLU to refer to the component that predicts domain, intent, and slots gi ven the ASR transcript. SLU refers to the full system that predicts the aforementioned starting from audio. 2 W e use slots and arguments interchangeably . example transcripts and their corresponding domain, intent, and ar- guments. Recent work [4, 5] has sho wn that jointly optimizing these three tasks improv es the overall quality of the NLU component. For conciseness, we use the w ord semantics to refer to all three of do- main, intent and arguments. “play hot n cold by katy perry” Domain: MEDIA Intent: PLAY_SONG song_name: hot n cold artist_name: katy perry “put on npr” Domain: MEDIA Intent: PLAY_RADIO station_name: npr “set an alarm for 5 p.m.” Domain: PRODUCTIVITY Intent: SET_ALARM date_time: 5 p.m. “cancel timer” Domain: PRODUCTIVITY Intent: CANCEL_TIMER Fig. 1 : Example transcripts and their corr esponding domain, intent, and arguments. Only arguments that have corresponding values in a transcript are shown. F or example, song name and station name ar e both arguments in the MEDIA domain b ut only one has a corr e- sponding value in each of the MEDIA e xamples. Even though user interactions in an SLU system start as a voice query , most NLU systems assume that the transcript of the request is av ailable or obtained independently . The NLU module is typically optimized independent of ASR. While accuracy of ASR systems hav e improved over the years [6, 7], errors in recognition worsen NLU performance. This problem gets exacerbated on smart devices, where interactions tend to be more conv ersational. Howe ver , not all ASR errors are equally bad for NLU. For most applications, the se- mantics consist of an action with relev ant arguments; a large part of the transcript of the ASR module has no impact on the end result as long as intent classification and predicted arguments are accurate. For example, for a user query , “Set an alarm at two o’clock, ” in- tent, “alarm, ” and its arguments, ‘tw o o’clock’, are more important than filler words, like ‘an’. Joint optimization can focus on improv- ing those aspects of transcription accuracy that are aligned with the end goal, whereas independent optimization fails at that objectiv e. Furthermore, for some applications, there are intents that are more naturally predicted from audio compared to transcript. F or example, when training an automated assistant, like Google Duplex [8] or an airline travel assistant, it would be useful to identify acoustic ev ents like background noise, music and other non-verbal cues as special intents, and tailor the assistant’ s response accordingly to improv e the overall user e xperience. Hence, training various components of the SLU system jointly can be advantageous. There hav e been some early attempts at using audio to perform NLU. Domain and intent are predicted directly from audio in [9], and this approach is shown to perform competiti vely , but w orse than predicting from transcript. Alternativ ely , using multiple ASR hy- pothesis [10] or the word confusion network [11] or the recognition lattice [12] hav e been proposed to account for ASR errors, but inde- pendent optimization of ASR and NLU can still lead to sub-optimal performance. In [13], an ASR correcting module is trained jointly with NLU component. T o account for ASR errors, multiple ASR hy- potheses are generated during training as additional input sequences, which are then error-corrected by the slot-filling model. While the slot-filling module is trained to account for the errors, the ASR mod- ule is still trained independent of NLU. Similar to the work in [9], an end-to-end system is proposed in [14] that does intent classification directly from speech, with an intermediate ASR task. But unlike the current work, it uses a connectionist temporal classification (CTC) [15] acoustic model, and only performs intent prediction. In the cur- rent work, we show that NLU, i.e., domain, intent, and argument prediction can be done jointly with ASR starting directly from au- dio and with a quality of performance that matches or surpasses an independently trained counterpart. The systems presented in this study are moti vated by the encoder-decoder based sequence-to-sequence (Seq2Seq) [16, 17, 1] approach that has shown to perform well for machine translation [18] and speech recognition tasks [19]. Encoder-decoder based approaches provide an attractiv e framework to implementing SLU systems, since the attention mechanism allo ws for jointly learning an alignment while predicting a target sequence that has a many-to-one relationship with its input [20]. Such techniques hav e already been used in NLU [21], but using ASR transcripts, not audio, as input to the system. In this work, we present and compare various end-to-end ap- proaches to SLU for joinlty predicting semantics from audio. The presented techniques simplify the ov erall architecture of SLU sys- tems. Using a lar ge training set comparable to what is typically used for b uilding lar ge-vocab ulary ASR systems, we sho w that not only can predicting semantics from audio be competitive, it can in some conditions outperform the conv entional two-stage approach. T o the best of our knowledge, this is the first study that shows all three of domain, intent, and ar guments can be predicted from audio with competitiv e results. The rest of the paper is organized as follo ws. Section 2 presents various models and architectures explored in this work. The exper- imental setup and results are described in Section 3 and Section 4, respectiv ely . W e conclude in Section 5. 2. SYSTEM ARCHITECTURE Our work is based on the encoder-decoder framework augmented by attention. W e start by re viewing this framew ork in Section 2.2. There are multiple ways to model an end-to-end SLU system. One can either predict semantics directly from audio, ignoring the tran- script, or have separate modules for predicting the transcript and se- mantics that are optimized jointly . These different approaches and the corresponding formulation are described in Sections 2.4 – 2.6. Figure 2 shows a schematic representation of these architectures. 2.1. Notation T o revie w the general encoder-decoder frame work, we denote the input and output sequences by A = { a 1 , . . . , a K } and B = Encoder1 Decoder1 x 1 ...x T s 1 ...s M Encoder1 Decoder1 x 1 ...x T w 1 ...w N s 1 ...s M Encoder1 x 1 ...x T Decoder1 w 1 ...w N Decoder2 s 1 ...s M Encoder1 Decoder1 x 1 ...x T w 1 ...w N Encoder2 Decoder2 s 1 ...s M a. Direct model b. Joint model c. Multitask model d. Multistage model Fig. 2 : Differ ent model ar c hitecture s in vestigated in this paper . X stands for acoustic features, W for tr anscripts and S for seman- tics (domain, intent and arguments). Dotted lines r epr esent both the conditioning of output label on its history and the attention module, which is tr eated as a part of the decoder . { b 1 , . . . , b L } , where K and L denote their lengths. In this work, since we start from audio, the input sequence to the model are acous- tic features (we describe the details of acoustic feature computation in Section 3.3), while the output sequence, depending on the model architecture, may be the transcript, the corresponding semantics, or both. While the semantics of an utterance is best represented as structured data, we use a simple deterministic scheme for serializing it by first including the domain and intent, follo wed by the argument labels and their v alues (see T able 1). More details are described in Section 3.2. For the rest of the paper, we denote the input acous- tic features by X = { x 1 , . . . , x T } , where T stands for the total number of time frames. The transcript is represented as a sequence of graphemes. It is denoted as W = { w 1 , . . . , w N } , where N stands for the number of graphemes in the transcript. The semantics sequence is represented by S = { s 1 , . . . , s M } , where M stands for the number of tokens. The tokens come from a dictionary consisting of the domain, intent, ar gument labels, and graphemes to represent the argument v alues. 2.2. Encoder -decoder framew ork Giv en the training pair ( A , B ) and model parameters θ , a sequence- to-sequence model computes the conditional probability P ( B|A ; θ ) . This can be done by estimating the terms of the probability using chain rule: T ranscript Serialized Semantics “can you set an alarm for 2 p.m. ” < DOMAIN >< PR ODUCTIVITY >< INTENT >< SET ALARM >< DA TETIME > 2 p.m. “remind me to buy milk” < DOMAIN >< PR ODUCTIVITY >< INTENT >< ADD REMINDER >< SUBJECT > buy milk “next song please” < DOMAIN >< MEDIA CONTROL > “how old is barack obama” < DOMAIN >< NONE > T able 1 : Example transcripts and their corr esponding serialized semantics. P ( B|A ; θ ) = L Y i =1 P ( b i | b 1 , . . . , b i − 1 , A ; θ ) (1) The parameters of the model are learned by maximizing the condi- tional probabilities for the training data: θ ? = argmax θ X ( A , B ) log P ( B|A ; θ ) (2) In the encoder-decoder framew ork [16, 1], the model is param- eterized as a neural network, most commonly a recurrent neural net- work, consisting of two main parts: An encoder that receiv es the input sequence and encodes it into a higher le vel representation, and a decoder that generates the output from this representation after first being fed a special start-of-sequence symbol. Decoding terminates when the decoder emits the special end-of-sequence symbol. The modeling power of encoder -decoder framework has been improv ed by the addition of an attention mechanism [17]. This mechanism was introduced to overcome the bottleneck of having to encode the en- tire v ariable length input sequence in a single v ector . At each output step, the decoder’ s last hidden state is used to generate an attention vector over the entire encoded input sequence, which is used to sum- marize and propagate the needed information from the encoder to the decoder at every output step. In this work, we use multi-headed attention [22] that allows the decoder to focus on multiple parts of the input when generating each output. The ef fectiv eness of this type of attention for ASR was explored and v erified in [23]. 2.3. Direct model In the direct model the semantics of an utterance are directly pre- dicted from the audio. The model does not learn to fully transcribe the input audio; it learns to only transcribe parts of the transcript that appear as argument v alues. Conceptually , this is the simplest formu- lation for end-to-end semantics prediction. But it also makes the task challenging, since the model has to implicitly learn to ignore parts of the transcript that is not part of an ar gument and the corresponding audio, while also inferring the domain and intent in the process. Follo wing the notation introduced in Section 2.2, the model di- rectly computes P ( S |X ; θ ) , as in Equation 1. The encoder takes the acoustic features, X , as input and the decoder generates the semantic sequence, S . 2.4. Joint model This model still consists of an encoder and a decoder, similar to the direct model, but the decoder generates the transcript followed by domain, intent, and arguments. The output of this model is thus the concatenation of transcript and its corresponding semantics: [ W : S ] where [:] denotes concatenation of the first and the second sequence. This formulation conditions intent and ar gument prediction on the transcript: P ( S , W |X ; θ ) = P ( S |W , X ; θ ) P ( W |X ; θ ) (3) This model retains the simplicity of the direct model, while simulta- neously making learning easier by introducing an intermediate tran- script representation corresponding to the input audio. 2.5. Multitask model Multitask learning [24] (MTL) is a widely used technique when learning related tasks, typically with limited data. Related tasks act as inductive bias, improving generalization of the main task by choosing parameters that are optimal for all tasks. Although predict- ing the text transcript is not necessary for domain, intent and argu- ment prediction, it is a natural secondary task that can potentially offer a strong inductiv e bias while learning. In MTL, we f actorize P ( S , W |X ; θ ) as: P ( S , W |X ; θ ) = P ( S |X ; θ ) P ( W |X ; θ ) . (4) In the case of neural nets, multitask learning is typically done by sharing hidden representations between tasks [25]. In this work, we do this by sharing the encoder and having separate decoders for pre- dicting transcripts and semantics. W e then learn parameters that op- timize both tasks: θ ? = argmax θ X ( X , W , S ) log P ( W |X ; θ e , θ W d ) + log P ( S |X ; θ e , θ S d ) , (5) where, θ = ( θ e , θ W d , θ S d ) . θ e , θ W d , θ S d are the parameters of the shared encoder, the decoder that predicts the transcript, and the decoder that predicts semantics, respectively . The shared encoder learns representations that enable both transcript and semantics prediction. 2.6. Multistage model Multistage (MS) model, when trained under the maximum likeli- hood criterion, is most similar to the con ventional approach of train- ing the ASR and NLU components independently . In MS modeling, semantics are assumed to be conditionally independent of acoustics giv en the transcript: P ( S , W |X ; θ ) = P ( S |W ; θ ) P ( W | X ; θ ) . (6) Giv en this formulation, θ can be learned as: θ ? = argmax θ X ( X , W , S ) log P ( W |X ; θ W ) + log P ( S |X ; θ W , θ S ) , (7) Here, θ W , θ S are, respecti vely , the parameters of the first stage, which predicts the transcript, and the second stage, which predicts semantics. For each training example, we assume that the triplet T able 2 : Distribution of domains consider ed in this study in the training and test data. domain T rain T est MEDIA 30% 20% MEDIA CONTROL 8% 16% PR ODUCTIVITY 7% 5% DELIGHT 2% 2% NONE 53% 56% ( X , W , S ) is av ailable. As a result, the two terms in Eq. 6 can be independently optimized, thereby reducing the model to a con ven- tional 2-stage SLU system. In practice, howe ver , it is possible to weakly tie the two stages together during training by using the pr e- dicted W at each time-step and allowing the gradients to pass from the second stage to the first stage through that label index. In Sec. 4, we will present results using alternati ve strategies to pick W from the first stage to propagate to the second stage, like the argmax of the softmax layer or sampling from the multinomial distribution in- duced by the softmax layer . By weakly tying the two stages, we allow the first stage to be optimized jointly with the second stage, based on the criterion that is relev ant for both stages. One of the advantages of the multistage approach is that the pa- rameters for the 2 tasks are decoupled. Therefore, we can easily use different corpora to train each stage. T ypically , the amount of data av ailable to train a speech recognizer far e xceeds the amount av ail- able to train an NLU system. In such cases, we can use the av ailable ASR training data to tune the first stage and finally train the entire system using whate ver data is av ailable to train jointly . Furthermore, a stronger coupling between the 2 stages can be made when opti- mizing alternativ e loss criterion like the minimum Bayes risk (MBR) [26][27]. W e’ll leave these aspects of multistage modeling to future work, as the focus of current study is more to understand the feasi- bility of predicting directly from audio and training jointly . 3. EXPERIMENT AL SETUP 3.1. Data Our training data consists of 24 M anonymized English utterances transcribed by humans. Similarly , our test set consists of 16 K hand- transcribed utterances. Both training and testing sets represent a slice of traffic from Google Home that we are interested in. The labeling for domain, intent, and arguments is generated from pass- ing the ground-truth transcription through context free grammars (CFG). The CFGs are used to parse and transform ground-truth tran- scripts to domain, intent, and arguments. W e only consider non- con versational (one-shot) queries in this work. In total, there are 5 domains: MEDIA, MEDIA CONTR OL, PR ODUCTIVITY , DE- LIGHT , and NONE. As the name suggests, an y utterance that cannot be classified into the first four domains is labeled NONE. W e con- sider ∼ 20 intents in this study , such as SET ALARM, SELF NO TE, etc., and tw o arguments: D A TETIME and SUBJECT . The distribu- tion of domains in the train and test sets is shown in T able 2. 3.2. Serializing/De-serializing Semantics W e use a simple scheme for serializing semantics: The domain is specified first using a special tag ‘ < DOMAIN > ’ followed by its name. If the domain is further divided into intents, we use the tag ‘ < INTENT > ’ followed by the intent’ s name. Any optional argu- ments are specified similarly using the name of the argument and its corresponding v alue. T able 1 sho ws a few example transcripts and their corresponding serialized semantics. At inference time, the predicted semantics sequence, S , is de- serialized in a similar fashion to extract the domain, intent, and ar - gument label and v alues. This is done using a simple parser that tokenizes the sequence by the domain tag, intent tag and argument name and treats the sequence in between them as the corresponding values. This parser is agnostic to the order of these special tags, i.e., the domain tag can come ahead of the intent tag. In the case of the joint model where the output sequence is the concatenation of the transcript and semantics, the first observed special tag or argument name marks the start of the semantic sequence. The vocab ulary that we use includes the domain and intent tags, domain, intent and argument names, (i.e., all symbols enclosed in “ < ” and “ > ” in T able 1) as well as English graphemes for repre- senting transcript and ar gument values. The graphemes in this study are limited to lowercase English alphabets and digits, punctuation and a few other special symbols such as underscore, brackets, start- of-sentence, and end-of-sentence. The total size of the vocabulary is 110 . Note that the special tags used for representing semantics are each a single ouput, e.g., “ < DOMAIN > ” is one output and not eight graphemes “ < , D, ..., N, > ”. 3.3. Models All experiments use the same acoustic features: 80 -dimensional log- Mel filterbanks, computed with a 25 msec window , shifted ev ery 10 msec. Similar to [28], features from 3 contiguous frames are stacked, resulting in a 240 -dimensional vector . These stacked features are downsampled by a factor of 3 generating inputs at 30 ms frame rate that the encoder operates on. T able 3 : Model arc hitectur es used in the e xperiments. In each of the Enc/Dec columns, the first number indicates the number of layers and the second number shows the number of cells per layer . The cell type in all the models is Long Short T erm Memory (LSTM). The last column shows the total number of parameters (in million). Model Enc.1 Dec.1 Enc.2 Dec.2 #Params (UniDi) (BiDi) Direct 5 × 1400 2 × 1024 - - 97 M Joint 5 × 1400 2 × 1024 - - 97 M Multitask 5 × 1400 2 × 512 - 2 × 512 86 M Multistage 5 × 700 2 × 512 5 × 700 2 × 512 84 M T able 3 summarizes the architecture of the various models used in our experiments. W e maintain a similar number of parameters (within 15% difference) across models to allow for a fair com- parison. All encoder and decoders use Long Short T erm Memory (LSTM) [29] cells. The first encoder in all models is unidirectional, while the second encoder (in multistage models) uses bidirectional LSTMs [30]. Prior work [5] has shown that using bidirectional cells for encoding a transcript for the task of classifying its domain and intent achieves better performance compared to the unidirectional version. The first layer in all decoders is an embedding layer of size 128 . The second encoder in the multistage model, which tak es the transcript as input, also uses an embedding layer of the same size. All decoders use 4 -headed additiv e attention [31, 17, 22]. T able 4 : Domain and intent F 1 scor es, and ar gument WER for the pr edicted semantics. Model Domain F1 Intent F1 Arg WER Baseline 96 . 6 95 . 1 15 . 04 Direct 96 . 2 94 . 2 18 . 22 Joint 96 . 8 95 . 7 14 . 93 Multitask 96 . 7 95 . 8 15 . 02 Multistage (ArgMax) 96 . 5 95 . 4 14 . 84 Multistage (SampledSoftmax) 96 . 5 95 . 2 12 . 29 Our Baseline is the multistage model in which the two stages that do ASR and NLU are trained independently , b ut using the same training data. W e consider 2 variants of the multistage model that weakly couples the 2 stages. Multista ge (Ar gMax) passes the argmax of the softmax layer of the first stage decoder , which predicts tran- scripts, to the second stage. Multistage (SampledSoftmax) , on the other hand, passes on an unbiased sample from multinomial distri- bution represented by the output of the softmax layer [32]. All neural networks are trained from scratch with the cross- entropy criterion in the T ensorFlow framework [33]. W e use beam search during inference with a beam size of 8 . The models are trained using T ensor Processing Units [34] using the Adam opti- mizer [35] and synchronous gradient descent. 3.4. Evaluation Metrics W e use the typical ASR and NLU metrics for evaluation. For mod- els that generate the transcript, we measure and report word error rate (WER). For semantics, we measure multi-class F 1 scores [36] for domain and intent. NLU systems that use in-out-begin (IOB) format for tagging arguments (see [5] for an example of IOB for- mat) report F 1 scores for argument tags (e.g., [36] in the case of named-entities), but it is not clear how to measure this metric when the input transcript and the output arguments do not match, or when the input is audio. For example, if ground truth semantics con- tains “ < D A TETIME > fiv e p.m. ” but the hypothesized semantics is “ < D A TETIME > high p.m. ”, it would be useful to ha ve an error met- ric that captures the misrecognition of “five” to “high”. For that reason, we choose to report WER for the arguments, instead of the F 1 scores. In our computation, we count over triggers and misses tow ards 100% WER. For example, if the ground truth semantics contains a D A TETIME ar gument, b ut the recognized semantics does not, that instance has a 100% WER for DA TETIME. W e compute per argument WER and report the weighted av erage where each ar- gument’ s WER is weighted according to its number of occurrences. 4. RESUL TS T able 4 compares domain, intent, and argument prediction perfor- mance of the models presented in the pre vious section. As can be seen, all models perform relatively similarly when it comes to clas- sifying the domains. The J oint model works the best, with an F 1 score of 96 . 8 %. Dir ect model, which has the lowest F 1 score, is only worse by 0.6% absolute. Performance on intent prediction is slightly worse, on average, compared to domain prediction. The Multitask and J oint models achie ve the best F 1 scores of 95 . 8 % and 95 . 7 %, respectiv ely . Both these models use the encoded acous- tic features as input to the decoder, and unlike the Direct model, also predict the transcripts. This shows that having access to acous- tic features and ha ving an intermediate text representation are both important when predicting intent. Comparing the Baseline model with the multistage models that weakly couple the 2 stages, Multistage (ArgMax) and Multistage (SampledSoftmax) , we can see that the y all work very similarly when it comes to domain and intent prediction, and are generally worse than Joint and Multitask models. This further shows the importance of complimenting transcripts with acoustic features when predicting intent. The dif ferences in argument WER is more pronounced among the different models. Dir ect model performs the worst, getting a WER of 18 . 2 . This shows that including transcription loss while training end-to-end models can help improv e argument prediction. Contrary to domain and intent F 1 scores, Multistage (Ar gMax) and Multistag e (SampledSoftmax) , work better than the Joint and Multitask models. Nev ertheless, all jointly optimized models w ork better than the independently trained baseline. Notably , Multista ge (SampledSoftmax) model improves upon the baseline multistage model by 18 % relativ e. Since the domain, intent and argument labeling for training and test data was obtained using CFG-parsers, we did a second exper - iment that used the predicted transcript from the various models, pipelined with the same CFG-parsers. The CFG-parsers are used to deriv e domain, intent, and arguments from the predicted transcript. Results are sho wn in T able 5. The table also shows the o verall WER obtained by the various models. Compared to the results in T able 4, we can see that domain F 1 scores are similar , but intent F 1 scores are better . Interestingly , the argument WER significantly improved. For example, for the Baseline model, WER improved from 15 . 0 to 11 . 9 . While this is not entirely surprising, since this strategy of pre- dicting semantics matches what is used for generating ground truth labels for training data, it is interesting to see that models that are optimized jointly still w ork better in terms of intent F 1 scores and argument WER. For example, the Multitask model gets an intent F 1 score of 97 . 2 , which is better than the baseline by 1 . 3 points. Sim- ilarly , Multistage (SampledSoftmax) and Joint models get an argu- ment WER of 11 . 3 , which is 0 . 6 % absolute better than the baseline. The results show that joint training can also help impro ve perfor- mance of the ASR component of the model when using the original CFG-parser for intent prediction. 5. CONCLUDING REMARKS In this work, we have proposed and e valuated multiple end-to-end approaches to SLU that optimize the ASR and NLU components of the system jointly . W e show that joint optimization results in better performance not just when we do end-to-end domain, intent, and ar - gument prediction, but also when using the transcripts generated by a jointly trained end-to-end model and a con ventional CFG-parsers for NLU. Our results highlight se veral important aspects of joint op- timization. W e show that having an intermediate text representation T able 5 : T ranscription WER, domain and intent F 1 scores, and argument WER when NLU is performed on the model’ s top reco gnized transcript using the CFG-parser that was used for g enerating truth semantic labels during training . Model WER Domain F1 Intent F1 Arg WER Baseline 5 . 9 96 . 4 95 . 9 11 . 89 Direct - - - - Joint 5 . 5 96 . 5 96 . 5 11 . 28 Multitask 5 . 7 95 . 4 97 . 2 11 . 71 Multistage (ArgMax) 5 . 9 96 . 5 96 . 3 12 . 59 Multistage (SampledSoftmax) 6 . 0 96 . 5 96 . 5 11 . 26 is important when learning SLU systems end-to-end. As expected, our results also show that joint optimization helps the model focus on errors that matter more for SLU as evidenced by the lo wer argument WERs obtained by models that couple ASR and NLU. It was also observed that direct prediction of semantics from audio by ignoring the ground truth transcript, does not perform as well. There are several interesting avenues to impro ve performance going forward. As noted before, the amount of training data that is available to train ASR is usually several times larger than what is av ailable to train NLU systems. It would be interesting to un- derstand how a jointly optimized model can make use of ASR data to improve performance. For optimization, the current work uses the cross-entropy loss. Future work will consider more task specific losses, like MBR, that optimizes intent and argument prediction ac- curacy directly . It is also important to understand how to incorporate new grammars with limited training data into an end-to-end system. The CFG-parsing based approach that decouples itself from ASR can easily incorporate additional grammars. But end-to-end opti- mization relies on data to learn ne w grammars, making the introduc- tion of new domains more challenging. Framing spoken language understanding as a sequence to se- quence problem that is optimized end-to-end significantly simplifies the overall complexity . It is also easy to scale such models to more complex tasks, e.g., tasks that inv olve multiple intents within a sin- gle user input, or tasks for which it is not easy to create a CFG-based parser . The ability to run inference without the need of additional re- sources like a lexicon, language models and parsers also make them ideal for deploying on devices with limited compute and memory footprint. 6. A CKNO WLEDGEMENTS The authors would like to thank Edgar Gonz ` alez Pellicer , Alex K ouzemtchenko, Ben Swanson, Ashish V enugopal, Kai Zhao in help in obtaining labels used as truth for semantics, and Kanishka Rao for discussions around the joint model. W e thank Khe Chai Sim and Amarnag Subramanya for helpful feedback on earlier drafts of the paper . 7. REFERENCES [1] Ilya Sutske ver , Oriol V inyals, and Quoc V Le, “Sequence to sequence learning with neural networks, ” in Advances in neu- ral information pr ocessing systems , 2014, pp. 3104–3112. [2] Gokhan T ur and Renato De Mori, Spoken language under- standing: Systems for extracting semantic information fr om speech , John W iley & Sons, 2011. [3] Bo Li, T ara Sainath, Arun Narayanan, Joe Caroselli, Michiel Bacchiani, Ananya Misra, Izhak Shafran, Hasim Sak, Golan Pundak, Kean Chin, et al., “ Acoustic modeling for google home, ” INTERSPEECH-2017 , pp. 399–403, 2017. [4] Y oung-Bum Kim, Sungjin Lee, and Karl Stratos, “Onenet: Joint domain, intent, slot prediction for spoken language under- standing, ” in Automatic Speech Recognition and Under stand- ing W orkshop (ASR U), 2017 IEEE . IEEE, 2017, pp. 547–553. [5] Dilek Hakkani-T ¨ ur , G ¨ okhan T ¨ ur , Asli Celikyilmaz, Y un-Nung Chen, Jianfeng Gao, Li Deng, and Y e-Y i W ang, “Multi-domain joint semantic frame parsing using bi-directional rnn-lstm., ” in Interspeech , 2016, pp. 715–719. [6] Andreas Stolcke and Jasha Droppo, “Comparing human and machine errors in conv ersational speech transcription, ” in Pr oc. INTERSPEECH , 2017, pp. 137–141. [7] George Saon, Gakuto Kurata, T om Sercu, Kartik Audhkhasi, Samuel Thomas, Dimitrios Dimitriadis, Xiaodong Cui, Bhu- vana Ramabhadran, Michael Picheny , L ynn-Li Lim, Bergul Roomi, and Phil Hall, “English conv ersational telephone speech recognition by humans and machines, ” in Proc. IN- TERSPEECH , 2017, pp. 132–136. [8] “Google duplex: An AI system for accom- plishing real-world tasks ov er the phone, ” “https://ai.googleblog.com/2018/05/duplex-ai-system-for - natural-con versation.html, ” Accessed: 2018-06-29. [9] Dmitriy Serdyuk, Y ongqiang W ang, Christian Fuegen, Anuj Kumar , Baiyang Liu, and Y oshua Bengio, “T ow ards end- to-end spoken language understanding, ” arXiv pr eprint arXiv:1802.08395 , 2018. [10] Fabrizio Morbini, Kartik Audhkhasi, Ron Artstein, Maarten V an Segbroeck, Kenji Sagae, Panayiotis Georgiou, David R T raum, and Shri Narayanan, “ A reranking approach for recog- nition and classification of speech input in conv ersational di- alogue systems, ” in Spoken Language T echnology W orkshop (SLT), 2012 IEEE . IEEE, 2012, pp. 49–54. [11] Dilek Hakkani-T ¨ ur , Fr ´ ed ´ eric B ´ echet, Giuseppe Riccardi, and Gokhan T ur, “Beyond asr 1-best: Using w ord confusion net- works in spoken language understanding, ” Computer Speech & Language , v ol. 20, no. 4, pp. 495–514, 2006. [12] Faisal Ladhak, Ankur Gandhe, Markus Dre yer , Lambert Math- ias, Ariya Rastrow , and Bj ¨ orn Hoffmeister , “Latticernn: Recur- rent neural networks over lattices., ” in INTERSPEECH , 2016, pp. 695–699. [13] Raphael Schumann and Pongtep Angkititrakul, “Incorporating asr errors with attention-based, jointly trained rnn for intent detection and slot filling, ” in Acoustics, Speech and Signal Pr ocessing (ICASSP), 2018 IEEE International Conference on . IEEE, 2018. [14] Y uan-Ping Chen, Ryan Price, and Srini vas Bang alore, “Spo- ken language understanding without speech recognition, ” in Acoustics, Speech and Signal Processing (ICASSP), 2018 IEEE International Confer ence on . IEEE, 2018. [15] Alex Grav es, Santiago Fern ´ andez, Faustino Gomez, and J ¨ urgen Schmidhuber , “Connectionist temporal classification: la- belling unse gmented sequence data with recurrent neural net- works, ” in Pr oceedings of the 23r d international conference on Machine learning . A CM, 2006, pp. 369–376. [16] Kyunghyun Cho, Bart v an Merri ¨ enboer , C ¸ alar G ¨ ulc ¸ ehre, Dzmitry Bahdanau, Fethi Bougares, Holger Schwenk, and Y oshua Bengio, “Learning phrase representations using rnn encoder–decoder for statistical machine translation, ” in Pro- ceedings of the 2014 Confer ence on Empirical Methods in Nat- ural Language Processing (EMNLP) , Doha, Qatar , Oct. 2014, pp. 1724–1734, Association for Computational Linguistics. [17] Dzmitry Bahdanau, Kyunghyun Cho, and Y oshua Bengio, “Neural machine translation by jointly learning to align and translate, ” arXiv preprint , 2014. [18] Y onghui W u, Mik e Schuster , Zhifeng Chen, Quoc V Le, Mo- hammad Norouzi, W olfgang Macherey , Maxim Krikun, Y uan Cao, Qin Gao, Klaus Macherey , et al., “Google’ s neural ma- chine translation system: Bridging the gap between human and machine translation, ” arXiv preprint , 2016. [19] C.C. Chiu, T .N. Sainath, Y . W u, R. Prabha valkar , P . Nguyen, Z. Chen, A. Kannan, R.J. W eiss, K. Rao, K. Gonina, and N. Jaitly , “State-of-the-art speech recognition with sequence- to-sequence models, ” in Acoustics, Speec h and Signal Process- ing (ICASSP), 2017 IEEE International Confer ence on . IEEE, 2017. [20] William Chan, Navdeep Jaitly , Quoc Le, and Oriol V in yals, “Listen, attend and spell: A neural network for large vocabu- lary conv ersational speech recognition, ” in Acoustics, Speec h and Signal Pr ocessing (ICASSP), 2016 IEEE International Confer ence on . IEEE, 2016, pp. 4960–4964. [21] Bing Liu and Ian Lane, “ Attention-based recurrent neural net- work models for joint intent detection and slot filling, ” in Pr oc. Interspeech , 2016, pp. 685–689. [22] Ashish V aswani, Noam Shazeer , Niki Parmar, Jakob Uszko- reit, Llion Jones, Aidan N Gomez, Łukasz Kaiser , and Illia Polosukhin, “ Attention is all you need, ” in Advances in Neural Information Pr ocessing Systems , 2017, pp. 5998–6008. [23] Chung-Cheng Chiu, T ara Sainath, Y onghui W u, Rohit Prab- hav alkar , Patrick Nguyen, Zhifeng Chen, Anjuli Kannan, Ron J. W eiss, Kanishka Rao, Katya Gonina, Navdeep Jaitly , Bo Li, Jan Chorowski, and Michiel Bacchiani, “State-of- the-art speech recognition with sequence-to-sequence models, ” 2018. [24] Rich Caruana, “Multitask learning, ” Machine learning , vol. 28, no. 1, pp. 41–75, 1997. [25] Minh-Thang Luong, Quoc V Le, Ilya Sutskever , Oriol V inyals, and Lukasz Kaiser, “Multi-task sequence to sequence learn- ing, ” arXiv preprint , 2015. [26] Matt Shannon, “Optimizing expected word error rate via sam- pling for speech recognition, ” 2017. [27] Rohit Prabhav alkar , T ara N Sainath, Y onghui Wu, Patrick Nguyen, Zhifeng Chen, Chung-Cheng Chiu, and An- juli Kannan, “Minimum word error rate training for attention-based sequence-to-sequence models, ” arXiv preprint arXiv:1712.01818 , 2017. [28] Hasim Sak, Andre w W . Senior, Kanishka Rao, and Franoise Beaufays, “Fast and accurate recurrent neural network acoustic models for speech recognition, ” in Proceedings of Interspeech , 2015. [29] J ¨ urgen Schmidhuber and Sepp Hochreiter , “Long short-term memory , ” Neural Comput , vol. 9, no. 8, pp. 1735–1780, 1997. [30] Mike Schuster and Kuldip K Paliwal, “Bidirectional recurrent neural networks, ” IEEE T ransactions on Signal Processing , vol. 45, no. 11, pp. 2673–2681, 1997. [31] Chung-Cheng Chiu, T ara N Sainath, Y onghui W u, Rohit Prab- hav alkar , Patrick Nguyen, Zhifeng Chen, Anjuli Kannan, Ron J W eiss, Kanishka Rao, Katya Gonina, et al., “State-of-the-art speech recognition with sequence-to-sequence models, ” arXiv pr eprint arXiv:1712.01769 , 2017. [32] Samy Bengio, Oriol V inyals, Navdeep Jaitly , and Noam Shazeer , “Scheduled sampling for sequence prediction with recurrent neural networks, ” in Advances in Neural Information Pr ocessing Systems , 2015, pp. 1171–1179. [33] Mart ´ ın Abadi, Paul Barham, Jianmin Chen, Zhifeng Chen, Andy Davis, Jeffrey Dean, Matthieu De vin, Sanjay Ghemaw at, Geoffre y Irving, Michael Isard, et al., “T ensorflow: a system for large-scale machine learning., ” in OSDI , 2016, vol. 16, pp. 265–283. [34] Norman P Jouppi, Cliff Y oung, Nishant Patil, David Patter- son, Gaurav Agrawal, Raminder Bajwa, Sarah Bates, Suresh Bhatia, Nan Boden, Al Borchers, et al., “In-datacenter perfor- mance analysis of a tensor processing unit, ” in Computer Ar - chitectur e (ISCA), 2017 A CM/IEEE 44th Annual International Symposium on . IEEE, 2017, pp. 1–12. [35] Diederik P Kingma and Jimmy Ba, “ Adam: A method for stochastic optimization, ” arXiv preprint , 2014. [36] Erik F . Tjong Kim Sang and Fien De Meulder , “Introduction to the conll-2003 shared task: Language-independent named entity recognition, ” in Pr oceedings of the Seventh Conference on Natural Language Learning at HL T -NAA CL 2003 - V olume 4 , Stroudsburg, P A, USA, 2003, CONLL ’03, pp. 142–147, Association for Computational Linguistics.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment