Learning Similarity Metrics for Numerical Simulations

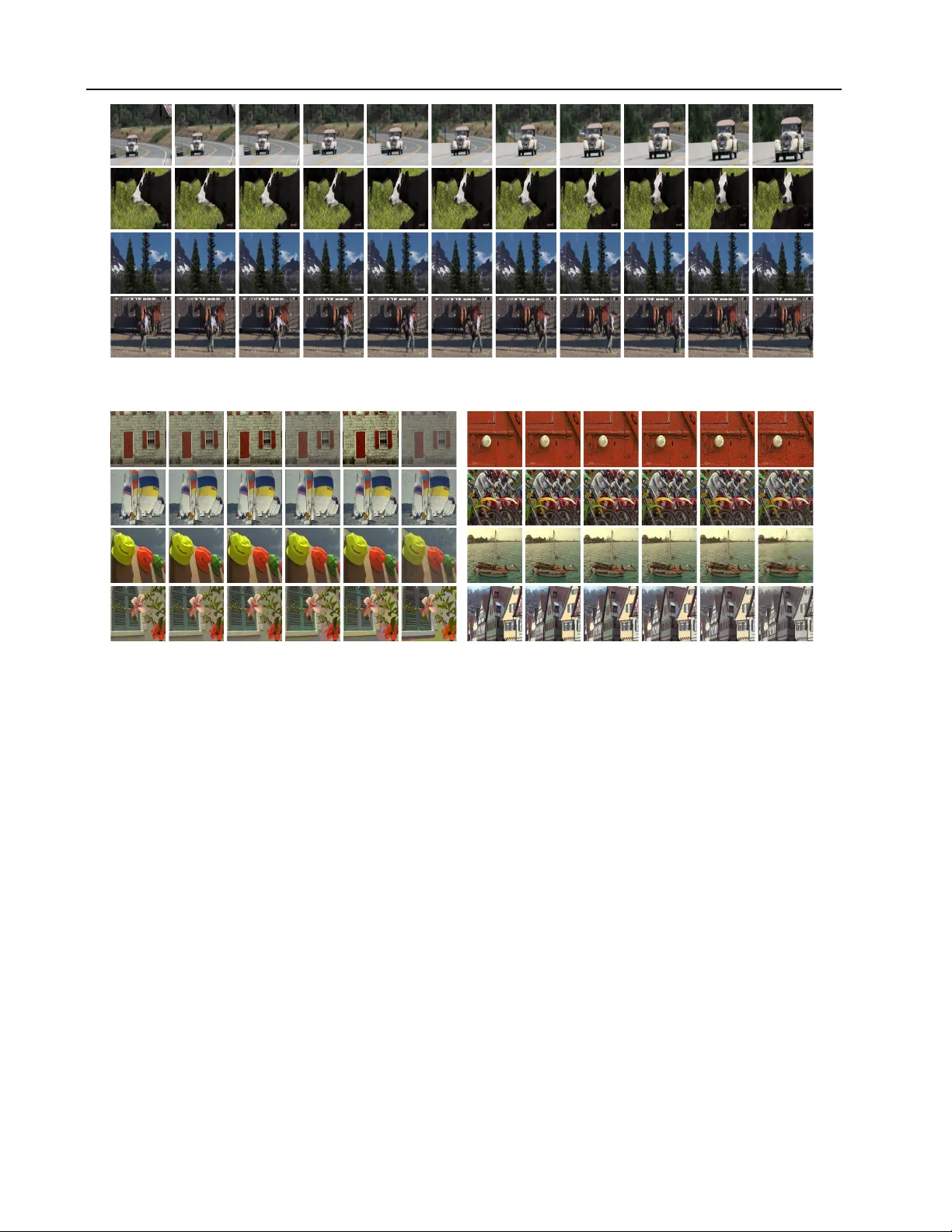

We propose a neural-network-based approach that computes a stable and generalizing metric (LSiM) to compare data from a variety of numerical simulation sources. We focus on scalar, time-dependent 2D data that commonly arises from motion and transport-…

Authors: Georg Kohl, Kiwon Um, Nils Thuerey