PLLay: Efficient Topological Layer based on Persistence Landscapes

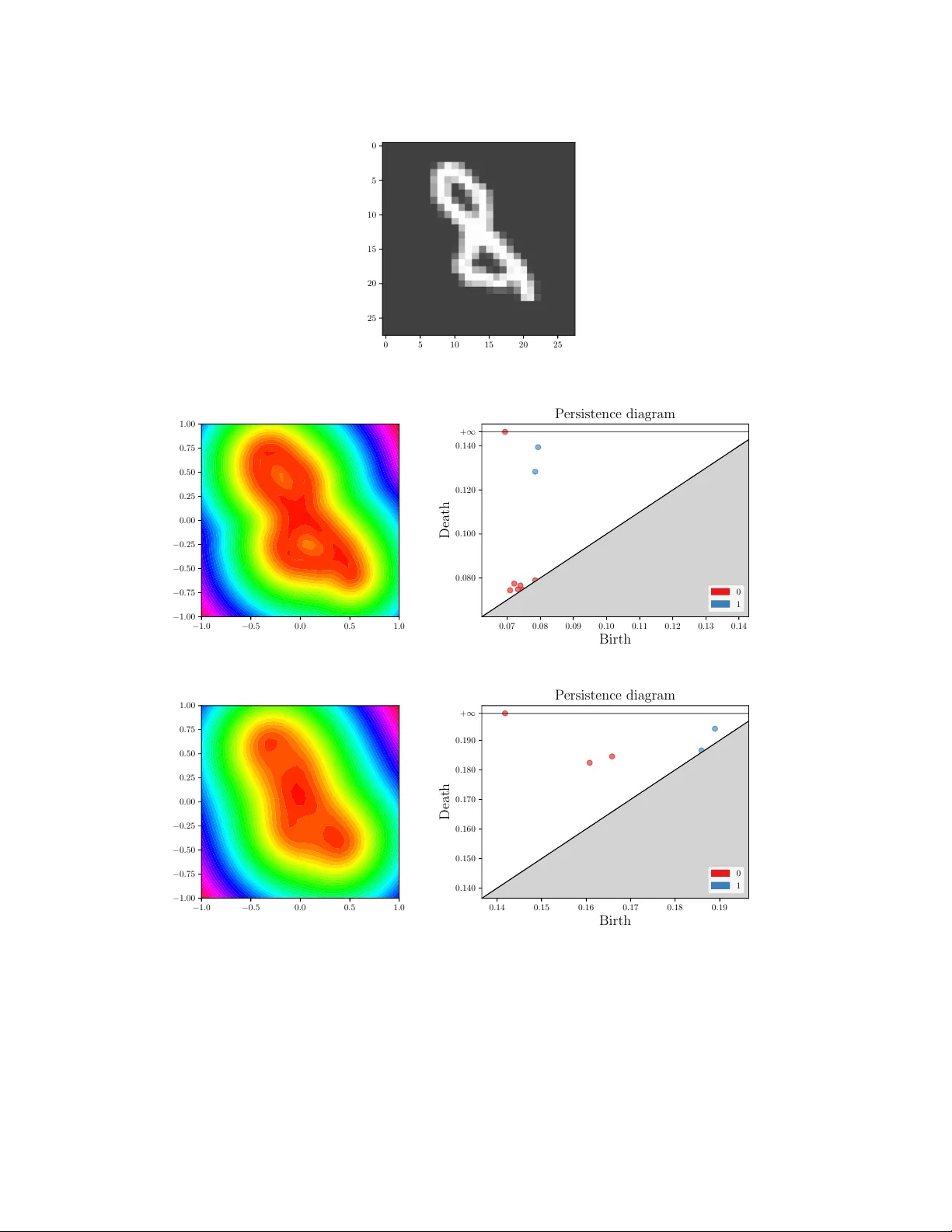

We propose PLLay, a novel topological layer for general deep learning models. It is based on persistence landscapes and efficiently exploits the underlying topological features of the input data structure. In this work, we show differentiability…
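For readers unfamiliar with persistence landscapes, the following is a minimal NumPy sketch of how a landscape can be evaluated on a grid from a persistence diagram. It is an illustration of the standard definition only, not the PLLay layer or the authors' code; the function name `persistence_landscape` and its parameters `diagram`, `grid`, and `k_max` are hypothetical. Each (birth, death) pair contributes a "tent" function, and the k-th landscape at a point is the k-th largest tent value there.

```python
import numpy as np


def persistence_landscape(diagram, grid, k_max):
    """Evaluate the first k_max persistence landscape functions on a grid.

    diagram: iterable of (birth, death) pairs (hypothetical input format).
    grid:    1-D array of evaluation points t.
    Returns an array of shape (k_max, len(grid)).
    """
    diagram = np.asarray(diagram, dtype=float).reshape(-1, 2)
    grid = np.asarray(grid, dtype=float)

    births = diagram[:, 0][:, None]  # shape (n, 1)
    deaths = diagram[:, 1][:, None]  # shape (n, 1)

    # Tent function of each point: max(0, min(t - birth, death - t)).
    tents = np.maximum(
        0.0, np.minimum(grid[None, :] - births, deaths - grid[None, :])
    )  # shape (n, m)

    # Sort tent values in descending order at every grid point.
    tents_sorted = -np.sort(-tents, axis=0)

    # The k-th landscape is the k-th largest tent value; pad with zeros
    # when the diagram has fewer than k_max points.
    landscape = np.zeros((k_max, grid.size))
    n_rows = min(k_max, tents_sorted.shape[0])
    landscape[:n_rows] = tents_sorted[:n_rows]
    return landscape


# Example: two nested intervals, evaluated at integer grid points.
example = persistence_landscape([(0.0, 4.0), (1.0, 3.0)], np.arange(5.0), k_max=3)
```

The landscape is built from `max`, `min`, and sorting, all of which are piecewise linear in the birth and death values, which is one informal way to see why differentiability with respect to the diagram is a natural question for a layer of this kind.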

Authors: Kwangho Kim, Jisu Kim, Manzil Zaheer