Programmable Spectrometry -- Per-pixel Classification of Materials using Learned Spectral Filters

Many materials have distinct spectral profiles. This facilitates estimation of the material composition of a scene at each pixel by first acquiring its hyperspectral image, and subsequently filtering it using a bank of spectral profiles. This process…

Authors: Vishwanath Saragadam, Aswin C. Sankaranarayanan

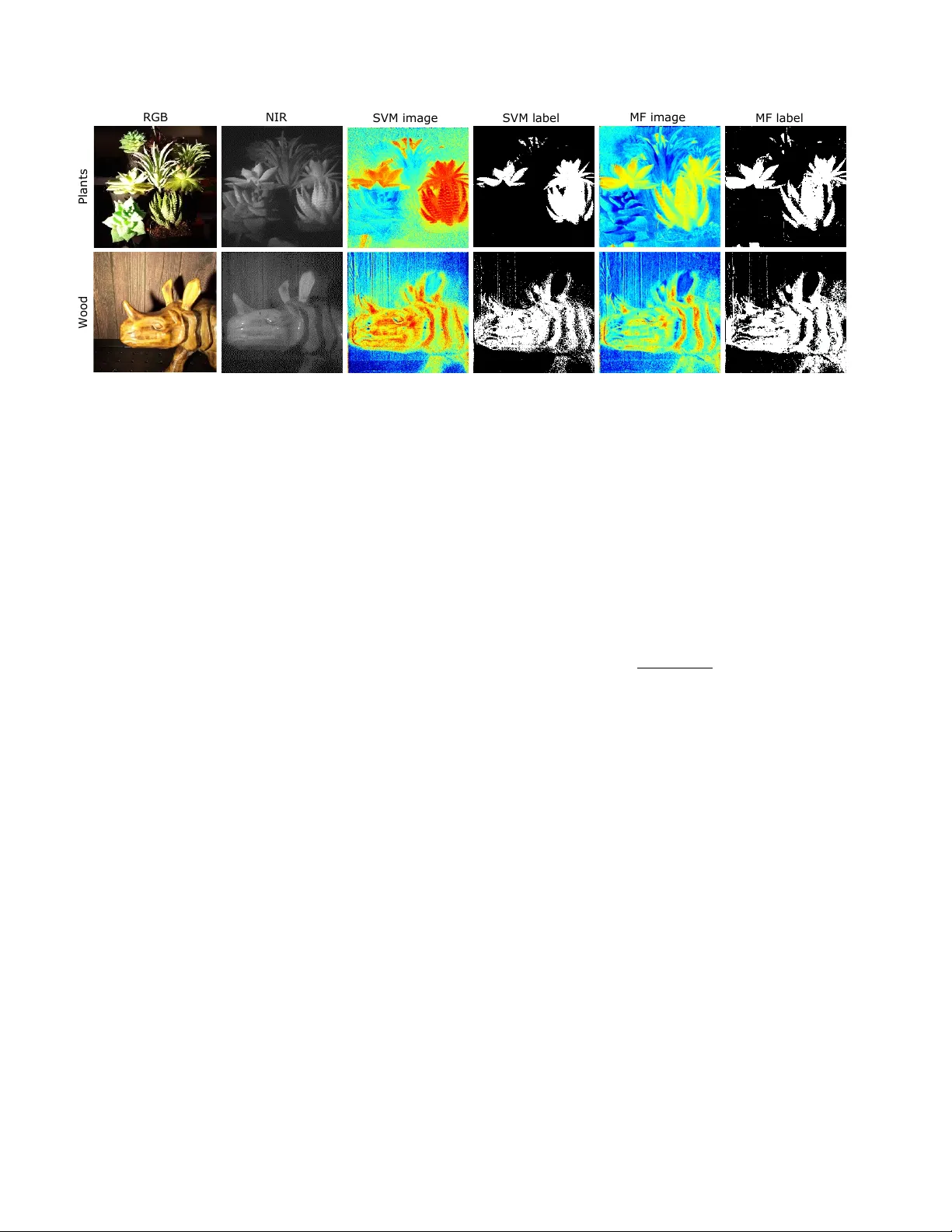

Department of ECE, Carnegie Mellon University, USA (vishwanathsrv@cmu.edu)

Abstract

Many materials have distinct spectral profiles. This makes it possible to estimate the material composition of a scene at each pixel by first acquiring its hyperspectral image and then filtering it with a bank of spectral profiles. This process is inherently wasteful, since only a set of linear projections of the acquired measurements contributes to the classification task. We propose a novel programmable camera that can produce images of a scene with an arbitrary spectral filter. We use this camera to optically implement the spectral filtering of the scene's hyperspectral image with the bank of spectral profiles needed for per-pixel material classification. This provides gains in acquisition speed, since only the relevant measurements are acquired, and in signal-to-noise ratio, since we invariably avoid narrowband filters, which are light-inefficient. Given training data, we use a range of classical and modern techniques, including SVMs and neural networks, to identify the bank of spectral profiles that facilitates material classification. We verify the method in simulations on standard datasets as well as on real data captured with a lab prototype of the camera.

1. Introduction

The material composition of a scene can often be identified by analyzing how light intensity varies as a function of wavelength.
Since materials tend to have unique spectral profiles, spectrum-based material classification has found widespread use in numerous scientific disciplines, including molecular identification using Raman spectroscopy [5], tagging of key cellular components in fluorescence microscopy [17], land-cover and weather monitoring [4, 11], and even the study of the chemical composition of stars and other astronomical objects using line spectroscopy. It is not a stretch to say that spectroscopy, or its imaging variant, hyperspectral imaging (HSI), is an important scientific tool for material identification.

[Figure 1 panels: Frame 1, Frame 40, Frame 75; rows: Label, RGB, Optical SVM]

Figure 1: Video-rate material classifier. We propose an optical setup that can classify materials on a per-pixel basis. This is achieved by building a programmable spectral filter that images at high spatial resolution. The images show a video sequence in which real plants are distinguished from plastic plants. We captured the data at 4 fps and performed only per-pixel thresholding to obtain the video result.

While hyperspectral imaging has also found application in computer vision tasks [14, 25, 31], its widespread adoption has been hindered by inherent challenges in acquiring HSIs. Capturing an HSI requires sampling a very high-dimensional signal, for example, megapixel images at hundreds of spectral bands, a process that is daunting to perform at video rate. The problem is aggravated by the low signal-to-noise ratio of hyperspectral measurements: a fixed amount of light is divided among many spectral bands, which leads to long exposure times that can span several minutes per HSI. This paper proposes a novel approach to spectrometry-based per-pixel material classification that overcomes the limitations posed by HSI acquisition.
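The light-budget argument above can be made concrete with a back-of-the-envelope SNR model. This is a sketch under stated assumptions, not a model of the prototype: an idealized sensor with Poisson shot noise plus Gaussian read noise, a flat spectrum split evenly over the scanned bands, and a fixed total acquisition time. It compares obtaining a broadband projection from one broadband image against scanning narrow bands in the same time and summing them; the photon count, read noise and band count are illustrative values.

```python
import numpy as np

def snr_broadband(photons, read_noise):
    """One broadband image: all `photons` are collected in a single exposure."""
    return photons / np.sqrt(photons + read_noise**2)

def snr_narrowband_scan(photons, read_noise, num_bands):
    """Scan num_bands narrow bands in the same total time, then sum the images.

    Each image gets 1/num_bands of the time and 1/num_bands of the spectrum, so it
    records photons / num_bands**2; the sum holds photons / num_bands, and the
    read noise is paid once per image.
    """
    signal = photons / num_bands
    return signal / np.sqrt(signal + num_bands * read_noise**2)

photons = 10_000.0   # photons a broadband image collects in the time budget (assumed)
read_noise = 5.0     # read noise in electrons per image (assumed)
bands = 100          # number of narrow bands scanned (assumed)

print(f"broadband image: SNR ~ {snr_broadband(photons, read_noise):.1f}")
print(f"narrowband scan: SNR ~ {snr_narrowband_scan(photons, read_noise, bands):.1f}")
```

Even with zero read noise, the scan in this model loses a factor of sqrt(B) in SNR, because only 1/B of the photons reach the sensor in the same time budget; read noise paid on every narrowband frame widens the gap further.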
To understand our approach, we first need to look more closely at the classification process itself. Classification techniques compare the spectral profile at each pixel with known or learned spectra by taking a linear projection. Intuitively, given K material classes, we would compute O(K) such linear projections. For example, a support vector machine (SVM) classifies a feature by its signed distance from the separating hyperplane; in spectral classification, this amounts to spectrally filtering the scene with the hyperplane coefficients. Hence, spectral classification becomes practical if we can capture the linear projections directly, without acquiring the complete HSI. Such an operation corresponds to optically filtering the scene's HSI with known spectral filters, which is possible if the camera's spectral response can be arbitrarily programmed.

To enable per-pixel material classification, we propose a new imaging architecture with a programmable spectral response that can be changed on the fly at video rate. Given a training dataset of spectral profiles, we use off-the-shelf classification techniques such as SVMs and deep neural networks to identify linear projections that facilitate material classification. For a novel scene, the camera captures multiple images, each with a different spectral response, and the captured measurements are fed to the classifier to perform per-pixel material classification.

The proposed pipeline has numerous benefits. Computing the linear projections optically lets us avoid sampling the full HSI. This has the dual benefit of reducing the acquisition time (from minutes to hundreds of milliseconds) and increasing the light efficiency of each captured image, since the linear projections often correspond to broadband spectral profiles.
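To make the "SVM as a spectral filter" idea concrete, the sketch below trains a linear SVM on synthetic spectra and then applies the learned hyperplane to a synthetic HSI cube as a single filtering operation. It assumes labels in {-1, +1} and solves the hinge-loss objective that Section 4.1 writes as (8) with plain full-batch subgradient descent. That solver is a simple stand-in, not the Scikit-Learn solver used in the experiments. The class spectra, band count and step size are invented for illustration.

```python
import numpy as np

def train_linear_svm(X, y, lam=1e-3, lr=0.1, epochs=500):
    """Full-batch subgradient descent on the hinge-loss objective of (8).

    X: (N, B) training spectra; y: (N,) labels in {-1, +1}.
    Returns the hyperplane w (a spectral filter of length B) and intercept c.
    """
    N, B = X.shape
    w, c = np.zeros(B), 0.0
    for _ in range(epochs):
        margins = y * (X @ w + c)
        active = margins < 1                      # samples with non-zero hinge loss
        grad_w = -(y[active, None] * X[active]).sum(axis=0) / N + 2 * lam * w
        grad_c = -y[active].sum() / N
        w -= lr * grad_w
        c -= lr * grad_c
    return w, c

rng = np.random.default_rng(0)
B = 64                                            # number of spectral bands (assumed)
bands = np.linspace(0, 1, B)
class_pos = 1.0 + 0.5 * bands                     # hypothetical mean spectrum, class +1
class_neg = 1.5 - 0.5 * bands                     # hypothetical mean spectrum, class -1

X = np.vstack([class_pos + 0.05 * rng.standard_normal((200, B)),
               class_neg + 0.05 * rng.standard_normal((200, B))])
y = np.concatenate([np.ones(200), -np.ones(200)])
w, c = train_linear_svm(X, y)

# Synthetic 8 x 8 x B cube: left half is class +1, right half is class -1.
H = np.empty((8, 8, B))
H[:, :4] = class_pos
H[:, 4:] = class_neg

# "Optical" route: one filtered image of the cube with filter w plus offset c.
score_map = np.tensordot(H, w, axes=([2], [0])) + c
labels = np.where(score_map > 0, 1, -1)
```

The whole per-pixel SVM reduces to one weighted sum over wavelength, which is exactly what a camera with a programmable spectral response can record in a single image. With K classes in a one-vs-all setup, this step is repeated per class, so the camera records K filtered images instead of B narrowband ones.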
For binary classification problems, our lab prototype produces a classification result every second frame, which yields material labels at 4 frames per second. We also show results on multi-class labeling problems using a classifier that can differentiate between five distinct material types.

2. Prior Work

We discuss prior work on material classification using HSIs, as well as on optical computing and the design of programmable spectral filters.

Hyperspectral classification. Consider the HSI of a scene, H(x, y, λ), where each pixel (x, y) is assumed to belong to one of K material classes. Specifically, the spectrum at each pixel can be written as

$$H(x, y, \lambda) = \alpha(x, y)\, S_{L(x,y)}(\lambda), \tag{1}$$

where L(x, y) is the label of the material contributing to the spectrum at (x, y), and α(x, y) is a scaling parameter. Note that this model assumes all pixels are pure, i.e., every pixel receives a contribution from only one material. We use this model for ease of exposition and discuss later how to relax it to handle mixed pixels. The goal of classification is to estimate the label at each pixel, L(x, y), which together form a label map.

There are broadly two approaches to spectral classification: generative and discriminative. Generative techniques decompose the HSI as a linear combination of basic materials, called end-members [6]. Specifically, the HSI of the scene is decomposed as

$$H(x, y, \lambda) = \sum_{k=1}^{K} s_k(\lambda)\, a_k(x, y), \tag{2}$$

where s_k(λ) is the spectrum of the k-th material and a_k(x, y) is the relative contribution (abundance) of material k at (x, y). The abundances at each pixel, together with the end-member spectra, provide a feature vector that can be used to spatially cluster the materials and subsequently identify them.

Discriminative techniques learn discerning features directly from the HSI, without the intermediate stage of a low-dimensional decomposition.
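Before turning to discriminative filters, here is a minimal sketch of the generative decomposition in (2) at a single pixel. It estimates the abundances a_k by nonnegative least squares (SciPy's `nnls`) against known end-member spectra. NNLS is one common choice, not necessarily the method of [6]. The Gaussian end-members, the 100-band grid and the noise-free pixel are assumptions made for illustration.

```python
import numpy as np
from scipy.optimize import nnls

def unmix_pixel(endmembers: np.ndarray, spectrum: np.ndarray) -> np.ndarray:
    """Estimate nonnegative abundances a_k for one pixel under model (2).

    endmembers: (B, K) matrix whose k-th column is s_k sampled at B bands.
    spectrum:   (B,) spectrum H(x, y, .) measured at one pixel.
    """
    abundances, _residual_norm = nnls(endmembers, spectrum)
    return abundances

# Synthetic example: B = 100 bands over 600-900 nm and K = 3 smooth end-members (assumed).
wavelengths = np.linspace(600, 900, 100)
endmembers = np.stack(
    [np.exp(-((wavelengths - center) / 60.0) ** 2) for center in (650, 750, 850)],
    axis=1,
)
true_abundances = np.array([0.2, 0.0, 0.8])
pixel = endmembers @ true_abundances             # noise-free mixed pixel

estimate = unmix_pixel(endmembers, pixel)
print(np.round(estimate, 3))
```

This route needs the full spectrum at every pixel before any decomposition can happen, which is the acquisition cost the discriminative, optically computed features below are meant to avoid.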
In the discriminative setting, we identify a set of spectral filters and offsets, {(d_k(λ), β_k)}_{k=1}^{M}, that generate a per-pixel feature vector via spectral-domain filtering:

$$F_k(x, y) = \int_{\lambda} H(x, y, \lambda)\, d_k(\lambda)\, d\lambda + \beta_k. \tag{3}$$

Hence, each image F_k(x, y) is a spectrally filtered version of the HSI with an added offset. For SVMs, the learned spectral filters form separating hyperplanes; this has been a de facto approach to HSI classification [7, 22]. More sophisticated learning techniques based on neural networks use spectral features [13] or spatio-spectral features [30, 18, 10, 15, 3, 16, 21, 12] for classification. Invariably, the number of spectral features used, i.e., the dimensionality of the projection, is smaller than the number of spectral channels in the HSI. Hence, we seek to measure the features directly, by computing (3) optically. As is to be expected, such a paradigm of optical classification requires cameras that can be programmed with arbitrary spectral filters.

Optical computing. Instead of relying on both spatial and spectral information, we consider a simpler approach that relies only on the spectral profiles for classification. Such a strategy is less accurate than spatio-spectral methods [30, 18, 10, 15, 3, 16, 21, 12], but it significantly reduces the complexity of the imaging system. The approach is similar in spirit to using BRDFs to perform per-pixel classification by varying the incident illumination [19, 9], or to using the first layer of a neural network to capture light fields [2]. Such a setup offers a two-fold advantage:

1. Fewer measurements. Since the number of material classes is far smaller than the number of spectral bands, we need far fewer measurements. For example, our experiments show that 3 to 5 images suffice for a 5-class classification task.
2. Increased SNR. The discriminating filters tend to be spectrally broadband, so each image is measured at a higher light level than any individual narrow spectral band. Hence, the images can be captured at higher SNR or at faster acquisition rates.

Optical computing has found use in various computer vision tasks, such as capturing light transport matrices [24], low-rank approximation of hyperspectral images [29], and spectral classification using programmable light sources [8, 26]. We adopt the paradigm of optical computing to make discriminative filter measurements, by building a camera whose spectral response can be arbitrarily programmed.

Dynamic spectral filters. Spectral filtering can be achieved by modifying the response of the camera; canonical, static examples are the Bayer pattern and, more interestingly, fluorescence filters in microscopy. It is, however, more useful to have a camera whose response can be changed arbitrarily and quickly, and numerous techniques for this have been proposed. The agile spectral imager [23] codes the so-called "rainbow plane" to achieve arbitrary spectral filtering. This idea was developed further in [20], which placed a digital micromirror device (DMD) on the rainbow plane to achieve dynamic spectral filtering. However, such architectures have a debilitating problem: simple pupil codes such as an open aperture or a slit directly trade spatial resolution for spectral resolution. This was first identified in [29] in the context of hyperspectral imaging, which showed that a slit, a common choice for spectrometry, leads to a large spatial blur, while an open aperture, a common choice for high-resolution imaging, leads to a large spectral blur. Hence, such apertures cannot support spectral classification with high accuracy. We instead rely on the optical setup in [29] to overcome the spatial-spectral tradeoff.
The key idea of [29] is to use a coded aperture that introduces an invertible blur in both the spatial and the spectral domain. An important difference is that the setup in [29] was designed for HSI acquisition; this paper adapts the underlying ideas to perform material classification in the scene.

3. Programmable Spectral Filter

Our optical setup is a modification of the one proposed in [29]. We briefly explain the relevant parts here; the interested reader is referred to [29] and to the appendix for a detailed derivation.

[Figure 2 panels: (a) setup schematic (lens with coded aperture, diffraction grating, relay lenses of focal length f, rainbow plane, spatial plane; planes P1, P2, P3); (b) practical realization (objective lens, lenses L1 to L3, relay with coded aperture, diffraction grating, NIR bandpass filter, polarizing beam splitters (PBS), LCoS display for spectral coding, NIR camera)]

Figure 2: Schematic of the programmable spectral filter. The optical architecture in (a) consists of a lens assembly with a coded aperture, which introduces spatial and spectral blurs. By placing an SLM at P2, the HSI of the scene can be spectrally filtered and sensed by a camera sensor at P3. (b) shows a compact realization of the optical setup.

4f system for spectral programming. We borrow the optical schematic for spectral programming from [29], shown in Fig. 2(a). Given the HSI H(x, y, λ) focused on the grating at P1, we derive the intensity on planes P2 and P3. The intensity on the rainbow plane P2 is

$$I_4(x, y) = a^2(-x, -y) * \left[ S\!\left(\frac{x}{f\nu_0}\right) \tilde{c}\!\left(\frac{x}{f\nu_0}\right) \right], \tag{4}$$

where S(λ) = ∫∫ H(x, y, λ) dx dy is the spectrum of the scene, c̃(λ) is the response of the optical system, and ν_0 is the groove density of the grating in mm⁻¹. The intensity on the image plane P3 is

$$I_5(x, y) = \int_{\lambda} H(x, y, \lambda) * \left( \frac{1}{\lambda^2 f^2} \left| A\!\left(-\frac{x}{\lambda f}, -\frac{y}{\lambda f}\right) \right|^2 \right) d\lambda, \tag{5}$$

where A(u, v) is the 2D Fourier transform of a(x, y). The key observation from (4) and (5) is that the coded aperture in the lens assembly causes a spectral blur on the rainbow plane P2 given by a(x, y), and a spatial blur on the image plane P3 given by |A(−x/(λf), −y/(λf))|². As shown in Fig. 3, a slit causes a severe spatial blur, whereas an open aperture causes a large spectral blur. The solution is to introduce an invertible blur in both domains, which can be achieved with a coded aperture, shown in the last column. We use the same coded aperture as [29], since it is designed to promote invertibility in both domains.

[Figure 3 panels: 30 µm slit, open aperture, invertible code; rows: spectrum, space]

Figure 3: Spatio-spectral resolution tradeoff. A slit achieves high spectral resolution, whereas an open aperture achieves high spatial resolution, but neither is suitable for high-resolution spatio-spectral imaging. In contrast, a coded aperture introduces invertible spatial and spectral blurs, which can then be deconvolved. Figure reproduced with permission from [29].

Optical setup. Our optical setup is, in principle, similar to Fig. 2(a). We place a spatial light modulator on the rainbow plane (P2) and a sensor on the spatial plane (P3) to achieve spectral filtering. The optimized binary code [29] is placed in the lens assembly. Figure 2(b) shows a schematic of a practical implementation of the same optical setup. We use a Liquid Crystal on Silicon (LCoS) display as the spatial light modulator for spectral filtering.

Effect of coded aperture. Given the HSI of the scene, H(x, y, λ), the coded aperture introduces spatial and spectral blurs as follows:

$$\hat{H}(x, y, \lambda) = A\!\left(\frac{x}{\lambda f}, \frac{y}{\lambda f}\right) * H(x, y, \lambda) * a(\lambda \nu_0 f, y), \tag{6}$$

i.e., all operations are now performed on a modified version of the HSI of the scene.
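One consequence of (6) is worth making explicit. Because the spectral blur acts as a convolution along λ, filtering the blurred spectrum with a profile s gives the same number as filtering the sharp spectrum with s correlated against the blur kernel, since correlation is the adjoint of convolution. The sketch checks this discrete identity at a single pixel. The 3-tap kernel standing in for the λ-dependence of a(λν_0 f, y), the band count, and the use of "full"-mode convolution are assumptions.

```python
import numpy as np

rng = np.random.default_rng(0)

B = 100                                   # number of spectral samples (assumed)
kernel = np.array([0.2, 0.5, 0.3])        # hypothetical 1D spectral blur kernel

spectrum = rng.random(B)                  # sharp spectrum H(x0, y0, .) at one pixel
blurred = np.convolve(spectrum, kernel, mode="full")    # blurred spectrum, length B + 2

profile = rng.standard_normal(blurred.size)             # profile loaded on the SLM

# Left side: filter the blurred spectrum, as the camera does.
optical = blurred @ profile

# Right side: filter the sharp spectrum with the profile correlated against the kernel
# (the adjoint of "full" convolution is "valid" correlation).
effective_filter = np.correlate(profile, kernel, mode="valid")   # length B
digital = spectrum @ effective_filter
```

In other words, a filter displayed on the SLM acts on the sharp spectrum as a slightly smoothed filter, which is one way to read the remark that all operations act on a modified HSI.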
Given a spectral profile s_k(λ), the proposed setup directly computes the filtered image

$$\hat{f}_k(x, y) = \int_{\lambda} \hat{H}(x, y, \lambda)\, s_k(\lambda)\, c(\lambda)\, d\lambda \tag{7}$$

by loading s_k(λ) on the spatial light modulator. With the optical setup in place, we next show how to use the programmable spectral filter to perform optical classification.

4. Learning Discriminant Filters

With a camera that can capture images with arbitrary spectral profiles, we pursue two questions: first, how many filters are required to classify K classes, and second, which spectral filters maximize classification accuracy. Both questions are closely tied to the type of classifier under consideration. We detail the two classifier architectures explored in this paper, which help answer them. Any classifier that relies on linear projections can be used; for the sake of exposition, we only evaluate SVMs and neural networks.

[Figure 4 diagram: labeled training data; learning a classification network (fully connected layers, e.g., 256 × 128, 128 × 64, 64 × 32); learned filters; spectrally programmable camera; first layer computed optically in the testing phase; prediction]

Figure 4: Proposed optical classifier. The proposed optical classifier consists of two stages. In the first stage, we learn the weights of a neural network with the spectrum as input and the class label as output. Training outputs the set of discerning filters, marked "learned filters" in the image. In the testing stage, we filter the HSI of the scene with the learned filters, thereby replacing the first layer of the classifier with an optical implementation. The result is a high-accuracy, per-pixel classifier that requires far fewer measurements than the size of the HSI.

4.1. Support Vector Machine

SVMs provide a binary, linear classifier by learning a separating hyperplane on the training dataset. Given a set of data points {x_k, y_k}_{k=1}^{N}, where y_k ∈ {−1, +1} is the label of x_k, an SVM solves the following optimization problem:

$$\min_{\mathbf{w}, c} \; \frac{1}{N} \sum_{k=1}^{N} \max\!\left(0,\; 1 - y_k\left(\mathbf{w}^{\top}\mathbf{x}_k + c\right)\right) + \lambda \|\mathbf{w}\|^2, \tag{8}$$

where λ is a tuning parameter (not the wavelength). The solution consists of the vector w and the intercept c. In the context of optical classification, w is the filter that maximizes accuracy for a binary decision. For a K-class decision, we use a one-vs-all strategy, which requires K hyperplanes and hence K spectral filters.

Figure 5: Simulations on the Indian Pines dataset. We compare state-of-the-art classifiers against the classifiers proposed in this paper. "Reported" accuracies are those listed in the respective papers, while "computed" results were generated by us. A key feature of our optical setup is that it can only compute linear projections of spectra. While this reduces accuracy, far fewer images need to be captured.

| Method | Classifier | Coding strategy | #Measurements | Accuracy |
|---|---|---|---|---|
| Santara et al. | DNN | Non-linear, spatial and spectral | 220 | 96.7% (reported) |
| Hu et al. | DNN | Convolutional, spectrum-only | 220 | 90.16% (reported) |
| Lee et al. | DNN | Convolutional, spatial and spectral | 220 | 93.6% (reported) |
| Melgani et al. | SVM | Linear, spectrum-only | 16 | 84% (computed) |
| This paper | DNN | Linear, spectrum-only | 16 | 90% (computed) |

4.2. Deep Neural Networks

Deep neural networks (DNNs) provide a richer alternative to SVMs. We model the first linear unit of the DNN as the programmable spectral filter and train a model whose input is the spectral profile at a pixel and whose output is the material class label as a one-hot vector. While many architectures are possible, we choose a simple, five-layer
neural network as an example, with all layers fully connected. Figure 4 gives a brief overview of the proposed training and testing methodology. The weights of the first fully connected layer, A_1, are the learned discriminating filters; hence, the first layer can be evaluated optically, which avoids measuring the full spectrum at each pixel. The number of filters, Q, depends on the number of materials and how easily they can be separated. In our experiments, we classified a total of 5 materials. We varied the number of filters, computed the mean classification accuracy for each choice, and picked the optimal number of filters on that basis. The idea of optically computing the first layer has been explored before, in the context of designing color filter arrays [1] and processing light fields [2].

4.3. Simulations

We compare the SVM and the 5-layer DNN classifier to state-of-the-art spectral classification techniques on the NASA Indian Pines dataset, which consists of 220 spectral bands with 16 object classes. Figure 5 tabulates the accuracies; the last two rows correspond to the classifiers used in this paper. Our accuracy is lower than the state of the art, which is expected, since we use only spectral information while the other techniques use both spatial and spectral information. However, a spectrum-only classifier lets us capture far fewer images than there are spectral bands.

5. Experiments

We demonstrate the capabilities of our setup for video-rate binary classification, with a binary SVM as well as matched filtering, and for multi-class classification, with a multi-class SVM and DNNs.

[Figure 6 photo labels: grayscale camera (Hamamatsu Flash 4.0 LT), coded aperture, objective lens, diffraction grating, LCoS SLM (Holoeye)]

Figure 6: Lab prototype. The picture shows the lab prototype we built, with only the major components marked.
We used an objective lens with an 8 mm focal length, while all other lenses were 100 mm.

[Figure 7 panels: (a) RGB; (b) 650 nm; (c) 750 nm; (d) 850 nm; plot of relative intensity vs. wavelength (650 to 800 nm) at Points 1, 2 and 3]

Figure 7: Example HSI. Our prototype is designed to capture images from 600 nm to 900 nm. (a) was captured using a cellphone, while (b) to (d) were captured by our setup. The bottom row shows spectral profiles at three marked points. Note how all the pigments disappear at λ = 850 nm in (d).

Optical setup. Figure 6 shows a photograph of the lab prototype we built, with labels for the relevant components. A detailed optical layout and the list of components are in the appendix. Our SLM is a Holoeye LCoS SLM with a frame rate of 60 Hz that works as a secondary monitor. We used an NIR-sensitive sCMOS camera (Hamamatsu ORCA Flash 4.0 LT). To classify materials accurately, we designed our system to image from 600 nm to 900 nm, a range that extends from red light into the near infrared (NIR). Our setup can code the spectrum at a resolution of 3.3 nm, giving us 100 spectral bands. Finally, the programmable SLM makes the setup a dynamic, spectrally selective camera, so it can also be used directly to measure the complete HSI. To do so, we display permuted Hadamard patterns on the SLM to capture a 512 × 512 × 256 dimensional HSI. Figure 7 shows an example HSI of an acrylic painting captured this way.

[Figure 8 panels: (a) visible (RGB) and NIR images of cardboard, wood, plant, wood-textured paper and plastic plant; (b) relative intensity vs. wavelength (650 to 850 nm) for cardboard, fake plant, fake wood, plant and wood]

Figure 8: Material dataset. (a) shows false-colored visible and NIR images of the 5 different materials we collected for classification. (b) shows the average spectra of the materials as measured by our lab prototype.

Calibration. Our optical setup requires calibration of the code that causes the spectral blur, calibration of the wavelengths, and, finally, calibration of the spatial PSF. We use narrow-band
lasers to calibrate both the code and the wavelengths, and a 10 µm pinhole to calibrate the spatial PSF. Details are available in the appendix.

[Figure 9 panels: relative intensity vs. wavelength (500 to 900 nm) of Filters 1 to 5 for (a) SVM filters and (b) DNN filters]

Figure 9: Learned filters. A multi-class SVM outputs K separating hyperplanes, which yield K filters, shown in (a). Similarly, the DNN consists of several layers, of which the first is linear; hence, training yields weights, shown in (b), that can be used as spectral filters.

Dataset. We show classification results for a total of five types of subjects: 1) black cardboard, 2) varnished wood, 3) wood-textured paper, 4) real plants, and 5) plastic plants. We chose these objects because pairs of them (e.g., plants vs. plastic plants) look similar at visible wavelengths but have distinctly different spectra in the NIR. We collected one HSI for each material and labeled it manually, giving a total of 5 HSIs for training. Figure 8 shows visible and false-color NIR images, as well as the average spectrum of each material. None of the objects used for training the classifiers were reused in the testing phase.

[Figure 10 panels: (a) RGB image; (b) full HSI scan + projection (256 measurements); (c) optical projection (2 measurements); ROC curves, true positive vs. false positive (×10⁻³), for full measurement and optical computing]

Figure 10: Advantage of optical computing. We show an example of binary classification between cardboard and wood (a) using a per-pixel SVM. Optical computing achieves higher accuracy with far fewer measurements.

[Figure 11 plot: mean accuracy vs. number of filters (2 to 16) for the neural net and the SVM]

Figure 11: Accuracy vs. number of filters. The plot shows accuracy as a function of the number of filters. Accuracy increases initially and then saturates; we therefore use the knee point of the curve as the optimal number of filters.

[Figure 12 panels: (a) RGB; (b) SVM score; (c) label]

Figure 12: Per-pixel classification. Because it operates per pixel at high spatial resolution, our imager clearly identifies micro-structures such as cactus thorns while capturing only two images instead of the complete HSI.

[Figure 13 panels: rows labeled Plants and Wood; columns: RGB, NIR, SVM image, SVM label, MF image, MF label]

Figure 13: Various binary classifiers. We compare binary classification using SVM and matched filtering (MF). The first row compares real wood (rhino) and fake wood (background, printed paper), while the second row compares real and fake plants. Because the filters are dynamically programmable, we can classify with arbitrary filters and hence use any classifier that relies on a linear projection of the spectrum at each pixel.

Training classifiers. We trained two classifiers, a multi-class SVM and a DNN, with a varying number of filters. For the SVM, we used Scikit-Learn [28] in a one-vs-all configuration, which learned a total of 5 spectral filters, shown in Fig. 9(a). The DNNs were trained with the network architecture shown in Fig. 4 and a cross-entropy loss. The number of spectral filters was varied from 1 to 20 to compare performance. We trained the network using the PyTorch framework [27] with a learning rate of 10⁻³ for a total of 50 epochs. We then extracted the weights of the first layer and used them as spectral filters; the learned filters are shown in Fig. 9(b). Figure 11 shows a plot of accuracy as a function of the number of filters, Q. Further details about the learning process are included in the appendix.
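The central trick of Section 4.2, replacing the first layer with Q optical measurements, can be sketched as a NumPy forward pass. Layer sizes follow Table 1 in the appendix. The weights, however, are random stand-ins for a trained PyTorch model, and the camera is idealized, with no noise, no blur from (6) and no normalization from (9) to (11). The sketch only checks that splitting off the first layer leaves the prediction unchanged. It is not a training recipe.

```python
import numpy as np

rng = np.random.default_rng(0)
B, Q, NUM_CLASSES = 256, 5, 5     # spectral samples, filters, classes (Table 1 sizes)

# Hypothetical random weights standing in for a trained network.
sizes = [B, Q, 256, 128, 64, 32, NUM_CLASSES]
weights = [rng.standard_normal((n_out, n_in)) / np.sqrt(n_in)
           for n_in, n_out in zip(sizes[:-1], sizes[1:])]
biases = [np.zeros(n_out) for n_out in sizes[1:]]

def relu(z):
    return np.maximum(z, 0.0)

def tail(first_layer_out):
    """Layers 2 to 6 of Table 1 at inference time (dropout is inactive)."""
    z = relu(first_layer_out)
    for i in range(1, len(weights)):
        z = weights[i] @ z + biases[i]
        if i < len(weights) - 1:       # no ReLU after the output layer
            z = relu(z)
    return z                           # class logits

def digital_forward(spectrum):
    """Conventional pipeline: measure the full spectrum, then run every layer."""
    return tail(weights[0] @ spectrum + biases[0])

def optical_forward(filter_measurements):
    """Optical pipeline: the camera returns weights[0] @ spectrum as Q images;
    only the bias and the remaining layers are computed digitally."""
    return tail(filter_measurements + biases[0])

spectrum = rng.random(B)
spectral_filters = weights[0]                  # each of the Q rows is one spectral filter
measurements = spectral_filters @ spectrum     # what Q filtered images record at one pixel

logits_digital = digital_forward(spectrum)
logits_optical = optical_forward(measurements)
print(np.argmax(logits_digital), np.argmax(logits_optical))
```

Only the Q-vector `measurements` ever leaves the camera, so per-pixel inference touches Q numbers instead of B; in a real system, signed filter rows also have to be made displayable on a nonnegative SLM.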
In Fig. 11, classifier accuracy rises sharply at first and then saturates, so additional spectral filters do increase accuracy, but with diminishing returns beyond a point. Based on this, we used 3, 5, and 10 filters for comparisons in our real experiments.

Handling scale of features. Any classifier requires that features have the same scale during training and testing. A common practice is to set the norm of the feature at (x_0, y_0), ‖H(x_0, y_0, λ)‖, or its maximum value to unity. In our case, this would require knowledge of the complete spectral profile, which defeats the purpose of optical computing. Instead, we normalize our measurements by the sum of the spectrum, ∫_λ H(x_0, y_0, λ) dλ, which can be measured by displaying a spectral profile of all ones. The measured feature vectors are then

$$I_{\text{sum}}(x_0, y_0) = \int_{\lambda} H(x_0, y_0, \lambda)\, d\lambda, \tag{9}$$

$$\tilde{I}_k(x_0, y_0) = \int_{\lambda} H(x_0, y_0, \lambda)\, s_k(\lambda)\, d\lambda, \tag{10}$$

$$I_k(x_0, y_0) = \frac{\tilde{I}_k(x_0, y_0)}{I_{\text{sum}}(x_0, y_0)}. \tag{11}$$

We scale the spectra the same way during training, which keeps the scaling consistent. Hence, any set of measurements with spectral profiles requires one extra image.

Binary classification. The simplest task for our optical setup is binary classification, where the label at each pixel belongs to one of two classes. Here, one may use either a linear SVM, where the spectral filter is the learned separating hyperplane w, or a matched filter, where the spectral filter is the difference between the spectra of the two classes, s_1(λ) − s_2(λ). Figure 10 evaluates the advantages of optical classification. Panel (b) visualizes the SVM score at each pixel, obtained by scanning the complete HSI and then projecting onto the SVM hyperplane, which requires a total of 256 measurements. In contrast, the optical projection, shown in (c), requires only two images.
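A minimal sketch of the normalized binary measurement in (9) to (11), using the matched filter s_1 − s_2. The class spectra are synthetic and the "camera" is an idealized, noise-free integral. Because an SLM pattern cannot be negative, the sketch also assumes one hypothetical way to display a signed filter. It shifts and scales the filter into [0, 1] and undoes the shift with the all-ones image that (9) already requires. The paper does not say how signed filters are realized, so treat that step as an illustration only.

```python
import numpy as np

wavelengths = np.linspace(600, 900, 100)

# Hypothetical class-mean spectra (e.g., real vs. plastic plant), not measured data.
s1 = 1.0 + 0.8 * np.exp(-((wavelengths - 800) / 40) ** 2)
s2 = 1.0 + 0.8 * np.exp(-((wavelengths - 680) / 40) ** 2)
matched = s1 - s2                                 # signed matched filter s_1 - s_2

def camera(spectrum, pattern):
    """Idealized noise-free measurement: sum over wavelength of H * pattern."""
    if pattern.min() < 0 or pattern.max() > 1:
        raise ValueError("SLM transmission must lie in [0, 1]")
    return float(spectrum @ pattern)

def to_slm(signed_filter):
    """Affine map d = scale * p + offset with p in [0, 1] (assumed display strategy)."""
    offset = signed_filter.min()
    scale = signed_filter.max() - offset
    return (signed_filter - offset) / scale, scale, offset

def normalized_feature(spectrum, signed_filter):
    pattern, scale, offset = to_slm(signed_filter)
    i_sum = camera(spectrum, np.ones_like(spectrum))    # (9): all-ones pattern
    i_pattern = camera(spectrum, pattern)
    i_tilde = scale * i_pattern + offset * i_sum        # (10) for the signed filter
    return i_tilde / i_sum                              # (11)

# Pixels with different unknown brightness alpha, as in model (1).
for label, spectrum, alpha in [(1, s1, 0.3), (2, s2, 2.5)]:
    feature = normalized_feature(alpha * spectrum, matched)
    predicted = 1 if feature > 0 else 2
    print(label, predicted, round(feature, 4))
```

Dividing by I_sum cancels the per-pixel brightness α of model (1), so the same zero threshold works for dim and bright pixels. Under this assumed offset trick, a binary decision needs exactly two images (the filter pattern and the all-ones pattern).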
The bottom row of Fig. 10 shows the receiver operating characteristic (ROC) of classification for both cases. The SNR advantage is evident: the area under the curve for optical projection (0.7912) is higher than for full measurement followed by projection (0.7194).

Figure 12 shows classification of a real cactus surrounded by several plastic plants. The SVM score in (b), as well as the labels, show that our setup can resolve very thin structures such as the cactus thorns. Figure 13 shows classification results for real vs. plastic plants and real vs. fake wood, using a binary SVM as well as matched filtering. The objects are not easily discernible in the RGB domain, but they are easily isolated after spectral filtering. Figure 1 shows video-rate classification of a real plant and a fake plant. The video was captured at 4 frames per second by alternating between the spectral profile and the all-ones pattern. Note how the real plant is tracked across all frames, while the fake plant is ignored.

[Figure 14 panels: RGB scene, NIR scene, SVM, DNN (Q = 3), DNN (Q = 5), DNN (Q = 10), ground truth; legend: cardboard, plant, wood, fake plant, fake wood]

Figure 14: Optical classification. We show two examples of classification in which the linear operations are computed directly in the optical domain. The ground-truth labels were obtained by hand annotation. The SVM required a total of 11 measurements for five filters, whereas the DNN with 3, 5, and 10 filters required 7, 11, and 21 images, respectively. The RGB images show that the objects are not easily discernible in the visible domain, while optical classification in the NIR identifies them accurately.

Figure 15: Confusion matrices for the classifiers. Neural networks typically outperform the SVM. Among object classes, "wood" and "fake wood" are confused the most, as their spectra are similar. In contrast, the spectrum of "cardboard" differs most from all the others, so it is classified with high accuracy. Rows are output (predicted) classes and columns are target (true) classes; each cell gives the pixel count and, in parentheses, its percentage of the row.

(a) SVM, overall accuracy 72.55%

| Output class | Cardboard | Fake plant | Fake wood | Plant | Wood |
|---|---|---|---|---|---|
| Cardboard | 70456 (89.2%) | 3099 (3.9%) | 278 (0.4%) | 597 (0.8%) | 4551 (5.8%) |
| Fake plant | 1393 (9.4%) | 8329 (56.4%) | 1820 (12.3%) | 533 (3.6%) | 2681 (18.2%) |
| Fake wood | 0 (0.0%) | 5971 (8.6%) | 43822 (63.4%) | 174 (0.3%) | 19204 (27.8%) |
| Plant | 0 (0.0%) | 804 (3.6%) | 2334 (10.3%) | 16321 (72.3%) | 3100 (13.7%) |
| Wood | 0 (0.0%) | 2419 (10.0%) | 8399 (34.8%) | 174 (0.7%) | 13150 (54.5%) |

(b) Neural net, overall accuracy 77.33%

| Output class | Cardboard | Fake plant | Fake wood | Plant | Wood |
|---|---|---|---|---|---|
| Cardboard | 69997 (91.1%) | 1413 (1.8%) | 654 (0.9%) | 887 (1.2%) | 3900 (5.1%) |
| Fake plant | 1753 (11.6%) | 7112 (47.2%) | 3929 (26.1%) | 132 (0.9%) | 2140 (14.2%) |
| Fake wood | 21 (0.1%) | 2226 (5.7%) | 35541 (91.1%) | 276 (0.7%) | 960 (2.5%) |
| Plant | 0 (0.0%) | 47 (0.3%) | 351 (2.5%) | 13753 (97.2%) | 3 (0.0%) |
| Wood | 78 (0.1%) | 9824 (15.2%) | 16178 (25.1%) | 2751 (4.3%) | 35683 (55.3%) |

Multi-class classification. We use the SVM and DNN filters learned on the training data to classify scenes composed of materials from the set of five. Figure 14 shows classification results for two scenes with various techniques. The ground truth was obtained by manually annotating the objects and was then used to measure classification accuracy. Visually, the DNNs outperform the SVM, as the accurate classification of the wooden rhinoceros head shows. Figure 15 shows confusion matrices for the SVM and for the DNN with 5 filters. The accuracies are not very high, since we depend on spectral features alone. Accuracy can be significantly increased by using spatial information along with the spectral profiles, i.e., by first capturing the Q images and then using spatial information to classify.

6. Discussions and Conclusion

We propose a per-pixel material classifier that relies on a high-resolution programmable spectral filter. We achieve this by learning spectral filters that yield high classification accuracy and then measuring images of the scene with the learned filters. Owing to a simple, per-pixel decoding strategy, we can classify at video rates.
We showed several compelling real-world examples, with emphasis on binary video-rate and multi-class classification.

Limitations. A key limitation of our setup is the assumption that each pixel comes from a single material class. Some real-world scenes, land cover being an example, contain a mixture of materials at each pixel. In such a case, outputting just a class label may not suffice, and the relative probabilities of each class are desired instead. This can be achieved by modifying the classifiers to output a score for each material at each pixel instead of only the most probable class.

7. Acknowledgment

The authors acknowledge support from the National Science Foundation under the CAREER award CCF-1652569, the Expeditions award IIS-1730147, and the National Geospatial-Intelligence Agency's Academic Research Program (Award No. HM0476-17-1-2000).

A. Theoretical background

A.1. Image formation model

In the main paper, we used a simplified image formation model in which the HSI of the scene is represented as $H(x, y, \lambda)$. We discuss a more precise model here. Consider the scene's spectral reflectance function $H_R(x, y, \lambda)$, where we assume that each point in 3D space is well modeled by Lambertian reflectance. Let $L(\lambda)$ be the spectral distribution of a spatially uniform light source. The HSI of the scene under this illumination is then given by

$$H_o(x, y, \lambda) = H_R(x, y, \lambda)\, L(\lambda), \tag{12}$$

which is the signal model we used in the main paper. Then, given a camera with spectral response $C(\lambda)$, the measured image is

$$I(x, y) = \int_\lambda H_o(x, y, \lambda)\, C(\lambda)\, d\lambda = \int_\lambda H_R(x, y, \lambda)\, L(\lambda)\, C(\lambda)\, d\lambda. \tag{13}$$

From the above equation, we see that the camera measures the scene's spectral reflectance modulated by the illumination spectrum $L(\lambda)$ (and weighted by $C(\lambda)$), rather than the spectral reflectance $H_R$ alone. However, this is not a problem as long as the light's spectral distribution is known a priori.

B.
Learning details

We provide details about our training process, with emphasis on the choice of parameters and hyperparameters. We captured a total of 1,000,000 spectral profiles over 5 material types. For each classifier, we used 20% of the data for training, 5% for validation, and the remaining 75% for testing. We found that the testing accuracy did not improve even if we used more than 20% of the data for training.

Support Vector Machine. We used the function LinearSVC from Scikit-Learn [28] to train a one-vs-all SVM. The only hyperparameter to tune was the penalty parameter C, which was tuned by a grid search over log space from $10^{-5}$ to $1$. The hyperparameter search was done through 3-fold cross-validation.

Neural Networks. We used PyTorch [27] to train our neural network (DNN) classifiers. The architecture used for learning is shown in Figure 4, and the details of each layer are provided in Table 1. Q is the number of filters and was varied from 1 to 20 to evaluate performance as a function of the number of measurements. We trained the network with an initial learning rate of $10^{-3}$ for a total of 60 epochs. The filters were initialized with a principal component analysis (PCA) decomposition of the training data, which led to smoother filters and higher accuracy. For each Q, we picked the model with the best accuracy on the validation dataset.

| Layer | Components |
|---|---|
| 1 (Filters) | Linear (256×Q), ReLU, Dropout (0.1) |
| 2 | Linear (Q×256), ReLU, Dropout (0.1) |
| 3 | Linear (256×128), ReLU, Dropout (0.1) |
| 4 | Linear (128×64), ReLU, Dropout (0.1) |
| 5 | Linear (64×32), ReLU, Dropout (0.1) |
| 6 (Output) | Linear (32×5) |

Table 1: Components of our DNN classifier. All layers are fully connected, with a ReLU and dropout added after each linear layer except the output, which is a single linear layer. Here, Q is the number of spectral filters and was varied in our experiments to compare performance. During training, we used cross-entropy as the loss function.

C.
Hardware details

Hardware prototype. Figure 16 shows a picture of our lab prototype with the names of the major components. The last lens in the setup was replaced by a 50 mm objective lens focused at infinity, which led to better spatial resolution than an achromat.

[Figure 16 components: (1) 8 mm objective lens, Thorlabs MVL8M23; (2) coded aperture, printed by Photoplot Store with a feature size of 10 µm; (3) polarizing beam splitter, Moxtek wire-grid polarizing beam splitter; (4) 300 grooves/mm diffraction grating, Dynasil G300TN31.7CC; (5) NIR LCoS display, Holoeye HED6001 with NIR enhancement; (6) sCMOS camera, Hamamatsu ORCA Flash 4.0 LT; also labeled: 100 mm achromat, Thorlabs AC254-100-B.]

Figure 16: Lab prototype. A picture of the lab prototype with the major components marked. We omitted opto-mechanical components such as cage plates and posts to avoid clutter. The inset image shows the printed mask we used as the coded aperture.

Calibration. As described in the main paper, our setup required calibration of the coded aperture, the wavelengths, and the spatial PSF. We detail the calibration procedure here.

1. Coded aperture calibration: This is required to capture the code that blurs the spectrum. We measure the coded aperture by illuminating a spectrally flat object (such as Spectralon) with a laser of known wavelength and scanning the complete HSI. We then average all spatial pixels to get the spectrum of the scene. Since a laser can be treated as a discrete delta, the measured spectrum is the coded aperture. We threshold the measured spectrum appropriately to get the binary coded aperture, as shown in Fig. 17(a).
2. Wavelength calibration: To find the correspondence between band index (1 to 256) and wavelength, we capture two scenes, each comprising a spectrally flat object illuminated by a narrowband laser light source. The averaged spectrum of the HSI is a blurred version of the laser spectrum. By deconvolving with the previously estimated coded aperture, we get the location of the laser in terms of band index. We use this information, along with the laser wavelength, to calibrate the correspondence.
3. Spatial PSF: To find the spatial blur kernel, we capture a single image of a 10 µm pinhole. Since the PSF is well conditioned, deblurring the spatial images is also well conditioned.
4. Radiometric calibration of SLM: The LCoS SLM in our optical setup is based on a twisted-nematic design, and hence has different gamma curves for different wavelengths. Since the spectrum on the SLM is a blurred version of the true spectrum, we cannot perform a column-wise gamma correction. Instead, we use the SLM only as a binary modulator and achieve grayscale modulation by varying the height of each column, as shown in Fig. 18(b). This way, the SLM has a linear gamma curve for all wavelengths.

[Figure 17 panels: (a) code calibration, measured spectrum and computed code vs. band index; (b) wavelength calibration, normalized spectra vs. wavelength (nm) for the 635 nm and 850 nm calibration lasers and the 780 nm and 830 nm test lasers.]

Figure 17: Wavelength calibration. We first estimate the blur due to the coded aperture by capturing a scene illuminated by a narrowband light source (635 nm laser), giving us the code in (a). We then calibrate the correspondence between band index and wavelength by capturing two separate scenes illuminated by known laser light sources (635 nm, 850 nm). The results of the calibration are shown in (b), where we capture two more scenes with 780 nm and 830 nm lasers.

[Figure 18 panels: (a) target profile vs. wavelength (nm); (b) without correction; (c) with correction.]

Figure 18: Displaying a desired spectral profile. Given a target profile (a), we display a binary image on the SLM, as shown in (b), which ensures grayscale modulation despite the wavelength-dependent gamma curve. However, since the SLM is 2f away from the camera sensor, there will be effects of diffraction. We counter this by adding a small DC offset, as shown in (c).

[Figure 19 panels: (a) raw image; (b) deconvolved image; (c) MTF vs. line pairs/pixel for the raw and deconvolved images.]

Figure 19: Spatial deconvolution. Due to the design of an invertible spatial blur, the optical setup is capable of high resolution after deconvolution. (a) shows a raw image, with the enlarged PSF in the inset, (b) shows the result of Wiener deconvolution, and (c) shows a comparison of the modulation transfer function (MTF). There is a marked increase in resolution, both quantitatively and qualitatively.

Figures of merit. Our setup is capable of achieving a spectral resolution of up to 3.3 nm over the wavelength range of 600 to 900 nm, which is the designed resolution (see KRISM.pdf for further details). Due to the invertible spatial blur, our setup is capable of high resolution after deconvolution. Figure 19 shows the captured image (a) and the deconvolved image (b) of a sector star target, and (c) plots the modulation transfer function (MTF) as a function of line pairs per pixel. The image was deconvolved using simple Wiener deconvolution. The MTF30 after deconvolution was 0.45 line pairs/pixel.

Handling diffraction due to SLM. Since the SLM is placed 2f away from the image plane, any pattern displayed on the SLM leads to a diffraction blur. To counter this effect, we always display ones in the middle of the pattern shown on the SLM. This reduces the effect of diffraction while adding a simple offset to the data, which can be removed by capturing an image with only the central part open.

References

[1] A. Chakrabarti. Learning sensor multiplexing design through back-propagation. In Advances in Neural Information Processing Systems, pages 3081–3089, 2016.
[2] H. G. Chen, S. Jayasuriya, J. Yang, J. Stephen, S. Sivaramakrishnan, A. Veeraraghavan, and A. Molnar. ASP Vision: Optically computing the first layer of convolutional neural networks using angle sensitive pixels. In IEEE Conf. Computer Vision and Pattern Recognition, 2016.
[3] Y. Chen, H. Jiang, C. Li, X. Jia, and P. Ghamisi. Deep feature extraction and classification of hyperspectral images based on convolutional neural networks. Trans. Geoscience and Remote Sensing, 54(10):6232–6251, 2016.
[4] E. Cloutis. Review article hyperspectral geological remote sensing: evaluation of analytical techniques. International J. Remote Sensing, 17(12):2215–2242, 1996.
[5] N. Colthup. Introduction to Infrared and Raman Spectroscopy. Elsevier, 2012.
[6] N. Dobigeon, J.-Y. Tourneret, C. Richard, J. C. M. Bermudez, S. McLaughlin, and A. O. Hero. Nonlinear unmixing of hyperspectral images: Models and algorithms. IEEE Signal Processing Magazine, 31(1):82–94, 2014.
[7] M. Fauvel, J. Chanussot, J. A. Benediktsson, and J. R. Sveinsson. Spectral and spatial classification of hyperspectral data using SVMs and morphological profiles. In IEEE Int. Geoscience and Remote Sensing Symposium, pages 4834–4837, 2007.
[8] M. Goel, E. Whitmire, A. Mariakakis, T. S. Saponas, N. Joshi, D. Morris, B. Guenter, M. Gavriliu, G. Borriello, and S. N. Patel. HyperCam: hyperspectral imaging for ubiquitous computing applications. In ACM Intl. Joint Conf. Pervasive and Ubiquitous Computing, pages 145–156, 2015.
[9] R. Gross, I. Matthews, and S. Baker. Fisher light-fields for face recognition across pose and illumination. In Joint Pattern Recognition Symposium, pages 481–489, 2002.
[10] A. B. Hamida, A. Benoit, P. Lambert, and C. B. Amar. 3-D deep learning approach for remote sensing image classification. Trans. Geoscience and Remote Sensing, 56(8):4420–4434, 2018.
[11] J. C. Harsanyi and C.-I. Chang. Hyperspectral image classification and dimensionality reduction: An orthogonal subspace projection approach. IEEE Trans. Geoscience and Remote Sensing, 32(4):779–785, 1994.
[12] M. He, B. Li, and H. Chen. Multi-scale 3D deep convolutional neural network for hyperspectral image classification. In Intl. Conf. Image Processing, 2017.
[13] W. Hu, Y. Huang, L. Wei, F. Zhang, and H. Li. Deep convolutional neural networks for hyperspectral image classification. Journal of Sensors, 2015, 2015.
[14] M. H. Kim, T. A. Harvey, D. S. Kittle, H. Rushmeier, J. Dorsey, R. O. Prum, and D. J. Brady. 3D imaging spectroscopy for measuring hyperspectral patterns on solid objects. ACM Trans. Graphics (TOG), 31(4):38, 2012.
[15] H. Lee and H. Kwon. Contextual deep CNN based hyperspectral classification. In Intl. Geoscience and Remote Sensing Symposium, 2016.
[16] Y. Li, H. Zhang, and Q. Shen. Spectral–spatial classification of hyperspectral imagery with 3D convolutional neural network. Remote Sensing, 9(1):67, 2017.
[17] J. W. Lichtman and J.-A. Conchello. Fluorescence microscopy. Nature Methods, 2(12):910, 2005.
[18] B. Liu, X. Yu, P. Zhang, X. Tan, A. Yu, and Z. Xue. A semi-supervised convolutional neural network for hyperspectral image classification. Remote Sensing Letters, 8(9):839–848, 2017.
[19] C. Liu and J. Gu. Discriminative illumination: Per-pixel classification of raw materials based on optimal projections of spectral BRDF. IEEE Trans. Pattern Analysis and Machine Intelligence, 36(1):86–98, 2014.
[20] S. P. Love and D. L. Graff. Full-frame programmable spectral filters based on micromirror arrays. J. Micro/Nanolithography, MEMS, and MOEMS, 13(1):011108, 2014.
[21] Y. Luo, J. Zou, C. Yao, X. Zhao, T. Li, and G. Bai. HSI-CNN: A novel convolution neural network for hyperspectral image. In Intl. Conf. Audio, Language and Image Processing, 2018.
[22] F. Melgani and L. Bruzzone. Classification of hyperspectral remote sensing images with support vector machines. IEEE Trans. Geoscience and Remote Sensing, 42(8):1778–1790, 2004.
[23] A. Mohan, R. Raskar, and J. Tumblin. Agile spectrum imaging: Programmable wavelength modulation for cameras and projectors. In Computer Graphics Forum, 2008.
[24] M. O'Toole and K. N. Kutulakos. Optical computing for fast light transport analysis. ACM Trans. Graph., 29(6):164, 2010.
[25] Z. Pan, G. Healey, M. Prasad, and B. Tromberg. Face recognition in hyperspectral images. IEEE Trans. Pattern Analysis and Machine Intelligence, 25(12):1552–1560, 2003.
[26] J.-I. Park, M.-H. Lee, M. D. Grossberg, and S. K. Nayar. Multispectral imaging using multiplexed illumination. In IEEE Intl. Conf. Computer Vision, 2007.
[27] A. Paszke, S. Gross, S. Chintala, G. Chanan, E. Yang, Z. DeVito, Z. Lin, A. Desmaison, L. Antiga, and A. Lerer. Automatic differentiation in PyTorch. In NIPS-W, 2017.
[28] F. Pedregosa, G. Varoquaux, A. Gramfort, V. Michel, B. Thirion, O. Grisel, M. Blondel, P. Prettenhofer, R. Weiss, V. Dubourg, J. Vanderplas, A. Passos, D. Cournapeau, M. Brucher, M. Perrot, and E. Duchesnay. Scikit-learn: Machine learning in Python. Journal of Machine Learning Research, 12:2825–2830, 2011.
[29] V. Saragadam and A. Sankaranarayanan. KRISM–Krylov subspace-based optical computing of hyperspectral images. arXiv:1801.09343, 2018.
[30] V. Sharma, A. Diba, T. Tuytelaars, and L. Van Gool. Hyperspectral CNN for image classification & band selection, with application to face recognition. Technical report KUL/ESAT/PSI/1604, KU Leuven, ESAT, Leuven, Belgium, 2016.
[31] Y. Tarabalka, J. Chanussot, and J. A. Benediktsson. Segmentation and classification of hyperspectral images using watershed transformation. Pattern Recognition, 43(7):2367–2379, 2010.
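The DNN classifier of Appendix B (Table 1) can be sketched in PyTorch as shown below. This is a minimal illustration, not the authors' code. The following are all assumptions: the names `SpectralClassifier`, `pca_init`, and `train`; the Adam optimizer; full-batch updates; and the random stand-in data. The first linear layer holds the Q spectral filters (one 256-band filter per row), which is the part the programmable camera would compute optically. Its weights are initialized from the top-Q principal directions of the training spectra, following the PCA initialization described in Appendix B. PCA directions have signed entries, and this sketch does not address how signed filters are displayed optically.

```python
import torch
import torch.nn as nn

N_BANDS = 256   # spectral samples per pixel (input size in Table 1)
N_CLASSES = 5   # cardboard, fake plant, fake wood, plant, wood


class SpectralClassifier(nn.Module):
    """Fully connected classifier following the layer list in Table 1 (illustrative name)."""

    def __init__(self, q: int, p_drop: float = 0.1):
        super().__init__()
        # Layer 1: Q learned spectral filters (256 -> Q); the part computed optically.
        self.filters = nn.Linear(N_BANDS, q)
        self.head = nn.Sequential(
            nn.ReLU(), nn.Dropout(p_drop),                       # rest of layer 1
            nn.Linear(q, 256), nn.ReLU(), nn.Dropout(p_drop),    # layer 2
            nn.Linear(256, 128), nn.ReLU(), nn.Dropout(p_drop),  # layer 3
            nn.Linear(128, 64), nn.ReLU(), nn.Dropout(p_drop),   # layer 4
            nn.Linear(64, 32), nn.ReLU(), nn.Dropout(p_drop),    # layer 5
            nn.Linear(32, N_CLASSES),                            # layer 6: logits
        )

    def forward(self, spectra: torch.Tensor) -> torch.Tensor:
        return self.head(self.filters(spectra))


def pca_init(model: SpectralClassifier, train_spectra: torch.Tensor) -> None:
    """Set the filter rows to the top-Q principal directions of the training spectra."""
    q = model.filters.out_features
    centered = train_spectra - train_spectra.mean(dim=0, keepdim=True)
    # Rows of vh are principal directions, ordered by decreasing singular value.
    _, _, vh = torch.linalg.svd(centered, full_matrices=False)
    with torch.no_grad():
        model.filters.weight.copy_(vh[:q])
        model.filters.bias.zero_()


def train(model: nn.Module, spectra: torch.Tensor, labels: torch.Tensor,
          epochs: int = 60, lr: float = 1e-3) -> float:
    """Full-batch training with cross-entropy loss; Adam is an assumed optimizer."""
    optimizer = torch.optim.Adam(model.parameters(), lr=lr)
    loss_fn = nn.CrossEntropyLoss()
    model.train()
    loss = torch.tensor(0.0)
    for _ in range(epochs):
        optimizer.zero_grad()
        loss = loss_fn(model(spectra), labels)
        loss.backward()
        optimizer.step()
    return loss.item()


if __name__ == "__main__":
    torch.manual_seed(0)
    spectra = torch.rand(512, N_BANDS)            # random stand-in for measured spectra
    labels = torch.randint(0, N_CLASSES, (512,))
    model = SpectralClassifier(q=5)
    pca_init(model, spectra)
    print("final training loss:", train(model, spectra, labels))
    print("filter bank shape:", tuple(model.filters.weight.shape))  # (Q, 256)
```

The text says the best model for each Q was chosen on the validation split. To reproduce that step, wrap `train` in a loop over Q and keep the checkpoint with the highest validation accuracy. That loop is omitted here.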
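Item 2 of the calibration procedure in Appendix C maps band indices to wavelengths using two laser lines (635 nm and 850 nm). The sketch below assumes the mapping is linear in band index. That is a common first-order model for a grating spectrometer, but the text does not state it. The class name `LinearWavelengthMap` and the band indices 40 and 200 are hypothetical placeholders, not measured values. Held-out lines, such as the 780 nm and 830 nm lasers in Figure 17(b), can then be used to check the fit.

```python
from dataclasses import dataclass


@dataclass
class LinearWavelengthMap:
    """Linear band-index -> wavelength model fitted from two laser lines (assumed model)."""
    slope_nm_per_band: float
    offset_nm: float

    @classmethod
    def from_two_lines(cls, band_a: float, wavelength_a: float,
                       band_b: float, wavelength_b: float) -> "LinearWavelengthMap":
        if band_a == band_b:
            raise ValueError("calibration lines must fall on different band indices")
        slope = (wavelength_b - wavelength_a) / (band_b - band_a)
        return cls(slope, wavelength_a - slope * band_a)

    def wavelength(self, band: float) -> float:
        """Wavelength (nm) at a possibly fractional band index."""
        return self.offset_nm + self.slope_nm_per_band * band

    def band(self, wavelength_nm: float) -> float:
        """Inverse map: fractional band index for a wavelength (nm)."""
        return (wavelength_nm - self.offset_nm) / self.slope_nm_per_band


# Hypothetical peak locations, e.g. found after deconvolving with the coded aperture.
cal = LinearWavelengthMap.from_two_lines(band_a=40.0, wavelength_a=635.0,
                                         band_b=200.0, wavelength_b=850.0)
for test_nm in (780.0, 830.0):  # held-out lines, used only for checking
    print(f"{test_nm:.0f} nm is predicted at band {cal.band(test_nm):.1f}")
```

If the held-out lines land noticeably off their predicted bands, a higher-order fit with more laser lines would be the natural next step.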
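Item 4 of Appendix C and the note on handling diffraction describe how a grayscale spectral profile is shown on a binary SLM. The height of the "on" run in each column encodes the profile value, and a central band is always on. The NumPy sketch below makes several assumptions that are not the prototype's calibrated settings: one SLM column per spectral sample, an "on" run centered vertically in its column, and a measurement modeled as a plain column sum. The function names and the `offset_rows` parameter are also hypothetical. Subtracting the measurement of the offset-only pattern recovers a signal proportional to the target profile, which is the linear response the text describes.

```python
import numpy as np


def column_height_pattern(profile, n_rows, offset_rows=0):
    """Binary SLM image whose per-column 'on' height encodes profile values in [0, 1].

    offset_rows rows in the middle of every column are always on (the DC offset used
    to reduce diffraction); the remaining rows are filled in proportion to the profile.
    """
    profile = np.clip(np.asarray(profile, dtype=float), 0.0, 1.0)
    heights = offset_rows + np.rint(profile * (n_rows - offset_rows)).astype(int)
    pattern = np.zeros((n_rows, profile.size), dtype=bool)
    for col, h in enumerate(heights):
        start = (n_rows - h) // 2  # center the run of ones in the column
        pattern[start:start + h, col] = True
    return pattern


def offset_only_pattern(n_cols, n_rows, offset_rows):
    """Pattern with only the central band open, used to measure and remove the offset."""
    return column_height_pattern(np.zeros(n_cols), n_rows, offset_rows)


if __name__ == "__main__":
    target = np.linspace(0.0, 1.0, 8)  # toy target profile
    n_rows, offset_rows = 100, 10
    pat = column_height_pattern(target, n_rows, offset_rows)
    dark = offset_only_pattern(target.size, n_rows, offset_rows)
    # Idealized measurement: light passed by each column is proportional to its 'on' height.
    signal = pat.sum(axis=0) - dark.sum(axis=0)
    print(signal / (n_rows - offset_rows))  # approximately the target profile
```

With a binary display, the quantization step is 1/(n_rows − offset_rows), so a taller SLM region gives finer grayscale levels.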
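The figures of merit in Appendix C state that images were deconvolved with simple Wiener deconvolution, using the PSF measured from a 10 µm pinhole. A minimal frequency-domain Wiener filter in NumPy is sketched below. The constant noise-to-signal ratio `nsr`, the circular (periodic) boundary handling, and the toy PSF are assumptions for illustration, not the settings used for Figure 19. The function names are hypothetical.

```python
import numpy as np


def psf_to_otf(psf, shape):
    """Zero-pad a small PSF to `shape` and circularly shift its center to (0, 0)."""
    padded = np.zeros(shape)
    kh, kw = psf.shape
    padded[:kh, :kw] = psf
    padded = np.roll(padded, (-(kh // 2), -(kw // 2)), axis=(0, 1))
    return np.fft.fft2(padded)


def wiener_deconvolve(blurred, psf, nsr=1e-2):
    """Wiener estimate X = conj(H) Y / (|H|^2 + nsr), assuming periodic boundaries."""
    H = psf_to_otf(psf / psf.sum(), blurred.shape)
    Y = np.fft.fft2(blurred)
    X = np.conj(H) * Y / (np.abs(H) ** 2 + nsr)
    return np.real(np.fft.ifft2(X))


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    sharp = np.zeros((64, 64))
    sharp[20:44, 30:34] = 1.0  # toy bar target
    # Toy PSF whose transfer function stays >= 0.2 everywhere, i.e. an invertible blur.
    psf = np.array([[0.0, 0.1, 0.0], [0.1, 0.6, 0.1], [0.0, 0.1, 0.0]])
    H = psf_to_otf(psf, sharp.shape)
    blurred = np.real(np.fft.ifft2(H * np.fft.fft2(sharp)))
    blurred += 1e-4 * rng.standard_normal(blurred.shape)
    restored = wiener_deconvolve(blurred, psf, nsr=1e-6)
    print("mean abs error, blurred: ", np.abs(blurred - sharp).mean())
    print("mean abs error, restored:", np.abs(restored - sharp).mean())
```

The toy PSF is chosen so that its transfer function never approaches zero. This mirrors the point in Figure 19 that an invertible blur makes simple Wiener deconvolution effective. For a PSF with near-zero frequencies, `nsr` must be raised to keep noise amplification in check.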
