AeGAN: Time-Frequency Speech Denoising via Generative Adversarial Networks

Automatic speech recognition (ASR) systems are of vital importance nowadays in commonplace tasks such as speech-to-text processing and language translation. This created the need for an ASR system that can operate in realistic crowded environments. T…

Authors: Sherif Abdulatif, Karim Armanious, Karim Guirguis

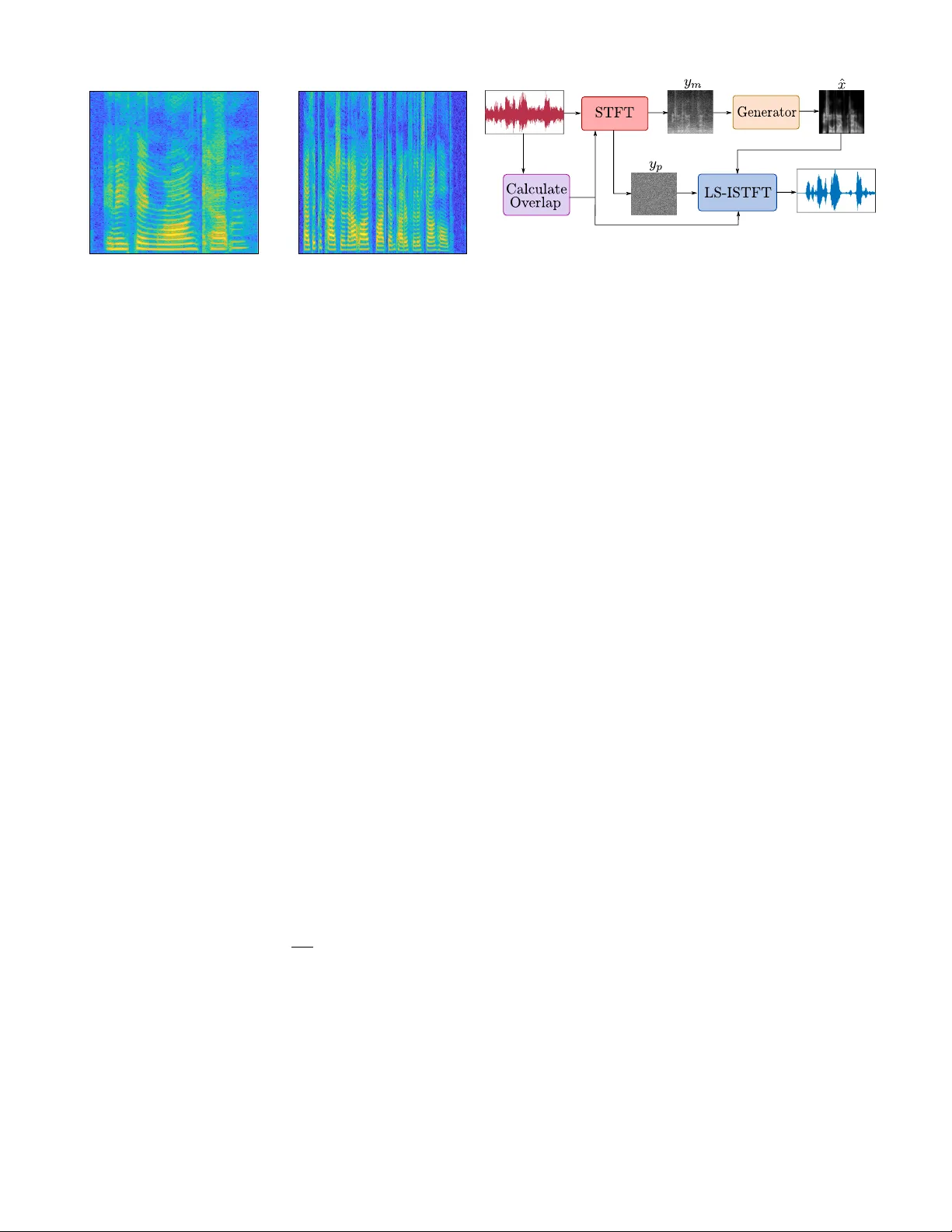

AeGAN: T ime-Frequenc y Speech Denoising via Generati ve Adv ersarial Netw orks Sherif Abdulatif ? , Karim Armanious ? , Karim Guirguis, Jayasankar T . Sajee v , Bin Y ang Univ ersity of Stuttgart, Institute of Signal Processing and System Theory , Stuttgart, Germany Abstract —A utomatic speech recognition (ASR) systems are of vital importance nowadays in commonplace tasks such as speech- to-text processing and language translation. This cr eated the need for an ASR system that can operate in realistic crowded en vironments. Thus, speech enhancement is a valuable b uilding block in ASR systems and other applications such as hearing aids, smartphones and teleconferencing systems. In this paper , a generativ e adversarial netw ork (GAN) based framew ork is in vestigated for the task of speech enhancement, more specifically speech denoising of audio tracks. A new architectur e based on CasNet generator and an additional feature-based loss are in- corporated to get realistically denoised speech phonetics. Finally, the proposed framework is shown to outperform other learning and traditional model-based speech enhancement approaches. Index T erms —Speech enhancement, generative adv ersarial networks, automatic speech recognition, deep learning. I . I N T RO D U C T I O N In a noisy en vironment, a typical speech signal is perceived as a mixture between clean speech and an intrusiv e background noise. Accordingly , speech denoising is interpreted as a source separation problem, where the goal is to separate the desired audio signal from the intrusive noise. The background noise type and the signal-to-noise ratio (SNR) ha ve a direct influence on the quality of the denoised speech. For instance, some common background noise types can be very similar to the desired speech such as cafe or food court noise. In these cases, estimating the desired speech from the corrupted signal is challenging and sometimes impossible in low SNR situations because the noise occupy the same frequency bands as the desired speech. This process of eliminating background noise from noisy speech signal is constructi ve for applications such as automatic speech recognition (ASR) systems, hearing aids and teleconferencing systems. Previously , traditional approaches were adopted for speech enhancement such as spectral subtraction [1], [2] and binary masking techniques [3], [4]. Moreov er , statistical approaches based on W iener filters and Bayesian estimators were applied to speech enhancement [5], [6]. Howe ver , most of these approaches require a prior estimation of the SNR based on an initial silent period and can only operate well on limited non-speech like noise types in high SNR situations. This is attributed to the lack of a precise signal model describing the distinction between the speech and noise signals. T o ov ercome such limitations, data driv en approaches based on deep neural networks (DNNs) are widely used in literature to learn deep underlying features of either the desired speech ————————————————— ? These authors contributed to this work equally . or the intrusiv e background noise from the giv en data without a signal model. For instance, denoising autoencoders (AE) were used in [7], [8] to estimate a clean track from a noisy input based on the L1-loss. Long short-term memory (LSTM) networks hav e also been utilized to incorporate temporal speech structure in the denoising process [9], [10]. Also, an adaptation of the autoregressi ve generativ e W avNet was used in [11] where a denoised sample is generated based on the previous input and output samples. In 2014, generati ve adversarial networks (GANs) were introduced as the state-of-the-art for deep generativ e models [12]. In GANs, a generator is trained adversarially with a discriminator to generate images belonging to the same joint distribution of the training data. Afterwards, variants of GANs such as conditional generati ve adversarial networks (cGANs) were introduced for image-to-image translation tasks [13]– [15]. The pix2pix model introduced in [16] is one of the first attempts to map natural images from an input source domain to a certain tar get domain. Henceforth, cGANs were used for speech enhancement either by utilizing the raw 1D speech tracks or the 2D log-Mel time-frequency (TF) magnitude representation. For instance, speech enhancement GAN (SEGAN) is a 1D adaptation of the pix2pix model operating on 1D raw speech tracks [17]. This model was further adapted to operate on 2D TF-magnitude representations via the frequenc y SEGAN (FSEGAN) frame work [18]. Due to the TF-magnitude representation being used as an implicit feature extractor , an impro ved speech denoising was reported. Howe v er , both models suf fer from multiple limitations. They rely mainly on pixel-wise losses, which have been reported to produce inconsistencies and output artifacts [16]. Additionally , both models were utilized to denoise speech tracks of fixed durations and under relati vely mild noise conditions with an av erage SNR of 10 dB. In this work, a ne w adversarial approach, inspired by [19], is proposed for denoising speech tracks by operating on 2D TF-magnitude representations of noisy speech inputs. The proposed framework incorporates a cascaded architecture in addition to a non-adversarial feature-based loss which pe- nalizes the discrepancies in the feature space between the outputs and the targets. This enhances the robustness of speech denoising with respect to harsh SNR conditions and speech- like background noise types. Additionally , we propose a new dynamic time resolution technique to embed variable track lengths in a fixed TF repre- sentation by adapting the time o verlap according to the track 0 . 2 0 . 4 0 . 6 0 . 8 1 1 . 2 1 . 4 0 1 2 3 4 5 6 7 8 Time [s] Frequency [kHz] (a) T rack duration = 1 . 4 s . 0 . 5 1 1 . 5 2 2 . 5 3 3 . 5 4 0 1 2 3 4 5 6 7 8 Time [s] Frequency [kHz] (b) T rack duration = 4 s . Fig. 1. Examples of v ariable duration tracks embedded in a fixed 256 × 256 TF-magnitude representation. length. T o illustrate the performance of the proposed approach, quantitativ e comparison is carried out against SEGAN [17], FSEGAN [18] and two traditional model-based variants of W iener filters and Bayesian estimators [5], [6] under different noise types and SNR le vels. Furthermore, the word error rate (WER) of a pre-trained automatic speech recognition (ASR) model is ev aluated. I I . D Y NA M I C T I M E R E S O L U T I O N Previously proposed architectures are designed to work on speech tracks of fixed durations. This is due to the architectural limitation of having to operate on inputs of fixed pixel dimen- sionality or number of samples for FSEGAN and SEGAN, respectiv ely . In order to accommodate this constraint, the input track length was fixed to 1 s. Accordingly , a track of arbitrary length should be first di vided into 1 s interv als and then the denoising is applied sequentially on each interval. In our proposed frame work, the input 2D TF-magnitude representation is fix ed to 256 × 256 pixels. Howe ver , the time resolution per pixel is v ariable according to the length of the 1D track as sho wn in Fig. 1. The TF-magnitude representation is computed based on short time Fourier transform (STFT) where a window function followed by FFT is applied to ov erlapping segments of the 1D track. In our case, we will consider tracks of 16 kHz sampling frequency . A hamming window of S = 512 samples is used to get a one-sided spectrum of N F = 256 frequency bins. T o fix the time dimension to N T = 256 time bins, the overlapping parameter O of the 1D segments is adjusted based on the input track length L according to the following relation: O = S − L N T (1) Finally , the track length L is modified either by omitting samples or padding a silent signal based on the follo wing constraint: L = N T ( S − O ) + O (2) After applying the denoising to the input TF-magnitude representation, getting back to the time domain is mandatory . For this we choose to use the least square in v erse short time Fig. 2. General block diagram of the proposed system. The log-magnitude is passed to the network and the output of the network is used with the input noisy phase for reconstruction. Fourier transform (LS-ISTFT) proposed in [20]. Based on this implementation, an acceptable signal-to-distortion ratio (SDR) reconstruction can be achie ved with an o verlap of at least 25%. By substituting this ov erlap ratio in Eq. 1, the longest track length that can be embedded in a 256 × 256 TF-magnitude representation should not exceed 6.1 s. Otherwise the track will be split into multiple suitable durations. The LS-ISTFT requires both magnitude and phase of the TF representation for reconstruction. Ho we ver , the phonetic information of speech is mostly available in the magnitude. Therefore, only this magnitude y m is passed as input to the denoising network. For reconstruction, the noisy phase y p of the TF representation is used together with the denoised magnitude as shown in Fig. 2. I I I . M E T H O D In this section, the proposed adversarial approach for speech enhancement will be described. First, a brief explanation of traditional cGANs will be outlined, followed by the proposed framew ork titled acoustic-enhancement GAN (AeGAN). Ho w- ev er , in this initial work the AeGAN will be applied on a speech denoising task. An ov erview of the proposed approach is presented in Fig. 3. A. Conditional Generative Adversarial Networks In general, adversarial frameworks are a game-theoretical approach which pits multiple networks in direct competition with each other . More specifically , a cGAN framework con- sists of two deep con volutional neural networks (DCNNs), a generator G and a discriminator D [16]. The generator receiv es as input the magnitude of the 2D TF representation of the noisy speech. It attempts to eliminate the intrusiv e background noise by outputting the denoised TF-magnitude ˆ x = G ( y m ) . The main goal of the generator is to render ˆ x to be indistinguishable from the target ground-truth clean speech TF-magnitude x . Parallel to this process, the discriminator network is trained to directly oppose the generator . D acts as a binary classifier recei ving y m and either x or ˆ x as inputs and classifying which of them is synthetically generated and which is real. In other words, G attempts to produce a real- istically enhanced TF-magnitude to fool D , while conv ersely D constantly improves it’ s performance to better detect the generator’ s output as fake. This adversarial training setting driv es both networks to improv e their respecti ve performance until Nash’ s equilibrium is reached. This training procedure is expressed via the following min-max optimization task o ver the adversarial loss function L adv : min G max D L adv = min G max D E x,y m [ log D ( x, y m )] + E ˆ x,y m [ log (1 − D ( ˆ x, y m ))] (3) T o further improve the output of the generator and av oid visual artifacts, an additional L1 loss is utilized to enforce pixel-wise consistency between the generator output ˆ x and the ground-truth target [16]. The L1 loss is giv en by L L1 = E x, ˆ x [ k x − ˆ x k 1 ] (4) B. F eature-Based Loss The magnitude component of the speech TF representation has rich patterns directly reflecting human speech phonetics. A straightforward minimization of the pixel-wise discrepancy via L1 loss will result in a blurry TF-magnitude reconstruction which in turns will deteriorate the speech phonetics. T o overcome this issue, we propose the utilization of the feature-based loss inspired by [19] to regularize the generator network to produce globally consistent results by focusing on wider feature representations rather than individual pixels. This is achieved by utilizing the discriminator D as a trainable feature e xtractor to extract lo w and high-lev el feature repre- sentations. The feature-based loss is then calculated as the weighted average of the mean absolute error (MAE) of the extracted feature maps: L Percep = N X i =1 λ n k D n ( x ) − D n ( ˆ x ) k 1 (5) where D n is the feature map extracted from the n th layer of the discriminator . N and λ n are the total number of layers and the individual weights gi ven to each layer , respecti vely . C. Ar chitectural Details In our proposed AeGAN framew ork, a CasNet generator and a patch discriminator architecture are utilized [19]. CasNet concatenates three U-blocks in an end-to-end manner , whereas each U-block consists of a encoder-decoder architecture joint together via skip connections. These connections av oid the excessi ve loss of information due to the bottleneck layer . The output TF-magnitude representations are progressi vely refined as they propagate through the multiple encoder -decoder pairs. The architecture of each U-block is identical to that proposed in [16]. Regarding the patch discriminator , it divides the input TF-magnitude representations into smaller patches before proceeding with classifying each patch as real or fake. For the final classification score, all patch scores are averaged out. Ho we ver , unlike the 70 × 70 pixel patches recommended in [16], a patch size of 16 × 16 was found to produce better output results in our case. Fig. 3. An o vervie w of the proposed adversarial architecture for speech TF-magnitude denoising with relev ant losses. I V . E X P E R I M E N T S The proposed speech denoising framew ork is ev aluated on the TIMIT dataset [21]. This dataset consists of 10 phoneti- cally rich sentences spoken by 630 speakers with 8 different American English dialects. All tracks are sampled at 16 kHz and the track durations are between 0.9 s to 7 s. The majority of the tracks satisfies the aforementioned track length constraint in Sec. II. Only 15 tracks were found to exceed the 6.1 s limit and were excluded from the dataset for simplicity . In the training procedure, three dif ferent noise types were utilized (cafe, food court and home kitchen) from the QUT - TIMIT proposed in [22]. The background noise was added to the clean speech in order to create a paired training set. Additionally , dif ferent total SNR lev els were used for each noise type (0, 5 and 10 dB). Thus, the total training dataset consists of 36,000 paired tracks from 462 speakers of 6 different dialects. For validation, two different experiments were conducted. In the first experiment, the trained network was v alidated on a test set of 5000 tracks utilizing the same training noise types albeit from different 168 individuals using the whole 8 av ailable dialects. In the second experiment, the generalization capability of the network was in v estigated by validating on a dataset of 500 tracks from the test set corrupted by a new noise type, the city street noise. Both experiments were conducted using the same SNR values used in training. T o compare the performance of the proposed approach, quantitativ e comparisons were conduced against the FSEGAN and SEGAN [17], [18]. Additionally , traditional model-based approaches, W iener filter [5] and an optimized weighted- Euclidean Bayesian estimator [6], were utilized in the compar- ativ e study based on their open-source implementations 1 . All trainable models were trained using the same hyperparameters for 50 epochs to ensure a fair comparison. Multiple metrics were used for the comparison in order to giv e a wider scope of interpretation for the results. The utilized metrics are the perceptual ev aluation of speech quality (PSEQ) [23], the mean opinion score (MOS) prediction of the signal distortion ————————————————— 1 https://www .crcpress.com/do wnloads/K14513/K14513_CD_Files.zip Speech + City-street Noise T ime [s] Frequency [kHz] Speech + Cafe Noise (Input) FSEGAN SEGAN AeGAN Clean Speech (T ar get) Fig. 4. Qualitativ e comparison on the TF-magnitude of dif ferent learning-based speech denoising techniques. ( ) illustrates the frequency bands of different noise types. ( ) and ( ) shows the adv antages of our model under cafe noise. T ABLE I. Quantitati ve comparison of speech denoising techniques on test dataset with speech-like noise types. Model Noisy input W iener filter [5] Bayesian est. [6] FSEGAN [18] SEGAN [17] AeGAN T arget SNR (dB) SNR (dB) SNR (dB) SNR (dB) SNR (dB) SNR (dB) 0 5 10 0 5 10 0 5 10 0 5 10 0 5 10 0 5 10 PESQ 1.43 1.69 2.03 1.46 1.79 2.20 1.45 1.79 2.21 1.57 1.99 2.47 1.79 2.18 2.59 2.17 2.64 3.04 4.5 CSIG 2.23 2.75 3.29 1.79 2.43 3.04 1.81 2.41 2.98 2.50 3.12 3.69 2.83 3.34 3.78 3.36 3.86 4.28 5.0 CB AK 1.64 2.03 2.49 1.58 2.06 2.58 1.66 2.10 2.59 2.11 2.54 2.97 2.26 2.65 3.01 2.59 3.00 3.35 5.0 CO VL 1.72 2.14 2.61 1.45 1.98 2.53 1.47 1.98 2.51 1.97 2.52 3.06 2.23 2.71 3.16 2.74 3.24 3.66 5.0 STOI 0.65 0.77 0.86 0.63 0.76 0.86 0.60 0.73 0.83 0.72 0.82 0.89 0.79 0.86 0.91 0.82 0.89 0.93 1.0 ASR WER (%) 90.0 70.4 46.3 89.6 70.0 49.6 87.7 71.3 49.0 85.9 66.4 44.7 73.8 53.7 35.8 64.6 42.9 29.7 20.0 T ABLE II. Quantitati ve results for generalization on city-street noise. Model Noisy Input W iener filter [5] Bayesian est. [6] FSEGAN [18] SEGAN [17] AeGAN T arget SNR (dB) SNR (dB) SNR (dB) SNR (dB) SNR (dB) SNR (dB) 0 5 10 0 5 10 0 5 10 0 5 10 0 5 10 0 5 10 PESQ 1.46 1.73 2.13 1.72 2.11 2.58 1.81 2.22 2.69 1.72 2.19 2.70 1.81 2.22 2.64 2.47 2.90 3.27 4.5 CSIG 2.40 2.93 3.49 2.52 3.11 3.63 2.54 3.11 3.60 2.73 3.40 3.99 3.00 3.51 3.93 3.81 4.24 4.59 5.0 CB AK 1.55 1.97 2.49 1.83 2.34 2.88 1.99 2.48 2.98 2.25 2.71 3.16 2.29 2.71 3.09 2.84 3.22 3.57 5.0 CO VL 1.79 2.23 2.75 1.96 2.50 3.03 2.03 2.56 3.08 2.16 2.76 3.33 2.32 2.81 3.26 3.12 3.56 3.94 5.0 STOI 0.72 0.80 0.87 0.72 0.80 0.88 0.70 0.78 0.85 0.75 0.84 0.89 0.81 0.88 0.92 0.86 0.90 0.94 1.0 ASR WER (%) 85.6 64.7 45.4 78.0 58.2 38.9 75.5 56.8 40.5 81.2 62.3 43.0 70.2 51.3 34.7 50.1 39.4 27.1 20.0 (CSIG), the MOS prediction of background noise (CBAK) and the overall MOS prediction score (CO VL) [24]. T o give an indication of human speech intelligibility , the short-time objectiv e intelligibility measure (STOI) was utilized [25]. Additionally , the WER was e v aluated using the Deep Speech pre-trained ASR model [26]. V . R E S U LT S A N D D I S C U S S I O N First a qualitative comparison of the TF-magnitude rep- resentation of different methods is illustrated. As shown in Fig. 4, the AeGAN is superior in cancelling the low power components of the background noise in comparison to FSEGAN as annotated by ( ). In contrast to the AeGAN, the SEGAN model shows a clear elimination of some speech intervals as annotated by ( ). Regarding the quantitative analysis, we present the metric scores of the noisy input tracks as a comparison baseline. Also the metric scores of the ground-truth target clean tracks are presented as an indicator of the maximal achiev able performance. All scores are a veraged ov er the different noise types. In T able I, the results of the first experiment is presented. In this experiment, the test tracks were based on speech- like noise types (cafe and food-court noise). Hence, the noise distribution is dif ficult to distinguish from the target speech segments. The model-based approaches resulted in minor or no speech improvements compared to the baseline noisy input. W e hypothesize that this is due to the fact that the distribution of the speech-like noise occupies the same frequency bands as the speech signals. Thus, the model-based approaches fail to distinguish the speech from the noisy background in case of speech-like corruption. Re garding the learning-based approaches, the proposed AeGAN framew ork outperforms both the FSEGAN and SEGAN models. For instance, AeGAN results in a WER of 29.7% for SNR 10 dB compared to 35.8% and 44.7% for SEGAN and FSEGAN, respectively . T o illustrate the generalization capability of the proposed framew ork, an additional comparativ e study is presented in T able II based on validating the trained models on a new noise type (city-street noise). This noise can be considered as a less challenging noise compared to the aforementioned speech-like noises because it occupies a narrower frequency band as annotated by ( ) in Fig. 4. Accordingly , the model- based approaches resulted in a more noticeable improv ement compared to the noisy input baseline. More specifically , the more recent Bayesian estimator outperformed the traditional W iener filter across the objective metrics. FSEGAN resulted in an enhanced performance in the objecti ve metrics with slight deterioration in the PESQ and WER compared to the model-based approaches. Finally , the SEGAN and AeGAN are quantitativ ely superior across all utilized metrics with AeGAN enhancing the WER by 20.1% compared to SEGAN in the 0 dB case. This illustrates that the learning-based approaches result in a significant impro vement in speech denoising per- formance with robust generalization to never seen noise types, especially SEGAN and the proposed AeGAN. Con ventionally , deep-learning approaches face a significant challenge in collecting a large enough number of labeled train- ing samples, i.e. paired (clean and noisy) samples. Howe ver , in the case of speech denoising this is easily bypassed by the av ailability of accessible audio and noise datasets that can be superimposed with the required SNR. It must also be pointed that in literature the FSEGAN authors claim a better performance in WER ov er the SEGAN model. Howe ver , this has not been observed in the abov e results. W e hypothesize that this is the result of FSEGAN now having to deal with variable time resolution input TF- magnitude representations, due to the utilized dynamic time resolution, which posses a challenge compared to the SEGAN. Howe v er , this work is not without limitation. In the future, we plan to e xtend the current comparati ve studies to include more recent model-based approaches for speech denoising such as [27], [28]. In addition to applying some subjective ev aluation tests.W e also plan to extend the AeGAN framework to accommodate dif ferent non-speech audio signals (e.g. music denoising) and other enhancement tasks such as dereverbera- tion and interference cancellation. V I . C O N C L U S I O N In this work, an adversarial speech denoising technique is introduced to operate on speech TF-magnitude representations. The proposed approach inv olves an additional feature-based loss and a CasNet generator architecture to enhance detailed local features of speech in the TF domain. Moreover , to improv e the inference efficiency , time-domain tracks with variable durations are embedded in a fixed TF-magnitude representation by changing the corresponding time resolution. Challenging speech-lik e noise types, e.g. cafe and food court noise, were in volv ed in training under lo w SNR conditions. T o ev aluate the generalization capability of our model, two experiments were conducted on dif ferent speakers and noise types. The proposed approach exhibits a significantly enhanced performance in comparison to the pre viously introduced GAN- based and traditional model-based approaches. R E F E R E N C E S [1] M. Berouti et al., “Enhancement of speech corrupted by acoustic noise, ” in IEEE International Conference on Acoustics, Speec h, and Signal Pr ocessing (ICASSP) , April 1979, vol. 4, pp. 208–211. [2] S. Kamath and P . Loizou, “ A multi-band spectral subtraction method for enhancing speech corrupted by colored noise, ” in IEEE Inter- national Confer ence on Acoustics, Speech, and Signal Pr ocessing (ICASSP) , May 2002, vol. 4, pp. 4164–4164. [3] N. Li et al., “Factors influencing intelligibility of ideal binary- masked speech: Implications for noise reduction, ” The Journal of the Acoustical Society of America , vol. 123, no. 3, pp. 1673–1682, 2008. [4] D. W ang, “Time-frequency masking for speech separation and its potential for hearing aid design, ” Tr ends in amplification , vol. 12, no. 4, pp. 332–353, 2008. [5] P . Scalart and J. V . Filho, “Speech enhancement based on a priori signal to noise estimation, ” in IEEE International Conference on Acoustics, Speech, and Signal Pr ocessing Confer ence Pr oceedings (ICASSP) , May 1996, vol. 2, pp. 629–632. [6] P . C. Loizou, “Speech enhancement based on perceptually motiv ated bayesian estimators of the magnitude spectrum, ” IEEE T ransactions on Speech and Audio Pr ocessing , vol. 13, no. 5, pp. 857–869, Sep. 2005. [7] X. Lu et al., “Speech enhancement based on deep denoising autoencoder , ” in Interspeech , Aug. 2013, pp. 436–440. [8] X. Feng, Y . Zhang, and J. Glass, “Speech feature denoising and derev erberation via deep autoencoders for noisy reverberant speech recognition, ” in IEEE International Conference on Acoustics, Speech and Signal Pr ocessing (ICASSP) , May 2014, pp. 1759–1763. [9] F . W eninger et al., “Speech enhancement with lstm recurrent neural networks and its application to noise-robust asr , ” in International Confer ence on Latent V ariable Analysis and Signal Separation . Springer , Aug. 2015, pp. 91–99. [10] Y . T u et al., “ A hybrid approach to combining con ventional and deep learning techniques for single-channel speech enhancement and recognition, ” in IEEE International Conference on Acoustics, Speech and Signal Pr ocessing (ICASSP) , April 2018, pp. 2531–2535. [11] D. Rethage, J. Pons, and X. Serra, “ A W a veNet for speech denois- ing, ” in IEEE International Confer ence on Acoustics, Speech and Signal Pr ocessing (ICASSP) , April 2018, pp. 5069–5073. [12] I. Goodfellow et al., “Generativ e adversarial nets, ” in Advances in Neural Information Pr ocessing Systems , pp. 2672–2680. 2014. [13] M. Mirza and S. Osindero, “Conditional generati ve adversarial nets, ” CoRR , vol. abs/1411.1784, 2014. [14] K. Armanious et al., “Unsupervised Medical Image T ranslation Using Cycle-MedGAN, ” in 27th Eur opean Signal Pr ocessing Con- fer ence (EUSIPCO) , Sep. 2019, pp. 3642–3646. [15] K. Armanious et al., “ An Adversarial Super-Resolution Remedy for Radar Design Trade-of fs, ” in 27th Eur opean Signal Pr ocessing Confer ence (EUSIPCO) , Sep. 2019, pp. 3642–3646. [16] P . Isola et al., “Image-to-Image translation with conditional ad- versarial networks, ” in IEEE Conference on Computer V ision and P attern Recognition (CVPR) , July 2017, pp. 5967–5976. [17] S. Pascual, A. Bonafonte, and J. Serrà, “SEGAN: Speech enhance- ment generativ e adversarial network, ” in Interspeech , 2017, pp. 3642–3646. [18] C. Donahue, B. Li, and R. Prabhav alkar , “Exploring speech en- hancement with generati ve adversarial networks for robust speech recognition, ” in IEEE International Conference on Acoustics, Speech and Signal Pr ocessing (ICASSP) , April 2018, pp. 5024–5028. [19] K. Armanious et al., “MedGAN: Medical image translation using gans, ” Computerized Medical Imaging and Graphics , vol. 79, 2020. [20] B. Y ang, “ A study of in verse short-time Fourier transform, ” in IEEE International Confer ence on Acoustics, Speech and Signal Pr ocessing (ICASSP) , March 2008, pp. 3541–3544. [21] J. S. Garofolo et al., “TIMIT acoustic-phonetic continuous speech corpus, ” Linguistic Data Consortium , 1992. [22] D. B. Dean et al., “The QUT -NOISE-TIMIT corpus for the ev al- uation of voice activity detection algorithms, ” in Interspeech , Sep. 2010. [23] A. W . Rix et al., “Perceptual e valuation of speech quality (PESQ)-a new method for speech quality assessment of telephone networks and codecs, ” in IEEE International Confer ence on Acoustics, Speech, and Signal Processing (ICASSP) , May 2001, v ol. 2, pp. 749– 752. [24] Y . Hu and P . C. Loizou, “Evaluation of objectiv e quality measures for speech enhancement, ” IEEE T ransactions on A udio, Speech, and Language Pr ocessing , v ol. 16, no. 1, pp. 229–238, Jan. 2008. [25] C. H. T aal et al., “ A short-time objectiv e intelligibility measure for time-frequency weighted noisy speech, ” in IEEE International Confer ence on Acoustics, Speech and Signal Processing , March 2010, pp. 4214–4217. [26] A. Hannun et al., “Deep speech: Scaling up end-to-end speech recognition, ” 2014. [27] M. Fujimoto, S. W atanabe, and T . Nakatani, “Noise suppression with unsupervised joint speaker adaptation and noise mixture model estimation, ” in IEEE International Confer ence on Acoustics, Speech and Signal Pr ocessing (ICASSP) , March 2012, pp. 4713–4716. [28] G. Enzner and P . Thüne, “Bayesian MMSE filtering of noisy speech by SNR marginalization with global PSD priors, ” IEEE/A CM T ransactions on Audio, Speech, and Language Processing , vol. 26, no. 12, pp. 2289–2304, Dec. 2018.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment