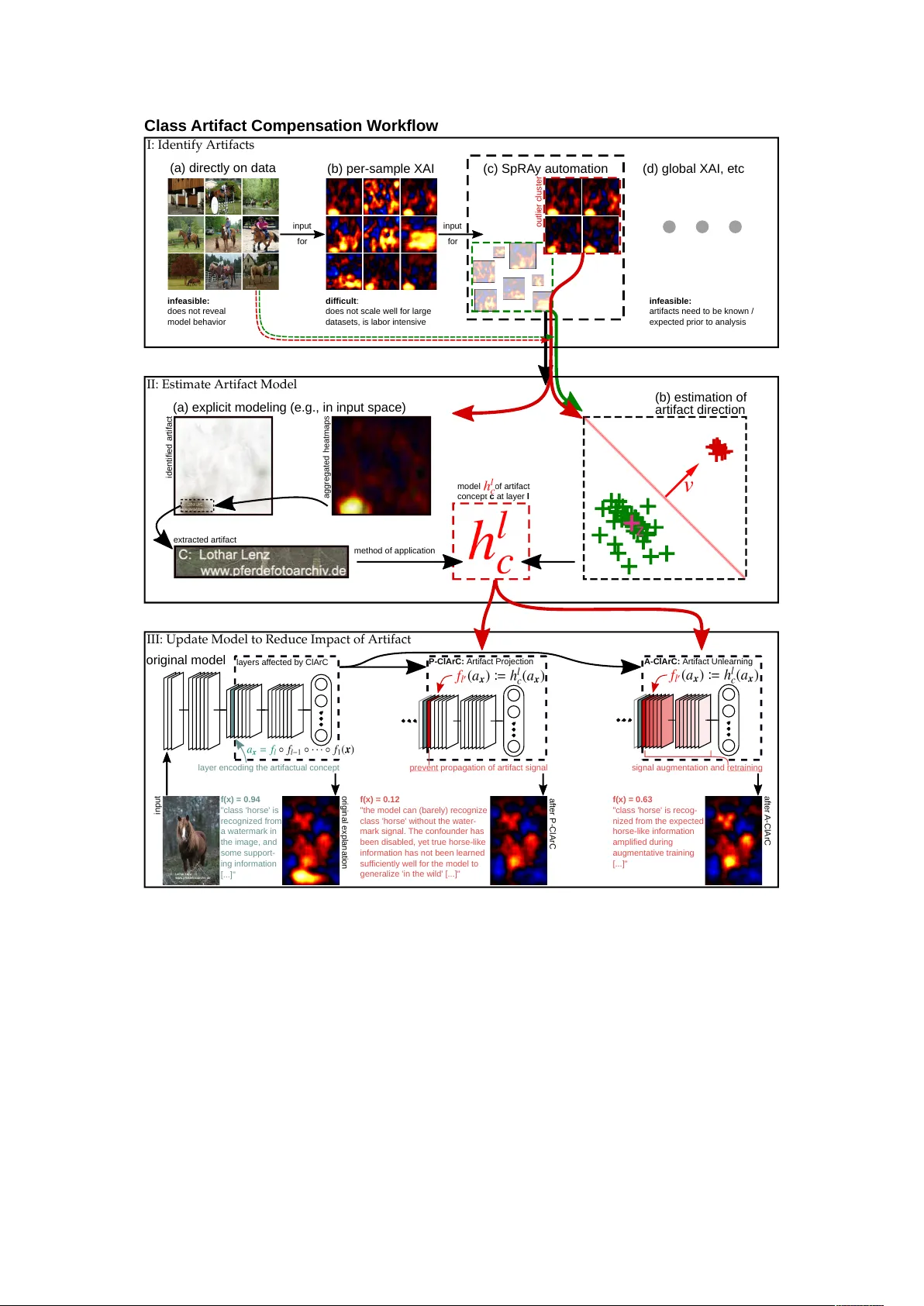

Finding and Removing Clever Hans: Using Explanation Methods to Debug and Improve Deep Models

Contemporary learning models for computer vision are typically trained on very large (benchmark) datasets with millions of samples. These may, however, contain biases, artifacts, or errors that have gone unnoticed and are exploitable by the model. In…

Authors: Christopher J. Anders, Leander Weber