Robust and Adaptive Sequential Submodular Optimization

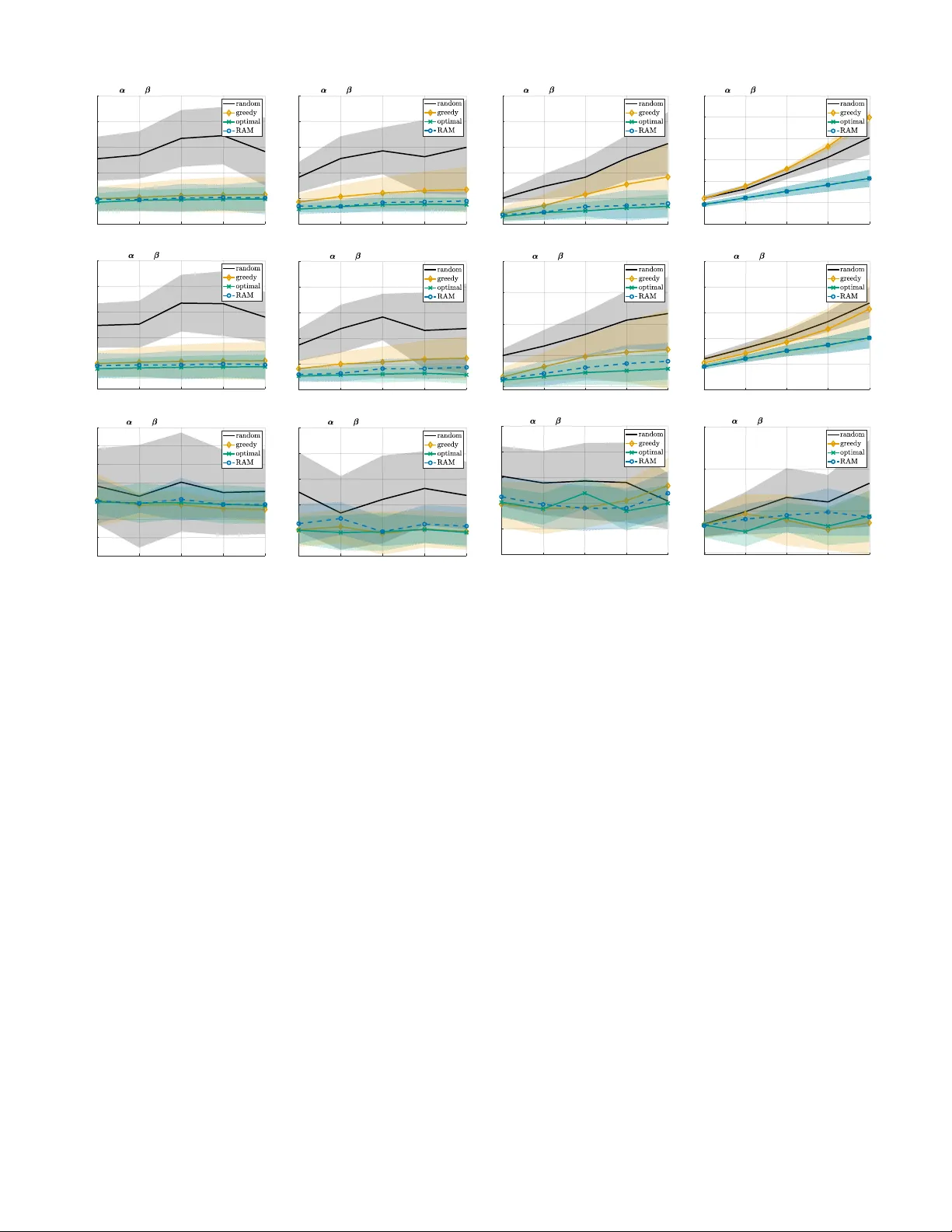

Emerging applications of control, estimation, and machine learning, ranging from target tracking to decentralized model fitting, pose resource constraints that limit which of the available sensors, actuators, or data can be simultaneously used across…

Authors: Vasileios Tzoumas, Ali Jadbabaie, George J. Pappas