Private Set Intersection: A Multi-Message Symmetric Private Information Retrieval Perspective

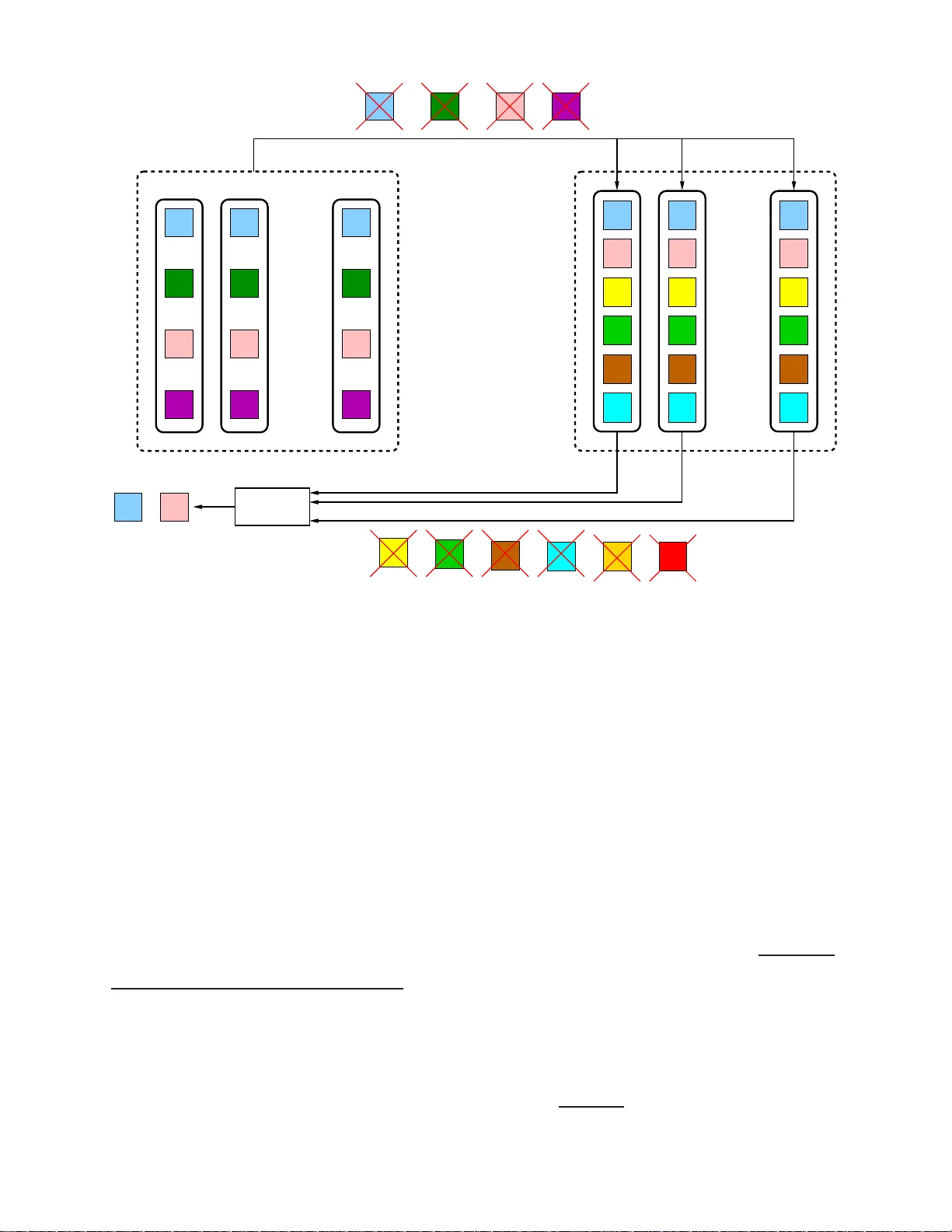

We study the problem of private set intersection (PSI). In this problem, there are two entities $E_i$, for $i=1, 2$, each storing a set $\mathcal{P}_i$, whose elements are picked from a finite field $\mathbb{F}_K$, on $N_i$ replicated and non-colludi…
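The setup can be made concrete with a small, non-private sketch. For illustration only, it assumes that the $K$ elements of $\mathbb{F}_K$ are labeled $0, \dots, K-1$ and that each set $\mathcal{P}_i$ is encoded as a length-$K$ binary incidence vector. The helper names `incidence_vector` and `intersection_from_vectors` are hypothetical. The code shows only what the entities want to learn, namely $\mathcal{P}_1 \cap \mathcal{P}_2$, and does not implement any private retrieval scheme.

```python
# Hypothetical, NON-PRIVATE illustration of the PSI setup.
# Assumption: the K elements of the field F_K are labeled 0..K-1, and each
# set P_i is encoded as a length-K 0/1 incidence vector. The intersection is
# then the set of positions where both vectors are 1 (elementwise AND).


def incidence_vector(subset: set[int], K: int) -> list[int]:
    """Return the length-K 0/1 vector with a 1 at position x iff x is in subset."""
    if any(not 0 <= x < K for x in subset):
        raise ValueError("elements must lie in {0, ..., K-1}")
    return [1 if x in subset else 0 for x in range(K)]


def intersection_from_vectors(v1: list[int], v2: list[int]) -> set[int]:
    """Recover P_1 ∩ P_2 as the positions where both incidence vectors are 1."""
    return {x for x, (a, b) in enumerate(zip(v1, v2)) if a and b}


K = 7  # example: a field with K = 7 elements
P1 = {0, 2, 3, 5}  # set held by entity E_1
P2 = {2, 4, 5, 6}  # set held by entity E_2
X1 = incidence_vector(P1, K)
X2 = incidence_vector(P2, K)
print(X1)  # [1, 0, 1, 1, 0, 1, 0]
print(X2)  # [0, 0, 1, 0, 1, 1, 1]
print(sorted(intersection_from_vectors(X1, X2)))  # [2, 5]
```

In a private protocol, neither entity would simply hand over its vector as done here. The sketch only fixes the notation for what is being computed.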

Authors: Zhusheng Wang, Karim Banawan, Sennur Ulukus