i3DMM: Deep Implicit 3D Morphable Model of Human Heads

We present the first deep implicit 3D morphable model (i3DMM) of full heads. Unlike earlier morphable face models it not only captures identity-specific geometry, texture, and expressions of the frontal face, but also models the entire head, including hair. We collect a new dataset consisting of 64 people with different expressions and hairstyles to train i3DMM. Our approach has the following favorable properties: (i) It is the first full head morphable model that includes hair. (ii) In contrast to mesh-based models it can be trained on merely rigidly aligned scans, without requiring difficult non-rigid registration. (iii) We design a novel architecture to decouple the shape model into an implicit reference shape and a deformation of this reference shape. With that, dense correspondences between shapes can be learned implicitly. (iv) This architecture allows us to semantically disentangle the geometry and color components, as color is learned in the reference space. Geometry is further disentangled as identity, expressions, and hairstyle, while color is disentangled as identity and hairstyle components. We show the merits of i3DMM using ablation studies, comparisons to state-of-the-art models, and applications such as semantic head editing and texture transfer. We will make our model publicly available.

💡 Research Summary

The paper introduces i3DMM, the first deep implicit 3D morphable model that captures the full human head, including hair. Traditional 3D morphable models (3DMMs) are mesh‑based, rely on a fixed template, and require dense non‑rigid registration of training scans. This makes it extremely difficult to model the whole head, especially the hair region, which lacks reliable correspondences. i3DMM overcomes these limitations by representing surfaces with a signed distance function (SDF) learned through a neural network, thereby eliminating the need for explicit mesh registration.

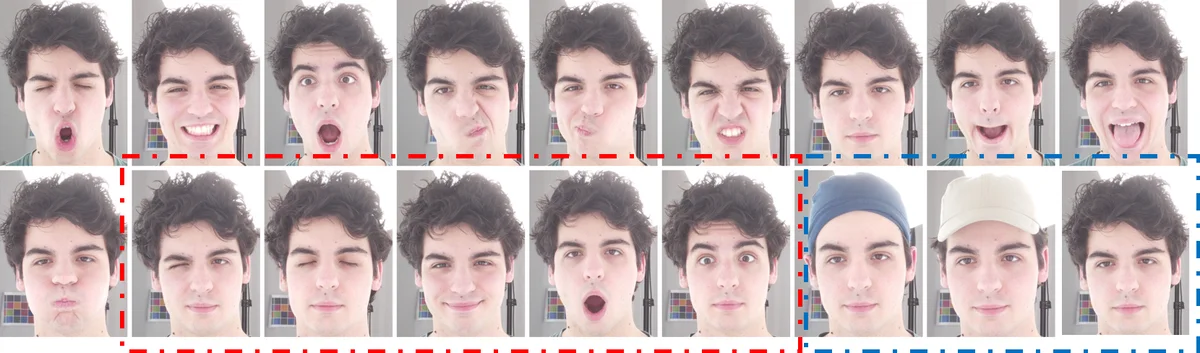

A new dataset of 64 subjects is collected using a high‑resolution multi‑view photogrammetry system (137 cameras). Each subject is captured in 10 facial expressions and 3–4 hairstyles (open hair, tied hair, two caps). After semi‑automatic landmark annotation, the scans are rigidly aligned, cropped to the head region, and watertight holes at the neck are closed. The authors then generate training triplets (query point x, signed distance s, color c) by uniformly sampling the mesh surface and densely sampling around eyes, nose, and mouth. Points are perturbed with a small Gaussian noise to obtain interior and exterior samples.

The core of i3DMM consists of three neural components: (1) a Reference Shape Network (RefNet) that learns a single mean head shape as an implicit SDF, (2) a Shape Deformation Network (DeformNet) that predicts a 3‑D displacement δ for any query point conditioned on a geometry latent code, and (3) a Color Network (ColorNet) that predicts surface color conditioned on a color latent code. All networks use fully‑connected layers with ReLU activations and sinusoidal positional encoding of the input coordinates. The final signed distance for a specific head is computed as s(x, z_geo) = f_r(x + δ), where δ = DeformNet(x, z_geo). This formulation enables implicit dense correspondence between any scan and the reference shape without any ground‑truth correspondences, only sparse facial and ear landmarks are required during training.

Disentanglement is achieved by splitting latent spaces: geometry latent z_geo = (z_id, z_ex, z_hair) encodes identity, expression, and hairstyle; color latent z_col = (z_id, z_hair) encodes identity and hairstyle color. Identity codes are learned per subject (58 unique identities), expression codes are shared across all subjects (10 expressions), and hairstyle codes are shared across subjects (4 geometry hairstyles, 3 color hairstyles). This design allows semantic control: one can change expression while keeping identity and hair fixed, swap hairstyles, or edit color independently.

Training optimizes a combination of (i) SDF L2 loss, (ii) color L2 loss, and (iii) an Eikonal regularizer enforcing ∥∇f∥≈1, with higher weighting for points near the surface to preserve fine details.

Experimental evaluation shows that i3DMM significantly outperforms state‑of‑the‑art mesh‑based head models (e.g., FLAME, LYHM) in reconstruction error, especially in the hair region where traditional models cannot represent geometry. Ablation studies confirm that (a) the reference‑shape/deformation split is crucial for learning correspondences, (b) multi‑latent disentanglement improves editing capabilities, and (c) sinusoidal encoding contributes to higher fidelity.

The authors demonstrate several applications: (1) semantic head editing, where identity, expression, and hairstyle can be independently manipulated to synthesize novel heads; (2) texture transfer, where the learned dense correspondences enable accurate mapping of hair and skin textures from one scan to another; (3) one‑shot segmentation and landmark extraction, where a single fitted i3DMM provides 3‑D facial landmarks and a segmentation mask for the whole head without additional annotation.

Limitations include the relatively small dataset (64 subjects), which restricts diversity in ethnicity, age, and hair styles, and the presence of noise in hair scans that still hampers the reconstruction of the finest hair strands. The current model is static; extending it to dynamic sequences (4‑D) to capture hair motion or temporal expression changes is left for future work. Moreover, integrating image or video inputs to infer latent codes directly (an inversion network) would broaden practical applicability.

In summary, i3DMM introduces a novel paradigm for full‑head modeling: an implicit SDF backbone combined with a reference‑shape plus deformation architecture and semantically disentangled latent spaces. It eliminates the need for dense non‑rigid registration, achieves higher reconstruction accuracy, and provides unprecedented editing flexibility for the entire head, including hair. The work paves the way toward more comprehensive, data‑driven 3‑D human models and opens several avenues for future research in larger datasets, dynamic modeling, and multimodal inference.

Comments & Academic Discussion

Loading comments...

Leave a Comment