Towards Robust Deep Neural Networks for Affect and Depression Recognition from Speech

Intelligent monitoring systems and affective computing applications have emerged in recent years to enhance healthcare. Examples of these applications include assessment of affective states such as Major Depressive Disorder (MDD). MDD describes the c…

Authors: Alice Othmani, Daoud Kadoch, Kamil Bentounes

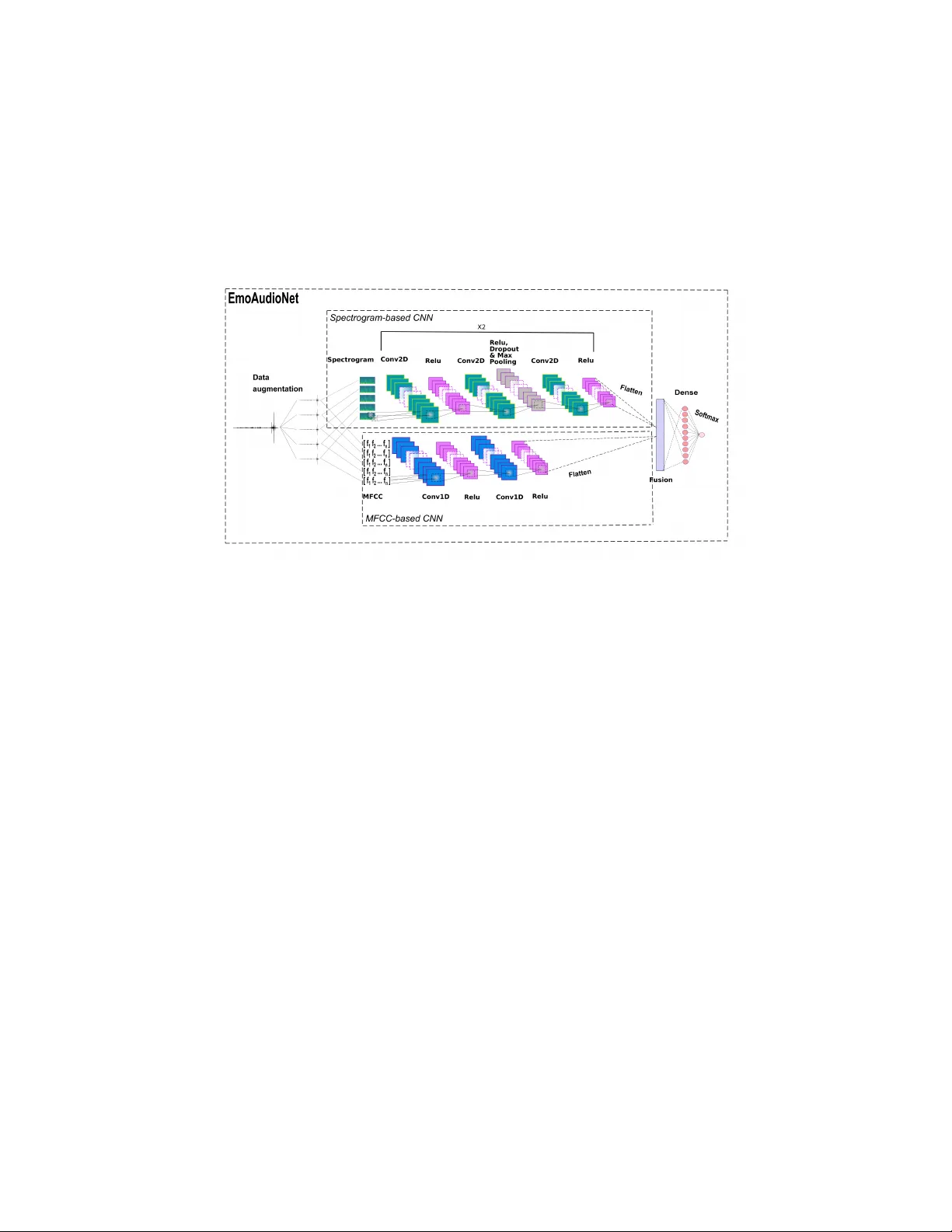

Alice Othmani¹ [0000-0002-3442-0578], Daoud Kadoch², Kamil Bentounes², Emna Rejaibi³, Romain Alfred⁴, and Abdenour Hadid⁵

¹ University of Paris-Est Créteil, Vitry sur Seine, France, alice.othmani@u-pec.fr
² Sorbonne University, Paris, France
³ INSAT, Tunis, Tunisia
⁴ ENSIIE, Évry, France
⁵ Polytechnic University of Hauts-de-France, Valenciennes, France

Abstract. Intelligent monitoring systems and affective computing applications have emerged in recent years to enhance healthcare. Examples of these applications include assessment of affective states such as Major Depressive Disorder (MDD). MDD describes the constant expression of certain emotions: negative emotions (low Valence) and lack of interest (low Arousal). High-performing intelligent systems would enhance MDD diagnosis in its early stages. In this paper, we present a new deep neural network architecture, called EmoAudioNet, for emotion and depression recognition from speech. Deep EmoAudioNet learns from the time-frequency representation of the audio signal and the visual representation of its spectrum of frequencies. Our model shows very promising results in predicting affect and depression. It performs similarly to or outperforms the state-of-the-art methods according to several evaluation metrics on the RECOLA and DAIC-WOZ datasets in predicting arousal, valence, and depression. Code of EmoAudioNet is publicly available on GitHub: https://github.com/AliceOTHMANI/EmoAudioNet

Keywords: Emotional Intelligence · Socio-Affective Computing · Depression Recognition · Speech Emotion Recognition · Healthcare application · Deep learning.

1 Introduction

Artificial Emotional Intelligence (EI) or affective computing has attracted increasing attention from the scientific community.
Affective computing consists of endowing machines with the ability to recognize, interpret, process and simulate human affects. Giving machines skills of emotional intelligence is an important key to enhance healthcare and further boost the medical assessment of several mental disorders.

Affect describes the experience of a human's emotion resulting from an interaction with stimuli. Humans express an affect through facial, vocal, or gestural behaviors. A happy or angry person will typically speak louder and faster, with strong frequencies, while a sad or bored person will speak slower with low frequencies. Emotional arousal and valence are the two main dimensional affects used to describe emotions. Valence describes the level of pleasantness, while arousal describes the intensity of excitement. Another way to measure a user's affective state is to ask questions and to identify emotions during an interaction. Several post-interaction questionnaires exist for measuring affective states, such as the Patient Health Questionnaire 9 (PHQ-9) for depression recognition and assessment. The PHQ is a self-report questionnaire of nine clinical questions where a score ranging from 0 to 23 is assigned to describe the Major Depressive Disorder (MDD) severity level. MDD is a mental disease which affects more than 300 million people in the world [1], i.e., 3% of the worldwide population. The psychiatric taxonomy classifies MDD among the low moods [2], i.e., a condition characterised by tiredness and a global physical, intellectual, social and emotional slow-down. As a result, the speech of depressive subjects is slowed, the pauses between two utterances are lengthened and the tone of the voice (prosody) is more monotonous.

In this paper, a new deep neural network architecture, called EmoAudioNet, is proposed and evaluated for real-life affect and depression recognition from speech.

The remainder of this article is organised as follows. Section 2 introduces related works on affect and depression recognition from speech. Section 3 introduces the motivations behind this work. Section 4 describes the details of the overall proposed method. Section 5 describes the experiments and the extensive experimental results. Finally, the conclusion and future work are presented in Section 6.

2 Related work

Several approaches are reported in the literature for affect and depression recognition from speech. These methods can be generally categorized into two groups: hand-crafted features-based approaches and deep learning-based approaches.

2.1 Handcrafted features-based approaches

In this family of approaches, there are two main steps: feature extraction and classification. An overview of handcrafted features-based approaches for affect and depression assessment from speech is presented in Table 1.

Handcrafted features. Acoustic Low-Level Descriptors (LLD) are extracted from the audio signal. These LLD are grouped into four main categories: the spectral LLD (Harmonic Model and Phase Distortion Mean (HMPDM0-24), etc.), the cepstral LLD (Mel-Frequency Cepstral Coefficients (MFCC) [3, 13], etc.), the prosodic LLD (Formants [21], etc.), and the voice quality LLD (Jitter and Shimmer [14], etc.). A set of statistical features is also calculated (max, min, variance and standard deviation of LLD [4, 12]). Low et al. [16] experiment with features based on the Teager Energy Operator (TEO). A comparison of the performances of the prosodic, spectral, glottal (voice quality), and TEO features for depression recognition is carried out in [16] and it demonstrates that the different features have similar accuracies. The fusion of the prosodic LLD and the glottal LLD based models does not seem to significantly improve the results, and in some cases decreases them.
However, the addition of the TEO features improves the performances by up to +31.35% for depressive male subjects.

Classification of handcrafted features. Comparative analysis of the performances of several classifiers in depression assessment and prediction indicates that a hybrid classifier using Gaussian Mixture Models (GMM) and a Support Vector Machines (SVM) model gave the best overall classification results [6, 16]. Different fusion methods, namely feature, score and decision fusion, have also been investigated in [6], and it has been demonstrated that: first, amongst the fusion methods, score fusion performed better when combined with GMM, HFS and MLP classifiers; second, decision fusion worked best for SVM (both for raw data and GMM models); and finally, feature fusion exhibited weak performance compared to the other fusion methods.

2.2 Deep learning-based approaches

Recently, approaches based on deep learning have been proposed [8, 23–30]. Several handcrafted features are extracted from the audio signals and fed to the deep neural networks, except in Jain [27] where only the MFCC are considered. In other approaches, raw audio signals are fed to deep neural networks [19]. An overview of deep learning-based methods for affect and depression assessment from speech is presented in Table 2.

Several deep neural networks have been proposed. Some deep architectures are based on feed-forward neural networks [11, 20, 24], some others are based on convolutional neural networks such as [27] and [8], whereas some others are based on recurrent neural networks such as [13] and [23]. A comparative study [25] of some neural networks, BLSTM-MIL, BLSTM-RNN, BLSTM-CNN, CNN, DNN-MIL and DNN, demonstrates that the BLSTM-MIL outperforms the other studied architectures.
In Jain [27], by contrast, the Capsule Network is shown to be the most efficient architecture, compared to the BLSTM with Attention mechanism, CNN and LSTM-RNN. For the assessment of the level of depression using the Patient Health Questionnaire 8 (PHQ-8), Yang et al. [8] use a DCNN. To the best of our knowledge, their approach outperforms all the existing approaches on the DAIC-WOZ dataset.

Table 1: Overview of Shallow Learning based methods for Affect and Depression Assessment from Speech. (*) Results obtained over a group of Females.

| Ref | Features | Classification | Dataset | Metrics | Value |
|---|---|---|---|---|---|
| Valstar et al. [3] | prosodic + voice quality + spectral | SVM + grid search + random forest | DAIC-WOZ | F1-score | 0.410 (0.582) |
| | | | | Precision | 0.267 (0.941) |
| | | | | Recall | 0.889 (0.421) |
| | | | | RMSE (MAE) | 7.78 (5.72) |
| Dhall et al. [14] | energy + spectral + voicing quality + duration features | non-linear chi-square kernel | AFEW 5.0 | unavailable | unavailable |
| Ringeval et al. [4] | prosodic LLD + voice quality + spectral | random forest | SEWA | RMSE | 7.78 |
| | | | | MAE | 5.72 |
| Haq et al. [15] | energy + prosodic + spectral + duration features | Sequential Forward Selection + Sequential Backward Selection + linear discriminant analysis + Gaussian classifier uses Bayes decision theory | Natural speech databases | Accuracy | 66.5% |
| Jiang et al. [5] | MFCC + prosodic + spectral LLD + glottal features | ensemble logistic regression model for detecting depression E algorithm | hand-crafted dataset | Males accuracy | 81.82% (70.19%*) |
| | | | | Males sensitivity | 78.13% (79.25%*) |
| | | | | Males specificity | 85.29% (70.59%*) |
| Low et al. [16] | Teager energy operator based features | Gaussian mixture model + SVM | hand-crafted dataset | Males accuracy | 86.64% (78.87%*) |
| | | | | Males sensitivity | 80.83% (80.64%*) |
| | | | | Males specificity | 92.45% (77.27%*) |
| Alghowinem et al. [6] | energy + formants + glottal features + intensity + MFCC + prosodic + spectral + voice quality | Gaussian mixture model + SVM + decision fusion | hand-crafted dataset | Accuracy | 91.67% |
| Valstar et al. [7] | duration features + energy local min/max related functionals + spectral + voicing quality | correlation based feature selection + SVR + 5-fold cross-validation loop | AViD-Corpus | RMSE | 14.12 |
| | | | | MAE | 10.35 |
| Valstar et al. [17] | duration features + energy local min/max related functionals + spectral + voicing quality | SVR | AVEC2014 | RMSE | 11.521 |
| | | | | MAE | 8.934 |
| Cummins et al. [9] | MFCC + prosodic + spectral centroid | SVM | AVEC2013 | Accuracy | 82% |
| Lopez Otero et al. [10] | energy + MFCC + prosodic + spectral | SVR | AVDLC | RMSE (MAE) | 8.88 (7.02) |
| Meng et al. [18] | spectral + energy + MFCC + functionals features + duration features | PLS regression | AVEC2013 | RMSE | 11.54 |
| | | | | MAE | 9.78 |
| | | | | CORR | 0.42 |

Table 2: Overview of Deep Learning based methods for Affect and Depression Assessment from Speech.

| Ref | Features | Classification | Dataset | Metrics | Value |
|---|---|---|---|---|---|
| Yang et al. [8] | spectral LLD + cepstral LLD + prosodic LLD + voice quality LLD + statistical functionals + regression functionals | DCNN | DAIC-WOZ | Depressed female RMSE | 4.590 |
| | | | | Depressed female MAE | 3.589 |
| | | | | Not depressed female RMSE | 2.864 |
| | | | | Not depressed female MAE | 2.393 |
| | | | | Depressed male RMSE | 1.802 |
| | | | | Depressed male MAE | 1.690 |
| | | | | Not depressed male RMSE | 2.827 |
| | | | | Not depressed male MAE | 2.575 |
| Al Hanai et al. [23] | spectral LLD + cepstral LLD + prosodic LLD + voice quality LLD + functionals | LSTM-RNN | DAIC | F1-score | 0.67 |
| | | | | Precision | 1.00 |
| | | | | Recall | 0.50 |
| | | | | RMSE | 10.03 |
| | | | | MAE | 7.60 |
| Dham et al. [24] | prosodic LLD + voice quality LLD + functionals + BoTW | FF-NN | AVEC2016 | RMSE | 7.631 |
| | | | | MAE | 6.2766 |
| Salekin et al. [25] | spectral LLD + MFCC + functionals | NN2Vec + BLSTM-MIL | DAIC-WOZ | F1-score | 0.8544 |
| | | | | Accuracy | 96.7% |
| Yang et al. [26] | spectral LLD + cepstral LLD + prosodic LLD + voice quality LLD + functionals | DCNN-DNN | DAIC-WOZ | Female RMSE | 5.669 |
| | | | | Female MAE | 4.597 |
| | | | | Male RMSE | 5.590 |
| | | | | Male MAE | 5.107 |
| Jain [27] | MFCC | Capsule Network | VCTK corpus | Accuracy | 0.925 |
| Chao et al. [28] | spectral LLD + cepstral LLD + prosodic LLD | LSTM-RNN | AVEC2014 | unavailable | unavailable |
| Gupta et al. [29] | spectral LLD + cepstral LLD + prosodic LLD + voice quality LLD + functionals | DNN | AViD-Corpus | unavailable | unavailable |
| Kang et al. [30] | spectral LLD + prosodic LLD + articulatory features | DNN (SRI's submitted system to AVEC2014, median-way score-level fusion) | AVEC2014 | RMSE | 7.37 |
| | | | | MAE | 5.87 |
| | | | | Pearson's Product Moment Correlation coefficient | 0.800 |
| Tzirakis et al. [36] | raw signal | CNN and 2-layers LSTM | RECOLA | loss function based on CCC | .440 (arousal), .787 (valence) |
| Tzirakis et al. [19] | raw signal | CNN and LSTM | RECOLA | CCC | .686 (arousal), .261 (valence) |
| Tzirakis et al. [22] | raw signal | CNN | RECOLA | CCC | .699 (arousal), .311 (valence) |

3 Motivations and Contributions

Short-time spectral analysis is the most common way to characterize the speech signal using MFCCs. However, audio signals in their time-frequency representations often present interesting patterns in the visual domain [31]. The visual representation of the spectrum of frequencies of a signal using its spectrogram shows a set of specific repetitive patterns. Surprisingly, and to the best of our knowledge, no deep neural network architecture that combines information from the time, frequency and visual domains for emotion recognition has been reported in the literature.

The first contribution of this work is a new deep neural network architecture, called EmoAudioNet, that aggregates responses from a short-time spectral analysis and from time-frequency audio texture classification, and that extracts deep feature representations in a learned embedding space. As a second contribution, we propose an EmoAudioNet-based approach for instantaneous prediction of spontaneous and continuous emotions from speech.
In particular, our specific contributions are as follows: (i) an automatic clinical depression recognition and assessment embedding network; (ii) a small size two-stream CNN to map audio data into two types of continuous emotional dimensions, namely arousal and valence; and (iii) through experiments, it is shown that EmoAudioNet-based features outperform the state-of-the-art methods for predicting depression on the DAIC-WOZ dataset and for predicting valence and arousal dimensions in terms of Pearson's Coefficient Correlation (PCC).

Algorithm 1: EmoAudioNet embedding network.

Given two feature extractors $f_\Theta$ and $f_\phi$, and the number of training steps $N$.

for iteration in range($N$) do
  $(X_{wav}, y_{wav}) \leftarrow$ batch of input wav files and labels
  $e_{Spec} \leftarrow f_\Theta(X_{wav})$ (spectrogram features)
  $e_{MFCC} \leftarrow f_\phi(X_{wav})$ (MFCC features)
  $e_{MFCCSpec} \leftarrow [e_{MFCC}, e_{Spec}]$ (feature-level fusion)
  $p_{MFCCSpec} \leftarrow f_\theta(e_{MFCCSpec})$ (predict class probabilities)
  $L_{MFCCSpec} = \text{cross entropy loss}(p_{MFCCSpec}, y_{wav})$
  Obtain all gradients $\Delta_{all} = \left(\frac{\partial L}{\partial \Theta}, \frac{\partial L}{\partial \phi}, \frac{\partial L}{\partial \theta}\right)$
  $(\Theta, \phi, \theta) \leftarrow \text{ADAM}(\Delta_{all})$ (update the feature extractors' and output head's parameters simultaneously)
end

4 Proposed Method

We seek to learn a deep audio representation that is trainable end-to-end for emotion recognition. To achieve that, we propose a novel deep neural network called EmoAudioNet, which performs low-level and high-level feature extraction and aggregation function learning jointly (see Algorithm 1). Thus, the input audio signal is fed to a small size two-stream CNN that outputs the final classification scores. A data augmentation step is considered to increase the amount of data by adding slightly modified copies of already existing data. The structure of EmoAudioNet presents three main parts, as shown in Figure 1: (i) an MFCC-based CNN, (ii) a spectrogram-based CNN and (iii) the aggregation of the responses of the MFCC-based and the spectrogram-based CNNs. In the following, more details about the three parts are given.

Fig. 1: The diagram of the proposed deep neural network architecture called EmoAudioNet. The output layer is a dense layer of n neurons with a Softmax activation function. n is defined according to the task. When the task concerns binary depression classification, n=2. When the task concerns depression severity level assessment, n=24. For arousal or valence prediction, n=10.

4.1 Data augmentation

A data augmentation step is considered to overcome the problem of data scarcity by increasing the quantity of training data and also to improve the model's robustness to noise. Two different types of audio augmentation techniques are performed: (1) Adding noise: mix the audio signal with random noise. Each mix $z$ is generated using $z = x + \alpha \times \mathrm{rand}(x)$, where $x$ is the audio signal and $\alpha$ is the noise factor. In our experiments, $\alpha = 0.01$, $0.02$ and $0.03$. (2) Pitch shifting: lower the pitch of the audio sample by 3 values (in semitones): 0.5, 2 and 5.

4.2 Spectrogram-based CNN stream

The spectrogram-based CNN presents a low-level features descriptor followed by a high-level features descriptor. The low-level features descriptor is the spectrogram of the input audio signal and it is computed as a sequence of Fast Fourier Transforms (FFT) of windowed audio segments. The audio signal is split into 256 segments and the spectrum of each segment is computed. The Hamming window is applied to each segment. The spectrogram plot is a color image of 1900 × 1200 × 3. The image is resized to 224 × 224 × 3 before being fed to the high-level features descriptor.
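
The two preprocessing steps just described (additive-noise augmentation and a windowed-Fourier spectrogram) can be sketched as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: `rand(x)` is assumed here to mean one standard-normal noise value per audio sample, the segments are assumed to be non-overlapping, a plain DFT stands in for an optimized FFT, and the toy signal uses 8 segments rather than the 256 reported above. All function names are hypothetical.

```python
import cmath
import math
import random


def add_noise(signal, alpha, rng):
    """Return z = x + alpha * noise (assumption: one Gaussian sample per audio sample)."""
    return [x + alpha * rng.gauss(0.0, 1.0) for x in signal]


def hamming(n):
    """Hamming window of length n."""
    if n == 1:
        return [1.0]
    return [0.54 - 0.46 * math.cos(2.0 * math.pi * i / (n - 1)) for i in range(n)]


def magnitude_spectrum(frame):
    """Magnitude of the DFT of one frame, non-negative frequencies only."""
    n = len(frame)
    spectrum = []
    for k in range(n // 2 + 1):
        acc = sum(frame[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n))
        spectrum.append(abs(acc))
    return spectrum


def spectrogram(signal, n_segments):
    """Split the signal into equal, non-overlapping segments, window each, take its spectrum."""
    frame_len = len(signal) // n_segments
    window = hamming(frame_len)
    frames = []
    for s in range(n_segments):
        chunk = signal[s * frame_len:(s + 1) * frame_len]
        frames.append(magnitude_spectrum([c * w for c, w in zip(chunk, window)]))
    return frames


# Toy input: a 50 Hz sine sampled at 800 Hz (512 samples), not real speech.
rate = 800
tone = [math.sin(2.0 * math.pi * 50.0 * t / rate) for t in range(512)]
noisy = add_noise(tone, alpha=0.01, rng=random.Random(0))

spec = spectrogram(noisy, n_segments=8)  # 8 segments of 64 samples each
# Index of the strongest frequency bin in the first segment.
peak_bin = max(range(len(spec[0])), key=lambda k: spec[0][k])
```

With 64-sample segments at 800 Hz each bin spans 12.5 Hz, so the 50 Hz tone should dominate bin 4 of every segment. Rendering the resulting matrix as a color image and resizing it is a separate step that this sketch leaves out.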

The high-level features descriptor is a deep CNN that takes as input the spectrogram of the audio signal. Its architecture, as shown in Fig. 1, is composed of two identical blocks of layers. Each block is composed of a two-dimensional (2D) convolutional layer followed by a ReLU activation function, a second convolutional layer, a ReLU, a dropout and max pooling layer, a third convolutional layer and a last ReLU.

4.3 MFCC-based CNN stream

The MFCC-based CNN also presents a low-level features descriptor followed by a high-level features descriptor (see Fig. 1). The low-level features descriptor is the MFCC features of the input audio. To extract them, the speech signal is first divided into frames by applying a Hamming windowing function of 2.5 s at fixed intervals of 500 ms. A cepstral feature vector is then generated and the Discrete Fourier Transform (DFT) is computed for each frame. Only the logarithm of the amplitude spectrum is retained. The spectrum is then smoothed and 24 spectral components into 44100 frequency bins are collected in the Mel frequency scale. The components of the Mel-spectral vectors calculated for each frame are highly correlated. Therefore, the Karhunen-Loeve (KL) transform is applied and is approximated by the Discrete Cosine Transform (DCT). Finally, 177 cepstral features are obtained for each frame. After the extraction of the MFCC features, they are fed to the high-level features descriptor, which is a small size CNN. To avoid overfitting, only two one-dimensional (1D) convolutional layers, each followed by a ReLU activation function, are used.

4.4 Aggregation of the spectrogram-based and MFCC-based responses

Combining the responses of the two deep stream CNNs makes it possible to study simultaneously the time-frequency representation and the texture-like time-frequency representation of the audio signal.
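
A minimal sketch of this kind of feature-level fusion is given below: two stream embeddings are concatenated, passed through a single dense layer with a Softmax activation, and scored with a cross-entropy loss, mirroring the fusion, prediction and loss steps of Algorithm 1. The embedding sizes, weights, biases and label are hypothetical toy values chosen for readability, and the helper names (`dense`, `fuse_and_classify`) are illustrative rather than taken from the EmoAudioNet code.

```python
import math


def softmax(logits):
    """Numerically stable softmax."""
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def dense(features, weights, biases):
    """Fully connected layer: one weight row and one bias per output neuron."""
    return [sum(w * f for w, f in zip(row, features)) + b
            for row, b in zip(weights, biases)]


def fuse_and_classify(e_mfcc, e_spec, weights, biases):
    """Concatenate the two stream embeddings and map them to class probabilities."""
    fused = e_mfcc + e_spec  # list concatenation acts as feature-level fusion
    return softmax(dense(fused, weights, biases))


def cross_entropy(probs, label):
    """Negative log-likelihood of the true class."""
    return -math.log(probs[label])


# Hypothetical toy embeddings (sizes 3 and 2, far smaller than real ones).
e_mfcc = [0.2, -0.1, 0.4]
e_spec = [0.5, 0.3]

# Hypothetical output head for a binary task (n = 2): two rows of five weights.
weights = [[0.1, 0.0, -0.2, 0.3, 0.1],
           [-0.1, 0.2, 0.1, 0.0, 0.6]]
biases = [0.0, 0.0]

probs = fuse_and_classify(e_mfcc, e_spec, weights, biases)
loss = cross_entropy(probs, label=1)
```

In a trained network the gradients of `loss` would flow back through the dense layer into both streams, which is what allows the two feature extractors and the output head to be updated together.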

The output of the spectrogram-based CNN is a feature vector of size 1152, while the output of the MFCC-based CNN is a feature vector of size 2816. The responses of the two networks are concatenated and then fed to a fully connected layer in order to generate the label prediction of the emotion levels.

5 Experiments and results

5.1 Datasets

Two publicly available datasets are used to evaluate the performances of EmoAudioNet:

Dataset for affect recognition experiments: The RECOLA dataset [32] is a multimodal corpus of affective interactions in French. 46 subjects participated in the data recordings. Only 23 audio recordings of 5 minutes of interaction are made publicly available and used in our experiments. Participants engaged in a remote discussion according to a survival task and six annotators measured emotion continuously on two dimensions: valence and arousal.

Dataset for depression recognition and assessment experiments: The DAIC-WOZ depression dataset [33] is introduced in the AVEC2017 challenge [4] and it provides audio recordings of clinical interviews of 189 participants. Each recording is labeled by the PHQ-8 score and the PHQ-8 binary. The PHQ-8 score defines the severity level of depression of the participant and the PHQ-8 binary defines whether the participant is depressed or not. For technical reasons, only 182 audio recordings are used. The average length of the recordings is 15 minutes with a fixed sampling rate of 16 kHz.

5.2 Experimental Setup

Spectrogram-based CNN architecture: The number of channels of the convolutional and pooling layers is 128 in both cases, and their filter size is 3 × 3. ReLU is used as the activation function for all the layers. The stride of the max pooling is 8. The dropout fraction is 0.1.

MFCC-based CNN architecture: The input is one-dimensional and of size 177 × 1. The filter size of its two convolutional layers is 5 × 1.
ReLU is used as the activation function for all the layers. The dropout fraction is 0.1 and the stride of the max pooling is 8.

EmoAudioNet architecture: The two feature vectors are concatenated and fed to a fully connected layer of n neurons activated with a Softmax function. n is defined according to the task. When the task concerns binary depression classification, n=2. When the task concerns depression severity level assessment, n=24. For arousal or valence prediction, n=10. The ADAM optimizer is used. The learning rate is set experimentally to 10e-5 and it is reduced when the loss value stops decreasing. The batch size is fixed to 100 samples. The number of epochs for training is set to 500. Early stopping is performed when the accuracy stops improving after 10 epochs.

5.3 Experimental results on spontaneous and continuous emotion recognition from speech

Results of three proposed CNN architectures. The experimental results of the three proposed architectures on predicting arousal and valence are given in Table 3 and Table 4. EmoAudioNet outperforms the MFCC-based CNN and the spectrogram-based CNN with an accuracy of 89% and 91% for predicting arousal and valence respectively. The accuracy of the MFCC-based CNN is around 70% and 71% for arousal and valence respectively. The spectrogram-based CNN is slightly better than the MFCC-based CNN and its accuracy is 76% for predicting arousal and 74% for predicting valence.

Table 3: RECOLA dataset results for prediction of arousal. The results are obtained for the development and the test sets in terms of three metrics: the accuracy, the Pearson's Coefficient Correlation (PCC) and the Root Mean Square Error (RMSE).

| Model | Development Accuracy | Development PCC | Development RMSE | Test Accuracy | Test PCC | Test RMSE |
|---|---|---|---|---|---|---|
| MFCC-based CNN | 81.93% | 0.8130 | 0.1501 | 70.23% | 0.6981 | 0.2065 |
| Spectrogram-based CNN | 80.20% | 0.8157 | 0.1314 | 75.65% | 0.7673 | 0.2099 |
| EmoAudioNet | 94.49% | 0.9521 | 0.0082 | 89.30% | 0.9069 | 0.1229 |

Table 4: RECOLA dataset results for prediction of valence. The results are obtained for the development and the test sets in terms of three metrics: the accuracy, the Pearson's Coefficient Correlation (PCC) and the Root Mean Square Error (RMSE).

| Model | Development Accuracy | Development PCC | Development RMSE | Test Accuracy | Test PCC | Test RMSE |
|---|---|---|---|---|---|---|
| MFCC-based CNN | 83.37% | 0.8289 | 0.1405 | 71.12% | 0.6965 | 0.2082 |
| Spectrogram-based CNN | 78.32% | 0.7984 | 0.1446 | 73.81% | 0.7598 | 0.2132 |
| EmoAudioNet | 95.42% | 0.9568 | 0.0625 | 91.44% | 0.9221 | 0.1118 |

Fig. 2: Confusion matrix of EmoAudioNet generated on the DAIC-WOZ test set. Each cell gives the sample count and its share of the 2381 test samples; the margins give the row and column totals with the correct / incorrect rates.

| | Actual: Non-Depression | Actual: Depression | Total | Precision |
|---|---|---|---|---|
| Predicted: Non-Depression | 1441 (60.52%) | 354 (14.87%) | 1795 | 80.28% / 19.72% |
| Predicted: Depression | 283 (11.89%) | 303 (12.73%) | 586 | 51.71% / 48.29% |
| Total | 1724 | 657 | 2381 | |
| Recall | 83.58% / 16.42% | 46.12% / 53.88% | | Accuracy: 73.25% / 26.75% |

EmoAudioNet has a Pearson Coefficient Correlation (PCC) of 0.91 for predicting arousal and 0.92 for predicting valence, and a Root Mean Square Error (RMSE) of 0.12 for arousal prediction and 0.11 for valence prediction.

Comparisons of EmoAudioNet and the state-of-the-art methods for arousal and valence prediction on RECOLA dataset. As shown in Table 5, the EmoAudioNet model has the best PCC of 0.9069 for arousal prediction. In terms of the RMSE, the approach proposed by He et al. [12] outperforms all the existing methods with an RMSE equal to 0.099 in predicting arousal. For valence prediction, EmoAudioNet outperforms the state-of-the-art with a PCC of 0.9221 without any fine-tuning, while the approach proposed by He et al. [12] has the best RMSE of 0.104.
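
For reference, the two regression metrics used in this comparison can be computed as in the sketch below. It uses the standard textbook definitions of the Pearson correlation coefficient and the root mean square error; the annotation and prediction values are hypothetical and are not taken from RECOLA, and the function names are illustrative.

```python
import math


def rmse(y_true, y_pred):
    """Root mean square error between two equally long sequences."""
    squared = [(t - p) ** 2 for t, p in zip(y_true, y_pred)]
    return math.sqrt(sum(squared) / len(squared))


def pearson(y_true, y_pred):
    """Pearson correlation coefficient (PCC) between two sequences."""
    n = len(y_true)
    mean_t = sum(y_true) / n
    mean_p = sum(y_pred) / n
    cov = sum((t - mean_t) * (p - mean_p) for t, p in zip(y_true, y_pred))
    var_t = sum((t - mean_t) ** 2 for t in y_true)
    var_p = sum((p - mean_p) ** 2 for p in y_pred)
    return cov / math.sqrt(var_t * var_p)


# Hypothetical arousal annotations and model predictions.
y_true = [0.10, 0.25, 0.40, 0.30, 0.05]
y_pred = [0.12, 0.20, 0.45, 0.28, 0.10]

pcc_value = pearson(y_true, y_pred)
rmse_value = rmse(y_true, y_pred)
```

The two metrics capture different things: PCC rewards predictions that follow the shape of the annotation trace regardless of scale or offset, while RMSE penalizes absolute deviations. This is why one method can lead on PCC and another on RMSE, as in Table 5.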

5.4 Experimental results on automatic clinical depression recognition and assessment

The EmoAudioNet framework is evaluated on two tasks on the DAIC-WOZ corpus. The first task is to predict depression from speech under the PHQ-8 binary. The second task is to predict the depression severity levels under the PHQ-8 scores.

EmoAudioNet performances on depression recognition task. EmoAudioNet is trained to predict the PHQ-8 binary (0 for non-depression and 1 for depression). The performances are summarized in Fig. 2. The overall accuracy achieved in predicting depression reaches 73.25% with an RMSE of 0.467. On the test set, 60.52% of the samples are correctly labeled with non-depression, whereas only 12.73% are correctly diagnosed with depression. The low rate of correct classification of depression can be explained by the imbalance of the input data in the DAIC-WOZ dataset and the small number of participants labeled as depressed. The F1 score is designed to deal with the non-uniform distribution of class labels by combining precision and recall through their harmonic mean. The non-depression F1 score reaches 82% while the depression F1 score reaches 49%. Almost half of the samples predicted with depression are correctly classified, with a precision of 51.71%. The number of non-depression samples is twice the number of samples labeled with depression. Thus, adding more samples of depressed participants would significantly increase the model's ability to recognize depression.

EmoAudioNet performances on depression severity levels prediction task. The depression severity levels are assessed by the PHQ-8 scores ranging from 0 for non-depression to 23 for severe depression. The RMSE achieved when predicting the PHQ-8 scores is 2.6 times better than the one achieved with the depression recognition task. The test loss reaches 0.18 compared to a 0.1 RMSE on the training set.
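
As a check on the figures quoted in this section, the sketch below recomputes accuracy, precision, recall and F1 from the four counts of the confusion matrix in Fig. 2, using the standard definitions of these metrics. The last line rests on an assumption: the normalized RMSE is taken to be the raw RMSE divided by 23, the top of the PHQ-8 score range used here. That choice is consistent with the pair of values reported for EmoAudioNet in Table 6 (0.18 and 4.14) but is not stated explicitly in the text.

```python
# Counts read from Fig. 2 (rows: predicted class, columns: actual class).
tn = 1441  # predicted non-depression, actually non-depression
fn = 354   # predicted non-depression, actually depression
fp = 283   # predicted depression, actually non-depression
tp = 303   # predicted depression, actually depression


def f1(precision, recall):
    """F1 score: harmonic mean of precision and recall."""
    return 2.0 * precision * recall / (precision + recall)


total = tn + fn + fp + tp
accuracy = (tn + tp) / total

# Depression as the positive class.
precision_dep = tp / (tp + fp)
recall_dep = tp / (tp + fn)
f1_dep = f1(precision_dep, recall_dep)

# Non-depression as the positive class.
precision_non = tn / (tn + fn)
recall_non = tn / (tn + fp)
f1_non = f1(precision_non, recall_non)

# Assumption: normalization divides the raw RMSE by the maximum score, 23.
normalized_rmse = 4.14 / 23
```

Rounded to the precision used in the paper, these give an accuracy of 73.25%, a depression precision of 51.71% and recall of 46.12%, and F1 scores of 82% (non-depression) and 49% (depression), matching Fig. 2 and the text above.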

Table 5: Comparisons of EmoAudioNet and the state-of-the-art methods for arousal and valence prediction on RECOLA dataset.

| Method | Arousal PCC | Arousal RMSE | Valence PCC | Valence RMSE |
|---|---|---|---|---|
| He et al. [12] | 0.836 | 0.099 | 0.529 | 0.104 |
| Ringeval et al. [11] | 0.322 | 0.173 | 0.144 | 0.127 |
| EmoAudioNet | 0.9069 | 0.1229 | 0.9221 | 0.1118 |

Table 6: Comparisons of EmoAudioNet and the state-of-the-art methods for prediction of depression on DAIC-WOZ dataset. (*) The results of the depression severity level prediction task. (**) for non-depression. (‡) for depression. (Norm): Normalized RMSE.

| Method | Accuracy | RMSE | F1 Score |
|---|---|---|---|
| Yang et al. [8] | - | 1.46 (*) (depressed male) | - |
| Yang et al. [26] | - | 5.59 (*) (male) | - |
| Valstar et al. [3] | - | 7.78 (*) | - |
| Al Hanai et al. [23] | - | 10.03 | - |
| Salekin et al. [25] | 96.7% | - | 85.44% |
| Ma et al. [34] | - | - | 70% (**), 50% (‡) |
| Rejaibi et al. [35] | 76.27% | 0.4 | 85% (**), 46% (‡) |
| | - | 0.168 Norm (*) | - |
| EmoAudioNet | 73.25% | 0.467 | 82% (**), 49% (‡) |
| | - | 0.18 Norm / 4.14 (*) | - |

Comparisons of EmoAudioNet and the state-of-the-art methods for depression prediction on DAIC-WOZ dataset. Table 6 compares the performances of EmoAudioNet with the state-of-the-art approaches evaluated on the DAIC-WOZ dataset. To the best of our knowledge, the best performing approach in the literature is the one proposed in [25], with an F1 score of 85.44% and an accuracy of 96.7%. The proposed NN2Vec features with a BLSTM-MIL classifier achieve this good performance thanks to the leave-one-speaker-out cross-validation approach. Compared to the other proposed approaches, where a simple train-test split is performed, giving the model the opportunity to train on multiple train-test splits increases the model performances, especially on small datasets.

In the depression recognition task, EmoAudioNet outperforms the architecture proposed in [34], which is based on a Convolutional Neural Network followed by a Long Short-Term Memory network.
The non-depression F1 score achieved with EmoAudioNet is better than the latter by 13% with the exact same depression F1 score (50%). Moreover, EmoAudioNet outperforms the LSTM network in [35] in correctly classifying samples of depression. The depression F1 score achieved with EmoAudioNet is higher than that of the MFCC-based RNN by 4%. Meanwhile, the overall accuracy and loss achieved by the latter are better than those of EmoAudioNet by 2.14% and 0.07 respectively. According to the summarized results of previous works in Table 6, the best results achieved so far in the depression severity level prediction task are obtained in [35]. The best normalized RMSE, 0.168, is achieved with the LSTM network. EmoAudioNet reaches almost the same loss, with a very small difference of 0.012. Our proposed architecture outperforms the remaining results in the literature, with a normalized RMSE of 0.18 in predicting depression severity levels (PHQ-8 scores) on the DAIC-WOZ dataset.

6 Conclusion and future work

In this paper, we proposed a new emotion and affect recognition method from speech based on deep neural networks, called EmoAudioNet. The proposed EmoAudioNet deep neural network architecture is the aggregation of an MFCC-based CNN and a spectrogram-based CNN, which study the time-frequency representation and the visual representation of the spectrum of frequencies of the audio signal. EmoAudioNet gives promising results and it approaches or outperforms state-of-the-art approaches to continuous dimensional affect recognition and automatic depression recognition from speech on the RECOLA and DAIC-WOZ databases. In future work, we are planning (1) to improve the EmoAudioNet architecture with the given possible improvements in the discussion section and (2) to use the EmoAudioNet architecture to develop a computer-assisted application for monitoring patients with mood disorders.

References

1. GBD 2015 Disease and Injury Incidence and Prevalence Collaborators: Global, regional, and national incidence, prevalence, and years lived with disability for 310 diseases and injuries, 1990-2015: a systematic analysis for the Global Burden of Disease Study 2015. Lancet, vol. 388, no. 10053, pp. 1545-1602, 2015.
2. The National Institute of Mental Health: Depression, https://www.nimh.nih.gov/health/topics/depression/index.shtml. Retrieved 2019, June 17.
3. Valstar, M., Gratch, J., Schuller, B., Ringeval, F., Lalanne, D., Torres Torres M., Scherer, S., Stratou, G., Cowie, R., Pantic, M.: AVEC 2016 - Depression, mood, and emotion recognition workshop and challenge. In Proceedings of the 6th international workshop on audio/visual emotion challenge, pp. 3–10. ACM (2016).
4. Ringeval, F., Schuller, B., Valstar, M., Gratch, J., Cowie, R., Scherer, S., Mozgai, S., Cummins, N., Schmitt, M., Pantic, M.: AVEC 2017 - Real-life depression, and affect recognition workshop and challenge. In Proceedings of the 7th Annual Workshop on Audio/Visual Emotion Challenge, pp. 3–9. ACM (2017).
5. Jiang, H., Hu, B., Liu, Z., Wang, G., Zhang, L., Li, X., Kang, H.: Detecting Depression Using an Ensemble Logistic Regression Model Based on Multiple Speech Features. In Computational and mathematical methods in medicine, vol. 2018 (2018).
6. Alghowinem, S., Goecke, R., Wagner, M., Epps, J., Gedeon, T., Breakspear, M., Parker, G.: A comparative study of different classifiers for detecting depression from spontaneous speech. In 2013 IEEE International Conference on Acoustics, Speech and Signal Processing, pp. 8022–8026 (2013).
7. Valstar, M., Schuller, B., Smith, K., Eyben, F., Jiang, B., Bilakhia, S., Schnieder, S., Cowie, R., Pantic, M.: AVEC 2013: the continuous audio/visual emotion and depression recognition challenge. In Proceedings of the 3rd ACM international workshop on Audio/visual emotion challenge, pp. 3–10 (2013).
8. Yang, L., Sahli, H., Xia, X., Pei, E., Oveneke, M.C., Jiang, D.: Hybrid depression classification and estimation from audio video and text information. In Proceedings of the 7th Annual Workshop on Audio/Visual Emotion Challenge, pp. 45–51. ACM (2017).
9. Cummins, N., Epps, J., Breakspear M., Goecke, R.: An investigation of depressed speech detection: Features and normalization. In Twelfth Annual Conference of the International Speech Communication Association (2011).
10. Lopez-Otero, P., Dacia-Fernandez, L., Garcia-Mateo, C.: A study of acoustic features for depression detection. In 2nd International Workshop on Biometrics and Forensics, IEEE, pp. 1–6 (2014).
11. Ringeval, F., Schuller, B., Valstar, M., Jaiswal, S., Marchi, E., Lalanne, D., Cowie R., Pantic, M.: AV+EC 2015 - The first affect recognition challenge bridging across audio, video, and physiological data. In Proceedings of the 5th International Workshop on Audio/Visual Emotion Challenge, pp. 3–8. ACM (2015).
12. He, L., Jiang, D., Yang, L., Pei, E., Wu, P., Sahli, H.: Multimodal affective dimension prediction using deep bidirectional long short-term memory recurrent neural networks. In Proceedings of the 5th International Workshop on Audio/Visual Emotion Challenge, pp. 73–80. ACM (2015).
13. Ringeval, F., Schuller, B., Valstar, M., Cowie, R., Kaya, H., Schmitt, M., Amiriparian, S., Cummins, N., Lalanne, D., Michaud, A., Çiftçi, E., Güleç, H., Salah, A.A., Pantic, M.: AVEC 2018 workshop and challenge: Bipolar disorder and cross-cultural affect recognition. In Proceedings of the 2018 on Audio/Visual Emotion Challenge and Workshop, ACM, pp. 3–13 (2018).
14. Dhall, A., Ramana Murthy, O.V., Goecke, R., Joshi, J., Gedeon, T.: Video and image based emotion recognition challenges in the wild: Emotiw 2015. In Proceedings of the 2015 ACM on international conference on multimodal interaction, pp. 423–426 (2015).
15. Haq, S., Jackson, P.J., Edge, J.: Speaker-dependent audio-visual emotion recognition. In AVSP, pp. 53–58 (2009).
16. Low, L.S.A., Maddage, N.C., Lech, M., Sheeber, L.B., Allen, N.B.: Detection of clinical depression in adolescents' speech during family interactions. In IEEE Transactions on Biomedical Engineering, vol. 58, no. 3, pp. 574–586 (2010).
17. Valstar, M., Schuller, B.W., Krajewski, J., Cowie, R., Pantic, M.: AVEC 2014: the 4th international audio/visual emotion challenge and workshop. In Proceedings of the 22nd ACM international conference on Multimedia, pp. 1243–1244 (2014).
18. Meng, H., Huang, D., Wang, H., Yang, H., Ai-Shuraifi, M., Wang, Y.: Depression recognition based on dynamic facial and vocal expression features using partial least square regression. In Proceedings of the 3rd ACM international workshop on Audio/visual emotion challenge, pp. 21–30 (2013).
19. Trigeorgis, G., Ringeval, F., Brueckner, R., Marchi, E., Nicolaou, M.A., Schuller, B., Zafeiriou, S.: Adieu features? end-to-end speech emotion recognition using a deep convolutional recurrent network. In 2016 IEEE international conference on acoustics, speech and signal processing (ICASSP), pp. 5200–5204 (2016).
20. Ringeval, F., Eyben, F., Kroupi, E., Yuce, A., Thiran, J.P., Ebrahimi, T., Lalanne, D., Schuller, B.: Prediction of asynchronous dimensional emotion ratings from audio visual and physiological data. In Pattern Recognition Letters, vol. 66, pp. 22–30 (2015).
21. Ringeval, F., Schuller, B., Valstar, M., Cowie, R., Pantic, M.: AVEC 2015: The 5th international audio/visual emotion challenge and workshop. In Proceedings of the 23rd ACM international conference on Multimedia, pp. 1335–1336 (2015).
22.
Tzirakis, P ., T rigeorgis, G., Nicolaou, M.A., Sch uller, B.W., Zafeiriou, S., : End-to- end m ultimo dal emotion recognition using deep neural net works. In IEEE Journal of Selected T opics in Signal Pro cessing, v ol. 11, no. 8, pp. 1301–1309, (2017). 23. Al Hanai, T., Ghassemi, M.M., Glass, J.R., : Detecting Depression with Au- dio/T ext Sequence Mo deling of Interviews. In Interspeech, pp. 1716–1720, (2018). 24. Dham, S., Sharma, A., Dhall, A., : Depression scale recognition from audio, visual and text analysis. arXiv preprin t 25. Salekin, A., Eb erle, J.W., Glenn, J.J., T eachman, B.A., Stanko vic J.A., : A w eakly sup ervised learning framew ork for detecting so cial anxiet y and depression. In Pro- ceedings of the ACM on interactiv e, mobile, wearable and ubiquitous technologies, v ol. 2, no. 2, pp. 81, (2018). 26. Y ang, L., Jiang, D., Xia, X., P ei, E., Ov eneke, M.C., Sahli, H., : Multimodal mea- suremen t of depression using deep learning models. In Proceedings of the 7th Annual W orkshop on Audio/Visual Emotion Challenge, pp. 53–59, (2017). 27. Jain, R., : Impro ving performance and inference on audio classification tasks using capsule netw orks. arXiv preprint arXiv:1902.05069, (2019). 28. Chao, L., T ao, J., Y ang, M., Li, Y., : Multi task sequence learning for depression scale prediction from video. In 2015 International Conference on Affective Comput- ing and Intelligen t Interaction (A CI I), IEEE, pp. 526–531, (2015). 29. Gupta, R., Sahu, S., Espy-Wilson, C.Y., Naray anan, S.S., : An Affect Prediction Approac h Through Depression Severit y Parameter Incorp oration in Neural Net- w orks. In Interspeech, pp. 3122–3126, (2017). 16 A. Othmani et al. 30. Kang, Y., Jiang, X., Yin, Y., Shang, Y., Zhou, X., : Deep transformation learning for depression diagnosis from facial images. In Chinese Conference on Biometric Recognition, Springer, Cham, pp. 13–22, (2017). 31. Y u, G., and Slotine, J. J., : Audio classification from time-frequency texture. 
In 2009 IEEE In ternational Conference on Acoustics, Speech and Signal Processing, pp. 1677–1680, (2009). 32. Ringev al, F., Sonderegger, A., Sauer, J., Lalanne, D., : Introducing the RECOLA m ultimo dal corpus of remote collab orativ e and affective interactions. In 2013 10th IEEE in ternational conference and w orkshops on automatic face and gesture recog- nition (FG), IEEE, pp. 1–8, (2013). 33. Gratc h, J., Artstein, R., Lucas, G.M., Stratou, G., Scherer, S., Nazarian, A., W o od, R., Bob erg, J., DeV ault, D., Marsella, S., T raum, D.R., Rizzo, S., Morency , L.P ., : The distress analysis in terview corpus of h uman and computer interviews. LREC, pp. 3123–3128, (2014). 34. Ma, X., Y ang, H., Chen, Q., Huang, D., W ang, Y., : Depaudionet: An efficien t deep model for audio based depression classification. In Pro ceedings of the 6th In ternational W orkshop on Audio/Visual Emotion Challenge, pp. 35–42, (2016). 35. Rejaibi, E., Komat y , A., Meriaudeau, F., Agrebi, S., Othmani, A. : MFCC-based Recurren t Neural Net work for Automatic Clinical Depression Recognition and As- sessmen t from Sp eec h. arXiv preprint arXiv:1909.07208, (2019). 36. Tzirakis, P ., Zhang, J., Sch uller, B.W. : End-to-end speech emotion recognition using deep neural net works. In 2018 IEEE International Conference on Acoustics, Sp eec h and Signal Pro cessing (ICASSP), pp. 5089–5093, (2018). 37. P oria, S., Cambria, E., Ba jpai, R., Hussain, A., : A review of affective computing: F rom unimo dal analysis to multimodal fusion. In Information F usion, v ol. 37, pp. 98–125, (2017). 38. Rouast, P .V., Adam, M., Chiong, R., : Deep learning for human affect recognition: insigh ts and new developmen ts. In IEEE T ransactions on Affective Computing, (2019).
