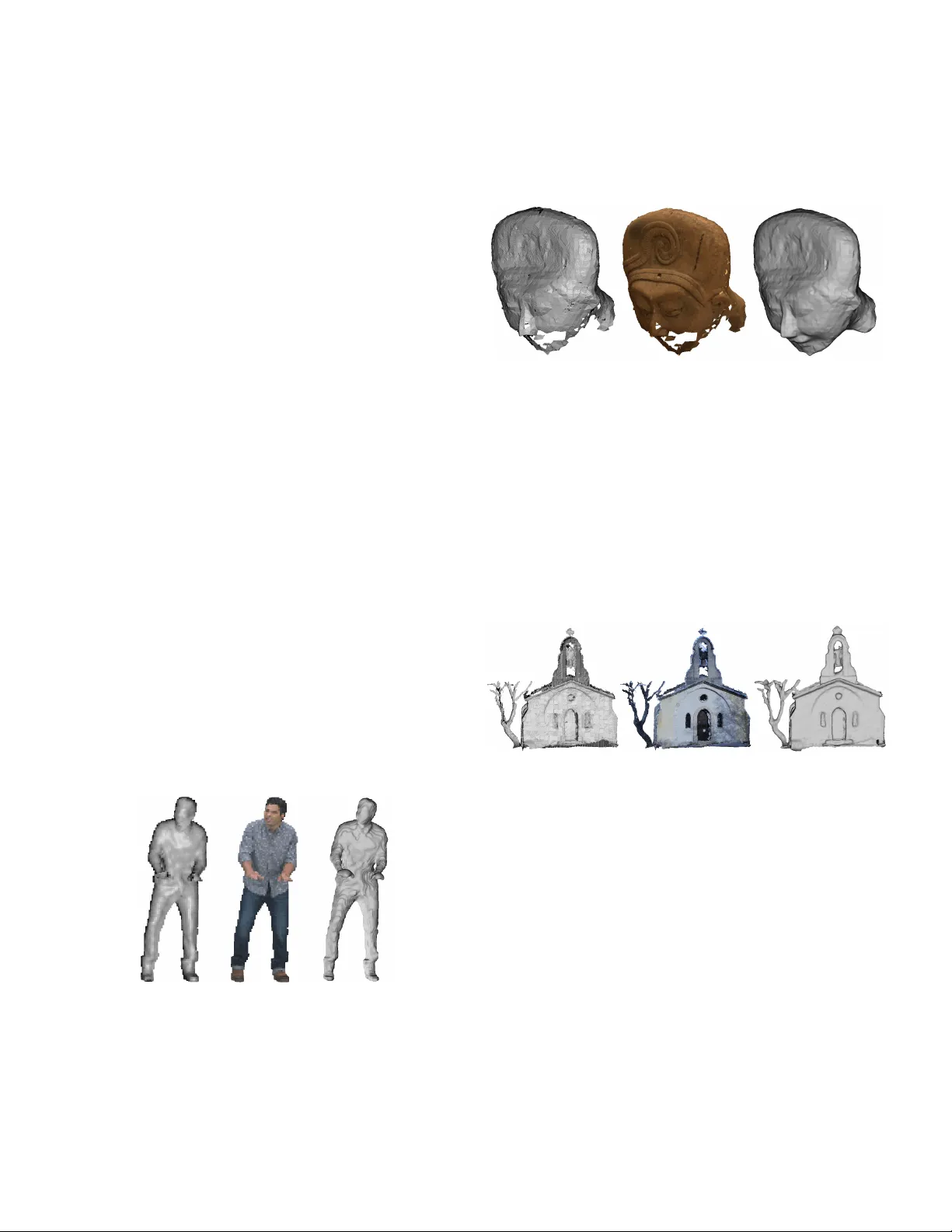

Point Cloud Rendering after Coding: Impacts on Subjective and Objective Quality

Recently, point clouds have been shown to be a promising way to represent 3D visual data for a wide range of immersive applications, from augmented reality to autonomous cars. Emerging imaging sensors have made it easier to perform richer and denser point cl…

Authors: Alireza Javaheri, Catarina Brites, Fern