Complex Query Answering with Neural Link Predictors

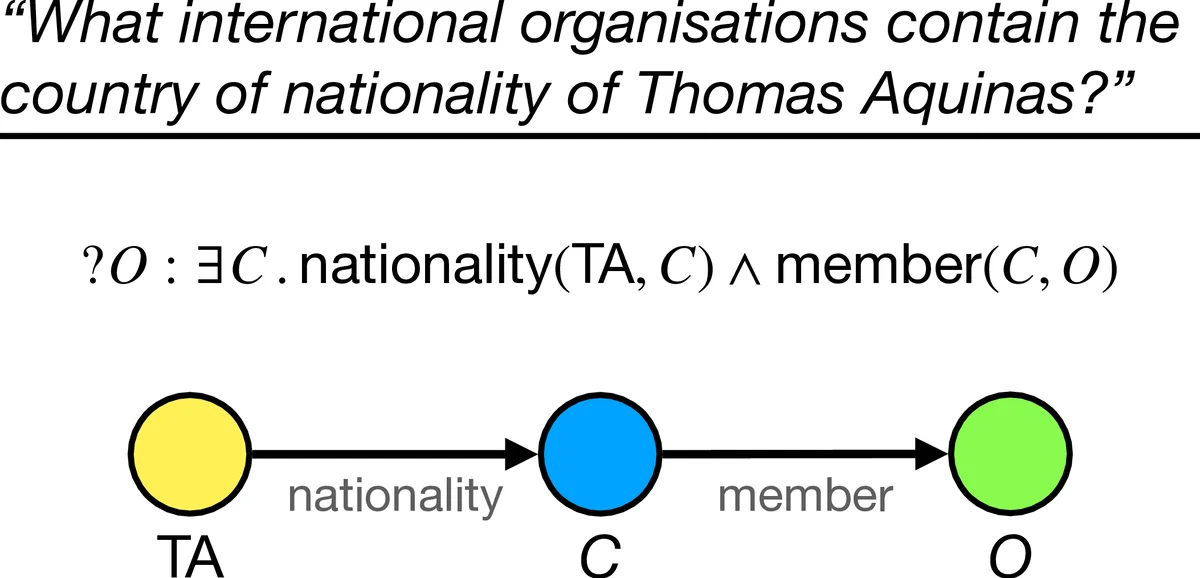

Neural link predictors are immensely useful for identifying missing edges in large-scale Knowledge Graphs. However, it is still not clear how to use these models to answer the more complex queries that arise in a number of domains, such as queries using logical conjunctions ($\land$), disjunctions ($\lor$) and existential quantifiers ($\exists$), while accounting for missing edges. In this work, we propose a framework for efficiently answering complex queries on incomplete Knowledge Graphs. We translate each query into an end-to-end differentiable objective, where the truth value of each atom is computed by a pre-trained neural link predictor. We then analyse two solutions to the optimisation problem: gradient-based search and combinatorial search. In our experiments, the proposed approach produces more accurate results than state-of-the-art methods (black-box neural models trained on millions of generated queries), without needing to train on a large and diverse set of complex queries. Using orders of magnitude less training data, we obtain relative improvements ranging from 8% to 40% in Hits@3 across different knowledge graphs containing factual information. Finally, we demonstrate that it is possible to explain the outcome of our model in terms of the intermediate solutions identified for each of the complex query atoms. All our source code and datasets are available online at https://github.com/uclnlp/cqd.

💡 Research Summary

This paper tackles the problem of answering complex first‑order logical queries on large‑scale Knowledge Graphs (KGs) when many edges are missing. While neural link predictors such as ComplEx have become standard for 1‑hop link prediction, they have not been directly usable for queries that involve conjunction (∧), disjunction (∨) and existential quantification (∃). The authors propose a general framework that converts any EPFO (Existential Positive First‑Order) query into a differentiable objective. Each atomic predicate p(s, o) is scored by a pre‑trained neural link predictor φₚ(eₛ, eₒ) ∈
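As a rough illustration of the combinatorial-search idea, the sketch below answers a two-hop query of the form `?T : ∃V . p(a, V) ∧ q(V, T)` over a toy graph. Several things here are assumptions made for the example rather than details taken from the paper: the function name `answer_two_hop`, the hand-written score tables `SCORE_P` and `SCORE_Q` (stand-ins for the outputs of a pre-trained link predictor, assumed to lie in [0, 1]), the use of a product to combine the two atoms of the conjunction, and the top-`k` pruning of candidates for the intermediate variable.

```python
# Toy atom scores in [0, 1]; SCORE_X[s][o] is a hypothetical stand-in for the
# score a pre-trained link predictor would assign to the atom x(s, o).
SCORE_P = [
    [0.0, 0.9, 0.8, 0.1],
    [0.0, 0.0, 0.0, 0.0],
    [0.0, 0.0, 0.0, 0.0],
    [0.0, 0.0, 0.0, 0.0],
]
SCORE_Q = [
    [0.0, 0.0, 0.0, 0.0],
    [0.1, 0.0, 0.2, 0.7],
    [0.0, 0.3, 0.0, 0.95],
    [0.0, 0.0, 0.0, 0.0],
]


def answer_two_hop(score_p, score_q, anchor, k=2):
    """Score every target T for  ?T : exists V . p(anchor, V) AND q(V, T).

    Returns a list of (score, witness) pairs indexed by T, where `witness`
    is the intermediate entity V that produced the score.
    """
    first_hop = score_p[anchor]  # p(anchor, V) for every candidate V
    n = len(first_hop)
    # Keep only the k highest-scoring candidates for V (assumed pruning step).
    top_v = sorted(range(n), key=lambda v: -first_hop[v])[:k]
    results = []
    for t in range(n):
        # Conjunction as a product of atom scores (an assumed choice);
        # the existential quantifier becomes a max over the kept candidates.
        score, witness = max((first_hop[v] * score_q[v][t], v) for v in top_v)
        results.append((score, witness))
    return results


results = answer_two_hop(SCORE_P, SCORE_Q, anchor=0, k=2)
best_target = max(range(len(results)), key=lambda t: results[t][0])
best_score, best_witness = results[best_target]
```

With these toy scores, the top-ranked target is entity 3, reached through intermediate entity 2, even though entity 1 has the higher first-hop score. Keeping the witness alongside each score is what lets the answer be explained in terms of the intermediate solution found for each atom.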
