Audio Cover Song Identification using Convolutional Neural Network


Authors: Sungkyun Chang, Juheon Lee, Sang Keun Choe

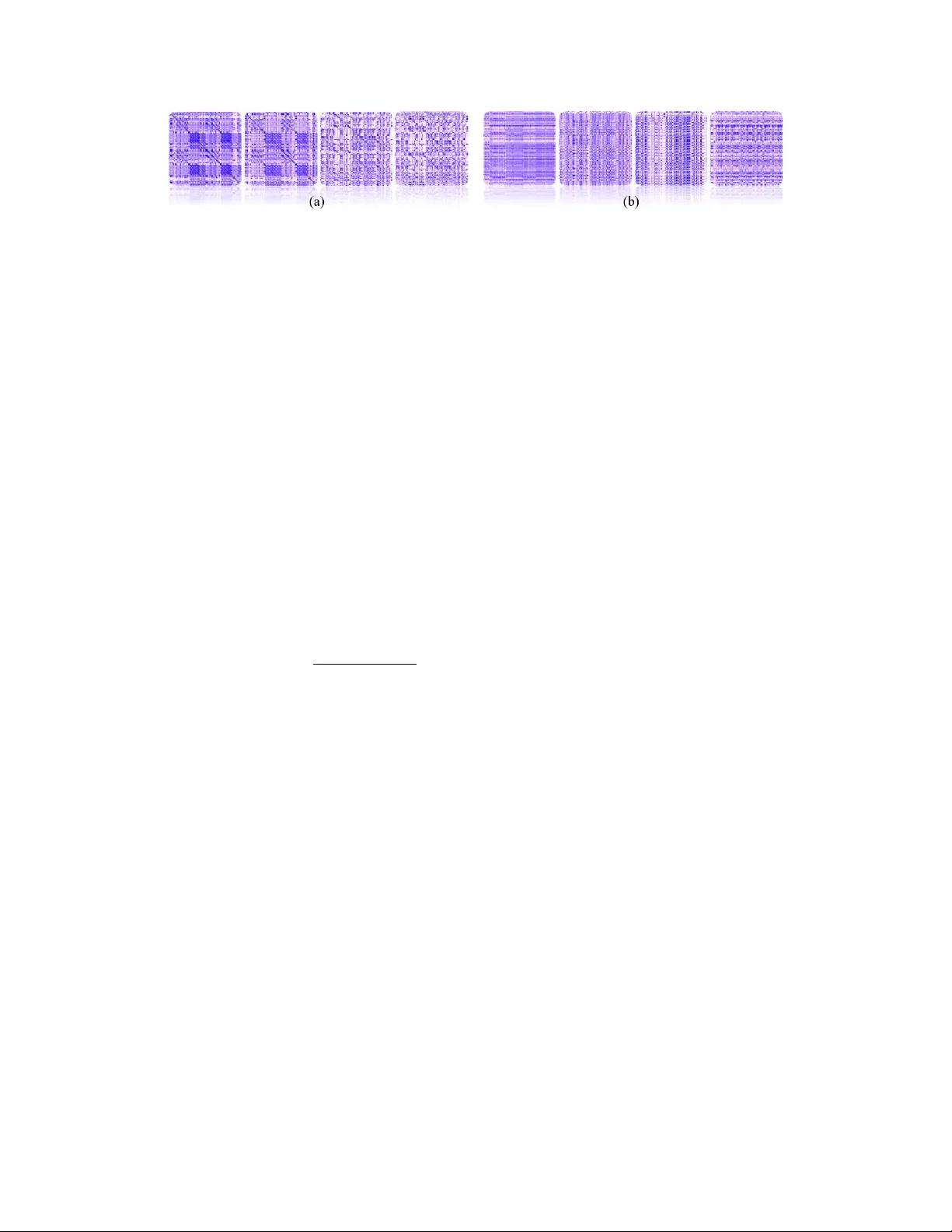

Sungkyun Chang (1, 4), Juheon Lee (2, 4), Sang Keun Choe (3, 4) and Kyogu Lee (1, 4)
(1) Music and Audio Research Group, (2) College of Liberal Studies, (3) Dept. of Electrical and Computer Engineering, (4) Center for Superintelligence, Seoul National University
{rayno1, juheon2, hana9000, kglee}@snu.ac.kr

Abstract

In this paper, we propose a new approach to cover song identification using a convolutional neural network (CNN). Most previous studies extract feature vectors that characterize the cover song relation from a pair of songs and use them to compute the (dis)similarity between the two songs. Based on the observation that there is a meaningful pattern between cover songs and that this pattern can be learned, we reformulate the cover song identification problem in a machine learning framework. To do this, we first build a CNN that takes as input a cross-similarity matrix generated from a pair of songs. We then construct a data set composed of cover song pairs and non-cover song pairs, which are used as positive and negative training samples, respectively. Given a cross-similarity matrix generated from any two pieces of music, the trained CNN outputs the probability that they are in a cover song relation, and cover songs are identified by ranking on this probability. Experimental results show that the proposed algorithm achieves performance better than or comparable to the state of the art.

1 Introduction

In popular music, a cover song or cover version is defined as a new recording produced by someone who is not the original composer or singer. Cover songs share key musical elements with the original song, such as melody contours, basic harmonic progressions, and lyrics. However, they can differ from the original in other aspects, such as instrumentation, tempo, rhythm, key, harmonization, and arrangement.
Applications of cover song identification include content-based music recommendation, detection of music plagiarism, and music sampling, to name a few. Conventional methods for cover song identification generally combine feature extraction with a distance metric. For feature extraction, the chroma feature (Serra et al. [2009]) and its variants (Müller and Kurth [2006], Müller and Ewert [2010]) have been widely used to characterize melodies and harmonic progressions. A distance metric then measures the similarity of sub-sequences in the feature space between two pieces of music. Various distance metrics have been proposed for this purpose, including the dynamic time warping (DTW; Serra et al. [2008a]) cost, cross-correlation (Ellis and Cotton [2007]), and, more recently, methods based on the similarity matrix profile (SimPLe; Silva et al. [2016]) and structural similarity (Cai et al. [2017]). So far, there have been a few attempts to exploit machine learning for cover song identification. Humphrey et al. [2013] used sparse coding with 2-dimensional Fourier magnitude coefficients derived from chroma. More recently, Heo et al. [2017] applied metric learning (Davis et al. [2007]) to the results of SimPLe. Both of these works were based on existing rule-based cover song identification algorithms, and they mainly focused on improving the scalability of cover song discovery by proposing a novel embedding technique and metric subspace learning for the distance calculation, respectively.

Figure 1: Sample 180 × 180 cross-similarity matrices generated using the first 180 s of each song. (a) Matrices generated from cover pairs consisting of one original song, "Toy - Passionate goodbye", and four different cover versions. (b) Matrices generated from the same original song paired with four different non-cover songs.

31st Conference on Neural Information Processing Systems (NIPS 2017), Long Beach, CA, USA.
In this research, we propose a convolutional neural network-based system for audio cover song identification. We use a cross-similarity matrix generated from a pair of songs as the input feature. This idea is based on the observation that similar sub-sequences within cover songs often appear as a meaningful pattern in the cross-similarity matrix. Under this assumption, we reformulate audio cover song identification as an image classification problem.

2 Basic Idea

In various previous works on audio matching, local chroma energy distributions computed over a shifting time window have been widely used to represent pitch content, including melody contours and chord progressions. Following Hu et al. [2003], we first convert the audio signal of each song into a 12-dimensional chroma feature using a 1 s non-overlapping window. Then, for a pair of chroma features {A, B}, we define the cross-similarity matrix S as

$$ S_{l,m} = \frac{\max(\Delta) - \Delta_{l,m}}{\max(\Delta)}, \quad \text{s.t.} \quad \Delta_{l,m} = \delta\big(A(:,l),\, B(:,m)\big), \quad l = 1, \dots, L,\; m = 1, \dots, M, \tag{1} $$

where δ denotes a distance function, and L and M are the numbers of time indices of the chroma sequences $A \in \mathbb{R}^{12 \times L}$ and $B \in \mathbb{R}^{12 \times M}$, respectively. For δ, we calculate the Euclidean distance after applying the key alignment algorithm proposed in Serra et al. [2008b], also known as the optimal transposition index (OTI). Fig. 1 displays eight examples of S generated from (a) four cover pairs and (b) four non-cover pairs. The two leftmost images in (a) were generated from cover pairs with almost the same accompaniment, and they show consistent diagonal stripes together with block patterns. The third and fourth images in (a) were generated from cover pairs produced with different tempi and instrumentation. Although the block patterns disappear, consistent diagonal stripes are still visible, in contrast to the non-cover pairs in (b).
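The following NumPy sketch illustrates Eq. 1 together with the OTI key alignment. It is a minimal illustration under stated assumptions, not the authors' implementation: the function names are our own, averaging frame-level chroma into 1 s windows is an assumption (the text only gives the window length), and the OTI follows the global-chroma-profile formulation of Serra et al. [2008b] as we understand it.

```python
import numpy as np


def pool_to_seconds(chroma: np.ndarray, frames_per_second: int) -> np.ndarray:
    """Average a 12 x T frame-level chroma into 1 s non-overlapping windows.

    Hypothetical helper: averaging is an assumption, only the window length is given.
    """
    n_sec = chroma.shape[1] // frames_per_second  # drop an incomplete last second
    trimmed = chroma[:, : n_sec * frames_per_second]
    return trimmed.reshape(chroma.shape[0], n_sec, frames_per_second).mean(axis=2)


def optimal_transposition_index(a: np.ndarray, b: np.ndarray) -> int:
    """Circular pitch-class shift k (0..11) of b that best matches a."""
    g_a = a.sum(axis=1)  # global chroma profile of each song
    g_b = b.sum(axis=1)
    g_a = g_a / max(g_a.max(), 1e-12)
    g_b = g_b / max(g_b.max(), 1e-12)
    scores = [np.dot(g_a, np.roll(g_b, k)) for k in range(12)]
    return int(np.argmax(scores))


def cross_similarity(a: np.ndarray, b: np.ndarray) -> np.ndarray:
    """Cross-similarity matrix S of Eq. 1 for chroma a (12 x L) and b (12 x M)."""
    k = optimal_transposition_index(a, b)
    b_aligned = np.roll(b, k, axis=0)  # transpose b into the key of a
    # Delta[l, m] = Euclidean distance between a[:, l] and b_aligned[:, m]
    diff = a[:, :, None] - b_aligned[:, None, :]
    delta = np.sqrt((diff ** 2).sum(axis=0))
    d_max = delta.max()
    if d_max == 0:  # degenerate case: all columns identical
        return np.ones_like(delta)
    return (d_max - delta) / d_max
```

For example, if `b = np.roll(a, 3, axis=0)` (the same chroma shifted up by three pitch classes), `optimal_transposition_index(a, b)` returns 9, i.e. a shift of -3 modulo 12, and the main diagonal of `cross_similarity(a, b)` is all ones. All values of S lie in [0, 1], with 0 at the most distant frame pair.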
Based on this observation, we assume that a convolutional neural network for image classification can distinguish relevant patterns in the cross-similarity matrix. More specifically, a stack of convolutional blocks can sequentially perform sub-sampling and cross-correlation (or convolution) to detect meaningful patterns in images at many different scales. Currently, we compare only the first 180 s of each song: we observed that most popular music recordings last three to five minutes, and that the first three minutes mostly contain the main melodies. Thus, we assume that the first 180 s of each song provide relevant information for identifying a cover song. Songs shorter than 180 s were zero-padded to 180 s. Note that Eq. 1 is equivalent to an intermediate step of SimPLe (Silva et al. [2016]). Another closely related work is Sakoe and Chiba [1978], which uses a cross-similarity matrix in the early stage of speech alignment. In addition, similar ideas exploiting stripe- or block-like patterns in a self-similarity matrix have been proposed in various works on audio music segmentation (Paulus et al. [2010]). All of these findings motivated us to use the cross-similarity matrix as input to a convolutional neural network.

Figure 2: Overview of the proposed system.

Table 1: Specification of the convolutional neural network. Brackets enclose a unit convolutional block, and the number after the brackets is the number of stacked blocks. Conv denotes a "same" convolution layer with stride = 1; its parentheses give (channels × width × height). Maxpool denotes a max-pooling layer with stride = 1; its parentheses give the pooling size. BN and FC denote batch normalization and a fully-connected layer, respectively.
Block #       | Components                                                                 | Output shape
Input layer   | -                                                                          | (1, 180, 180)
Block 1       | [Conv(32 × 5 × 5), ReLU, Conv(32 × 5 × 5), ReLU, Maxpool(2 × 2), BN] × 1   | (32, 90, 90)
Blocks 2–5    | [Conv(32 × 3 × 3), ReLU, Conv(16 × 3 × 3), ReLU, Maxpool(2 × 2), BN] × 4   | (16, 45, 45), (16, 22, 22), (16, 11, 11), (16, 5, 5)
Final layers  | DropOut p (0.5), FC(256), ReLU, DropOut q (0.25), FC(2), softmax           | (256,), (2,)

3 Proposed System

The proposed system, shown in Fig. 2, consists of three stages. In the preprocessing stage, we convert the audio signal of each song into chroma features. We then generate cross-similarity matrices from pairs of chroma features, as described in Section 2. The next stage is based on the convolutional neural network (hereafter, CNN) specified in Table 1. Our CNN is narrower and deeper (0.58 × 10^6 parameters with 10 convolution layers) than conventional CNNs for ImageNet such as AlexNet (Krizhevsky et al. [2012]), which has 60 × 10^6 parameters with five convolution layers. We currently fix the size of the input cross-similarity matrix to 180 × 180 (cut or zero-padded), which corresponds to comparing the first 3 min of music. The receptive field of the first convolution layer corresponds to 5 s of audio (2–4 measures in a music score). In practice, a 5 × 5 filter in the first convolution layer gave approximately 4% better performance than 3 × 3 or 7 × 7 filters. Blocks 2–4 implement the basic idea of Section 2: running a chain of sub-sampling and cross-correlation (or convolution). To this end, blocks 2–4 of the CNN are built from a template convolutional block whose output is down-sampled by half. We apply batch normalization (Ioffe and Szegedy [2015]) in every convolutional block. The last stage of our system performs ranking on the softmax output of the trained CNN.
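To make the fixed input size and the output shapes of Table 1 concrete, here is a small sketch. It assumes that cutting and zero-padding are applied directly to the cross-similarity matrix (the text does not say whether padding happens on the matrix or on the audio), and the helper names are hypothetical. The shape calculation assumes that each 2 × 2 max-pooling halves the spatial size, rounding down, which is what the output shapes listed in Table 1 imply; the "same" convolutions leave the spatial size unchanged.

```python
import numpy as np

INPUT_SIZE = 180  # first 180 s at one chroma frame per second
# Channels produced by the last convolution of blocks 1-5 in Table 1
BLOCK_CHANNELS = [32, 16, 16, 16, 16]


def fit_to_input_size(s: np.ndarray, n: int = INPUT_SIZE) -> np.ndarray:
    """Cut an L x M cross-similarity matrix to n x n, zero-padding if shorter.

    Hypothetical helper: padding the matrix (not the audio) is an assumption.
    """
    out = np.zeros((n, n), dtype=s.dtype)
    rows, cols = min(n, s.shape[0]), min(n, s.shape[1])
    out[:rows, :cols] = s[:rows, :cols]
    return out


def block_output_shapes(input_shape=(1, INPUT_SIZE, INPUT_SIZE)):
    """(channels, height, width) after each convolutional block of Table 1."""
    _, h, w = input_shape
    shapes = [tuple(input_shape)]
    for channels in BLOCK_CHANNELS:
        # "same" convolutions keep h and w; 2 x 2 max-pooling halves them, rounding down
        h, w = h // 2, w // 2
        shapes.append((channels, h, w))
    return shapes
```

Here `block_output_shapes()` yields (1, 180, 180), (32, 90, 90), (16, 45, 45), (16, 22, 22), (16, 11, 11), (16, 5, 5), matching the table; the last 16 × 5 × 5 = 400 values are presumably flattened before FC(256).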
We first take the cover-likelihood vector over all cover candidates. We then sort this vector in descending order to rank the top-N most likely covers.

4 Experimental Results

4.1 Data set

We use an evaluation data set provided by Heo et al. [2017]. The data set resembles that used for the MIREX¹ cover song identification task. It consists of 330 cover songs that make up the query set and 670 dummy songs with no covers in the collection. The 330 query songs comprise 30 different works, each with 11 different versions, so that each query song has 10 ground-truth covers. Thus, the data set yields test examples of 3,300 cover pairs and 496,200 non-cover pairs. The training set consists of 2,113 cover pairs and 2,113 non-cover pairs, and the held-out validation set consists of 322 cover pairs and 322 non-cover pairs. These data sets are disjoint. The audio files contain popular Korean music released from 1980 to 2016, recorded in stereo with a sampling rate of 44,100 Hz.

¹ http://www.music-ir.org/mirex/wiki/

4.2 Training

Before training, we standardized the input cross-similarity matrices to zero mean and unit variance for feature scaling. We trained the CNN with a total of 4,226 cross-similarity matrices (class-balanced between cover and non-cover). The CNN was implemented with the Keras framework and run on a single-GPU cloud server. Using the Adam optimizer (Kingma and Ba [2014]), training was stopped when the cross-entropy loss converged (tolerance < 10^−4). Using a nested grid search, we optimized the two dropout hyperparameters, denoted as DropOut p and DropOut q in Table 1. Without looking at test set accuracy, we achieved a final validation accuracy of 83.4% with DropOut p (0.5) and DropOut q (0.5).

4.3 Results

We evaluated the proposed system using the metrics proposed in MIREX for the audio cover song identification task:
• MNIT10: mean number of covers identified in the top 10.
• MAP: mean average precision.
• MR1: mean rank of the first correctly identified cover.

Here, MNIT10 was calculated as {total number of correctly identified covers in the top 10} divided by {total number of ground-truth covers (= 3,300)}.

Table 2: Performance of audio cover song identification
Model                                         | MNIT10 | MAP  | MR1
SimPLe (Silva et al. [2016])                  | 6.8    | 0.66 | 5.6
SimPLe + Metric Learning (Heo et al. [2017])  | 7.9    | 0.81 | 15.1
CNN (proposed)                                | 8.04   | 0.84 | 2.50

In Table 2, we compare our system with two baseline algorithms: Silva et al. [2016], a rule-based algorithm, and Heo et al. [2017], a metric learning-based algorithm. The proposed CNN achieved the largest MNIT10, meaning that its search results contained 8.04 correct covers out of 10 on average. With respect to MNIT10 and MAP (where larger is better), the proposed CNN showed precision competitive with the two compared algorithms. With respect to MR1 (where smaller is better), the proposed CNN achieved an 80.10% improvement over SimPLe, the second-best algorithm. A smaller MR1 implies that ground-truth covers appear more consistently among the top search results. The effect of varying the input length of each song has not yet been examined. However, the proposed system, which compares only the first 180 s, achieved better performance than all other systems, which compare the entire lengths of the input songs.

5 Conclusions and Future Work

We proposed a convolutional neural network-based approach to audio cover song identification. Our assumption was that the cross-similarity matrix of a pair of songs could exhibit a meaningful pattern. Based on this, we trained the CNN on cross-similarity matrices in the same manner as a binary image classifier. By ranking the softmax output of the trained CNN, the proposed system was able to predict a fixed number of the most likely cover song pairs.
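The ranking stage and the three evaluation metrics of Section 4.3 can be sketched as follows. This is a hypothetical illustration, not the evaluation code behind Table 2: it assumes that each query comes with a vector of cover likelihoods (the softmax output for the cover class) over all candidates other than itself, that every query has at least one ground-truth cover, and it computes MNIT10 as the mean number of covers found in each query's top 10, following the bullet-list definition.

```python
import numpy as np


def rank_candidates(cover_prob: np.ndarray) -> np.ndarray:
    """Candidate indices sorted by descending cover likelihood."""
    return np.argsort(-cover_prob, kind="stable")


def query_metrics(ranking: np.ndarray, covers: set, k: int = 10):
    """Covers in the top k, average precision, and rank of the first cover."""
    hits = np.isin(ranking, list(covers))
    ranks = np.flatnonzero(hits) + 1  # 1-based ranks of the ground-truth covers
    n_top_k = int(hits[:k].sum())
    # precision at the rank of each cover, averaged over all covers of the query
    precisions = np.arange(1, len(ranks) + 1) / ranks
    avg_precision = precisions.sum() / len(covers)
    return n_top_k, avg_precision, int(ranks[0])  # assumes at least one cover


def evaluate(cover_probs, ground_truth, k: int = 10):
    """Return (MNIT10, MAP, MR1) averaged over all queries."""
    stats = [query_metrics(rank_candidates(p), g, k)
             for p, g in zip(cover_probs, ground_truth)]
    n_top, ap, first = zip(*stats)
    return float(np.mean(n_top)), float(np.mean(ap)), float(np.mean(first))
```

With two toy queries, likelihoods `[0.9, 0.2, 0.7, 0.1]` with covers `{2, 3}` and `[0.1, 0.8, 0.3, 0.5]` with cover `{1}`, `evaluate` returns (1.5, 0.75, 1.5).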
The performance of the proposed system was compared with that of a rule-based approach and another machine learning-based approach. Although the current study showed promising results, there is much room for improvement, particularly in finding a more suitable CNN design, tuning hyperparameters, and increasing the size of the training data set with flexible input feature lengths. Furthermore, we did not apply any of the embedding techniques that are necessary for large-scale cover song search. Exploring these is left for future work.

Acknowledgments

This work was supported by Kakao and Kakao Brain corporations.

References

Joan Serra, Xavier Serra, and Ralph G. Andrzejak. Cross recurrence quantification for cover song identification. New Journal of Physics, 11(9):093017, 2009.
Meinard Müller and Frank Kurth. Towards structural analysis of audio recordings in the presence of musical variations. EURASIP Journal on Advances in Signal Processing, 2007(1):089686, 2006.
Meinard Müller and Sebastian Ewert. Towards timbre-invariant audio features for harmony-based music. IEEE Transactions on Audio, Speech, and Language Processing, 18(3):649–662, 2010.
Joan Serra, Emilia Gómez, Perfecto Herrera, and Xavier Serra. Chroma binary similarity and local alignment applied to cover song identification. IEEE Transactions on Audio, Speech, and Language Processing, 16(6):1138–1151, 2008a.
Daniel P. W. Ellis and C. Cotton. The 2007 LabROSA cover song detection system. MIREX extended abstract, 2007.
Diego F. Silva, Chin-Chin M. Yeh, Gustavo Enrique de Almeida Prado Alves Batista, Eamonn Keogh, et al. SiMPle: Assessing music similarity using subsequences joins. In International Society for Music Information Retrieval Conference, XVII. International Society for Music Information Retrieval (ISMIR), 2016.
Kang Cai, Deshun Yang, and Xiaoou Chen. Cross-similarity measurement of music sections: A framework for large-scale cover song identification. In Proceedings of the Twelfth International Conference on Intelligent Information Hiding and Multimedia Signal Processing, Nov. 21–23, 2016, Kaohsiung, Taiwan, Volume 1, pages 151–158. Springer, 2017.
Eric J. Humphrey, Oriol Nieto, and Juan Pablo Bello. Data driven and discriminative projections for large-scale cover song identification. In ISMIR, pages 149–154, 2013.
Hoon Heo, Hyunwoo J. Kim, Wan Soo Kim, and Kyogu Lee. Cover song identification with metric learning using distance as a feature. In ISMIR, 2017.
Jason V. Davis, Brian Kulis, Prateek Jain, Suvrit Sra, and Inderjit S. Dhillon. Information-theoretic metric learning. In Proceedings of the 24th International Conference on Machine Learning, pages 209–216. ACM, 2007.
Ning Hu, Roger B. Dannenberg, and George Tzanetakis. Polyphonic audio matching and alignment for music retrieval. In 2003 IEEE Workshop on Applications of Signal Processing to Audio and Acoustics, pages 185–188. IEEE, 2003.
Joan Serra, Emilia Gómez, and Perfecto Herrera. Transposing chroma representations to a common key. In IEEE CS Conference on The Use of Symbols to Represent Music and Multimedia Objects, pages 45–48, 2008b.
Hiroaki Sakoe and Seibi Chiba. Dynamic programming algorithm optimization for spoken word recognition. IEEE Transactions on Acoustics, Speech, and Signal Processing, 26(1):43–49, 1978.
Jouni Paulus, Meinard Müller, and Anssi Klapuri. State of the art report: Audio-based music structure analysis. In ISMIR, pages 625–636, 2010.
Alex Krizhevsky, Ilya Sutskever, and Geoffrey E. Hinton. ImageNet classification with deep convolutional neural networks. In Advances in Neural Information Processing Systems, pages 1097–1105, 2012.
Sergey Ioffe and Christian Szegedy. Batch normalization: Accelerating deep network training by reducing internal covariate shift. In International Conference on Machine Learning, pages 448–456, 2015.
Diederik Kingma and Jimmy Ba. Adam: A method for stochastic optimization. arXiv preprint arXiv:1412.6980, 2014.
