Generative Adversarial Networks in Human Emotion Synthesis: A Review

Synthesizing realistic data samples is of great value to both the academic and industrial communities. Deep generative models have become an emerging topic in research areas such as computer vision and signal processing. Affective computing, a topic of broad interest in the computer vision community, is no exception and has benefited from generative models. In fact, affective computing has seen rapid development of generative models over the last two decades. Applications of such models include, but are not limited to, emotion recognition and classification, unimodal emotion synthesis, and cross-modal emotion synthesis. We therefore review recent advances in human emotion synthesis, covering the available databases, the advantages and disadvantages of the generative models, and the related training strategies for the two principal human communication modalities, namely audio and video. In this context, facial expression synthesis, speech emotion synthesis, and audio-visual (cross-modal) emotion synthesis are reviewed extensively under different application scenarios. Finally, we discuss open research problems to push the boundaries of this research area in future work.

💡 Research Summary

This review paper provides a comprehensive examination of how Generative Adversarial Networks (GANs) have been applied to human emotion synthesis across the two primary communication modalities—audio and visual—and their cross‑modal integration. The authors begin by outlining the rapid growth of generative modeling in the past two decades, emphasizing its relevance to affective computing for tasks such as data augmentation, emotion recognition, and synthetic data generation. A concise technical background on GANs is presented, covering the vanilla min‑max formulation, the Jensen‑Shannon divergence objective, and the challenges inherent to saddle‑point optimization, mode collapse, and training instability.
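For reference, the vanilla min‑max formulation mentioned above is usually written as follows. The notation is the standard one from the GAN literature and is an assumption here, since the summary does not reproduce the review's own symbols: $D$ is the discriminator, $G$ the generator, $p_{\text{data}}$ the data distribution, $p_z$ the noise prior, and $p_g$ the distribution of generated samples.

$$
\min_G \max_D V(D, G) = \mathbb{E}_{x \sim p_{\text{data}}}\left[\log D(x)\right] + \mathbb{E}_{z \sim p_z}\left[\log\left(1 - D(G(z))\right)\right]
$$

For a fixed generator, the optimal discriminator is $D^*(x) = \dfrac{p_{\text{data}}(x)}{p_{\text{data}}(x) + p_g(x)}$. Substituting it back gives the link to the Jensen‑Shannon divergence:

$$
\max_D V(D, G) = -\log 4 + 2\,\mathrm{JSD}\left(p_{\text{data}} \,\|\, p_g\right)
$$

so the generator's objective is minimized exactly when $p_g = p_{\text{data}}$.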

The survey then categorizes the major GAN variants that have been leveraged for emotion synthesis. Conditional GANs (CGANs) introduce auxiliary information (e.g., emotion labels) to guide generation, while Laplacian Pyramid GANs (LAPGANs) adopt a multi‑scale approach for high‑resolution outputs. Stacked GANs (SGANs) and deep convolutional GANs (DCGANs) improve architectural depth and feature learning, and more recent models such as StyleGAN, Spectral‑Norm GAN, and CapsuleGAN incorporate advanced normalization, latent‑space manipulation, and capsule routing to enhance sample fidelity and diversity.
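One common way a conditional GAN injects an emotion label is to append a one‑hot encoding of the label to the generator's noise vector. The sketch below illustrates only that input construction; the label set `EMOTIONS`, the helper names, and the noise dimension are hypothetical and are not taken from the review.

```python
import random

# Hypothetical emotion label set, chosen only for illustration.
EMOTIONS = ["neutral", "happy", "sad", "angry"]


def one_hot(label, classes):
    """Return a one-hot list with 1.0 at the position of `label`."""
    return [1.0 if c == label else 0.0 for c in classes]


def conditional_generator_input(noise, label, classes=EMOTIONS):
    """Concatenate a noise vector with a one-hot emotion label.

    The result is what a conditional generator would receive as input
    under the concatenation scheme assumed here.
    """
    if label not in classes:
        raise ValueError(f"unknown emotion label: {label!r}")
    return list(noise) + one_hot(label, classes)


rng = random.Random(0)
z = [rng.gauss(0.0, 1.0) for _ in range(8)]   # 8-dimensional noise vector
g_in = conditional_generator_input(z, "happy")
print(len(g_in), g_in[8:])
```

In the same scheme the discriminator typically receives the label alongside the real or generated sample, so that it judges both realism and agreement with the requested emotion.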

The core of the review is organized around three synthesis domains:

-

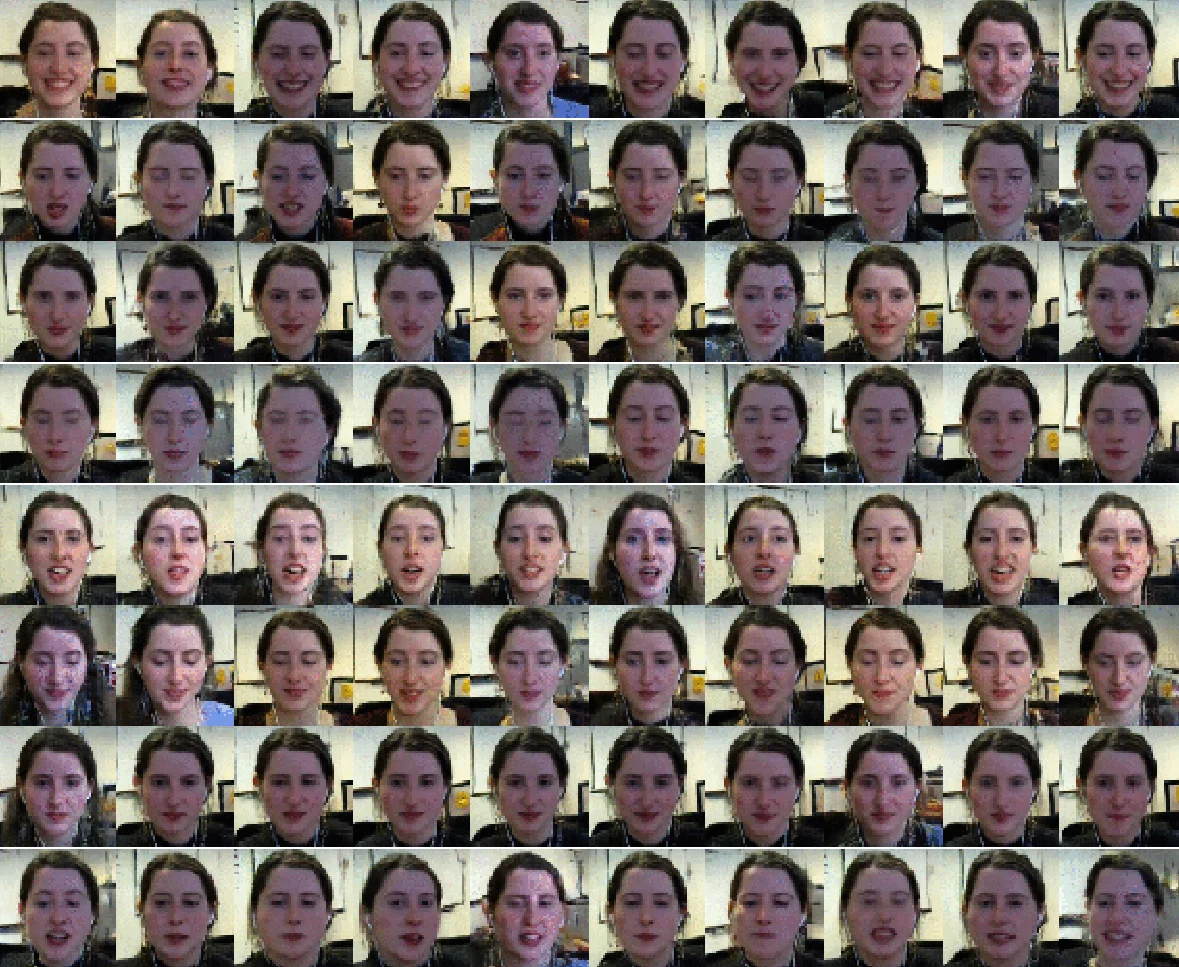

Facial Expression Synthesis – The authors discuss large‑scale image datasets (AffectNet, FER2013, CelebA) and highlight image‑to‑image translation frameworks (Pix2Pix, CycleGAN) as well as high‑resolution generators (StyleGAN). They evaluate performance using quantitative metrics (FID, IS) and qualitative human studies, noting the importance of preserving fine‑grained facial dynamics, handling pose and illumination variations, and ensuring cross‑subject generalization.

-

Speech Emotion Synthesis – For audio, the paper surveys waveform‑level GANs (WaveGAN, MelGAN) and GAN‑enhanced text‑to‑speech pipelines (Parallel WaveGAN, GAN‑TTS). It examines benchmark corpora (RAVDESS, CREMA‑D, IEMOCAP) and assessment criteria such as PESQ, MOS, and emotion classification consistency. Key challenges include maintaining spectral realism while embedding target affective states, and mitigating artifacts that degrade intelligibility.

-

Audio‑Visual (Cross‑Modal) Emotion Synthesis – The authors review multimodal datasets (AVEC, SEWA) and describe dual‑stream or shared‑latent GAN architectures that jointly generate synchronized facial movements and corresponding prosodic cues. Attention mechanisms and cross‑modal conditioning are identified as crucial for preserving temporal alignment and emotional coherence across modalities.
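The FID metric listed under facial expression synthesis is commonly defined as the Fréchet distance between two Gaussians fitted to Inception‑network features of real and generated images. The symbols below are the conventional ones and are an assumption here, not notation from the review: $(\mu_r, \Sigma_r)$ and $(\mu_g, \Sigma_g)$ are the feature mean and covariance for real and generated samples.

$$
\mathrm{FID} = \lVert \mu_r - \mu_g \rVert_2^2 + \operatorname{Tr}\left(\Sigma_r + \Sigma_g - 2\left(\Sigma_r \Sigma_g\right)^{1/2}\right)
$$

Lower values indicate closer feature statistics, and the distance is zero when the two means and covariances coincide.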

Throughout, the paper details training strategies designed to alleviate GAN pitfalls: label smoothing, two‑time‑scale update rules, pre‑training discriminators, and the use of perceptual or auxiliary losses. It also points out systemic limitations: dataset bias (cultural, linguistic, age diversity), real‑time generation constraints, difficulty in capturing subtle affective nuances, and ethical concerns surrounding synthetic emotional media.
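Two of the training strategies named above can be sketched in a few lines. The example assumes one‑sided label smoothing (real targets softened to 0.9, fake targets left at 0), which is one common variant and not necessarily the one the review describes. The learning rates shown for the two‑time‑scale update rule are illustrative values only.

```python
import math


def bce(prob, target, eps=1e-12):
    """Binary cross-entropy for a single predicted probability."""
    p = min(max(prob, eps), 1.0 - eps)  # clip to avoid log(0)
    return -(target * math.log(p) + (1.0 - target) * math.log(1.0 - p))


def discriminator_loss(real_probs, fake_probs, real_target=0.9):
    """Discriminator loss with one-sided label smoothing.

    real_probs: discriminator outputs D(x) on real samples
    fake_probs: discriminator outputs D(G(z)) on generated samples
    real_target: softened target for real samples (1.0 disables smoothing)
    """
    real_loss = sum(bce(p, real_target) for p in real_probs) / len(real_probs)
    fake_loss = sum(bce(p, 0.0) for p in fake_probs) / len(fake_probs)
    return real_loss + fake_loss


# Two-time-scale update rule: discriminator and generator get separate
# learning rates. These specific values are assumptions for illustration.
LEARNING_RATES = {"discriminator": 4e-4, "generator": 1e-4}

confident = discriminator_loss([0.999], [0.001])   # near-certain on real data
calibrated = discriminator_loss([0.9], [0.001])    # matches the soft target
print(confident > calibrated)
```

With smoothing enabled, a discriminator that outputs probabilities close to 1 on real data incurs a higher loss than one that outputs the softened target, which discourages overconfident predictions.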

To address these gaps, the authors propose several future research directions: (i) variational Bayesian GANs for explicit uncertainty modeling; (ii) reinforcement‑learning feedback loops that reward emotional consistency; (iii) multi‑task learning to leverage scarce labeled data; (iv) integration of differential privacy and cryptographic techniques to protect personal affective information; and (v) community‑driven standardization of benchmark protocols and evaluation metrics.

In conclusion, the review underscores the transformative potential of GAN‑based emotion synthesis for data augmentation, virtual avatar creation, human‑computer interaction, and clinical decision support, while calling for coordinated efforts between academia and industry to develop robust, ethical, and scalable solutions.
