ProbMinHash -- A Class of Locality-Sensitive Hash Algorithms for the (Probability) Jaccard Similarity

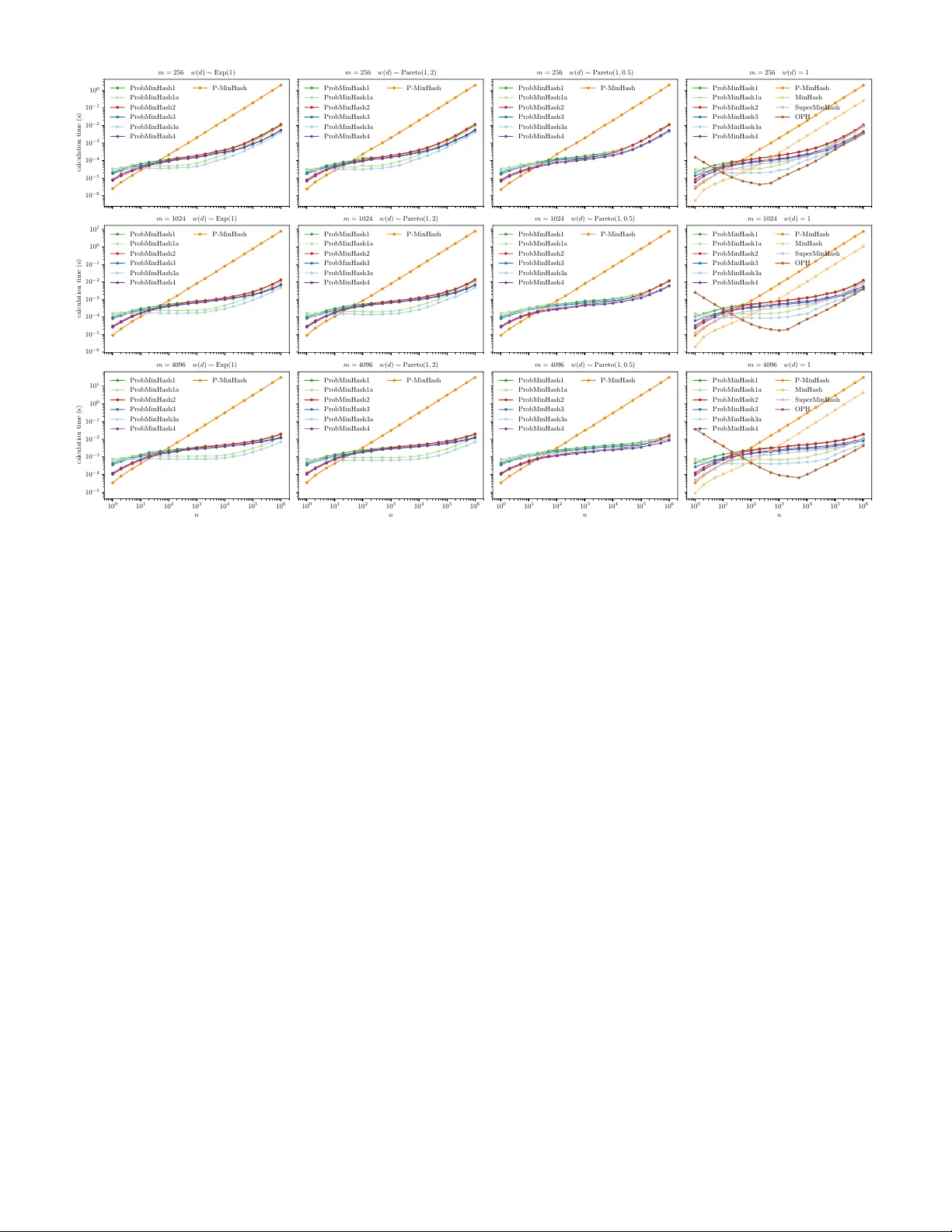

The probability Jaccard similarity was recently proposed as a natural generalization of the Jaccard similarity to measure the proximity of sets whose elements are associated with relative frequencies or probabilities. In combination with a hash algor…

Authors: Otmar Ertl