Continuous Meta-Learning without Tasks

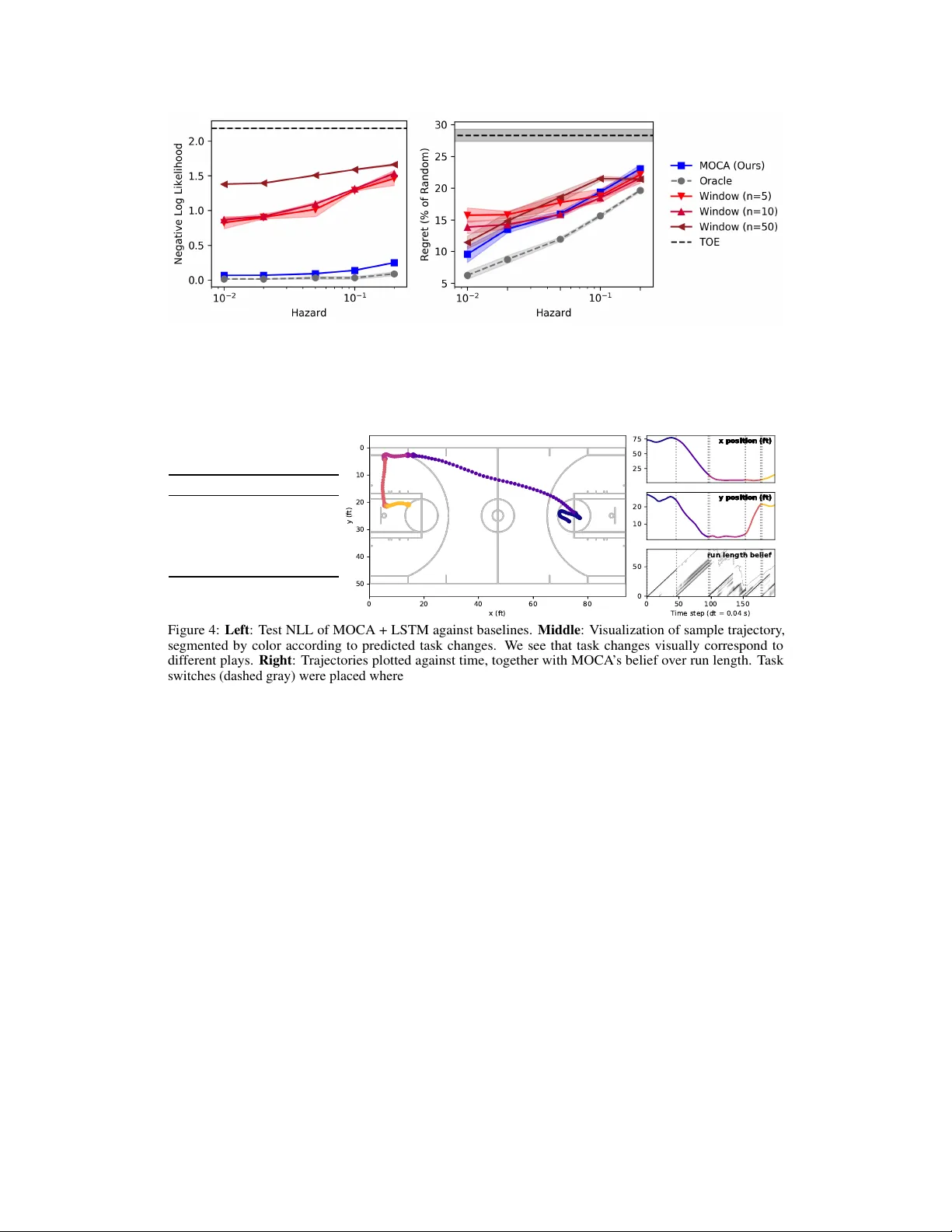

Meta-learning is a promising strategy for learning to efficiently learn within new tasks, using data gathered from a distribution of tasks. However, the meta-learning literature thus far has focused on the task segmented setting, where at train-time,…

Authors: James Harrison, Apoorva Sharma, Chelsea Finn