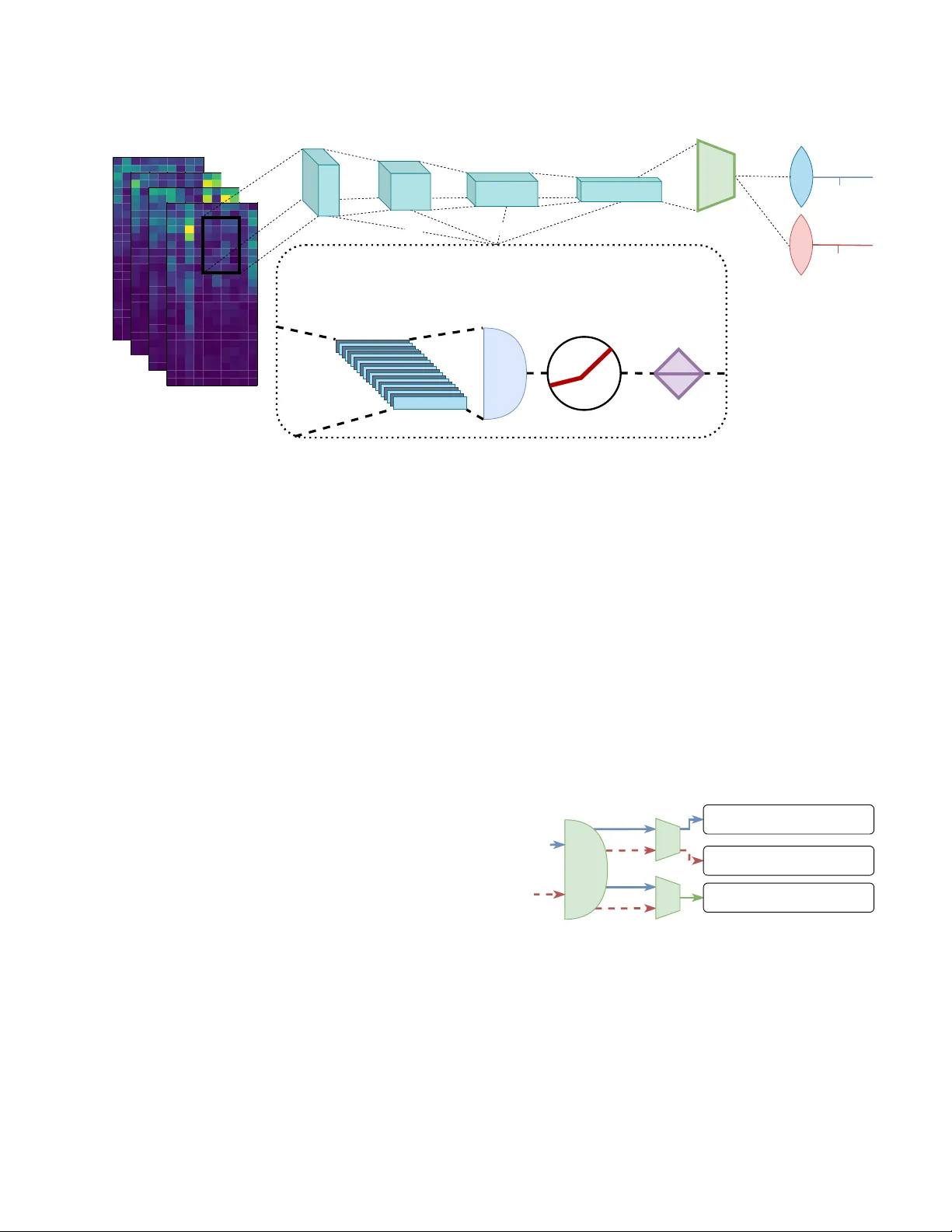

Unsupervised Domain Adversarial Self-Calibration for Electromyographic-based Gesture Recognition

Surface electromyography (sEMG) provides an intuitive and non-invasive interface from which to control machines. However, preserving the myoelectric control system's performance over multiple days is challenging, due to the transient nature of the si…

Authors: Ulysse Côté-Allard, Gabriel Gagnon-Turcotte, Angkoon Phinyomark