Vrengt: A Shared Body-Machine Instrument for Music-Dance Performance

💡 Research Summary

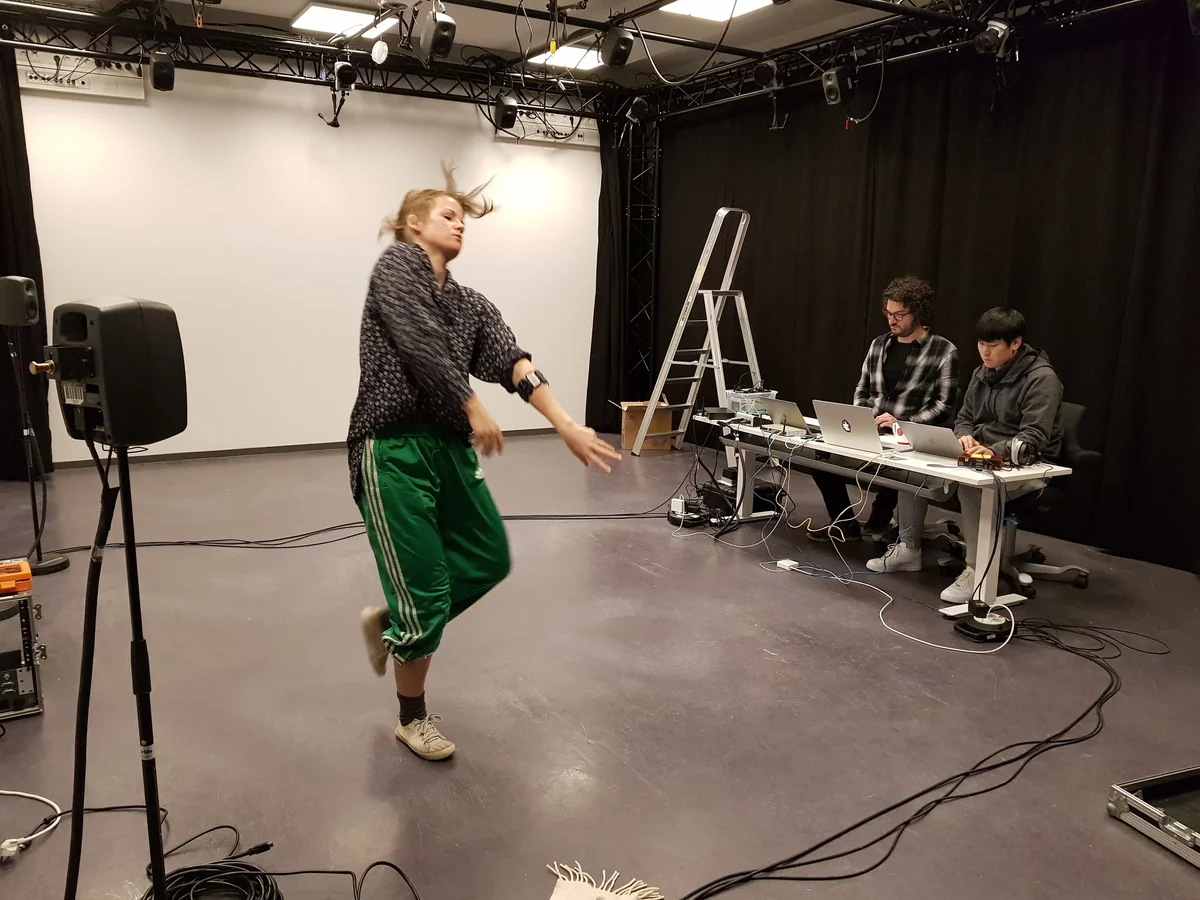

The paper presents Vrengt, a shared body‑machine instrument designed to enable a true partnership between a dancer and a musician by allowing both performers to control the same sonic parameters in real time. The authors adopt a participatory design methodology, iteratively involving the dancer and the musician throughout the development process. The system captures the dancer’s physiological signals using two Myo armbands (one on the left forearm, one on the right calf) that record electromyographic (EMG) activity at 200 Hz, and a wireless headset microphone that records breathing. These signals are transmitted via Bluetooth Low Energy to two laptops running Max/MSP patches. In Max, raw EMG data are rectified, smoothed, and reduced to mean absolute value (MAV) features, which are then classified into micro‑, meso‑, and macro‑motion levels.
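The EMG feature chain described above (rectify, smooth, reduce to MAV, classify by motion level) can be sketched roughly as follows. This is an illustrative Python sketch, not the authors' Max/MSP patch: the 40-sample window (200 ms at 200 Hz), the threshold values, and the names `mav_stream` and `classify_level` are all assumptions, and the input is assumed to be normalised so that MAV falls in [0, 1].

```python
from collections import deque


def mav_stream(samples, window=40):
    """Yield the mean absolute value (MAV) over a sliding window.

    window=40 corresponds to 200 ms at 200 Hz; this length is an
    assumption, not a value reported in the summary.
    """
    buf = deque(maxlen=window)
    total = 0.0
    for s in samples:
        rectified = abs(s)          # full-wave rectification
        if len(buf) == window:
            total -= buf[0]         # oldest sample is about to be dropped
        buf.append(rectified)
        total += rectified
        yield total / len(buf)      # moving average acts as the smoother


def classify_level(mav, micro_max=0.05, meso_max=0.3):
    """Map a normalised MAV value to a motion level.

    The two thresholds are hypothetical placeholders.
    """
    if mav < micro_max:
        return "micro"
    if mav < meso_max:
        return "meso"
    return "macro"


if __name__ == "__main__":
    emg = [0.0, 0.02, -0.03, 0.4, -0.5, 0.6]
    for value in mav_stream(emg, window=3):
        print(round(value, 3), classify_level(value))
```

In practice the thresholds would likely be tuned per performer and per muscle, since EMG amplitude varies with electrode placement.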

Mapping is guided by a “spatio‑temporal matrix” and focuses on sonic micro‑interaction. The authors map each motion level to physically‑informed sound synthesis objects from the Sound Design Toolkit (SDT): friction, scraping, and fluid‑flow (bubble) models. For example, calf muscle activity modulates the force, stiffness, and velocity parameters of a friction model, producing a “squeaking” sound, while forearm activity controls bubble radius, rise factor, and density, yielding water‑like sounds. The breathing signal is processed through a chain of Schroeder reverbs and multiple delay lines, creating sustained, resonant feedback loops that the dancer can hear while performing.
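As a rough illustration of the one-to-many mapping described above, the sketch below scales a single normalised calf-activity value onto the three friction parameters named in the text. The parameter ranges, the dictionary `FRICTION_RANGES`, and the function names are hypothetical; they are not taken from the paper or from the SDT documentation, and the actual mapping may well be nonlinear.

```python
def scale(x, in_lo, in_hi, out_lo, out_hi):
    """Linearly map x from [in_lo, in_hi] to [out_lo, out_hi].

    The input is clamped to the input range first.
    """
    x = min(max(x, in_lo), in_hi)
    t = (x - in_lo) / (in_hi - in_lo)
    return out_lo + t * (out_hi - out_lo)


# Hypothetical output ranges for illustration only.
FRICTION_RANGES = {
    "force": (0.0, 1.0),
    "stiffness": (100.0, 1000.0),
    "velocity": (0.0, 0.5),
}


def friction_params(calf_mav):
    """Fan one normalised MAV value out to all friction parameters."""
    return {
        name: scale(calf_mav, 0.0, 1.0, lo, hi)
        for name, (lo, hi) in FRICTION_RANGES.items()
    }


if __name__ == "__main__":
    print(friction_params(0.5))
```

The same helper could serve the forearm-to-bubble mapping by swapping in a different range table.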

A custom virtual mixer serves as the main user interface for the musician. It allows real‑time adjustment of volume, panning, effects, and data scaling for each sound object, effectively letting the musician shape the sonic landscape while the dancer supplies the gestural input. This design echoes Alvin Lucier’s “Music for Solo Performer,” but updates it for a digital, multi‑sensor context. The musician’s decisions steer the dancer, who describes her experience as “moving through listening,” establishing a feedback loop where sound influences movement and vice versa.
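One channel of such a mixer might be modelled as below: a data-scaling stage on the incoming control value, followed by gain and panning on the audio output. This is a minimal sketch under stated assumptions. The `Channel` class, its field names, and the equal-power pan law are illustrative choices, not details given in the summary, and effects are omitted.

```python
import math
from dataclasses import dataclass


@dataclass
class Channel:
    gain: float = 1.0        # linear volume
    pan: float = 0.0         # -1 = hard left, 0 = centre, +1 = hard right
    data_scale: float = 1.0  # multiplier applied to incoming control data

    def control(self, value):
        """Scale a normalised control value and clamp it to [0, 1]."""
        return min(max(value * self.data_scale, 0.0), 1.0)

    def stereo(self, sample):
        """Return (left, right) using an equal-power pan law."""
        angle = (self.pan + 1.0) * math.pi / 4.0
        left = sample * self.gain * math.cos(angle)
        right = sample * self.gain * math.sin(angle)
        return left, right


if __name__ == "__main__":
    friction = Channel(gain=0.8, pan=-0.5, data_scale=2.0)
    print(friction.control(0.3), friction.stereo(1.0))
```

Raising `data_scale` lets the musician make small muscle activations produce large parameter changes, which is one plausible way of steering what the dancer hears.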

The performance structure consists of three sections: (1) Breath – the dancer, blindfolded, explores acoustic feedback loops driven by proximity to speakers, modulated by the musician; (2) Standstill – micro‑motions are sonified, allowing the audience to hear the neural commands that cause muscle contraction even when the dancer appears still; (3) Musicking – both performers engage fully, with the dancer accessing the musician’s skills and the musician responding to the dancer’s bodily cues. This progression moves the audience from awareness of breath patterns to perception of invisible micromotion, culminating in a joint music‑making process.

Two public performances (a large auditorium and a club setting) demonstrated the system’s flexibility, including an extended version with an additional musician and visual artist. Subjective evaluations reveal that the dancer felt the instrument blurred the line between stillness and sound, while the musician noted that stepping into the dancer’s athletic context required a shift in mindset but ultimately offered a rich, ecological interaction space. Metaphoric language (“squeezing a wet sponge,” “planting deeply”) emerged as a shared vocabulary, facilitating communication about the embodied sonic experience.

In discussion, the authors argue that Vrengt transcends traditional “music‑for‑dance” or “dance‑for‑music” paradigms, which often treat one performer as the primary sound source and the other as a visual element. By making body‑generated signals first‑class sound sources and giving the musician real‑time control over their mapping, the system creates a genuinely shared instrument where body and machine co‑compose. The work contributes to the fields of sound and music computing, performing arts, and human‑centered design by demonstrating how low‑level physiological data can be sonified, mapped, and mixed collaboratively, opening new avenues for embodied, interactive performance.
