Dynamic Pricing and Fleet Management for Electric Autonomous Mobility on Demand Systems

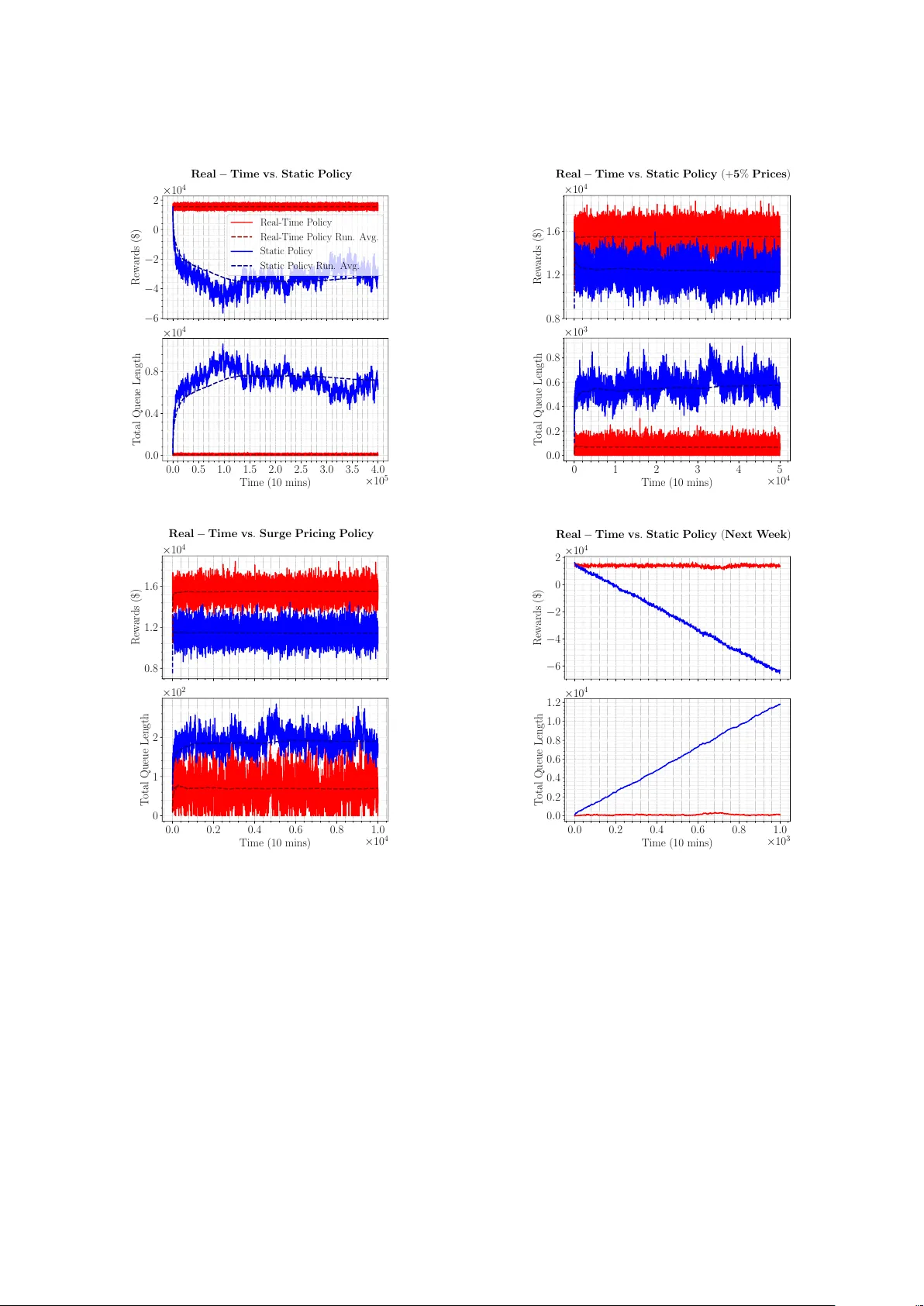

The proliferation of ride-sharing systems is a major driver of advances in autonomous and electric vehicle technologies. This paper considers the joint routing, battery charging, and pricing problem faced by a profit-maximizing transportation s…

Authors: Berkay Turan, Ramtin Pedarsani, Mahnoosh Alizadeh