Exploring Adversarial Attack in Spiking Neural Networks with Spike-Compatible Gradient

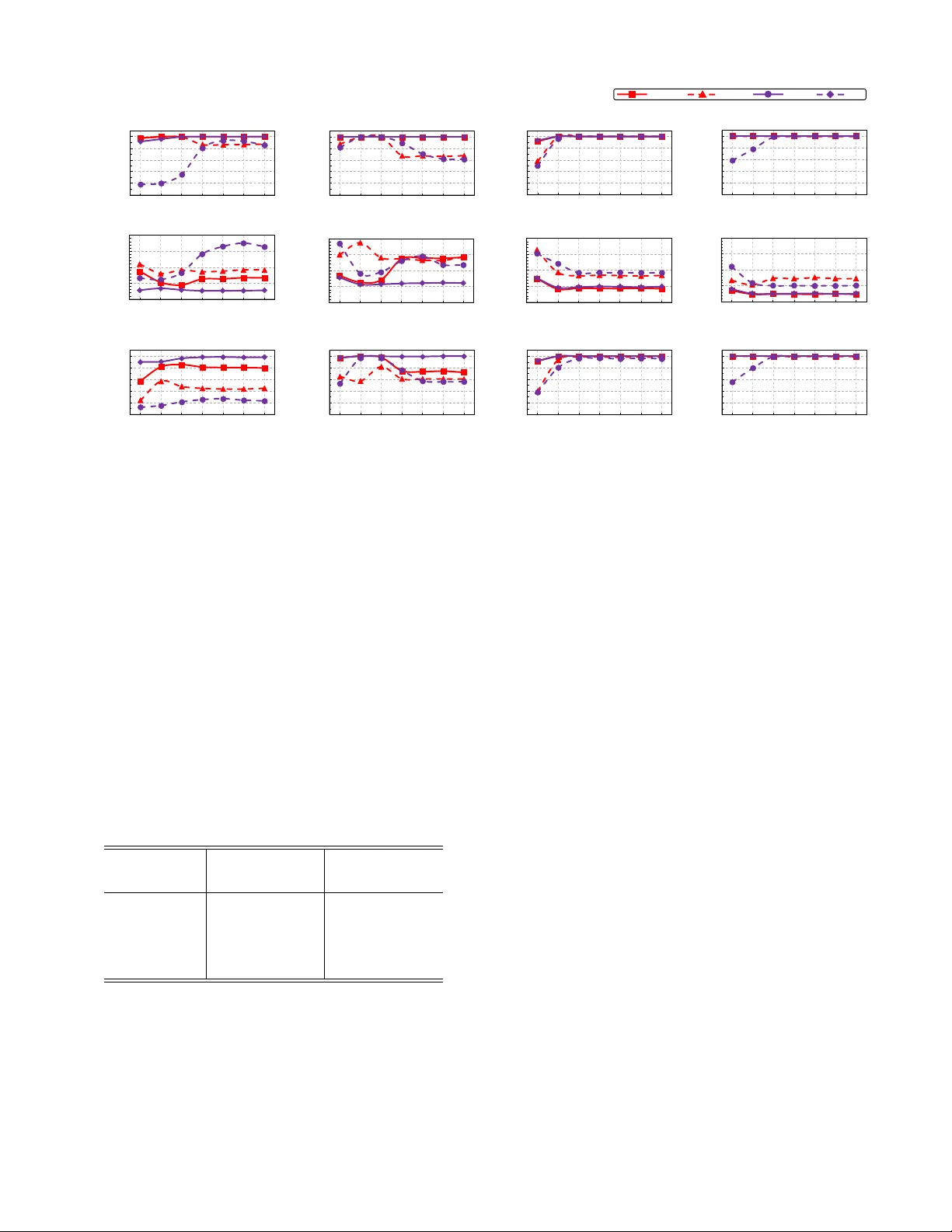

Recently, learning algorithms inspired by backpropagation through time (BPTT) have been widely introduced into spiking neural networks (SNNs) to improve their performance. This also makes it possible to attack these models accurately, given spatio-temporal gradient maps. We propose two approache…
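The two approaches are cut off above, so the sketch below does not reproduce the authors' method. It only illustrates what a spatio-temporal gradient map is and why binary spike inputs complicate a gradient attack. Several modeling choices are assumptions made for illustration: a single layer of leaky integrate-and-fire (LIF) neurons with reset by subtraction, a rectangular surrogate derivative for the spike function, and a loss equal to the spike count of one output neuron. The helper names (`lif_forward`, `input_gradient_map`, `flip_top_k`) are hypothetical. The flipping rule is a simple first-order heuristic that keeps the perturbed input binary.

```python
import numpy as np

def rect_surrogate(u, v_th, width=1.0):
    # Assumed rectangular surrogate for dH(u - v_th)/du: 1/width inside a window around threshold.
    return (np.abs(u - v_th) < width / 2).astype(float) / width

def lif_forward(x, W, decay=0.5, v_th=1.0):
    """x: (T, N_in) binary spike trains; W: (N_out, N_in).
    Assumed dynamics: u[t] = decay * u[t-1] - v_th * s[t-1] + W @ x[t], s[t] = H(u[t] - v_th)."""
    T, N_out = x.shape[0], W.shape[0]
    u = np.zeros((T, N_out))
    s = np.zeros((T, N_out))
    u_prev, s_prev = np.zeros(N_out), np.zeros(N_out)
    for t in range(T):
        u[t] = decay * u_prev - v_th * s_prev + W @ x[t]
        s[t] = (u[t] >= v_th).astype(float)
        u_prev, s_prev = u[t], s[t]
    return u, s

def input_gradient_map(x, W, target, decay=0.5, v_th=1.0):
    """BPTT with a surrogate gradient for L = sum_t s[t, target].
    Returns the spatio-temporal gradient map dL/dx with shape (T, N_in)."""
    u, _ = lif_forward(x, W, decay, v_th)
    T, N_out = u.shape
    grad_x = np.zeros_like(x, dtype=float)
    grad_u_next = np.zeros(N_out)  # dL/du[t+1]; zero beyond the last time step
    for t in reversed(range(T)):
        dL_ds = np.zeros(N_out)
        dL_ds[target] = 1.0                  # direct contribution of s[t] to the spike count
        dL_ds += -v_th * grad_u_next         # s[t] lowers u[t+1] through the reset term
        # u[t] feeds s[t] (via the surrogate) and u[t+1] (via the leak).
        grad_u = dL_ds * rect_surrogate(u[t], v_th) + decay * grad_u_next
        grad_x[t] = W.T @ grad_u             # u[t] depends on x[t] through W
        grad_u_next = grad_u
    return grad_x

def flip_top_k(x, grad, k):
    """Hypothetical spike-compatible step: flip at most k bits whose first-order
    effect on L is most negative, so the adversarial input stays binary."""
    delta = (grad * (1 - 2 * x)).ravel()     # predicted change in L if each bit is flipped
    order = np.argsort(delta)[:k]
    order = order[delta[order] < 0]          # only flip bits predicted to reduce L
    x_adv = x.astype(float).ravel()          # astype returns a copy, so x is not modified
    x_adv[order] = 1.0 - x_adv[order]
    return x_adv.reshape(x.shape)

# Usage on random spike trains (T = 8 time steps, 5 inputs, 3 outputs).
rng = np.random.default_rng(0)
x = (rng.random((8, 5)) < 0.3).astype(float)
W = rng.normal(0.5, 0.5, size=(3, 5))
g = input_gradient_map(x, W, target=0)
x_adv = flip_top_k(x, g, k=4)
```

The gradient map `g` is real-valued, but the input must remain a spike train, which is why a direct gradient-sign step cannot be applied as-is. The bit-flipping rule here is just one simple way to stay in the binary domain.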

Authors: Ling Liang, Xing Hu, Lei Deng