An Overview on Optimal Flocking

The study of robotic flocking has received considerable attention in the past twenty years. As we begin to deploy flocking control algorithms on physical multi-agent and swarm systems, there is an increasing necessity for rigorous promises on safety and performance. In this paper, we present an overview of the literature focusing on optimization approaches to achieve flocking behavior that provide strong safety guarantees. We separate the literature into cluster and line flocking, and categorize cluster flocking with respect to the system-level objective, which may be realized by a reactive or planning control algorithm. We also categorize the line flocking literature by the energy-saving mechanism that is exploited by the agents. We present several approaches aimed at minimizing the communication and computational requirements in real systems via neighbor filtering and event-driven planning, and conclude with our perspective on the outlook and future research direction of optimal flocking as a field.

💡 Research Summary

The paper provides a comprehensive survey of recent research that applies optimization techniques to robotic flocking, a field that has seen rapid growth over the past two decades. Recognizing that real‑world multi‑agent swarms are constrained by limited sensing, communication bandwidth, actuation power, memory, and computational resources, the authors argue that flocking control must be framed as an optimal control problem that simultaneously guarantees safety, performance, and energy efficiency.

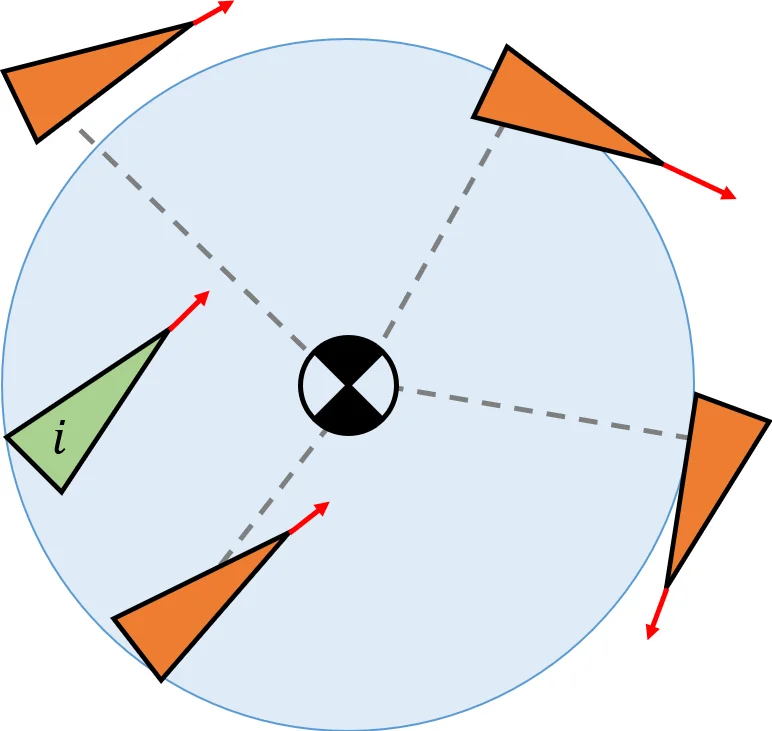

The authors first introduce a taxonomy that separates the literature into two broad categories: cluster flocking (three‑dimensional, cohesive swarms such as bird flocks) and line flocking (one‑dimensional formations such as migrating geese). Within cluster flocking, they further subdivide the work according to the system‑level objective that the designer wishes to optimize. Three objectives are identified: (1) minimizing deviation from Reynolds’ classic rules (collision avoidance, velocity matching, and flock centering), (2) tracking a global reference trajectory with the flock’s centroid, and (3) other mission‑specific goals such as area coverage, leader‑less operation, or maximizing cluster size. For each objective, the survey distinguishes between reactive approaches (real‑time and feedback‑driven) and planning approaches (predictive optimization, run offline or online).
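As a rough illustration of the three Reynolds rules named above, the sketch below computes one steering vector per rule for a single agent in the plane. The function name `reynolds_terms`, the sensing `radius`, and the inverse-distance weighting of the separation term are assumptions made for this example; they are not taken from the survey.

```python
import math

def reynolds_terms(i, positions, velocities, radius=2.0):
    """Return (separation, alignment, cohesion) 2D vectors for agent i.

    Illustrative only: neighbors are all agents within `radius`, and the
    separation push is weighted by inverse distance (an assumed choice).
    """
    px, py = positions[i]
    vx, vy = velocities[i]
    sep = [0.0, 0.0]
    sum_v = [0.0, 0.0]
    sum_p = [0.0, 0.0]
    count = 0
    for j, (qx, qy) in enumerate(positions):
        if j == i:
            continue
        dx, dy = qx - px, qy - py
        dist = math.hypot(dx, dy)
        if dist == 0.0 or dist > radius:
            continue
        count += 1
        # Collision avoidance: push away from the neighbor.
        sep[0] -= dx / dist**2
        sep[1] -= dy / dist**2
        # Accumulate neighbor velocity and position for the averages.
        sum_v[0] += velocities[j][0]
        sum_v[1] += velocities[j][1]
        sum_p[0] += qx
        sum_p[1] += qy
    if count == 0:
        zero = (0.0, 0.0)
        return zero, zero, zero
    # Velocity matching: steer toward the neighbors' mean velocity.
    align = (sum_v[0] / count - vx, sum_v[1] / count - vy)
    # Flock centering: steer toward the neighbors' mean position.
    cohere = (sum_p[0] / count - px, sum_p[1] / count - py)
    return (sep[0], sep[1]), align, cohere
```

A reactive controller would typically combine the three vectors with tunable weights; an optimization-based design could instead penalize how far the chosen control deviates from them.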

In the reactive branch, the paper reviews a variety of learning‑based and meta‑heuristic methods. Early work by Morihiro et al. (2006) used a primitive‑selection scheme combined with a reward structure that encouraged velocity alignment and spacing. More recent studies employ deep reinforcement learning, particle swarm optimization, pigeon‑inspired optimization, and genetic algorithms to tune neural‑network controllers or directly select control actions that minimize a cost function of the form

$$J_i = \sum_{j \in \mathcal{N}_i} \left[ V\left(\lVert s_{ij} \rVert\right) + \lVert \dot{s}_{ij} \rVert^2 \right],$$

where V is an attractive‑repulsive potential and the second term penalizes velocity mismatch. These methods typically assume each agent can observe a subset of neighbors (often the k‑nearest or left/right neighbors) and may have access to limited global information such as average heading or an entropy‑based order parameter.
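To make the cost concrete, here is a minimal sketch that evaluates it for one agent. It rests on several assumptions: both terms are summed over each neighbor j, sᵢⱼ is read as the relative position of neighbor j with respect to agent i, and the potential `V(d) = d0/d + d/d0 - 2` is a hypothetical attractive-repulsive choice (zero at the desired spacing `d0`, growing without bound as agents approach each other). None of these specifics come from the surveyed papers.

```python
import math

def potential(d, d0=1.0):
    """Hypothetical attractive-repulsive potential with its minimum at d = d0."""
    return d0 / d + d / d0 - 2.0

def flocking_cost(i, positions, velocities, neighbors, d0=1.0):
    """Sum of spacing potential and squared relative speed over agent i's neighbors."""
    cost = 0.0
    for j in neighbors:
        # Relative position and relative velocity of neighbor j as seen from i.
        sx = positions[j][0] - positions[i][0]
        sy = positions[j][1] - positions[i][1]
        dvx = velocities[j][0] - velocities[i][0]
        dvy = velocities[j][1] - velocities[i][1]
        cost += potential(math.hypot(sx, sy), d0) + dvx * dvx + dvy * dvy
    return cost
```

A learning-based or meta-heuristic method of the kind described above would search over controller parameters or candidate actions to drive a cost like this down.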

Planning approaches treat flocking as a trajectory‑optimization problem. Wang et al. (2018) formulate a dynamic‑programming solution for a swarm of quadrotors in ℝ², enforcing Reynolds’ rules while moving the flock centroid toward a desired waypoint. The authors introduce an angular‑symmetry neighbor definition to avoid isolated cliques and incorporate penalties for rule violations, obstacle proximity, and deviation from the goal. Model Predictive Control (MPC) and other receding‑horizon schemes are also discussed, especially in the context of event‑driven updates that reduce computational load.
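The receding-horizon idea can be sketched with a toy example: a one-dimensional double integrator standing in for the flock centroid, steered toward a waypoint by exhaustively searching short control sequences and applying only the first control. The dynamics, cost weights, action set, and function names are all assumptions chosen for brevity; this is not the formulation of Wang et al. or of any surveyed MPC scheme.

```python
from itertools import product

def rollout_cost(p, v, controls, goal, dt=1.0, effort_weight=0.1):
    """Simulate the control sequence and accumulate tracking plus effort cost."""
    cost = 0.0
    for u in controls:
        v += u * dt          # acceleration updates velocity
        p += v * dt          # updated velocity advances position
        cost += (p - goal) ** 2 + effort_weight * u ** 2
    return cost

def receding_horizon_step(p, v, goal, horizon=3, actions=(-1.0, 0.0, 1.0)):
    """Pick the best sequence over the horizon and return only its first control."""
    best = min(
        product(actions, repeat=horizon),
        key=lambda seq: rollout_cost(p, v, seq, goal),
    )
    return best[0]
```

At every time step the search is repeated from the newly measured state, which is what distinguishes a receding-horizon scheme from a plan computed once offline. Exhaustive enumeration scales exponentially in the horizon, so practical planners rely on structured solvers instead.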

The line‑flocking section focuses on energy‑saving mechanisms. Here the objective is always to minimize per‑agent energy consumption while preserving a linear formation. The literature is organized around the specific physical mechanisms exploited (e.g., aerodynamic drafting, staggered speed profiles). The authors note that many line‑flocking studies adopt similar cost structures but differ in how they model aerodynamic coupling and how they enforce formation integrity.

A notable contribution of the survey is its treatment of multi‑objective optimization through Pareto analysis. By plotting Pareto fronts for competing metrics such as energy use, convergence speed, and safety margin, the authors illustrate how designers can select operating points that best match mission requirements. This framework also clarifies inherent trade‑offs, for example, the tension between aggressive energy‑saving (tight drafting) and robustness to disturbances.
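A Pareto front can be extracted from a set of candidate designs with a simple dominance check, sketched below. The example assumes every metric is to be minimized, so a quantity that should be maximized (such as a safety margin) would be negated first; the function names are illustrative.

```python
def dominates(a, b):
    """True if design a is no worse than b in every metric and better in at least one."""
    return all(x <= y for x, y in zip(a, b)) and any(x < y for x, y in zip(a, b))

def pareto_front(points):
    """Return the designs that no other design dominates, in input order."""
    return [p for p in points if not any(dominates(q, p) for q in points)]

# Each tuple is (energy use, convergence time) for one hypothetical controller tuning.
candidates = [(1, 5), (2, 3), (3, 4), (4, 1), (2, 6)]
front = pareto_front(candidates)  # [(1, 5), (2, 3), (4, 1)]
```

The surviving points are the operating points a designer would choose among; every other candidate is strictly worse than at least one of them.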

Section 8 addresses practical considerations for physical swarms. The authors discuss techniques for reducing cyber‑physical costs, including neighbor filtering (communicating only with a reduced set of agents) and event‑driven planning (re‑optimizing only when a significant state change occurs). They also argue that flocking should be viewed as a group‑level strategy rather than merely a set of individual behaviors, enabling higher‑level mission planning such as coordinated search‑and‑rescue or environmental monitoring.
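Both cost-reduction ideas can be sketched in a few lines. The example below assumes k-nearest selection as the neighbor filter and a simple distance threshold on state drift as the re-planning trigger; both are illustrative choices, and the surveyed work may use different filters and triggering conditions.

```python
import math

def k_nearest(i, positions, k):
    """Neighbor filtering: keep only the indices of the k agents closest to agent i."""
    others = [j for j in range(len(positions)) if j != i]
    others.sort(key=lambda j: math.dist(positions[i], positions[j]))
    return others[:k]

def should_replan(state, last_planned_state, threshold):
    """Event-driven planning: re-optimize only when the state has drifted far enough."""
    return math.dist(state, last_planned_state) > threshold
```

With the filter, each agent exchanges data with at most k others regardless of swarm size; with the trigger, the optimizer runs only when the event fires rather than at every control step.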

Finally, the paper outlines future research directions: (1) integration of more realistic physical models (aerodynamics, terrain interaction), (2) hybrid schemes that combine learning‑based adaptation with model‑based optimal control, (3) scalability and robustness analyses for very large swarms, and (4) human‑robot interaction frameworks that allow operators to influence flock behavior without breaking safety guarantees.

Overall, the survey re‑defines robotic flocking as a goal‑oriented optimal control problem, provides a clear taxonomy of existing approaches, highlights the algorithmic choices for both reactive and planning paradigms, and offers practical guidance for implementing safe, energy‑efficient flocking in real‑world multi‑robot systems.
