A fast and accurate physics-informed neural network reduced order model with shallow masked autoencoder

💡 Research Summary

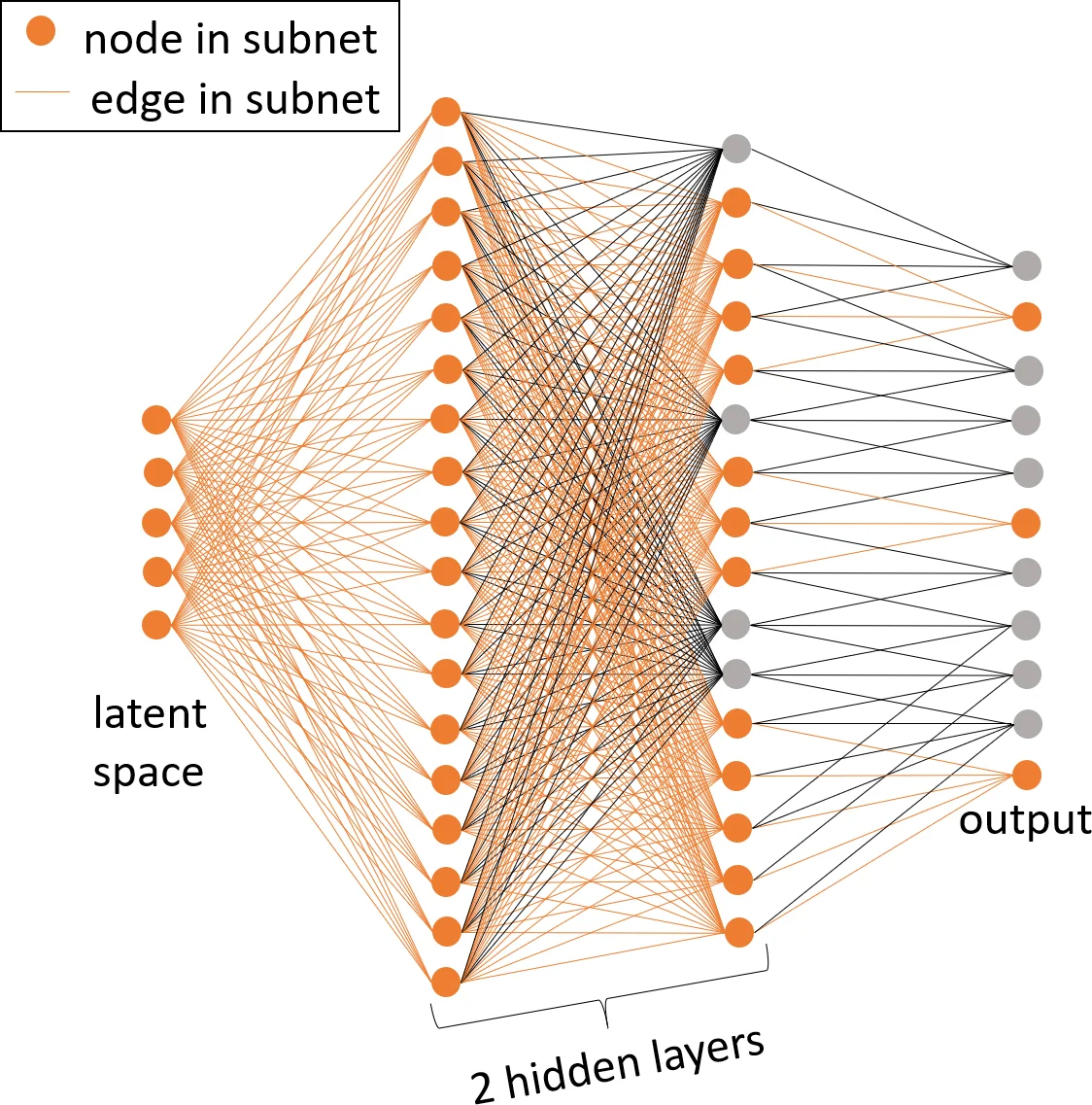

This paper addresses a well‑known limitation of linear‑subspace reduced‑order models (LS‑ROMs): for advection‑dominated or sharp‑gradient problems, the Kolmogorov n‑width of the solution manifold decays slowly, so a low‑dimensional linear basis cannot represent the solution accurately. To overcome this, the authors propose a nonlinear‑manifold reduced‑order model (NM‑ROM) that uses a shallow masked autoencoder to learn a compact latent representation of the full‑order model (FOM) solution manifold. The autoencoder is deliberately shallow, and a sparsity mask on the decoder's output layer makes each output entry depend on only a few hidden nodes. This reduces the number of trainable parameters and enables an efficient hyper‑reduction (HR) stage.
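To illustrate why a masked, shallow decoder helps hyper‑reduction, here is a minimal NumPy sketch. The banded mask pattern, the `tanh` activation, the layer sizes, and the names `banded_mask` and `decode` are assumptions chosen for illustration and are not taken from the paper. The point is only that evaluating a few output entries requires a few hidden nodes.

```python
import numpy as np

def banded_mask(n_out, n_hidden, width):
    """Boolean (n_out, n_hidden) mask: output i sees `width` consecutive hidden nodes."""
    mask = np.zeros((n_out, n_hidden), dtype=bool)
    # Slide the band start evenly from the first to the last admissible hidden node.
    starts = np.linspace(0, n_hidden - width, n_out).round().astype(int)
    for i, s in enumerate(starts):
        mask[i, s:s + width] = True
    return mask

def decode(z, W1, b1, W2, mask, rows=None):
    """Shallow masked decoder g(z) = (mask * W2) @ tanh(W1 @ z + b1).

    If `rows` is given, only those output entries are computed, using only
    the hidden nodes that the mask connects to them.
    """
    if rows is None:
        rows = np.arange(W2.shape[0])
    rows = np.asarray(rows)
    active = np.flatnonzero(mask[rows].any(axis=0))  # hidden nodes actually needed
    h = np.tanh(W1[active] @ z + b1[active])         # tanh is an assumed activation
    W2_sub = (W2[rows] * mask[rows])[:, active]      # masked output weights
    return W2_sub @ h

# Hypothetical sizes and random (untrained) weights, for illustration only.
rng = np.random.default_rng(0)
n_out, n_hidden, n_latent, width = 200, 60, 3, 5
mask = banded_mask(n_out, n_hidden, width)
W1 = rng.normal(size=(n_hidden, n_latent))
b1 = rng.normal(size=n_hidden)
W2 = rng.normal(size=(n_out, n_hidden))
z = rng.normal(size=n_latent)

u_full = decode(z, W1, b1, W2, mask)                # all outputs
rows = np.array([10, 11, 12])                       # a few sampled outputs
u_sampled = decode(z, W1, b1, W2, mask, rows=rows)  # same values, less work
n_active = np.flatnonzero(mask[rows].any(axis=0)).size
print(f"hidden nodes needed for {rows.size} outputs: {n_active} of {n_hidden}")
```

With a dense output layer, every sampled output would touch every hidden node. With the mask, the cost of a partial evaluation scales with the number of sampled outputs rather than with the full state dimension.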

The NM‑ROM framework combines the physics‑informed idea with conventional numerical solvers. Classic PINN approaches treat the neural‑network weights as the unknowns of a global residual minimization. The proposed method instead keeps the FOM's time discretization and nonlinear solver (e.g., backward Euler with Newton‑Raphson iterations) and uses the trained decoder as a nonlinear trial manifold. The unknowns are the latent coordinates, which evolve so that the discretized residual of the governing PDE/ODE is satisfied or minimized on that manifold. This residual‑based reduced dynamics is what makes the model physics‑informed.
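As a rough sketch of what "decoder as trial manifold" means in practice, the code below performs one backward Euler step by minimizing the discrete residual over the latent coordinates with Gauss‑Newton iterations. Everything here is an assumption for illustration: the toy dense decoder with random weights, the upwind Burgers‑like right‑hand side, the step sizes, and the function names. The previous state is manufactured so that an exact solution lies on the manifold, which keeps the example easy to check.

```python
import numpy as np

def make_decoder(W1, W2):
    """Toy decoder g(z) = W2 @ tanh(W1 @ z) and its Jacobian dg/dz."""
    def g(z):
        return W2 @ np.tanh(W1 @ z)
    def g_jac(z):
        s = 1.0 - np.tanh(W1 @ z) ** 2   # derivative of tanh
        return (W2 * s) @ W1             # equals W2 @ diag(s) @ W1
    return g, g_jac

def lspg_backward_euler_step(z0, u_prev, dt, f, f_jac, g, g_jac, iters=20, tol=1e-12):
    """Minimize ||g(z) - u_prev - dt * f(g(z))||_2 over z by Gauss-Newton."""
    z = z0.copy()
    for _ in range(iters):
        u = g(z)
        r = u - u_prev - dt * f(u)                        # backward Euler residual
        J = (np.eye(u.size) - dt * f_jac(u)) @ g_jac(z)   # dr/dz by the chain rule
        dz = np.linalg.lstsq(J, -r, rcond=None)[0]        # Gauss-Newton update
        z = z + dz
        if np.linalg.norm(dz) < tol:
            break
    return z

# Assumed FOM: periodic upwind discretization of u_t = -u u_x.
n, n_hidden, n_latent = 40, 12, 2
dx, dt = 1.0 / n, 1e-3
D = (np.eye(n) - np.roll(np.eye(n), 1, axis=0)) / dx     # (D u)_i = (u_i - u_{i-1}) / dx
f = lambda u: -u * (D @ u)
f_jac = lambda u: -(np.diag(D @ u) + u[:, None] * D)

# Untrained random weights stand in for a trained decoder.
rng = np.random.default_rng(1)
W1 = 0.5 * rng.normal(size=(n_hidden, n_latent))
W2 = rng.normal(size=(n, n_hidden)) / np.sqrt(n_hidden)
g, g_jac = make_decoder(W1, W2)

# Manufacture u_prev so that z_true solves the backward Euler equation exactly.
z_true = np.array([0.3, -0.2])
u_true = g(z_true)
u_prev = u_true - dt * f(u_true)

z_new = lspg_backward_euler_step(z_true + 1e-3, u_prev, dt, f, f_jac, g, g_jac)
res = g(z_new) - u_prev - dt * f(g(z_new))
print("residual norm:", np.linalg.norm(res))
```

Note that the neural‑network weights stay fixed during the solve. Only the low‑dimensional vector `z` is updated, which is the key difference from a classic PINN.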

A central contribution is the development of a hyper‑reduction technique tailored to the nonlinear manifold setting. By extending ideas from the Discrete Empirical Interpolation Method (DEIM), the authors select a small set of spatial points at which the nonlinear terms are evaluated. The masked autoencoder’s structure makes this sampling inexpensive, and the resulting reduced nonlinear operators are assembled only on the selected points, dramatically lowering the per‑time‑step computational cost.
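For readers unfamiliar with DEIM, the classic greedy point selection can be sketched as follows. This is the standard textbook DEIM algorithm applied to a made‑up family of Gaussian snapshots, not the paper's extended sampling scheme. The snapshot function, basis size, and names `deim_indices` and `deim_reconstruct` are assumptions for illustration.

```python
import numpy as np

def deim_indices(U):
    """Greedy DEIM point selection for a basis U of shape (n, m)."""
    n, m = U.shape
    idx = [int(np.argmax(np.abs(U[:, 0])))]
    for j in range(1, m):
        # Interpolate column j with the previous columns at the chosen points...
        c = np.linalg.solve(U[idx, :j], U[idx, j])
        # ...and pick the point where that interpolation is worst.
        r = U[:, j] - U[:, :j] @ c
        idx.append(int(np.argmax(np.abs(r))))
    return np.array(idx)

def deim_reconstruct(U, idx, f_sampled):
    """Approximate f from its values at `idx`: f ~ U (P^T U)^{-1} P^T f."""
    return U @ np.linalg.solve(U[idx, :], f_sampled)

# Assumed demo data: snapshots of a parametrized nonlinear function.
x = np.linspace(0.0, 1.0, 400)
mus = np.linspace(0.2, 0.8, 50)
snapshots = np.stack([np.exp(-((x - mu) ** 2) / 0.02) for mu in mus], axis=1)

# POD basis of the snapshots, truncated to 12 modes.
U_full, _, _ = np.linalg.svd(snapshots, full_matrices=False)
U = U_full[:, :12]
idx = deim_indices(U)

# Reconstruct an unseen function from its values at the 12 selected points only.
f_new = np.exp(-((x - 0.47) ** 2) / 0.02)
f_deim = deim_reconstruct(U, idx, f_new[idx])
rel_err = np.linalg.norm(f_deim - f_new) / np.linalg.norm(f_new)
print(f"sampled {idx.size} of {x.size} points, relative error {rel_err:.2e}")
```

In a hyper‑reduced ROM the same idea is applied to the nonlinear term or residual, so that only the entries at `idx` need to be computed at each time step.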

The paper systematically derives two reduced‑order formulations, NM‑Galerkin and NM‑Least‑Squares Petrov‑Galerkin (NM‑LSPG), each with a hyper‑reduced counterpart (NM‑Galerkin‑HR and NM‑LSPG‑HR). It also provides a rigorous a posteriori error bound that accounts for three sources of approximation error: manifold truncation, hyper‑reduction, and temporal discretization. This bound shows how each component of the algorithm contributes to the overall error.
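In generic notation, the two formulations can be written roughly as follows. The symbols are assumptions introduced here and may differ from the paper's: $\mathbf{u}$ is the FOM state, $\hat{\mathbf{u}}$ the latent state, $\mathbf{g}$ the decoder, $\mathbf{J}_g = \partial \mathbf{g} / \partial \hat{\mathbf{u}}$ its Jacobian, and $\mathbf{W}$ a hyper‑reduction weighting built from a row‑sampling matrix. The FOM and the trial manifold are

$$
\frac{d\mathbf{u}}{dt} = \mathbf{f}(\mathbf{u}, t; \boldsymbol{\mu}), \qquad \mathbf{u} \approx \tilde{\mathbf{u}} = \mathbf{u}_{\mathrm{ref}} + \mathbf{g}(\hat{\mathbf{u}}).
$$

A Galerkin‑type model minimizes the time‑continuous residual, which gives an ODE for the latent state:

$$
\dot{\hat{\mathbf{u}}} = \arg\min_{\hat{\mathbf{v}}} \left\| \mathbf{J}_g(\hat{\mathbf{u}})\,\hat{\mathbf{v}} - \mathbf{f}(\tilde{\mathbf{u}}, t; \boldsymbol{\mu}) \right\|_2^2 = \mathbf{J}_g(\hat{\mathbf{u}})^{\dagger}\, \mathbf{f}(\tilde{\mathbf{u}}, t; \boldsymbol{\mu}).
$$

An LSPG‑type model minimizes the time‑discrete residual instead. With backward Euler and step size $\Delta t$:

$$
\hat{\mathbf{u}}_n = \arg\min_{\hat{\mathbf{v}}} \left\| \mathbf{r}^n(\hat{\mathbf{v}}) \right\|_2^2, \qquad \mathbf{r}^n(\hat{\mathbf{v}}) = \mathbf{g}(\hat{\mathbf{v}}) - \mathbf{g}(\hat{\mathbf{u}}_{n-1}) - \Delta t\, \mathbf{f}\big(\mathbf{u}_{\mathrm{ref}} + \mathbf{g}(\hat{\mathbf{v}}), t_n; \boldsymbol{\mu}\big).
$$

A hyper‑reduced variant replaces the full norm with a weighted one, $\left\| \mathbf{W}\, \mathbf{r}^n(\hat{\mathbf{v}}) \right\|_2^2$, where $\mathbf{W}$ touches only a small sampled subset of residual entries, so neither $\mathbf{f}$ nor $\mathbf{g}$ has to be evaluated on the full mesh.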

Numerical experiments focus on the one‑ and two‑dimensional Burgers equations, canonical test cases for advection‑dominated dynamics. Compared with LS‑Galerkin and LS‑LSPG at the same reduced dimension, the NM‑ROM variants achieve substantially lower relative errors. The hyper‑reduced NM‑ROMs also deliver speed‑ups of 2.6 in the 1‑D case and 11.7 in the 2‑D case. These results show that the nonlinear manifold can capture the solution space with far fewer degrees of freedom than a linear subspace, and that the proposed HR scheme reduces the cost of evaluating nonlinear terms.

In summary, the authors present a fast, accurate, and physically consistent reduced‑order modeling strategy that combines a shallow masked autoencoder, a physics‑informed (residual‑based) reduced formulation, and tailored hyper‑reduction. The approach extends the applicability of ROMs to problems where traditional linear‑subspace methods fail, and it opens avenues for real‑time simulation, optimization, and control of complex nonlinear dynamical systems.
