Single-photon computational 3D imaging at 45 km


Authors: Zheng-Ping Li, Xin Huang, Yuan Cao

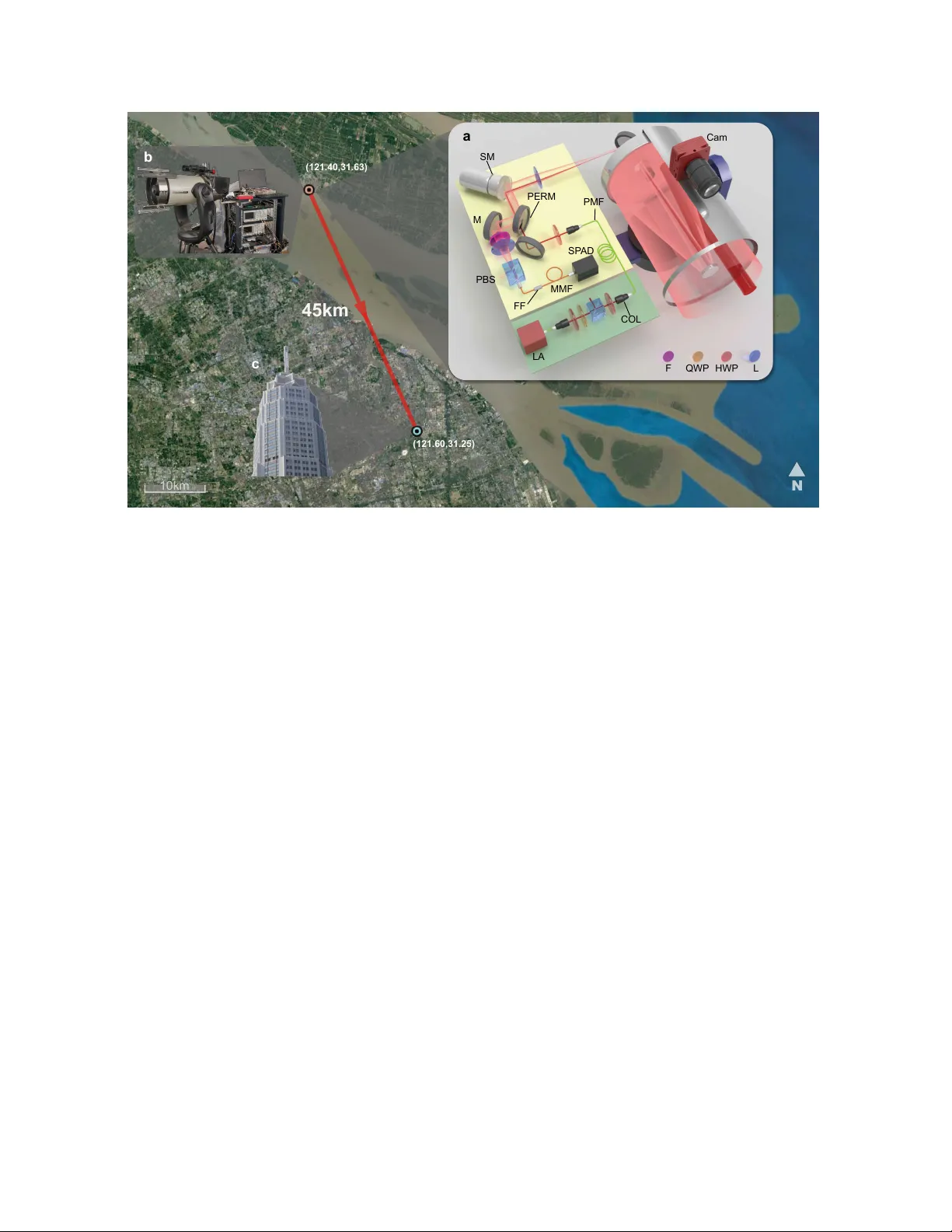

Zheng-Ping Li (1, 2, ∗), Xin Huang (1, 2, ∗), Yuan Cao (1, 2, ∗), Bin Wang (1, 2), Yu-Huai Li (1, 2), Weijie Jin (1, 2), Chao Yu (1, 2), Jun Zhang (1, 2), Qiang Zhang (1, 2), Cheng-Zhi Peng (1, 2), Feihu Xu (1, 2, †), Jian-Wei Pan (1, 2, †)

1 Shanghai Branch, National Laboratory for Physical Sciences at Microscale and Department of Modern Physics, University of Science and Technology of China, Shanghai 201315, China.
2 CAS Center for Excellence and Synergetic Innovation Center in Quantum Information and Quantum Physics, University of Science and Technology of China, Shanghai 201315, China.
∗ These authors contributed equally to the paper.
† e-mail: feihu.xu@ustc.edu.cn; pan@ustc.edu.cn.

Long-range active imaging has a variety of applications in remote sensing and target recognition. Single-photon LiDAR (light detection and ranging) offers single-photon sensitivity and picosecond timing resolution, which is desirable for high-precision three-dimensional (3D) imaging over long distances. Despite important progress, further extending the imaging range presents enormous challenges because only weak echo photons return and are mixed with strong noise. Herein, we tackled these challenges by constructing a high-efficiency, low-noise confocal single-photon LiDAR system, and by developing a long-range-tailored computational algorithm that provides high photon efficiency and super-resolution in the transverse domain. Using this technique, we experimentally demonstrated active single-photon 3D imaging at a distance of up to 45 km in an urban environment, with a low return-signal level of ∼1 photon per pixel. With a refined setup, our system should be capable of imaging at ranges of a few hundred kilometers, and it thus represents a significant milestone towards rapid, low-power, and high-resolution LiDAR over extra-long ranges.
Long-range active optical imaging has widespread applications, ranging from remote sensing [1–3], satellite-based global topography [4, 5], and airborne surveillance [3], to target recognition and identification [6]. Increasing demand for these applications has driven the development of smaller, lighter, lower-power LiDAR systems that can provide high-resolution three-dimensional (3D) imaging over long ranges with all-time capability. Time-correlated single-photon-counting (TCSPC) LiDAR is a candidate technology with the potential to meet these challenging requirements [7]. In particular, single-photon detectors [8] and arrays [9, 10] provide extraordinary single-photon sensitivity and better timing resolution than analog optical detectors [7]. Such high sensitivity allows lower-power laser sources to be used and permits time-of-flight imaging over significantly longer ranges. Tremendous effort has thus been devoted to developing single-photon LiDAR for long-range 3D imaging [7, 11–13], and single-photon 3D imaging at distances of up to 10 km has been reported [14].

In long-range 3D imaging, a frontier question is the distance limit: over what distances can the imaging system work? For a single-photon LiDAR system, the echo signal, and thus the signal-to-noise ratio (SNR), decreases rapidly with the imaging distance $R$, which limits the image quality. Overall, for a given system with laser power $P$ and telescope aperture $A$, the operational range limit $R_{\text{limit}}$ is determined by the SNR and the algorithm efficiency as follows:

$$R_{\text{limit}} \propto \mathrm{SNR}\cdot\eta_a = \frac{P A \eta_s}{R^2 h\nu \bar{n}}\cdot\eta_a. \tag{1}$$

Here the free parameters $\eta_s$, $\bar{n}$, and $\eta_a$ are the system detection efficiency, the background noise, and the reconstruction-algorithm efficiency, respectively, and $h\nu$ is the photon energy.
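The SNR factor in Eq. (1) can be turned into a small calculator for comparing configurations. The sketch below is an illustration under stated assumptions, not the authors' code: the function name `relative_snr` is hypothetical, the detection-efficiency and background values in the usage lines are placeholders, and only ratios of the returned value are meaningful because the units of $\bar{n}$ are left abstract.

```python
import math

PLANCK_H = 6.62607015e-34       # Planck constant, J*s (exact SI value)
SPEED_OF_LIGHT = 299_792_458.0  # m/s (exact SI value)


def relative_snr(power_w, aperture_m2, eta_s, range_m, nbar, wavelength_m=1550e-9):
    """SNR factor of Eq. (1): P * A * eta_s / (R**2 * h * nu * nbar).

    Hypothetical helper for illustration; compare results only as ratios,
    since the units of the background term nbar are left abstract.
    """
    photon_energy = PLANCK_H * SPEED_OF_LIGHT / wavelength_m  # h * nu, J
    return power_w * aperture_m2 * eta_s / (range_m ** 2 * photon_energy * nbar)


if __name__ == "__main__":
    aperture = math.pi * (0.280 / 2) ** 2  # 280 mm telescope aperture, m^2
    eta_s, nbar = 0.1, 1.0                 # placeholder values (assumptions)
    snr_10km = relative_snr(0.120, aperture, eta_s, 10e3, nbar)
    snr_45km = relative_snr(0.120, aperture, eta_s, 45e3, nbar)
    # Same hardware at 4.5x the range: the SNR factor drops by (45 / 10) ** 2.
    print(f"SNR(10 km) / SNR(45 km) = {snr_10km / snr_45km:.2f}")
```

With everything else fixed, moving from 10 km to 45 km costs a factor of (45/10)² ≈ 20 in SNR, which has to be recovered through a larger $P A \eta_s / \bar{n}$ or a more efficient reconstruction (a larger $\eta_a$).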
The free parameters $\eta_s$ and $\bar{n}$ are determined by the hardware design, whereas $\eta_a$ is determined by the algorithm design, where computational approaches can greatly increase the efficiency [15].

Recent developments in active imaging have become increasingly dependent on computational power [16]. Computational optical imaging, in particular, has seen remarkable progress [17–27]. An important research trend today is the development of efficient algorithms for imaging with a small number of photons [23–28]. One advance in this area was the laboratory demonstration of high-quality 3D structure and reflectivity imaging by an active imager detecting only one photon per pixel (PPP), based on the approaches of pseudo-array [23, 24], single-photon camera [25], unmixing signal and noise [26], and machine learning [27]. These algorithms have the potential to greatly improve the imaging range.

Our primary interest in this work is to push the imaging range significantly towards the ultimate limit for high-resolution 3D imaging. We approached this problem by developing an advanced technique, with both hardware and software implementations specifically designed for long-range single-photon LiDAR. On the hardware side, we designed a high-efficiency coaxial-scanning system (see Fig. 1) that collects the weak echo photons more efficiently and suppresses the system background noise more strongly. On the software side, we developed a computational algorithm that offers super-resolution in the transverse domain and high photon efficiency for low-photon-count data (i.e., ∼1 signal PPP) mixed with high background noise (i.e., SNR ∼ 1/30). These improvements allow us to demonstrate super-resolution single-photon 3D imaging over a distance of 45 km from ∼1 signal PPP in an urban environment.

Setup.
Figure 1 shows a bird's-eye view of the long-range active-imaging experiment, which was set up on Chongming Island, Shanghai, facing a target building across the river. The optical transceiver system incorporated a commercial Cassegrain telescope with a 280 mm aperture and a high-precision two-axis automatic rotating stage to allow large-scale scanning of the far-field target. The optical components were assembled on a custom-built aluminum platform integrated with the telescope tube. The entire optical hardware system is compact and suitable for mobile applications (see Fig. 1b). Specifically, as shown in Fig. 1a, a standard erbium-doped near-infrared fiber laser (1550 nm, 500 ps pulse width, 100 kHz repetition rate) served as the light source for illumination. Operating in the near-infrared makes the system eye-safe, reduces the solar background, keeps atmospheric loss low, and is compatible with telecom optical components. The maximal average laser power used was 120 mW. The laser output was vertically polarized and was coupled into the telescope through a small aperture, an oblique hole through the mirror. The beam was expanded and output with a divergence angle of about 35 µrad. The transmitted and received beams were coaxial, so the area illuminated by the beam and the field of view (FoV) remained matched while scanning. The returned photons were reflected by the perforated mirror and collected by a focal lens. A polarization beam splitter (PBS) passed only the horizontally polarized light, which was then spectrally filtered, coupled efficiently into a multimode fiber, and detected by an InGaAs/InP SPAD (single-photon avalanche diode) detector [29]. Further details of the setup are given in the Supplementary Information.
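A few derived numbers help put the quoted laser parameters in context. The sketch below is back-of-envelope arithmetic under assumptions that the paper does not state: the 35 µrad divergence is treated as a full angle, the small-angle approximation is used, atmospheric effects are ignored, and the helper `link_budget` is hypothetical.

```python
PLANCK_H = 6.62607015e-34       # Planck constant, J*s (exact SI value)
SPEED_OF_LIGHT = 299_792_458.0  # m/s (exact SI value)


def link_budget(avg_power_w, rep_rate_hz, wavelength_m, pulse_width_s,
                divergence_rad, range_m):
    """Derived transmitter quantities for a pulsed LiDAR (illustrative only).

    Assumes a full-angle divergence and the small-angle approximation.
    """
    pulse_energy = avg_power_w / rep_rate_hz                   # J per pulse
    photon_energy = PLANCK_H * SPEED_OF_LIGHT / wavelength_m   # h * nu, J
    return {
        "pulse_energy_J": pulse_energy,
        "photons_per_pulse": pulse_energy / photon_energy,
        "footprint_m": divergence_rad * range_m,               # beam diameter at target
        "unambiguous_range_m": SPEED_OF_LIGHT / (2.0 * rep_rate_hz),
        "pulse_depth_extent_m": SPEED_OF_LIGHT * pulse_width_s / 2.0,
    }


if __name__ == "__main__":
    numbers = link_budget(avg_power_w=0.120, rep_rate_hz=100e3,
                          wavelength_m=1550e-9, pulse_width_s=500e-12,
                          divergence_rad=35e-6, range_m=45e3)
    for name, value in numbers.items():
        print(f"{name:>22s}: {value:.4g}")
```

With the values quoted above (120 mW, 100 kHz, 1550 nm, 500 ps, 35 µrad, 45 km), this gives about 1.2 µJ and roughly 9 × 10¹² photons per pulse, a beam footprint of about 1.6 m at the target, about 7.5 cm of depth spread from the pulse width, and an unambiguous range of only about 1.5 km. At 45 km, timing within a single pulse period therefore cannot by itself give the absolute distance.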
To achieve a high-efficiency, low-noise confocal single-photon LiDAR system, we implemented several optimized designs, most of which differ from those used in previous experiments [11–14]. First, we developed a two-stage FoV scanning method, offering both fine-FoV and wide-FoV scanning, to preserve fine features while expanding the overall FoV. For fine-FoV scanning, we used a scanning mirror mounted on a piezo tip-tilt platform to steer the beam along both the x and y axes. This coplanar dual-axis scanning scheme achieves high-precision angular scanning with greatly simplified optics, thereby avoiding pincushion distortion in the images. For wide-FoV scanning, we used a two-axis automatic rotation table to rotate the entire telescope. Next, the inter-pixel spacing was chosen to match half the FoV of a detector pixel. This strategy gives high image resolution and, combined with our computational algorithm (see below), allows us to achieve super-resolution. In addition, we used the polarization degree of freedom to reduce the internal back-reflected background noise, by using orthogonally polarized inputs and outputs. Finally, we used miniaturized optical holders to align the apertures of all optical elements at a height of 4 cm, thereby improving the system stability. The entire optical platform was compact, measuring only 30 × 30 × 35 cm³ including a customized aluminum box to block ambient light, and was mounted behind the telescope.

Algorithm.

The long-range operation of the LiDAR system involves two important challenges that limit image reconstruction: (i) the diffraction limit and turbulence in the outdoor environment lead to a large FoV in the far field that covers multiple reflectors at different depths (see Supplementary Fig. S3), which greatly degrades the image resolution; (ii) the extremely low SNR limits the unmixing of signal from noise in an optical beam.
These two challenges are unique to long-range operation and were thus not considered by previous computational algorithms [15, 23–27]. In particular, the widely assumed condition of "one depth per pixel" [26, 27] is not valid for long-range operation. We developed a photon-efficient super-resolution algorithm to address both challenges. The forward model of the imaging system is given in Methods. The implementation of the algorithm may be divided into two steps: (1) We developed a global gating approach to unmix signal from noise. In this approach, we sum the detection counts from all pixels to form a time-domain histogram, and then perform a peak search to extract the time bins corresponding to signal detections (see Supplementary Fig. S1). The key idea is that natural scenes have a finite number of reflectors that are clustered in depth (i.e., time), so their returns can be effectively isolated in the time domain. (2) We constructed a modified SPIRAL-TAP solver [30] to solve the inverse problem directly on a 3D matrix, unlike previous algorithms [15, 23–27], which were implemented on a two-dimensional (2D) matrix. For long-range measurements, detection at each pixel involves a convolution with two kernels: one in the spatial domain (within the FoV) and one in the temporal domain. We recorded the measurements from all pixels in a 3D matrix to preserve the features of reflectivity and depth; to solve the deconvolution problem, we use the total-variation norm to perform a direct convex optimization on this 3D matrix with a transverse-smoothness constraint. In this way, the system provides super-resolved reconstructions of reflectivity and depth (see Supplementary Information).

Results.

We present an in-depth study of our imaging system and algorithm for a variety of targets with different spatial distributions and structures over different ranges. The experiments were done in an urban environment.
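Step (1) of the algorithm, global gating, can be illustrated with a short NumPy sketch. The paper's exact peak-search rule is given only in its Supplementary Fig. S1, so the rule below is an assumption: the background level is estimated as the median bin of the pixel-summed histogram (reasonable when reflectors are clustered in time, so most bins hold only noise), bins more than `k_sigma` Poisson standard deviations above it are flagged as signal, and each flagged region is widened by `pad_bins`. The function name and both parameters are hypothetical.

```python
import numpy as np


def global_gate(counts, k_sigma=5.0, pad_bins=2):
    """Sketch of global gating on a (rows, cols, bins) photon-count cube.

    1. Sum the counts over all pixels to get one time-domain histogram.
    2. Estimate the background level as the median bin.
    3. Flag bins above background + k_sigma * sqrt(background), then widen
       each flagged region by `pad_bins` bins on both sides.
    Returns the gated cube (non-signal bins zeroed) and the boolean bin mask.
    """
    histogram = counts.sum(axis=(0, 1)).astype(float)
    background = np.median(histogram)
    threshold = background + k_sigma * np.sqrt(max(background, 1.0))
    mask = histogram > threshold
    # Dilate the mask so the rising and falling edges of each peak are kept.
    if pad_bins > 0 and mask.any():
        kernel = np.ones(2 * pad_bins + 1)
        mask = np.convolve(mask.astype(float), kernel, mode="same") > 0
    gated = counts * mask[np.newaxis, np.newaxis, :]
    return gated, mask


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    rows, cols, bins = 32, 32, 400
    # Uniform background: about 20 noise counts per pixel over the record.
    noise = rng.poisson(0.05, size=(rows, cols, bins))
    # Two reflectors (left and right halves of the scene) at bins 120 and 250,
    # about 1 signal photon per pixel, i.e. an SNR of roughly 1/20.
    signal = np.zeros_like(noise)
    signal[:, : cols // 2, 120] = rng.poisson(1.0, size=(rows, cols // 2))
    signal[:, cols // 2 :, 250] = rng.poisson(1.0, size=(rows, cols - cols // 2))
    gated, mask = global_gate(noise + signal)
    print("signal bins kept:", np.flatnonzero(mask))
    print("counts before/after gating:", (noise + signal).sum(), gated.sum())
```

Summing over all pixels is what makes this work at ∼1 signal PPP: no single pixel has a visible peak, but a reflector shared by many pixels stands far above the summed background.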
Depth maps of the targets were reconstructed with the proposed algorithm from ∼1 signal PPP and an SNR as low as 0.03, where the SNR is defined for a time gate of 200 ns (corresponding to an image depth of 30 m). We also made accurate laser-ranging measurements to determine the distance to the targets (see Supplementary Information).

We first show the imaging results for a long-range target, the Pudong Civil Aviation Building, at a one-way distance of 45 km. Figure 1 shows the topology of the experiment. The imaging setup was placed on the 20th floor of a building, and the target was on the opposite shore of the river. The ground truth of the target is shown in Fig. 1c. Figure 2a shows a visible-band photograph taken with a standard astronomical camera (ASI120MC-S). This photograph does not resolve the shape of the target because of the inadequate spatial resolution and the air turbulence in the urban environment. We used our single-photon LiDAR to image the target at night, producing a 128 × 128-pixel image. A modest laser power of 120 mW was used for the data acquisition. The average PPP was ∼2.59, and the SNR was 0.03. Figures 2b–e show the depth reconstructed with various imaging algorithms: pixelwise maximum likelihood (ML), the photon-efficient algorithm of Shin et al. [23], the unmixing algorithm of Rapp and Goyal [26], and the algorithm proposed herein. The proposed algorithm recovers the fine features of the building, allowing scenes with a multilayer distribution to be accurately identified, whereas the other algorithms fail to do so. These results demonstrate that the proposed algorithm performs well for spatial and depth reconstruction of long-range targets.
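The pixelwise ML baseline in Fig. 2b is not specified further in the text. A common way to compute such a baseline is log-matched filtering: for each pixel, choose the time shift $\tau$ that maximizes $\sum_k S_k \log h_t(t_k - \tau)$. This is the maximum-likelihood depth under the Poisson model when background detections are neglected and the whole pulse lies inside the recording window. The sketch below assumes a Gaussian pulse shape, 1 ns bins in the usage lines, and hypothetical helper names; it is not the authors' implementation.

```python
import numpy as np

SPEED_OF_LIGHT = 299_792_458.0  # m/s


def gaussian_pulse(num_bins, sigma_bins):
    """Hypothetical pulse shape h_t on a bin grid, peak at index num_bins // 2."""
    offsets = np.arange(num_bins) - num_bins // 2
    pulse = np.exp(-0.5 * (offsets / sigma_bins) ** 2)
    return pulse / pulse.sum()


def pixelwise_ml_depth(counts, pulse, bin_width_s, floor=1e-12):
    """Log-matched-filter depth for every pixel of a (rows, cols, bins) cube.

    For each candidate shift s, the score is sum_k counts[k] * log h(k - s);
    the best shift is converted to depth (relative to the start of the
    recording window) as s * bin_width * c / 2. Pixels with no detections
    score 0 for every shift and therefore get depth 0.
    """
    rows, cols, bins = counts.shape
    log_h = np.log(np.maximum(pulse, floor))
    center = len(pulse) // 2
    scores = np.empty((rows, cols, bins))
    for shift in range(bins):
        # Template log h(k - shift): log_h centred on bin `shift`, log(floor) elsewhere.
        template = np.full(bins, np.log(floor))
        start = shift - center
        lo, hi = max(0, start), min(bins, start + len(pulse))
        template[lo:hi] = log_h[lo - start:hi - start]
        scores[:, :, shift] = counts @ template
    best_bin = scores.argmax(axis=2)
    return best_bin * bin_width_s * SPEED_OF_LIGHT / 2.0


if __name__ == "__main__":
    bin_width = 1e-9  # hypothetical 1 ns time bins
    pulse = gaussian_pulse(21, sigma_bins=2.0)
    counts = np.zeros((1, 1, 200))
    counts[0, 0, 50] = 3  # three detections in bin 50
    counts[0, 0, 51] = 1  # one detection in bin 51
    print(pixelwise_ml_depth(counts, pulse, bin_width))
```

Because each pixel is estimated independently from its own few detections, and noise counts enter the score exactly like signal counts, this estimator breaks down when noise dominates, consistent with the poor pixelwise ML reconstructions reported above.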
More importantly, with fine-interval scanning (half-FoV spacing), the proposed algorithm achieves a transverse resolution of 0.6 m, which resolves the small windows of the target building (see inset in Fig. 2e). This resolution overcomes the transverse diffraction limit of the single-photon LiDAR system, which is about 1.0 m in the far field at 45 km (see Supplementary Information).

To quantify the performance of the proposed technique, we show an example of a 3D image, acquired in daylight, of a solid target with complex structures at a one-way distance of 21.6 km (see Fig. 3a). The target is part of a skyscraper called K11 (see Fig. 3b) located in the center of Shanghai. Before data acquisition, a photograph of the target was taken with a visible-band camera (see Fig. 3c); this visible-band image is blurred because of the long object distance and the urban air turbulence. The single-photon LiDAR data were acquired by scanning 256 × 256 points with an acquisition time of 22 ms per point and a laser power of 100 mW. The average PPP was 1.20, and the SNR was 0.11. Figures 3d–g show the depth profiles reconstructed with the pixelwise ML method, the photon-efficient algorithm [23], the unmixing algorithm [26], and the proposed algorithm. The proposed algorithm allows us to clearly identify the shape of the grid structure on the walls, the symmetrical H-like structure at the top of the building, and the small left-to-right gradient caused by the oblique perspective. The quality of the reconstruction is quantified by the peak signal-to-noise ratio (PSNR), computed by comparing the reconstructed image with a high-quality image obtained using a large number of photons. The PSNR of the proposed algorithm is 14 dB higher than that of the ML method and 8 dB higher than that of the unmixing algorithm.
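PSNR is not defined explicitly in the text. The sketch below assumes the common definition PSNR = 10 log₁₀(peak²/MSE), with the peak taken as the largest absolute value of the reference depth map unless one is given; other works use a fixed dynamic range instead, so absolute values from this sketch need not match Fig. 3. The function name `psnr_db` is hypothetical.

```python
import numpy as np


def psnr_db(reconstruction, reference, peak=None):
    """Peak signal-to-noise ratio (dB) between two equal-shape depth maps.

    Assumes PSNR = 10 * log10(peak**2 / MSE). If `peak` is None, the largest
    absolute value of the reference is used (a common, not universal, choice).
    """
    reconstruction = np.asarray(reconstruction, dtype=float)
    reference = np.asarray(reference, dtype=float)
    if reconstruction.shape != reference.shape:
        raise ValueError("reconstruction and reference must have the same shape")
    mse = np.mean((reconstruction - reference) ** 2)
    if mse == 0:
        return float("inf")  # identical maps
    if peak is None:
        peak = np.max(np.abs(reference))
    return float(10.0 * np.log10(peak ** 2 / mse))


if __name__ == "__main__":
    reference = np.linspace(0.0, 25.0, 64).reshape(8, 8)  # synthetic depth map, m
    noisy = reference + np.random.default_rng(0).normal(0.0, 1.0, reference.shape)
    print(f"PSNR = {psnr_db(noisy, reference):.2f} dB")
```

Whatever peak is used, a PSNR difference depends only on the MSE ratio when both reconstructions are compared with the same reference: the reported 14 dB gain over pixelwise ML corresponds to an MSE about 10^1.4 ≈ 25 times smaller, i.e. an RMS depth error about 5 times smaller.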
To demonstrate the all-time capability of the proposed LiDAR system, we used it to image building K11 both in daylight and at night (i.e., at 11:00 AM and 12:00 PM) on June 15, 2018, and compared the resulting reconstructions. The proposed single-photon LiDAR gave 1.2 signal PPP and an SNR of 0.11 (0.15) in daylight (at night). Figures 4b and 4c show front-view depth plots of the reconstructed scene. The single-photon LiDAR allows the surface features of the multilayer walls of the building to be clearly identified both in daylight and at night. The enlarged images in Figs. 4b and 4c show the detailed features of the window frames, although, owing to the stronger air turbulence during the day, the daytime image is slightly blurred compared with the nighttime image.

Finally, Fig. 5 shows a more complex natural scene with multiple trees and buildings at a one-way distance of 2.1 km. This scene was scanned in daytime to produce a 128 × 256-pixel depth image. Figure 5b shows the depth profile of the scene, and Fig. 5c shows a depth-intensity plot. The conventional visible-band photograph in Fig. 5a is blurred, mainly because of smog in Shanghai, and does not resolve the different layers of trees in the 2D image. In contrast, as shown in Figs. 5b and 5c, the proposed LiDAR system clearly resolves the details of the scene, such as the fine features of the trees. More importantly, the 3D capability of the single-photon LiDAR system clearly resolves the multiple layers of trees and buildings (see Fig. 5b). This result demonstrates the capability of the near-infrared single-photon LiDAR system to resolve targets through smog [31].

To summarize, we have demonstrated active single-photon 3D imaging at ranges of up to 45 km. The 3D images are generated at the single-photon-per-pixel level and allow target recognition and identification at very low light levels.
The proposed high-efficiency confocal single-photon LiDAR system, noise-suppression method, and advanced computational algorithm open new opportunities for rapid, low-power LiDAR imaging over long ranges. These results should facilitate adapting the system to future single-photon LiDAR systems with Geiger-mode detector arrays [9, 10, 25] for rapid data acquisition of moving targets or for fast imaging from moving platforms. With a refined setup, our system should be feasible for ranges of a few hundred kilometers (see Supplementary Information). Overall, our results open a new avenue for high-resolution, fast, low-power 3D optical imaging over ultralong ranges.

Acknowledgments

This work was supported by the National Key R&D Program of China (SQ2018YFB050100), the National Natural Science Foundation of China, the Chinese Academy of Sciences, the Thousand Young Talent Program of China, the Fundamental Research Funds for the Central Universities, and the Shanghai Science and Technology Development Funds (18JC1414700). The authors would like to thank Cheng Wu, Ting Zeng, and Qi Shen for helpful discussions.

Author Contributions

All authors contributed extensively to the work presented in this paper.

Author Information

The authors declare no competing financial interests.

Methods

Forward model.

For long-range imaging, the diffraction limit of the telescope projects each detector pixel onto a large spot (FoV) that covers multiple points in the far field.
The rate function for the scanning angle $(\theta_x, \theta_y)$ is the convolution of the depth-reflectivity map with a spatial-temporal kernel,

$$R(t;\theta_x,\theta_y) = \int_{(\theta'_x,\theta'_y)\in \mathrm{FoV}} h_{xy}(\theta_x-\theta'_x,\,\theta_y-\theta'_y)\, r(\theta'_x,\theta'_y)\, h_t\!\left(t-\frac{2d(\theta'_x,\theta'_y)}{c}\right) d\theta'_x\, d\theta'_y + b, \tag{2}$$

where FoV denotes the field of view of the scanner, $[r(\theta'_x,\theta'_y), d(\theta'_x,\theta'_y)]$ is the [intensity, depth] pair, $c$ is the speed of light, $b$ is the background rate, and $h_{xy}$ and $h_t$ are the spatial and temporal kernels representing the intensity distribution of the FoV and the shape of the laser pulse, respectively.

From the theory of photodetection, the photon counts detected at all pixels follow Poisson distributions, which can be written compactly with 3D matrices as

$$\mathbf{S} \sim \mathrm{Poisson}(\mathbf{h} * \mathbf{RD} + \mathbf{B}). \tag{3}$$

Here, $\mathbf{h}$ and $\mathbf{RD}$ are discrete representations of the functions $h_{xy}h_t$ and $r(\theta'_x,\theta'_y)\,\delta\!\left(t - 2d(\theta'_x,\theta'_y)/c\right)$, respectively, $*$ is the convolution operation, and $\mathbf{B}$ is the 3D matrix of background noise. The goal of image reconstruction is to estimate $\mathbf{RD}$, which contains intensity and depth information, from the low-resolution photon-histogram data $\mathbf{S}$.

Algorithm.

Previous state-of-the-art photon-efficient algorithms [15, 23–27] cannot be applied to long-range imaging because their common assumption of "one depth per pixel" [26] is not valid for Eq. (2). We thus developed a computational algorithm tailored specifically for long-range 3D imaging. The implementation of this algorithm may be divided into the following two steps: (1) We developed a global gating approach to unmix signal from noise: we sum the detection counts from all pixels to form a histogram in the time domain, and then apply a peak-searching procedure to extract the time bins containing signal detections.
(2) We solve the deconvolution problem with a modified SPIRAL-TAP solver [30], which we generalized from a 2D matrix to a 3D matrix. Specifically, with $L_{RD}$ the negative log-likelihood function of Eq. (3), the proposed algorithm solves the following problem:

$$\begin{aligned} \underset{\mathbf{RD}}{\text{minimize}}\quad & \Phi \triangleq L_{RD}(\mathbf{RD};\mathbf{S},\mathbf{h},\mathbf{B}) + \beta_{TV}\,\mathrm{pen}_{TV}(\mathbf{RD}) \\ \text{subject to}\quad & \mathrm{RD}_{i,j,k} \ge 0,\quad \forall\, i,j,k, \end{aligned} \tag{4}$$

where $\beta_{TV} > 0$ weights the total-variation penalty $\mathrm{pen}_{TV}$. Here we impose a transverse-smoothness constraint by using the total-variation (TV) norm to exploit the spatial correlations of natural scenes. After minimization, a depth map is constructed by calculating the average time of arrival for each pixel in the 3D matrix. Further details of the proposed computational algorithm are given in the Supplementary Information.

1. Marino, R. M. & Davis, W. R. Jigsaw: a foliage-penetrating 3D imaging laser radar system. Lincoln Lab. J. 15, 23–36 (2005).
2. Schwarz, B. Lidar: Mapping the world in 3D. Nature Photonics 4, 429 (2010).
3. Glennie, C. L., Carter, W. E., Shrestha, R. L. & Dietrich, W. E. Geodetic imaging with airborne lidar: the Earth's surface revealed. Reports on Progress in Physics 76, 086801 (2013).
4. Smith, D. et al. Topography of the northern hemisphere of Mars from the Mars Orbiter Laser Altimeter. Science 279, 1686–1692 (1998).
5. Abdalati, W. et al. The ICESat-2 laser altimetry mission. Proceedings of the IEEE 98, 735–751 (2010).
6. Gschwendtner, A. B. & Keicher, W. E. Development of coherent laser radar at Lincoln Laboratory. Lincoln Laboratory Journal 12, 383–396 (2000).
7. Buller, G. & Wallace, A. Ranging and three-dimensional imaging using time-correlated single-photon counting and point-by-point acquisition. IEEE Journal of Selected Topics in Quantum Electronics 13, 1006–1015 (2007).
8. Hadfield, R. H. Single-photon detectors for optical quantum information applications. Nature Photonics 3, 696 (2009).
9. Richardson, J. A., Grant, L. A. & Henderson, R. K.
Low dark count single-photon avalanche diode structure compatible with standard nanometer scale CMOS technology. IEEE Photon. Technol. Lett. 21, 1020–1022 (2009).
10. Villa, F. et al. CMOS imager with 1024 SPADs and TDCs for single-photon timing and 3-D time-of-flight. IEEE Journal of Selected Topics in Quantum Electronics 20, 364–373 (2014).
11. McCarthy, A. et al. Long-range time-of-flight scanning sensor based on high-speed time-correlated single-photon counting. Applied Optics 48, 6241–6251 (2009).
12. McCarthy, A. et al. Kilometer-range, high resolution depth imaging via 1560 nm wavelength single-photon detection. Optics Express 21, 8904–8915 (2013).
13. Li, Z. et al. Multi-beam single-photon-counting three-dimensional imaging lidar. Optics Express 25, 10189–10195 (2017).
14. Pawlikowska, A. M., Halimi, A., Lamb, R. A. & Buller, G. S. Single-photon three-dimensional imaging at up to 10 kilometers range. Optics Express 25, 11919–11931 (2017).
15. Kirmani, A. et al. First-photon imaging. Science 343, 58–61 (2014).
16. Altmann, Y. et al. Quantum-inspired computational imaging. Science 361, eaat2298 (2018).
17. Velten, A. et al. Recovering three-dimensional shape around a corner using ultrafast time-of-flight imaging. Nature Communications 3, 745 (2012).
18. Sun, B. et al. 3D computational imaging with single-pixel detectors. Science 340, 844–847 (2013).
19. Gariepy, G., Tonolini, F., Henderson, R., Leach, J. & Faccio, D. Detection and tracking of moving objects hidden from view. Nature Photonics 10, 23 (2016).
20. O'Toole, M., Lindell, D. B. & Wetzstein, G. Confocal non-line-of-sight imaging based on the light-cone transform. Nature 555, 338 (2018).
21. Xu, F. et al. Revealing hidden scenes by photon-efficient occlusion-based opportunistic active imaging. Optics Express 26, 9945–9962 (2018).
22. Saunders, C., Murray-Bruce, J. & Goyal, V. K. Computational periscopy with an ordinary digital camera.
Nature 565, 472 (2019).
23. Shin, D., Kirmani, A., Goyal, V. K. & Shapiro, J. H. Photon-efficient computational 3-D and reflectivity imaging with single-photon detectors. IEEE Transactions on Computational Imaging 1, 112–125 (2015).
24. Altmann, Y., Ren, X., McCarthy, A., Buller, G. S. & McLaughlin, S. Lidar waveform-based analysis of depth images constructed using sparse single-photon data. IEEE Transactions on Image Processing 25, 1935–1946 (2016).
25. Shin, D. et al. Photon-efficient imaging with a single-photon camera. Nature Communications 7, 12046 (2016).
26. Rapp, J. & Goyal, V. K. A few photons among many: Unmixing signal and noise for photon-efficient active imaging. IEEE Transactions on Computational Imaging 3, 445–459 (2017).
27. Lindell, D. B., O'Toole, M. & Wetzstein, G. Single-photon 3D imaging with deep sensor fusion. ACM Transactions on Graphics (TOG) 37, 113 (2018).
28. Morris, P. A., Aspden, R. S., Bell, J. E., Boyd, R. W. & Padgett, M. J. Imaging with a small number of photons. Nature Communications 6, 5913 (2015).
29. Yu, C. et al. Fully integrated free-running InGaAs/InP single-photon detector for accurate lidar applications. Optics Express 25, 14611–14620 (2017).
30. Harmany, Z. T., Marcia, R. F. & Willett, R. M. This is SPIRAL-TAP: Sparse Poisson intensity reconstruction algorithms–theory and practice. IEEE Transactions on Image Processing 21, 1084–1096 (2012).
31. Tobin, R. et al. Three-dimensional single-photon imaging through obscurants. Optics Express 27, 4590–4611 (2019).

[Figure 1 image: map labels 10 km and 45 km; component labels as listed in the caption.]

Figure 1: Illustration of long-range active single-photon LiDAR. Satellite image of the experiment layout in the urban area of Shanghai City, with the single-photon LiDAR positioned on Chongming Island. a, Schematic diagram of experimental setup.
SM, scanning mirror; Cam, camera (visible band); M, mirror; PERM, 45° perforated mirror; PBS, polarization beam splitter; SPAD, single-photon avalanche diode detector; MMF, multimode fiber; PMF, polarization-maintaining fiber; LA, laser (1550 nm); F, filters (long pass and 9 nm bandpass); FF, fiber filter (1.3 nm bandpass); L, lens; HWP, half-wave plate; QWP, quarter-wave plate; EDFA, erbium-doped fiber amplifier. b, Photograph of experimental setup, including the optical system (left) and the electronic control system (right). The optical system consists of a telescope assembly and an optical-component box for shielding. c, Close-up photograph of the target, the Pudong Civil Aviation Building, which is on the opposite shore of the river from Chongming Island. The building is 45 km from the single-photon LiDAR setup.

[Figure 2 image: panel titles Pixelwise ML, Shin et al. 2016, Rapp and Goyal 2017, Proposed method; depth scale in m; annotations "Approximate FoV" and "0.6m".]

Figure 2: Long-range 3D imaging over 45 km. a, Real visible-band image (cropped) of the target taken with a standard astronomical camera. This photograph is substantially blurred because of the inadequate spatial resolution and the air turbulence in the urban environment. The red rectangle indicates the approximate LiDAR FoV. b–e, Reconstruction results obtained with the pixelwise maximum likelihood (ML) method, the photon-efficient algorithm of Shin et al. [23], the unmixing algorithm of Rapp and Goyal [26], and the proposed algorithm, respectively. The single-photon LiDAR recorded an average PPP of ∼2.59, and the SNR was ∼0.03. The calculated relative depth for each pixel is given by the false color (see color scale on right). Our algorithm performs much better than the other state-of-the-art photon-efficient computational algorithms and provides super-resolution sufficient to clearly resolve the 0.6-m-wide windows (see expanded view in inset of panel e).
[Figure 3 image: panels labeled Visible-band image, Ground truth, Pixelwise ML, Shin et al. 2016, Rapp and Goyal 2017, and Proposed method; PSNR values shown: 5.40 dB, 11.40 dB, 5.65 dB, 19.58 dB; depth scale 0–25 m; map marks the target (K11) and the setup, 21.6 km apart.]

Figure 3: Long-range target imaged in daylight over 21.6 km. a, Topology of the experiment. b, Ground-truth image of the target (building K11). c, Visible-band image of the target taken with a standard astronomical camera. d–g, Depth profiles acquired with the proposed single-photon LiDAR in daylight and reconstructed by applying different algorithms to the data with 1.2 signal PPP and SNR = 0.11. d, Reconstruction with the pixelwise ML method. e, Reconstruction with the algorithm of Shin et al. [23]. f, Reconstruction with the algorithm of Rapp and Goyal [26]. g, Reconstruction with the proposed algorithm. The peak signal-to-noise ratio (PSNR) was calculated by comparing each reconstructed image with a high-quality image obtained with a large number of photons. The proposed method yields a much higher PSNR than the other algorithms.

[Figure 4 image: panels labeled Visible-band image, Daylight, and Night, with Zoom In insets; depth scale 0–25 m.]

Figure 4: Long-range target at 21.6 km imaged in daylight and at night. a, Visible-band image of the target taken with a standard astronomical camera. b, Depth profile of the image taken in daylight and reconstructed with signal PPP = 1.2, SNR = 0.11. c, Depth profile of the image taken at night and reconstructed with signal PPP = 1.2, SNR = 0.15.

[Figure 5 image: 3D plot with axes X/m, Y/m, and depth/m (0–250 m); color scale: reflectivity (0.125–0.875).]

Figure 5: Reconstruction of multilayer depth profile of a complex scene.
a, Visible-band image of the target taken with a standard astronomical camera mounted on the imaging system with an f = 700 mm camera lens. b,c, Depth profile acquired by the proposed single-photon LiDAR over 2.1 km and recovered with the proposed computational algorithm. Trees at different depths and their fine features can be identified.
