Cross-lingual Text-independent Speaker Verification using Unsupervised Adversarial Discriminative Domain Adaptation

Speaker verification systems often degrade significantly when there is a language mismatch between training and testing data. Being able to improve cross-lingual speaker verification system using unlabeled data can greatly increase the robustness of …

Authors: Wei Xia, Jing Huang, John H.L. Hansen

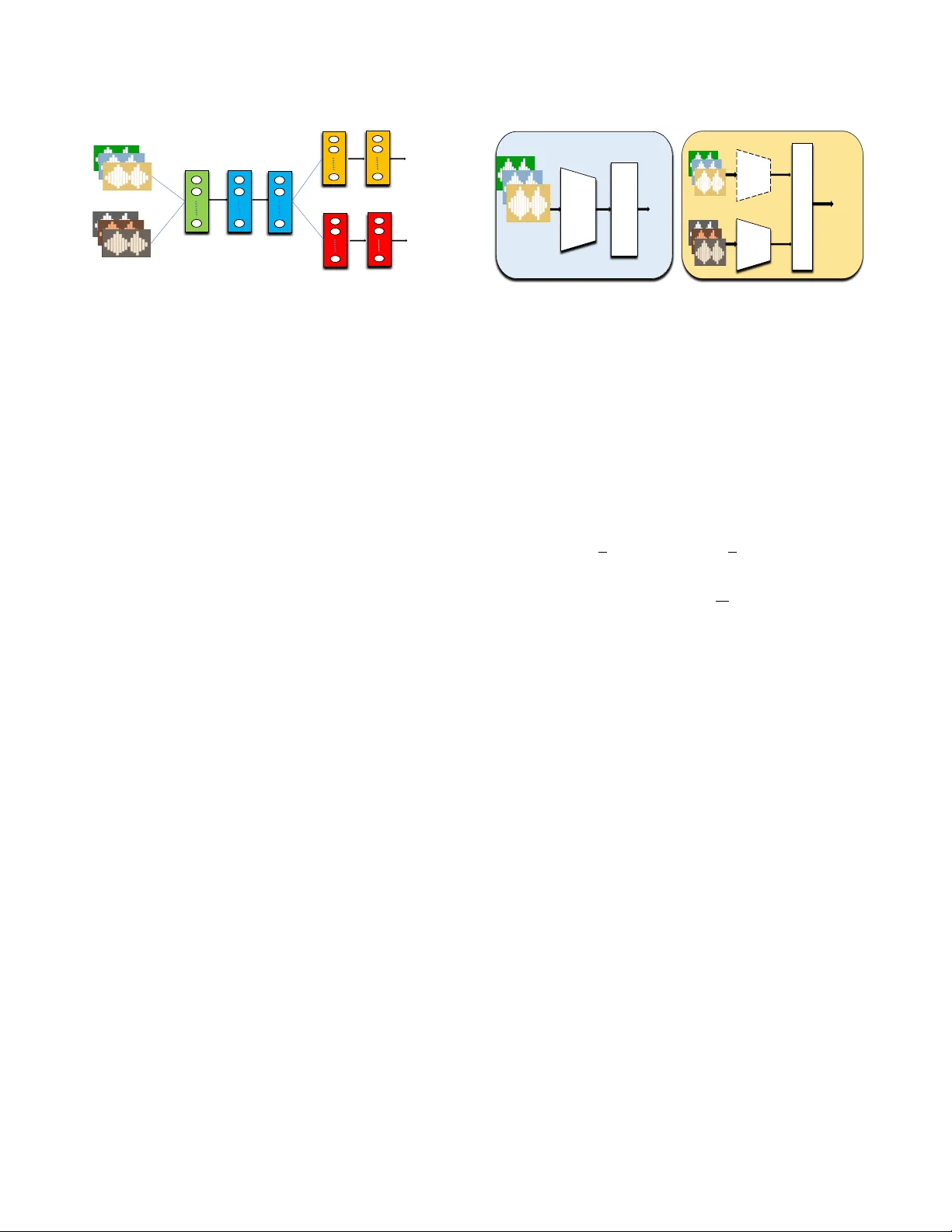

CR OSS-LINGU AL TEXT -INDEPENDENT SPEAKER VERIFICA TION USING UNSUPER VISED AD VERSARIAL DISCRIMIN A TIVE DOMAIN AD APT A TION W ei Xia 1 , Jing Huang 2 , John H.L. Hansen 1 1 Center for Robust Speech Systems, UT -Dallas, TX, USA 2 JD AI Research, Mountain V ie w , CA, USA ABSTRA CT Speaker verification systems often degrade significantly when there is a language mismatch between training and testing data. Being able to improve cross-lingual speak er v erification system using unlabeled data can greatly increase the robustness of the system and reduce hu- man labeling costs. In this study , we introduce an unsupervised Ad- versarial Discriminati ve Domain Adaptation (ADD A) method to ef- fectiv ely learn an asymmetric mapping that adapts the target domain encoder to the source domain, where the target domain and source domain are speech data from different languages. ADD A, together with a popular Domain Adversarial T raining (DA T) approach, are ev aluated on a cross-lingual speaker verification task: the training data is in English from NIST SRE04-08, Mixer 6 and Switchboard, and the test data is in Chinese from AISHELL-I. W e show that with the ADD A adaptation, Equal Error Rate (EER) of the x-vector sys- tem decreases from 9.331% to 7.645%, relatively 18.07% reduction of EER, and 6.32% reduction from DA T as well. Further data anal- ysis of ADDA adapted speaker embedding shows that the learned speaker embeddings can perform well on speaker classification for the target domain data, and are less dependent with respect to the shift in language. Index T erms — Speaker V erification, Adversarial T raining, Do- main Adaptation, Speaker Representation 1. INTR ODUCTION Speaker verification (SV) of fers a natural and flexible option for bio- metric authentication. The text-independent SV system, which does not require the fix ed input voice content, is a flexible and challenging task. In real-world scenarios, ho wev er , speaker verification systems may degrade significantly when training on one language and test it on another . Language mismatch falls into two scenarios that include (i) the speaker verification system is trained on one language, but the enrollment and test data for speakers are in a second language, and (ii) the enrollment data is in one language, but the test data is in a second language. This study focused on the first scenario where the speaker model is trained on English data, b ut the enrollment and test materials for speakers are in a new language, Chinese. Since it is not desirable to re-train the speaker model on a new language, the chal- lenge is to find an alternative solution which would allow such an existing system to maintain performance when enrollment and test speaker data are from a ne w language. Recently , the speaker representation models have moved from the commonly used i-vector model [1, 2, 3], with a probabilistic lin- ear discriminant (PLD A) back-end [4, 5] to a ne w paradigm: speaker embedding trained from deep neural networks. V arious speaker em- beddings based on different network architectures [6, 7] , attention mechanism [8, 9], loss functions [10, 11], noise robustness [12, 13], and training paradigms [14, 15] have been proposed and greatly im- prov e the performance of speaker verification systems. Snyder et al. [6] recently proposed the x-vector model, which is based on a T ime-Delay Deep Neural Network (TDNN) architecture that com- putes speaker embeddings from variable-length acoustic segments. This x-vector model has become very successful in various speaker recognition tasks. W e use it as the baseline in this study . Howe ver , models trained with these deep neural networks may not generalize well to other datasets in different domains. T o alle- viate the domain mismatch problem, we can use domain adaptation methods to reduce the domain shift. W e can compensate the mis- match by estimating the compensation model [16, 17, 18, 19] us- ing unlabeled data and source domain data. Adversarial adaptation methods [20, 21, 12, 22] were also applied to ensure that the net- work cannot distinguish the distributions of training and testing ex- amples. W ang et al. [23] proposed an unsupervised approach based on Domain Adversarial Training (D A T) to address speaker recogni- tion problem in domain mismatched conditions. In this study , we introduce the unsupervised Adversarial Dis- criminativ e Domain Adaptation (ADD A) [24] approach. It w as orig- inally tested on image classification tasks. W e adapt the ADD A approach to the cross-lingual unsupervised adaptation for text- independent speaker verification. Unsupervised adaptation without requiring target domain labels lar gely reduces labeling costs and utilizes a large amount of publicly av ailable online data. Our ap- proach only requires source and unlabeled target domain data to learn an asymmetric mapping that adapts the target domain feature encoder to the source domain. Furthermore, the ADDA uses sep- arate encoders for the source and target domain without assuming that source and target domain data has a similar class distribution. W e sho w that ADD A is more effecti ve yet considerably simpler than other domain-adversarial methods: the source data is in English from NIST SRE04-08, Mixer 6 and Switchboard, and the target data is in Chinese from AISHELL-I. W e show that with the ADD A adapta- tion, Equal Error Rate (EER) of the x-vector system decreases from 9.331% to 7.645%, relatively 18.07% reduction on EER. ADD A also has 12.54% relativ e reduction of EER compared to D A T . In the following sections, we describe the ADD A approach and corresponding baseline systems in Section 2. W e provide detailed explanations of our experiments in Section 3, as well as results and discussions in Section 4. Finally we conclude in Section 5 with fu- ture work. 1.1. Related work A number of domain adaptation approaches have been proposed to alleviate the domain shift problem. For example, W ang et al. [23] ap- ply the D A T technique to alle viate the i-vectors mismatch across dif- ferent domains. They use a multi-task learning frame work to jointly source' target j oint&fea ture&extract or s peake r&classifier& ! (x) domain label Adversarial&Domain&Classifier& " (x) s peak er' label # (x) Fig. 1 : Overvie w of the Domain Adversarial T raining (D A T) frame- work. Adversarial domain classifier has a gradient rev ersal layer . Speaker classifier and domain classifier both tak e input from the joint feature extractor , are optimized to excel in their own tasks. learn a shared feature extractor and two classifiers. W ith a gradi- ent reversal layer in the domain classifier, the shared feature extrac- tor can e xtract domain-inv ariant and speaker-discriminati ve features. In [16, 17], the authors proposed an Inter-Dataset V ariability Com- pensation (ID VC) technique to remov e the mismatch using Nuisance Attribute Projection (N AP). First, a subspace is computed represent- ing all different data-sets and then N AP is used to remov e that sub- space as an i-V ector pre-processing step. All these work were on i-vectors for speaker verification, while our work is on the recently proposed x-vectors and sho ws very promising results. 2. SPEAKER VERIFICA TION SYSTEMS 2.1. The X-vector system W e use a recently proposed successful speaker model called X- vector [6], to extract speaker representations, and a Probabilistic Linear Discriminant Analysis (PLD A) back-end to compare pairs of enrollment and test speaker embeddings. The X-vector model is based on a Time-Delay Deep Neural Network (TDNN) architec- ture that computes speaker embeddings from variable-length acous- tic segments. The network consists of layers that operate on speech frames, a statistics pooling layer that aggre gates o ver the frame-le vel representations, additional layers that operate at the segment-le vel, and finally a softmax output layer . The embeddings are extracted after the statistics pooling layers. 2.2. Cross-lingual adv ersarial training baseline In order to address the cross-lingual speak er verification problem, we first implement a Domain Adv ersarial Neural Netw ork (DANN) [23] using Domain Adversarial Training (D A T) [20] to transfer speaker information from labeled English data to another language where only unlabeled data exists, for example, Chinese. D ANN in Fig. 1 is a Y -shaped network with two discriminative branches: a speaker recognizer and an adversarial language classifier . Both branches take input from a shared feature extractor that aims to learn hidden rep- resentations that capture the underlying information of the speaker and are independent of languages. W e can implement the language independent speaker verifica- tion system assuming that DANN can learn features that perform well on speaker classification for the source and target language data, are independent with respect to the shift in language. This can be done by minimizing the speaker classification loss and maximizing the domain classification loss with a gradient rev ersal layer . DANN Classifier Source- Encoder source' Discriminato r Source- Encoder target class label Ta r g e t Encoder source' Pre 7 tr aining Adversarial-Adaptation domain label Fig. 2 : Overvie w of the proposed Adversarial Discriminative Do- main Adaptation (ADD A) approach. Source DNN encoder is fixed during the adversarial adaptation. mainly has tw o components: 1) a speaker recognizer y for the source data; 2) an adversarial language classifier d that predicts a scalar in- dicating whether the input speech is from the source language or the target language. The two classifiers take input from the shared feature extractor f , which operates on the average of the speaker embeddings. The loss function of D ANN is a multi-task loss which combines the loss of the speaker classifier and the domain classifier with a weight λ . Training D ANN consists in optimizing, E ( θ f , θ y , θ d ) = 1 n n X i =1 L i y ( θ f , θ y ) − λ [ 1 n n X i =1 L i d ( θ f , θ d ) + 1 n 0 N X i = n +1 L i d ( θ f , θ d )] , (1) where θ f , θ y , θ d are parameters of the joint feature extractor and two classifiers, and L y , L d are the prediction and the domain loss func- tions. n and n 0 are the number of samples of the source and target domain data respecti vely . W e can optimize this loss function using stochastic gradient descent to get the parameters, Using this DA T ap- proach, we are able to minimize the div ergence between the source and target feature distributions. Therefore, the learned embeddings are less dependent on the shift in language. 2.3. Adversarial discriminativ e domain adaptation Different from the DA T method which applies a gradient reversal layer to confuse the domain classifier, we apply the Adversarial Dis- criminativ e Domain Adaptation (ADD A) approach to directly learn an asymmetric mapping, in which we modify the tar get model in or- der to match the source distribution. A summary of this entire train- ing process is provided in Fig. 2. Unlike the D A T method which uses a shared feature encoder , our proposed ADD A approach uses separate encoders for the source and tar get domain data. When there is a significant domain shift, the DA T method may not work well since it inherently assumes that source and target domain data has a similar class distribution. W e define input samples x ∈ X with data labels y ∈ Y , where X and Y are input space and output space, respectiv ely . In our speaker verification experiments, x andy are x-vectors and speaker labels. The probabilistic distrib ution D ( x , y ) , howe ver , might be different between training and evaluation dataset due to various do- main mismatch such as language mismatch. W e denote S ( x , y ) and T ( x , y ) as source domain and target domain distribution respec- tiv ely . Our goal is to minimize the distance between the empirical source and target mapping distributions. W e firstly learn a source mapping M s , along with a source classifier C , and then learn to map the target domain encoder to the source domain. W e train the source classification model using a standard cross entropy loss defined belo w , min M s , C L cls ( X s , Y s ) = − E ( x s ,y s ) ∼ ( X s ,Y s ) K X k =1 1 [ k = y s ] log C ( M s ( x s )) , (2) In order to minimize the source and target representation dis- tances, we use a domain discriminator D to classify whether a data point is drawn from the source or the target domain. W e optimize D using an adversarial loss L adv D ( X s , X t , M s , M t ) , defined below: min D L adv D ( X s , X t , M s , M t ) = − E x s ∼ X s [log D ( M s ( x s ))] − E x t ∼ X t [log(1 − D ( M t ( x t )))] , (3) The D A T method uses a gradient reversal layer [20] to learn the mapping by maximizing the discriminator loss directly , where its adversarial loss L adv M = −L adv D . Different from DA T , in order to train the mapping, we use the loss function L adv M defined belo w . This objectiv e has the same fixed-point properties as the minimax loss but pro vides stronger gradients to the target mapping. min M s ,M t L adv M ( X s , X t , D ) = − E x t ∼ X t [log D ( M t ( x t ))] . (4) W e can optimize this objective function in two steps. First, we need to train a discriminativ e source classification model, we choose to use a three-layer Deep Neural Network (DNN) and the input fea- tures are x-vectors. W e start optimizing classification loss L cls ov er source domain mapping function M s and classifier C by training with the labeled source English data, X s and Y s . Because we make M s fixed while learning M t , we can then optimize L adv D and L adv M without revisiting the first objecti ve term. Through this unsupervised adversarial discriminati ve domain adaptation approach, we can adapt the target encoder to the source domain. In the next section, we will present promising results on cross-lingual text-independent speaker verification tasks using ADD A. 3. EXPERIMENT AL SETUP 3.1. English Corpora W e use Speaker Recognition Evaluation (SRE) 04-08, Mixer 6, and Switchboard (SWBD) to train the x-vector model. SRE corpus is part of the Mixer 6 project, which w as designed to support the dev el- opment of rob ust speaker recognition technology by pro viding care- fully collected speech across numerous microphones. Switchboard is a collection of about two-sided telephone conv ersations among thousands of speakers from all areas of the United States. 3.2. Chinese Corpora AISHELL-1 [25] is a subset of the AISHELL-ASR0009 corpus, which is a 500 hours multi-channel mandarin speech corpus de- signed for various speech/speaker processing tasks. Speech utter- ances are recorded at 44.1kHz via microphones, 16kHz via Android phones and 16kHz via iPhones. There are 360 participants in the recording, and speakers’ gen- der , accent, age, and birth-place are recorded as meta-data. About 80 percent of the speakers are from age 16 to 25. Most speakers come from the Northern area of China. The entire corpus includes train- ing and test sets, without speaker overlap. Though the training data provides speaker labels, we do not use any speaker label information of the training data or include it in training our x-vector model. W e only use it for unsupervised domain adaptation. W e call it AISHELL unlabeled training set. The training set contains 120,098 utterances from 340 speak- ers; T est set contains 7,176 utterances from 20 speakers. For each speaker , around 360 utterances (about 26 minutes of speech in to- tal) are released. In order to test our proposed unsupervised ADDA approach, we don’t use any speaker labels of the training data. W e train our x-vector based speaker model on the SRE04-08, Mixer 6, and switchboard dataset, and e valuate on the Chinese AISHELL test 143520 trials. 3.3. Evaluation setup W e use SRE04-08, Mix er6 and Switchboard data to train the TDNN based x-vector model. W e follow the Kaldi SRE16 recipe to aug- ment the training data by adding noises and reverberations. W e use an energy based V AD and the raw feature to train the model are 23-dimensional MFCCs. Having established the x-v ector system us- ing English data, we no w try to address the challenge of ev aluation enrollment and test speakers for a mismatched language, Chinese. T o accomplish this, A set of unlabeled data for the ne w language is needed. W e use the target domain AISHELL unlabeled training data. W e extract x-vectors on source domain SRE and SWBD data and target domain AISHELL unlabeled data to train the adaptation network. W e train the Adversarial Domain Adaptation Network (AD AN) in two steps. First, we train a DNN encoder and classifier on SRE and SWBD x-vectors. Next, we use the pre-trained source model as an initialization for the target DNN encoder and perform adver - sarial adaptation to learn a target domain mapping on the AISEHLL unlabeled x-vectors. During testing, we use AISHELL ev aluation set enrollment x- vectors and test x-vectors as the input to the ADDA, and extract the new vectors ˆ x e , ˆ x t using the trained target encoder of ADD A. Adapted embeddings ˆ x e , ˆ x t are therefore expected to be domain- in variant and speaker discriminati ve representations which stay in the same subspace. W e apply mean and length normalization on the adapted embeddings. For the back-end, we train a Probabilistic Lin- ear Discriminant Analysis (PLD A) model on combined SRE clean and noise augmented data, and compute log-likelihood ratio scores of enrollment and test trials. W e also perform unsupervised PLDA adaptation using Kaldi to utilize the AISHELL unlabeled data. 3.4. Model configuration For this experiment, our base architecture is a three-layer Deep Neu- ral Network which is fine-tuned on the source domain for 100 epochs using a batch size of 128. When training ADD A, the adversarial discriminator consists of three additional fully connected layers: 2 hidden layers and an adversarial discriminator output. W ith the ex- ception of the output, these additionally fully connected layers use a ReLU activ ation function. ADDA target encoder training then pro- ceeds for another 100 epochs with a batch size of 128. For the D A T training, the shared feature encoder is a three-layer DNN. W e use an Adam optimizer with a learning rate 10 − 4 . The speaker classi- fier and the language classifier are two-layer DNNs. T o confuse the language domain classifier , the language classifier has a gradient re- versal layer . W e use a multi-task loss with equal weights to combine the two cross entropy losses. 4. RESUL TS AND DISCUSSIONS 4.1. Results In this section, we sho w experimental results using x-vector , x- vector with D A T and x-vector with ADD A training with and without PLD A adaptations in T able 1. W e use Linear Discriminant Analysis (LD A) to reduce all three embeddings to 256 dimension for compar- ison. Also, we concatenate the D A T embedding with the x-vector since we find it always performs better than a single DA T embed- ding. From T able 1, we observe that our proposed method, ADDA, greatly improves Equal Error Rate (EER) on AISHELL test trials. After ADDA adaptation, EER of the x-vector system decreases from 9.331% to 7.645%, relatively 18.07%. The ADD A approach also achiev es relati vely 12.54% impro vement compared with the concate- nated x-vector and DA T embedding. The major reason that ADD A works better might be that it uses an adversarial discriminator to adapt the target encoder to the source domain. Also, by initializing the target representation space with the pre-trained source model, we can effecti vely learn the asymmetric mapping function. Fig. 3 sho ws the Detection Error T rade-off (DET) curve of our speaker recognition system at three dif ferent settings without PLD A adaptation. From the figure, we see after DA T or ADD A adapta- tion, the overall speaker verification system performance improves significantly compared with the x-v ector system. Further , both False Positiv e Rate (FPR) and False Negati ve Rate (FNR) of the ADD A embedding system reduce by a large margin compared with the x- vector+D A T embedding system. It indicates that ADDA embedding has more in variance to language shift. EER(%) MinDCF x-vector 9.331 0.7755 x-vector + D A T 8.741 0.7475 ADD A embedding 7.645 0.7257 x-vector + PLD A adaptation 9.162 0.7095 x-vector + D A T + PLD A adaptation 7.799 0.6989 ADD A embedding + PLD A adaptation 7.504 0.7062 T able 1 : Speaker verification results using different models with a PLD A back-end. False Positive Rate (FPR)[%] 2 5 10 20 False Negative Rate (FNR)[%] 2 5 10 20 x-vector x-vector+DAT ADDA Fig. 3 : DET curve results with dif ferent speaker representations. (a) x-vector (b) ADD A embedding Fig. 4 : V isualizations of x-vector and ADD A speaker embeddings using t-SNE 4.2. V isualization of speaker embeddings T o in vestigate the effect ADDA has on speaker verification, we further assess the quality of the learned speaker features, using t- SNE [26], we plot embeddings after LD A from same K speakers of the AISHELL test set. The results are presented in Fig. 4. Fig. 4 (a) is the visualization of x-vectors, and Fig. 4 (b) is the visualization of ADD A embedding. It can be seen that the ADDA embeddings hav e more discriminative ability to separate different speakers. Howe ver , for x-vectors, we observe that some utterances from different speak- ers are grouped together and not well separated in the embedding space. Also, for speaker “0764”, it is difficult to separate it from speaker “0765” using both methods. It is probably because these two speakers ha ve v ery similar speaker information. 4.3. Clustering analysis In order to quantitativ ely analyze the quality of adapted speak er rep- resentations, we also perform clustering on the adapted embeddings. Since t-SNE cannot maintain distance information, which is nec- essary to apply most clustering algorithms, we perform K-means clustering after LD A transformed x-vectors and ADDA embeddings. Giv en the knowledge of the ground truth speaker labels, we com- pute the Normalized Mutual Information (NMI) [27] of the K-means clustering assignment. NMI is a metric that measures the agreement of the ground truth labels and the clustering results. The NMI score of x-vectors is 0.787, and the NMI score of ADD A embeddings is 0.802, relatively 1.9% higher . This result is consistent with the vi- sualization using t-SNE. Therefore, we can conclude that with the ADD A adaptation, we can learn more speaker discriminative and language independent speaker embeddings. 5. CONCLUSIONS AND FUTURE WORK W e presented a discriminative adversarial unsupervised adaptation method in this paper . By e xploiting how to alleviate the domain mismatch problem in an English-Chinese cross-lingual speaker ver- ification task, we sho wed that our proposed unsupervised ADD A approach can perform well on speaker classification for the target domain data. Additional data analysis indicated that the representa- tions learned via ADDA can be well separated and are less dependent with respect to the shift in language. In the future, we would like to inv estigate the influence of pho- netic content on cross-lingual text-independent speaker verification. W e intend to use a phoneme decoder to analyze the linguistic factor of speaker models. 6. REFERENCES [1] Patrick K enny , Gilles Boulianne, Pierre Ouellet, and Pierre Dumouchel, “Joint factor analysis versus eigenchannels in speaker recognition, ” IEEE T ransactions on Audio, Speec h, and Language Pr ocessing , vol. 15, no. 4, pp. 1435–1447, 2007. [2] Pa vel Mat ˇ ejka, Ond ˇ rej Glembek, Fabio Castaldo, Md Jahangir Alam, Old ˇ rich Plchot, Patrick Kenny , Luk ´ a ˇ s Burget, and Jan ˇ Cernocky , “Full-co variance ubm and heavy-tailed plda in i- vector speaker verification, ” in Acoustics, Speech and Sig- nal Pr ocessing (ICASSP), IEEE International Conference on , 2011, pp. 4828–4831. [3] John HL Hansen and T aufiq Hasan, “Speaker recognition by machines and humans: A tutorial revie w , ” IEEE Signal pr o- cessing magazine , v ol. 32, no. 6, pp. 74–99, 2015. [4] Patrick K enny , “Bayesian speaker verification with heavy- tailed priors., ” in Odyssey , 2010, p. 14. [5] Simon JD Prince and James H Elder , “Probabilistic linear dis- criminant analysis for inferences about identity , ” in Computer V ision (ICCV), IEEE International Confer ence on , 2007, pp. 1–8. [6] David Snyder , Daniel Garcia-Romero, Gregory Sell, Daniel Pov ey , and Sanjeev Khudanpur , “X-vectors: Robust dnn em- beddings for speaker recognition, ” Acoustics, Speech and Sig- nal Pr ocessing (ICASSP), IEEE International Conference on , 2018. [7] Daniel Michelsanti and Zheng-Hua T an, “Conditional gener- ativ e adversarial networks for speech enhancement and noise- robust speak er verification, ” in INTERSPEECH , 2017. [8] F A Rezaur rahman Chowdhury , Quan W ang, Ignacio Lopez Moreno, and Li W an, “ Attention-based models for text- dependent speaker verification, ” in Acoustics, Speech and Sig- nal Pr ocessing (ICASSP), IEEE International Conference on , 2018, pp. 5359–5363. [9] Shi-Xiong Zhang, Zhuo Chen, Y ong Zhao, Jinyu Li, and Y ifan Gong, “End-to-end attention based text-dependent speaker ver - ification, ” in Spoken Language T echnology W orkshop (SLT) . IEEE, 2016, pp. 171–178. [10] Li W an, Quan W ang, Alan Papir , and Ignacio Lopez Moreno, “Generalized end-to-end loss for speaker verification, ” in Acoustics, Speech and Signal Pr ocessing (ICASSP), IEEE In- ternational Confer ence on , 2018, pp. 4879–4883. [11] Chunlei Zhang, Kazuhito Koishida, and John HL Hansen, “T ext-independent speaker verification based on triplet conv o- lutional neural network embeddings, ” IEEE/ACM T ransactions on Audio, Speech and Language Pr ocessing (T ASLP) , vol. 26, no. 9, pp. 1633–1644, 2018. [12] Hong Y u, Zheng-Hua T an, Zhanyu Ma, and Jun Guo, “ Ad- versarial network bottleneck features for noise robust speaker verification, ” in INTERSPEECH , 2017, pp. 1492–1496. [13] W ei Xia and John HL Hansen, “Speaker recognition with non- linear distortion: Clipping analysis and impact, ” in INTER- SPEECH , 2018, pp. 746–750. [14] Georg Heigold, Ignacio Moreno, Samy Bengio, and Noam Shazeer , “End-to-end text-dependent speaker verification, ” in Acoustics, Speech and Signal Pr ocessing (ICASSP), IEEE In- ternational Confer ence on , 2016, pp. 5115–5119. [15] Hee-soo Heo, Jee-weon Jung, IL-ho Y ang, Sung-hyun Y oon, and Ha-jin Y u, “Joint Training of Expanded End-to-End DNN for T ext-Dependent Speaker V erification, ” in INTERSPEECH , 2017, pp. 1532–1536. [16] Hagai Aronowitz, “Inter dataset variability compensation for speaker recognition, ” in Acoustics, Speech and Signal Pr o- cessing (ICASSP), IEEE International Conference on , 2014, pp. 4002–4006. [17] Ahilan Kanagasundaram, David Dean, and Sridha Sridharan, “Improving out-domain plda speaker verification using unsu- pervised inter-dataset variability compensation approach, ” in Acoustics, Speech and Signal Pr ocessing (ICASSP), IEEE In- ternational Confer ence on , 2015, pp. 4654–4658. [18] Abhinav Misra and John HL Hansen, “Maximum-likelihood linear transformation for unsupervised domain adaptation in speaker verification, ” IEEE/A CM T ransactions on Audio, Speech and Language Pr ocessing (TASLP) , vol. 26, no. 9, pp. 1549–1558, 2018. [19] Abhinav Misra and John HL Hansen, “Modelling and compen- sation for language mismatch in speaker verification, ” Speech Communication , vol. 96, pp. 58–66, 2018. [20] Y aroslav Ganin, Evgeniya Ustino va, Hana Ajakan, Pascal Ger - main, Hugo Larochelle, Franc ¸ ois Laviolette, Mario Marchand, and V ictor Lempitsky , “Domain-adversarial training of neural networks, ” The Journal of Machine Learning Resear ch , vol. 17, no. 1, pp. 2096–2030, 2016. [21] Zhongyi Pei, Zhangjie Cao, Mingsheng Long, and Jianmin W ang, “Multi-adversarial domain adaptation, ” in AAAI Con- fer ence on Artificial Intelligence , 2018. [22] Xilun Chen, Y u Sun, Ben Athiwaratkun, Claire Cardie, and Kilian W einberger , “ Adversarial deep av eraging networks for cross-lingual sentiment classification, ” arXiv preprint arXiv:1606.01614 , 2016. [23] Qing W ang, W ei Rao, Sining Sun, Leib Xie, Eng Siong Chng, and Haizhou Li, “Unsupervised domain adaptation via domain adversarial training for speaker recognition, ” in Acoustics, Speech and Signal Pr ocessing (ICASSP), IEEE International Confer ence on , 2018, pp. 4889–4893. [24] Eric Tzeng, Judy Hoffman, Kate Saenko, and Tre v or Darrell, “ Adversarial discriminati ve domain adaptation, ” in Computer V ision and P attern Recognition (CVPR) , 2017, vol. 1, p. 4. [25] Hui Bu, Jiayu Du, Xingyu Na, Bengu W u, and Hao Zheng, “ Aishell-1: An open-source mandarin speech corpus and a speech recognition baseline, ” in Conference of the Orien- tal Chapter of the International Coordinating Committee on Speech Databases and Speech I/O Systems and Assessment (O- COCOSD A) , 2017, pp. 1–5. [26] Laurens van der Maaten and Geoffre y Hinton, “V isualizing data using t-sne, ” Journal of machine learning resear ch , vol. 9, no. Nov , pp. 2579–2605, 2008. [27] Nguyen Xuan V inh, Julien Epps, and James Bailey , “Informa- tion theoretic measures for clusterings comparison: V ariants, properties, normalization and correction for chance, ” Journal of Machine Learning Resear ch , vol. 11, no. Oct, pp. 2837– 2854, 2010.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment