A robust, low-cost approach to Face Detection and Face Recognition

In the domain of Biometrics, recognition systems based on iris, fingerprint or palm print scans etc. are often considered more dependable due to extremely low variance in the properties of these entities with respect to time. However, over the last d…

Authors: Divya Jyoti, Aman Chadha, Pallavi Vaidya, M. Mani Roja

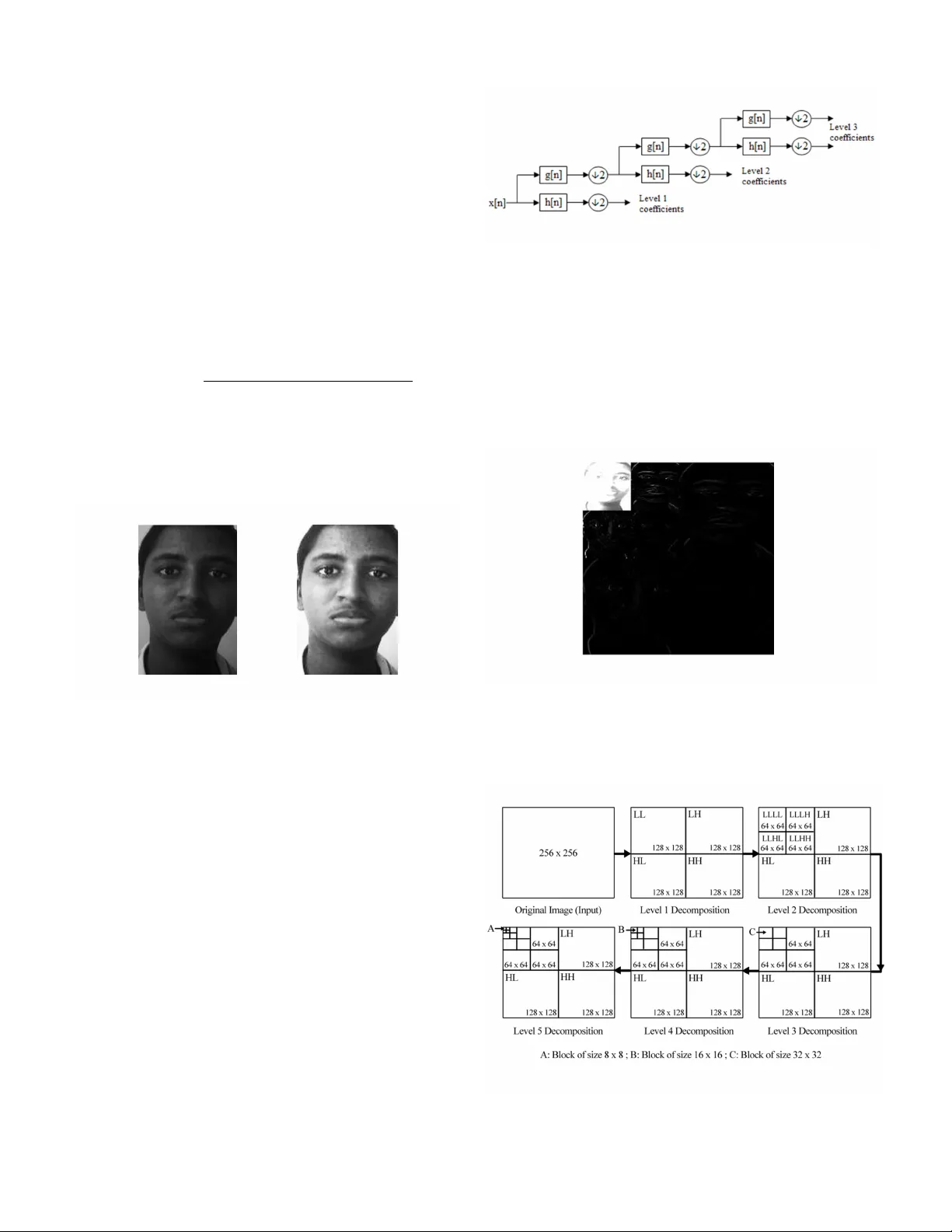

CiiT International Journal of Digital Image Processing, ISSN 0974-9691 (Print) & ISSN 0974-9586 (Online), Vol. 15, No. 10, October 2011

Abstract: In the domain of Biometrics, recognition systems based on iris, fingerprint or palm print scans etc. are often considered more dependable due to extremely low variance in the properties of these entities with respect to time. However, over the last decade data processing capability of computers has increased manifold, which has made real-time video content analysis possible. This shows that the need of the hour is a robust and highly automated Face Detection and Recognition algorithm with credible accuracy rate. The proposed Face Detection and Recognition system using Discrete Wavelet Transform (DWT) accepts face frames as input from a database containing images from low cost devices such as VGA cameras, webcams or even CCTVs, where image quality is inferior. Face region is then detected using properties of L*a*b* color space and only Frontal Face is extracted such that all additional background is eliminated. Further, this extracted image is converted to grayscale and its dimensions are resized to 128 x 128 pixels. DWT is then applied to the entire image to obtain the coefficients. Recognition is carried out by comparison of the DWT coefficients belonging to the test image with those of the registered reference image. On comparison, a Euclidean distance classifier is deployed to validate the test image from the database. Accuracy for various levels of DWT decomposition is obtained and hence, compared.

Keywords: discrete wavelet transform, face detection, face recognition, person identification.

Manuscript received September 11, 2011.
1 D. J. Rajdev is with the Thadomal Shahani Engineering College, Mumbai, 400002, INDIA (phone: +91-8879100684; e-mail: dj.rajdev@gmail.com).
2 A. R. Chadha is with the Thadomal Shahani Engineering College, Mumbai, 400002, INDIA (phone: +91-9930556583; e-mail: aman.x64@gmail.com).
3 P. P. Vaidya is with the Thadomal Shahani Engineering College, Mumbai, 400002, INDIA (e-mail: pallavi.p.vaidya@gmail.com).
4 M. M. Roja is an Associate Professor in the Electronics and Telecommunication Engineering Department, Thadomal Shahani Engineering College, 400050, INDIA (e-mail: maniroja@yahoo.com).

I. INTRODUCTION

A face recognition system is essentially an application [1] intended to identify or verify a person either from a digital image or a video frame obtained from a video source. Although other reliable methods of biometric personal identification exist, e.g., fingerprint analysis or iris scans, these methods inherently rely on the cooperation of the participants, whereas a personal identification system based on analysis of frontal or profile images of the face is often effective without the participant's cooperation or intervention. Automatic identification or verification may be achieved by comparing selected facial features from the image and a facial database. This technique is typically used in security systems. Given a large database of images and a photograph, the problem is to select from the database a small set of records such that one of the image records matches the photograph. The success of the method could be measured in terms of the ratio of the answer list to the number of records in the database.
The recognition problem is made difficult by the great variability in head rotation and tilt, lighting intensity and angle, facial expression, aging, etc. A robust facial recognition system must be able to cope with the above factors and yet provide satisfactory accuracy levels.

A general statement of the problem of machine recognition of faces can [2] be formulated as: given a still or video image of a scene, identify or verify one or more persons in the scene using a stored database of faces. The solution to the problem involves segmentation of faces, feature extraction from face regions, recognition, or verification. In identification problems, the input to the system is an unknown face, and the system reports back the determined identity from a database of known individuals, whereas in verification problems, the system needs to confirm or reject the claimed identity of the input face.

Some of the various applications of face recognition include driving licenses, immigration, national ID, passport, voter registration, security application, medical records, personal device logon, desktop logon, human-robot-interaction, human-computer-interaction, smart cards etc.

Face recognition is such a challenging yet interesting problem that it has attracted researchers who have different backgrounds: pattern recognition, neural networks, computer vision, and computer graphics, hence the literature is vast and diverse. The usage of a mixture of techniques makes it difficult to classify these systems based on what types of techniques they use for feature representation or classification. To have clear categorization, the proposed paper follows the holistic approach [2].
Specifically, the following techniques are employed for facial feature extraction and recognition:

1) Holistic matching methods: These methods use the whole face region as a raw input to the recognition system. One of the most widely used representations of the face region is Eigenpictures, which is inherently based on principal component analysis.

2) Feature-based matching methods: Generally, in these methods, local features such as the eyes, nose and mouth are first extracted and their locations and local statistics are fed as inputs into a classifier.

3) Hybrid methods: These methods use both local features and the whole face region to recognize a face. They could potentially offer the better of the two types of methods.

Most electronic imaging applications desire and require high resolution images. "High resolution" basically means that pixel density within an image is high, and therefore an HR image can offer more details and subtle transitions that may be critical in various applications [19]. For instance, high resolution medical images could be very helpful for a doctor to make an accurate diagnosis. It may be easy to distinguish an object from similar ones using high resolution satellite images, and the performance of pattern recognition in computer vision can easily be improved if such images are provided. Over the past few decades, charge-coupled device (CCD) and CMOS image sensors have been widely used to capture digital images.
Although these sensors are suitable for most imaging applications, the current resolution level and consumer price will not satisfy the future demand [19]. Past studies by researchers and scientists that have investigated the challenging task of face detection and recognition have therefore typically used high resolution images. Moreover, most standard face databases such as the MIT-CBCL Face Recognition Database [21], CMU Multi-PIE [22], The Yale Face Database [23] etc., that are used as a standard test data set by researchers to benchmark their results, also employ high quality images. Results obtained by solutions proposed by researchers are therefore relevant for theoretical understanding of face detection and identification in most cases.

Practical conditions being rarely optimal, a number of factors play an important role in hampering system performance. Image degradation, i.e., loss of resolution caused mainly by large viewing distances as demonstrated in [4], and lack of specialized high resolution image capturing equipment such as commercial cameras are the underlying factors for poor performance of face detection and recognition systems in practical situations. There are two paradigms to alleviate this problem, but both have clear disadvantages. One option is to use super-resolution algorithms to enhance the image as proposed in [20], but as resolution decreases, super-resolution becomes more vulnerable to environmental variations, and it introduces distortions that affect recognition performance. A detailed analysis of super-resolution constraints has been presented in [3].
On the other hand, it is also possible to match in the low-resolution domain by downsampling the training set, but this is undesirable because features important for recognition depend on high frequency details that are erased by downsampling. These features are permanently lost upon performing downsampling and cannot be recovered with upsampling [24]. The proposed system has been designed keeping in view these critical factors and to address such bottlenecks.

II. IDEA OF THE PROPOSED SOLUTION

The database consists of a set of face samples of 50 people. There are 5 test images and 5 training or reference images. Frontal face images are detected and hence, extracted. DWT is applied to the entire image so as to obtain the global features which include approximate coefficients (low frequency coefficients) and detail coefficients (high frequency coefficients). The approximate coefficients thus obtained are stored and the detail coefficients are discarded. Various levels of DWT are realized and their corresponding accuracy rates are determined.

A. Frontal Face Image Detection and Extraction

The face detection problem can be defined as follows: given an arbitrary input image, which could be a digitized video signal or a scanned photograph, determine whether or not there are any human faces in the image and, if there are, return a code corresponding to their location. Face detection as a computer vision task has many applications. It has direct relevance to the face recognition problem, because the first and foremost important step of an automatic human face recognition system is usually identifying and locating the faces in an unknown image [5]. For our purpose, face detection is actually a face localization problem in which the image position of a single face has to be determined [6].
The goal of our facial feature detection is to detect the presence of features, such as eyes, nose, nostrils, eyebrow, mouth, lips, ears, etc., with the assumption that there is only one face in an image [7]. The system should also be robust against human affective states such as happy, sad, disgusted etc. The difficulties associated with face detection systems due to the variations in image appearance such as pose, scale, image rotation and orientation, illumination and facial expression make face detection a difficult pattern recognition problem. Hence, for face detection the following problems need to be taken into account [5]:

1) Size: A face detector should be able to detect faces in different sizes. Thus, the scaling factor between the reference and the face image under test needs to be given due consideration.

2) Expressions: The appearance of a face changes considerably for different facial expressions and thus makes face detection more difficult.

3) Pose variation: Face images vary due to relative camera-face pose, and some facial features such as an eye or the nose may become partially or wholly occluded. Another source of variation is the distance of the face from the camera, changes in which can result in perspective distortion.

4) Lighting and texture variation: Changes in the light source in particular can change a face's appearance and can also cause a change in its apparent texture.

5) Presence or absence of structural components: Facial features such as beards, moustaches and glasses may or may not be present. There may also be variability among these components, including shape, colour and size.

The proposed system employs global feature matching for face recognition. However, all computation takes place only on the frontal face, by eliminating the hair and background as these may vary from one image to another. All systems therefore need frontal face extraction.
One approach to achieve the aforementioned is by manually cropping the test image for the required region or by precisely aligning the user's face with the camera before the test sample is clicked. Both these methods may introduce a high degree of human error and so they have been avoided. Instead, automated Frontal Face Detection and Extraction is put to use. Therefore, a robust automatic face recognition system should be capable of handling the above problem with no need for human intervention. Thus, it is practical and advantageous to realize automatic face detection in a functional face recognition system.

Commonly used methods for skin detection include Gabor filters, neural networks and template matching. It has been proved that Gabor filters give optimum output for a wide range of variations in the test image with respect to the user image, but it is the most time intensive procedure [8]. Moreover, it is unlikely that the test image would be severely out of sync for on the spot face recognition, so this method is not used. Most neural-network based algorithms [18], [19] are tedious and require training samples for different skin types, which add to the already vast reference image database; hence, even this does not fit the program's requirements. Even template matching has severe drawbacks, including high computational cost [10], and fails to work as expected when the user's face is positioned at an angle in the test image.

After considering all the above factors, a classical appearance based methodology is applied to extract the frontal face. The default sRGB colour space is transformed to L*a*b* gamut, because L*a*b* separates intensity from a and b colour components [11].
L*a*b* colour is designed to approximate human vision in contrast to the RGB and CMYK colour models. It aspires to perceptual uniformity, and its L component closely matches human perception of lightness. It can thus be used to make accurate colour balance corrections by modifying output curves in the a and b components, or to adjust the lightness contrast using the L component. In RGB or CMYK spaces, which model the output of physical devices rather than human visual perception, these transformations can only be done with the help of appropriate blend modes in the editing application [10]. This distinction makes L*a*b* space more perceptually uniform as compared to sRGB, and thus identifying tones, and not just a single colour, can be accomplished using L*a*b* space. Fig. 1 shows the RGB colour model (B) relating to the CMYK model (C). The larger gamut of L*a*b* (A) gives more of a spectrum to work with, thus making the gamut of the device the only limitation.

Tone identification is applied to detect skin by calculating the gray threshold of the a and b colour components and then converting the image to pure black and white (BW) using the obtained threshold. Thus, RGB colour space can separate out only specific pigments, but L*a*b* space can separate out tones. Fig. 2 shows skin colour differentiation in the form of white colour using L*a*b* space, whereas Fig. 4 shows no such differentiation using RGB. The extracted frontal face has been shown in Fig. 3. There is a higher probability of the skin surface being the lighter part of the image as compared to the gray threshold [12]. This may happen due to illumination and natural skin colour (in most cultures), so pure white regions in the black and white image correspond to skin.
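The tone-identification step above can be sketched as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: the paper does not say how the "gray threshold" is computed, so Otsu's between-class variance criterion is assumed here; pixels are plain 8-bit sRGB tuples; and the function names (`srgb_to_lab`, `otsu_threshold`, `binarize`) and sample pixel values are hypothetical. Only the a plane is thresholded in the example; the same `binarize` call can be applied to the b plane, and how the two planes are combined is left open because the text does not specify it.

```python
def srgb_to_lab(r, g, b):
    """Convert one 8-bit sRGB pixel to CIE L*a*b* (D65 reference white)."""
    def linearize(channel):
        c = channel / 255.0
        return c / 12.92 if c <= 0.04045 else ((c + 0.055) / 1.055) ** 2.4

    rl, gl, bl = linearize(r), linearize(g), linearize(b)
    # Linear sRGB -> CIE XYZ, each axis divided by the D65 white point.
    x = (0.4124564 * rl + 0.3575761 * gl + 0.1804375 * bl) / 0.95047
    y = (0.2126729 * rl + 0.7151522 * gl + 0.0721750 * bl) / 1.00000
    z = (0.0193339 * rl + 0.1191920 * gl + 0.9503041 * bl) / 1.08883

    def f(t):
        delta = 6 / 29
        return t ** (1 / 3) if t > delta ** 3 else t / (3 * delta ** 2) + 4 / 29

    fx, fy, fz = f(x), f(y), f(z)
    return 116 * fy - 16, 500 * (fx - fy), 200 * (fy - fz)


def otsu_threshold(values):
    """Pick the cut that maximizes between-class variance (assumed criterion)."""
    levels = sorted(set(values))
    best_cut, best_score = levels[0], -1.0
    # Try a cut at every distinct level except the largest one,
    # so both classes are always non-empty.
    for cut in levels[:-1]:
        below = [v for v in values if v <= cut]
        above = [v for v in values if v > cut]
        weight = len(below) * len(above) / len(values) ** 2
        gap = sum(below) / len(below) - sum(above) / len(above)
        score = weight * gap ** 2
        if score > best_score:
            best_cut, best_score = cut, score
    return best_cut


def binarize(values):
    """True (white) where a value lies above the cut, False (black) otherwise."""
    cut = otsu_threshold(values)
    return [v > cut for v in values]


# Two skin-like pixels and two green background pixels (illustrative values only).
pixels = [(220, 170, 150), (205, 150, 130), (40, 160, 60), (30, 140, 50)]
a_plane = [srgb_to_lab(*p)[1] for p in pixels]
a_mask = binarize(a_plane)  # white entries mark candidate skin pixels
```

A per-pixel Python loop like this is far too slow for real frames; it is meant only to make the colour-space conversion and thresholding explicit.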
It is assumed that a face will have at least one hole, i.e., a small patch of absolute black due to eyes, chin, dimples etc. [11], and on the basis of the presence of holes the frontal face is separated from other skin surfaces like hands. A bounding box is created around the Frontal Face and, after cropping the excess area, frontal face extraction is complete.

The above technique has been tested extensively on images obtained from standalone VGA cameras, webcams and camera equipped mobile devices having a resolution of 640 × 480. Even for resolutions as low as 320 × 200, where the test image is poorly illuminated or extremely grainy, the algorithm was able to successfully extract the frontal face from test images. Thus, the proposed system is robust enough to achieve the desired result even when low cost equipment like CCTVs and low resolution webcams are used. Also, since the algorithm works as expected with equipment of inferior picture quality, such as CCTVs and low resolution webcams, it can be called a low-cost approach to face detection. The extracted frontal face image is then fed as an input to the DWT-based face recognition process.

Fig. 1. RGB, CMYK and L*a*b* Colour Model

Fig. 2. Reference image (left), image in black and white a plane (middle) and image in black and white b plane (right)

Fig. 3. Image in L*a*b* color space (left), frontal face extracted image (middle) and grayscale resized image (right)

Fig. 4. B&W image of red color space (left), B&W image of green color space (middle) and B&W image of blue color space (right)

B. Normalization

Since the facial images are captured at different instants of the day or on different days, the intensity for each image may exhibit variations.
To avoid these light intensity variations, the test images are normalized so as to have an average intensity value with respect to the registered image. The average intensity value of the registered images is calculated as the summation of all pixel values divided by the total number of pixels. Similarly, the average intensity value of the test image is calculated. The normalization value is calculated as:

$$\text{Normalization Value} = \frac{\text{Average value of reference image}}{\text{Average value of test image}} \tag{1}$$

This value is multiplied with each pixel of the test image. Thus we get a normalized image having an average intensity with respect to that of the registered image. Fig. 5 shows the test image and the corresponding normalized image.

Fig. 5. Test Image and Normalized Image

C. Discrete Wavelet Transform

DWT [13] is a transform which provides the time-frequency representation. Often a particular spectral component occurring at any instant is of particular interest [14]. In these cases it may be very beneficial to know the time intervals in which these particular spectral components occur. For example, in EEGs, the latency of an event-related potential is of particular interest. DWT is capable of providing the time and frequency information simultaneously, hence giving a time-frequency representation of the signal. In numerical analysis and functional analysis, DWT is any wavelet transform for which the wavelets are discretely sampled.

In DWT, an image can be analyzed by passing it through an analysis filter bank followed by a decimation operation. The analysis filter bank consists of a low pass and a high pass filter at each decomposition stage. When the signal passes through the filters, it splits into two bands. The low pass filter, which corresponds to an averaging operation, extracts the coarse information of the signal. The high pass filter, which corresponds to a differencing operation, extracts the detail information of the signal. Fig. 6 shows the filtering operation of DWT.

Fig. 6. DWT filtering operation

A two dimensional transform is accomplished by performing two separate one dimensional transforms. First the image is filtered along the rows and decimated by two. The sub image is then filtered along the columns and decimated by two. This operation splits the image into four bands, namely LL, LH, HL and HH. Further decompositions can be achieved by acting upon the LL sub band successively, and the resultant image is split into multiple bands. For representational purposes, the Level 2 decomposition of the normalized test image of Fig. 5 is shown in Fig. 7.

Fig. 7. Level 2 DWT decomposition

At each level in the above diagram, the frontal face image is decomposed into low and high frequencies. Due to the decomposition process, the size of the input signal must be a multiple of $2^n$, where n is the number of levels. The size of the input image at different levels of decomposition is illustrated in Fig. 8.

Fig. 8. Size of the image at different levels of DWT decomposition

The first DWT was invented by the Hungarian mathematician Alfréd Haar. The Haar wavelet [15] is the first known wavelet and was proposed in 1909 by Alfréd Haar. The term wavelet was coined much later. The Haar wavelet is also the simplest possible wavelet. Wavelets are mathematical functions developed for the purpose of sorting data by frequency. Translated data can then be sorted at a resolution which matches its scale. Studying data at different levels allows for the development of a more complete picture. Both small features and large features are discernible because they are studied separately.
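The normalization of Eq. (1) and the row-then-column filtering described above can be sketched in a few lines. This is a minimal illustration, not the authors' implementation. It assumes images are plain Python lists of rows whose dimensions stay even at every level, uses pairwise averaging as the low pass filter (the Haar choice), keeps only the LL band, and compares two coefficient sets with the Euclidean distance mentioned in the abstract. All function names are hypothetical.

```python
import math


def mean_intensity(image):
    """Sum of all pixel values divided by the number of pixels."""
    return sum(sum(row) for row in image) / (len(image) * len(image[0]))


def normalize(test, reference):
    """Scale the test image by the normalization value of Eq. (1).

    Assumes the test image is not entirely black (mean intensity > 0).
    """
    factor = mean_intensity(reference) / mean_intensity(test)
    return [[pixel * factor for pixel in row] for row in test]


def low_pass(values):
    """Averaging filter followed by decimation by two."""
    return [(values[i] + values[i + 1]) / 2 for i in range(0, len(values), 2)]


def ll_band(image):
    """One decomposition level, keeping only the LL (approximation) band."""
    rows = [low_pass(row) for row in image]              # filter along the rows
    cols = [low_pass(list(col)) for col in zip(*rows)]   # then along the columns
    return [list(row) for row in zip(*cols)]             # transpose back to rows


def approximation(image, levels):
    """Apply ll_band repeatedly, feeding each LL band into the next level."""
    for _ in range(levels):
        image = ll_band(image)
    return image


def euclidean_distance(coeffs_a, coeffs_b):
    """Euclidean distance between two equally sized coefficient grids."""
    return math.sqrt(sum((x - y) ** 2
                         for row_a, row_b in zip(coeffs_a, coeffs_b)
                         for x, y in zip(row_a, row_b)))


sample = [[1, 3, 5, 7],
          [1, 3, 5, 7],
          [2, 2, 6, 6],
          [4, 4, 8, 8]]
level_1 = approximation(sample, 1)   # 2 x 2 grid of block averages
level_2 = approximation(sample, 2)   # single overall average
```

With a 128 × 128 input, `approximation(image, 3)` returns a 16 × 16 grid, i.e. 256 coefficients, which matches the Level 3 row of Table I.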
Unlike the Discrete Cosine Transform (DCT), the wavelet transform is not Fourier-based and hence does a better job of handling discontinuities in data [16]. For an input represented by a list of $2^n$ numbers, the Haar wavelet transform may be considered to simply pair up input values, storing the difference and passing the sum. This process is repeated recursively, pairing up the sums to provide the next scale, finally resulting in $2^n - 1$ differences and one final sum. Each step in the forward Haar transform calculates a set of wavelet coefficients and a set of averages. If a data set $s_0, s_1, \ldots, s_{N-1}$ contains N elements, there will be N/2 averages and N/2 coefficient values. The averages are stored in the lower half of the N element array and the coefficients are stored in the upper half. The averages become the input for the next step in the wavelet calculation, where for iteration i+1, $N_{i+1} = N_i/2$. The Haar wavelet operates on data by calculating the sums and differences of adjacent elements. The Haar equations to calculate an average ($a_i$) and a wavelet coefficient ($c_i$) from an odd and even element in the data set can be given as:

$$a_i = \frac{S_i + S_{i+1}}{2} \tag{2}$$

$$c_i = \frac{S_i - S_{i+1}}{2} \tag{3}$$

In wavelet terminology, the Haar average is calculated by the scaling function while the coefficient is calculated by the wavelet function.

D. Inverse Discrete Wavelet Transform

The data input to the forward transform can be perfectly reconstructed using the following equations:

$$S_i = a_i + c_i \tag{4}$$

$$S_{i+1} = a_i - c_i \tag{5}$$

After applying DWT, we take the approximate coefficients, i.e., the output coefficients of the low pass filters. High pass coefficients are discarded since they provide detail information which serves no practical use for our application. Various levels of DWT are used to reduce the number of coefficients.

III. IMPLEMENTATION STEPS

The image size used in the project work is 128 × 128 pixels. On applying the wavelet transform, the image is divided into approximate coefficients and detail coefficients. Level 1 yields the number of approximate coefficients as 64 × 64 = 4096. The approximate coefficients (low frequency coefficients) are stored and the detail coefficients (high frequency coefficients) are discarded. These approximate coefficients are used as inputs to the next level. Level 2 yields the number of approximate coefficients as 32 × 32 = 1024. These steps are repeated until an improvement in the recognition rate is observed. At each level the detail coefficients are neglected and the approximate coefficients are used as inputs to the next level. These approximate coefficients of the input image and the registered image are extracted. Each set of coefficients belonging to the test image is compared with those of the registered image by taking the Euclidean distance, and the recognition rate is calculated. Table I shows the comparison carried out at each level and its recognition rate. Upon inspecting the results obtained, we can infer that Level 3 offers better performance in comparison to other levels. Hence the images are subjected to decomposition only up to Level 3.

TABLE I. COMPARISON OF VARIOUS LEVELS OF DWT

| Levels | Coefficients | Recognition rate without normalized image | Recognition rate with normalized image |
|---|---|---|---|
| Level 1 | 4096 | 85.1% | 91.4% |
| Level 2 | 1024 | 91.4% | 91.4% |
| Level 3 | 256 | 93.6% | 95.7% |
| Level 4 | 64 | 89% | 93.6% |
| Level 5 | 16 | 87.6% | 93.6% |

IV. FUTURE WORK

The proposed face detection algorithm is time-efficient, with an execution time of less than 1.75 seconds on an Intel Core 2 Duo 2.2 GHz processor. Due to its speed and robustness, it can further be extended for real time face detection and identification in video systems. Also, the proposed face recognition method can be coupled with recognition using local features, thus leading to an improvement in accuracy.

V. CONCLUSION

The proposed face detection and extraction scheme was able to successfully extract the frontal face for poor resolutions as low as 320 × 200, even when the original image was poorly illuminated or extremely grainy. Thus, images obtained from low cost equipment like CCTVs and low resolution webcams could be processed by the algorithm. For face recognition, the recognition rate for global features using various levels of DWT was calculated. Generally, the recognition rate was found to improve upon normalization. Level 3 DWT decomposition gives a superior recognition rate as compared to other decomposition levels.

REFERENCES

[1] Z. Hafed, "Face Recognition Using DCT," International Journal of Computer Vision, 2001, pp. 167-188.

[2] W. Zhao, R. Chellappa, "Face Recognition: A Literature Survey," ACM Computing Surveys, Vol. 35, No. 4, December 2003, pp. 399-458, p. 9.

[3] S. Baker, T. Kanade, "Limits on super-resolution and how to break them," IEEE Transactions on Pattern Analysis and Machine Intelligence, Vol. 24, No. 9, pp. 1167-1183, September 2002.

[4] A. Braun, I. Jarudi and P. Sinha, "Face Recognition as a Function of Image Resolution and Viewing Distance," Journal of Vision, September 23, 2011, Vol. 11, No. 11, Article 666.

[5] L. S. Sayana, M. Tech Dissertation, Face Detection, Indian Institute of Technology (IIT) Bombay, p. 5, pp. 10-15.

[6] C. Schneider, N. Esau, L. Kleinjohann, B. Kleinjohann, "Feature based Face Localization and Recognition on Mobile Devices," Intl. Conf. on Control, Automation, Robotics and Vision, Dec. 2006, pp. 1-6.

[7] L. V. Praseeda, S. Kumar, D. S. Vidyadharan, "Face detection and localization of facial features in still and video images," IEEE Intl. Conf. on Emerging Trends in Engineering and Technology, 2008, pp. 1-2.

[8] K. Chung, S. C. Kee, S. R. Kim, "Face Recognition Using Principal Component Analysis of Gabor Filter Responses," International Workshop on Recognition, Analysis, and Tracking of Faces and Gestures in Real-Time Systems, 1999, p. 53.

[9] T. Kawanishi, T. Kurozumi, K. Kashino, S. Takagi, "A Fast Template Matching Algorithm with Adaptive Skipping Using Inner-Subtemplates' Distances," Vol. 3, 17th International Conference on Pattern Recognition, 2004, pp. 654-65.

[10] D. Margulis, Photoshop Lab Colour: The Canyon Conundrum and Other Adventures in the Most Powerful Colourspace, Pearson Education. ISBN 0321356780, 2006.

[11] J. Cai, A. Goshtasby, and C. Yu, "Detecting human faces in colour images," Image and Vision Computing, Vol. 18, No. 1, 1999, pp. 63-75.

[12] Singh, D. Garg, Soft computing, Allied Publishers, 2005, p. 222.

[13] S. Jayaraman, S. Esakkirajan, T. Veerakumar, Digital Image Processing, McGraw Hill, 2008.

[14] S. Assegie, M.S. thesis, Department of Electrical and Computer Engineering, Purdue University, Efficient and Secure Image and Video Processing and Transmission in Wireless Sensor Networks, pp. 5-7.

[15] P. Goyal, "NUC algorithm by calculating the corresponding statistics of the decomposed signal," International Journal on Computer Science and Technology (IJCST), Vol. 1, Issue 2, pp. 1-2, December 2010.

[16] Y. Ma, C. Liu, H. Sun, "A Simple Transform Method in the Field of Image Processing," Proceedings of the Sixth International Conference on Intelligent Systems Design and Applications, 2006, pp. 1-2.

[17] H. A. Rowley, S. Baluja and T. Kanade, "Neural network-based face detection," IEEE Transactions on Pattern Analysis and Machine Intelligence, Vol. 20, No. 1, pp. 23-38, January 1998.

[18] P. Latha, L. Ganesan and S. Annadurai, "Face Recognition Using Neural Networks," Signal Processing: An International Journal (SPIJ), Volume 3, Issue 5, pp. 153-160.

[19] S. Park, M. Park and M. Kang, "Super-resolution image reconstruction: a technical overview," Signal Processing Magazine, pp. 21-36, May 2003.

[20] P. Hennings-Yeomans, S. Baker, and B.V.K. Vijaya Kumar, "Recognition of Low-Resolution Faces Using Multiple Still Images and Multiple Cameras," Proceedings of the IEEE International Conference on Biometrics: Theory, Systems, and Applications, pp. 1-6, September 2008.

[21] MIT-CBCL Face Recognition Database, Center for Biological & Computational Learning (CBCL), Massachusetts Institute of Technology, Available: http://cbcl.mit.edu/software-datasets/heisele/facerecognition-database.html, July 2011.

[22] Multi-PIE Database, Carnegie Mellon University, Available: http://www.multipie.org, July 2011.

[23] The Yale Face Database, Department of Computer Science, Yale University, Available: http://cvc.yale.edu/projects/yalefacesB/yalefacesB.html, June 2011.

[24] T. Frajka, K. Zeger, "Downsampling dependent upsampling of images," Signal Processing: Image Communication, Vol. 19, No. 3, pp. 257-265, March 2004.

Divya P. Jyoti (M'2008) was born in Bhopal (M.P.) in India on April 24, 1990.
She is currently pursuing her undergraduate studies in the Electronics and Telecommunication Engineering discipline at Thadomal Shahani Engineering College, Mumbai. Her fields of interest include Image Processing and Human-Computer Interaction. She has 4 papers in International Conferences and Journals to her credit.

Aman R. Chadha (M'2008) was born in Mumbai (M.H.) in India on November 22, 1990. He is currently pursuing his undergraduate studies in the Electronics and Telecommunication Engineering discipline at Thadomal Shahani Engineering College, Mumbai. His special fields of interest include Image Processing, Computer Vision (particularly, Pattern Recognition) and Embedded Systems. He has 4 papers in International Conferences and Journals to his credit.

Pallavi P. Vaidya (M'2006) was born in Mumbai (M.H.) in India on March 18, 1985. She graduated with a B.E. in Electronics & Telecommunication Engineering from Maharashtra Institute of Technology (M.I.T.), Pune in 2006, and completed her post-graduation (M.E.) in Electronics & Telecommunication Engineering from Thadomal Shahani Engineering College (TSEC), Mumbai University in 2008. She is currently working as a Senior Engineer at a premier shipyard construction firm. Her special fields of interest include Image Processing and Biometrics.

M. Mani Roja (M'1990) was born in Tirunelveli (T.N.) in India on June 19, 1969. She received a B.E. in Electronics & Communication Engineering from GCE Tirunelveli, Madurai Kamraj University in 1990, and an M.E. in Electronics from Mumbai University in 2002. Her employment experience includes 21 years as an educationist at Thadomal Shahani Engineering College (TSEC), Mumbai University. She holds the post of an Associate Professor in TSEC. Her special fields of interest include Image Processing and Data Encryption. She has over 20 papers in National / International Conferences and Journals to her credit. She is a member of IETE, ISTE, IACSIT and ACM.
