Quaternion Equivariant Capsule Networks for 3D Point Clouds

We present a 3D capsule module for processing point clouds that is equivariant to 3D rotations and translations, as well as invariant to permutations of the input points. The operator receives a sparse set of local reference frames, computed from an …

Authors: Yongheng Zhao, Tolga Birdal, Jan Eric Lenssen

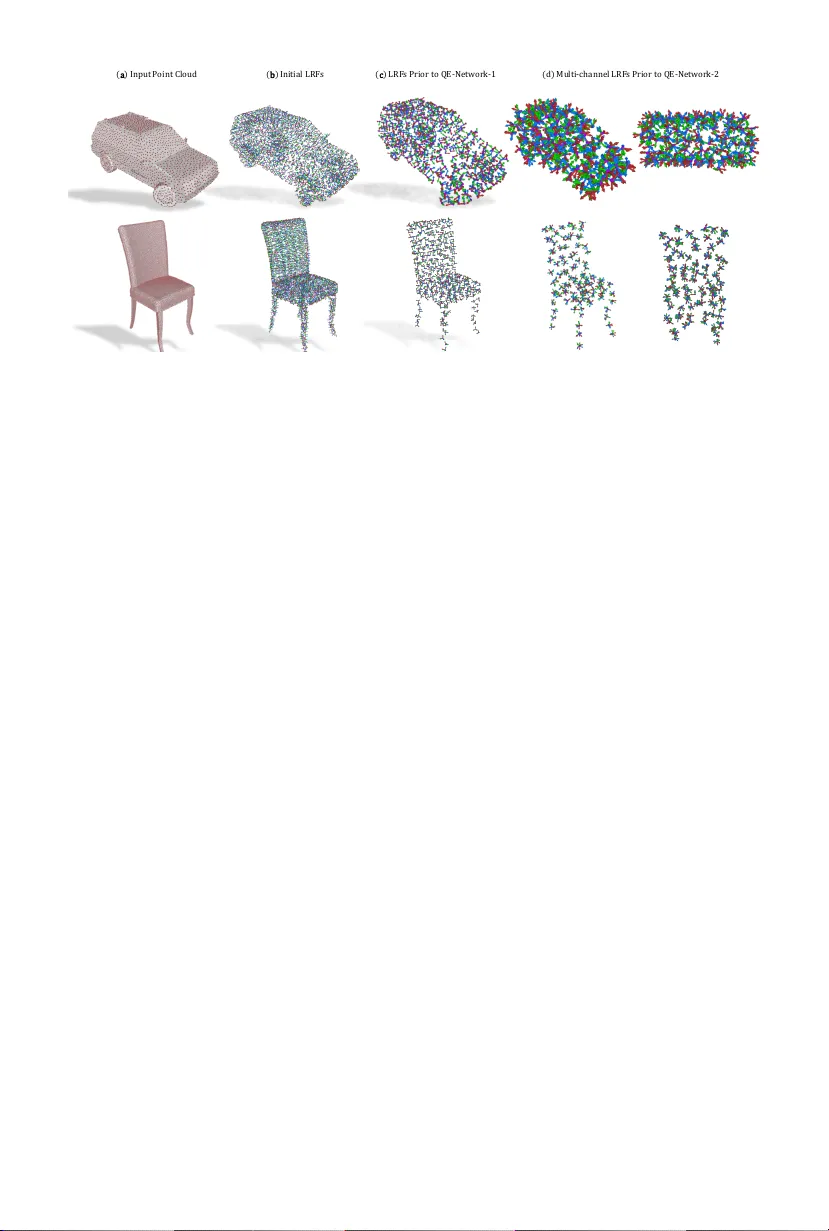

Yongheng Zhao$^{1,3,*}$, Tolga Birdal$^{2,*}$, Jan Eric Lenssen$^{4}$, Emanuele Menegatti$^{1}$, Leonidas Guibas$^{2}$, and Federico Tombari$^{3,5}$

$^1$ University of Padova, $^2$ Stanford University, $^3$ TU Munich, $^4$ TU Dortmund, $^5$ Google

Abstract. We present a 3D capsule module for processing point clouds that is equivariant to 3D rotations and translations, as well as invariant to permutations of the input points. The operator receives a sparse set of local reference frames, computed from an input point cloud and establishes end-to-end transformation equivariance through a novel dynamic routing procedure on quaternions. Further, we theoretically connect dynamic routing between capsules to the well-known Weiszfeld algorithm, a scheme for solving iterative re-weighted least squares (IRLS) problems with provable convergence properties. It is shown that such group dynamic routing can be interpreted as robust IRLS rotation averaging on capsule votes, where information is routed based on the final inlier scores. Based on our operator, we build a capsule network that disentangles geometry from pose, paving the way for more informative descriptors and a structured latent space. Our architecture allows joint object classification and orientation estimation without explicit supervision of rotations. We validate our algorithm empirically on common benchmark datasets. We release our sources under: https://tolgabirdal.github.io/qecnetworks.

Keywords: 3D, equivariance, disentanglement, rotation, quaternion

1 Introduction

It is now well understood that in order to learn a compact and informative representation of the input data, one needs to respect the symmetries in the problem domain [17,73].
Arguably, one of the primary reasons for the success of 2D convolutional neural networks (CNN) is the translation-invariance of the 2D convolution acting on the image grid [29,36]. Recent trends aim to transfer this success into the 3D domain in order to support many applications such as shape retrieval, shape manipulation, pose estimation, 3D object modeling and detection, etc. There, the data is naturally represented as sets of 3D points [55,57]. Unfortunately, an extension of CNN architectures to 3D point clouds is non-trivial due to two reasons: 1) point clouds are irregular and unorganized, 2) the group of transformations that we are interested in is more complex as 3D data is often observed under arbitrary non-commutative $SO(3)$ rotations. As a result, learning appropriate embeddings requires 3D point-networks to be equivariant to these transformations, while also being invariant to point permutations.

$^*$ First two authors contributed equally to this work.

Fig. 1. Panels: (a) hierarchical voting for rotations, (b) pairwise shape alignment. (a) Our network operates on local reference frames (LRF) of an input point cloud (i). A hierarchy of quaternion equivariant capsule modules (QEC) then pools the LRFs to a set of latent capsules (ii, iii) disentangling the activations from poses. We can use activations in classification and the capsule (quaternion) with the highest activation in absolute (canonical) pose estimation without needing the supervision of rotations. (b) Our siamese variant can also solve for the relative object pose by aligning the capsules of two shapes with different point samplings. Our network directly consumes point sets and LRFs. Meshes are included only to ease understanding.

In order to fill this gap, we present a quaternion equivariant point capsule network that is suitable for processing point clouds and is equivariant to $SO(3)$ rotations, compactly parameterized by quaternions, while also preserving translation and permutation invariance. Inspired by the local group equivariance [40,17], we efficiently cover $SO(3)$ by restricting ourselves to a sparse set of local reference frames (LRFs) that collectively determine the object orientation. The proposed quaternion equivariant capsule (QEC) module deduces equivariant latent representations by robustly combining those LRFs using the proposed Weiszfeld dynamic routing with inlier scores as activations, so as to route information from one layer to the next. Hence, our latent features comprise local orientations and activations, disentangling orientation from evidence of object existence. Such explicit and factored storage of 3D information is unique to our work and allows us to perform rotation estimation jointly with object classification. Our final architecture is a hierarchy of QEC modules, where LRFs are routed from lower level to higher level capsules as shown in Fig. 1. We use classification error as the only training cue and adapt a Siamese version for regression of the relative rotations. We neither explicitly supervise the network with pose annotations nor train by augmenting rotations. In summary, our contributions are:

1. We propose a novel, fully $SO(3)$-equivariant capsule module that produces invariant latent representations while explicitly decoupling the orientation into capsules. Notably, equivariance results have not been previously achieved for $SO(3)$ capsule networks.
2. We connect dynamic routing between capsules [60] and generalized Weiszfeld iterations [4]. Based on this connection, we theoretically argue for the convergence of the included rotation estimation on votes and extend our understanding of dynamic routing approaches.
3. We propose a capsule network that is tailored for simultaneous classification and orientation estimation of 3D point clouds. We experimentally demonstrate the capabilities of our network on classification and orientation estimation on ModelNet10 and ModelNet40 3D shape data.

2 Related Work

Deep learning on point sets. The capability to process raw, unordered point clouds within a neural network was introduced by the successful PointNet [55], thanks to its point-wise convolutions and permutation invariant pooling functions. Many works have extended PointNet, primarily to increase the local receptive field size [57,42,62,71]. Point clouds are generally thought of as sets. This makes any permutation-invariant network that can operate on sets an amenable choice for processing points [81,58]. Unfortunately, common neural network operators in this category are solely equivariant to permutations and translations but to no other groups.

Equivariance in neural networks. Early attempts to achieve invariant data representations usually involved data augmentation techniques to accomplish tolerance to input transformations [49,56,55]. Motivated by the difficulty associated with augmentation efforts and acknowledging the importance of theoretically equivariant or invariant representations, the recent years have witnessed a leap in theory and practice of equivariant neural networks [6,37].

While laying out the fundamentals of the group convolution, G-CNNs [18] guaranteed equivariance with respect to finite symmetry groups. Similarly, Steerable CNNs [21] and its extension to 3D voxels [75] considered discrete symmetries only.
Other works opted for designing filters as a linear combination of harmonic basis functions, leading to frequency domain filters [76,74]. Apart from suffering from the dense coverage of the group using group convolution, filters living in the frequency space are less interpretable and less expressive than their spatial counterparts, as the basis does not span the full space of spatial filters.

Achieving equivariance in 3D is possible by simply generalizing the ideas of the 2D domain to 3D by voxelizing 3D data. However, methods using dense grids [16,21] suffer from increased storage costs, eventually rendering the implementations infeasible. An extensive line of work generalizes the harmonic basis filters to $SO(3)$ by using e.g., a spherical harmonic basis instead of circular harmonics [19,25,22]. In addition to the same downsides as their 2D counterparts, these approaches have in common that they require their input to be projected to the unit sphere [33], which poses additional problems for unstructured point clouds. A related line of research are methods which define a regular structure on the sphere to propose equivariant convolution operators [44,13].

To learn a rotation equivariant representation of a 3D shape, one can either act on the input data or on the network. In the former case, one either presents augmented data to the network [55,49] or ensures rotation-invariance in the input [23,24,34]. In the latter case one can enforce equivariance in the bottleneck so as to achieve an invariant latent representation of the input [50,66,63]. Further, equivariant networks for discrete sets of views [27] and cross-domain views [26] have been proposed. Here, we aim for a different way of embedding equivariance in the network by means of an explicit latent rotation parametrization in addition to the invariant feature.

Vector field networks [47] followed by the 3D Tensor Field Networks (TFN) [66] are closest to our work. Based upon a geometric algebra framework, the authors did achieve localized filters that are equivariant to rotations, translations and permutations. Moreover, they are able to cover the continuous groups. However, TFN are designed for physics applications, are memory consuming and a typical implementation is neither likely to handle the datasets we consider nor can provide orientations in an explicit manner.

Capsule networks. The idea of capsule networks was first mentioned by Hinton et al. [30], before Sabour et al. [60] proposed the dynamic routing by agreement, which started the recent line of work investigating the topic. Since then, routing by agreement has been connected to several well-known concepts, e.g. the EM algorithm [59], clustering with KL divergence regularization [68] and equivariance [40]. They have been extended to autoencoders [38] and GANs [32]. Further, capsule networks have been applied for specific kinds of input data, e.g. graphs [78], 3D point clouds [83,64] or medical images [1].

3 Preliminaries and Technical Background

We now provide the necessary background required for the grasp of the equivariance of point clouds under the action of quaternions.

3.1 Equivariance

Definition 1 (Equivariant Map) For a $G$-space acting on $\mathcal{X}$, the map $\Phi : G \times \mathcal{X} \mapsto \mathcal{X}$ is said to be equivariant if its domain and co-domain are acted on by the same symmetry group [18,20]:

$$\Phi(g_1 \circ \mathbf{x}) = g_2 \circ \Phi(\mathbf{x}) \tag{1}$$

where $g_1 \in G$ and $g_2 \in G$. Equivalently $\Phi(T(g_1)\,\mathbf{x}) = T(g_2)\,\Phi(\mathbf{x})$, where $T(\cdot)$ is a linear representation of the group $G$. Note that $T(\cdot)$ does not have to commute. It suffices for $T(\cdot)$ to be a homomorphism: $T(g_1 \circ g_2) = T(g_1) \circ T(g_2)$. In this paper we use a stricter form of equivariance and consider $g_2 = g_1$.
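
As a small numerical illustration of Definition 1 in the strict case $g_2 = g_1$, the following sketch checks Eq. (1) for two toy maps under a rotation about the z-axis. The helper names (`rotate_z`, `centroid`, `coordinate_max`, `equivariance_gap`) and the sample points are hypothetical and not part of the method; the centroid is expected to commute with the rotation, while the coordinate-wise maximum generally does not.

```python
import math

def rotate_z(point, angle):
    """Group action g ∘ x: rotate a 3D point about the z-axis by `angle` radians."""
    x, y, z = point
    c, s = math.cos(angle), math.sin(angle)
    return (c * x - s * y, s * x + c * y, z)

def centroid(points):
    """A toy map Phi that commutes with rotations (it is linear in the points)."""
    n = len(points)
    return tuple(sum(p[k] for p in points) / n for k in range(3))

def coordinate_max(points):
    """A toy map Phi that is tied to the coordinate axes, hence not equivariant."""
    return tuple(max(p[k] for p in points) for k in range(3))

def equivariance_gap(phi, points, angle):
    """Largest coordinate difference between Phi(g ∘ x) and g ∘ Phi(x), cf. Eq. (1)."""
    lhs = phi([rotate_z(p, angle) for p in points])  # Phi(g ∘ x)
    rhs = rotate_z(phi(points), angle)               # g ∘ Phi(x)
    return max(abs(a - b) for a, b in zip(lhs, rhs))

# Illustrative (made-up) point set and rotation angle.
cloud = [(1.0, 0.0, 0.0), (0.0, 2.0, 0.5), (-1.0, 1.0, 3.0)]
centroid_gap = equivariance_gap(centroid, cloud, 0.7)   # numerically zero
max_gap = equivariance_gap(coordinate_max, cloud, 0.7)  # clearly non-zero
```

A gap close to machine precision indicates that the map satisfies Eq. (1) for the tested group element; it is a sanity check on one rotation, not a proof of equivariance.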

Definition 2 (Equivariant Network) An architecture or network is said to be equivariant if all of its layers are equivariant maps. Due to the transitivity of the equivariance, stacking up equivariant layers will result in globally equivariant networks, e.g., rotating the input will produce output vectors which are transformed by the same rotation [40,37].

3.2 The Quaternion Group $\mathbb{H}_1$

The choice of 4-vector quaternions as representation for $SO(3)$ has multiple motivations: (1) All 3-vector formulations suffer from infinitely many singularities as angle goes to 0, whereas quaternions avoid those, (2) 3-vectors also suffer from infinitely many redundancies (the norm can grow indefinitely). Quaternions have a single redundancy: $\mathbf{q} = -\mathbf{q}$ that is in practice easy to enforce [9], (3) Computing the actual 'manifold mean' on the Lie algebra requires iterative techniques with subsequent updates on the tangent space. Such iterations are computationally and numerically harmful for a differentiable GPU implementation.

Definition 3 (Quaternion) A quaternion $\mathbf{q}$ is an element of Hamilton algebra $\mathbb{H}_1$, extending the complex numbers with three imaginary units $\mathbf{i}$, $\mathbf{j}$, $\mathbf{k}$ in the form: $\mathbf{q} = q_1 \mathbf{1} + q_2 \mathbf{i} + q_3 \mathbf{j} + q_4 \mathbf{k} = (q_1, q_2, q_3, q_4)^T$, with $(q_1, q_2, q_3, q_4)^T \in \mathbb{R}^4$ and $\mathbf{i}^2 = \mathbf{j}^2 = \mathbf{k}^2 = \mathbf{i}\mathbf{j}\mathbf{k} = -1$. $q_1 \in \mathbb{R}$ denotes the scalar part and $\mathbf{v} = (q_2, q_3, q_4)^T \in \mathbb{R}^3$, the vector part. The conjugate $\bar{\mathbf{q}}$ of the quaternion $\mathbf{q}$ is given by $\bar{\mathbf{q}} := q_1 - q_2 \mathbf{i} - q_3 \mathbf{j} - q_4 \mathbf{k}$. A unit quaternion $\mathbf{q} \in \mathbb{H}_1$ with $1 \overset{!}{=} \|\mathbf{q}\| := \mathbf{q} \cdot \bar{\mathbf{q}}$ and $\mathbf{q}^{-1} = \bar{\mathbf{q}}$, gives a compact and numerically stable parametrization to represent orientation of objects on the unit sphere $S^3$, avoiding gimbal lock and singularities [15]. Identifying antipodal points $\mathbf{q}$ and $-\mathbf{q}$ with the same element, the unit quaternions form a double covering group of $SO(3)$.
$\mathbb{H}_1$ is closed under the non-commutative multiplication or the Hamilton product:

$$(\mathbf{p} \in \mathbb{H}_1) \circ (\mathbf{r} \in \mathbb{H}_1) = [\, p_1 r_1 - \mathbf{v}_p \cdot \mathbf{v}_r \,;\; p_1 \mathbf{v}_r + r_1 \mathbf{v}_p + \mathbf{v}_p \times \mathbf{v}_r \,]. \tag{2}$$

Definition 4 (Linear Representation of $\mathbb{H}_1$) We follow [12] and use the parallelizable nature of unit quaternions ($d \in \{1, 2, 4, 8\}$ where $d$ is the dimension of the ambient space) to define $T : \mathbb{H}_1 \mapsto \mathbb{R}^{4 \times 4}$ as:

$$T(\mathbf{q}) \triangleq \begin{bmatrix} q_1 & -q_2 & -q_3 & -q_4 \\ q_2 & q_1 & -q_4 & q_3 \\ q_3 & q_4 & q_1 & -q_2 \\ q_4 & -q_3 & q_2 & q_1 \end{bmatrix}.$$

To be concise we will use capital letters to refer to the matrix representation of quaternions, e.g. $\mathbf{Q} \equiv T(\mathbf{q})$, $\mathbf{G} \equiv T(\mathbf{g})$. Note that $T(\cdot)$, the injective homomorphism to the orthonormal matrix ring, by construction satisfies the condition in Dfn. 1 [65]: $\det(\mathbf{Q}) = 1$, $\mathbf{Q}^\top = \mathbf{Q}^{-1}$, $\|\mathbf{Q}\| = \|\mathbf{Q}_{i,:}\| = \|\mathbf{Q}_{:,i}\| = 1$ and $\mathbf{Q} - q_1 \mathbf{I}$ is skew symmetric: $\mathbf{Q} + \mathbf{Q}^\top = 2 q_1 \mathbf{I}$. It is easy to verify these properties. $T$ linearizes the Hamilton product or the group composition: $\mathbf{g} \circ \mathbf{q} \triangleq T(\mathbf{g})\,\mathbf{q} \triangleq \mathbf{G}\mathbf{q}$.

3.3 3D Point Clouds

Definition 5 (Point Cloud) We define a 3D surface to be a differentiable 2-manifold embedded in the ambient 3D Euclidean space: $\mathcal{M}^2 \in \mathbb{R}^3$ and a point cloud to be a discrete subset sampled on $\mathcal{M}^2$: $\mathbf{X} \in \{\mathbf{x}_i \in \mathcal{M}^2 \cap \mathbb{R}^3\}$.

Definition 6 (Local Geometry) For a smooth point cloud $\{\mathbf{x}_i\} \in \mathcal{M}^2 \subset \mathbb{R}^{N \times 3}$, a local reference frame (LRF) is defined as an ordered basis of the tangent space at $\mathbf{x}$, $T_{\mathbf{x}}\mathcal{M}$, consisting of orthonormal vectors: $\mathcal{L}(\mathbf{x}) = [\partial_1, \partial_2, \partial_3 \equiv \partial_1 \times \partial_2]$. Usually the first component is defined to be the surface normal $\partial_1 \triangleq \mathbf{n} \in S^2 : \|\mathbf{n}\| = 1$ and the second one is picked according to a heuristic.

Note that recent trends, e.g. as in Cohen et al. [17], acknowledge the ambiguity and either employ a gauge (tangent frame) equivariant design or propagate the determination of a certain direction until the last layer [54].
Here, we will assume that $\partial_2$ can be uniquely and repeatably computed, a reasonable assumption for the point sets we consider [52]. For the cases where this does not hold, we will rely on the robustness of the iterative routing procedures in our network. We will explain our method of choice in Sec. 6 and visualize LRFs of an airplane object in Fig. 1.

4 $SO(3)$-Equivariant Dynamic Routing

Disentangling orientation from representations requires guaranteed equivariances and invariances. Yet, the original capsule networks of Sabour et al. [60] cannot achieve equivariance to general groups. To this end, Lenssen et al. [40] proposed a dynamic routing procedure that guarantees equivariance and invariance under $SO(2)$ actions, by applying a manifold-mean and the geodesic distance as routing operators. We will extend this idea to the non-abelian $SO(3)$ and design capsule networks that sparsely operate on a set of LRFs computed via [53] on local neighborhoods of points. The $SO(3)$ elements are parameterized by quaternions similar to [82]. In the following, we begin by introducing our novel equivariant dynamic routing procedure, the main building block of our architecture. We show the connection to the well-known Weiszfeld algorithm, broadening the understanding of dynamic routing by embedding it into traditional computer vision methodology. Then, we present an example of how to stack those layers via a simple aggregation, resulting in an $SO(3)$-equivariant 3D capsule network that yields invariant representations (or activations) as well as equivariant orientations (latent capsules).
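
Since every operator in this section acts on unit quaternions, a minimal dependency-free sketch of the quaternion operations from Sec. 3.2 may help make the notation concrete. It is not the released implementation: the function names (`hamilton`, `conjugate`, `matrix_rep`, `matvec`, `axis_angle`, `rotate_point`) are hypothetical, quaternions are stored as tuples `(q1, q2, q3, q4)` with the scalar part first, and rotating a point via $\mathbf{q} \circ (0, \mathbf{x}) \circ \bar{\mathbf{q}}$ is the standard convention, assumed here.

```python
import math

def hamilton(p, r):
    """Hamilton product p ∘ r of Eq. (2): [p1 r1 - vp·vr ; p1 vr + r1 vp + vp × vr]."""
    p1, p2, p3, p4 = p
    r1, r2, r3, r4 = r
    return (
        p1 * r1 - p2 * r2 - p3 * r3 - p4 * r4,
        p1 * r2 + r1 * p2 + p3 * r4 - p4 * r3,
        p1 * r3 + r1 * p3 + p4 * r2 - p2 * r4,
        p1 * r4 + r1 * p4 + p2 * r3 - p3 * r2,
    )

def conjugate(q):
    """Conjugate of q; for unit quaternions this is also the inverse."""
    return (q[0], -q[1], -q[2], -q[3])

def matrix_rep(q):
    """T(q) of Dfn. 4: the 4x4 matrix that satisfies T(q) r = q ∘ r."""
    q1, q2, q3, q4 = q
    return [
        [q1, -q2, -q3, -q4],
        [q2, q1, -q4, q3],
        [q3, q4, q1, -q2],
        [q4, -q3, q2, q1],
    ]

def matvec(m, v):
    """Plain matrix-vector product on nested lists."""
    return tuple(sum(a * b for a, b in zip(row, v)) for row in m)

def axis_angle(axis, angle):
    """Unit quaternion for a rotation of `angle` radians about `axis`."""
    n = math.sqrt(sum(a * a for a in axis))
    s = math.sin(angle / 2.0) / n
    return (math.cos(angle / 2.0), axis[0] * s, axis[1] * s, axis[2] * s)

def rotate_point(q, x):
    """Rotate the 3D point x by the unit quaternion q via q ∘ (0, x) ∘ conj(q)."""
    _, a, b, c = hamilton(hamilton(q, (0.0, x[0], x[1], x[2])), conjugate(q))
    return (a, b, c)

# A quarter turn about z maps the x-axis onto the y-axis.
g = axis_angle((0.0, 0.0, 1.0), math.pi / 2.0)
rotated = rotate_point(g, (1.0, 0.0, 0.0))
```

The matrix form is what makes group composition a linear operation, $\mathbf{g} \circ \mathbf{q} = \mathbf{G}\mathbf{q}$, which the equivariance arguments of this section rely on.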

4.1 Equivariant Quaternion Mean

To construct equivariant layers on the group of rotations, we are required to define a left-equivariant averaging operator $\mathcal{A}$ that is invariant under permutations of the group elements, as well as a distance metric $\delta$ that remains unchanged under the action of the group [40]. For these, we make the following choices:

Definition 7 (Geodesic Distance) The Riemannian (geodesic) distance on the manifold of rotations leads to the following geodesic distance $\delta(\cdot) \equiv d_{\text{quat}}(\cdot)$:

$$d(\mathbf{q}_1, \mathbf{q}_2) \equiv d_{\text{quat}}(\mathbf{q}_1, \mathbf{q}_2) = 2 \cos^{-1}(|\langle \mathbf{q}_1, \mathbf{q}_2 \rangle|) \tag{3}$$

Definition 8 (Quaternion Mean $\mu(\cdot)$) For a set of $Q$ rotations $S = \{\mathbf{q}_i\}$ and associated weights $\mathbf{w} = \{w_i\}$, the weighted mean operator $\mathcal{A}(S, \mathbf{w}) : \mathbb{H}_1^n \times \mathbb{R}^n \mapsto \mathbb{H}_1^n$ is defined through the following maximization procedure [48]:

$$\bar{\mathbf{q}} = \arg\max_{\mathbf{q} \in S^3} \mathbf{q}^\top \mathbf{M} \mathbf{q} \tag{4}$$

where $\mathbf{M} \in \mathbb{R}^{4 \times 4}$ is defined as: $\mathbf{M} \triangleq \sum_{i=1}^{Q} w_i \mathbf{q}_i \mathbf{q}_i^\top$.

The average quaternion $\bar{\mathbf{q}}$ is the eigenvector of $\mathbf{M}$ corresponding to the maximum eigenvalue. This operation lends itself to both analytic [46] and automatic differentiation [39]. The following properties allow $\mathcal{A}(S, \mathbf{w})$ to be used to build an equivariant dynamic routing:

Theorem 1 Quaternions, the employed mean $\mathcal{A}(S, \mathbf{w})$ and geodesic distance $\delta(\cdot)$ enjoy the following properties:

1. $\mathcal{A}(\mathbf{g} \circ S, \mathbf{w})$ is left-equivariant: $\mathcal{A}(\mathbf{g} \circ S, \mathbf{w}) = \mathbf{g} \circ \mathcal{A}(S, \mathbf{w})$.
2. Operator $\mathcal{A}$ is invariant under permutations:
$$\mathcal{A}(\{\mathbf{q}_1, \dots, \mathbf{q}_Q\}, \mathbf{w}) = \mathcal{A}(\{\mathbf{q}_{\sigma(1)}, \dots, \mathbf{q}_{\sigma(Q)}\}, \mathbf{w}_\sigma). \tag{5}$$
3. The transformations $\mathbf{g} \in \mathbb{H}_1$ preserve the geodesic distance $\delta(\cdot)$ given in Dfn. 7.

Proof. The proofs are given in the supplementary material.

We also note that the above mean is closed form, differentiable and can be computed in a batch-wise fashion. We are now ready to construct the dynamic routing (DR) by agreement that is equivariant to $SO(3)$ actions, thanks to Thm. 1.

Algorithm 1: Quaternion Equivariant Dynamic Routing
input: Input points $\{\mathbf{x}_1, \dots, \mathbf{x}_K\} \in \mathbb{R}^{K \times 3}$, input capsules (LRFs) $\mathcal{Q} = \{\mathbf{q}_1, \dots, \mathbf{q}_L\} \in \mathbb{H}_1^{L}$, with $L = N_c \cdot K$, $N_c$ is the number of capsules per point, activations $\boldsymbol{\alpha} = (\alpha_1, \dots, \alpha_L)^T$, trainable transformations $\mathcal{T} = \{\mathbf{t}_{i,j}\}_{i,j} \in \mathbb{H}_1^{L \times M}$
output: Updated frames $\hat{\mathcal{Q}} = \{\hat{\mathbf{q}}_1, \dots, \hat{\mathbf{q}}_M\} \in \mathbb{H}_1^{M}$, updated activations $\hat{\boldsymbol{\alpha}} = (\hat{\alpha}_1, \dots, \hat{\alpha}_M)^T$
for all primary (input) capsules $i$ do
  for all latent (output) capsules $j$ do
    $\mathbf{v}_{i,j} \leftarrow \mathbf{q}_i \circ \mathbf{t}_{i,j}$ // compute votes
for all latent (output) capsules $j$ do
  $\hat{\mathbf{q}}_j \leftarrow \mathcal{A}(\{\mathbf{v}_{1,j} \dots \mathbf{v}_{K,j}\}, \boldsymbol{\alpha})$ // initialize output capsules
  for $k$ iterations do
    for all primary (input) capsules $i$ do
      $w_{i,j} \leftarrow \alpha_i \cdot \text{sigmoid}(-\delta(\hat{\mathbf{q}}_j, \mathbf{v}_{i,j}))$ // the current weight
    $\hat{\mathbf{q}}_j \leftarrow \mathcal{A}(\{\mathbf{v}_{1,j} \dots \mathbf{v}_{L,j}\}, \mathbf{w}_{:,j})$ // see Eq (4)
  $\hat{\alpha}_j \leftarrow \text{sigmoid}\big(-\frac{1}{K} \sum_{1}^{L} \delta(\hat{\mathbf{q}}_j, \mathbf{v}_{i,j})\big)$ // recompute activations

4.2 Equivariant Weiszfeld Dynamic Routing

Our routing procedure extends previous work [60,40] for quaternion valued input. The core idea is to route from the primary capsules that constitute the input LRF set to the latent capsules by an iterative clustering of votes $\mathbf{v}_{i,j}$. At each step, we assign the weighted group mean of votes to the respective output capsules. The weights $w \leftarrow \sigma(\mathbf{x}, \mathbf{y})$ are inversely proportional to the distance between the vote quaternions and the new quaternion (cluster center). See Alg. 1 for details. In the following, we analyze our variant of routing as an interesting case of the affine, Riemannian Weiszfeld algorithm [4,3].

Lemma 1. For $\sigma(\mathbf{x}, \mathbf{y}) = \delta(\mathbf{x}, \mathbf{y})^{q-2}$ the equivariant routing procedure given in Alg. 1 is a variant of the affine subspace Weiszfeld algorithm [4,3] that is a robust algorithm for computing the $L_q$ geometric median.

Proof (Proof Sketch). The proof follows from the definition of Weiszfeld iteration [3] and the mean and distance operators defined in Sec. 4.1. We first show that computing the weighted mean is equivalent to solving the normal equations in the iteratively reweighted least squares (IRLS) scheme [14]. Then, the inner-most loop corresponds to the IRLS or Weiszfeld iterations. We provide the detailed proof in supplementary material.

Fig. 2. Our quaternion equivariant capsule (QEC) layer for processing local patches: Our input is a 3D point set $\mathbf{X}$ on which we query local neighborhoods $\{\mathbf{x}_i\}$ with precomputed LRFs $\{\mathbf{q}_i\}$. Essentially, we learn the parameters of a fully connected network that continuously maps the canonicalized local point set to transformations $\mathbf{t}_i$, which are used to compute hypotheses (votes) from input capsules. By a special dynamic routing procedure that uses the activations determined in a previous layer, we arrive at latent capsules that are composed of a set of orientations $\hat{\mathbf{q}}_i$ and new activations $\hat{\alpha}_i$. Thanks to the decoupling of local reference frames, $\hat{\alpha}_i$ is invariant and orientations $\hat{\mathbf{q}}_i$ are equivariant to input rotations. All the operations and hence the entire QE-network are equivariant, achieving a guaranteed disentanglement of the rotation parameters. Hat symbol ($\hat{\mathbf{q}}$) refers to 'estimated'.

Note that, in practice one is quite free to choose the weighting function $\sigma(\cdot)$ as long as it is inversely proportional to the geodesic distance and concave [2].
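
The routing of Alg. 1 can be sketched for a single output capsule as follows. This is a dependency-free illustration under stated assumptions rather than the released implementation: the dominant eigenvector of $\mathbf{M}$ in Eq. (4) is found by power iteration (an assumption made to avoid a linear algebra library, valid for non-negative weights), the final activation averages the distances over all votes, and all names (`quaternion_mean`, `route`, `axis_angle`, the sample votes) are hypothetical.

```python
import math

def hamilton(p, r):
    """Hamilton product p ∘ r, quaternions stored as (q1, q2, q3, q4)."""
    p1, p2, p3, p4 = p
    r1, r2, r3, r4 = r
    return (p1 * r1 - p2 * r2 - p3 * r3 - p4 * r4,
            p1 * r2 + r1 * p2 + p3 * r4 - p4 * r3,
            p1 * r3 + r1 * p3 + p4 * r2 - p2 * r4,
            p1 * r4 + r1 * p4 + p2 * r3 - p3 * r2)

def axis_angle(axis, angle):
    """Unit quaternion for a rotation of `angle` radians about `axis`."""
    n = math.sqrt(sum(a * a for a in axis))
    s = math.sin(angle / 2.0) / n
    return (math.cos(angle / 2.0), axis[0] * s, axis[1] * s, axis[2] * s)

def geodesic(q1, q2):
    """Eq. (3): 2 arccos(|<q1, q2>|); the clamp guards against rounding."""
    dot = abs(sum(a * b for a, b in zip(q1, q2)))
    return 2.0 * math.acos(min(1.0, dot))

def quaternion_mean(quats, weights, power_steps=100):
    """Eq. (4): dominant eigenvector of M = sum_i w_i q_i q_i^T.

    Power iteration is an assumption of this sketch; it needs non-negative
    weights (M positive semi-definite) and a gap between the top eigenvalues.
    """
    M = [[sum(w * q[a] * q[b] for q, w in zip(quats, weights))
          for b in range(4)] for a in range(4)]
    # Start from the vote that carries the largest weight.
    v = quats[max(range(len(quats)), key=lambda i: weights[i])]
    for _ in range(power_steps):
        v = [sum(M[a][b] * v[b] for b in range(4)) for a in range(4)]
        norm = math.sqrt(sum(c * c for c in v))
        v = [c / norm for c in v]
    return tuple(v)

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def route(votes, activations, iterations=3):
    """Routing of Alg. 1 for one output capsule j, given its votes v_{i,j}."""
    q_hat = quaternion_mean(votes, activations)        # initialize output capsule
    for _ in range(iterations):
        weights = [a * sigmoid(-geodesic(q_hat, v))    # current inlier weights
                   for a, v in zip(activations, votes)]
        q_hat = quaternion_mean(votes, weights)        # reweighted mean, Eq. (4)
    mean_dist = sum(geodesic(q_hat, v) for v in votes) / len(votes)
    return q_hat, sigmoid(-mean_dist)                  # pose and activation

# Four consistent votes near a 0.5 rad rotation about z, plus one outlier.
votes = [axis_angle((0, 0, 1), a) for a in (0.50, 0.52, 0.48, 0.51)]
votes.append(axis_angle((1, 0, 0), 2.5))
alpha = [1.0] * len(votes)
q_hat, act = route(votes, alpha)

# Left-multiplying all votes by g should rotate the pose and keep the activation.
g = axis_angle((1, 2, 3), 1.1)
q_rot, act_rot = route([hamilton(g, v) for v in votes], alpha)
pose_gap = geodesic(hamilton(g, q_hat), q_rot)
```

In this toy setting the reweighting pulls the estimate away from the outlier and toward the consistent votes, and `pose_gap` stays near zero, which mirrors the properties stated in Thm. 1. Because $\mathbf{q}$ and $-\mathbf{q}$ encode the same rotation, poses are compared with the geodesic distance rather than component-wise.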

The original dynamic routing can also be formulated as a clustering procedure with a KL divergence regularization. This holistic view paves the way to better routing algorithms [68]. Our perspective is akin yet more geometric due to the group structure of the parameter space. Thanks to the connection to Weiszfeld algorithm, the convergence behavior of our dynamic routing can be directly analyzed within the theoretical framework presented by [3,4].

Theorem 2 Under mild assumptions provided in the appendix, the sequence of the DR-iterates generated by the inner-most loop almost surely converges to a critical point.

Proof (Proof Sketch). Proof, given in the appendix, is a direct consequence of Lemma 1 and directly exploits the connection to the Weiszfeld algorithm.

In summary, the provided theorems show that our dynamic routing by agreement is in fact a variant of robust IRLS rotation averaging on the predicted votes, where refined inlier scores for combinations of input/output capsules are used to route information from one layer to the next.

Algorithm 2: Quaternion Equivariant Capsule Module
input: Input points of one patch $\{\mathbf{x}_1, \dots, \mathbf{x}_K\} \in \mathbb{R}^{K \times 3}$, input capsules (LRFs) $\mathcal{Q} = \{\mathbf{q}_1, \dots, \mathbf{q}_L\} \in \mathbb{H}_1^{L}$, with $L = N_c \cdot K$, $N_c$ is the number of capsules per point, activations $\boldsymbol{\alpha} = (\alpha_1, \dots, \alpha_L)^T$
output: Updated frames $\hat{\mathcal{Q}} = \{\hat{\mathbf{q}}_1, \dots, \hat{\mathbf{q}}_M\} \in \mathbb{H}_1^{M}$, updated activations $\hat{\boldsymbol{\alpha}} = (\hat{\alpha}_1, \dots, \hat{\alpha}_M)^T$
for each input channel $n_c$ of all the primary capsules channels $N_c$ do
  $\boldsymbol{\mu}(n_c) \leftarrow \mathcal{A}(\mathcal{Q}(n_c))$ // Input quaternion average, see Eq (4)
  for each point $\mathbf{x}_i$ of this patch do
    $\mathbf{x}'_i \leftarrow \boldsymbol{\mu}(n_c)^{-1} \circ \mathbf{x}_i$ // Rotate to a canonical orientation
$\{\mathbf{x}'_i\} \in \mathbb{R}^{K \times N_c \times 3}$ // Points in multiple ($N_c$) canonical frames
for each point $\mathbf{x}'_i$ of this patch do
  $\mathbf{t} \leftarrow t(\mathbf{x}'_i)$ // Transform kernel, $t(\cdot) : \mathbb{R}^{N_c \times 3} \to \mathbb{R}^{N_c \times M \times 4}$
$\mathcal{T} \equiv \{\mathbf{t}_i\} \in \mathbb{H}_1^{K \times N_c^i \times M} \leftarrow \{\mathbf{t}\} \in \mathbb{H}_1^{L \times M}$
$(\hat{\mathcal{Q}}, \hat{\boldsymbol{\alpha}}) \leftarrow \text{DynamicRouting}(\mathbf{X}, \mathcal{Q}, \boldsymbol{\alpha}, \mathcal{T})$ // See Alg. 1

5 Equivariant Capsule Network Architecture

In the following, we describe how we leverage the novel dynamic routing algorithm to build a capsule network for point cloud processing that is equivariant under $SO(3)$ actions on the input. The essential ingredient of our architecture, the quaternion equivariant capsule (QEC) module that implements a capsule layer with dynamic routing, is described in Sec. 5.1, before using it as building block in the full architecture, as described in Sec. 5.2.

5.1 QEC Module

The main module of our architecture, the QEC module, is outlined in Fig. 2. We also provide the corresponding pseudocode in Alg. 2.

Input. The input to the module is a local patch of points with coordinates $\mathbf{x}_i \subset \mathbb{R}^{K \times 3}$, rotations (LRFs) attached to these points, parametrized as quaternions $\mathbf{q}_i \subset \mathbb{H}_1^{K \times N_c}$ and activations $\alpha_i \subset \mathbb{R}^{K \times N_c}$. We also use $\mathbf{q}_i$ to denote the input capsules. $N_c$ is the number of input capsule channels per point and it is equal to the number of output capsules ($M$) from the last layer.

Trainable transformations. Recalling the original capsule networks of Sabour et al. [60], the trainable transformations $\mathbf{t}$, which are applied to the input rotations to compute the votes, lie in a grid kernel in the 2D image domain.
Therefore, the procedure can learn to produce well-aligned votes if and only if the learned patterns in $\mathbf{t}$ match those in input capsule sets (agreement on evidence of object existence). Since our input points in the local receptive field lie in continuous $\mathbb{R}^3$, training a discrete set of pose transformations $\mathbf{t}_{i,j}$ based on discrete local coordinates is not possible. Instead, we use a similar approach as Lenssen et al. [40] and employ a continuous kernel $t(\cdot) : \mathbb{R}^{N_c \times 3} \to \mathbb{R}^{M \times N_c \times 4}$ that is defined on the continuous $\mathbb{R}^{N_c \times 3}$, instead of only a discrete set of positions. The network is shared over all points to compute the transformations $\mathbf{t}_{i,j} = (t(\mathbf{x}'_1), \dots, t(\mathbf{x}'_K))_{i,j} \subset \mathbb{R}^{K \times M \times N_c \times 4}$, which are used to calculate the votes for dynamic routing with $\mathbf{v}_{i,j} = \mathbf{q}_i \circ \mathbf{t}_{i,j}$. The network $t(\cdot)$ consists of fully-connected layers that regress the transformations, similar to common operators for continuous convolutions [61,70,28], just with quaternion output. The kernel is able to learn pose patterns in the 3D space, which align the resulting votes if certain pose sets are present. Note that $t(\cdot)$ predicts quaternions by unit-normalizing the regressed output: $\mathbf{t}_{i,j} \subset \mathbb{H}_1^{K \times M \times N_c}$. Although Riemannian layers [7] or spherical predictions [43] can improve the performance, the simple strategy works reasonably for our case.

In order for the kernel to be invariant, it needs to be aligned using an equivariant initial orientation candidate [40]. Given points $\mathbf{x}_i$ and rotations $\mathbf{q}_i$, we compute the mean $\boldsymbol{\mu}_i$ in a channel-wise manner like that of the initial candidates: $\boldsymbol{\mu}_i \subset \mathbb{H}_1^{N_c}$. These candidates are used to bring the kernels in canonical orientations by inversely rotating the input points: $\mathbf{x}'_i = (\boldsymbol{\mu}_i^{-1} \circ \mathbf{x}_i) \subset \mathbb{R}^{K \times N_c \times 3}$.

Computing the output. After computing the votes, we utilize the input activation $\alpha_i$ as initialization weights and iteratively refine the output capsule rotations (robust rotation estimation on votes) $\hat{\mathbf{q}}_i$ and activations $\hat{\alpha}_i$ (final inlier scores) by our Weiszfeld routing by agreement as shown in Alg. 1.

5.2 Network Architecture

For processing point clouds, we use multiple QEC modules in a hierarchical architecture as shown in Fig. 3. In the first layer, the input primary capsules are represented by LRFs computed with FLARE algorithm [53]. Therefore, the number of input capsule channels $N_c$ in the first layer is equal to 1 and activations are uniform. The output of a former layer is propagated to the input of the latter, creating the hierarchy.

Fig. 3. Our entire capsule-network architecture. We hierarchically send all the local patches to our QEC-module as shown in Fig. 2. At each level the points are pooled in order to increase the receptive field, gradually reducing the LRFs into a single capsule per class. We use classification and orientation estimation (in the siamese case) as supervision cues to train the transform-kernels $t(\cdot)$.

In order to gradually increase the receptive field, we stack QEC modules creating a deep hierarchy, where each layer reduces the number of points and increases the receptive field. In our experiments, we use a two level architecture, which receives $N = 64$ patches as input. We call the centers of these patches pooling centers and compute them via a uniform farthest point sampling as in [11]. Pooling centers serve as the positions of output capsules of the current layer. Each of those centers is linked to its immediate vicinity, leading to a $K = 9$-star local connectivity that serves as input to the first QEC module to compute rotations and activations of $64 \times 64 \times 4$ intermediate capsules. The second module connects those intermediate capsules to the output capsules, whose number corresponds to the number of classes. Specifically, for layer 1, we use $K = 9$, $N_c^l = 1$, $M^l = 64$ and for layer 2, $K = 64$, $N_c^l = 64$, $M^l = C = 40$. This way, the last QEC module receives only one input patch and pools all capsules into a single point with an estimated LRF. For further details, we refer to our source code, which we will make available online before publication and provide in the supplemental materials.

Table 1. Classification accuracy on ModelNet40 dataset [77] for different methods as well as ours. We also report the number of parameters optimized for each method. X/Y means that we train with X and test with Y.

| | PN | PN++ | DGCNN | KDTreeNet | Point2Seq | Sph.CNNs | PRIN | PPF | Ours (Var.) | Ours |
|---|---|---|---|---|---|---|---|---|---|---|
| NR/NR | 88.45 | 89.82 | 92.90 | 86.20 | 92.60 | - | 80.13 | 70.16 | 85.27 | 74.43 |
| NR/AR | 12.47 | 21.35 | 29.74 | 8.49 | 10.53 | 43.92 | 68.85 | 70.16 | 11.75 | 74.07 |
| #Params | 3.5M | 1.5M | 2.8M | 3.6M | 1.8M | 0.5M | 1.5M | 3.5M | 0.4M | 0.4M |

6 Experimental Evaluations

Implementation details. We implement our network in PyTorch and use the ADAM optimizer [35] with a learning rate of 0.001. Our point-transformation mapping network (transform-kernel) is implemented by two FC-layers composed of 64 hidden units. We set the initial activation of the input LRF to 1.0. In each layer, we use 3 iterations of DR. For classification we use the spread loss [59] and the rotation loss is identical to $\delta(\cdot)$.

The first axis of the LRF is the surface normal computed by local plane fits [31].
We compute the second axis, $\partial_2$, by FLARE [53], which uses the normalized projection of the point with the largest distance within the periphery of the support onto the tangent plane of the center: $\partial_2 = \frac{p_{\max} - p}{\| p_{\max} - p \|}$. Using other choices such as SHOT [67] or GFrames [51] is possible. We found FLARE to be sufficient for our experiments. Prior to all operations, we flip all the LRF quaternions such that they lie on the northern hemisphere: $\{ q_i \in \mathbb{S}^3 : q_i^w > 0 \}$.

3D shape classification. We use the ModelNet40 dataset of [77,57] to assess our classification performance, where each shape is composed of 10K points randomly sampled from the mesh surfaces of each shape [55,57]. We use the official split with 9,843 shapes for training and 2,468 for testing. We assign the LRFs to a subset of the uniformly sampled points, $N = 512$ [11]. During training, we do not augment the dataset with random rotations. All the shapes are trained with a single orientation (well-aligned). We call this trained with NR.

Table 2. Relative angular error (RAE) of rotation estimation in different categories of ModelNet10. The right side of the table (Table through Bathtub) denotes the objects with rotational symmetry, which we include for completeness. PCA-S refers to running PCA only on a resampled instance, while PCA-SR applies both rotations and resampling.

| Method | Avg. | No Sym | Chair | Bed | Sofa | Toilet | Monitor | Table | Desk | Dresser | NS | Bathtub |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| Mean LRF | 0.41 | 0.35 | 0.32 | 0.36 | 0.34 | 0.41 | 0.34 | 0.45 | 0.60 | 0.50 | 0.46 | 0.32 |
| PCA-S | 0.40 | 0.42 | 0.60 | 0.53 | 0.46 | 0.32 | 0.12 | 0.47 | 0.23 | 0.33 | 0.43 | 0.55 |
| PCA-SR | 0.67 | 0.67 | 0.69 | 0.70 | 0.67 | 0.68 | 0.61 | 0.67 | 0.67 | 0.67 | 0.66 | 0.70 |
| PointNetLK [5] | 0.37 | 0.38 | 0.43 | 0.31 | 0.40 | 0.40 | 0.31 | 0.40 | 0.33 | 0.39 | 0.38 | 0.34 |
| IT-Net [80] | 0.27 | 0.19 | 0.10 | 0.22 | 0.17 | 0.20 | 0.28 | 0.31 | 0.41 | 0.44 | 0.40 | 0.39 |
| Ours | 0.27 | 0.17 | 0.11 | 0.20 | 0.16 | 0.18 | 0.19 | 0.43 | 0.40 | 0.48 | 0.33 | 0.31 |
| Ours (siamese) | 0.20 | 0.09 | 0.08 | 0.10 | 0.08 | 0.11 | 0.08 | 0.40 | 0.35 | 0.34 | 0.32 | 0.30 |
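To make the LRF construction from the implementation details concrete, here is a minimal sketch in plain Python. It is not the authors' code: the function names (`second_axis`, `local_reference_frame`, `flip_to_northern_hemisphere`) are hypothetical, the periphery selection of FLARE is simplified to "the farthest neighbour in the support", the third axis is assumed to be the cross product of the first two, and quaternions are assumed to be stored as `(w, x, y, z)`.

```python
import math

def normalize(v):
    """Scale a 3-vector to unit length."""
    n = math.sqrt(sum(c * c for c in v))
    return tuple(c / n for c in v)

def cross(a, b):
    """Right-handed cross product of two 3-vectors."""
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

def second_axis(center, normal, neighbors):
    """Project the farthest neighbour onto the tangent plane at `center`.

    Simplification (assumption): the real FLARE restricts the search to the
    periphery of the support; here we take the farthest point overall.
    """
    p_max = max(neighbors, key=lambda x: math.dist(x, center))
    d = tuple(a - b for a, b in zip(p_max, center))
    along_normal = sum(a * n for a, n in zip(d, normal))
    in_plane = tuple(a - along_normal * n for a, n in zip(d, normal))
    # Undefined (division by zero) if p_max lies exactly along the normal.
    return normalize(in_plane)

def local_reference_frame(center, normal, neighbors):
    """Return three orthonormal axes: normal, tangent direction, and their cross product."""
    a1 = normalize(normal)
    a2 = second_axis(center, a1, neighbors)
    a3 = cross(a1, a2)
    return a1, a2, a3

def flip_to_northern_hemisphere(q):
    """Map q and -q (the same rotation) to the representative with w >= 0."""
    return q if q[0] >= 0 else tuple(-c for c in q)
```

Converting the three axes into a quaternion is left out on purpose; any standard rotation-matrix-to-quaternion routine can be applied before the hemisphere flip.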
During testing, we randomly generate multiple arbitrary $SO(3)$ rotations for each shape and evaluate the average performance over all the rotations. This is called test with AR. This protocol is similar to that of [5] and is used both for our algorithms and for the baselines. Our results are shown in Tab. 1 along with those of PointNet (PN) [55], PointNet++ (PN++) [57], DGCNN [72], KD-treeNet [41], Point2Seq [45], Spherical CNNs [25], PRIN [79] and the theoretically invariant PPF-FoldNet (PPF) [23]. We also present a version of our algorithm (Var) that avoids the canonicalization within the QE-network. This is a non-equivariant network that we still train without data augmentation or orientation supervision. While this version gets comparable results to the state of the art for the NR/NR case, it cannot handle random $SO(3)$ variations (AR). Note that PPF uses the point-pair-feature [10] encoding and hence creates invariant input representations. For the NR/AR scenario, our equivariant version outperforms all the other methods, including equivariant spherical CNNs [25], by a significant gap of at least 5%, even when [25] exploits the 3D mesh. The object rotational symmetries in this dataset are responsible for a significant portion of the errors we make, and we provide further details in the supplementary material. It is worth mentioning that we also trained TFNs [66] for that task, but their memory demand made it infeasible to scale to this application.

Computational aspects. As shown in Tab. 1 for ModelNet40, our network has 0.047M parameters. It incurs a computational cost in the order of $O(MKL)$. The details are given in the supplementary material.

Rotation estimation in 3D point clouds. Our network can estimate both the absolute and relative 3D object rotations without pose supervision.
To evaluate this desired property, we use the well-classified shapes of the ModelNet10 dataset, a sub-dataset of ModelNet40 [77]. This time, we use the official ModelNet10 split with 3,991 shapes for training and 908 shapes for testing. During testing, we generate multiple instances per shape by transforming the instance with five arbitrary $SO(3)$ rotations. As we are also affected by the sampling of the point cloud, we resample the mesh five times and generate different pooling graphs across all the instances of the same shape.

Fig. 4. Shape alignment on the monitor (left) and toilet (right) objects via our siamese equivariant capsule architecture. The shapes are assigned to the maximally activated class. The corresponding pose capsule provides the rotation estimate.

Our QE-architecture can estimate the pose in two ways: 1) canonical: by directly using the output capsule with the highest activation, 2) siamese: by a siamese architecture that computes the relative quaternion between the capsules that are maximally activated, as shown in Fig. 4. Both modes of operation are free of data augmentation, and we give further schematics of the latter in our appendix. It is worth mentioning that, unlike regular pose estimation algorithms which use the same shape in both training and testing, our network never sees the test shapes during training. This is also known as category-level pose estimation [69]. Our results against the baselines, including a naive averaging of the LRFs (Mean LRF) and principal axis alignment (PCA), are reported in Tab. 2 as the relative angular error (RAE). We further include results of PointNetLK [5] and IT-Net [80], two state-of-the-art 3D networks that iteratively align two given point sets.
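The siamese mode and the error measure can be sketched in a few lines of plain Python. This is a hedged illustration rather than the released implementation: the helper names are hypothetical, quaternions are assumed to be `(w, x, y, z)` tuples, the relative rotation is taken under the assumed convention `q_rel ∘ q_x = q_y`, and the geodesic distance is assumed to be the standard $d(q_1, q_2) = 2 \arccos |\langle q_1, q_2 \rangle|$, so that dividing by $\pi$ yields an error in $[0, 1]$.

```python
import math

def q_mul(a, b):
    """Hamilton product of two quaternions given as (w, x, y, z)."""
    aw, ax, ay, az = a
    bw, bx, by, bz = b
    return (aw * bw - ax * bx - ay * by - az * bz,
            aw * bx + ax * bw + ay * bz - az * by,
            aw * by - ax * bz + ay * bw + az * bx,
            aw * bz + ax * by - ay * bx + az * bw)

def q_conj(q):
    """Conjugate, which equals the inverse for unit quaternions."""
    return (q[0], -q[1], -q[2], -q[3])

def argmax(values):
    """Index of the largest entry (first one on ties)."""
    return max(range(len(values)), key=values.__getitem__)

def relative_rotation(caps_x, act_x, caps_y, act_y):
    """Relative quaternion between the maximally activated capsules of X and Y."""
    qx = caps_x[argmax(act_x)]
    qy = caps_y[argmax(act_y)]
    return q_mul(qy, q_conj(qx))  # assumed convention: q_rel * qx == qy

def geodesic_distance(q1, q2):
    """Rotation angle between two unit quaternions; |.| handles q ~ -q."""
    dot = abs(sum(a * b for a, b in zip(q1, q2)))
    return 2.0 * math.acos(min(1.0, dot))  # clamp guards against rounding

def relative_angular_error(q_pred, q_gt):
    """RAE = d(q_pred, q_gt) / pi."""
    return geodesic_distance(q_pred, q_gt) / math.pi
```

Because the capsules already carry rotations, the siamese step reduces to one quaternion product; no iterative alignment is involved in this sketch.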
Both PointNetLK and IT-Net are similar in nature to the iterative closest point (ICP) algorithm [8] but 1) do not require an initialization (e.g. the first iteration estimates the pose), and 2) learn data-driven updates. Methods that use mesh inputs, such as Spherical CNNs [25], cannot be included here as the random sampling of the same surface would not affect them. We also avoid methods that are just invariant to rotations (and hence cannot estimate the pose), such as Tensorfield Networks [66]. Note that IT-Net [80] and PointNetLK [5] need to train for many epochs (e.g. 500) with random $SO(3)$ rotation augmentation in order to obtain models with full coverage of $SO(3)$, whereas we train only for ∼100 epochs. Finally, the recent geometric capsule networks [64] remain similar to PCA, with an RAE of 0.42 on No Sym when evaluated under identical settings. We include more details about the baselines in the appendix.

The relative angular error (RAE) between the ground truth and the prediction is computed as $d(q_1, q_2)/\pi$. Note that resampling and random rotations render the job of all methods difficult. However, both our canonical and siamese versions, which try to find a canonical and a relative alignment respectively, are better than the baselines. As pose estimation of objects with rotational symmetry is a challenging task due to inherent ambiguities, we also report results on the non-symmetric subset (No Sym).

Table 3. Ablation study on point density.

| LRF Input | LRF-10K | LRF-10K | LRF-10K | LRF-10K | LRF-2K | LRF-1K |
|---|---|---|---|---|---|---|
| Dropout | 50% | 66% | 75% | 100% | 100% | 100% |
| Classification Accuracy | 77.8 | 83.3 | 83.4 | 87.8 | 85.46 | 79.74 |
| Angular Error | 0.34 | 0.27 | 0.25 | 0.09 | 0.10 | 0.12 |

Robustness against point and LRF resampling. Density changes in the local neighborhoods of the shape are an important cause of error for our network.
Hence, we ablate by applying random resampling (patch-wise dropout) to the objects in the ModelNet10 dataset and repeating the classification and pose estimation as described above. While we use all the classes for the classification accuracy, we only consider the well-classified non-symmetric (No Sym) objects for ablating on the pose estimation. The first part (LRF-10K) of Tab. 3 shows our findings against gradual increases of the number of patches. Here, we sample 2K LRFs from the 10K LRFs computed on an input point set of cardinality 10K. 100% dropout corresponds to 2K points in all columns. In the second ablation, we reduce the number of points on which we compute the LRFs to 2K and 1K, respectively. As we can see from the table, our network is robust towards changes in the LRFs as well as in the density of the points.

7 Conclusion and Discussion

We have presented a new framework for achieving permutation invariant and $SO(3)$ equivariant representations on 3D point clouds. Proposing a variant of the capsule networks, we operate on a sparse set of rotations specified by the input LRFs, thereby circumventing the effort to cover the entire $SO(3)$. Our network natively consumes a compact representation of the group of 3D rotations: quaternions. We have theoretically shown its equivariance and established convergence results for our Weiszfeld dynamic routing by making connections to the literature of robust optimization. Our network by construction disentangles the object existence, which is used as global features in classification. It is among the few having an explicit group-valued latent space and thus naturally estimates the orientation of the input shape, even without a supervision signal.

Limitations. In its current form, our performance is severely affected by the shape symmetries.
The length of the activation vector depends on the number of classes, and for a sufficiently descriptive latent vector we need a significant number of classes. On the other hand, this allows us to perform with merit on problems where the number of classes is large. The computation of LRFs is still sensitive to point density changes and resampling. LRFs themselves can also be ambiguous and sometimes non-unique.

Future work. Inspired by [17] and [54], our future work will involve exploring the Lie algebra for equivariances, establishing invariance to the tangent directions, applying our network in the broader context of 6DoF object detection from point sets, and looking for equivariances among point resampling.

References

1. Afshar, P., Mohammadi, A., Plataniotis, K.N.: Brain tumor type classification via capsule networks. In: 2018 25th IEEE International Conference on Image Processing (ICIP) (2018)
2. Aftab, K., Hartley, R.: Convergence of iteratively re-weighted least squares to robust m-estimators. In: Winter Conference on Applications of Computer Vision. IEEE (2015)
3. Aftab, K., Hartley, R., Trumpf, J.: Generalized weiszfeld algorithms for lq optimization. IEEE transactions on pattern analysis and machine intelligence 37(4) (2014)
4. Aftab, K., Hartley, R., Trumpf, J.: lq closest-point to affine subspaces using the generalized weiszfeld algorithm. International Journal of Computer Vision (2015)
5. Aoki, Y., Goforth, H., Srivatsan, R.A., Lucey, S.: Pointnetlk: Robust & efficient point cloud registration using pointnet. In: Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition. pp. 7163–7172 (2019)
6. Bao, E., Song, L.: Equivariant neural networks and equivarification. arXiv preprint arXiv:1906.07172 (2019)
7. Becigneul, G., Ganea, O.E.: Riemannian adaptive optimization methods. In: International Conference on Learning Representations (2019)
8.
Besl, P.J., McKay, N.D.: Method for registration of 3-d shapes. In: Sensor fusion IV: control paradigms and data structures. vol. 1611, pp. 586–606. International Society for Optics and Photonics (1992)
9. Birdal, T., Arbel, M., Simsekli, U., Guibas, L.J.: Synchronizing probability measures on rotations via optimal transport. In: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition. pp. 1569–1579 (2020)
10. Birdal, T., Ilic, S.: Point pair features based object detection and pose estimation revisited. In: 2015 International Conference on 3D Vision. pp. 527–535. IEEE (2015)
11. Birdal, T., Ilic, S.: A point sampling algorithm for 3d matching of irregular geometries. In: IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS). IEEE (2017)
12. Birdal, T., Simsekli, U., Eken, M.O., Ilic, S.: Bayesian pose graph optimization via bingham distributions and tempered geodesic mcmc. In: Advances in Neural Information Processing Systems. pp. 308–319 (2018)
13. Boomsma, W., Frellsen, J.: Spherical convolutions and their application in molecular modelling. In: Advances in Neural Information Processing Systems 30. pp. 3433–3443 (2017)
14. Burrus, C.S.: Iterative reweighted least squares. OpenStax CNX. Available online: http://cnx.org/contents/92b90377-2b34-49e4-b26f-7fe572db78a1 12 (2012)
15. Busam, B., Birdal, T., Navab, N.: Camera pose filtering with local regression geodesics on the riemannian manifold of dual quaternions. In: IEEE International Conference on Computer Vision Workshop (ICCVW) (October 2017)
16. Chakraborty, R., Banerjee, M., Vemuri, B.C.: H-cnns: Convolutional neural networks for riemannian homogeneous spaces. arXiv preprint arXiv:1805.05487 (2018)
17. Cohen, T., Weiler, M., Kicanaoglu, B., Welling, M.: Gauge equivariant convolutional networks and the icosahedral CNN. In: Proceedings of the 36th International Conference on Machine Learning.
pp. 1321–1330 (2019)
18. Cohen, T., Welling, M.: Group equivariant convolutional networks. In: International conference on machine learning. pp. 2990–2999 (2016)
19. Cohen, T.S., Geiger, M., Köhler, J., Welling, M.: Spherical cnns. In: 6th International Conference on Learning Representations, (ICLR) (2018)
20. Cohen, T.S., Geiger, M., Weiler, M.: A general theory of equivariant cnns on homogeneous spaces. In: Advances in Neural Information Processing Systems. pp. 9145–9156 (2019)
21. Cohen, T.S., Welling, M.: Steerable cnns. International Conference on Learning Representations (ICLR) (2017)
22. Cruz-Mota, J., Bogdanova, I., Paquier, B., Bierlaire, M., Thiran, J.P.: Scale invariant feature transform on the sphere: Theory and applications. International Journal of Computer Vision 98(2), 217–241 (Jun 2012)
23. Deng, H., Birdal, T., Ilic, S.: Ppf-foldnet: Unsupervised learning of rotation invariant 3d local descriptors. In: European Conference on Computer Vision (ECCV) (2018)
24. Deng, H., Birdal, T., Ilic, S.: Ppfnet: Global context aware local features for robust 3d point matching. In: Conference on Computer Vision and Pattern Recognition (2018)
25. Esteves, C., Allen-Blanchette, C., Makadia, A., Daniilidis, K.: Learning so(3) equivariant representations with spherical cnns. In: Proceedings of the European Conference on Computer Vision (ECCV). pp. 52–68 (2018)
26. Esteves, C., Sud, A., Luo, Z., Daniilidis, K., Makadia, A.: Cross-domain 3d equivariant image embeddings. In: International Conference on Machine Learning (ICML) (2019)
27. Esteves, C., Xu, Y., Allen-Blanchette, C., Daniilidis, K.: Equivariant multi-view networks. In: Proceedings of the IEEE International Conference on Computer Vision. pp. 1568–1577 (2019)
28. Fey, M., Eric Lenssen, J., Weichert, F., Müller, H.: Splinecnn: Fast geometric deep learning with continuous b-spline kernels.
In: The IEEE Conference on Computer Vision and Pattern Recognition (CVPR) (June 2018)
29. Giles, C.L., Maxwell, T.: Learning, invariance, and generalization in high-order neural networks. Applied optics 26(23), 4972–4978 (1987)
30. Hinton, G.E., Krizhevsky, A., Wang, S.D.: Transforming auto-encoders. In: International Conference on Artificial Neural Networks. pp. 44–51. Springer (2011)
31. Hoppe, H., DeRose, T., Duchamp, T., McDonald, J., Stuetzle, W.: Surface reconstruction from unorganized points, vol. 26.2. ACM (1992)
32. Jaiswal, A., AbdAlmageed, W., Wu, Y., Natarajan, P.: Capsulegan: Generative adversarial capsule network. In: Computer Vision – ECCV 2018 Workshops. pp. 526–535. Springer International Publishing (2019)
33. Jiang, C.M., Huang, J., Kashinath, K., Prabhat, Marcus, P., Niessner, M.: Spherical CNNs on unstructured grids. In: International Conference on Learning Representations (2019)
34. Khoury, M., Zhou, Q.Y., Koltun, V.: Learning compact geometric features. In: Proceedings of the IEEE International Conference on Computer Vision. pp. 153–161 (2017)
35. Kingma, D.P., Ba, J.: Adam: A method for stochastic optimization. arXiv preprint arXiv:1412.6980 (2014)
36. Kondor, R., Lin, Z., Trivedi, S.: Clebsch–gordan nets: a fully fourier space spherical convolutional neural network. In: Advances in Neural Information Processing Systems (2018)
37. Kondor, R., Trivedi, S.: On the generalization of equivariance and convolution in neural networks to the action of compact groups. In: International Conference on Machine Learning. pp. 2747–2755 (2018)
38. Kosiorek, A., Sabour, S., Teh, Y.W., Hinton, G.E.: Stacked capsule autoencoders. In: Advances in Neural Information Processing Systems. pp. 15512–15522 (2019)
39. Laue, S., Mitterreiter, M., Giesen, J.: Computing higher order derivatives of matrix and tensor expressions. In: Advances in Neural Information Processing Systems (2018)
40.
Lenssen, J.E., Fey, M., Libuschewski, P.: Group equivariant capsule networks. In: Advances in Neural Information Processing Systems. pp. 8844–8853 (2018)
41. Li, J., Chen, B.M., Hee Lee, G.: So-net: Self-organizing network for point cloud analysis. In: Proceedings of the IEEE conference on computer vision and pattern recognition (2018)
42. Li, Y., Bu, R., Sun, M., Wu, W., Di, X., Chen, B.: Pointcnn: Convolution on x-transformed points. In: Advances in Neural Information Processing Systems (2018)
43. Liao, S., Gavves, E., Snoek, C.G.: Spherical regression: Learning viewpoints, surface normals and 3d rotations on n-spheres. In: Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition. pp. 9759–9767 (2019)
44. Liu, M., Yao, F., Choi, C., Ayan, S., Ramani, K.: Deep learning 3d shapes using alt-az anisotropic 2-sphere convolution. In: International Conference on Learning Representations (ICLR) (2019)
45. Liu, X., Han, Z., Liu, Y.S., Zwicker, M.: Point2sequence: Learning the shape representation of 3d point clouds with an attention-based sequence to sequence network. In: Proceedings of the AAAI Conference on Artificial Intelligence. vol. 33, pp. 8778–8785 (2019)
46. Magnus, J.R.: On differentiating eigenvalues and eigenvectors. Econometric Theory 1(2) (1985)
47. Marcos, D., Volpi, M., Komodakis, N., Tuia, D.: Rotation equivariant vector field networks. In: The IEEE International Conference on Computer Vision (ICCV) (Oct 2017)
48. Markley, F.L., Cheng, Y., Crassidis, J.L., Oshman, Y.: Averaging quaternions. Journal of Guidance, Control, and Dynamics 30(4), 1193–1197 (2007)
49. Maturana, D., Scherer, S.: Voxnet: A 3d convolutional neural network for real-time object recognition. In: Intelligent Robots and Systems (IROS). IEEE (2015)
50. Mehr, E., Lieutier, A., Sanchez Bermudez, F., Guitteny, V., Thome, N., Cord, M.: Manifold learning in quotient spaces.
In: Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition. pp. 9165–9174 (2018)
51. Melzi, S., Spezialetti, R., Tombari, F., Bronstein, M.M., Stefano, L.D., Rodola, E.: Gframes: Gradient-based local reference frame for 3d shape matching. In: The IEEE Conference on Computer Vision and Pattern Recognition (CVPR) (June 2019)
52. Petrelli, A., Di Stefano, L.: On the repeatability of the local reference frame for partial shape matching. In: 2011 International Conference on Computer Vision. IEEE (2011)
53. Petrelli, A., Di Stefano, L.: A repeatable and efficient canonical reference for surface matching. In: 2012 Second International Conference on 3D Imaging, Modeling, Processing, Visualization & Transmission. pp. 403–410. IEEE (2012)
54. Poulenard, A., Ovsjanikov, M.: Multi-directional geodesic neural networks via equivariant convolution. In: SIGGRAPH Asia 2018 Technical Papers. p. 236. ACM (2018)
55. Qi, C.R., Su, H., Mo, K., Guibas, L.J.: Pointnet: Deep learning on point sets for 3d classification and segmentation. In: Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition. pp. 652–660 (2017)
56. Qi, C.R., Su, H., Nießner, M., Dai, A., Yan, M., Guibas, L.J.: Volumetric and multi-view cnns for object classification on 3d data. In: Proceedings of the IEEE conference on computer vision and pattern recognition. pp. 5648–5656 (2016)
57. Qi, C.R., Yi, L., Su, H., Guibas, L.J.: Pointnet++: Deep hierarchical feature learning on point sets in a metric space. In: Advances in neural information processing systems. pp. 5099–5108 (2017)
58. Rezatofighi, S.H., Milan, A., Abbasnejad, E., Dick, A., Reid, I., et al.: Deepsetnet: Predicting sets with deep neural networks. In: 2017 IEEE International Conference on Computer Vision (ICCV). pp. 5257–5266. IEEE (2017)
59. Sabour, S., Frosst, N., Hinton, G.: Matrix capsules with em routing.
In: 6th International Conference on Learning Representations, ICLR (2018)
60. Sabour, S., Frosst, N., Hinton, G.E.: Dynamic routing between capsules. In: Advances in neural information processing systems. pp. 3856–3866 (2017)
61. Schütt, K., Kindermans, P.J., Sauceda Felix, H.E., Chmiela, S., Tkatchenko, A., Müller, K.R.: Schnet: A continuous-filter convolutional neural network for modeling quantum interactions. In: Advances in Neural Information Processing Systems (2017)
62. Shen, Y., Feng, C., Yang, Y., Tian, D.: Mining point cloud local structures by kernel correlation and graph pooling. In: Proceedings of the IEEE conference on computer vision and pattern recognition. pp. 4548–4557 (2018)
63. Spezialetti, R., Salti, S., Stefano, L.D.: Learning an effective equivariant 3d descriptor without supervision. In: Proceedings of the IEEE International Conference on Computer Vision. pp. 6401–6410 (2019)
64. Srivastava, N., Goh, H., Salakhutdinov, R.: Geometric capsule autoencoders for 3d point clouds. arXiv preprint arXiv:1912.03310 (2019)
65. Steenrod, N.E.: The topology of fibre bundles, vol. 14. Princeton University Press (1951)
66. Thomas, N., Smidt, T., Kearnes, S., Yang, L., Li, L., Kohlhoff, K., Riley, P.: Tensor field networks: Rotation-and translation-equivariant neural networks for 3d point clouds. arXiv preprint arXiv:1802.08219 (2018)
67. Tombari, F., Salti, S., Di Stefano, L.: Unique signatures of histograms for local surface description. In: European conference on computer vision. pp. 356–369. Springer (2010)
68. Wang, D., Liu, Q.: An optimization view on dynamic routing between capsules (2018), https://openreview.net/forum?id=HJjtFYJDf
69. Wang, H., Sridhar, S., Huang, J., Valentin, J., Song, S., Guibas, L.J.: Normalized object coordinate space for category-level 6d object pose and size estimation. In: Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition. pp.
2642–2651 (2019)
70. Wang, S., Suo, S., Ma, W.C., Pokrovsky, A., Urtasun, R.: Deep parametric continuous convolutional neural networks. In: The IEEE Conference on Computer Vision and Pattern Recognition (CVPR) (June 2018)
71. Wang, Y., Sun, Y., Liu, Z., Sarma, S.E., Bronstein, M.M., Solomon, J.M.: Dynamic graph cnn for learning on point clouds. ACM Transactions on Graphics (TOG) (2019)
72. Wang, Y., Sun, Y., Liu, Z., Sarma, S.E., Bronstein, M.M., Solomon, J.M.: Dynamic graph cnn for learning on point clouds. ACM Transactions on Graphics (TOG) 38(5), 1–12 (2019)
73. Weiler, M., Geiger, M., Welling, M., Boomsma, W., Cohen, T.: 3d steerable cnns: Learning rotationally equivariant features in volumetric data. In: Advances in Neural Information Processing Systems. pp. 10381–10392 (2018)
74. Weiler, M., Hamprecht, F.A., Storath, M.: Learning steerable filters for rotation equivariant cnns. In: The IEEE Conference on Computer Vision and Pattern Recognition (CVPR) (June 2018)
75. Worrall, D., Brostow, G.: Cubenet: Equivariance to 3d rotation and translation. In: The European Conference on Computer Vision (ECCV) (September 2018)
76. Worrall, D.E., Garbin, S.J., Turmukhambetov, D., Brostow, G.J.: Harmonic networks: Deep translation and rotation equivariance. In: The IEEE Conference on Computer Vision and Pattern Recognition (CVPR) (July 2017)
77. Wu, Z., Song, S., Khosla, A., Yu, F., Zhang, L., Tang, X., Xiao, J.: 3d shapenets: A deep representation for volumetric shapes. In: Proceedings of the IEEE conference on computer vision and pattern recognition. pp. 1912–1920 (2015)
78. Xinyi, Z., Chen, L.: Capsule graph neural network. In: International Conference on Learning Representations (ICLR) (2019), openreview.net/forum?id=Byl8BnRcYm
79. You, Y., Lou, Y., Liu, Q., Tai, Y.W., Ma, L., Lu, C., Wang, W.: Pointwise rotation-invariant network with adaptive sampling and 3d spherical voxel convolution.
In: AAAI. pp. 12717–12724 (2020)
80. Yuan, W., Held, D., Mertz, C., Hebert, M.: Iterative transformer network for 3d point cloud. arXiv preprint arXiv:1811.11209 (2018)
81. Zaheer, M., Kottur, S., Ravanbakhsh, S., Poczos, B., Salakhutdinov, R.R., Smola, A.J.: Deep sets. In: Advances in Neural Information Processing Systems (2017)
82. Zhang, X., Qin, S., Xu, Y., Xu, H.: Quaternion product units for deep learning on 3d rotation groups. In: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition. pp. 7304–7313 (2020)
83. Zhao, Y., Birdal, T., Deng, H., Tombari, F.: 3d point capsule networks. In: Conference on Computer Vision and Pattern Recognition (CVPR) (2019)

A Proof of Proposition 1

Before presenting the proof we recall the three individual statements contained in Prop. 1:

1. $A(g \circ S, w)$ is left-equivariant: $A(g \circ S, w) = g \circ A(S, w)$.
2. Operator $A$ is invariant under permutations: $A(\{q_{\sigma(1)}, \dots, q_{\sigma(Q)}\}, w_\sigma) = A(\{q_1, \dots, q_Q\}, w)$.
3. The transformations $g \in \mathbb{H}_1$ preserve the geodesic distance $\delta(\cdot)$.

Proof. We will prove the propositions in order.

1. We start from the cost defined in Eq. 4 of the main paper, given in (6), and transform each element by replacing $q_i$ with $(g \circ q_i)$, which yields (7):

$$
\begin{align}
q^\top M q &= q^\top \Big( \sum_{i=1}^{Q} w_i\, q_i q_i^\top \Big) q \tag{6} \\
&\longrightarrow\; q^\top \Big( \sum_{i=1}^{Q} w_i\, (g \circ q_i)(g \circ q_i)^\top \Big) q \tag{7} \\
&= q^\top \Big( \sum_{i=1}^{Q} w_i\, G q_i q_i^\top G^\top \Big) q \tag{8} \\
&= q^\top \big( G M_1 G^\top + \dots + G M_Q G^\top \big) q = q^\top G \big( M_1 G^\top + \dots + M_Q G^\top \big) q \tag{9} \\
&= q^\top G \big( M_1 + \dots + M_Q \big) G^\top q \tag{10} \\
&= q^\top G M G^\top q \tag{11} \\
&= p^\top M p, \tag{12}
\end{align}
$$

where $M_i = w_i q_i q_i^\top$ and $p = G^\top q$. From the orthogonality of $G$ it follows that $p = G^{-1} q \implies g \circ p = q$ and hence $g \circ A(S, w) = A(g \circ S, w)$.

2. The proof follows trivially from the permutation invariance of the symmetric summation operator over the outer products in Eq (9).

3. It is sufficient to show that $|q_1^\top q_2| = |(g \circ q_1)^\top (g \circ q_2)|$ for any $g \in \mathbb{H}_1$:

$$
\begin{align}
|(g \circ q_1)^\top (g \circ q_2)| &= |q_1^\top G^\top G q_2| \tag{13} \\
&= |q_1^\top I q_2| \tag{14} \\
&= |q_1^\top q_2|, \tag{15}
\end{align}
$$

where $g \circ q \equiv G q$. The result is a direct consequence of the orthonormality of $G$.

B Proof of Lemma 1

We will begin by recalling some preliminary definitions and results that help us construct the connection between the dynamic routing and the Weiszfeld algorithm.

Definition 9 (Affine Subspace) A $d$-dimensional affine subspace of $\mathbb{R}^N$ is obtained by a translation of a $d$-dimensional linear subspace $V \subset \mathbb{R}^N$ such that the origin is included in $S$:

$$
S = \Big\{ \sum_{i=1}^{d+1} \alpha_i x_i \;\Big|\; \sum_{i=1}^{d+1} \alpha_i = 1 \Big\}. \tag{16}
$$

Simplest choices for $S$ involve points, lines and planes of the Euclidean space.

Definition 10 (Orthogonal Projection onto an Affine Subspace) An orthogonal projection of a point $x \in \mathbb{R}^N$ onto an affine subspace explained by the pair $(A, c)$ is defined as:

$$
\Pi_i(x) \triangleq \mathrm{proj}_S(x) = c + A(x - c). \tag{17}
$$

$c$ denotes the translation to make the origin inclusive and $A$ is a projection matrix typically defined via the orthonormal bases of the subspace.

Definition 11 (Distance to Affine Subspaces) The distance from a given point $x$ to a set of affine subspaces $\{S_1, S_2, \dots, S_k\}$ can be written as [4]:

$$
C(x) = \sum_{i=1}^{k} d(x, S_i) = \sum_{i=1}^{k} \| x - \mathrm{proj}_{S_i}(x) \|^2. \tag{18}
$$

Lemma 2. Given that all the antipodal counterparts are mapped to the northern hemisphere, we will now think of the unit quaternion or versor as the unit normal of a four dimensional hyperplane $h$, passing through the origin:

$$
h_i(x) = q_i^\top x + q_d := 0. \tag{19}
$$

$q_d$ is an added term to compensate for the shift. When $q_d = 0$ the origin is incident to the hyperplane.
With this perspective, quaternion $q_i$ forms an affine subspace with $d = 4$, for which the projection operator takes the form:

$$
\mathrm{proj}_{S_i}(p) = (I - q_i q_i^\top)\, p \tag{20}
$$

Proof. We consider Eq (20) for the case where $c = 0$ and $A = (I - q q^\top)$. The former follows from the fact that our subspaces by construction pass through the origin. Thus, we only need to show that the matrix $A = I - q q^\top$ is an orthogonal projection matrix onto the affine subspace spanned by $q$. To this end, it is sufficient to validate that $A$ is symmetric and idempotent: $A^\top A = AA = A^2 = A$. Note that by construction $q q^\top$ is a symmetric matrix, and hence so is $A$. Using this property and the unit norm of the quaternion, we arrive at the proof:

$$
\begin{align}
A^\top A &= (I - q q^\top)^\top (I - q q^\top) \tag{21} \\
&= (I - q q^\top)(I - q q^\top) \tag{22} \\
&= I - 2 q q^\top + q q^\top q q^\top \tag{23} \\
&= I - 2 q q^\top + q q^\top \tag{24} \\
&= I - q q^\top \triangleq A \tag{25}
\end{align}
$$

It is easy to verify that the projections are orthogonal to the quaternion that defines the subspace by showing $\mathrm{proj}_S(q)^\top q = 0$:

$$
q^\top \mathrm{proj}_S(q) = q^\top A q = q^\top (I - q q^\top) q = q^\top (q - q q^\top q) = q^\top (q - q) = 0. \tag{26}
$$

Also note that this choice corresponds to $\mathrm{tr}(q q^\top) = \sum_{i=1}^{d+1} \alpha_i = 1$.

Lemma 3. The quaternion mean we suggest to use in the main paper [48] is equivalent to the Euclidean Weiszfeld mean on the affine quaternion subspaces.

Proof. We now recall and summarize the $L_q$-Weiszfeld Algorithm on affine subspaces [4], which minimizes a $q$-norm variant of the cost defined in Eq (18):

$$
C_q(x) = \sum_{i=1}^{k} d(x, S_i) = \sum_{i=1}^{k} \| x - \mathrm{proj}_{S_i}(x) \|^q. \tag{27}
$$

Defining $M_i = I - A_i$, Alg. 3 summarizes the iterative procedure.

Algorithm 3: $L_q$ Weiszfeld Algorithm on Affine Subspaces [4].
1 input: An initial guess x 0 that do es not lie any of the subspaces { S i } , Pro jection op erators Π i , the norm parameter q 2 x t ← x 0 3 while not c onver ge d do 4 Compute the weigh ts w t = { w t i } : w t i = k M i ( x t − c i ) k q − 2 ∀ i = 1 . . . k (28) 5 Solv e: x t +1 = arg min x ∈ R N k X i =1 w t i k M i ( x − c i ) k 2 (29) Note that when q = 2, the algorithm reduces to the computation of a non- w eighted mean ( w i = 1 ∀ i ), and a closed form solution exists for Eq (29) and is QE-Net works 23 giv en by the normal equations: x = k X i =1 w i M i − 1 k X i =1 w i M i c i (30) F or the case of our quaternionic subspaces c = 0 and w e seek the solution that satisfies: k X i =1 M i x = 1 k k X i =1 M i x = 0 . (31) It is w ell kno wn that the solution to this equation under the constrain t k x k = 1 lies in n ullspace of M = 1 k k P i =1 M i and can be obtained by taking the singular v ec- tor of M that corresponds to the largest singular v alue. Since M i is idemp otent, the same result can also b e obtained through the eigendecomp osition: q ? = arg max q ∈S 3 qMq (32) whic h gives us the unw eigh ted Quaternion mean [48]. C Pro of of Theorem 1 Once the Lemma 1 is prov en, we only need to apply the direct conv ergence results from the literature. Consider a set of p oints Y = { y 1 . . . y K } where K > 2 and y i ∈ H 1 . Due to the compactness, w e can sp eak of a ball B ( o , ρ ) encapsulating all y i . W e also define the D = { x ∈ H 1 | C q ( x ) < C q ( o ) } , the region where the loss decreases. W e first state the assumptions that permit our theoretical result. These as- sumptions are required b y the w orks that establish the con vergence of such W eiszfeld algorithms [2,3] : H1. y 1 . . . y K should not lie on a single geo desic of the quaternion manifold. H2. D is b ounded and compact. The top ological structure of S O (3) imp oses a b ounded con vexit y radius of ρ < π / 2. H3. The minimizer in Eq (29) is contin uous. H4. 
The weighting function $\sigma(\cdot)$ is concave and differentiable.

H5. The initial quaternion (in our network chosen randomly) does not belong to any of the subspaces.

Note that H5 is not a strict requirement, as there are multiple ways to circumvent it (the simplest being a re-initialization). Under these assumptions, the sequence produced by Eq (29) will converge to a critical point unless $\mathbf{x}^t = \mathbf{y}_i$ for any $t$ and $i$ [3]. For $q = 1$, this critical point is on one of the subspaces specified in Eq (19) and thus is a geometric median. □

Fig. 5. Our Siamese architecture used in the estimation of relative poses. We use a shared network to process two distinct point clouds $(\mathbf{X}, \mathbf{Y})$ to arrive at the latent representations $(\mathbf{C}_X, \boldsymbol{\alpha}_X)$ and $(\mathbf{C}_Y, \boldsymbol{\alpha}_Y)$ respectively. We then look for the highest activated capsules in both point sets and compute the rotation from the corresponding capsules. Thanks to the rotations disentangled into capsules, this final step simplifies to a relative quaternion calculation.

Note that due to assumption H2, we cannot converge from an arbitrary starting point. For randomly initialized networks this is indeed a problem, and the result does not guarantee practical convergence. Yet, in our experiments we have not observed any issue with the convergence of our dynamic routing. As our result is one of the few related to the analysis of dynamic routing (DR), we still find this to be an important first step.

For different choices of $q$ with $1 \le q \le 2$, the weights take different forms. In fact, this IRLS type of algorithm is shown to converge for a larger class of weighting choices as long as the aforementioned conditions are met.
That is why in practice we use a simple sigmoid function.

D Further Discussions

On convergence, runtime and complexity. Note that while the convergence basin is known, to the best of our knowledge, a convergence rate for a Weiszfeld algorithm in affine subspaces is not established. From the literature of robust minimization via Riemannian gradient descent (this is essentially the corresponding particle optimizer), we conjecture that such a rate depends upon the choice of the convex regime (in this case $1 \le q \le 2$) and is at best linear, though we did not prove this conjecture. In practice we run the Weiszfeld iteration only 3 times, similar to the original dynamic routing. This is at least sufficient to converge to a point good enough for the network to explain the data at hand.

The QEC module summarized in Alg. 2 of the main paper can be dissected into three main steps: (i) canonicalization of the local oriented point set, (ii) the $t$-kernel and (iii) dynamic routing. Overall, the total computational complexity reads $O(L + K C_{MLP} + C_{DR})$, where $C_{MLP}$ and $C_{DR}$ are the computational costs of the MLP and the DR respectively:

$$\begin{aligned}
C_{DR} &= LM + M\big(K + k(2L) + L\big) = M\big(K + 2(k+1)L\big)\\
C_{MLP} &= 64N_c + 4MN_c^2.
\end{aligned} \tag{33}$$

Note that Eq (33) depicts the complexity of a single QEC module. In our architecture we use a stack of those, each of which causes an added increase in the complexity proportional to the number of points downsampled.

Fig. 6. (a) Confusion matrix on ModelNet10 for classification. (b) Distribution of initial poses per class.

Our weighted quaternion average relies upon a differentiable SVD.
While not increasing the theoretical computational complexity, when done naively, this operation can cause a significant increase in runtime. Hence, we compute the SVD using CUDA kernels in a batch-wise manner. This batch-wise SVD makes it possible to average a large number of quaternions with high efficiency. Note that we omit the computational aspects of LRF calculation, as we consider it to be an input to our system and different LRFs exhibit different costs.

We have further conducted a runtime analysis in the 3D Shape Classification experiment on an Nvidia GeForce RTX 2080 Ti with the network configuration mentioned in Sec. 5.2 of the main paper. During training, each batch (where batch size $b = 8$) takes 0.226s and 1939M of GPU memory. During inference, processing each instance takes 0.036s and consumes 1107M of GPU memory.

Note that the use of LRFs helps us to restrict the rotation group to certain elements, and thus we can use networks with significantly fewer parameters (as low as 0.44M) compared to others, as shown in Tab. 1 of the main paper. The number of parameters in our network depends upon the number of classes, e.g. for ModelNet10 we have 0.047M parameters.

Quaternion ambiguity. Quaternions of the northern and southern hemispheres represent the same exact rotation, hence one of them is redundant. By mapping one hemisphere to the other, we sacrifice the closedness of the manifold. This could slightly distort the behavior of the linearization operator around the equator. However, the rest of the operations, such as geodesic distances, respect such antipodality, as we consider the quaternionic manifold and not the sphere. As far as the subset of operations we develop and the nature of local reference frames are concerned, we did not find this transformation to cause serious shortcomings.
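The antipodal symmetry discussed above can be checked numerically on the eigendecomposition form of the quaternion mean in Eq (32). The following is a minimal NumPy sketch, not the batched CUDA implementation used in the paper: the function name `quaternion_mean`, the $(w, x, y, z)$ component order and the final sign convention are assumptions made for illustration. Because each quaternion enters only through its outer product $\mathbf{q}\mathbf{q}^\top$, negating any input leaves the accumulated matrix, and hence the mean, unchanged.

```python
import numpy as np

def quaternion_mean(quats, weights=None):
    """Weighted mean of unit quaternions (hypothetical helper).

    Returns the eigenvector of sum_i w_i q_i q_i^T with the largest
    eigenvalue. Component order (w, x, y, z) is an assumption.
    """
    Q = np.asarray(quats, dtype=float)
    Q = Q / np.linalg.norm(Q, axis=1, keepdims=True)  # enforce unit norm
    if weights is None:
        w = np.ones(len(Q))
    else:
        w = np.asarray(weights, dtype=float)
    # 4x4 symmetric accumulator; q and -q give the same outer product.
    M = (Q * w[:, None]).T @ Q
    # eigh returns eigenvalues in ascending order for symmetric matrices.
    _, eigvecs = np.linalg.eigh(M)
    mean = eigvecs[:, -1]
    # Pick the representative with non-negative scalar part
    # (an illustrative hemisphere convention, not taken from the paper).
    if mean[0] < 0:
        mean = -mean
    return mean

a = 0.1
qs = [[1.0, 0.0, 0.0, 0.0],
      [np.cos(a), np.sin(a), 0.0, 0.0],
      [np.cos(a), 0.0, np.sin(a), 0.0]]
# Negate the second quaternion: same rotation, opposite hemisphere.
qs_flipped = [qs[0], [-v for v in qs[1]], qs[2]]
m_original = quaternion_mean(qs)
m_flipped = quaternion_mean(qs_flipped)
```

Here `m_original` and `m_flipped` agree up to numerical precision, which is the sense in which this averaging step does not depend on the hemisphere chosen for each input.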
Fig. 7. Additional intermediate results on car (first row) and chair (second row) objects. Panels: (a) input point cloud, (b) initial LRFs, (c) LRFs prior to QE-Network-1, (d) multi-channel LRFs prior to QE-Network-2. This figure supplements Fig. 1(a) of the main paper.

Performance on different shapes with same orientation. The NR/NR scenario in Tab. 1 of the main paper involves classification on different shapes within a category without rotation, e.g. chairs with different shapes. In addition, we now provide in Fig. 6(b) an additional insight into the pose distribution for all canonicalized objects within a class. To do so, we rotate the horizontal standard basis vector $\mathbf{e}_x = [1, 0, 0]$ using the predicted quaternion (the most activated output capsule) and plot the resulting point on a unit sphere, as shown in Fig. 6(b). A qualitative observation reveals that for all five non-symmetric classes, the poses of all the instances within a class form a cluster. This roughly holds across all classes and indicates that the relative pose information is consistent within the classes. On the other hand, objects with symmetries form multiple clusters.

E Our Siamese Architecture

For estimation of the relative pose with supervision, we benefit from a Siamese variation of our network. In this case, latent capsule representations of two point sets $\mathbf{X}$ and $\mathbf{Y}$ jointly contribute to the pose regression, as shown in Fig. 5. We show additional results from the computation of local reference frames and the multi-channel capsules deduced from our network in Fig. 7.

F Additional Details on Evaluations

Details on the evaluation protocol. For the ModelNet40 dataset used in Tab. 1, we stick to the official split with 9,843 shapes for training and 2,468 different shapes for testing. For rotation estimation in Tab.
2, we again used the official ModelNet10 dataset split with 3,991 shapes for training and 908 shapes for testing. 3D point clouds (10K points) are randomly sampled from the mesh surfaces of each shape [55,57]. The objects in the training and testing datasets are different, but they are from the same categories so that they can be oriented meaningfully. During training, we did not augment the dataset with random rotations. All the shapes are trained with a single orientation (well-aligned). We call this trained with NR. During testing, we randomly generate multiple arbitrary $SO(3)$ rotations for each shape and evaluate the average performance over all the rotations. This is called test with AR. This protocol is used for both our algorithms and the baselines.

Fig. 8. Additional pairwise shape alignment on more categories in the ModelNet10 dataset. We do not perform any ICP, and the transformations that align the two point clouds are direct results of the forward pass of our Siamese network.

Confusion of classification in ModelNet. To provide additional insight into how our activation features perform, we now report the confusion matrix in the task of classification on all the objects of ModelNet10. Unique to our algorithm, the classification and rotation estimation reinforce one another. As seen from Fig. 6(a) on the right, the first five categories, which exhibit less rotational symmetry, have higher classification accuracy than their rotationally symmetric counterparts.

Distribution of errors reported in Tab. 2. We now provide more details on the errors attained by our algorithm as well as the state of the art. To this end, we report in Fig. 9 the histogram of errors that fall within quantized ranges of orientation errors. It is noticeable that our Siamese architecture behaves best in terms of estimating the object's rotation.
For completeness, we also included the results of the variants presented in our ablation studies: Ours-2kLRF and Ours-1kLRF. They evaluate the model on re-calculated LRFs in order to show the robustness to various point densities. We have also modified IT-Net and PointNetLK to predict only rotation, because the original works predict both rotations and translations. Finally, note here that we do not use data augmentation for training our networks (see AR), while for both PointNetLK and IT-Net we do use augmentation.

| | Ours | Ours-sia | Ours-2kLRF | Ours-1kLRF | IT-net | PointNetLK | Mean-LRF | PCA |
|---|---|---|---|---|---|---|---|---|
| < 15° | 23.18% | 58.16% | 53.64% | 41.36% | 49.68% | 45.40% | 16.24% | 29.80% |
| < 30° | 49.53% | 79.12% | 76.88% | 66.40% | 68.40% | 49.28% | 33.84% | 32.00% |
| < 60° | 69.87% | 87.48% | 86.68% | 78.76% | 79.48% | 55.84% | 57.64% | 33.80% |

Fig. 9. Cumulative error histograms of rotation estimation on ModelNet10. Each row (< θ°) of this extended table shows the percentage of shapes that have rotation error less than θ. The colors of the bars correspond to the rows they reside in. The more the errors are contained in the first bins (light blue), the better. Conversely, the more the errors are clustered toward 60°, the worse the performance of the method.
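Cumulative percentages of the kind listed in Fig. 9 can be computed from per-shape rotation errors along the following lines. This is a minimal sketch under stated assumptions: the error is taken to be the geodesic angle between predicted and ground-truth unit quaternions (this section does not spell out the exact metric), and the helper names `rotation_error_deg` and `cumulative_accuracy` are hypothetical. The absolute value of the inner product makes the error insensitive to the sign of either quaternion.

```python
import numpy as np

def rotation_error_deg(q_pred, q_gt):
    """Angle in degrees of the relative rotation between unit quaternions.

    Assumed metric: 2 * arccos(|<q_pred, q_gt>|), which treats q and -q
    as the same rotation. Accepts arrays of shape (4,) or (n, 4).
    """
    q_pred = np.asarray(q_pred, dtype=float)
    q_gt = np.asarray(q_gt, dtype=float)
    q_pred = q_pred / np.linalg.norm(q_pred, axis=-1, keepdims=True)
    q_gt = q_gt / np.linalg.norm(q_gt, axis=-1, keepdims=True)
    dot = np.abs(np.sum(q_pred * q_gt, axis=-1))
    dot = np.clip(dot, 0.0, 1.0)  # guard against rounding above 1
    return np.degrees(2.0 * np.arccos(dot))

def cumulative_accuracy(errors_deg, thresholds=(15.0, 30.0, 60.0)):
    """Percentage of shapes whose error is strictly below each threshold."""
    errors = np.asarray(errors_deg, dtype=float)
    return {t: 100.0 * float(np.mean(errors < t)) for t in thresholds}

# Toy usage with made-up errors (illustrative values, not paper results).
toy_errors = [10.0, 20.0, 40.0, 100.0]
toy_table = cumulative_accuracy(toy_errors)
```

Each entry of `toy_table` plays the role of one cell in a column of the table: the share of test shapes whose error falls below the given angular threshold.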
