Learning Non-Parametric Invariances from Data with Permanent Random Connectomes

One of the fundamental problems in supervised classification and in machine learning in general, is the modelling of non-parametric invariances that exist in data. Most prior art has focused on enforcing priors in the form of invariances to parametri…

Authors: Dipan K. Pal, Akshay Chawla, Marios Savvides

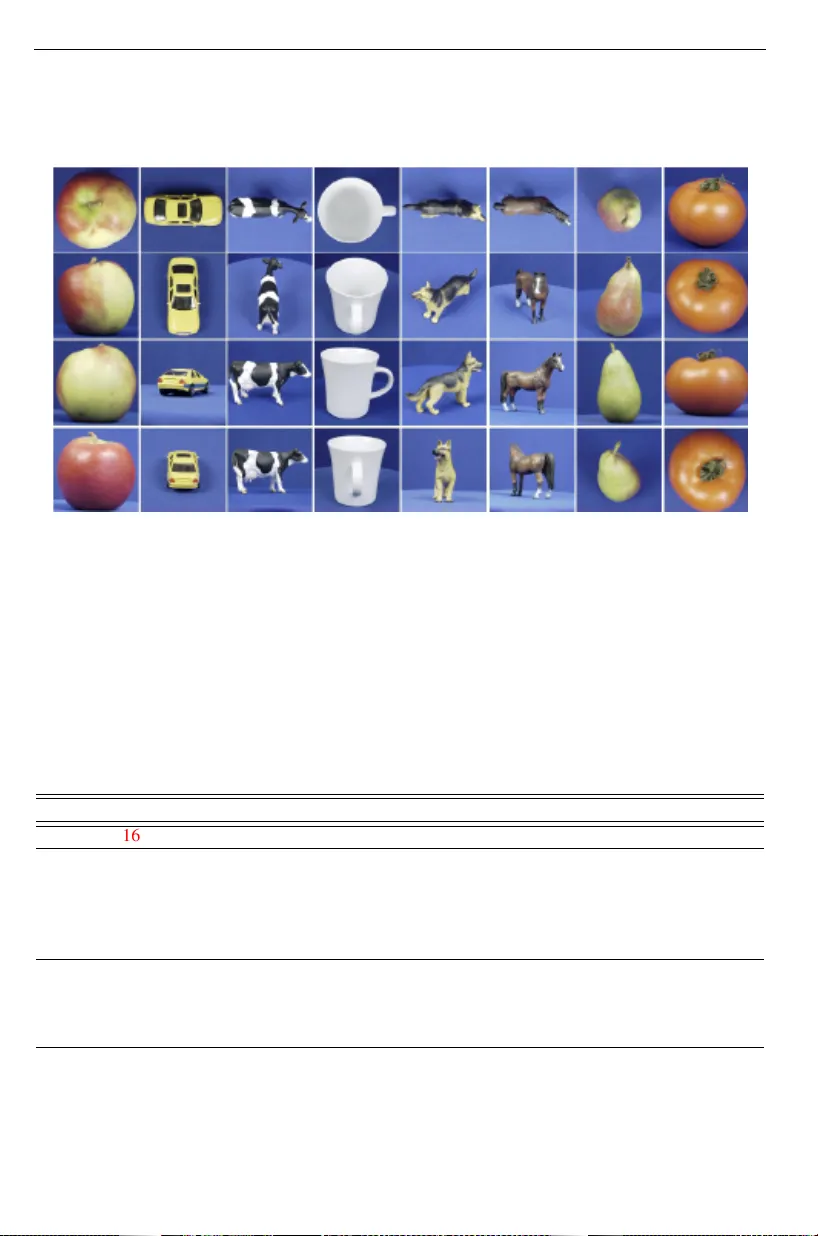

PERMANENT RANDOM CONNECTOME NETWORKS

Learning Non-Parametric Invariances from Data with Permanent Random Connectomes

Dipan K. Pal (dipanp@andrew.cmu.edu), Akshay Chawla (akshaych@andrew.cmu.edu), Marios Savvides (marioss@andrew.cmu.edu)
Dept. Electrical and Computer Engg., Carnegie Mellon University, Pittsburgh, PA, USA

Abstract

Learning non-parametric invariances directly from data remains an important open problem. In this paper, we introduce a new architectural layer for convolutional networks which is capable of learning general invariances from data itself. This layer can learn invariance to non-parametric transformations and, interestingly, motivates and incorporates permanent random connectomes, thereby being called Permanent Random Connectome Non-Parametric Transformation Networks (PRC-NPTN). PRC-NPTN networks are initialized with random connections (not just weights) which are a small subset of the connections in a fully connected convolution layer. Importantly, these connections in PRC-NPTNs once initialized remain permanent throughout training and testing. Permanent random connectomes make these architectures loosely more biologically plausible than many other mainstream network architectures which require highly ordered structures. We motivate randomly initialized connections as a simple method to learn invariance from data itself while invoking invariance towards multiple nuisance transformations simultaneously. We find that these randomly initialized permanent connections have positive effects on generalization, outperform much larger ConvNet baselines and the recently proposed Non-Parametric Transformation Network (NPTN) on benchmarks such as augmented MNIST, ETH-80 and CIFAR10, that enforce learning invariances from the data itself.

1 Introduction

The Problem of Invoking Invariances.
Learning invariances to nuisance transformations in data has emerged as a core problem in machine learning [1, 4, 9, 11, 15]. Moving towards real-world data of different modalities, it is a daunting task to theoretically model all nuisance transformations. Towards this goal, methods which learn non-parametric invariances from the data itself without any change in architecture will be critical. However, before delving into methods which learn such invariances, it is important to study methods which incorporate known invariances in data. An early method to incorporate the translation prior was the Convolutional Neural Network (ConvNet) [17]. Over the years, there have been efforts investigating what other transformations in data, such as rotation and scale, result in useful hand-crafted priors [4, 5, 8, 14, 19, 22, 31]. It is important to note, however, that these methods were ultimately limited to hand-crafted invariances assumed to be useful for the task at hand.

© 2020. The copyright of this document resides with its authors. It may be distributed unchanged freely in print or electronic forms.

[Figure 1 panels. Left: Conv 3x3 (in, in*|G|), groups=|G|, followed by Permanent Random Channel Shuffling (fixed indices), Channel Max Pooling, and Channel Average Pooling (out). Center: Input Activation, Intermediate Activation Bank, Channel Max Pooling, Output Activation. Right: Invariance in Deep Networks, split into Parametric Invariances (ConvNets, G-CNN, FRPC, ScatNet, DREN, Warped CNN, SiCNN, Steerable CNN) and Non-Parametric Invariances (Deep SymNets, NPTN, PRC-NPTN).]

Figure 1: Left: Operations comprising the PRC-NPTN layer. The number of input and output channels in the Conv layer is (inch) and (inch * |G|) respectively. |G| is the number of filters (linear transformations learnt) for each input channel. The key operation proposed is the Permanent Random Channel shuffling operation with a fixed index mapping for every forward pass. This indexing or connectome is initialized randomly during network initialization. Center: Architecture of the PRC-NPTN layer. Each input channel is convolved with a number of filters (parameterized by G). Each of the resultant activation maps is connected to one of the channel max pooling units randomly (initialized once, fixed during training and testing). Each channel pooling unit pools over a fixed random support of a size parameterized by CMP. Right: Explicit invariances enforced within deep networks in prior art are mostly parametric in nature. The important problem of learning non-parametric invariances from data has not received a lot of attention.

Motivation of this Study: In this study, we motivate and investigate one possible architecture that can learn invariances towards multiple transformations from data itself. At the heart of the architecture is a structure called the permanent random connectome. This simply refers to a channel shuffling layer that uses a fixed shuffling schedule throughout the life of the network (including training and testing), resulting in a permanent connectome. Importantly, however, this shuffling index is chosen at random at the initialization of the network, which is why the layer is referred to as the permanent random connectome. We find that layers utilizing the permanent random operation allow architectures to learn multiple invariances efficiently from data itself. Our motivation also loosely stems from observations regarding connectomes in the cortex.

Prior Art Learning Invariances from Data or using Random Connectomes. A different class of recently proposed architectures explicitly attempts to learn the transformation invariances directly from the data, with the only inductive bias being the structure or architecture that allows them to do so.
One of the earliest attempts using backpropagation was the SymNet [9], which utilized kernel based interpolation to learn general invariances. Despite the interesting nature of the study, the method was limited in scalability. Spatial Transformer Networks [15] were also designed to learn activation normalization from data itself; however, the transformation invariance learned was parametric in nature. A more recent effort was the introduction of the Transformation Network paradigm [25]. Non-Parametric Transformation Networks (NPTN) were introduced as a generalization of the convolution layer to model general symmetries from data [25]. They were also introduced as an alternate direction of network development other than skip connections. The convolution operation followed by pooling was re-framed as pooling across outputs from the translated versions of a filter. Translation, which forms a unitary group, generates invariance through group symmetry [1]. The NPTN framework has the important advantage of learning general invariances without any change in architecture while being scalable. Given that this is an important open problem, we introduce an extension of the Transformation Network (TN) paradigm with an enhanced ability to learn non-parametric invariances through permanent random connectivity.

[Figure 2 panels. (a) Homogeneous Structured Pooling: Transformation 1 (T1), Transformation 2 (T2), Feature invariant to only T1, Feature invariant to only T2. (b) Permanent Random Support Pooling: Transformation 1 (T1), Transformation 2 (T2), Feature invariant to both T1 and T2. (c) PRC-NPTN Random Channel Pooling: Vectorize, Random Pooling across features, Random Pooling across channels.]

Figure 2: (a) Homogeneous Structured Pooling pools across the entire range of transformations of the same kind, leading to feature vectors invariant only to that particular transformation. Here, two distinct feature vectors are invariant to transformations T1 and T2 independently. (b) Heterogeneous Random Support Pooling pools across randomly selected ranges of multiple transformations simultaneously. The pooling supports are defined during initialization and remain fixed during training and testing. This results in a single feature vector that is invariant to multiple transformations simultaneously. Here, each colored box defines the support of the pooling and pools across features only inside the boxed region, leading to one single feature. (c) Vectorized Random Support Pooling extends this idea to convolutional networks, where one realizes that random support pooling on the feature grid (on the left) is equivalent to random support pooling of the vectorized grid. Each element of the vector (on the right) now represents a single channel in a convolutional network, and hence random support pooling in PRC-NPTNs occurs across channels.

There have been many seminal works that have explored the role of temporary random connections in deep networks, such as DropOut [29], DropConnect [32] and Stochastic Pooling [38]. However, unlike the proposed approach, the connections in these networks randomly change at every forward pass and hence are temporary. More recently, random permanent connections were explored for large scale architectures [35]. It is important to note, however, that the basic unit of computation, the convolutional layer, remained unchanged. Our study explores permanent random connectomes within the convolutional layer itself, and explores how it can learn non-parametric invariances to multiple transformations simultaneously in a simple manner. We briefly visit other deep architectures that have been proposed over the years in the supplementary.

Relaxed Biological Motivation for Randomly Initialized Connectomes.
Although not central to our motivation, the observation that the cortex lacks precise local pathways for back-propagation provided the initial inspiration for this study. It gained further support from the observation that random unstructured local connections are indeed common in many parts of the cortex [6, 27]. Moreover, it has been shown that orientation selectivity can arise in the visual cortex even through local random connections [12]. Though we do not explore these biological connections in more detail, it is still an interesting observation. There has also been some interesting work which explored the use of random weight matrices for back propagation [21]. Here, the forward weight matrices were updated so as to fruitfully use the random weight matrices during back propagation. The motivation of the study in [21] was to address the biological implausibility of the transport of precise gradients through the cortex due to the lack of exact connections and pathways [10, 23, 30, 36]. The common presence of random connections in the cortex at a local level leads us to ask: Is it possible that such locally random connectomes improve generalization in deep networks? We provide evidence for answering this question in the positive.

Contributions. 1) We motivate permanent random connectomes from the perspective of learning invariance to multiple transformations directly from data. The fundamental problem of learning non-parametric invariances in perception has not received enough attention. We present an architectural prior capable of such a task with loose biological motivation. 2) We present a theoretical result on learning invariances to transformations which do not obey a group structure, in contrast to prior work. 3) We provide results on learning invariances to individual and multiple transformations in data without any change in architecture whatsoever.
Further, we demonstrate improvements in generalization while using PRC-NPTN as a drop-in replacement for conv layers in DenseNets. 4) Finally, as an engineering effort, we develop fast and efficient CUDA kernels for random channel pooling which result in efficient implementations of PRC-NPTNs in terms of computational speed and memory requirements compared to traditional PyTorch code.

2 Permanent Random Connectome NPTNs

We begin by motivating permanent random connectomes from the perspective of selecting each specific support for pooling. We find that permanent random channel pooling invokes invariance to multiple transformations simultaneously. When investigating the idea of pooling across transformations to invoke invariance, permanent random pooling emerges naturally. As part of our contribution, we present a theoretical result which confirms a long standing intuition that max pooling invokes invariance.

Invoking Invariance through Max Pooling. In previous years a number of theories have emerged on the mechanics of generating invariance through pooling. [1, 2] develop a framework in which the transformations are modelled as a group comprised of unitary operators denoted by $\{g \in G\}$. These operators transform a given filter $w$ through the operation $gw$ (see footnote 1), following which the dot-product between these transformed filters and a novel input $x$ is measured through $\langle x, gw \rangle$. It was shown by [1] that any moment, such as the mean or max (infinite moment), of the distribution of these dot-products in the set $\{\langle x, gw \rangle \mid g \in G\}$ is an invariant. These invariants will exhibit robustness to the transformation in $G$ encoded by the transformed filters in practice, as confirmed by [20, 26]. Though this framework did not make any assumptions on the distribution of the dot-products, it imposed the restricting assumption of group symmetry on the transformations.
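As a small illustration of the group-pooling result of [1] summarized above, the following sketch uses cyclic shifts of a vector as the unitary group $G$ and checks that the max and the mean of $\{\langle x, gw \rangle \mid g \in G\}$ do not change when the input $x$ is itself shifted. The vectors `x` and `w` and all helper names are hypothetical choices made for this example; they are not taken from the paper.

```python
def shift(v, k):
    """Cyclically shift list v to the right by k positions (a unitary permutation)."""
    k %= len(v)
    return v[-k:] + v[:-k] if k else list(v)


def dot(a, b):
    """Plain dot product <a, b>."""
    return sum(p * q for p, q in zip(a, b))


def orbit_features(x, w):
    """All dot products <x, g w> for g in the cyclic shift group."""
    return [dot(x, shift(w, k)) for k in range(len(w))]


def pooled(x, w):
    """Max and mean moments of the orbit dot products."""
    feats = orbit_features(x, w)
    return max(feats), sum(feats) / len(feats)


# Hypothetical integer-valued input and filter, so comparisons are exact.
x = [3, -1, 4, 1, -5, 9]
w = [2, 7, 1, -8, 2, 8]

reference = pooled(x, w)
for k in range(len(x)):
    # Shifting x only permutes the set of dot products, so both moments stay fixed.
    assert pooled(shift(x, k), w) == reference
```

The individual dot products do change when `x` is shifted (they are reordered), which is why pooling over the whole orbit, rather than reading a single response, is what yields the invariant.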
We now show that invariance can be invoked even when avoiding the assumption that the transformations in $G$ need to form a group. Nonetheless, we assume that the distribution of the dot-product $\langle x, gw \rangle$ is uniform, and thus we have the following result (see footnote 2).

Lemma 2.1. (Invariance Property) Assume a novel test input $x$ and a filter $w$, both fixed vectors in $\mathbb{R}^d$. Further, let $g$ denote a random variable representing unitary operators with some distribution. Finally, let $\Upsilon(x) = \langle x, gw \rangle$, with $\Upsilon(x) \sim U(a, b)$, i.e. a Uniform distribution between $a$ and $b$. Then, we have

$$\mathrm{Var}(\max \Upsilon(x)) \le \mathrm{Var}(\Upsilon(x)) = \mathrm{Var}(\langle g^{-1}x, w \rangle)$$

Footnote 1: The action of the group element $g$ on $w$ is denoted by $gw$ to promote clarity.

Footnote 2: We provide a proof in the supplementary and thank Purvasha Chakravarti at CMU for the proof. The assumption of the distribution being uniform is meant to provide insight into the general behavior of the max pooling operation, rather than a statement that deep learning features are uniformly distributed.

This result is interesting because it shows that the max operation over the dot-products has less variance due to $g$ than the pre-pooled features. Though this is a largely known empirical result, a concrete proof for invoking invariance was so far missing. More importantly, it bypasses the need for a group structure on the nuisance transformations $G$. Practical studies such as [20, 26] had ignored the effects of non-group structure in theory while demonstrating effective empirical results. Also note that the variance of the max is less than the variance of the quantity $\langle g^{-1}x, w \rangle$, which implies that $\max \Upsilon(x)$ is more robust to $g$ even at test time, though it has never observed $gx$. This useful property is due to the unitarity of $g$.

Connection to Deep Networks. PRC-NPTNs, as we will show, perform max pooling across channels, not space, to invoke invariance.
In the framework $\langle x, gw \rangle$, $w$ would be one convolution filter with $gw$ being a transformed version of it. Note that this modelling is done only to satisfy a theoretical construction; we do not actually transform filters in practice. All transformed filters are learnt through backpropagation. This framework is already utilized in ConvNets. For instance, ConvNets [17] pool only across translations (the convolution operation itself followed by spatial max pooling implies $g$ to be translation).

Invoking Invariance through Channel Pooling in Deep Networks. Consider a grid of features that have been obtained through a dot product $\langle x, gw \rangle$ (for instance from a convolution activation map, where the grid is simply populated with each $k \times k \times 1$ filter, not $k \times k \times c$) (see Fig. 2(a)). Assume that two different kinds of transformation act along the two axes of the grid: $T_1$ along the horizontal axis and $T_2$ along the vertical. Here $T_1 = g_1(\cdot\,; \theta_1)$, where $g_1$ is a transformation parameterized by $\theta_1$ that acts on $w$, and similarly $T_2 = g_2(\cdot\,; \theta_2)$. Now, pooling homogeneously across one axis invokes invariance only to the corresponding $g$ (for a more in-depth analysis see [1]): pooling along $T_1$ only results in a feature vector (Feature 1) invariant only to $T_1$. Similarly, pooling along $T_2$ only results in a feature vector (Feature 2) invariant only to $T_2$. These representations (Feature 1 and 2) have complementary invariances and can be used for complementary tasks, e.g. face recognition (invariant to pose) versus pose estimation (invariant to subject). This approach has one major limitation: it scales linearly with the number of transformations, which is impractical. One would therefore need features that are invariant to multiple transformations simultaneously. A simple yet effective approach is to pool along all axes, thereby being invariant to all transformations simultaneously. However, doing so results in a degenerate feature (one that is invariant to everything and discriminative to nothing).
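The homogeneous pooling argument above can be made concrete with a toy sketch. It assumes, purely for illustration, that $T_1$ is a horizontal cyclic shift and $T_2$ a vertical cyclic shift of a small 2D filter; the arrays `x` and `w` and all function names are hypothetical and not from the paper.

```python
def roll2d(m, dy, dx):
    """Cyclically shift a 2D list down by dy rows and right by dx columns."""
    rows, cols = len(m), len(m[0])
    return [[m[(i - dy) % rows][(j - dx) % cols] for j in range(cols)]
            for i in range(rows)]


def dot2d(a, b):
    """Dot product of two equally sized 2D lists."""
    return sum(p * q for ra, rb in zip(a, b) for p, q in zip(ra, rb))


def feature_grid(x, w):
    """grid[a][b] = <x, T2^a T1^b w>: rows index T2, columns index T1."""
    rows, cols = len(w), len(w[0])
    return [[dot2d(x, roll2d(w, a, b)) for b in range(cols)] for a in range(rows)]


def pool_t1(grid):
    """Pool along the horizontal (T1) axis: one feature per row."""
    return [max(row) for row in grid]


def pool_all(grid):
    """Pool along both axes: a single scalar feature."""
    return max(max(row) for row in grid)


# Hypothetical integer-valued input and filter, so comparisons are exact.
x = [[1, 5, 2], [0, 3, 9], [4, 7, 6]]
w = [[2, -1, 0], [1, 3, -2], [0, 1, 1]]

feat1 = pool_t1(feature_grid(x, w))

# Applying T1 to the input leaves Feature 1 unchanged.
assert pool_t1(feature_grid(roll2d(x, 0, 1), w)) == feat1

# Applying T2 to the input only reorders Feature 1 (here, a cyclic rotation),
# so Feature 1 is not invariant to T2 in general.
assert pool_t1(feature_grid(roll2d(x, 1, 0), w)) == feat1[-1:] + feat1[:-1]

# Pooling over both axes is invariant to both transformations at once.
assert pool_all(feature_grid(roll2d(x, 1, 2), w)) == pool_all(feature_grid(x, w))
```

In this toy setting the fully pooled scalar survives every shift of the input, but it also discards which row or column produced the maximum, which is the loss of discriminative power that the text describes.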
Therefore, the key is to limit the range of pooling performed for each transformation.

Choosing the Support for Pooling at Random: Permanent Random Connectomes. A solution to the trivial feature problem described above is to limit the range or support of pooling, as illustrated in Fig. 2(b). One simple way of selecting such a support for pooling is at random. This selection happens only once, during initialization of the network (or any other model), and remains fixed through training and testing. In order to increase the selectivity of such features, multiple such pooling units are needed, each with such a randomly initialized support [1, 24]. These multiple pooling units together form the feature that is invariant to multiple transformations simultaneously, which improves generalization, as we find in our experiments. This is called heterogeneous pooling, and Fig. 2(b) illustrates it more concretely. We therefore find that permanent random pooling is motivated naturally by the need to attain invariance to multiple transformations simultaneously.

[Figure 3 panels: (a) Rotation 0° (b) Rotation 30° (c) Rotation 60° (d) Rotation 90°]

Figure 3: Individual Transformation Results: Test error statistics with mean and standard deviation on MNIST with progressively extreme transformations with a) random rotations and b) random pixel shifts (in supplementary). For PRC-NPTN and NPTN only, the brackets indicate the number of channels in layer 1 and |G|. ConvNet FC denotes the addition of a 2-layered 1 × 1 pooling network after every layer. Note that for this experiment, CMP = |G|. Permanent Random Connectomes help with achieving better generalization despite increased nuisance transformations. We provide experiments on CIFAR 10 using DenseNets in the supplementary.

The PRC-NPTN layer. Fig. 1 shows the architecture of a single PRC-NPTN layer (see footnote 3).
The PRC-NPTN layer consists of a set of $N_{in} \times |G|$ filters of size $k \times k$, where $N_{in}$ is the number of input channels and $|G|$ is the number of filters connected to each input channel. More specifically, each of the $N_{in}$ input channels connects to $|G|$ filters. Then, a number of channel max pooling units each randomly select a fixed number of activation maps to pool over. This number is parameterized by Channel Max Pool (CMP). Note that this random support selection for pooling is the reason a PRC-NPTN layer contains a permanent random connectome. These pooling supports, once initialized, do not change through training or testing. Once max pooling over CMP activation maps completes, the resultant tensor is average pooled across channels with an average pool size chosen such that the desired number of outputs is obtained. After the CMP units, the output is finally fed through a two layered network with the same number of channels and 1 × 1 kernels, which we call a pooling network. This small pooling network helps in selecting non-linear combinations of the invariant nodes generated through the CMP operation, thereby enriching feature combinations downstream. For experimental rigor, we also benchmark against the baseline ConvNet supplemented with this 1 × 1 pooling network.

3 Empirical Evaluation and Discussion

Goal. The goal of our evaluation study is to demonstrate that PRC-NPTNs are capable of learning transformations from data and to showcase improvements in generalization in supervised classification over relevant baselines. The goal is not, in fact, to compete with the state of the art approaches for any dataset.

General Experimental Settings. For all experiments, we run all models for 300 epochs, trained using SGD. The initial learning rate was kept at 0.1 and decreased by a factor of 10 at 50% and 75% epoch completion. Momentum was kept at 0.9 with a weight decay of $10^{-5}$.
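The step schedule just described can be written down as a short sketch. It assumes that "decreased by 10" means division by 10 and that each drop takes effect at the first epoch index at or past the stated fraction of training; both readings, and the function name `learning_rate`, are assumptions made for illustration rather than details confirmed by the paper.

```python
def learning_rate(epoch, total_epochs=300, base_lr=0.1,
                  factor=0.1, milestones=(0.5, 0.75)):
    """Step schedule: multiply the rate by `factor` at each milestone fraction.

    Assumption: a milestone at fraction f applies from epoch int(f * total_epochs)
    onwards, with epochs counted from 0.
    """
    lr = base_lr
    for fraction in milestones:
        if epoch >= int(fraction * total_epochs):
            lr *= factor
    return lr


# Rates at the start, just before and after the first drop, and after the second.
schedule = {e: learning_rate(e) for e in (0, 149, 150, 224, 225, 299)}
```

Under these assumptions the rate is 0.1 for epochs 0 to 149, roughly 0.01 for epochs 150 to 224, and roughly 0.001 afterwards (up to floating point rounding).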
Batch size was kept at 64 for both MNIST and ETH-80 (see footnote 4). For the MNIST experiments, gradients were clipped to norm 1. Each block for all ConvNet and PRC-NPTN baselines had either a convolution layer or a PRC-NPTN layer, followed by batch normalization, PReLU and spatial max pooling. The convolutional kernel size was kept at 5 × 5 for all MNIST experiments and 3 × 3 for all other models. For MNIST models, spatial max pooling of size 3 × 3 was performed after every layer, BN and PReLU.

Footnote 3: We provide pseudo-code in the supplementary.

[Figure 4 panels: (a) (0°, 0 pix) (b) (30°, 4 pix) (c) (60°, 8 pix) (d) (90°, 12 pix)]

Figure 4: Simultaneous Transformation Results: Test error statistics with mean and standard deviation on MNIST with progressively extreme transformations with random rotations and random pixel shifts simultaneously. For PRC-NPTN and NPTN the brackets indicate the number of channels in layer 1 and |G|. Note that for this experiment, CMP = |G|. We provide experiments on CIFAR 10 with DenseNets in the supplementary.

Limitations in Typical Implementations and Developing Faster Kernels. Our implementation with traditional PyTorch still suffered from heavy GPU memory use and slower run times despite optimizing code at the PyTorch abstraction level. The key bottleneck in computational and memory efficiency was found to be the randomized channel pooling operation. The issue was addressed by developing CUDA kernels that perform pooling on non-contiguous blocks of memory without creating copies of them. This allowed for faster non-contiguous pooling over feature and activation maps with a significant reduction in memory usage. The operation was built as a CUDA kernel that is interfaced with PyTorch through CuPy. This engineering effort is part of our contribution, and we demonstrate significant improvements in memory and computational efficiency in our experiments.
We show improvements in memory and speed as different aspects of the PRC-NPTN networks are changed in the supplementary (see footnote 5). We observe a consistent speed-up of at least 1.5x and a significant reduction in memory usage.

Efficacy in Learning Arbitrary and Unknown Transformation Invariances from Data. We evaluate on one of the most important tasks of any perception system, i.e. being invariant to nuisance transformations learned from the data itself. Most other architectures based on vanilla ConvNets learn these invariances through the implicit neural network functional map rather than explicitly through the architecture, as PRC-NPTNs do. Moreover, most previous approaches needed hand crafted architectures to handle different transformations. We benchmark our networks on tasks where nuisance transformations such as large amounts of in-plane rotation and translation are steadily increased, with no change in architecture whatsoever. For this purpose, we utilize MNIST, where it is straightforward to add such transformations without any artifacts.

Footnote 4: We provide experiments on CIFAR-10 in the supplementary.

Footnote 5: We provide details of the architecture, benchmarking and additional experiments in the supplementary.

| Model | Architecture |
|---|---|
| ConvNet B | C(12) - C(24) - C(48) - C(48) - GAP - FC(300) - FC(200) - FC(8) |
| NPTN-large B | C(12) - NPTN(24) - NPTN(48) - NPTN(48) - GAP - FC(300) - FC(200) - FC(8) |
| NPTN-small B | C(12) - NPTN(8) - NPTN(24) - NPTN(48) - GAP - FC(300) - FC(200) - FC(8) |
| PRC-NPTN B | C(12) - PRC(24) - PRC(48) - PRC(48) - GAP - FC(300) - FC(200) - FC(8) |
| ConvNet C | C(12) - C(24) - C(48) - C(64) - C(128) - GAP - FC(8) |
| PRC-NPTN C | C(12) - PRC(24) - PRC(48) - PRC(64) - PRC(128) - GAP - FC(8) |

Table 1: Architectures tested on ETH-80. C: convolution layer, FC: fully connected layer, PRC: PRC-NPTN layer, NPTN: NPTN layer, GAP: global average pooling layer. Every Conv, NPTN and PRC-NPTN layer was followed by a spatial pooling layer of kernel size 2, except the last layer before the GAP. The 1 × 1 versions of these architectures have a 1 × 1 conv layer after every 3 × 3 layer except the first C(12) layer. ConvNet B was designed to be similar to the architecture explored in [16], however with more layers. ConvNet C was designed to be more aligned with modern architecture choices, such as global average pooling followed by just one FC layer.

We benchmark on such a task as described in [25] and, for fair comparisons, we follow the exact same protocol. We train and test on MNIST augmented with progressively increasing transformations, i.e. 1) extreme random translations (up to 12 pixels in a 28 by 28 image), 2) extreme random rotations (up to 90° rotations) and finally 3) both transformations simultaneously. Both train and test data were augmented randomly for every sample, leading to an increase in the overall complexity of the problem. No architecture was altered in any way between the two transformations, i.e. the architectures were not designed to specifically handle either. The same architecture for all networks is expected to learn invariances directly from data, unlike prior art where such invariances are hand crafted [4, 14, 19, 28, 31, 37].

For this experiment, we utilize a two layered network with the intermediate layer 1 having up to 36 channels and layer 2 having exactly 16 channels for all networks (similar to the architectures in [25]), except a wider ConvNet baseline with 512 channels. All ConvNet, NPTN and PRC-NPTN models have a similar number of parameters (except the ConvNet with 512 channels). For PRC-NPTN, the number of channels in layer 1 was decreased from 36 down to 9 while |G| was increased in order to maintain a similar number of parameters. All PRC-NPTN networks have a two layered 1 × 1 pooling network with the same number of channels as that layer.
For a fair benchmark, ConvNet FC has 2 two-layered pooling networks with 36 channels each. Average test errors are reported over 5 runs for all networks.

Discussion. We present all test errors for this experiment in Fig. 6 and Fig. 4 (see footnote 6). From both figures, it is clear that as more nuisance transformations act on the data, PRC-NPTN networks outperform other baselines with the same number of parameters. In fact, even with significantly more parameters, ConvNet-512 performs worse than PRC-NPTN on this task for all settings. Since the testing data has nuisance transformations similar to the training data, the only way for a model to perform well is to learn invariance to these transformations. It is also interesting to observe that permanent random connectomes do indeed help with generalization. Indeed, without randomization the performance of PRC-NPTNs drops substantially. The performance improvement of PRC-NPTN also increases with nuisance transformations, showcasing the benefits arising from modelling such invariances. This is particularly apparent from Fig. 4, where the two simultaneous nuisance transformations pose a significant challenge. Yet, as the transformations increase, the performance improvements increase as well.

Footnote 6: We display only the (12, 3) configuration for NPTN as it performed the best. The translation results and more benchmarks with NPTNs are provided in the supplementary. We obtain similar performance improvements with extreme translation as well.

| Method (Protocol 1) | Accuracy (%) | #params | Factor (params) | #filters | Reduction (filters) |
|---|---|---|---|---|---|
| ConvNet [16] | 93.69 | 1.4M | | 230 | - |
| NPTN* [25] | 96.2 | 1.4M | | 230 | - |
| ConvNet B | 95.61 | 110K | 1× | 3780 | 1× |
| ConvNet B (1 × 1) | 94.54 | 115K | 0.95× | 3780 | 1× |
| NPTN-large B (3) | 94.63 | 189K | 0.58× | 11268 | 0.33× |
| NPTN-small B (3) | 95.09 | 120K | 0.91× | 4356 | 0.86× |
| PRC-NPTN B (8, 2) | 96.72 | 97K | 1.13× | 708 | 5.33× |
| ConvNet C | 93.90 | 116K | 1× | 12740 | 1× |
| ConvNet C (1 × 1) | 95.98 | 138K | 0.84× | 12740 | 1× |
| PRC-NPTN C (8, 2) | 95.93 | 64K | 1.81× | 1220 | 10.44× |
| PRC-NPTN C (8, 4) | 96.40 | 39K | 2.97× | 1220 | 10.44× |

Table 2: Test accuracy on ETH-80 Protocol 1. * indicates the result was obtained from the corresponding paper, and is not on the split used for our experiments. For NPTN, the number in the bracket denotes |G|; for PRC-NPTN, the numbers denote |G| and CMP respectively.

Evaluation on the ETH-80 dataset. The ETH-80 dataset was introduced in [18] as a benchmark to test models against 3D pose variation of various objects. The dataset contains 80 objects belonging to 8 different classes. Each object has images from different viewpoints on a hemisphere, for a total of 41 images per object. The images were resized to 50 × 50 following [16]. This dataset is well suited to test how efficiently a model can learn invariance to 3D viewpoint variation.

Protocol: For this experiment, we follow the evaluation protocol described in [16] (see footnote 7). We randomly select 2,300 images to train and test on the rest. For a fair comparison, we retrain the ConvNet described in [16]. We design two ConvNet architectures which reflect more modern architecture choices, such as a smaller FC layer or having only a global average pooling after a number of conv layers. Table 1 presents the architectures that we train for this experiment. Every conv layer (except the first conv layer within a PRC-NPTN layer) is followed by BatchNorm and ReLU. We train corresponding PRC-NPTN models that have fewer parameters. For these experiments with PRC-NPTN, we replace the average pooling across channels (not max pooling or CMP) with a 1 × 1 convolution layer. We do this to explore the effect of weighted pooling instead of vanilla channel average pooling. To maintain a fair comparison, we compare against equivalent ConvNet baselines with an extra 1 × 1 layer added. We also perform an ablation study with the randomization removed.
All models were trained with Adam with a learning rate of 0.01 for 100 epochs and a batch size of 64. Each architecture was trained 10 separate times, with the mean of the runs being reported. We showcase the results in Table 2.

Footnote 7: We also present results on a harder protocol we devised in the supplementary.

Discussion: We find that for the two different types of architectures that we explore, PRC-NPTNs outperform both corresponding ConvNet architectures. Further, they do so not only with fewer parameters, but also with fewer 3 × 3 filters. In fact, PRC-NPTN C for |G| = 8 and CMP = 4 outperforms the corresponding ConvNet C architectures with a 2.97× reduction in the number of parameters and a 10.44× reduction in the number of 3 × 3 convolution filters. Similarly, PRC-NPTNs outperform two architectures of NPTNs [25], both with significantly fewer parameters and 3 × 3 filters. These results illustrate that PRC-NPTN can utilize filters and parameters more efficiently on a classification problem which requires 3D pose invariance. This efficiency, we conjecture, is due to the fact that permanent random pooling results in an inductive bias that explicitly helps the learning of multiple invariances within the same layer, thereby vastly increasing model capacity. Note that the almost 3X reduction in the number of parameters and 10X reduction in the number of filters is achieved without the use of any network pruning or post processing methods. Note also that the competing methods presented in [16] all perform comparably, however with 1.4 million parameters each, with the highest results being TIGradNet [16] at 95.1 and HarmNet [33] at 94.0. PRC-NPTN outperforms these methods, which were designed to invoke invariances through inductive biases, while using a fraction of the number of parameters.
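As a quick arithmetic check of the reduction factors quoted above, the following sketch recomputes them from the ConvNet C and PRC-NPTN C (8, 4) rows of Table 2. The parameter counts are the rounded values reported there (116K and 39K), so the parameter factor is only approximate; the helper name `reduction_factor` is hypothetical.

```python
def reduction_factor(baseline, model):
    """How many times smaller `model` is than `baseline`."""
    return baseline / model


# Counts as reported in Table 2 (parameter counts are rounded to the nearest thousand).
convnet_c_params, prc_c_params = 116_000, 39_000
convnet_c_filters, prc_c_filters = 12740, 1220

param_factor = reduction_factor(convnet_c_params, prc_c_params)
filter_factor = reduction_factor(convnet_c_filters, prc_c_filters)

print(f"parameter reduction: {param_factor:.2f}x")
print(f"3x3 filter reduction: {filter_factor:.2f}x")
```

Both ratios agree with the 2.97× and 10.44× figures given in the text when rounded to two decimals.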
4 Appendix

Abstract: In this supplementary material, we provide a proof for Lemma 2.1 in the main paper, a more complete table of results including different parameter comparisons of NPTN baselines, timing results of our more efficient CUDA implementation, and the pseudocode for PRC-NPTNs. Further, we present results on the ETH-80 dataset, consisting of 3D viewpoint variations of objects, in comparison with previous work. Finally, we present results on CIFAR10 with vanilla DenseNets and with PRCN applied to DenseNets to form DensePRC-NPTNs.

Prior Art using Alternate Architectures. Several works have explored alternate deep layer architectures. A few of the main developments were the application of the skip connection [13], depthwise separable convolutions [3] and group convolutions [34]. Randomly initialized channel shuffling is an operation that is central to the application of permanent random connectomes. Deterministic, non-randomized channel shuffling, however, was explored while optimizing networks for computational efficiency [39]. Nonetheless, none of these methods explored permanent and random connectomes from the perspective of explicitly learning invariances from data itself.

Invariances in a PRC-NPTN layer. Recent work introducing NPTNs [25] highlighted the Transformation Network (TN) framework, in which invariance is generated during the forward pass by pooling over dot-products with transformed filter outputs. A vanilla convolution layer with a single input and output channel (and therefore a single convolution filter) followed by a k × k spatial pooling layer can be seen as a single TN node enforcing translation invariance, with k × k filter outputs being pooled over. It has been shown that k × k spatial pooling over the convolution output of a single filter is an approximation to channel pooling across the outputs of k × k translated filters [25].
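The relation between spatial pooling and channel pooling over translated filters can be checked on a toy case. The following is a minimal 1D sketch in plain Python, assuming a "valid" correlation and zero-padded translated copies of a single filter; the helper names (`correlate`, `translated_filters`, `dot`) are illustrative and not from the paper. In this simplified setting the two quantities coincide exactly, whereas the paper states the relation as an approximation in general.

```python
import random


def dot(a, b):
    """Inner product of two equal-length sequences."""
    return sum(p * q for p, q in zip(a, b))


def correlate(x, w):
    """'Valid' 1D correlation of signal x with filter w."""
    m = len(w)
    return [dot(x[i:i + m], w) for i in range(len(x) - m + 1)]


def translated_filters(w, k):
    """k zero-padded copies of w, shifted by 0..k-1 positions."""
    return [[0.0] * t + list(w) + [0.0] * (k - 1 - t) for t in range(k)]


rng = random.Random(0)
x = [rng.uniform(-1, 1) for _ in range(12)]   # input signal
w = [rng.uniform(-1, 1) for _ in range(3)]    # single filter
k = 4                                         # pooling window size
p = 2                                         # start of the pooling window

# (a) Spatial max pooling over the output of the single filter.
spatial = max(correlate(x, w)[p:p + k])

# (b) Channel max pooling over the outputs of k translated filters,
#     all applied to the same input patch of length len(w) + k - 1.
patch = x[p:p + len(w) + k - 1]
channel = max(dot(patch, gw) for gw in translated_filters(w, k))

print(abs(spatial - channel) < 1e-12)
```

Each translated filter picks out one position of the correlation output, so taking the maximum across the translated filters is the same as taking the maximum over the spatial window.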
The output $\Upsilon(x)$ of a TN node for an input patch $x$ can be expressed as

$$\Upsilon(x) = \max_{g \in G} \langle x, gw \rangle \qquad (1)$$

where $G$ is the set of transformations $g$ applied to the filter $w$, so that the transformed filters $gw$ are the filters whose outputs are being pooled over. Thus, $G$ defines the set of transformations, and hence the invariance, that the TN node enforces. In a vanilla convolution layer, this is the translation group (enforced by the convolution operation followed by spatial pooling). An NPTN removes any constraints on $G$, allowing it to approximately model arbitrarily complex transformations. A vanilla convolution layer has one filter whose convolution output is pooled over spatially (for translation invariance). In contrast, an NPTN node has $|G|$ independent filters whose convolution outputs are pooled across channels, leading to general invariance.

A PRC-NPTN layer inherits from NPTNs the ability to learn arbitrary transformations, and thereby arbitrary invariances, using $G$. Individual channel max pooling (CMP) nodes act as NPTN nodes sharing a common filter bank, as opposed to the independent and disjoint filter banks of vanilla NPTNs. This allows for greater activation sharing, where transformations learned from data through one subset of filters can be used for invoking similar invariances in a parallel computation path. This sharing and reuse of activation maps allows for higher parameter and sample efficiency. As we find in our experiments, randomization plays a critical role here, allowing for a simple and quick approximation to obtaining high-performing invariances. A high activation map can activate multiple CMP nodes, winning over multiple subsets of low activations. Gradients flow back to these winning activations, updating the filters to further model the features observed during that particular batch.
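To make Eq. (1) and the role of the CMP nodes concrete, here is a minimal sketch in plain Python. It is a simplification under stated assumptions: a single input patch, a flat filter bank, and a permutation drawn once from a seeded generator to stand in for the permanent random connectome. The names `tn_node`, `make_connectome` and `cmp_layer` are hypothetical and not taken from the paper.

```python
import random


def dot(a, b):
    """Inner product of two equal-length sequences."""
    return sum(p * q for p, q in zip(a, b))


def tn_node(x, filters):
    """Eq. (1): maximum of <x, gw> over the set of transformed filters."""
    return max(dot(x, f) for f in filters)


def make_connectome(num_filters, seed=0):
    """Draw a random channel permutation once; it is then kept fixed."""
    rng = random.Random(seed)
    perm = list(range(num_filters))
    rng.shuffle(perm)
    return perm


def cmp_layer(x, bank, perm, cmp_size):
    """Shuffle the filter responses with the fixed permutation, then
    max-pool over consecutive groups of `cmp_size` responses."""
    responses = [dot(x, f) for f in bank]
    shuffled = [responses[i] for i in perm]
    return [max(shuffled[i:i + cmp_size])
            for i in range(0, len(shuffled), cmp_size)]


rng = random.Random(1)
d, num_filters, cmp_size = 5, 12, 3
x = [rng.gauss(0, 1) for _ in range(d)]
bank = [[rng.gauss(0, 1) for _ in range(d)] for _ in range(num_filters)]

perm = make_connectome(num_filters, seed=0)   # fixed for training and testing
outputs = cmp_layer(x, bank, perm, cmp_size)
print(len(outputs))
```

Each output of `cmp_layer` is a TN node in the sense of Eq. (1), evaluated over the random subset of the shared bank that the permutation assigned to it. In this sketch every response feeds exactly one CMP node, which mirrors the index-permutation step of the Figure 8 listing.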
Note that CMP nodes in the same layer can pool over disjoint subsets to invoke a variety of invariances, leading to a more versatile network and also better modelling of a particular kind of invariance, as we find in our experiments. Further, the primary source of invoking invariances in NPTN was understood to be the symmetry of the unitary group action space [25]. General invariances were assumed to only approximately form a group. Lemma 4.1 shows that group symmetry is not necessary to reduce the variance of the quantity $\max \Upsilon(x)$ due to the action of the set elements $g$ on some test input patch $x$. Although the result makes a strong assumption regarding the distribution of $\Upsilon(x)$, it is, to the best of our knowledge, the first result of its kind to show increased invariance without a group symmetric action.

Figure 5: Computational efficiency improvements of our CUDA kernel implementations. Panels: (a) Depth (# of layers), (b) CMP (channel max pooling), (c) Width (# of channels), (d) G (growth factor). Vertical axis: improvement factor (× times); plotted series: wall time speedup and memory decrease factor.

Efficacy on CIFAR10 Image Classification. MNIST was a good candidate for the previous experiment, where the addition of nuisance transformations such as translation and rotation did not introduce any artifacts. However, in order to validate permanent random connectomes on more realistic data, we utilize the CIFAR10 dataset with AutoAugment [7] as the nuisance transformation. Note that, from the perspective of previous works on network invariance, it is unclear how to hand-craft architectures to handle invariances, due to the variety of transformations that AutoAugment invokes.
Here is where the general invariance learning capability of PRC-NPTNs helps, without the need for expertise in such hand-crafting. We replace vanilla convolution layers with kernel size 3 in DenseNets with PRC-NPTNs without the 2-layered pooling networks. There was another modification for this experiment. For each input channel of a layer, a total of |G| = 12 filters were learnt. However, only a few of them were pooled over (channel max pooling, or CMP). We pool with CMP = 1, 2, 3 or 4 channels randomly, always keeping |G| = 12 fixed. Note that, in contrast, in the MNIST experiment pooling was always done over |G| channels (CMP = |G|). This provides a different setting under which PRC-NPTN can be utilized. All models in this experiment were trained with AutoAugment and were tested on both a) the original test images and b) the test set transformed by AutoAugment. Similarly to the previous experiment, a model would have to learn invariance towards these AutoAugment transformations in order to perform well. All DenseNet models have 12 layers, with the PRC-NPTN variant having the same number of parameters, to enable us to perform multiple runs in a reasonable amount of time. This accounts for the lower accuracy compared to other studies. We train 5 models for each setting and report the mean and standard deviation of the errors. Training 5 runs for each hyperparameter combination, to account for the randomization, is another factor that tended to result in unreasonably large experiment times. Importantly, the goal of this experiment is not to push the state of the art, but rather to investigate the behavior of DensePRC-NPTNs within the limits of the computational resources available for this study while executing 5 runs for each network.
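The effect of varying CMP with |G| = 12 fixed can be illustrated through channel bookkeeping. The sketch below assumes the layer follows the channel arithmetic of the pseudo-code in Figure 8 (expansion to G × inch channels, channel max pooling of size CMP, then channel average pooling down to outch channels). The values inch = 24 and outch = 12 are hypothetical choices for illustration and are not stated in the paper; the function name is likewise an assumption.

```python
def prc_nptn_channels(inch, outch, G, CMP):
    """Channel counts through one PRC-NPTN layer, following the
    bookkeeping of the Figure 8 listing (an assumed setting)."""
    expansion = G * inch                        # after the depthwise conv
    after_max = expansion // CMP                # after channel max pooling
    avgpool = int((inch * G) / (CMP * outch))   # channel average pool size
    after_avg = after_max // avgpool            # final output channels
    return expansion, after_max, avgpool, after_avg


# Hypothetical layer sizes; G = 12 as in the CIFAR10 experiment.
table = {cmp_size: prc_nptn_channels(inch=24, outch=12, G=12, CMP=cmp_size)
         for cmp_size in (1, 2, 3, 4)}

for cmp_size, counts in table.items():
    print(cmp_size, counts)
```

Under these assumed sizes, a larger CMP leaves fewer channels after max pooling and needs a smaller average pool to reach the same output width, while CMP = 1 pools over a single channel and so performs no max pooling at all.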
Figure 6: Individual Transformation Results. Panels: (a) Trans 0 pix, (b) Trans 4 pix, (c) Trans 8 pix, (d) Trans 12 pix. Test error statistics with mean and standard deviation on MNIST with progressively extreme transformations with random pixel shifts. For PRC-NPTN and NPTN, the brackets indicate the number of channels in layer 1 and G. ConvNet FC denotes the addition of a 2-layered 1 × 1 pooling network after every layer. Note that for this experiment, CMP = |G|. Permanent Random Connectomes help with achieving better generalization despite increased nuisance transformations.

| Method | CIFAR10 | (w/o Random) | CIFAR10++ | (w/o Random) | Speed | Memory |
|---|---|---|---|---|---|---|
| DenseNet-Conv | 11.47 ± 0.19 | - | 21.37 ± 0.29 | - | | |
| DensePRC-NPTN (CMP=1) | 11.82 ± 0.20 | 13.33 ± 0.23 | 22.03 ± 0.08 | 23.88 ± 0.38 | 1.29x | 2.92x |
| DensePRC-NPTN (CMP=2) | 10.78 ± 0.31 | 11.67 ± 0.36 | 20.71 ± 0.23 | 21.90 ± 0.33 | 1.34x | 1.96x |
| DensePRC-NPTN (CMP=3) | 10.95 ± 0.12 | 11.59 ± 0.23 | 20.95 ± 0.20 | 21.80 ± 0.42 | 1.36x | 1.64x |
| DensePRC-NPTN (CMP=4) | 10.61 ± 0.11 | 11.41 ± 0.12 | 20.80 ± 0.12 | 21.47 ± 0.16 | 1.36x | 1.48x |

Table 3: Efficacy on CIFAR10: Test error statistics on CIFAR10 with mean and standard deviation. ++ indicates AutoAugment testing. Each DenseNet and its corresponding PRC-NPTN variant has the same number of parameters. |G| = 12 for PRC-NPTN and the growth rate was kept at 12 for DenseNet-Conv. (w/o Random) indicates no randomization in the connectomes constructed (as an ablation study). The speed and memory improvements are multiplicative improvement factors of our CUDA kernel implementation compared to baseline optimized PyTorch code.

Discussion. Table 3 presents the results of this experiment. We find that PRC-NPTN provides clear benefits even with architectures employing heavy use of skip connections, such as DenseNets, with the same number of parameters.
Performance seems to increase as the channel max pooling size increases. Further, randomization seems to be important to the overall architecture, even given the complex nature of real image transformations. PRC-NPTN helps DenseNets account for nuisance transformations better, even for those as extreme as AutoAugment with its 16 transformation types (ShearX/Y, TranslateX/Y, Rotate, AutoContrast, Invert, Equalize, Solarize, Posterize, Contrast, Color, Brightness, Sharpness, Cutout and Sample Pairing), applied to various degrees. With this evidence, it is interesting to find that random connectomes can be motivated from the perspective of learning heterogeneous invariances from data without any change in architecture. We find that they provide a promising alternate dimension in future network design, in contrast to the ubiquitous use of highly structured and ordered connectomes.

Evaluation on the ETH-80 dataset

The ETH-80 dataset was introduced in [18] as a benchmark to test models against 3D pose variation of various objects. The dataset contains 80 objects belonging to 8 different classes. Each object is imaged from different viewpoints on a hemisphere, for a total of 41 images per object. The images were resized to 50 × 50 following [16]. This dataset is well suited to testing how efficiently a model can learn invariance to 3D viewpoint variation.

Figure 7: Sample images from the ETH-80 database. The dataset contains 80 objects belonging to 8 different classes. Each object has images from different viewpoints on a hemisphere, resulting in 3D pose and viewpoint variation for each object.

Protocol: For this experiment, we devise a new protocol in which we train on one half of the horizontal views and test on the other half. We randomly split the vertical views between these train and test sets. We test with the same set of architectures as in the main paper, with the same experimental settings. This protocol is harder, since the other side of the object is not seen and the objects are not symmetrical. Results are shown in Table 4. The results follow a similar trend to that of the original protocol described in the main paper. We find that PRC-NPTN outperforms all other methods using almost 3× fewer parameters and almost 10× fewer 3 × 3 filters. These results show that PRC-NPTNs are more effective in learning even out-of-plane invariances from data itself without any change in architecture.

| Method (Protocol 2) | Accuracy (%) | #parameters | Reduction | #filters (3 × 3) | Reduction |
|---|---|---|---|---|---|
| ConvNet [16] | 84.49 | 1.4M | | 230 | - |
| ConvNet B | 95.58 | 110K | 1× | 3780 | 1× |
| ConvNet B (1 × 1) | 94.33 | 115K | 0.95× | 3780 | 1× |
| NPTN-large B (3) | 94.58 | 189K | 0.58× | 11268 | 0.33× |
| NPTN-small B (3) | 94.70 | 120K | 0.91× | 4356 | 0.86× |
| PRC-NPTN B (8, 2) | 94.34 | 97K | 1.13× | 708 | 5.33× |
| ConvNet C | 95.44 | 116K | 1× | 12740 | 1× |
| ConvNet C (1 × 1) | 94.40 | 138K | 0.84× | 12740 | 1× |
| PRC-NPTN C (8, 2) | 94.68 | 64K | 1.81× | 1220 | 10.44× |
| PRC-NPTN C (8, 4) | 95.63 | 39K | 2.97× | 1220 | 10.44× |

Table 4: Test accuracy on ETH-80 Protocol 2. For NPTN, the number in brackets denotes |G|; for PRC-NPTN, the numbers denote |G| and CMP respectively.

4.1 Proof of Lemma 2.1

Lemma 4.1. (Invariance Property) Assume a novel test input $x$ and a filter $w$, both fixed vectors in $\mathbb{R}^d$. Further, let $g$ denote a random variable representing unitary operators with some distribution. Finally, let $\Upsilon(x) = \langle x, gw \rangle$, with $\Upsilon(x) \sim U(a, b)$, i.e. a uniform distribution between $a$ and $b$. Then we have

$$\mathrm{Var}(\max \Upsilon(x)) \le \mathrm{Var}(\Upsilon(x)) = \mathrm{Var}(\langle g^{-1}x, w \rangle)$$

Proof. Let $X$ be the random variable representing the randomness in $\langle x, gw \rangle$ for fixed $x$, $w$ and random $g$. We first assume that $X \sim U(0, 1)$. Consider independent samples $X_1, X_2, \ldots, X_n \sim U(0, 1)$ and let $X_{(n)} = \max_{1 \le i \le n} X_i$. Now, for $0 \le x \le 1$,

$$P(X_{(n)} \le x) = P(X_i \le x, \ \forall i) \qquad (2)$$
$$= P(X_i \le x)^n \qquad (3)$$
$$= x^n \qquad (4)$$

Let the density of $X_{(n)}$ be denoted by $f_{X_{(n)}}(x)$. Differentiating the distribution function gives

$$f_{X_{(n)}}(x) = \begin{cases} n x^{n-1} & 0 \le x \le 1 \\ 0 & \text{otherwise} \end{cases}$$

Now,

$$E[X_{(n)}] = \int_0^1 x \, n x^{n-1} \, dx = \left. \frac{n \, x^{n+1}}{n+1} \right|_0^1 = \frac{n}{n+1}$$

$$E[X_{(n)}^2] = \int_0^1 x^2 \, n x^{n-1} \, dx = \left. \frac{n \, x^{n+2}}{n+2} \right|_0^1 = \frac{n}{n+2}$$

Therefore,

$$\mathrm{Var}(X_{(n)}) = \frac{n}{n+2} - \left( \frac{n}{n+1} \right)^2 = \frac{n}{(n+2)(n+1)^2}$$

The variance of $U(0, 1)$ is $\frac{1}{12}$, i.e. $\mathrm{Var}(X_i) = \frac{1}{12}$. Since $\mathrm{Var}(X_{(n)})$ is a decreasing function of $n$ and equals $\frac{1}{12}$ for $n = 1$, we have

$$\mathrm{Var}(X_{(n)}) \le \mathrm{Var}(X_i)$$

For general $U(a, b)$, consider $X_i \sim U(a, b)$ and $Y_i = \frac{X_i - a}{b - a} \sim U(0, 1)$. This map is increasing and affine, so $\max_i Y_i = \frac{X_{(n)} - a}{b - a}$, and both $\mathrm{Var}(X_{(n)})$ and $\mathrm{Var}(X_i)$ are $(b - a)^2$ times the corresponding variances for $Y$; the inequality therefore carries over. Finally, due to unitary $g$, $\langle x, gw \rangle = \langle g^{-1}x, w \rangle$.

```python
import random

import torch
import torch.nn as nn


class PRC_NPTN(nn.Module):
    def __init__(self, inch, outch, G, CMP, kernelSize, padding, stride):
        # Note: stride is accepted but not used, as in the original listing.
        super().__init__()
        self.G = G
        self.maxpoolSize = CMP
        self.avgpoolSize = int((inch * self.G) / (self.maxpoolSize * outch))
        self.expansion = self.G * inch
        # Depthwise convolution: G filters per input channel.
        self.conv1 = nn.Conv2d(inch, self.G * inch, kernel_size=kernelSize,
                               groups=inch, padding=padding, bias=False)
        self.transpool1 = nn.MaxPool3d((self.maxpoolSize, 1, 1))
        self.transpool2 = nn.AvgPool3d((self.avgpoolSize, 1, 1))
        # Permanent random connectome: a channel permutation drawn once.
        randomlist = list(range(self.expansion))
        random.shuffle(randomlist)
        self.register_buffer("index", torch.tensor(randomlist, dtype=torch.long))

    def forward(self, x):
        out = self.conv1(x)                      # inch -> G*inch
        out = out[:, self.index, :, :]           # randomization
        out = self.transpool1(out.unsqueeze(1))  # G*inch -> G*inch/maxpool
        out = self.transpool2(out).squeeze(1)    # G*inch/maxpool -> outch
        return out
```

Figure 8: PRC-NPTN pseudo-code.

References

[1] Fabio Anselmi, Joel Z Leibo, Lorenzo Rosasco, Jim Mutch, Andrea Tacchetti, and Tomaso Poggio.
Unsupervised learning of invariant representations in hierarchical architectures. arXiv preprint, 2013.
[2] Fabio Anselmi, Georgios Evangelopoulos, Lorenzo Rosasco, and Tomaso Poggio. Symmetry regularization. Technical report, Center for Brains, Minds and Machines (CBMM), 2017.
[3] François Chollet. Xception: Deep learning with depthwise separable convolutions. In Proceedings of the IEEE conference on computer vision and pattern recognition, pages 1251–1258, 2017.
[4] Taco Cohen and Max Welling. Group equivariant convolutional networks. In International Conference on Machine Learning, pages 2990–2999, 2016.
[5] Taco S Cohen and Max Welling. Steerable cnns. arXiv preprint arXiv:1612.08498, 2016.
[6] Joe Corey and Benjamin Scholl. Cortical selectivity through random connectivity. Journal of Neuroscience, 32(30):10103–10104, 2012.
[7] Ekin D Cubuk, Barret Zoph, Dandelion Mane, Vijay Vasudevan, and Quoc V Le. Autoaugment: Learning augmentation policies from data. arXiv preprint arXiv:1805.09501, 2018.
[8] Sander Dieleman, Kyle W Willett, and Joni Dambre. Rotation-invariant convolutional neural networks for galaxy morphology prediction. Monthly notices of the royal astronomical society, 450(2):1441–1459, 2015.
[9] Robert Gens and Pedro M Domingos. Deep symmetry networks. In Advances in neural information processing systems, pages 2537–2545, 2014.
[10] Stephen Grossberg. Competitive learning: From interactive activation to adaptive resonance. Cognitive science, 11(1):23–63, 1987.
[11] Raia Hadsell, Sumit Chopra, and Yann LeCun. Dimensionality reduction by learning an invariant mapping. In Computer vision and pattern recognition, 2006 IEEE computer society conference on, volume 2, pages 1735–1742. IEEE, 2006.
[12] David Hansel and Carl van Vreeswijk. The mechanism of orientation selectivity in primary visual cortex without a functional map. Journal of Neuroscience, 32(12):4049–4064, 2012.
[13] Kaiming He, Xiangyu Zhang, Shaoqing Ren, and Jian Sun. Deep residual learning for image recognition. In Proceedings of the IEEE conference on computer vision and pattern recognition, pages 770–778, 2016.
[14] João F Henriques and Andrea Vedaldi. Warped convolutions: Efficient invariance to spatial transformations. In International Conference on Machine Learning, 2017.
[15] Max Jaderberg, Karen Simonyan, Andrew Zisserman, et al. Spatial transformer networks. In Advances in Neural Information Processing Systems, pages 2017–2025, 2015.
[16] Renata Khasanova and Pascal Frossard. Graph-based isometry invariant representation learning. In International Conference on Machine Learning, pages 1847–1856, 2017.
[17] Yann LeCun, Léon Bottou, Yoshua Bengio, and Patrick Haffner. Gradient-based learning applied to document recognition. Proceedings of the IEEE, 86(11):2278–2324, 1998.
[18] Bastian Leibe and Bernt Schiele. Analyzing appearance and contour based methods for object categorization. In Computer Vision and Pattern Recognition, 2003. Proceedings. 2003 IEEE Computer Society Conference on, volume 2, pages II–409. IEEE, 2003.
[19] Junying Li, Zichen Yang, Haifeng Liu, and Deng Cai. Deep rotation equivariant network. arXiv preprint arXiv:1705.08623, 2017.
[20] Qianli Liao, Joel Z Leibo, and Tomaso Poggio. Learning invariant representations and applications to face verification. In Advances in Neural Information Processing Systems, pages 3057–3065, 2013.
[21] Timothy P Lillicrap, Daniel Cownden, Douglas B Tweed, and Colin J Akerman. Random synaptic feedback weights support error backpropagation for deep learning. Nature communications, 7:13276, 2016.
[22] Stéphane Mallat and Irene Waldspurger. Deep learning by scattering. arXiv preprint arXiv:1306.5532, 2013.
[23] Pietro Mazzoni, Richard A Andersen, and Michael I Jordan. A more biologically plausible learning rule for neural networks. Proceedings of the National Academy of Sciences, 88(10):4433–4437, 1991.
[24] Dipan Pal, Ashwin Kannan, Gautam Arakalgud, and Marios Savvides. Max-margin invariant features from transformed unlabelled data. In Advances in Neural Information Processing Systems 30, pages 1438–1446, 2017.
[25] Dipan K Pal and Marios Savvides. Non-parametric transformation networks for learning general invariances from data. AAAI, 2019.
[26] Dipan K Pal, Felix Juefei-Xu, and Marios Savvides. Discriminative invariant kernel features: a bells-and-whistles-free approach to unsupervised face recognition and pose estimation. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pages 5590–5599, 2016.
[27] Manuel Schottdorf, Wolfgang Keil, David Coppola, Leonard E White, and Fred Wolf. Random wiring, ganglion cell mosaics, and the functional architecture of the visual cortex. PLoS computational biology, 11(11):e1004602, 2015.
[28] Laurent Sifre and Stéphane Mallat. Rotation, scaling and deformation invariant scattering for texture discrimination. In Proceedings of the IEEE conference on computer vision and pattern recognition, pages 1233–1240, 2013.
[29] Nitish Srivastava, Geoffrey Hinton, Alex Krizhevsky, Ilya Sutskever, and Ruslan Salakhutdinov. Dropout: A simple way to prevent neural networks from overfitting. The Journal of Machine Learning Research, 15(1):1929–1958, 2014.
[30] David G Stork. Is backpropagation biologically plausible. In International Joint Conference on Neural Networks, volume 2, pages 241–246, 1989.
[31] Damien Teney and Martial Hebert. Learning to extract motion from videos in convolutional neural networks. In Asian Conference on Computer Vision, pages 412–428. Springer, 2016.
[32] Li Wan, Matthew Zeiler, Sixin Zhang, Yann Le Cun, and Rob Fergus. Regularization of neural networks using dropconnect. In International Conference on Machine Learning, pages 1058–1066, 2013.
[33] Daniel E Worrall, Stephan J Garbin, Daniyar Turmukhambetov, and Gabriel J Brostow. Harmonic networks: Deep translation and rotation equivariance. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pages 5028–5037, 2017.
[34] Saining Xie, Ross Girshick, Piotr Dollár, Zhuowen Tu, and Kaiming He. Aggregated residual transformations for deep neural networks. In Proceedings of the IEEE conference on computer vision and pattern recognition, pages 1492–1500, 2017.
[35] Saining Xie, Alexander Kirillov, Ross Girshick, and Kaiming He. Exploring randomly wired neural networks for image recognition. arXiv preprint arXiv:1904.01569, 2019.
[36] Xiaohui Xie and H Sebastian Seung. Equivalence of backpropagation and contrastive hebbian learning in a layered network. Neural computation, 15(2):441–454, 2003.
[37] Yichong Xu, Tianjun Xiao, Jiaxing Zhang, Kuiyuan Yang, and Zheng Zhang. Scale-invariant convolutional neural networks. arXiv preprint arXiv:1411.6369, 2014.
[38] Matthew D Zeiler and Rob Fergus. Stochastic pooling for regularization of deep convolutional neural networks. arXiv preprint arXiv:1301.3557, 2013.
[39] Xiangyu Zhang, Xinyu Zhou, Mengxiao Lin, and Jian Sun. Shufflenet: An extremely efficient convolutional neural network for mobile devices. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pages 6848–6856, 2018.

| Rotation | 0° | *** | 30° | *** | 60° | *** | 90° | *** |
|---|---|---|---|---|---|---|---|---|
| ConvNet (36) | 0.70 ± 0.03 | - | 0.92 ± 0.03 | - | 1.32 ± 0.07 | - | 1.93 ± 0.02 | - |
| ConvNet (36) FC | 0.66 ± 0.05 | - | 0.80 ± 0.03 | - | 1.08 ± 0.02 | - | 1.58 ± 0.01 | - |
| ConvNet (512) | 0.65 ± 0.04 | - | 0.80 ± 0.02 | - | 1.14 ± 0.03 | - | 1.54 ± 0.03 | - |
| NPTN (36,1) | 0.68 ± 0.04 | - | 0.93 ± 0.01 | - | 1.35 ± 0.05 | - | 1.92 ± 0.02 | - |
| NPTN (18,2) | 0.66 ± 0.02 | - | 0.87 ± 0.04 | - | 1.18 ± 0.02 | - | 1.67 ± 0.03 | - |
| NPTN (12,3) | 0.68 ± 0.06 | - | 0.84 ± 0.02 | - | 1.19 ± 0.01 | - | 1.64 ± 0.02 | - |
| NPTN (9,4) | 0.64 ± 0.01 | - | 0.88 ± 0.05 | - | 1.21 ± 0.03 | - | 1.65 ± 0.02 | - |
| PRCN (36,1) | 0.62 ± 0.08 | 0.62 ± 0.06 | 0.84 ± 0.01 | 0.83 ± 0.03 | 1.17 ± 0.05 | 1.19 ± 0.02 | 1.72 ± 0.05 | 1.73 ± 0.06 |
| PRCN (18,2) | 0.61 ± 0.02 | 0.57 ± 0.02 | 0.68 ± 0.02 | 0.73 ± 0.02 | 0.93 ± 0.04 | 0.99 ± 0.04 | 1.24 ± 0.01 | 1.33 ± 0.02 |
| PRCN (12,3) | 0.58 ± 0.03 | 0.62 ± 0.04 | 0.72 ± 0.02 | 0.74 ± 0.02 | 0.95 ± 0.01 | 1.04 ± 0.04 | 1.28 ± 0.01 | 1.33 ± 0.01 |
| PRCN (9,4) | 0.63 ± 0.02 | 0.62 ± 0.04 | 0.75 ± 0.03 | 0.77 ± 0.02 | 0.99 ± 0.03 | 1.05 ± 0.03 | 1.31 ± 0.03 | 1.40 ± 0.03 |

| Translations | 0 pixels | *** | 4 pixels | *** | 8 pixels | *** | 12 pixels | *** |
|---|---|---|---|---|---|---|---|---|
| ConvNet (36) | 0.69 ± 0.04 | - | 0.72 ± 0.01 | - | 1.22 ± 0.02 | - | 4.43 ± 0.05 | - |
| ConvNet (36) FC | 0.60 ± 0.02 | - | 0.64 ± 0.01 | - | 0.88 ± 0.05 | - | 3.49 ± 0.11 | - |
| ConvNet (512) | 0.63 ± 0.02 | - | 0.64 ± 0.01 | - | 1.00 ± 0.02 | - | 3.56 ± 0.04 | - |
| NPTN (36,1) | 0.68 ± 0.04 | - | 0.73 ± 0.02 | - | 1.23 ± 0.01 | - | 4.42 ± 0.08 | - |
| NPTN (18,2) | 0.61 ± 0.04 | - | 0.63 ± 0.02 | - | 1.11 ± 0.02 | - | 4.10 ± 0.08 | - |
| NPTN (12,3) | 0.66 ± 0.02 | - | 0.64 ± 0.02 | - | 1.09 ± 0.04 | - | 4.19 ± 0.04 | - |
| NPTN (9,4) | 0.65 ± 0.05 | - | 0.65 ± 0.03 | - | 1.16 ± 0.04 | - | 4.42 ± 0.07 | - |
| PRC-NPTN (36,1) | 0.65 ± 0.02 | 0.65 ± 0.05 | 0.58 ± 0.01 | 0.61 ± 0.04 | 1.02 ± 0.03 | 1.00 ± 0.04 | 3.85 ± 0.11 | 3.83 ± 0.10 |
| PRC-NPTN (18,2) | 0.59 ± 0.07 | 0.59 ± 0.03 | 0.52 ± 0.03 | 0.58 ± 0.02 | 0.80 ± 0.03 | 0.88 ± 0.05 | 3.23 ± 0.03 | 3.34 ± 0.06 |
| PRC-NPTN (12,3) | 0.63 ± 0.02 | 0.66 ± 0.08 | 0.55 ± 0.02 | 0.59 ± 0.01 | 0.84 ± 0.04 | 0.89 ± 0.03 | 3.35 ± 0.04 | 3.52 ± 0.12 |
| PRC-NPTN (9,4) | 0.65 ± 0.02 | 0.69 ± 0.03 | 0.56 ± 0.03 | 0.56 ± 0.03 | 0.88 ± 0.02 | 0.97 ± 0.02 | 3.49 ± 0.46 | 3.69 ± 0.08 |

Table 5: Individual Transformation Results: Test errors on MNIST with progressively extreme transformations with a) random rotations and b) random pixel shifts. *** indicates ablation runs without any randomization, i.e. without any random connectomes (applicable only to PRC-NPTNs). For PRC-NPTN and NPTN, the brackets indicate the number of channels in layer 1 and G. ConvNet FC denotes the addition of a 2-layered 1 × 1 pooling network after every layer. Note that for this experiment, CMP = |G|. Permanent Random Connectomes help with achieving better generalization despite increased nuisance transformations.

| Rot/Trans | 0° / 0 | 15° / 2 | 30° / 4 | 45° / 6 | 60° / 8 | 75° / 10 | 90° / 12 |
|---|---|---|---|---|---|---|---|
| ConvNet (36) | 0.68 ± 0.03 | 0.72 ± 0.02 | 1.31 ± 0.02 | 2.32 ± 0.04 | 5.06 ± 0.04 | 10.90 ± 0.08 | 19.60 ± 0.16 |
| ConvNet (36) FC | 0.64 ± 0.03 | 0.66 ± 0.01 | 0.95 ± 0.04 | 1.50 ± 0.02 | 3.42 ± 0.03 | 8.14 ± 0.11 | 15.61 ± 0.11 |
| ConvNet (512) | 0.66 ± 0.05 | 0.65 ± 0.02 | 0.97 ± 0.02 | 1.60 ± 0.04 | 3.50 ± 0.04 | 7.90 ± 0.06 | 15.19 ± 0.09 |
| NPTN (36,1) | 0.71 ± 0.04 | 0.78 ± 0.02 | 1.27 ± 0.02 | 2.35 ± 0.03 | 5.02 ± 0.14 | 11.08 ± 0.09 | 19.66 ± 0.33 |
| NPTN (18,2) | 0.65 ± 0.02 | 0.68 ± 0.02 | 1.09 ± 0.02 | 1.94 ± 0.04 | 4.17 ± 0.06 | 9.59 ± 0.10 | 17.92 ± 0.20 |
| NPTN (12,3) | 0.66 ± 0.02 | 0.69 ± 0.03 | 1.07 ± 0.03 | 1.85 ± 0.02 | 4.24 ± 0.11 | 9.58 ± 0.06 | 17.79 ± 0.16 |
| NPTN (9,4) | 0.64 ± 0.01 | 0.71 ± 0.02 | 1.09 ± 0.04 | 1.98 ± 0.04 | 4.41 ± 0.09 | 9.78 ± 0.16 | 18.14 ± 0.16 |
| PRC-NPTN (36,1) | 0.61 ± 0.03 | 0.70 ± 0.01 | 1.09 ± 0.04 | 1.80 ± 0.02 | 3.93 ± 0.02 | 9.09 ± 0.11 | 17.03 ± 0.13 |
| PRC-NPTN (18,2) | 0.57 ± 0.02 | 0.58 ± 0.01 | 0.77 ± 0.02 | 1.21 ± 0.07 | 2.74 ± 0.04 | 6.78 ± 0.12 | 13.79 ± 0.08 |
| PRC-NPTN (12,3) | 0.59 ± 0.03 | 0.58 ± 0.01 | 0.78 ± 0.02 | 1.26 ± 0.02 | 2.91 ± 0.05 | 7.13 ± 0.09 | 14.23 ± 0.07 |
| PRC-NPTN (9,4) | 0.63 ± 0.04 | 0.59 ± 0.02 | 0.81 ± 0.02 | 1.35 ± 0.02 | 3.12 ± 0.02 | 7.26 ± 0.02 | 14.62 ± 0.16 |

Table 6: Simultaneous Transformation Results: Test errors on MNIST with progressively extreme transformations with random rotations and random pixel shifts simultaneously. For PRC-NPTN and NPTN, the brackets indicate the number of channels in layer 1 and G. Note that for this experiment, CMP = |G|.
