SEAN: Image Synthesis with Semantic Region-Adaptive Normalization

Authors: Peihao Zhu, Rameen Abdal, Yipeng Qin, Peter Wonka

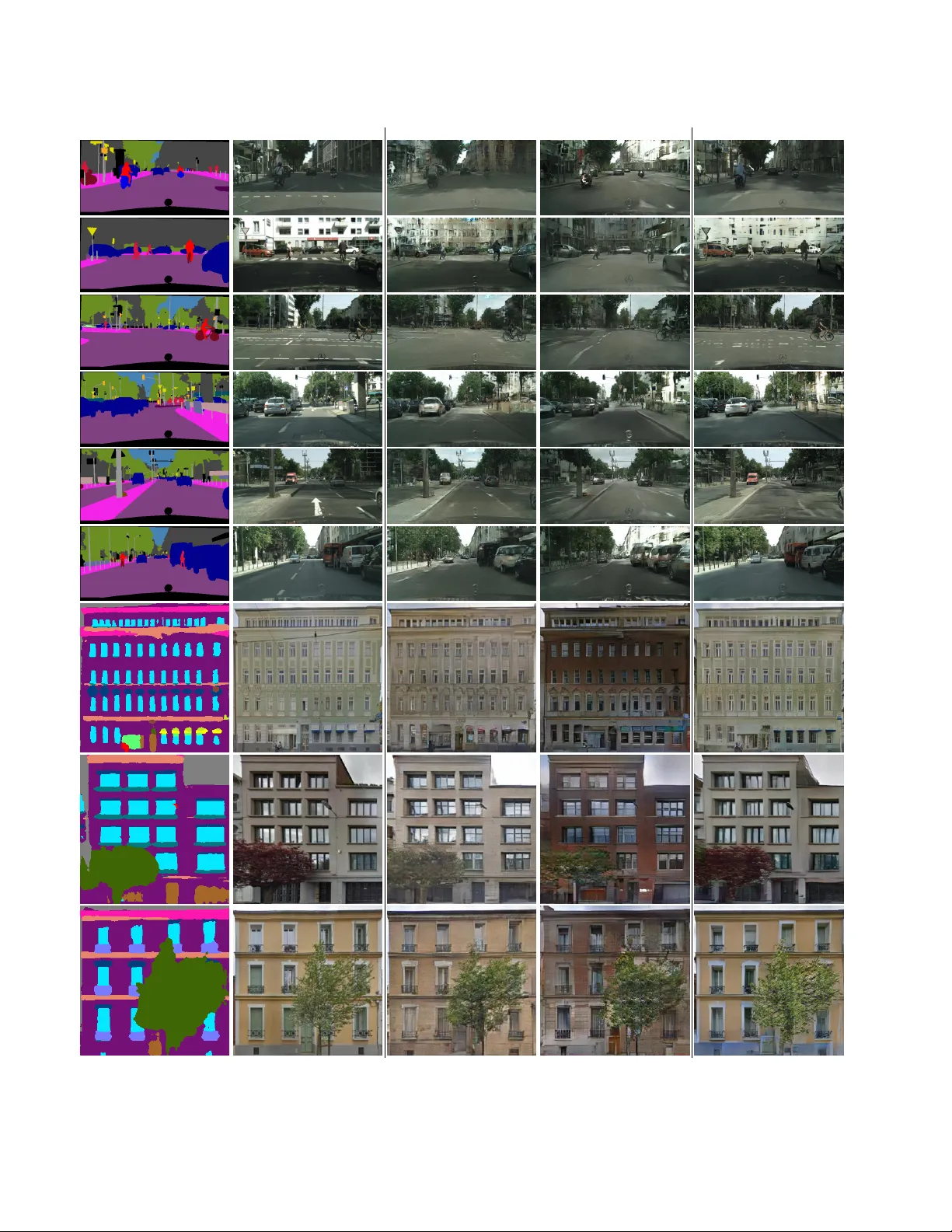

Peihao Zhu (KAUST), Rameen Abdal (KAUST), Yipeng Qin (Cardiff University), Peter Wonka (KAUST)

Figure 1: Face image editing controlled via style images and segmentation masks. (a) Source images. (b) Reconstruction of the source image; the segmentation mask is shown as a small inset. (c-f) Four separate edits; we show the image that provides new style information on top and show the part of the segmentation mask that gets edited as a small inset. The results of the successive edits are shown in rows two and three. The four edits change hair, mouth and eyes, skin tone, and background, respectively.

Abstract

We propose semantic region-adaptive normalization (SEAN), a simple but effective building block for Generative Adversarial Networks conditioned on segmentation masks that describe the semantic regions in the desired output image. Using SEAN normalization, we can build a network architecture that can control the style of each semantic region individually, e.g., we can specify one style reference image per region. SEAN is better suited to encode, transfer, and synthesize style than the best previous method in terms of reconstruction quality, variability, and visual quality. We evaluate SEAN on multiple datasets and report better quantitative metrics (e.g. FID, PSNR) than the current state of the art. SEAN also pushes the frontier of interactive image editing. We can interactively edit images by changing segmentation masks or the style for any given region. We can also interpolate styles from two reference images per region. Code: https://github.com/ZPdesu/SEAN

1. Introduction

In this paper we tackle the problem of synthetic image generation using conditional generative adversarial networks (cGANs).
Specifically, we would like to control the layout of the generated image using a segmentation mask that has labels for each semantic region and "add" realistic styles to each region according to their labels. For example, a face generation application would use region labels like eyes, hair, nose, mouth, etc., and a landscape painting application would use labels like water, forest, sky, clouds, etc. While multiple very good frameworks exist to tackle this problem [23, 8, 40, 47], the currently best architecture is SPADE [39] (also called GauGAN). Therefore, we decided to use SPADE as the starting point for our research. By analyzing the SPADE results, we found two shortcomings that we would like to improve upon in our work.

Figure 2: Editing sequence on the ADE20K dataset. (a) Source image, (b) reconstruction of the source image, (c-f) various edits using style images shown in the top row. The regions affected by the edits are shown as small insets.

First, SPADE uses only one style code to control the entire style of an image, which is not sufficient for high-quality synthesis or detailed control. For example, the segmentation mask of the desired output image can easily contain a labeled region that is not present in the segmentation mask of the input style image. In this case, the style of the missing region is undefined, which yields low-quality results. Further, SPADE does not allow using a different style input image for each region in the segmentation mask. Our first main idea is therefore to control the style of each region individually, i.e., our proposed architecture accepts one style image per region (or per region instance) as input.

Second, we believe that inserting style information only at the beginning of a network is not a good architecture choice.
Recent architectures [26, 32, 2] have demonstrated that higher-quality results can be obtained if style information is injected as normalization parameters in multiple layers of the network, e.g. using AdaIN [20]. However, none of these previous networks use style information to generate spatially varying normalization parameters. To alleviate this shortcoming, our second main idea is to design a normalization building block, called SEAN, that can use style input images to create spatially varying normalization parameters per semantic region. An important aspect of this work is that the spatially varying normalization parameters depend on the segmentation mask as well as the style input images.

Empirically, we provide an extensive evaluation of our method on several challenging datasets: CelebAMask-HQ [29, 25, 33], CityScapes [10], ADE20K [53], and our own Façades dataset. Quantitatively, we evaluate our work on a wide range of metrics including FID, PSNR, RMSE and segmentation performance; qualitatively, we show examples of synthesized images that can be evaluated by visual inspection. Our experimental results demonstrate a large improvement over the current state-of-the-art methods.

In summary, we introduce a new architecture building block SEAN that has the following advantages:

1. SEAN improves the quality of the synthesized images for conditional GANs. We compare to the state-of-the-art methods SPADE and Pix2PixHD and achieve clear improvements in quantitative metrics (e.g. FID score) and visual inspection.
2. SEAN improves the per-region style encoding, so that reconstructed images can be made more similar to the input style images as measured by PSNR and visual inspection.
3. SEAN allows the user to select a different style input image for each semantic region. This enables image editing capabilities that produce much higher quality and provide better control than the current state-of-the-art methods. Example image editing capabilities are interactive region-by-region style transfer and per-region style interpolation (see Figs. 1, 2, and 5).

2. Related Work

Generative Adversarial Networks. Since their introduction in 2014, Generative Adversarial Networks (GANs) [15] have been successfully applied to various image synthesis tasks, e.g. image inpainting [51, 11], image manipulation [55, 5, 1] and texture synthesis [30, 45, 12]. With continuous improvements in GAN architectures [41, 26, 39], loss functions [34, 4] and regularization [17, 37, 35], the images synthesized by GANs are becoming more and more realistic. For example, the human face images generated by StyleGAN [26] are of very high quality and are almost indistinguishable from photographs by untrained viewers. A traditional GAN uses noise vectors as the input and thus provides little user control. This motivated the development of conditional GANs (cGANs) [36], where users can control the synthesis by feeding the generator with conditioning information. Examples include class labels [38, 35, 6], text [42, 19, 49] and images [23, 56, 31, 47, 39]. Our work builds on conditional GANs with image inputs, which aim to tackle image-to-image translation problems.

Image-to-Image Translation. Image-to-image translation is an umbrella concept that can be used to describe many problems in computer vision and computer graphics. As a milestone, Isola et al. [23] first showed that image-conditional GANs can be used as a general solution to various image-to-image translation problems.
Since then, their method has been extended by several follow-up works to scenarios including: unsupervised learning [56, 31], few-shot learning [32], high-resolution image synthesis [47], multi-modal image synthesis [57, 22] and multi-domain image synthesis [9]. Among various image-to-image translation problems, semantic image synthesis is a particularly useful genre as it enables easy user control by modifying the input semantic layout image [29, 5, 16, 39]. To date, the SPADE [39] model (also called GauGAN) generates the highest quality results. In this paper, we will improve SPADE by introducing per-region style encoding.

Style Encoding. Style control is a vital component for various image synthesis and manipulation applications [13, 30, 21, 26, 1]. Style is generally not manually designed by a user, but extracted from reference images. In most existing methods, styles are encoded in three places: i) statistics of image features [13, 43]; ii) neural network weights (e.g. fast style transfer [24, 54, 50]); iii) parameters of a network normalization layer [21, 28]. When applied to style control, the first encoding method is usually time-consuming as it requires a slow optimization process to match the statistics of image features extracted by image classification networks [13]. The second approach runs in real time, but each neural network only encodes the style of selected reference images [24]. Thus, a separate neural network must be trained for each style image, which limits its application in practice. To date, the third approach is the best as it enables arbitrary style transfer in real time [21], and it is used by high-quality networks such as StyleGAN [26], FUNIT [32], and SPADE [39]. Our per-region style encoding also builds on this approach. We will show that our method generates higher quality results and enables more detailed user control.

3. Per Region Style Encoding and Control

Given an input style image and its corresponding segmentation mask, this section shows i) how to distill the per-region styles from the image according to the mask and ii) how to use the distilled per-region style codes to synthesize photo-realistic images.

3.1. How to Encode Style?

Per-Region Style Encoder. To extract per-region styles, we propose a novel style encoder network to distill the corresponding style code from each semantic region of an input image simultaneously (see the subnetwork Style Encoder in Figure 4 (A)). The output of the style encoder is a $512 \times s$ dimensional style matrix ST, where $s$ is the number of semantic regions in the input image. Each column of the matrix corresponds to the style code of a semantic region. Unlike the standard encoder built on a simple downscaling convolutional neural network, our per-region style encoder employs a "bottleneck" structure to remove the information irrelevant to styles from the input image. Incorporating the prior knowledge that styles should be independent of the shapes of semantic regions, we pass the intermediate feature maps (512 channels) generated by the network block TConv-Layers through a region-wise average pooling layer and reduce them to a collection of 512 dimensional vectors. As an implementation detail, we note that we use $s$ as the number of semantic labels in the dataset and set the columns corresponding to regions that do not exist in an input image to 0. As a variation of this architecture, we can also extract style per region instance for datasets that have instance labels, e.g., CityScapes.

3.2. How to Control Style?

With per-region style codes and a segmentation mask as inputs, we propose a new conditional normalization technique called Semantic Region-Adaptive Normalization (SEAN) to enable detailed control of styles for photo-realistic image synthesis.
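The region-wise average pooling that turns encoder features into the per-region style codes of Section 3.1, together with the broadcasting step that a SEAN block uses to paint those codes back onto their regions, can be sketched in plain Python. This is a hedged, illustrative sketch rather than the authors' implementation (their code is at the repository linked in the abstract): the nested-list tensor layout, the helper names `region_average_pool` and `broadcast_style`, and the toy sizes are hypothetical, a single image is processed instead of a batch, and the per-region 1 × 1 convolution A_ij that SEAN applies to the style codes before broadcasting is omitted.

```python
from typing import List

# Hypothetical nested-list stand-ins for tensors (one image, no batch axis).
FeatureMap = List[List[List[float]]]  # [channels][height][width]
LabelMask = List[List[int]]           # [height][width], labels 0..num_labels-1
StyleMatrix = List[List[float]]       # [channels][num_labels], one column per label


def region_average_pool(features: FeatureMap, mask: LabelMask,
                        num_labels: int) -> StyleMatrix:
    """Average the feature vectors of all pixels that share a label.

    Column j of the result is the style code of region j. Labels that do not
    occur in the mask keep an all-zero column, mirroring the convention of
    setting the columns of missing regions to 0.
    """
    channels = len(features)
    sums = [[0.0] * num_labels for _ in range(channels)]
    counts = [0] * num_labels
    for y, row in enumerate(mask):
        for x, label in enumerate(row):
            counts[label] += 1
            for c in range(channels):
                sums[c][label] += features[c][y][x]
    return [[sums[c][j] / counts[j] if counts[j] else 0.0
             for j in range(num_labels)]
            for c in range(channels)]


def broadcast_style(style: StyleMatrix, mask: LabelMask) -> FeatureMap:
    """Paint every pixel with the style code of its region.

    Returns a [channels][height][width] style map. The per-region 1x1
    transform A_ij that SEAN applies first is deliberately left out here.
    """
    return [[[style[c][label] for label in row] for row in mask]
            for c in range(len(style))]


# Toy example: 2 channels, a 2x2 image, 3 labels (label 2 is absent).
features = [
    [[1.0, 3.0], [5.0, 7.0]],  # channel 0
    [[2.0, 2.0], [0.0, 4.0]],  # channel 1
]
mask = [[0, 0], [1, 1]]
style_matrix = region_average_pool(features, mask, num_labels=3)
print(style_matrix)                         # [[2.0, 6.0, 0.0], [2.0, 2.0, 0.0]]
print(broadcast_style(style_matrix, mask))  # [[[2.0, 2.0], [6.0, 6.0]], [[2.0, 2.0], [2.0, 2.0]]]
```

Averaging over every pixel of a region discards the region's shape and keeps only its mean feature, which is the prior the style encoder is built around; in the actual network each column would hold 512 values rather than 2.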
Similar to existing normalization techniques [21, 39], SEAN works by modulating the scales and biases of generator activations. In contrast to all existing methods, the modulation parameters learnt by SEAN depend on both the style codes and the segmentation masks. In a SEAN block (Figure 3), a style map is first generated by broadcasting the style codes to their corresponding semantic regions according to the input segmentation mask. This style map and the input segmentation mask are then passed through two separate convolutional neural networks to learn two sets of modulation parameters. The weighted sums of these two sets are used as the final SEAN parameters to modulate the scales and biases of generator activations. The weights of these sums are also learned during training. The formal definition of SEAN is introduced as follows.

Figure 3: SEAN normalization. The inputs are the style matrix ST and the segmentation mask M. In the upper part, the style codes in ST undergo a per-style convolution and are then broadcast to their corresponding regions according to M to yield a style map. The style map is processed by conv layers to produce per-pixel normalization values $\gamma^s$ and $\beta^s$. The lower part (light blue layers) creates per-pixel normalization values using only the region information, similar to SPADE. The blending weights $\alpha_\gamma$ and $\alpha_\beta$ are learnable parameters.

Semantic Region-Adaptive Normalization (SEAN). A SEAN block has two inputs: a style matrix ST encoding per-region style codes and a segmentation mask M. Let $h$ denote the input activation of the current SEAN block in a deep convolutional network for a batch of $N$ samples.
Let $H$, $W$ and $C$ be the height, width and the number of channels in the activation map. The modulated activation value at site $(n \in N, c \in C, y \in H, x \in W)$ is given by

$$\gamma_{c,y,x}(\mathrm{ST}, M)\,\frac{h_{n,c,y,x} - \mu_c}{\sigma_c} + \beta_{c,y,x}(\mathrm{ST}, M) \tag{1}$$

where $h_{n,c,y,x}$ is the activation at the site before normalization, and the modulation parameters $\gamma_{c,y,x}$ and $\beta_{c,y,x}$ are weighted sums of $\gamma^s_{c,y,x}, \gamma^o_{c,y,x}$ and $\beta^s_{c,y,x}, \beta^o_{c,y,x}$, respectively (see Fig. 3 for the definition of the $\gamma$ and $\beta$ variables):

$$\begin{aligned}
\gamma_{c,y,x}(\mathrm{ST}, M) &= \alpha_\gamma\,\gamma^s_{c,y,x}(\mathrm{ST}) + (1 - \alpha_\gamma)\,\gamma^o_{c,y,x}(M) \\
\beta_{c,y,x}(\mathrm{ST}, M) &= \alpha_\beta\,\beta^s_{c,y,x}(\mathrm{ST}) + (1 - \alpha_\beta)\,\beta^o_{c,y,x}(M)
\end{aligned} \tag{2}$$

$\mu_c$ and $\sigma_c$ are the mean and standard deviation of the activation in channel $c$:

$$\mu_c = \frac{1}{NHW} \sum_{n,y,x} h_{n,c,y,x} \tag{3}$$

$$\sigma_c = \sqrt{\left(\frac{1}{NHW} \sum_{n,y,x} h_{n,c,y,x}^2\right) - \mu_c^2} \tag{4}$$

4. Experimental Setup

4.1. Network Architecture

Figure 4 (A) shows an overview of our generator network, which builds on that of SPADE [39]. Similar to [39], we employ a generator consisting of several SEAN ResNet blocks (SEAN ResBlk) with upsampling layers.

SEAN ResBlk. Figure 4 (B) shows the structure of our SEAN ResBlk, which consists of three convolutional layers whose scales and biases are modulated by three SEAN blocks, respectively. Each SEAN block takes two inputs: a set of per-region style codes ST and a semantic mask M. Note that both inputs are adjusted at the beginning: the input segmentation mask is downsampled to the same height and width as the feature maps in a layer; the input style codes from ST are transformed per region using a $1 \times 1$ conv layer $A_{ij}$. We observed that these initial transformations are indispensable components of the architecture because they transform the style codes according to the different roles of each neural network layer. For example, early layers might control the hair styles (e.g. wavy, straight) of human face images while later layers might refer to the lighting and color. In addition, we observed that adding noise to the input of SEAN can improve the quality of synthesized images. The scale of such noise is adjusted by a per-channel scaling factor $B$ that is learnt during training, similar to StyleGAN [26].

Figure 4: SEAN generator. (A) On the left, the style encoder takes an input image and outputs a style matrix ST. The generator on the right consists of interleaved SEAN ResBlocks and Upsampling layers. (B) A detailed view of a SEAN ResBlock used in (A).

4.2. Training and Inference

Training. We formulate the training as an image reconstruction problem. That is, the style encoder is trained to distill per-region style codes from the input images according to their corresponding segmentation masks. The generator network is trained to reconstruct the input images with the extracted per-region style codes and the corresponding segmentation masks as inputs. Following SPADE [39] and Pix2PixHD [47], the difference between input images and reconstructed images is measured by an overall loss function consisting of three loss terms: conditional adversarial loss, feature matching loss [47] and perceptual loss [24]. Details of the loss function are included in the supplementary material.

Inference. During inference, we take an arbitrary segmentation mask as the mask input and implement per-region style control by selecting a separate 512 dimensional style code for each semantic region as the style input. This enables a variety of high quality image synthesis applications, which will be introduced in the following section.

| Method | CelebAMask-HQ SSIM / RMSE / PSNR | CityScapes SSIM / RMSE / PSNR | ADE20K SSIM / RMSE / PSNR | Façades SSIM / RMSE / PSNR |
|---|---|---|---|---|
| Pix2PixHD [47] | 0.68 / 0.15 / 17.14 | 0.69 / 0.13 / 18.32 | 0.51 / 0.22 / 13.81 | 0.53 / 0.16 / 16.30 |
| SPADE [39] | 0.63 / 0.21 / 14.30 | 0.64 / 0.18 / 15.77 | 0.45 / 0.28 / 11.52 | 0.44 / 0.22 / 13.87 |
| Ours | 0.73 / 0.12 / 18.74 | 0.70 / 0.13 / 18.61 | 0.58 / 0.17 / 16.16 | 0.58 / 0.14 / 17.14 |

Table 1: Quantitative comparison of reconstruction quality. Our method outperforms current leading methods using similarity metrics SSIM, RMSE, and PSNR on all the datasets. For SSIM and PSNR, higher is better. For RMSE, lower is better.

5. Results

In the following we discuss quantitative and qualitative results of our framework.

Implementation details. Following SPADE [39], we apply Spectral Norm [37] in both the generator and discriminator. Additional normalization is performed by SEAN in the generator. We set the learning rates to 0.0001 and 0.0004 for the generator and discriminator, respectively [18]. For the optimizer, we choose Adam [27] with $\beta_1 = 0$, $\beta_2 = 0.999$. All the experiments are trained on 4 Tesla V100 GPUs. To get better performance, we use a synchronized version of batch normalization [52] in the SEAN normalization blocks.

Datasets. We use the following datasets in our experiments: 1) CelebAMask-HQ [29, 25, 33] contains 30,000 segmentation masks for the CelebA-HQ face image dataset. There are 19 different region categories. 2) ADE20K [53] contains 22,210 images annotated with 150 different region labels. 3) Cityscapes [10] contains 3500 images annotated with 35 different region labels. 4) Façades dataset. We use a dataset of 30,000 façade images collected from Google Street View [3].
Instead of manual annotation, the segmentation masks are automatically calculated by a pre-trained DeepLabV3+ Network [7].

We follow the recommended splits for each dataset and note that all images in the paper are generated from the test set only. Neither the segmentation masks nor the style images have been seen during training.

Metrics. We employ the following established metrics to compare our results to the state of the art: 1) segmentation accuracy measured by mean Intersection-over-Union (mIoU) and pixel accuracy (accu), 2) FID [18], 3) peak signal-to-noise ratio (PSNR), 4) structural similarity (SSIM) [48], 5) root mean square error (RMSE).

Figure 5: Style interpolation. We take a mask from a source image and reconstruct with two different style images (Style1 and Style2) that are very different from the source image. We then show interpolated results of the per-region style codes.

| Method | CelebAMask-HQ mIoU / accu / FID | CityScapes mIoU / accu / FID | ADE20K mIoU / accu / FID | Façades FID |
|---|---|---|---|---|
| Ground Truth | 73.14 / 94.38 / 9.41 | 66.21 / 93.69 / 32.34 | 39.38 / 78.76 / 14.51 | 14.40 |
| Pix2PixHD [47] | 76.12 / 95.76 / 23.69 | 50.35 / 92.09 / 83.24 | 22.78 / 73.32 / 43.0 | 22.34 |
| SPADE [39] | 77.01 / 95.93 / 22.43 | 56.01 / 93.13 / 60.51 | 35.37 / 79.37 / 34.65 | 24.04 |
| Ours | 75.69 / 95.69 / 17.66 | 57.88 / 93.59 / 50.38 | 34.59 / 77.16 / 24.84 | 19.82 |

Table 2: Quantitative comparison using semantic segmentation performance measured by mIoU and accu and generation performance measured by FID. Our method outperforms current leading methods in FID on all the datasets.

Quantitative comparisons. In order to facilitate a fair comparison to SPADE, we report reconstruction performance where only a single style image is employed. We train one network for each of the four datasets and report the previously described metrics in Tables 1 and 2. We choose SPADE [39] as the currently best state-of-the-art method and Pix2PixHD [47] as the second best method in the comparisons. Based on visual inspection of the results, we found that the FID score is most indicative of the visual quality of the results and that a lower FID score often (but not always) corresponds to better visual results. Generally, we can observe that our SEAN framework clearly beats the state of the art on all datasets in FID and in the reconstruction metrics (SSIM, RMSE, PSNR), while its segmentation scores (mIoU, accu) are comparable to those of SPADE.

| Method | SSIM | RMSE | PSNR | FID |
|---|---|---|---|---|
| Pix2PixHD [47] | 0.68 | 0.15 | 17.14 | 23.69 |
| SPADE [39] | 0.63 | 0.21 | 14.30 | 22.43 |
| SPADE++ | 0.67 | 0.16 | 16.71 | 20.80 |
| SEAN-level encoders | 0.74 | 0.11 | 19.70 | 18.17 |
| ResBlk-level encoders | 0.72 | 0.12 | 18.86 | 17.98 |
| unified encoder | 0.73 | 0.12 | 18.74 | 17.66 |
| w/ downsampling | 0.73 | 0.12 | 18.74 | 17.66 |
| w/o downsampling | 0.72 | 0.13 | 18.32 | 18.67 |
| w/ noises | 0.73 | 0.12 | 18.74 | 17.66 |
| w/o noises | 0.72 | 0.13 | 18.49 | 18.05 |

Table 3: Ablation study on the CelebAMask-HQ dataset. See supplementary materials for further details.

Qualitative results. We show visual comparisons for the four datasets in Fig. 6. We can observe that the quality difference between our work and the state of the art can be significant. For example, we notice that SPADE and Pix2PixHD cannot handle more extreme poses and older people. We conjecture that our network is better suited to learning more of the variability in the data because of our improved style encoding. Since a visual inspection of the results is very important for generative modeling, we would like to refer the reader to the supplementary materials and the accompanying video for more results.
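The reconstruction metrics in Tables 1 and 3 (RMSE, PSNR) and the segmentation scores in Table 2 (mIoU, accu) follow standard definitions, sketched below in plain Python. This is a hedged illustration, not the evaluation code behind the tables. Assumptions: images are flattened to equal-length sequences with values in [0, 1] (hence `max_value=1.0`), mIoU skips classes that occur in neither label map, and how the predicted label maps are obtained from generated images is outside the sketch. SSIM and FID are omitted because they need windowed local statistics and a pretrained feature network, respectively. All function names are hypothetical.

```python
import math
from typing import Sequence, Tuple


def rmse(pred: Sequence[float], target: Sequence[float]) -> float:
    """Root mean square error between two flattened images of equal size."""
    if len(pred) != len(target) or not pred:
        raise ValueError("images must be non-empty and of equal size")
    mse = sum((p - t) ** 2 for p, t in zip(pred, target)) / len(pred)
    return math.sqrt(mse)


def psnr(pred: Sequence[float], target: Sequence[float],
         max_value: float = 1.0) -> float:
    """Peak signal-to-noise ratio in dB: 20 * log10(max_value / RMSE).

    max_value=1.0 assumes pixel values scaled to [0, 1] (an assumption).
    Identical images have zero error and therefore infinite PSNR.
    """
    error = rmse(pred, target)
    return math.inf if error == 0.0 else 20.0 * math.log10(max_value / error)


def segmentation_scores(pred: Sequence[int], truth: Sequence[int],
                        num_classes: int) -> Tuple[float, float]:
    """Return (pixel accuracy, mean IoU) for two flattened label maps.

    Classes that occur in neither map are skipped when averaging IoU,
    which is one common convention and an assumption here.
    """
    if len(pred) != len(truth) or not pred:
        raise ValueError("label maps must be non-empty and of equal size")
    pairs = list(zip(pred, truth))
    accuracy = sum(p == t for p, t in pairs) / len(pairs)
    ious = []
    for k in range(num_classes):
        intersection = sum(p == k and t == k for p, t in pairs)
        union = sum(p == k or t == k for p, t in pairs)
        if union:
            ious.append(intersection / union)
    mean_iou = sum(ious) / len(ious) if ious else 0.0
    return accuracy, mean_iou


reconstruction = [0.0, 0.0, 0.0, 0.0]
original = [0.5, 0.5, 0.5, 0.5]
print(rmse(reconstruction, original))            # 0.5
print(round(psnr(reconstruction, original), 2))  # 6.02
print(segmentation_scores([0, 0, 1, 1], [0, 1, 1, 1], num_classes=2))
```

If PSNR is computed per image and then averaged over a dataset, the averaged PSNR and RMSE values need not rank methods identically, because the logarithm is applied before averaging.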
Our per-region style encoding also enables new image editing operations: iterative image editing with per-region style control (see Figs. 1 and 2), style interpolation and style crossover (see Figs. 5 and 7).

Figure 6: Visual comparison of semantic image synthesis results on the CelebAMask-HQ, ADE20K, CityScapes and Façades datasets. We compare Pix2PixHD, SPADE, and our method (columns: label, ground truth, Pix2PixHD [47], SPADE [39], ours). See supplementary materials for further details.

Figure 7: Style crossover. In addition to style interpolation (bottom row), we can perform crossover by selecting different styles per ResBlk. We show two transitions in the top two rows. The blue/orange bars on top of the images indicate which styles are used by the six ResBlks. We can observe that earlier layers are responsible for larger features and later layers mainly determine the color scheme.

Variations of SEAN generator (Ablation studies). In Table 3, we compare many variations of our architecture with previous work on the CelebAMask-HQ dataset.

According to our analysis, Pix2PixHD has a better style extraction subnetwork than SPADE (because its PSNR reconstruction values are better), but SPADE has a better generator subnetwork (because its FID scores are better). We therefore build another network that combines the style encoder from Pix2PixHD with the generator from SPADE and call this architecture SPADE++. We can observe that SPADE++ improves SPADE slightly, but all our architecture variations still have better FID and PSNR scores.

To evaluate our design choices, we report the performance of variations of our encoder. First, we compare three variants to encode style: SEAN-level encoder, ResBlk-level encoder, and unified encoder. A fundamental design choice is how to split the style computation into two parts: a shared computation in the style encoder and a per-layer computation in each SEAN block. The first two variants perform all style extraction per layer, while the unified encoder is the architecture described in the main part of the paper. While the other two encoders have better reconstruction performance, they lead to worse (higher) FID scores. Based on visual inspection, we also confirmed that the unified encoder leads to better visual quality. This is especially noticeable for difficult inputs (see supplementary materials). Second, we evaluate whether downsampling in the style encoder is a good idea by comparing a style encoder with a bottleneck (with downsampling) to another encoder that uses less downsampling. This test confirms that introducing a bottleneck in the style encoder is a good idea. Lastly, we test the effect of the learnable noise. We found that the learnable noise helps both similarity (PSNR) and FID performance. More details of the alternative architectures are provided in the supplementary materials.

6. Conclusion

We propose semantic region-adaptive normalization (SEAN), a simple but effective building block for Generative Adversarial Networks (GANs) conditioned on segmentation masks that describe the semantic regions in the desired output image. Our main idea is to extend SPADE, the currently best network, to control the style of each semantic region individually, e.g. we can specify one style reference image per semantic region. We introduce a new building block, SEAN normalization, that can extract style from a given style image for a semantic region and process the style information to bring it into the form of spatially varying normalization parameters. We evaluate SEAN on multiple datasets and report better quantitative metrics (e.g. FID, PSNR) than the current state of the art. While SEAN is designed to specify style for each region independently, we also achieve substantial improvements in the basic setting where only one style image is provided for all regions.
In summary, SEAN is better suited to encode, transfer, and synthesize style than the best previous method in terms of reconstruction quality, variability, and visual quality. SEAN also pushes the frontier of interactive image editing. In future work, we plan to extend SEAN to processing meshes, point clouds, and textures on surfaces.

Acknowledgement

This work was supported by the KAUST Office of Sponsored Research (OSR) under Award No. OSR-CRG2018-3730.

References

[1] Rameen Abdal, Yipeng Qin, and Peter Wonka. Image2stylegan: How to embed images into the stylegan latent space? In Proceedings of the IEEE International Conference on Computer Vision, pages 4432–4441, 2019.
[2] Yazeed Alharbi, Neil Smith, and Peter Wonka. Latent filter scaling for multimodal unsupervised image-to-image translation. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pages 1458–1466, 2019.
[3] Dragomir Anguelov, Carole Dulong, Daniel Filip, Christian Frueh, Stéphane Lafon, Richard Lyon, Abhijit Ogale, Luc Vincent, and Josh Weaver. Google street view: Capturing the world at street level. Computer, 43(6):32–38, 2010.
[4] Martin Arjovsky, Soumith Chintala, and Léon Bottou. Wasserstein gan. arXiv preprint arXiv:1701.07875, 2017.
[5] David Bau, Hendrik Strobelt, William Peebles, Jonas Wulff, Bolei Zhou, Jun-Yan Zhu, and Antonio Torralba. Semantic photo manipulation with a generative image prior. ACM Transactions on Graphics (Proceedings of ACM SIGGRAPH), 38(4), 2019.
[6] Andrew Brock, Jeff Donahue, and Karen Simonyan. Large scale gan training for high fidelity natural image synthesis, 2018.
[7] Liang-Chieh Chen, Yukun Zhu, George Papandreou, Florian Schroff, and Hartwig Adam. Encoder-decoder with atrous separable convolution for semantic image segmentation, 2018.
[8] Qifeng Chen and Vladlen Koltun. Photographic image synthesis with cascaded refinement networks, 2017.
[9] Yunjey Choi, Minje Choi, Munyoung Kim, Jung-Woo Ha, Sunghun Kim, and Jaegul Choo. Stargan: Unified generative adversarial networks for multi-domain image-to-image translation, 2017.
[10] Marius Cordts, Mohamed Omran, Sebastian Ramos, Timo Rehfeld, Markus Enzweiler, Rodrigo Benenson, Uwe Franke, Stefan Roth, and Bernt Schiele. The cityscapes dataset for semantic urban scene understanding. In Proc. of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), 2016.
[11] Ugur Demir and Gozde Unal. Patch-based image inpainting with generative adversarial networks. arXiv preprint arXiv:1803.07422, 2018.
[12] Anna Frühstück, Ibraheem Alhashim, and Peter Wonka. Tilegan. ACM Transactions on Graphics, 38(4):111, Jul 2019.
[13] Leon A Gatys, Alexander S Ecker, and Matthias Bethge. A neural algorithm of artistic style. arXiv, Aug 2015.
[14] Xavier Glorot and Yoshua Bengio. Understanding the difficulty of training deep feedforward neural networks. In Yee Whye Teh and Mike Titterington, editors, Proceedings of the Thirteenth International Conference on Artificial Intelligence and Statistics, volume 9 of Proceedings of Machine Learning Research, pages 249–256, Chia Laguna Resort, Sardinia, Italy, 13–15 May 2010. PMLR.
[15] Ian J. Goodfellow, Jean Pouget-Abadie, Mehdi Mirza, Bing Xu, David Warde-Farley, Sherjil Ozair, Aaron Courville, and Yoshua Bengio. Generative adversarial networks, 2014.
[16] Shuyang Gu, Jianmin Bao, Hao Yang, Dong Chen, Fang Wen, and Lu Yuan. Mask-guided portrait editing with conditional gans. In The IEEE Conference on Computer Vision and Pattern Recognition (CVPR), June 2019.
[17] Ishaan Gulrajani, Faruk Ahmed, Martin Arjovsky, Vincent Dumoulin, and Aaron C Courville. Improved training of wasserstein gans. In Advances in Neural Information Processing Systems, pages 5767–5777, 2017.
[18] Martin Heusel, Hubert Ramsauer, Thomas Unterthiner, Bernhard Nessler, and Sepp Hochreiter. Gans trained by a two time-scale update rule converge to a local nash equilibrium, 2017.
[19] Seunghoon Hong, Dingdong Yang, Jongwook Choi, and Honglak Lee. Inferring semantic layout for hierarchical text-to-image synthesis. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pages 7986–7994, 2018.
[20] Xun Huang and Serge Belongie. Arbitrary style transfer in real-time with adaptive instance normalization, 2017.
[21] Xun Huang and Serge Belongie. Arbitrary style transfer in real-time with adaptive instance normalization. In ICCV, 2017.
[22] Xun Huang, Ming-Yu Liu, Serge Belongie, and Jan Kautz. Multimodal unsupervised image-to-image translation, 2018.
[23] Phillip Isola, Jun-Yan Zhu, Tinghui Zhou, and Alexei A. Efros. Image-to-image translation with conditional adversarial networks, 2016.
[24] Justin Johnson, Alexandre Alahi, and Li Fei-Fei. Perceptual losses for real-time style transfer and super-resolution, 2016.
[25] Tero Karras, Timo Aila, Samuli Laine, and Jaakko Lehtinen. Progressive growing of gans for improved quality, stability, and variation, 2017.
[26] Tero Karras, Samuli Laine, and Timo Aila. A style-based generator architecture for generative adversarial networks, 2018.
[27] Diederik P. Kingma and Jimmy Ba. Adam: A method for stochastic optimization, 2014.
[28] Dmytro Kotovenko, Artsiom Sanakoyeu, Sabine Lang, and Bjorn Ommer. Content and style disentanglement for artistic style transfer. In The IEEE International Conference on Computer Vision (ICCV), October 2019.
[29] Cheng-Han Lee, Ziwei Liu, Lingyun Wu, and Ping Luo. Maskgan: Towards diverse and interactive facial image manipulation. arXiv preprint, 2019.
[30] Chuan Li and Michael Wand. Precomputed real-time texture synthesis with markovian generative adversarial networks. In Computer Vision - ECCV 2016 - 14th European Conference, Amsterdam, The Netherlands, October 11-14, 2016, Proceedings, Part III, 2016.
[31] Ming-Yu Liu, Thomas Breuel, and Jan Kautz. Unsupervised image-to-image translation networks, 2017.
[32] Ming-Yu Liu, Xun Huang, Arun Mallya, Tero Karras, Timo Aila, Jaakko Lehtinen, and Jan Kautz. Few-shot unsupervised image-to-image translation, 2019.
[33] Ziwei Liu, Ping Luo, Xiaogang Wang, and Xiaoou Tang. Deep learning face attributes in the wild. In Proceedings of International Conference on Computer Vision (ICCV), December 2015.
[34] Xudong Mao, Qing Li, Haoran Xie, Raymond Y.K. Lau, Zhen Wang, and Stephen Paul Smolley. Least squares generative adversarial networks. 2017 IEEE International Conference on Computer Vision (ICCV), Oct 2017.
[35] Lars Mescheder, Andreas Geiger, and Sebastian Nowozin. Which training methods for gans do actually converge?, 2018.
[36] Mehdi Mirza and Simon Osindero. Conditional generative adversarial nets. arXiv preprint arXiv:1411.1784, 2014.
[37] Takeru Miyato, Toshiki Kataoka, Masanori Koyama, and Yuichi Yoshida. Spectral normalization for generative adversarial networks, 2018.
[38] Takeru Miyato and Masanori Koyama. cgans with projection discriminator, 2018.
[39] Taesung Park, Ming-Yu Liu, Ting-Chun Wang, and Jun-Yan Zhu. Semantic image synthesis with spatially-adaptive normalization. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2019.
[40] Xiaojuan Qi, Qifeng Chen, Jiaya Jia, and Vladlen Koltun. Semi-parametric image synthesis, 2018.
[41] Alec Radford, Luke Metz, and Soumith Chintala. Unsupervised representation learning with deep convolutional generative adversarial networks. arXiv preprint arXiv:1511.06434, 2015.
[42] Scott Reed, Zeynep Akata, Xinchen Yan, Lajanugen Logeswaran, Bernt Schiele, and Honglak Lee. Generative adversarial text to image synthesis. arXiv preprint arXiv:1605.05396, 2016.
[43] Omry Sendik and Daniel Cohen-Or. Deep correlations for texture synthesis. ACM Transactions on Graphics (TOG), 36(5):161, 2017.
[44] Karen Simonyan and Andrew Zisserman. Very deep convolutional networks for large-scale image recognition, 2014.
[45] Ron Slossberg, Gil Shamai, and Ron Kimmel. High quality facial surface and texture synthesis via generative adversarial networks. In European Conference on Computer Vision, pages 498–513. Springer, 2018.
[46] Dmitry Ulyanov, Andrea Vedaldi, and Victor Lempitsky. Instance normalization: The missing ingredient for fast stylization, 2016.
[47] Ting-Chun Wang, Ming-Yu Liu, Jun-Yan Zhu, Andrew Tao, Jan Kautz, and Bryan Catanzaro. High-resolution image synthesis and semantic manipulation with conditional gans. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2018.
[48] Zhou Wang, Alan C. Bovik, Hamid R. Sheikh, and Eero P. Simoncelli. Image quality assessment: From error visibility to structural similarity. IEEE Transactions on Image Processing, 13(4):600–612, 2004.
[49] Tao Xu, Pengchuan Zhang, Qiuyuan Huang, Han Zhang, Zhe Gan, Xiaolei Huang, and Xiaodong He. Attngan: Fine-grained text to image generation with attentional generative adversarial networks. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pages 1316–1324, 2018.
[50] Shuai Yang, Zhangyang Wang, Zhaowen Wang, Ning Xu, Jiaying Liu, and Zongming Guo. Controllable artistic text style transfer via shape-matching gan. In The IEEE International Conference on Computer Vision (ICCV), October 2019.
[51] Jiahui Yu, Zhe Lin, Jimei Yang, Xiaohui Shen, Xin Lu, and Thomas S Huang. Generative image inpainting with contextual attention. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pages 5505–5514, 2018.
[52] Hang Zhang, Kristin Dana, Jianping Shi, Zhongyue Zhang, Xiaogang Wang, Ambrish Tyagi, and Amit Agrawal. Context encoding for semantic segmentation. In The IEEE Conference on Computer Vision and Pattern Recognition (CVPR), June 2018.
[53] Bolei Zhou, Hang Zhao, Xavier Puig, Sanja Fidler, Adela Barriuso, and Antonio Torralba. Scene parsing through ADE20K dataset. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2017.
[54] Yang Zhou, Zhen Zhu, Xiang Bai, Dani Lischinski, Daniel Cohen-Or, and Hui Huang. Non-stationary texture synthesis by adversarial expansion. arXiv preprint, 2018.
[55] Jun-Yan Zhu, Philipp Krähenbühl, Eli Shechtman, and Alexei A. Efros. Generative visual manipulation on the natural image manifold. In Proceedings of European Conference on Computer Vision (ECCV), 2016.
[56] Jun-Yan Zhu, Taesung Park, Phillip Isola, and Alexei A. Efros. Unpaired image-to-image translation using cycle-consistent adversarial networks, 2017.
[57] Jun-Yan Zhu, Richard Zhang, Deepak Pathak, Trevor Darrell, Alexei A Efros, Oliver Wang, and Eli Shechtman. Toward multimodal image-to-image translation. In Advances in Neural Information Processing Systems, 2017.

A. Additional Implementation Details
Generator. Our generator consists of seven SEAN ResBlks, each followed by a nearest-neighbor upsampling layer. Note that we inject the style codes ST only into the first 6 SEAN ResBlks, whereas the other inputs are injected into all SEAN ResBlks. The architecture of our generator is shown in Figure 8.
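To make the flow of the style codes concrete, the sketch below shows, under simplifying assumptions, how per-region codes could be produced by region-wise average pooling (the last stage of the style encoder, see Figure 11) and then spread back over the mask before entering a SEAN ResBlk. It assumes NumPy arrays, a mask of integer region labels at the same H × W resolution as the feature map, and one style-code row per region. The helper names (`region_average_pool`, `broadcast_style_codes`, `block_receives_style`) are hypothetical, and the per-style convolutions, noise inputs, and learned normalization parameters of a real SEAN ResBlk are deliberately omitted. Only the channel widths and the "first 6 blocks" rule come from the text and Figure 8.

```python
import numpy as np

# Channel widths of the seven SEAN ResBlks, as listed in Figure 8.
RESBLK_CHANNELS = [1024, 1024, 1024, 512, 256, 128, 64]
# Only the first 6 SEAN ResBlks receive the style codes ST.
NUM_STYLED_BLOCKS = 6


def region_average_pool(features, mask, num_regions):
    """Average a (C, H, W) feature map over each region of an (H, W) label mask.

    Returns a (num_regions, C) array of style codes. Regions absent from the
    mask get an all-zero code (an assumption of this sketch).
    """
    codes = np.zeros((num_regions, features.shape[0]))
    for region in range(num_regions):
        pixels = mask == region
        if pixels.any():
            # Boolean indexing selects a (C, n_pixels) slice; average over pixels.
            codes[region] = features[:, pixels].mean(axis=1)
    return codes


def broadcast_style_codes(style_codes, mask):
    """Copy each region's style code to every pixel of that region.

    style_codes: (num_regions, C); mask: (H, W) integer labels.
    Returns a (C, H, W) spatial style map.
    """
    return style_codes[mask].transpose(2, 0, 1)


def block_receives_style(block_index):
    """True if the SEAN ResBlk at this 0-based index is fed the style codes ST."""
    return block_index < NUM_STYLED_BLOCKS


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    mask = np.array([[0, 0, 1], [2, 2, 1]])
    features = rng.standard_normal((4, 2, 3))
    st = region_average_pool(features, mask, num_regions=3)
    style_map = broadcast_style_codes(st, mask)
    print(st.shape, style_map.shape)
    print([block_receives_style(i) for i in range(len(RESBLK_CHANNELS))])
```

Pooling and broadcasting are inverses on region-constant maps, which is why the per-region codes can be edited independently and then re-inserted at any mask layout.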
[Figure 8 layers, top to bottom: 3×3 Conv-1024; SEAN ResBlk (1024), Upsample(2); SEAN ResBlk (1024), Upsample(2); SEAN ResBlk (1024), Upsample(2); SEAN ResBlk (512), Upsample(2); SEAN ResBlk (256), Upsample(2); SEAN ResBlk (128), Upsample(2); SEAN ResBlk (64), Upsample(2); 3×3 Conv-3, Tanh.]
Figure 8: SEAN Generator. The style codes ST and the segmentation mask are passed to the generator through the proposed SEAN ResBlks. The number of feature map channels is shown in parentheses after each SEAN ResBlk. To better illustrate the architecture, we omit the learnable noise inputs and the per-style Conv layers in this figure. These details are shown in Fig. 3 of the main paper (see A_ij and B_ij).
Discriminator. Following SPADE [39] and Pix2PixHD [47], we employ two multi-scale discriminators with instance normalization (IN) [46] and Leaky ReLU (LReLU). Similar to SPADE, we apply spectral normalization [37] to all the convolutional layers of the discriminator. The architecture of our discriminator is shown in Figure 9.
Style Encoder. Our style encoder consists of a "bottleneck" convolutional neural network and a region-wise average pooling layer (Figure 11). The inputs are the style image and the corresponding segmentation mask, and the outputs are the style codes ST.
[Figure 9 layers, input to output: Concat (segmentation mask, image); 4×4 ↓2 Conv-64, LReLU; 4×4 ↓2 Conv-128, IN, LReLU; 4×4 ↓2 Conv-256, IN, LReLU; 4×4 Conv-512, IN, LReLU; 4×4 Conv-1.]
Figure 9: Following SPADE and Pix2PixHD, our discriminator takes the concatenation of a segmentation mask and a style image as input. The loss is calculated in the same way as in PatchGAN [23].
Loss function. The design of our loss function is inspired by those of SPADE and Pix2PixHD and contains three components:
(1) Adversarial loss.
Let $E$ be the style encoder, $G$ the SEAN generator, $D_1$ and $D_2$ two discriminators at different scales [47], $R$ a given style image, and $M$ the corresponding segmentation mask of $R$. We formulate the conditional adversarial learning part of our loss function as:
$$\min_{E,G}\max_{D_1,D_2}\sum_{k=1,2}\mathcal{L}_{GAN}(E,G,D_k) \tag{5}$$
Specifically, $\mathcal{L}_{GAN}$ is built with the hinge loss:
$$\mathcal{L}_{GAN} = \mathbb{E}\left[\max(0,\,1 - D_k(R, M))\right] + \mathbb{E}\left[\max(0,\,1 + D_k(G(ST, M), M))\right] \tag{6}$$
where $ST$ denotes the style codes of $R$ extracted by $E$ under the guidance of $M$:
$$ST = E(R, M) \tag{7}$$
(2) Feature matching loss [47]. Let $T$ be the total number of layers in discriminator $D_k$, and let $D_k^{(i)}$ and $N_i$ be the output feature maps and the number of elements of the $i$-th layer of $D_k$, respectively. We denote the feature matching loss term $\mathcal{L}_{FM}$ as:
$$\mathcal{L}_{FM} = \mathbb{E}\sum_{i=1}^{T}\frac{1}{N_i}\left[\left\|D_k^{(i)}(R, M) - D_k^{(i)}(G(ST, M), M)\right\|_1\right] \tag{8}$$
(3) Perceptual loss [24]. Let $N$ be the total number of layers used to calculate the perceptual loss, $F^{(i)}$ the output feature maps of the $i$-th layer of the VGG network [44], and $M_i$ the number of elements of $F^{(i)}$. We denote the perceptual loss $\mathcal{L}_{percept}$ as:
$$\mathcal{L}_{percept} = \mathbb{E}\sum_{i=1}^{N}\frac{1}{M_i}\left[\left\|F^{(i)}(R) - F^{(i)}(G(ST, M))\right\|_1\right] \tag{9}$$
[Figure 10 panels: a SEAN ResBlk with SEAN-level encoders, in which each of its three SEAN, ReLU, 3×3 Conv units receives its own style codes ($ST_{i1}$, $ST_{i2}$, $ST_{i3}$); and a SEAN ResBlk with a single ResBlk-level encoder, whose style codes $ST_i$ are shared by all three units. The units use parameters $A_{i1}, A_{i2}, A_{i3}$ and $B_{i1}, B_{i2}, B_{i3}$.]
Figure 10: Detailed usage of the SEAN-level encoder and the ResBlk-level encoder within a SEAN ResBlk.
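Once the discriminator and VGG features are available, the three loss terms reduce to a few array operations. The sketch below assumes those features have already been computed and are passed in as lists of NumPy arrays (one array per layer, and one list per discriminator scale), and it replaces each expectation with a mean over the given outputs. The function names are hypothetical. The discriminator loss follows Eq. (6); the feature matching and perceptual terms share the per-element L1 form of Eqs. (8) and (9). The generator-side hinge term `-mean(D(fake))` is the usual counterpart of Eq. (6) in SPADE-style training and is an assumption here, not something stated in this appendix. The weights default to λ1 = λ2 = 10, the setting reported with Eq. (10).

```python
import numpy as np


def d_hinge_loss(d_real, d_fake):
    """Eq. (6) for one discriminator scale, given patch outputs on real and generated pairs."""
    return np.mean(np.maximum(0.0, 1.0 - d_real)) + np.mean(np.maximum(0.0, 1.0 + d_fake))


def g_hinge_loss(d_fake):
    """Generator side of the hinge objective (assumed SPADE-style convention)."""
    return -np.mean(d_fake)


def l1_feature_loss(feats_real, feats_fake):
    """Sum over layers of ||a - b||_1 divided by the layer's element count (Eqs. (8), (9))."""
    return sum(np.abs(a - b).sum() / a.size for a, b in zip(feats_real, feats_fake))


def generator_objective(d_fake_per_scale, fm_real_per_scale, fm_fake_per_scale,
                        vgg_real, vgg_fake, lambda1=10.0, lambda2=10.0):
    """Weighted sum of adversarial, feature matching and perceptual terms (Eq. (10)).

    d_fake_per_scale: discriminator outputs on generated pairs, one array per scale.
    fm_*_per_scale: per-scale lists of intermediate discriminator feature maps.
    vgg_real / vgg_fake: lists of VGG feature maps for R and G(ST, M).
    """
    adv = sum(g_hinge_loss(d) for d in d_fake_per_scale)
    fm = sum(l1_feature_loss(r, f) for r, f in zip(fm_real_per_scale, fm_fake_per_scale))
    percept = l1_feature_loss(vgg_real, vgg_fake)
    return adv + lambda1 * fm + lambda2 * percept
```

E and G would minimize `generator_objective`, while each D_k minimizes `d_hinge_loss`; alternating these two updates is the usual way the min-max in Eq. (5) is optimized in practice.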
[Figure 11 layers, input to output: 3×3 Conv-32, LReLU; 3×3 ↓2 Conv-64, LReLU; 3×3 ↓2 Conv-128, LReLU; 3×3 ↑2 TConv-256, LReLU; 3×3 Conv-512, Tanh; region-wise average pooling, producing ST.]
Figure 11: Our style encoder takes the style image and the segmentation mask as inputs to generate the style codes ST.
The final loss function used in our experiments combines the three loss terms above:
$$\min_{E,G}\max_{D_1,D_2}\sum_{k=1,2}\mathcal{L}_{GAN} + \lambda_1\sum_{k=1,2}\mathcal{L}_{FM} + \lambda_2\mathcal{L}_{percept} \tag{10}$$
Following SPADE and Pix2PixHD, we set $\lambda_1 = \lambda_2 = 10$ in our experiments.
Training details. We train for 50 epochs on all the datasets mentioned in the main paper. During training, all input images are resized to a resolution of 256 × 256, except for the Cityscapes dataset [10], whose images are resized to 512 × 256. We use Glorot initialization [14] to initialize our network weights.

B. Additional Experimental Details
Table 3 (main paper). Supplementing rows 5 and 6 of Table 3 in the main paper, Figure 10 shows how the two variants of style encoders (i.e., the SEAN-level encoder and the ResBlk-level encoder) are used in a SEAN ResBlk. Specifically, the SEAN-level encoders extract different style codes for each SEAN block, while the style codes extracted by the ResBlk-level encoder are shared by all SEAN blocks within a SEAN ResBlk.
Figure 6 (main paper). We used the ground truth (second column of Figure 6 in the main paper) as the style input for all methods. For Pix2PixHD, we generate the results by (i) encoding the style image into a style vector, and (ii) broadcasting the style vector and concatenating it to the mask input of the generator.

C. Justification of Encoder Choice
Figure 12 shows that the images generated by the unified encoder are of better visual quality than those generated by the SEAN-level encoder, especially for challenging inputs (e.g., extreme poses, unlabeled regions), which justifies our choice of the unified encoder.
[Figure 12 columns: Label, Style Image, Encoder1, Encoder2.]
Figure 12: Encoder choice justification. Encoder1 is the SEAN-level encoder and Encoder2 is the unified encoder. The SEAN-level encoder is more sensitive to the poses and unlabeled parts of the style image due to overfitting; the unified encoder yields more robust style transfer results.

D. Additional Analysis
ST-branch vs. mask-branch. The contributions of the ST-branch and the mask-branch are determined by a linear combination (parameters $\alpha_\beta$ and $\alpha_\gamma$). The learned parameters typically lie in the range 0.35 to 0.7, meaning that both branches actively contribute to the result. See Fig. 13 for one example. It is possible to drop the mask-branch completely, but the results get worse. Our initial intuition was that the mask-branch provides the rough structure and the ST-branch adds details. However, the interaction turns out to be quite complicated and cannot be understood by just varying the mixing parameter.
Figure 13: Contributions of the ST-branch and the mask-branch for each SEAN normalization block. The pie charts and SEAN normalization blocks are in one-to-one correspondence.
Extreme cases. To further demonstrate SEAN's power in texture transfer, we show that highly complex textures from an artistic image can be transferred to a human face (Fig. 14).
Figure 14: Complex texture transfer.
In addition, our method is flexible enough to let users paint a semantic region at an arbitrary, even spatially unreasonable, location (Fig. 15).
Figure 15: Spatially-flexible painting. Our method allows users to put eyes anywhere on a face.

E. User Study
We conducted a user preference study with Amazon Mechanical Turk (AMT) to compare our reconstruction results against existing methods (Table 4). Specifically, we created 600 questions for AMT workers to answer. In the end, our questions were answered by 575 AMT workers.
For each question, we show the user a set of 5 images: a ground truth image, its corresponding segmentation mask, and 3 reconstructed images obtained by our method, Pix2PixHD [47], and SPADE [39]. The user is then asked to select the reconstructed image that is closest to the ground truth and has the fewest artifacts. To mitigate the effect of image order and ensure a fair comparison, we randomly picked 100 image sets and created the 600 questions by enumerating all 6 possible orders of the 3 reconstructed images in each set.

Method            Preference (%)
Pix2PixHD [47]    23.17
SPADE [39]        8.83
Ours              68.00

Table 4: User preference study (CelebAMask-HQ dataset). Our method significantly outperforms Pix2PixHD [47] and SPADE [39].

F. Additional Results
To demonstrate that the proposed per-region style control method can serve as the foundation of highly flexible image-editing software, we designed an interactive UI for a demo. Our UI enables high-quality image synthesis by transferring per-region styles from various images to an arbitrary segmentation mask. New styles can be created by interpolating existing styles. Please find the recorded videos of our demo in the supplementary material.
Figure 16 shows additional style transfer results on the CelebAMask-HQ [29, 25, 33] dataset. Figures 17 and 18 show additional style interpolation results on the CelebAMask-HQ and ADE20K datasets.
Figures 19, 20, 21, and 22 show additional image reconstruction results of our method, Pix2PixHD, and SPADE on the CelebAMask-HQ [29, 25, 33], ADE20K [53], Cityscapes [10], and our Façades datasets, respectively. Our reconstructions are of much higher quality than those of Pix2PixHD and SPADE.
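The style interpolation offered by the demo UI can be pictured as a blend in style-code space. The sketch below assumes simple linear interpolation between two sets of per-region style codes (one row per region, as produced by the style encoder) and lets the user restrict the blend to selected regions. The function name and the linear scheme are illustrative assumptions; this appendix does not specify how the demo interpolates.

```python
import numpy as np


def interpolate_styles(codes_a, codes_b, t, regions=None):
    """Linearly blend two per-region style-code sets (hypothetical helper).

    codes_a, codes_b: (num_regions, code_dim) arrays from two style images.
    t: blend weight in [0, 1]; 0 keeps codes_a, 1 gives codes_b.
    regions: optional region indices to blend; all other regions keep codes_a.
    """
    if not 0.0 <= t <= 1.0:
        raise ValueError("t must lie in [0, 1]")
    blended = codes_a.copy()
    rows = slice(None) if regions is None else list(regions)
    blended[rows] = (1.0 - t) * codes_a[rows] + t * codes_b[rows]
    return blended


if __name__ == "__main__":
    style1 = np.zeros((3, 4))
    style2 = np.ones((3, 4))
    # Five interpolation steps applied to region 1 only (a hypothetical label index).
    for t in np.linspace(0.0, 1.0, 5):
        print(t, interpolate_styles(style1, style2, t, regions=[1])[1, 0])
```

Feeding each blended code set to the generator with a fixed mask would yield one row of interpolated results like those in Figures 17 and 18, assuming the generator responds smoothly to changes in ST.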
Figure 16: Style transfer on the CelebAMask-HQ dataset. (Columns: Source Image, Layout, Style Image.)
Figure 17: Style interpolation on the CelebAMask-HQ dataset. (Columns: Source Image, Layout, Style1, Style2, Interpolated Results.)
Figure 18: Style interpolation on the ADE20K dataset. (Columns: Source Image, Layout, Style1, Style2, Interpolated Results.)
Figure 19: Visual comparison of semantic image synthesis results on the CelebAMask-HQ dataset. We compare Pix2PixHD, SPADE, and our method. (Columns: Label, Ground Truth, Pix2PixHD [47], SPADE [39], Ours.)
Figure 20: Visual comparison of semantic image synthesis results on the ADE20K dataset. We compare Pix2PixHD, SPADE, and our method. (Columns: Label, Ground Truth, Pix2PixHD [47], SPADE [39], Ours.)
Figure 21: Visual comparison of semantic image synthesis results on the ADE20K dataset. We compare Pix2PixHD, SPADE, and our method. (Columns: Label, Ground Truth, Pix2PixHD [47], SPADE [39], Ours.)
Figure 22: Visual comparison of semantic image synthesis results on the Cityscapes and Façades datasets. We compare Pix2PixHD, SPADE, and our method. (Columns: Label, Ground Truth, Pix2PixHD [47], SPADE [39], Ours.)
